Device Groups

Device Groups provide an abstraction layer for multi-device virtual hardware provisioning. Platforms can detect sets of physically connected devices (for example, GPUs linked by NVLink, or GPU-NIC pairs) and present each set to the VM as one logical unit. This keeps latency-sensitive, bandwidth-heavy AI workloads on the intended PCIe and NVLink paths rather than on whatever paths an ad hoc assignment happens to produce.

Device groups consist of two or more hardware devices that share a common PCIe switch or a direct interconnect. This simplifies virtual hardware assignment and enables:

Multi-GPU and GPU-NIC communication - NVLink-connected GPUs can be provisioned together for peer-to-peer bandwidth and low latency on large-batch training and NCCL-heavy workloads. GPU-NIC pairs that share a PCIe switch or otherwise meet GPUDirect RDMA criteria are grouped so that storage or network DMA can target GPU memory directly. NICs that do not meet the criteria are omitted, even when they sit on the same PCIe switch as the GPU.

Topology consistency - Unlike manual device assignment, Device Groups guarantee correct placement across PCIe switches and interconnects, even after reboots or events like live migration.

Simplified provisioning - Abstracting PCIe and NVLink topology into logical units reduces manual topology scripts and misconfiguration when deploying multi-device VMs.
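As an illustration of what "abstracting topology into logical units" means for NVLink-connected GPUs, the following is a minimal sketch, not the driver's actual algorithm. It assumes a hypothetical inventory (a list of GPU names and a list of NVLink pairs) and treats every set of GPUs reachable from one another over NVLink as one candidate group. The function name `nvlink_groups` and the input shapes are invented for this example.

```python
from collections import defaultdict


def nvlink_groups(gpus, nvlink_pairs):
    """Return candidate groups of GPUs connected by NVLink.

    gpus:         list of GPU names (hypothetical inventory)
    nvlink_pairs: list of (gpu_a, gpu_b) direct NVLink connections
    Only sets with two or more GPUs are returned, matching the
    "two or more hardware devices" definition in the text.
    """
    parent = {g: g for g in gpus}

    def find(x):
        # Follow parent pointers to the set representative (path halving).
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    # Merge the sets of every directly linked pair.
    for a, b in nvlink_pairs:
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    components = defaultdict(list)
    for g in gpus:
        components[find(g)].append(g)

    # Drop GPUs with no NVLink peer; they do not form a group on their own.
    return sorted(sorted(m) for m in components.values() if len(m) >= 2)


# Illustrative data only: gpu5 has no NVLink peer.
gpus = ["gpu0", "gpu1", "gpu2", "gpu3", "gpu4", "gpu5"]
pairs = [("gpu0", "gpu1"), ("gpu1", "gpu2"), ("gpu3", "gpu4")]
groups = nvlink_groups(gpus, pairs)
print(groups)  # [['gpu0', 'gpu1', 'gpu2'], ['gpu3', 'gpu4']]
```

Merging transitively linked GPUs into one group is a simplifying assumption of this sketch. The groups a real platform exposes are whatever the driver and hypervisor report.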

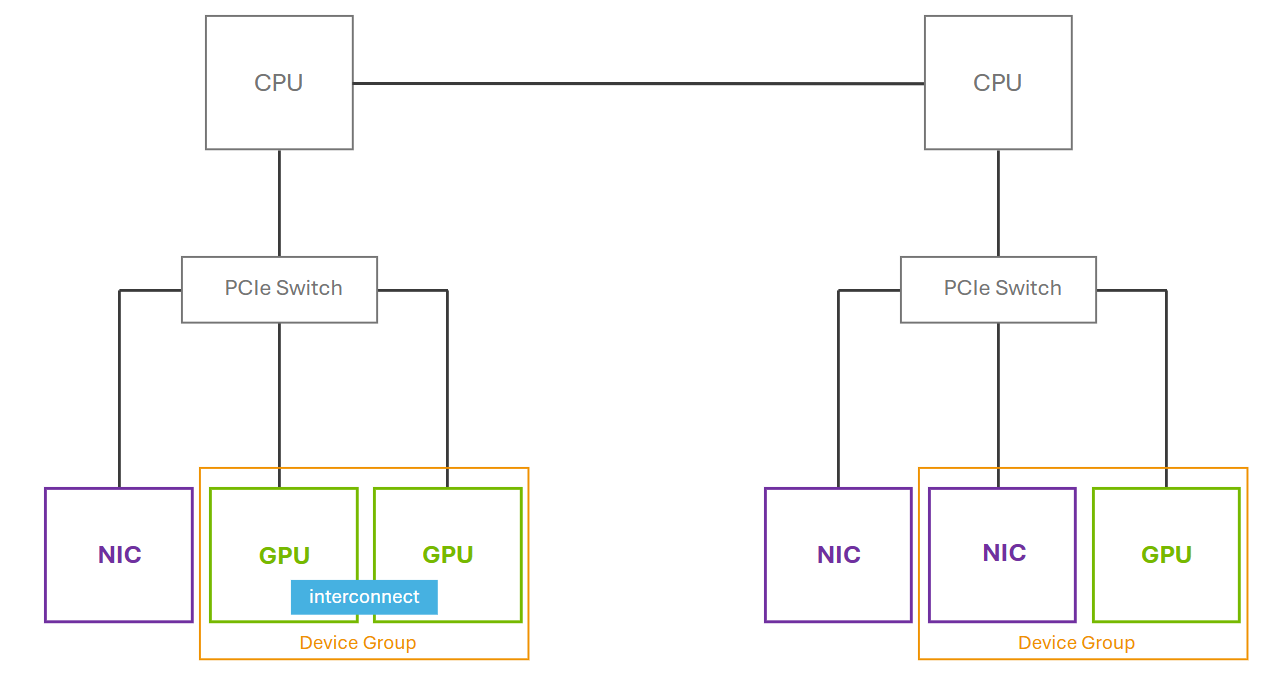

This figure shows how devices (GPUs and NICs) that share a common PCIe switch or a direct GPU interconnect can be presented as a device group. On the right side, two NICs are connected to the same PCIe switch as the GPU, but only one NIC is included in the device group. This is because the NVIDIA driver identifies and exposes only the GPU-NIC pairings that meet the necessary criteria, such as GPUDirect RDMA capability. Adjacent NICs that do not satisfy these requirements are excluded.
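The right-hand case in the figure can be modeled in a few lines. This is a hedged sketch only: the `Device` type, the `rdma_eligible` flag (standing in for whatever criteria the driver actually evaluates), and `group_for_switch` are hypothetical names, and the rule that a group must contain at least one GPU is an assumption of the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Device:
    name: str
    kind: str                    # "gpu" or "nic"
    pcie_switch: str             # identifier of the upstream PCIe switch
    rdma_eligible: bool = False  # hypothetical stand-in for the driver's criteria


def group_for_switch(devices, switch):
    """Return the device names that would be grouped under one PCIe switch."""
    members = [d for d in devices if d.pcie_switch == switch]
    gpus = sorted(d.name for d in members if d.kind == "gpu")
    # A NIC joins the group only if it meets the eligibility criteria,
    # even though every NIC here shares the switch with the GPU.
    nics = sorted(d.name for d in members if d.kind == "nic" and d.rdma_eligible)
    group = gpus + nics
    # Assumption: a group needs a GPU and at least two devices in total.
    return group if gpus and len(group) >= 2 else []


# Illustrative data only: one GPU and two NICs on the same switch.
devices = [
    Device("gpu0", "gpu", "sw1"),
    Device("nic0", "nic", "sw1", rdma_eligible=True),
    Device("nic1", "nic", "sw1", rdma_eligible=False),
]
right_group = group_for_switch(devices, "sw1")
print(right_group)  # ['gpu0', 'nic0']
```

Here `nic1` shares the switch with `gpu0` but is left out, which mirrors the excluded NIC in the figure.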

For more information about hypervisor platform support for Device Groups, refer to the vGPU Device Groups documentation.

Limitations

Device Groups require hypervisor-level support; check your hypervisor’s release notes for availability.

Only NICs that meet GPUDirect RDMA criteria are included in a device group. Adjacent NICs on the same PCIe switch that do not satisfy these requirements are excluded.

Device group composition is determined at driver load time. Hot-adding or removing devices after the vGPU Manager loads is not supported.

Live migration of VMs using device groups is subject to the same constraints as multi-vGPU live migration. Refer to Live Migration for details.