Installing the DGX Software

This section requires that you have already installed Red Hat Enterprise Linux 8 or a derived operating system on the DGX system. You can skip this section if you already installed the DGX software stack during a kickstart install.

Configuring a System Proxy

If your network requires use of a proxy, edit the file /etc/dnf/dnf.conf and make sure the following lines are present in the [main] section, using the parameters that apply to your network:

proxy=http://<Proxy-Server-IP-Address>:<Proxy-Port>
proxy_username=<Proxy-User-Name>
proxy_password=<Proxy-Password>
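One way to confirm the proxy setting landed in the right place is to check that a proxy= key sits inside the [main] section rather than in some other section of the file. The following is a minimal sketch, not part of the DGX software; the helper name main_has_proxy is hypothetical.

```shell
# Hypothetical helper: succeeds if FILE has a "proxy=" key inside its [main] section.
main_has_proxy() {
    awk '
        /^\[/ { in_main = ($0 ~ /^\[main\][ \t]*$/) }   # track the current section
        in_main && /^[ \t]*proxy[ \t]*=/ { found = 1 }  # ignores proxy_username etc.
        END   { exit(found ? 0 : 1) }
    ' "$1"
}

# Report on the system-wide dnf configuration, if it exists on this machine.
if [ -r /etc/dnf/dnf.conf ]; then
    if main_has_proxy /etc/dnf/dnf.conf; then
        echo "proxy is set in [main]"
    else
        echo "no proxy entry found in [main]"
    fi
fi
```

The check only looks at where the key is placed; it does not validate the proxy address or credentials.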

Enabling the DGX Software Repository

Attention

By running these commands you are confirming that you have read and agree to be bound by the DGX Software License Agreement. You are also confirming that you understand that any pre-release software and materials available that you elect to install in a DGX may not be fully functional, may contain errors or design flaws, and may have reduced or different security, privacy, availability, and reliability standards relative to commercial versions of NVIDIA software and materials, and that you use pre-release versions at your risk.

Install the NVIDIA DGX Package for Red Hat Enterprise Linux.

sudo dnf install -y https://repo.download.nvidia.com/baseos/el/el-files/8/nvidia-repo-setup-21.06-1.el8.x86_64.rpm

Installing Required Components

On Red Hat Enterprise Linux, run the following commands to enable additional repositories required by the DGX Software.

sudo subscription-manager repos --enable=rhel-8-for-x86_64-appstream-rpms
sudo subscription-manager repos --enable=rhel-8-for-x86_64-baseos-rpms
sudo subscription-manager repos --enable=codeready-builder-for-rhel-8-x86_64-rpms
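Before continuing, it can help to confirm that all three repositories are actually enabled. The sketch below assumes the listing comes from dnf repolist --enabled, which prints the repository ID in the first column; the helper name missing_repos is hypothetical.

```shell
# Repository IDs taken from the subscription-manager commands above.
REQUIRED_REPOS="rhel-8-for-x86_64-appstream-rpms rhel-8-for-x86_64-baseos-rpms codeready-builder-for-rhel-8-x86_64-rpms"

# Hypothetical helper: read a repo listing on stdin and print every required
# repository ID that does not appear at the start of a line.
missing_repos() {
    listing=$(cat)
    for id in $REQUIRED_REPOS; do
        printf '%s\n' "$listing" | grep -Eq "^$id([[:space:]]|\$)" || printf '%s\n' "$id"
    done
}

# Usage on a registered system:
#   dnf repolist --enabled | missing_repos
```

Empty output means nothing is missing; any ID that is printed still needs to be enabled.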

Upgrade to the latest software.

Note

Before performing the upgrade, consult the release notes for additional instructions depending on the specific EL8 release.

sudo dnf update -y --nobest

Install DGX tools and configuration files.

For DGX-1, install DGX-1 Configurations.

sudo dnf group install -y 'DGX-1 Configurations'

For the DGX-2, install DGX-2 Configurations.

sudo dnf group install -y 'DGX-2 Configurations'

For the DGX A100, install DGX A100 Configurations.

sudo dnf group install -y 'DGX A100 Configurations'

For the DGX H100, install DGX H100 Configurations.

sudo dnf group install -y 'DGX H100 Configurations'

For the DGX A800, install DGX A800 Configurations.

sudo dnf group install -y 'DGX A800 Configurations'

For the DGX Station, install DGX Station Configurations.

sudo dnf group install -y 'DGX Station Configurations'

For the DGX Station A100, install DGX Station A100 Configurations.

sudo dnf group install -y 'DGX Station A100 Configurations'

For the DGX Station A800, install DGX Station A800 Configurations.

sudo dnf group install -y 'DGX Station A800 Configurations'

The configuration changes take effect only after rebooting the system, which will be performed after installing the CUDA driver.
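If you script this step across several systems, the group name can be derived from the model name, since every group listed above is the model name followed by "Configurations". This is a minimal sketch; the helper name dgx_config_group is hypothetical, and the model name is passed in by hand rather than detected.

```shell
# Hypothetical helper: map a DGX model name to its dnf group, as listed above.
dgx_config_group() {
    case "$1" in
        "DGX-1"|"DGX-2"|"DGX A100"|"DGX H100"|"DGX A800"|\
        "DGX Station"|"DGX Station A100"|"DGX Station A800")
            printf '%s Configurations\n' "$1" ;;
        *)
            echo "unknown DGX model: $1" >&2
            return 1 ;;
    esac
}

dgx_config_group 'DGX Station A100'   # prints: DGX Station A100 Configurations

# Usage (run only on the DGX system itself):
#   sudo dnf group install -y "$(dgx_config_group 'DGX A100')"
```

Rejecting unknown names keeps a typo from reaching dnf as a nonexistent group.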

Configure the /raid partition.

All DGX systems support RAID 0 or RAID 5 arrays.

The following commands create a RAID array, mount it to /raid, and create an appropriate entry in /etc/fstab.

To create a RAID 0 array:

sudo /usr/bin/configure_raid_array.py -c -f

To create a RAID 5 array:

sudo /usr/bin/configure_raid_array.py -c -f -5

Note

The RAID array must be configured before installing nvidia-conf-cachefilesd, which places the proper SELinux label on the /raid directory. If you ever need to recreate the RAID array after nvidia-conf-cachefilesd has already been installed (which will wipe out any labeling on /raid), be sure to restore the label manually before restarting cachefilesd.

sudo restorecon /raid
sudo systemctl restart cachefilesd
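After creating the array, you may want to confirm that the /etc/fstab entry exists and that the directory carries an SELinux label. The following sketch is one way to do that; the helper name fstab_has_mount is hypothetical and is not part of configure_raid_array.py.

```shell
# Hypothetical helper: succeeds if FSTAB (default /etc/fstab) has an uncommented
# entry whose mount point (second field) equals MOUNTPOINT.
fstab_has_mount() {
    awk -v mp="$1" '
        /^[ \t]*#/ { next }             # skip comment lines
        $2 == mp   { found = 1 }
        END        { exit(found ? 0 : 1) }
    ' "${2:-/etc/fstab}"
}

# Usage after running configure_raid_array.py:
#   fstab_has_mount /raid && findmnt /raid   # entry exists and the array is mounted
#   ls -Zd /raid                              # show the SELinux label on the directory
```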

Optional: If you wish to use your RAID array for caching, install nvidia-conf-cachefilesd. This will update the cachefilesd configuration to use the /raid partition.

sudo dnf install -y nvidia-conf-cachefilesd

Install the NVIDIA CUDA driver.

Replace the 525 value in the following commands with the NVIDIA GPU driver branch that you want to install. Refer to the NVIDIA DGX Software for Red Hat Enterprise Linux 8 Release Notes for information about the supported driver branches.

If you need to install a driver that corresponds to a specific CUDA library version, refer to the driver release notes in the NVIDIA Driver Documentation. The CUDA Toolkit version in the release notes identifies the CUDA version.

Important

If you are installing the CUDA driver from a local repository, follow the instructions at Installing the NVIDIA CUDA Driver from the Local Repository instead of this step.

Optional: List the available driver modules.

sudo dnf module list nvidia-driver

For non-NVSwitch systems such as DGX-1, DGX Station, and DGX Station A100, install the driver using the default and src profiles.

sudo dnf module install --nobest -y nvidia-driver:525/{default,src}
sudo dnf install -y nv-persistence-mode libnvidia-nscq-525

For NVSwitch systems such as DGX-2 and DGX A100/A800, install the driver using the fabric manager (fm) and src profiles.

sudo dnf module install --nobest -y nvidia-driver:525/{fm,src}
sudo dnf install -y nv-persistence-mode nvidia-fm-enable

For DGX H100, install the DKMS version of the driver using the fabric manager (fm) profile:

sudo dnf module install --nobest -y nvidia-driver:535-dkms/fm
sudo dnf install -y nv-persistence-mode nvidia-fm-enable
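The {default,src} and {fm,src} forms in the 525 commands rely on bash brace expansion, so dnf receives two separate module specs, one per profile. The sketch below builds the same pair of specs explicitly, which may be useful in a script where the branch is a variable or the shell is not bash. The helper name driver_module_specs and the "nvswitch" keyword are hypothetical, and the sketch does not cover the single-profile DGX H100 command.

```shell
# Hypothetical helper: print the module specs for a driver branch and system type.
# TYPE "nvswitch" selects the fm and src profiles; anything else selects default and src.
driver_module_specs() {
    branch=$1
    case "$2" in
        nvswitch) profiles="fm src" ;;
        *)        profiles="default src" ;;
    esac
    for p in $profiles; do
        printf 'nvidia-driver:%s/%s\n' "$branch" "$p"
    done
}

driver_module_specs 525 nvswitch
# prints nvidia-driver:525/fm and nvidia-driver:525/src, one per line

# Usage (left unquoted on purpose so each spec becomes its own argument):
#   sudo dnf module install --nobest -y $(driver_module_specs 525 nvswitch)
```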

(DGX Station A100/A800 Only) Install additional packages required for DGX Station A100 and DGX Station A800.

These packages must be installed after installation of the nvidia-driver module.

sudo dnf install -y nvidia-conf-xconfig nv-docker-gpus

Reboot the system to load the drivers and to update system configurations.

Issue the reboot.

sudo reboot

After the system has rebooted, verify that the drivers have been loaded and are handling the NVIDIA devices.

nvidia-smi

Example Output

+-----------------------------------------------------------------------------+
| NVIDIA-SMI 525.125.06   Driver Version: 525.125.06   CUDA Version: 12.0     |
|-------------------------------+----------------------+----------------------+
| GPU  Name        Persistence-M| Bus-Id        Disp.A | Volatile Uncorr. ECC |
| Fan  Temp  Perf  Pwr:Usage/Cap|         Memory-Usage | GPU-Util  Compute M. |
|                               |                      |               MIG M. |
|===============================+======================+======================|
|   0  Tesla V100-SXM2...  On   | 00000000:06:00.0 Off |                    0 |
| N/A   35C    P0    42W / 300W |      0MiB / 16160MiB |      0%      Default |
|                               |                      |                  N/A |
+-------------------------------+----------------------+----------------------+
|   1  Tesla V100-SXM2...  On   | 00000000:07:00.0 Off |                    0 |
| N/A   35C    P0    44W / 300W |      0MiB / 16160MiB |      0%      Default |
|                               |                      |                  N/A |
+-------------------------------+----------------------+----------------------+
...
+-------------------------------+----------------------+----------------------+
|   7  Tesla V100-SXM2...  On   | 00000000:8A:00.0 Off |                    0 |
| N/A   35C    P0    43W / 300W |      0MiB / 16160MiB |      0%      Default |
|                               |                      |                  N/A |
+-------------------------------+----------------------+----------------------+

+-----------------------------------------------------------------------------+
| Processes:                                                                  |
|  GPU   GI   CI        PID   Type   Process name                  GPU Memory |
|        ID   ID                                                   Usage      |
|=============================================================================|
|  No running processes found                                                 |
+-----------------------------------------------------------------------------+
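For an unattended check, the table above can be replaced by a simple count of detected GPUs. nvidia-smi -L prints one line per GPU that begins with "GPU <index>:", so counting those lines gives the number of devices the driver is handling. This is a minimal sketch; the helper name count_gpus is hypothetical.

```shell
# Hypothetical helper: count GPU entries in `nvidia-smi -L` output read from stdin.
count_gpus() {
    grep -c '^GPU [0-9][0-9]*:'
}

# Usage on the DGX system, comparing against the number of GPUs you expect:
#   nvidia-smi -L | count_gpus
```

The example output above lists GPUs 0 through 7, so a system like that one would be expected to report 8.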

Install the NVIDIA container device plugin.

Install docker-ce.

As this may conflict with existing packages on the system, specify the --allowerasing option:

sudo dnf install -y docker-ce --allowerasing

Install the NVIDIA Container Runtime group.

sudo dnf group install -y 'NVIDIA Container Runtime'

Restart the docker daemon.

sudo systemctl restart docker

Run the following command to verify the installation.

sudo docker run --gpus=all --rm nvcr.io/nvidia/cuda:12.0.0-base-ubi8 nvidia-smi

See the section Running Containers for more information about this command.

Example Output

+-----------------------------------------------------------------------------+
| NVIDIA-SMI 525.125.06   Driver Version: 525.125.06   CUDA Version: 12.0     |
|-------------------------------+----------------------+----------------------+
| GPU  Name        Persistence-M| Bus-Id        Disp.A | Volatile Uncorr. ECC |
| Fan  Temp  Perf  Pwr:Usage/Cap|         Memory-Usage | GPU-Util  Compute M. |
|                               |                      |               MIG M. |
|===============================+======================+======================|
|   0  Tesla V100-SXM2...  On   | 00000000:06:00.0 Off |                    0 |
| N/A   35C    P0    42W / 300W |      0MiB / 16160MiB |      0%      Default |
|                               |                      |                  N/A |
+-------------------------------+----------------------+----------------------+
|   1  Tesla V100-SXM2...  On   | 00000000:07:00.0 Off |                    0 |
| N/A   35C    P0    44W / 300W |      0MiB / 16160MiB |      0%      Default |
|                               |                      |                  N/A |
+-------------------------------+----------------------+----------------------+
...
+-------------------------------+----------------------+----------------------+
|   7  Tesla V100-SXM2...  On   | 00000000:8A:00.0 Off |                    0 |
| N/A   35C    P0    43W / 300W |      0MiB / 16160MiB |      0%      Default |
|                               |                      |                  N/A |
+-------------------------------+----------------------+----------------------+

+-----------------------------------------------------------------------------+
| Processes:                                                                  |
|  GPU   GI   CI        PID   Type   Process name                  GPU Memory |
|        ID   ID                                                   Usage      |
|=============================================================================|
|  No running processes found                                                 |
+-----------------------------------------------------------------------------+

Installation of another required software component is explained in Using the NVIDIA Mellanox InfiniBand Drivers.

Installing Optional Components

The DGX is fully functional after installing the components as described in Installing Required Components. If you intend to launch NGC containers (which incorporate the CUDA toolkit, NCCL, cuDNN, and TensorRT) on the DGX system, which is the expected use case, then you can skip this section.

If you intend to use your DGX system as a development system for running deep learning applications on bare metal, then install the optional components as described in this section.

To install the CUDA Toolkit 12.0, issue the following.

$ sudo dnf install -y cuda-toolkit-12-0 cuda-compat-12-0 nvidia-cuda-compat-setup
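After the toolkit is installed, tools such as nvcc are typically not on the default PATH. The sketch below assumes the packages install under /usr/local/cuda-12.0, which is the usual location but is not stated in this guide, so adjust CUDA_HOME if your system differs. The helper name prepend_once is hypothetical.

```shell
# Assumption: the toolkit RPMs install under /usr/local/cuda-12.0.
CUDA_HOME=${CUDA_HOME:-/usr/local/cuda-12.0}

# Hypothetical helper: prepend DIR to a colon-separated LIST unless it is already present.
prepend_once() {
    case ":$2:" in
        *":$1:"*) printf '%s\n' "$2" ;;
        *)        printf '%s\n' "$1${2:+:$2}" ;;
    esac
}

PATH=$(prepend_once "$CUDA_HOME/bin" "$PATH")
export PATH

# Then, on the DGX system:
#   nvcc --version
```

Guarding against duplicates keeps PATH from growing each time the snippet is sourced from a shell profile.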

To administer self-encrypting drives, install the nv-disk-encrypt package and then reboot by issuing the following.

$ sudo dnf install -y nv-disk-encrypt
$ sudo reboot

Refer to the “Managing Self-Encrypting Drives” section in the DGX A100/A800 User Guide for usage information.

To install the NVIDIA Collective Communications Library (NCCL) Runtime, refer to the NCCL: Getting Started documentation.

To install the CUDA Deep Neural Networks (cuDNN) Library Runtime, refer to the NVIDIA cuDNN page.

To install NVIDIA TensorRT, refer to the NVIDIA TensorRT page.

To install NVIDIA GPUDirect Storage (GDS), issue the following to install the GDS packages.

$ sudo dnf install nvidia-gds

Be sure to enable GDS within the MLNX_OFED driver if you install the driver. Refer to Using the NVIDIA Mellanox InfiniBand Drivers.

Installing the Optional NVIDIA Desktop Theme

The DGX Software Repository also provides optional theme packages and desktop wallpapers to give the user interface an NVIDIA look and feel. These packages are installed as part of the DGX Station Configurations group, but you can also install them manually:

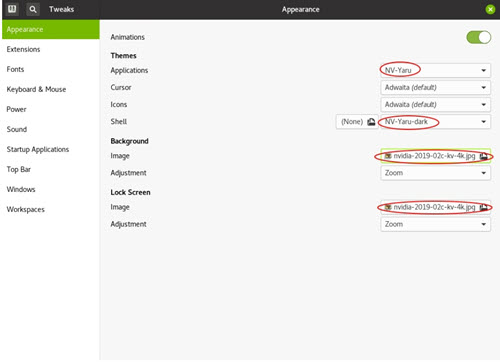

To apply the theme and background images, first open gnome-tweaks.

Under Applications, select one of the NV-Yaru themes.

This comes in default, light, and dark variations.

Under Shell, select the **NV-Yaru-dark** theme.

If this field is grayed out, you may need to reboot the system or restart GDM in order to enable the user-themes extension.

To restart GDM, issue the following.

sudo systemctl restart gdm

Select one of the NVIDIA wallpapers for the background image and lock screen.