DSSD

Preparing the Dataset

The dataset for DSSD contains images and corresponding label files in KITTI text label format.

The data structure must be in the following format:

/Dataset_01
    /images
        0000.jpg
        0001.jpg
        0002.jpg
        ...
        N.jpg
    /labels
        0000.txt
        0001.txt
        0002.txt
        ...
        N.txt
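Before training, it can help to confirm that every image has a label file with the same stem and that the labels parse as KITTI lines. The sketch below is a hypothetical helper (find_unpaired and parse_kitti_line are illustrative names, not TLT APIs). It assumes the directory layout above and the standard 15-field KITTI object label format.

```python
from pathlib import Path

# Standard KITTI object label layout: 15 whitespace-separated fields per line.
KITTI_FIELDS = (
    "type", "truncated", "occluded", "alpha",
    "xmin", "ymin", "xmax", "ymax",
    "height", "width", "length",
    "x", "y", "z", "rotation_y",
)


def parse_kitti_line(line):
    """Parse one KITTI label line into a dict: class name plus float fields."""
    parts = line.split()
    if len(parts) < len(KITTI_FIELDS):
        raise ValueError(f"expected at least 15 fields, got {len(parts)}: {line!r}")
    record = {"type": parts[0]}
    for name, value in zip(KITTI_FIELDS[1:], parts[1:len(KITTI_FIELDS)]):
        record[name] = float(value)
    return record


def find_unpaired(dataset_root):
    """Return (image stems without labels, label stems without images)."""
    root = Path(dataset_root)
    images = {p.stem for p in (root / "images").iterdir() if p.is_file()}
    labels = {p.stem for p in (root / "labels").glob("*.txt")}
    return sorted(images - labels), sorted(labels - images)
```

Running find_unpaired on /Dataset_01 should return two empty lists when the dataset follows the required structure.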

Creating a Configuration File

Below is a sample DSSD spec file. It has six major components: dssd_config, training_config, eval_config, nms_config, augmentation_config, and dataset_config. The spec file is a protobuf text (prototxt) message, and each of its fields can be either a basic data type or a nested message. Besides these components, the sample sets a top-level random_seed.

random_seed: 42
dssd_config {
  aspect_ratios: "[[1.0, 2.0, 0.5], [1.0, 2.0, 0.5, 3.0, 1.0/3.0], [1.0, 2.0, 0.5, 3.0, 1.0/3.0], [1.0, 2.0, 0.5, 3.0, 1.0/3.0], [1.0, 2.0, 0.5], [1.0, 2.0, 0.5]]"
  scales: "[0.07, 0.15, 0.33, 0.51, 0.69, 0.87, 1.05]"
  two_boxes_for_ar1: true
  clip_boxes: false
  variances: "[0.1, 0.1, 0.2, 0.2]"
  arch: "resnet"
  nlayers: 18
  freeze_bn: false
  freeze_blocks: 0
}
training_config {
  batch_size_per_gpu: 16
  num_epochs: 80
  enable_qat: false
  learning_rate {
    soft_start_annealing_schedule {
      min_learning_rate: 5e-5
      max_learning_rate: 2e-2
      soft_start: 0.15
      annealing: 0.8
    }
  }
  regularizer {
    type: L1
    weight: 3e-5
  }
}
eval_config {
  validation_period_during_training: 10
  average_precision_mode: SAMPLE
  batch_size: 16
  matching_iou_threshold: 0.5
}
nms_config {
  confidence_threshold: 0.01
  clustering_iou_threshold: 0.6
  top_k: 200
}
augmentation_config {
  output_width: 300
  output_height: 300
  output_channel: 3
}
dataset_config {
  data_sources: {
    label_directory_path: "/path/to/train/labels"
    image_directory_path: "/path/to/train/images"
  }
  include_difficult_in_training: true
  target_class_mapping {
    key: "car"
    value: "car"
  }
  target_class_mapping {
    key: "pedestrian"
    value: "pedestrian"
  }
  target_class_mapping {
    key: "cyclist"
    value: "cyclist"
  }
  target_class_mapping {
    key: "van"
    value: "car"
  }
  target_class_mapping {
    key: "person_sitting"
    value: "pedestrian"
  }
  validation_data_sources: {
    label_directory_path: "/path/to/val/labels"
    image_directory_path: "/path/to/val/images"
  }
}

Training Config

The training configuration (training_config) defines the parameters needed for training, evaluation, and inference. Details are summarized in the table below.

Field | Description | Data Type and Constraints | Recommended/Typical Value
batch_size_per_gpu | The batch size for each GPU; the effective batch size is batch_size_per_gpu * num_gpus | Unsigned int, positive | –
num_epochs | The number of epochs to train the network | Unsigned int, positive | –
enable_qat | Whether to use quantization-aware training | Boolean | –
learning_rate | Only soft_start_annealing_schedule is supported, with these nested parameters: min_learning_rate (the minimum learning rate during training); max_learning_rate (the maximum learning rate during training); soft_start (the fraction of training progress, between 0 and 1, over which the learning rate warms up from min_learning_rate to max_learning_rate); annealing (the fraction of training progress, between 0 and 1, at which the learning rate starts to anneal back toward min_learning_rate) | Message type | –
regularizer | The regularizer to use during training, with these nested parameters: type (the regularizer type: NO_REG, L1, or L2); weight (the regularizer weight, a float) | Message type | L1 (Note: NVIDIA suggests using the L1 regularizer when training a network before pruning, as L1 regularization helps make the network weights more prunable.)
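The following sketch illustrates how the four soft_start_annealing_schedule parameters could shape the learning rate over training progress. It assumes log-linear (exponential) warm-up and decay between min_learning_rate and max_learning_rate; the toolkit's exact curve may differ, and soft_start_annealing_lr is a hypothetical name.

```python
def soft_start_annealing_lr(progress, min_lr=5e-5, max_lr=2e-2,
                            soft_start=0.15, annealing=0.8):
    """Learning rate at a given training progress in [0, 1] (sketch).

    Assumption: warm-up and annealing are exponential ramps between
    min_lr and max_lr; defaults mirror the sample spec.
    """
    if not 0.0 <= progress <= 1.0:
        raise ValueError("progress must be in [0, 1]")
    if progress < soft_start:
        # Warm-up: ramp from min_lr up to max_lr.
        t = progress / soft_start
        return min_lr * (max_lr / min_lr) ** t
    if progress < annealing or annealing >= 1.0:
        # Plateau at max_lr between soft_start and annealing.
        return max_lr
    # Annealing: decay from max_lr back down to min_lr at progress 1.0.
    t = (progress - annealing) / (1.0 - annealing)
    return max_lr * (min_lr / max_lr) ** t
```

With num_epochs: 80, the progress at the start of epoch e would be e / 80, so under these assumptions the rate peaks at epoch 12 and starts decaying at epoch 64.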

Evaluation Config

The evaluation configuration (eval_config) defines the parameters needed for evaluation, either during training or as a standalone procedure. Details are summarized in the table below.

Field | Description | Data Type and Constraints | Recommended/Typical Value
validation_period_during_training | The number of training epochs per validation | Unsigned int, positive | 10
average_precision_mode | The Average Precision (AP) calculation mode, either SAMPLE or INTEGRATE. SAMPLE is the VOC metric used for VOC 2009 and earlier; INTEGRATE is used for VOC 2010 and later. | ENUM type (SAMPLE or INTEGRATE) | SAMPLE
matching_iou_threshold | The lowest IoU between a predicted box and a ground truth box for them to be considered a match | float | 0.5
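To make matching_iou_threshold and average_precision_mode concrete, here is a minimal sketch of IoU and of the two AP modes. It assumes SAMPLE corresponds to the VOC 2007 style 11-point interpolated AP and INTEGRATE to the VOC 2010+ area under the interpolated precision/recall curve; a prediction would count as a match when its IoU with a ground truth box is at least matching_iou_threshold. The function names are illustrative, not TLT code.

```python
def iou(a, b):
    """IoU of two boxes given as (xmin, ymin, xmax, ymax)."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def ap_sample(recall, precision):
    """11-point interpolated AP (assumed SAMPLE mode)."""
    total = 0.0
    for t in [i / 10 for i in range(11)]:
        # Highest precision at any recall >= t, or 0 if recall never reaches t.
        total += max((p for r, p in zip(recall, precision) if r >= t), default=0.0)
    return total / 11


def ap_integrate(recall, precision):
    """Area under the interpolated PR curve (assumed INTEGRATE mode)."""
    r = [0.0] + list(recall) + [1.0]
    p = [0.0] + list(precision) + [0.0]
    # Make precision non-increasing when read from right to left.
    for i in range(len(p) - 2, -1, -1):
        p[i] = max(p[i], p[i + 1])
    # Sum rectangle areas at every recall step.
    return sum((r[i + 1] - r[i]) * p[i + 1] for i in range(len(r) - 1))
```

For a detector reaching recall 0.5 at precision 1.0 and recall 1.0 at precision 0.5, these sketches give about 0.773 (SAMPLE) and 0.75 (INTEGRATE), which shows why the two modes are not directly comparable.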

NMS Config

The NMS configuration (nms_config) defines the parameters needed for NMS postprocessing. The NMS configuration applies to the NMS layer of the model in training, validation, evaluation, inference, and export. Details are summarized in the table below.

Field | Description | Data Type and Constraints | Recommended/Typical Value
confidence_threshold | Boxes with a confidence score lower than confidence_threshold are discarded before NMS is applied. | float | 0.01
clustering_iou_threshold | The IoU threshold used by NMS: a box is kept only if its IoU with every higher-scoring kept box is below this threshold. | float | 0.6
top_k | The number of boxes output by the NMS Keras layer. If there are fewer than top_k valid boxes, the returned array is padded with boxes whose confidence score is 0. | Unsigned int | 200
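A minimal sketch of how the three nms_config fields could interact in greedy, single-class NMS. It is an illustration under stated assumptions (boxes as (xmin, ymin, xmax, ymax), greedy suppression, zero-padding to top_k), not the NMS layer TLT actually builds.

```python
def iou(a, b):
    """IoU of two (xmin, ymin, xmax, ymax) boxes."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def nms(boxes, scores, confidence_threshold=0.01,
        clustering_iou_threshold=0.6, top_k=200):
    """Greedy NMS for one class; returns exactly top_k (score, box) pairs."""
    # Discard low-confidence boxes before NMS.
    candidates = [(s, b) for s, b in zip(scores, boxes) if s >= confidence_threshold]
    # Visit candidates from the highest to the lowest score.
    candidates.sort(key=lambda sb: sb[0], reverse=True)
    kept = []
    for score, box in candidates:
        if len(kept) >= top_k:
            break
        # Keep a box only if its IoU with every kept box is below the threshold.
        if all(iou(box, k) < clustering_iou_threshold for _, k in kept):
            kept.append((score, box))
    # Pad with zero-confidence boxes up to top_k entries.
    kept += [(0.0, (0.0, 0.0, 0.0, 0.0))] * (top_k - len(kept))
    return kept
```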

Augmentation Config

The augmentation_config parameter defines the image size after preprocessing. During training, the augmentation methods from the SSD paper are performed, including random flip, zoom-in, zoom-out, and color jittering, and the augmented images are then resized to the output shape defined in augmentation_config. During evaluation, only the resize is performed.

Note

The details of the augmentation methods can be found in sections 2.2 and 3.6 of the SSD paper.

Field | Description | Data Type and Constraints | Recommended/Typical Value
output_width | The width of preprocessed images and of the network input | Unsigned int | 300
output_height | The height of preprocessed images and of the network input | Unsigned int | 300
output_channel | The number of channels of preprocessed images | Unsigned int | 3
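Geometric augmentations must transform the boxes along with the image. The sketch below shows only the horizontal flip and the final resize to output_width x output_height; zoom-in/zoom-out crops and color jittering are omitted, and the helper names are hypothetical. It assumes continuous pixel coordinates, so a flip maps x to image_width - x.

```python
def hflip_boxes(boxes, image_width):
    """Mirror (xmin, ymin, xmax, ymax) boxes for a horizontally flipped image."""
    # The old right edge becomes the new left edge, and vice versa.
    return [(image_width - xmax, ymin, image_width - xmin, ymax)
            for xmin, ymin, xmax, ymax in boxes]


def resize_boxes(boxes, src_w, src_h, out_w=300, out_h=300):
    """Rescale boxes when an image is resized from (src_w, src_h) to (out_w, out_h)."""
    sx, sy = out_w / src_w, out_h / src_h
    return [(xmin * sx, ymin * sy, xmax * sx, ymax * sy)
            for xmin, ymin, xmax, ymax in boxes]
```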

Dataset Config

The dataset configuration (dataset_config) defines the parameters needed for the data loader. The configuration is shared with DetectNet_v2. See the Dataloader section for more information.

DSSD Config

The DSSD configuration (dssd_config) defines the parameters needed for building the DSSD model. Details are summarized in the table below.

Field | Description | Data Type and Constraints | Recommended/Typical Value
aspect_ratios_global | Anchor boxes with the aspect ratios defined in aspect_ratios_global are generated for each feature layer used for prediction. Either aspect_ratios_global or aspect_ratios is required; you don’t need to specify both. | string | “[1.0, 2.0, 0.5, 3.0, 0.33]”
aspect_ratios | The length of the outer list must equal the number of feature layers used for anchor box generation, and the i-th layer gets anchor boxes with the aspect ratios defined in aspect_ratios[i]. Either aspect_ratios_global or aspect_ratios is required; you don’t need to specify both. | string | “[[1.0,2.0,0.5], [1.0,2.0,0.5], [1.0,2.0,0.5], [1.0,2.0,0.5], [1.0,2.0,0.5], [1.0, 2.0, 0.5, 3.0, 0.33]]”
two_boxes_for_ar1 | Only relevant for layers that have 1.0 as an aspect ratio. If two_boxes_for_ar1 is true, two boxes with an aspect ratio of 1 are generated: one with this layer's scale and one whose scale is the geometric mean of this layer's scale and the next layer's scale. | Boolean | True
clip_boxes | If True, all corner anchor boxes are truncated so that they lie fully inside the feature images. | Boolean | False
scales | A list of positive floats containing a scaling factor per convolutional predictor layer. The list must be one element longer than the number of predictor layers so that, if two_boxes_for_ar1 is true, the second aspect-ratio-1.0 box of the last layer has a proper scale. Except for the last element, each float is the scaling factor for the boxes in the corresponding layer. For example, if the scale for a layer is 0.1, the anchor box with aspect ratio 1 for that layer (the first aspect-ratio-1 box if two_boxes_for_ar1 is True) has a height and width of 0.1*min(img_h, img_w). Alternatively, min_scale and max_scale (two positive floats) can be set: if both appear in the config, the scales are generated automatically by evenly splitting the space between min_scale and max_scale. | string | “[0.05, 0.1, 0.25, 0.4, 0.55, 0.7, 0.85]”
min_scale/max_scale | If both appear in the config, the scales are generated by evenly splitting the space between min_scale and max_scale. | float | –
variances | A list of 4 positive floats. In order, they are the variances for box center x, box center y, log box height, and log box width. The offsets of the box center (cx, cy) and of the log box size (height/width) with respect to the anchor are divided by their respective variance values, so larger variances make the encoded offsets of two different boxes differ less. | string | “[0.1, 0.1, 0.2, 0.2]”
steps | An optional list, inside quotation marks, whose length equals the number of feature layers used for prediction. The elements should be floats or tuples/lists of two floats. The steps define how many pixels apart the anchor-box center points are. If an element is a float, the vertical and horizontal steps are the same; otherwise, the first value is step_vertical and the second is step_horizontal. If steps are not provided, the anchor boxes are distributed uniformly inside the image. | string | –
offsets | An optional list of floats, inside quotation marks, whose length equals the number of feature layers used for prediction. The first anchor box has a margin of offsets[i]*steps[i] pixels from the left and top borders. If offsets are not provided, 0.5 is used as the default value. | string | –
arch | The backbone for feature extraction. Currently, “resnet”, “vgg”, “darknet”, “googlenet”, “mobilenet_v1”, “mobilenet_v2”, and “squeezenet” are supported. | string | resnet
nlayers | The number of conv layers in a specific arch. For “resnet”, 10, 18, 34, 50, and 101 are supported. For “vgg”, 16 and 19 are supported. For “darknet”, 19 and 53 are supported. The other backbones don’t have this setting; delete this parameter from the config file when using them. | Unsigned int | –
pred_num_channels | The number of channels of the convolutional layers in the DSSD prediction module. Setting this value to 0 disables the DSSD prediction module. Supported values are 0, 256, 512, and 1024. A larger value gives a larger network, which is usually harder to train. | Unsigned int | 512
freeze_bn | Whether to freeze all batch normalization layers during training. | Boolean | False
freeze_blocks | The list of block IDs to freeze during training. Freezing some of the CNN blocks can make training more stable and/or help it converge. The definition of a block is heuristic for a given architecture (for example, by stride or by logical blocks in the model), but block IDs are numbered sequentially, so you don’t need to know the exact locations of the blocks: the smaller the block ID, the closer the block is to the model input; the larger the block ID, the closer it is to the model output. You can divide the whole model into several blocks and optionally freeze a subset of them. Note that for FasterRCNN, you can only freeze the blocks that are before the ROI pooling layer; any layer after the ROI pooling layer is not frozen. The number of blocks and the ID of each block differ between backbones. | list (repeated integers) | –
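The following sketch shows how the dssd_config anchor settings relate to each other: parsing the quoted list strings (which may contain expressions such as 1.0/3.0), deriving scales from min_scale/max_scale, counting anchors per cell, and computing anchor sizes. It assumes evenly spaced scales with one extra entry, width = s*sqrt(ar) and height = s/sqrt(ar) for non-square anchors as in the SSD paper, and it ignores steps, offsets, and clip_boxes. All function names are hypothetical, not TLT APIs.

```python
import ast
import math
import operator

_OPS = {ast.Div: operator.truediv, ast.Mult: operator.mul,
        ast.Add: operator.add, ast.Sub: operator.sub}


def parse_spec_list(text):
    """Safely evaluate a spec string such as "[[1.0, 2.0, 1.0/3.0]]".

    Only numbers, (nested) lists/tuples, unary minus and + - * / are allowed.
    """
    def ev(node):
        if isinstance(node, ast.Expression):
            return ev(node.body)
        if isinstance(node, (ast.List, ast.Tuple)):
            return [ev(e) for e in node.elts]
        if (isinstance(node, ast.Constant) and isinstance(node.value, (int, float))
                and not isinstance(node.value, bool)):
            return float(node.value)
        if isinstance(node, ast.UnaryOp) and isinstance(node.op, ast.USub):
            return -ev(node.operand)
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](ev(node.left), ev(node.right))
        raise ValueError(f"unsupported expression: {ast.dump(node)}")
    return ev(ast.parse(text, mode="eval"))


def scales_from_range(min_scale, max_scale, n_layers):
    """Evenly spaced scales, one more than the number of predictor layers."""
    step = (max_scale - min_scale) / n_layers
    return [min_scale + i * step for i in range(n_layers + 1)]


def anchors_per_cell(aspect_ratios, two_boxes_for_ar1=True):
    """Anchor boxes per feature-map cell for each predictor layer."""
    return [len(ars) + (1 if two_boxes_for_ar1 and 1.0 in ars else 0)
            for ars in aspect_ratios]


def anchor_sizes(layer, scales, aspect_ratios, img_h, img_w, two_boxes_for_ar1=True):
    """(width, height) in pixels of each anchor for one predictor layer."""
    base = min(img_h, img_w)
    s = scales[layer]
    sizes = []
    for ar in aspect_ratios[layer]:
        if ar == 1.0:
            sizes.append((s * base, s * base))
            if two_boxes_for_ar1:
                # Second square box: geometric mean of this and the next scale.
                s_extra = math.sqrt(s * scales[layer + 1])
                sizes.append((s_extra * base, s_extra * base))
        else:
            # Assumed SSD convention: aspect ratio = width / height.
            sizes.append((s * base * math.sqrt(ar), s * base / math.sqrt(ar)))
    return sizes
```

For the sample spec above, these sketches give 4, 6, 6, 6, 4, and 4 anchors per cell for the six predictor layers, and the seven scales satisfy the "one element longer" rule.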

Training the Model

Train the DSSD model using this command:

tlt dssd train -e <experiment_spec>
               -r <output_dir>
               -k <key>
               [--gpus <num_gpus>]
               [--gpu_index <gpu_index>]
               [--use_amp]
               [--log_file <log_file>]
               [-m <resume_model_path>]
               [--initial_epoch <initial_epoch>]

Required Arguments

-r, --results_dir: The path to the folder where the experiment output is written.
-k, --key: The encryption key to decrypt the model.
-e, --experiment_spec_file: The experiment specification file used to set up training.

Optional Arguments

--gpus <num_gpus>: The number of GPUs to use and processes to launch for training. The default is 1.
--gpu_index: The indices of the GPUs used to run training, when the machine has multiple GPUs installed.
--use_amp: A flag to enable automatic mixed precision (AMP) training.
--log_file: The path to the log file. The default is stdout.
-m, --resume_model_weights: The path to a pretrained model, or to a model to continue training from.
--initial_epoch: The epoch number to resume from.
--use_multiprocessing: Enable multiprocessing mode in the data generator.
-h, --help: Show this help message and exit.

Here’s an example of using the train command on a DSSD model:

tlt dssd train --gpus 2 -e /path/to/dssd_spec.txt -r /path/to/result -k $KEY
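When scripting experiments, the documented train options can be assembled programmatically. build_train_cmd below is a hypothetical helper that only builds the argument list from flags listed above; actually running it (for example with subprocess.run(cmd, check=True)) assumes the tlt launcher is installed and on PATH.

```python
import shlex


def build_train_cmd(spec, results_dir, key, gpus=1, use_amp=False,
                    log_file=None, resume_model=None, initial_epoch=None):
    """Build the argument list for `tlt dssd train` (hypothetical helper)."""
    cmd = ["tlt", "dssd", "train", "-e", spec, "-r", results_dir, "-k", key,
           "--gpus", str(gpus)]
    if use_amp:
        cmd.append("--use_amp")
    if log_file is not None:
        cmd += ["--log_file", log_file]
    if resume_model is not None:
        # Resume from a saved model instead of starting from scratch.
        cmd += ["-m", resume_model]
    if initial_epoch is not None:
        # Epoch number to continue counting from when resuming.
        cmd += ["--initial_epoch", str(initial_epoch)]
    return cmd


def as_shell(cmd):
    """Render the argument list as a shell-quoted string for logging."""
    return shlex.join(cmd)
```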

Evaluating the Model

Use the following command to run evaluation for a DSSD model:

tlt dssd evaluate -m <model>
                  -e <experiment_spec_file>
                  [-k <key>]
                  [--gpu_index <gpu_index>]
                  [--log_file <log_file>]

Required Arguments

-m, --model: The .tlt model or TRT engine to be evaluated.
-e, --experiment_spec_file: The experiment spec file to set up the evaluation experiment. This should be the same as the training spec file.

Optional Arguments

-h, --help: Show this help message and exit.
-k, --key: The encoding key for the .tlt model.
--gpu_index: The index of the GPU to run evaluation (useful when the machine has multiple GPUs installed). Note that evaluation can only run on a single GPU.
--log_file: The path to the log file. The default path is stdout.

Here is a sample command to evaluate a DSSD model:

tlt dssd evaluate -m /path/to/trained_tlt_dssd_model -k <model_key> -e /path/to/dssd_spec.txt

Running Inference on the Model

The inference tool for DSSD networks can be used to visualize bboxes or generate frame-by-frame KITTI format labels on a directory of images. Here’s an example of using this tool:

tlt dssd inference -i <input directory>
                   -o <output annotated image directory>
                   -e <experiment spec file>
                   -m <model file>
                   -k <key>
                   [-l <output label directory>]
                   [-t <visualization threshold>]
                   [--gpu_index <gpu_index>]
                   [--log_file <log_file>]

Required Arguments

-m, --model: The path to the pretrained model (TLT model).
-i, --in_image_dir: The directory of input images for inference.
-o, --out_image_dir: The directory path to output annotated images.
-k, --key: The key to load the model.
-e, --config_path: The path to an experiment spec file for training.

Optional Arguments

-t, --draw_conf_thres: The threshold for drawing a bbox. (default: 0.3)
-h, --help: Show this help message and exit.
-l, --out_label_dir: The directory to output KITTI labels to.
--gpu_index: The index of the GPU to run inference (useful when the machine has multiple GPUs installed). Note that inference can only run on a single GPU.
--log_file: The path to the log file. The default path is stdout.

Here is a sample of using inference with the DSSD model:

tlt dssd inference -i /path/to/input/images_dir -o /path/to/output/dir -m /path/to/trained_tlt_dssd_model -k <model_key> -e /path/to/dssd_spec.txt
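If you pass -l, the generated label files can be post-processed. This sketch assumes each output line holds the 15 KITTI fields followed by a confidence score as a 16th field; check a generated file before relying on that layout. load_detections is a hypothetical helper whose default threshold mirrors the -t default of 0.3.

```python
from pathlib import Path


def load_detections(label_dir, threshold=0.3):
    """Map image stem -> [(class, score, (xmin, ymin, xmax, ymax)), ...].

    Assumption: the confidence score is the 16th whitespace-separated field.
    """
    detections = {}
    for path in sorted(Path(label_dir).glob("*.txt")):
        kept = []
        for line in path.read_text().splitlines():
            parts = line.split()
            if len(parts) < 16:
                continue  # no score column on this line; skip it
            score = float(parts[15])
            if score >= threshold:
                xmin, ymin, xmax, ymax = map(float, parts[4:8])
                kept.append((parts[0], score, (xmin, ymin, xmax, ymax)))
        detections[path.stem] = kept
    return detections
```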

Pruning the Model

Pruning removes parameters from the model to reduce the model size without compromising the integrity of the model itself.

The prune command includes these parameters:

tlt dssd prune [-h]
               -m <pretrained_model>
               -o <output_file>
               -k <key>
               [-n <normalizer>]
               [-eq <equalization_criterion>]
               [-pg <pruning_granularity>]
               [-pth <pruning threshold>]
               [-nf <min_num_filters>]
               [-el [<excluded_list>]]
               [--gpu_index <gpu_index>]
               [--log_file <log_file>]

Required Arguments

-m, --pretrained_model: The path to the pretrained model.
-o, --output_file: The path to output checkpoints to.
-k, --key: The key to load a .tlt model.

Optional Arguments

-h, --help: Show this help message and exit.
-n, --normalizer: max to normalize by dividing each norm by the maximum norm within a layer; L2 to normalize by dividing by the L2 norm of the vector comprising all kernel norms. (default: max)
-eq, --equalization_criterion: The criterion used to equalize the stats of inputs to an element-wise op layer or a depth-wise convolutional layer. This parameter is useful for ResNets and MobileNets. Options are arithmetic_mean, geometric_mean, union, and intersection. (default: union)
-pg, --pruning_granularity: The number of filters to remove at a time. (default: 8)
-pth: The threshold to compare the normalized norm against. (default: 0.1)
-nf, --min_num_filters: The minimum number of filters to keep per layer. (default: 16)
-el, --excluded_layers: A list of excluded layers, for example -el item1 item2. (default: [])
--gpu_index: The index of the GPU to use (useful when the machine has multiple GPUs installed). Note that this command can only run on a single GPU.
--log_file: The path to the log file. The default is stdout.

Here’s an example of using the prune command:

tlt dssd prune -m /workspace/output/weights/resnet_003.tlt \
               -o /workspace/output/weights/resnet_003_pruned.tlt \
               -eq union \
               -pth 0.7 -k $KEY

After pruning, the model needs to be retrained. See Re-training the Pruned Model for more details.
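The normalizer, -pth, -pg, and -nf options interact roughly as in the sketch below, which estimates how many filters of a single layer survive. It is a simplified illustration, not TLT's pruning code: it ignores the equalization criterion and excluded layers, and filters_to_keep is a hypothetical name.

```python
import math


def filters_to_keep(filter_norms, threshold=0.1, granularity=8,
                    min_num_filters=16, normalizer="max"):
    """Estimate how many filters of one layer survive norm-based pruning."""
    n = len(filter_norms)
    if normalizer == "max":
        denom = max(filter_norms)
    elif normalizer == "L2":
        # L2 norm of the vector of all filter norms in the layer.
        denom = math.sqrt(sum(v * v for v in filter_norms))
    else:
        raise ValueError("normalizer must be 'max' or 'L2'")
    normalized = [v / denom for v in filter_norms] if denom > 0 else [0.0] * n
    survivors = sum(1 for v in normalized if v >= threshold)
    # Remove filters only in whole multiples of the pruning granularity.
    removed = ((n - survivors) // granularity) * granularity
    keep = n - removed
    # Keep at least min_num_filters (or the whole layer, if it is smaller).
    return max(keep, min(min_num_filters, n))
```

For example, a 64-filter layer where only 10 filters pass the threshold would keep 16 filters under the defaults: 54 candidates for removal round down to 48 removed.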

Re-training the Pruned Model

Once the model has been pruned, there might be a slight decrease in accuracy because some previously useful weights may have been removed. To regain accuracy, NVIDIA recommends that you retrain this pruned model over the same dataset. To do this, use the tlt dssd train command with an updated spec file that points to the newly pruned model as the pretrained model file.

Users are advised to turn off the regularizer in the training_config to recover the accuracy when retraining a pruned model. You can do this by setting the regularizer type to NO_REG. All the other parameters may be retained in the spec file from the previous training.
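When preparing the retraining spec, the regularizer type can be switched programmatically. disable_regularizer is a hypothetical helper; it assumes a single, non-nested regularizer { ... } block containing a type: field, as in the sample spec, and leaves the rest of the file untouched.

```python
import re

# Match "regularizer {" followed (within the same block) by "type: <VALUE>".
_REG_TYPE = re.compile(r"(regularizer\s*\{[^}]*?\btype:\s*)\w+")


def disable_regularizer(spec_text):
    """Return spec_text with the regularizer type set to NO_REG."""
    new_text, count = _REG_TYPE.subn(r"\1NO_REG", spec_text, count=1)
    if count == 0:
        raise ValueError("no regularizer block with a type field found")
    return new_text
```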

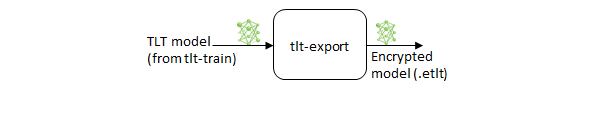

Exporting the Model

The Transfer Learning Toolkit includes the export command to export and prepare TLT models for deploying to DeepStream. The export command optionally generates the calibration cache for TensorRT INT8 engine calibration.

Exporting the model decouples the training process from inference and allows conversion to TensorRT engines outside the TLT environment. TensorRT engines are specific to each hardware configuration and should be generated for each unique inference environment; an engine may also be referred to as the .trt or .engine file. The same exported TLT model, referred to as the .etlt file or encrypted TLT file, may be used universally across training and deployment hardware. During model export, the TLT model is encrypted with a private key, which is required when you deploy the model for inference.

INT8 Mode Overview

TensorRT engines can be generated in INT8 mode to improve performance, but they require a calibration cache at engine creation time. If export is run with the --data_type flag set to int8, the calibration cache is generated using a calibration tensorfile.

Pre-generating the calibration information and caching it removes the need to calibrate the model on the inference machine. Moving the calibration cache is usually much more convenient than moving the calibration tensorfile, since the cache is a much smaller file and can be moved together with the exported model. Using the calibration cache also speeds up engine creation, because building the cache can take several minutes depending on the size of the tensorfile and the model itself.

The export tool can generate an INT8 calibration cache by ingesting training data in the following way:

Point the tool to a directory of images that you want to use to calibrate the model. For this option, make sure to create a sub-sampled directory of random images that best represent your training dataset.
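Because the export tool needs at least batch_size * batches images in --cal_image_dir, a random sub-sample can be prepared ahead of time. make_calibration_dir is a hypothetical helper; its defaults mirror the documented --batch_size 8 and --batches 10 export defaults and the accepted .jpg, .jpeg, and .png extensions.

```python
import random
import shutil
from pathlib import Path

IMAGE_EXTS = {".jpg", ".jpeg", ".png"}


def make_calibration_dir(src_dir, dst_dir, batch_size=8, batches=10, seed=42):
    """Copy a random subset of batch_size * batches images into dst_dir."""
    needed = batch_size * batches
    images = sorted(p for p in Path(src_dir).iterdir()
                    if p.is_file() and p.suffix.lower() in IMAGE_EXTS)
    if len(images) < needed:
        raise ValueError(f"need at least {needed} images, found {len(images)}")
    dst = Path(dst_dir)
    dst.mkdir(parents=True, exist_ok=True)
    # A fixed seed makes the sub-sample reproducible across runs.
    for path in random.Random(seed).sample(images, needed):
        shutil.copy2(path, dst / path.name)
    return needed
```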

FP16/FP32 Model

The calibration.bin is only required if you need to run inference at INT8 precision. For FP16/FP32-based inference, the export step is much simpler: all you need to do is provide a model from the train step to export to convert it into an encrypted TLT model.

Exporting command

Use the following command to export a DSSD model:

tlt dssd export [-h]
                -m <path to the .tlt model file generated by tlt train>
                -k <key>
                [-o <path to output file>]
                [--cal_data_file <path to tensor file>]
                [--cal_image_dir <path to the directory of images to calibrate the model>]
                [--cal_cache_file <path to output calibration file>]
                [--data_type <Data type for the TensorRT backend during export>]
                [--batches <Number of batches to calibrate over>]
                [--max_batch_size <maximum trt batch size>]
                [--max_workspace_size <maximum workspace size>]
                [--batch_size <batch size to TensorRT engine>]
                [--experiment_spec <path to experiment spec file>]
                [--engine_file <path to the TensorRT engine file>]
                [--verbose Verbosity of the logger]
                [--force_ptq Flag to force PTQ]

Required Arguments

-m, --model: The path to the .tlt model file to be exported using export.
-k, --key: The key used to save the .tlt model file.
-e, --experiment_spec: The path to the spec file.

Optional Arguments

-o, --output_file: The path to save the exported model to. The default path is ./<input_file>.etlt.
--data_type: The desired engine data type. The options are “fp32”, “fp16”, and “int8”. The default value is “fp32”. If using int8, the following INT8 arguments are required.
-s, --strict_type_constraints: A Boolean flag to indicate whether or not to apply the TensorRT strict_type_constraints when building the TensorRT engine. Note this is only for applying the strict type of INT8 mode.

INT8 Export Mode Required Arguments

--cal_data_file: The tensorfile generated from tlt-int8-tensorfile for calibrating the engine. This can also be an output file if used with --cal_image_dir.
--cal_image_dir: The directory of images to use for calibration.

Note

The --cal_image_dir parameter applies the necessary preprocessing to generate a tensorfile at the path mentioned in the --cal_data_file parameter, which is in turn used for calibration. The number of generated batches in the tensorfile is obtained from the value set to the --batches parameter, and the batch_size is obtained from the value set to the --batch_size parameter. Ensure that the directory mentioned in --cal_image_dir has at least batch_size * batches images in it. The valid image extensions are .jpg, .jpeg, and .png. In this case, the input_dimensions of the calibration tensors are derived from the input layer of the .tlt model.

INT8 Export Optional Arguments

--cal_cache_file: The path to save the calibration cache file to. The default value is ./cal.bin.
--batches: The number of batches to use for calibration and inference testing. The default value is 10.
--batch_size: The batch size to use for calibration. The default value is 8.
--max_batch_size: The maximum batch size of the TensorRT engine. The default value is 16.
--max_workspace_size: The maximum workspace size of the TensorRT engine. The default value is 1073741824 = 1<<30.
--experiment_spec: The experiment_spec for training/inference/evaluation. For FasterRCNN, this is used to generate the graphsurgeon config script from the experiment_spec (this is only useful for FasterRCNN). For DetectNet_v2 and FasterRCNN, it is also used to set up the dataloader-based calibrator, which leverages the training dataloader to calibrate the model.
--engine_file: The path to the serialized TensorRT engine file. Note that this file is hardware specific and cannot be generalized across GPUs. Use this argument to quickly test your model accuracy using TensorRT on the host. Because the TensorRT engine file is hardware specific, you cannot use it for deployment unless the deployment GPU is identical to the training GPU.
--force_ptq: A Boolean flag to force post-training quantization on the exported .etlt model.

Note

When exporting a model that was trained with QAT enabled, the tensor scale factors to calibrate the activations are peeled out of the model and serialized to a TensorRT-readable cache file defined by the cal_cache_file argument. However, the current version of QAT doesn’t natively support DLA int8 deployment on Jetson. To deploy this model on Jetson with DLA int8, use the --force_ptq flag to use TensorRT post-training quantization to generate the calibration cache file.

Exporting a Model

Here’s a sample command using the --cal_image_dir option:

tlt dssd export -m $USER_EXPERIMENT_DIR/data/dssd/dssd_kitti_retrain_epoch12.tlt \
                -o $USER_EXPERIMENT_DIR/data/dssd/dssd_kitti_retrain.int8.etlt \
                -e $SPECS_DIR/dssd_kitti_retrain_spec.txt \
                --key $KEY \
                --cal_image_dir $USER_EXPERIMENT_DIR/data/KITTI/val/image_2 \
                --data_type int8 \
                --batch_size 8 \
                --batches 10 \
                --cal_data_file $USER_EXPERIMENT_DIR/data/dssd/cal.tensorfile \
                --cal_cache_file $USER_EXPERIMENT_DIR/data/dssd/cal.bin \
                --engine_file $USER_EXPERIMENT_DIR/data/dssd/detection.trt

Deploying to DeepStream

To run a DSSD model in DeepStream, you need a label file and a DeepStream configuration file. In addition, you need to compile the TensorRT 7+ open source software (OSS) and the DSSD bounding-box parser for DeepStream.

A DeepStream sample with documentation on how to run inference using the trained DSSD models from TLT is provided here on GitHub.

Prerequisites for DSSD Model

DSSD requires the batchTilePlugin, which is available in the TensorRT open source repo, but not in TensorRT 7.0. Detailed instructions to build TensorRT OSS can be found in TensorRT Open Source Software (OSS).

DSSD requires custom bounding-box parsers that are not built into the DeepStream SDK. The source code to build custom bounding-box parsers for DSSD is available here. The following instructions can be used to build the bounding-box parser:

Step 1: Install git-lfs (git >= 1.8.2):

Note

git-lfs is needed to support downloading model files larger than 5MB.

curl -s https://packagecloud.io/install/repositories/github/git-lfs/script.deb.sh | sudo bash
sudo apt-get install git-lfs
git lfs install

Step 2: Download the source code with HTTPS:

git clone -b release/tlt3.0 https://github.com/NVIDIA-AI-IOT/deepstream_tlt_apps

Step 3: Build:

# or Path for DS installation
export CUDA_VER=10.2  # CUDA version, e.g. 10.2
make

This generates libnvds_infercustomparser_tlt.so in the directory post_processor.

Label File

The label file is a text file containing the names of the classes (including background) that the DSSD model is trained to detect. The order in which the classes are listed in the label file must match the order in which the model predicts the output. During training, TLT DSSD sets background as the first class, specifies all other target class names in lower case, and sorts them in alphabetical order. For example, if the dataset_config is:

dataset_config {
  data_sources: {
    label_directory_path: "/path/to/train/labels"
    image_directory_path: "/path/to/train/images"
  }
  include_difficult_in_training: true
  target_class_mapping {
    key: "car"
    value: "car"
  }
  target_class_mapping {
    key: "person"
    value: "person"
  }
  target_class_mapping {
    key: "cyclist"
    value: "cyclist"
  }
}

Then, the corresponding classification_labels.txt file would look like this:

background
car
cyclist
person
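The ordering rule above can be expressed in a few lines. This is a sketch assuming the label order is exactly background followed by the sorted, lower-cased values of target_class_mapping (several keys mapping to the same value, such as van mapping to car, collapse into one class); the helper names are hypothetical.

```python
from pathlib import Path


def label_file_lines(target_class_mapping):
    """Background first, then unique lower-cased target classes in sorted order."""
    classes = sorted({value.lower() for value in target_class_mapping.values()})
    return ["background"] + classes


def write_label_file(path, target_class_mapping):
    """Write one class name per line, in the order assumed above."""
    lines = label_file_lines(target_class_mapping)
    Path(path).write_text("\n".join(lines) + "\n")
    return lines
```

Under this assumption, the mapping from the sample spec at the top of this page (car, pedestrian, cyclist, van to car, person_sitting to pedestrian) would yield background, car, cyclist, pedestrian.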

DeepStream Configuration File

The detection model is typically used as a primary inference engine. It can also be used as a secondary inference engine. To run this model in the sample deepstream-app, you must modify the existing config_infer_primary.txt file to point to this model as well as the custom parser.

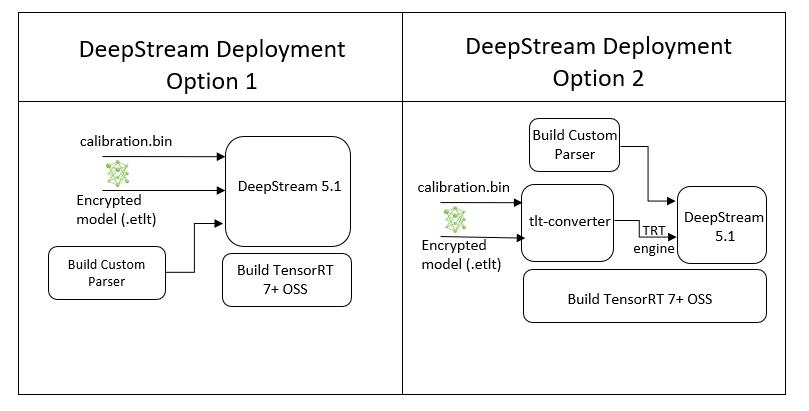

Option 1: Integrate the model (.etlt) directly in the DeepStream app.

For this option, add the following parameters to the configuration file. The int8-calib-file is only required for INT8 precision.

tlt-encoded-model=<TLT exported .etlt>
tlt-model-key=<Model export key>
int8-calib-file=<Calibration cache file>

The tlt-encoded-model parameter points to the exported model (.etlt) from TLT. The tlt-model-key is the encryption key used during model export.

Option 2: Integrate the TensorRT engine file with the DeepStream app.

Generate the TensorRT engine using tlt-converter. Once the engine file is generated successfully, modify the following parameter to use this engine with DeepStream:

model-engine-file=<PATH to generated TensorRT engine>

All other parameters are common between the two approaches. Add the label file generated above using the following:

labelfile-path=<Classification labels>

For all the options, see the configuration file below. To learn more about the parameters, refer to the DeepStream Development Guide.

[property]
gpu-id=0
net-scale-factor=1.0
offsets=103.939;116.779;123.68
model-color-format=1
labelfile-path=<Path to dssd_labels.txt>
tlt-encoded-model=<Path to DSSD TLT model>
tlt-model-key=<Key to decrypt model>
uff-input-dims=3;384;1248;0
uff-input-blob-name=Input
batch-size=1
## 0=FP32, 1=INT8, 2=FP16 mode
network-mode=0
num-detected-classes=3
interval=0
gie-unique-id=1
is-classifier=0
#network-type=0
output-blob-names=NMS
parse-bbox-func-name=NvDsInferParseCustomNMSTLT
custom-lib-path=<Path to libnvds_infercustomparser_tlt.so>

[class-attrs-all]
threshold=0.3
roi-top-offset=0
roi-bottom-offset=0
detected-min-w=0
detected-min-h=0
detected-max-w=0
detected-max-h=0
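Before launching deepstream-app, a quick consistency check of the [property] section can catch missing keys. check_infer_config is a hypothetical helper built on Python's standard configparser; it only encodes the requirements stated in this section (Option 1 or Option 2 model keys, int8-calib-file for INT8 mode, the label file, and the custom parser settings) and is not a DeepStream tool.

```python
import configparser


def check_infer_config(text):
    """Return a list of problems found in an nvinfer-style config (sketch)."""
    cfg = configparser.ConfigParser(interpolation=None)
    cfg.optionxform = str  # keep key case exactly as written
    cfg.read_string(text)
    prop = cfg["property"]
    problems = []
    if "tlt-encoded-model" not in prop and "model-engine-file" not in prop:
        problems.append("set tlt-encoded-model (option 1) or model-engine-file (option 2)")
    if "tlt-encoded-model" in prop and "tlt-model-key" not in prop:
        problems.append("tlt-encoded-model requires tlt-model-key")
    # network-mode: 0=FP32, 1=INT8, 2=FP16 (per the comment in the sample).
    if prop.get("network-mode") == "1" and "int8-calib-file" not in prop:
        problems.append("INT8 mode (network-mode=1) needs int8-calib-file")
    for key in ("labelfile-path", "parse-bbox-func-name", "custom-lib-path"):
        if key not in prop:
            problems.append(f"missing {key}")
    return problems
```

Lines starting with # (such as ## 0=FP32, 1=INT8, 2=FP16 mode) are treated as comments by configparser, so the sample file above can be checked as-is once its placeholders are filled in.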