Open Model Architectures¶

Transfer Learning Toolkit (TLT) supports image classification; seven object detection architectures (YOLOv3, YOLOv4, FasterRCNN, SSD, DSSD, RetinaNet, and DetectNet_v2); one instance segmentation architecture (MaskRCNN); and one semantic segmentation architecture (UNet). TLT also supports 16 classification backbones. For the complete list of supported architecture and backbone combinations, see the matrix below:

DetectNet_V2 through YOLOv4 are object detection architectures, MaskRCNN is the instance segmentation architecture, and UNet is the semantic segmentation architecture.

| Backbone | Image Classification | DetectNet_V2 | FasterRCNN | SSD | YOLOv3 | RetinaNet | DSSD | YOLOv4 | MaskRCNN | UNet |
|---|---|---|---|---|---|---|---|---|---|---|
| ResNet10/18/34/50/101 | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes |
| VGG 16/19 | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | | Yes |
| GoogLeNet | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | | |
| MobileNet V1/V2 | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | | |
| SqueezeNet | Yes | Yes | | Yes | Yes | Yes | Yes | Yes | | |
| DarkNet 19/53 | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | | |
| CSPDarkNet 19/53 | Yes | | | | | | | Yes | | |
| EfficientNet B0 | Yes | | Yes | Yes | | Yes | Yes | | | |

Model Requirements¶

Classification¶

Input size: 3 * H * W (W, H >= 16)

Input format: JPG, JPEG, PNG

Note

Classification input images do not need to be manually resized. The input dataloader resizes images as needed.

Object Detection¶

DetectNet_v2¶

Input size: C * W * H (where C = 1 or 3, W >= 480, H >= 272, and W, H are multiples of 16)

Image format: JPG, JPEG, PNG

Label format: KITTI detection

Note

The train tool does not support training on images of multiple resolutions or resizing images during training. All of the images must be resized offline to the final training size, and the corresponding bounding boxes must be scaled accordingly.
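The box scaling described in the note above can be sketched as follows. This is a minimal illustration, not part of TLT: the function name `scale_kitti_label_line` and the sample label are hypothetical, and it assumes the standard KITTI layout in which fields 4 to 7 of each line hold the box as left, top, right, bottom in pixels. The images themselves still need to be resized separately with an image library of your choice.

```python
def scale_kitti_label_line(line, src_size, dst_size):
    """Rescale the 2D bounding box of one KITTI label line.

    src_size and dst_size are (width, height) tuples in pixels.
    The function name and signature are illustrative, not a TLT API.
    """
    src_w, src_h = src_size
    dst_w, dst_h = dst_size
    scale_x = dst_w / src_w
    scale_y = dst_h / src_h

    fields = line.split()
    # KITTI fields 4..7 hold the box as left, top, right, bottom (pixels).
    left, top, right, bottom = (float(v) for v in fields[4:8])
    scaled = (left * scale_x, top * scale_y, right * scale_x, bottom * scale_y)
    fields[4:8] = [f"{v:.2f}" for v in scaled]
    return " ".join(fields)


# Hypothetical label for a 1920x1080 image, rescaled to 960x544
# (both target dimensions are multiples of 16, as DetectNet_v2 requires).
label = "car 0.00 0 0.00 100.00 50.00 300.00 150.00 0.00 0.00 0.00 0.00 0.00 0.00 0.00"
print(scale_kitti_label_line(label, src_size=(1920, 1080), dst_size=(960, 544)))
```

Because width and height are scaled independently, a target size with a different aspect ratio stretches the boxes along with the image, so the labels stay aligned with the resized pixels.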

FasterRCNN¶

Input size: C * W * H (where C = 1 or 3, W >= 160, H >= 160)

Image format: JPG, JPEG, PNG

Label format: KITTI detection

Note

The train tool does not support training on images of multiple resolutions or resizing images during training. All of the images must be resized offline to the final training size, and the corresponding bounding boxes must be scaled accordingly.

SSD¶

Input size: C * W * H (where C = 1 or 3, W >= 128, H >= 128)

Image format: JPG, JPEG, PNG

Label format: KITTI detection

DSSD¶

Input size: C * W * H (where C = 1 or 3, W >= 128, H >= 128)

Image format: JPG, JPEG, PNG

Label format: KITTI detection

YOLOv3¶

Input size: C * W * H (where C = 1 or 3, W >= 128, H >= 128, W, H are multiples of 32)

Image format: JPG, JPEG, PNG

Label format: KITTI detection

YOLOv4¶

Input size: C * W * H (where C = 1 or 3, W >= 128, H >= 128, W, H are multiples of 32)

Image format: JPG, JPEG, PNG

Label format: KITTI detection

RetinaNet¶

Input size: C * W * H (where C = 1 or 3, W >= 128, H >= 128, W, H are multiples of 32)

Image format: JPG, JPEG, PNG

Label format: KITTI detection
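The object detection input-size rules listed above can be checked before training starts. The sketch below transcribes those rules into a lookup table; the names `DETECTION_INPUT_RULES` and `check_input_size` are hypothetical helpers for illustration and are not part of TLT.

```python
# Rules transcribed from the object detection requirements above:
# (allowed channel counts, minimum width, minimum height, required multiple).
# A multiple of None means the section lists no divisibility requirement.
DETECTION_INPUT_RULES = {
    "DetectNet_v2": ((1, 3), 480, 272, 16),
    "FasterRCNN": ((1, 3), 160, 160, None),
    "SSD": ((1, 3), 128, 128, None),
    "DSSD": ((1, 3), 128, 128, None),
    "YOLOv3": ((1, 3), 128, 128, 32),
    "YOLOv4": ((1, 3), 128, 128, 32),
    "RetinaNet": ((1, 3), 128, 128, 32),
}


def check_input_size(model, channels, width, height):
    """Return a list of rule violations (empty when the size is valid)."""
    allowed_channels, min_w, min_h, multiple = DETECTION_INPUT_RULES[model]
    problems = []
    if channels not in allowed_channels:
        problems.append(f"C must be one of {allowed_channels}, got {channels}")
    if width < min_w:
        problems.append(f"W must be >= {min_w}, got {width}")
    if height < min_h:
        problems.append(f"H must be >= {min_h}, got {height}")
    if multiple is not None and (width % multiple or height % multiple):
        problems.append(
            f"W and H must be multiples of {multiple}, got {width}x{height}"
        )
    return problems


print(check_input_size("DetectNet_v2", 3, 960, 544))    # valid size
print(check_input_size("DetectNet_v2", 3, 1920, 1080))  # 1080 is not a multiple of 16
```

Returning a list of messages rather than raising on the first failure lets a dataset preparation script report every problem with a chosen size in one pass.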

Instance Segmentation¶

MaskRCNN¶

Input size: C * W * H (where C = 3, W >= 128, H >= 128, and W, H are multiples of 32)

Image format: JPG

Label format: COCO detection

Semantic Segmentation¶

UNet¶

Input size: C * W * H (where C = 3, W >= 128, H >= 128, and W, H are multiples of 32)

Image format: JPG, JPEG, PNG, BMP

Label format: Image/Mask pair

Note

The train tool does not support training on images of multiple resolutions. All of the images and masks must be of equal size.

However, the images and masks do not need to match the model input size. They are resized to the model input size during training.
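The equal-size requirement in the note above can be verified before training. The sketch below is a hypothetical helper, not part of TLT. It assumes you have already collected each file's (width, height), for example from an image library, and it flags every image/mask pair that deviates from the first image's size.

```python
def find_size_mismatches(pairs):
    """Return names whose image or mask size differs from the first image's size.

    pairs is an iterable of (name, image_size, mask_size), where each size
    is a (width, height) tuple. Illustrative helper, not a TLT API.
    """
    pairs = list(pairs)
    if not pairs:
        return []
    reference = pairs[0][1]  # size of the first image sets the expected size
    return [
        name
        for name, image_size, mask_size in pairs
        if image_size != reference or mask_size != reference
    ]


# Hypothetical dataset listing.
dataset = [
    ("frame_0001", (640, 480), (640, 480)),
    ("frame_0002", (640, 480), (320, 240)),  # mask saved at half size
    ("frame_0003", (800, 600), (800, 600)),  # different resolution
]
print(find_size_mismatches(dataset))
```

The sizes here are not multiples of 32 on purpose: only the model input size has to satisfy that rule, since the images and masks are resized to it during training.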

Training¶

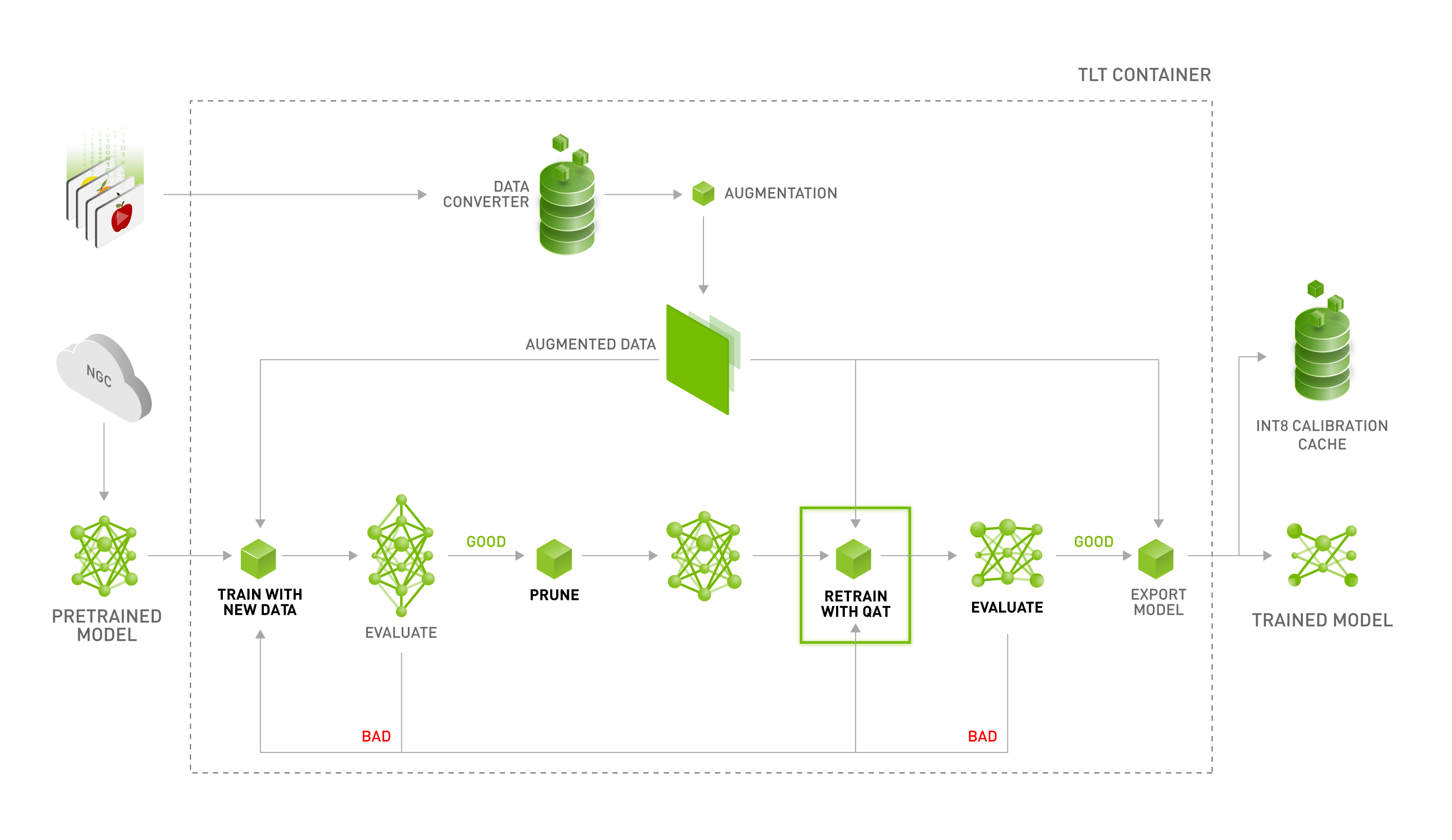

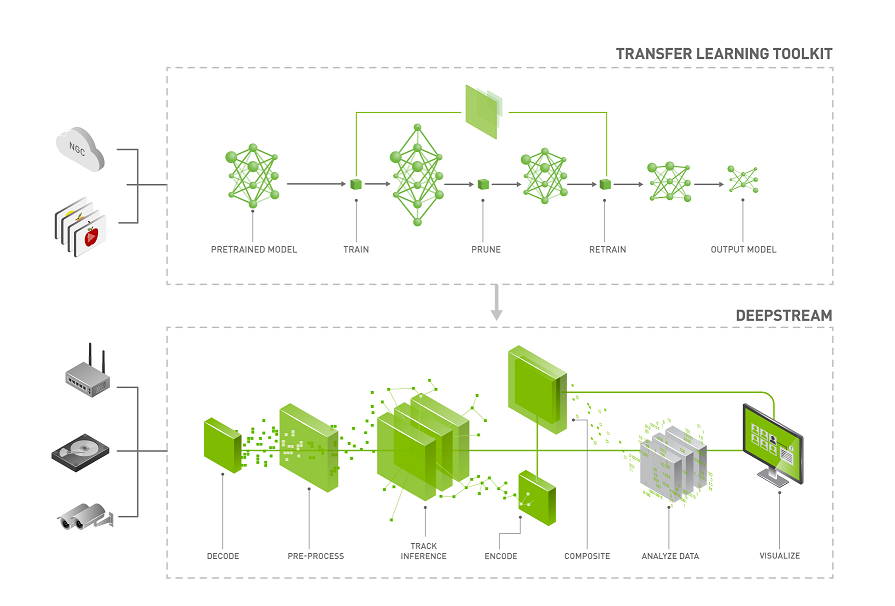

The TLT container includes Jupyter notebooks and the spec files needed to train any supported network combination. Pre-trained weights for each backbone are provided on NGC. The pre-trained models were trained on the Open Images dataset, and their weights provide a good starting point for applying transfer learning to your own dataset.

To get started, first choose the type of model that you want to train, then go to the appropriate model card on NGC and choose one of the supported backbones.

| Model to train | NGC model card |
|---|---|
| YOLOv3 | |
| YOLOv4 | |
| SSD | |
| FasterRCNN | |
| RetinaNet | |
| DSSD | |
| DetectNet_v2 | |
| MaskRCNN | |
| Image Classification | |
| UNet | |

Once you pick the appropriate pre-trained model, follow the TLT workflow to use your dataset and pre-trained model to export a tuned model that is adapted to your use case. The TLT Workflow sections walk you through all the steps in training.

Deployment¶

You can deploy your trained model on any edge device using DeepStream and TensorRT. See the Deploying to DeepStream chapter for each network for deployment instructions.

| Model | Model output format | Prunable | INT8 | Compatible with DS5.0/5.0.1 | Compatible with DS5.1 | TRT-OSS required |
|---|---|---|---|---|---|---|
| DetectNet_v2 | Encrypted UFF | Yes | Yes | Yes | Yes | No |
| FasterRCNN | Encrypted UFF | Yes | Yes | Yes | Yes | Yes |
| SSD | Encrypted UFF | Yes | Yes | Yes | Yes | Yes |
| YOLOv3 | Encrypted UFF | Yes | Yes | Yes | Yes (with TRT 7.1) | Yes |
| YOLOv4 | Encrypted UFF | Yes | Yes | Yes | Yes (with TRT 7.1) | Yes |
| DSSD | Encrypted UFF | Yes | Yes | Yes | Yes | Yes |
| RetinaNet | Encrypted UFF | Yes | Yes | Yes | Yes | Yes |
| MaskRCNN | Encrypted UFF | No | Yes | | | |
| UNet | Encrypted ONNX | No | Yes | No | Yes | |