BlueField Software Overview

NVIDIA provides software which enables users to fully utilize the NVIDIA® BlueField® DPU and enjoy the rich feature-set it provides. Using the BlueField software packages, users are able to:

Quickly and easily boot an initial Linux image on your development board

Port existing applications to and develop new applications for BlueField

Patch, configure, rebuild, update or otherwise customize your image

Debug, profile, and tune their development system using open source development tools taking advantage of the diverse and vibrant Arm ecosystem

The BlueField family of DPU devices combines an array of 64-bit Armv8 A72 cores coupled with the NVIDIA® ConnectX® interconnect. Standard Linux distributions run on the Arm cores allowing common open source development tools to be used. Developers should find the programming environment familiar and intuitive which in turn allows them to quickly and efficiently design, implement and verify their control-plane and data-plane applications.

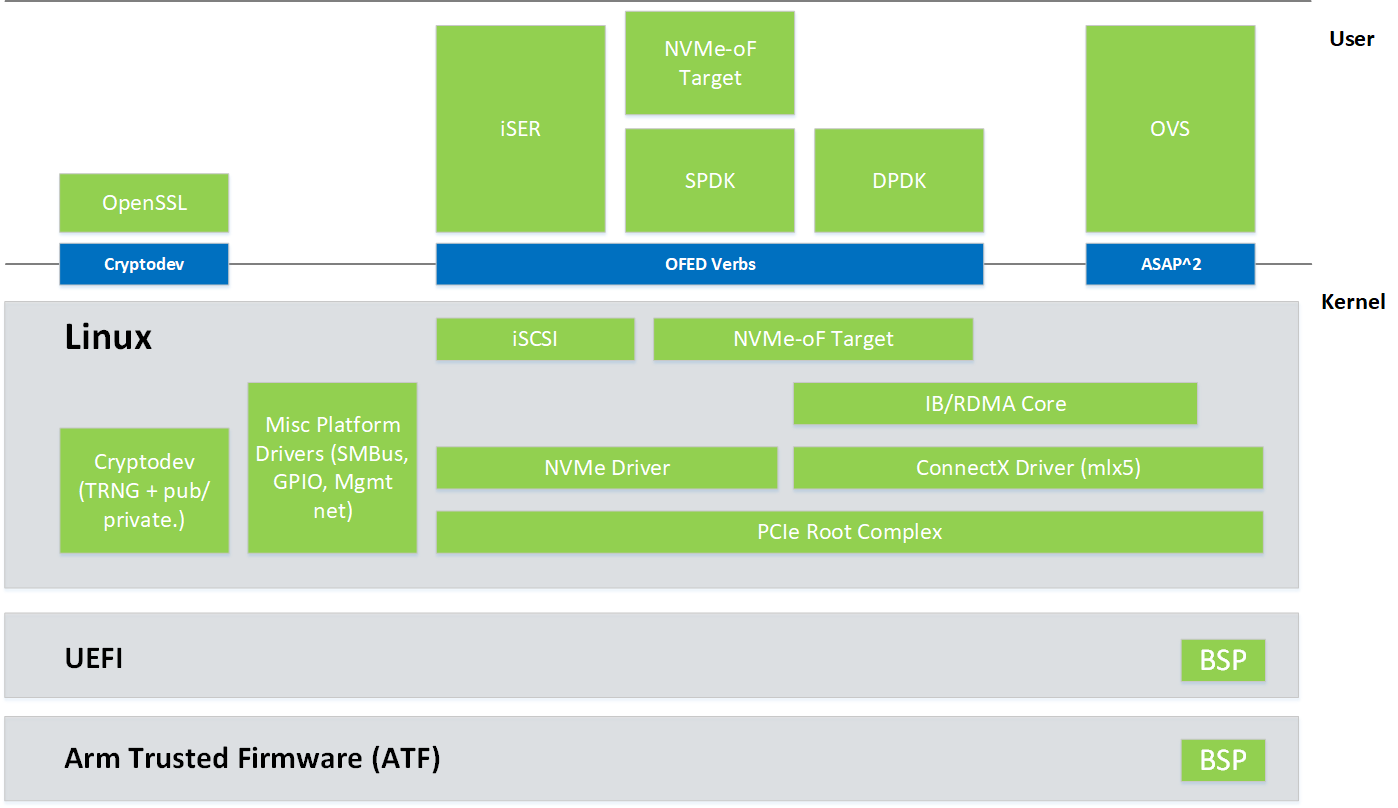

BlueField SW ships with the NVIDIA ® BlueField ® Reference Platform. BlueField SW is a reference Linux distribution based on the Ubuntu Server distribution extended to include the DOCA stack for Arm and a Linux kernel which supports NVMe-oF. This software distribution can run all customer-based Linux applications seamlessly.

The following are other software elements delivered with BlueField DPU:

Arm Trusted Firmware (ATF) for BlueField

UEFI for BlueField

OpenBMC for BMC (ASPEED 2500) found on development board

DOCA stack

Mellanox MFT

BlueField DPU includes hardware support for the Arm DS5 suite as well as CoreSight™ debug. As such, a wide range of commercial off-the-shelf Arm debug tools should work seamlessly with BlueField. The CoreSight debugger interface can be accessed via RShim interface (USB or PCIe if using DPU) as well which could be used for debugging with open-source tools like OpenOCD.

The BlueField DPU also supports the ubiquitous GDB.

BlueField software provides the foundation for building a JBOF (Just a Bunch of Flash) storage system including NVMe-oF target software, PCIe switch support, NVDIMM-N support, and NVMe disk hot-swap support.

BlueField SW allows enabling ConnectX offload such as RDMA/RoCE, T10 DIF signature offload, erasure coding offload, iSER, Storage Spaces Direct, and more.

The BlueField architecture is a combination of two preexisting standard off-the-shelf components, Arm AArch64 processors, and ConnectX-5 (for BlueField), ConnectX-6 Dx (for BlueField-2), or network controller, each with its own rich software ecosystem. As such, almost any of the programmer-visible software interfaces in BlueField come from existing standard interfaces for the respective components.

The Arm related interfaces (including those related to the boot process, PCIe connectivity, and cryptographic operation acceleration) are standard Linux on Arm interfaces. These interfaces are enabled by drivers and low-level code provided by NVIDIA as part of the BlueField software delivered and upstreamed to respective open-source projects, such as Linux.

The ConnectX network controller-related interfaces (including those for Ethernet and InfiniBand connectivity, RDMA and RoCE, and storage and network operation acceleration) are identical to the interfaces that support ConnectX standalone network controller cards. These interfaces take advantage of the DOCA software stack and InfiniBand verbs-based interfaces to support software.

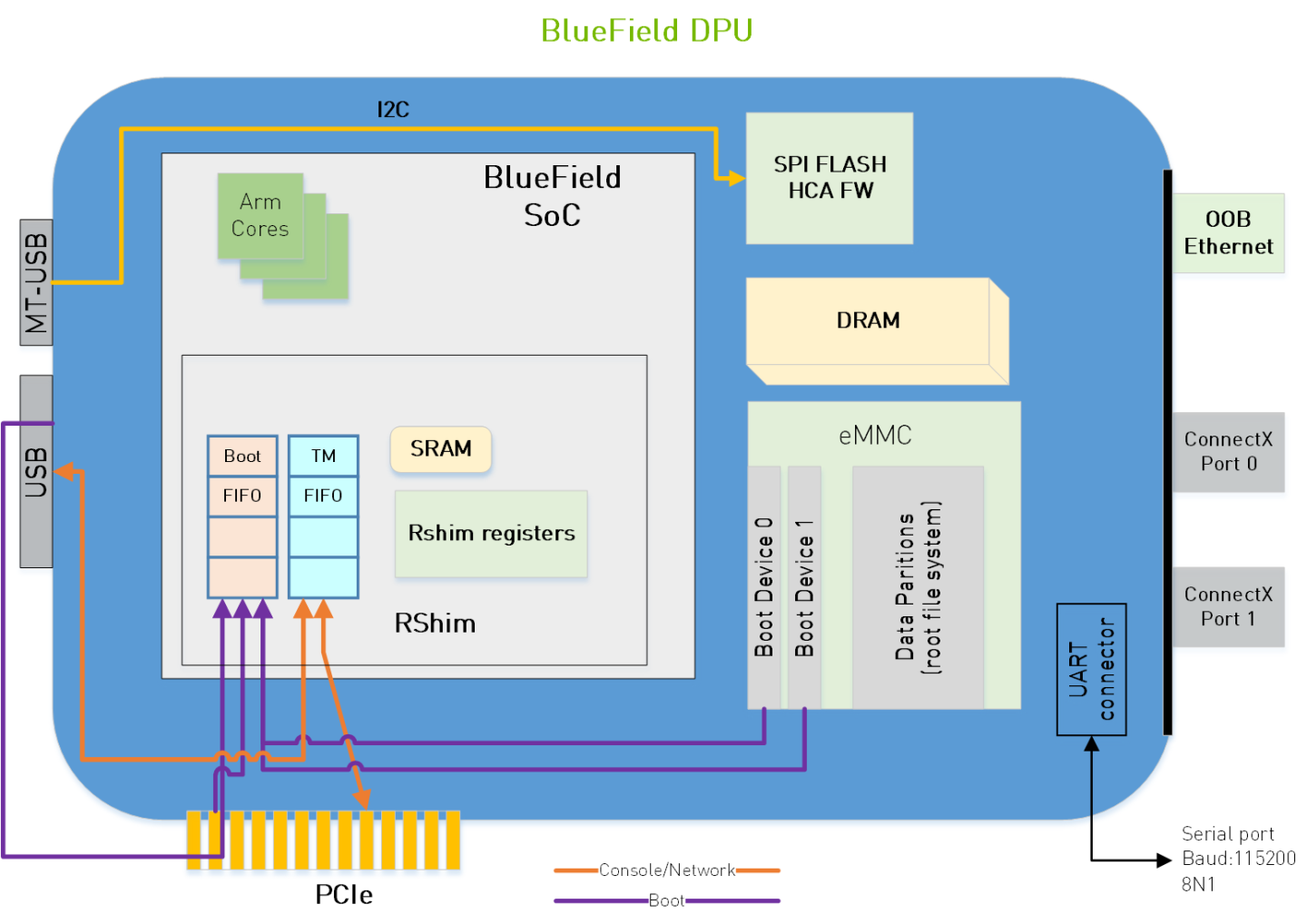

The BlueField DPU has multiple connections. Users can connect to the system via different consoles, network connections, and a JTAG connector.

System Consoles

The BlueField DPU has multiple console interfaces:

Serial console 0 (/dev/ttyAMA0 on the Arm cores)

Requires cable to NC-SI connector on DPU 25G

Requires serial cable to 3-pin connector on DPU 100G

Connected to BMC serial port on BF1200 platforms

Serial console 1 (/dev/ttyAMA1 on the Arm cores but only for BF1200 reference platform)

ttyAMA1 is the console connection on the front panel of the BF1200

Virtual RShim console (/dev/hvc0 on the Arm cores) is driven by

The RShim PCIe driver (does not require a cable but the system cannot be in isolation mode as isolation mode disables the PCIe device needed)

The RShim USB driver (requires USB cable)

It is not possible to use both the PCIe and USB RShim interfaces at the same time

Network Interfaces

The DPU has multiple network interfaces.

ConnectX Ethernet/InfiniBand interfaces

RShim virtual Ethernet interface (via USB or PCIe)

The virtual Ethernet interface can be very useful for debugging, installation, or basic management. The name of the interface on the host DPU server depends on the host operating system. The interface name on the Arm cores is normally "tmfifo_net0". The virtual network interface is only capable of roughly 10MB/s operation and should not be considered for production network traffic.OOB Ethernet interface (BlueField-2 only)

BlueField-2 based platforms feature an OOB 1GbE management port. This interface provides a 1Gb/s full duplex connection to the Arm cores. The interface name is normally "oob_net0". The interface enables TCP/IP network connectivity to the Arm cores (e.g. for file transfer protocols, SSH, and PXE boot). The OOB port is not a path for the BlueField-2 boot stream (i.e. any attempt to push a BFB to this port will not work).