Install the NVIDIA GPU Operator

Added in version 2.0.

The GPU Operator lets DevOps engineers who run Kubernetes clusters manage GPU nodes just like CPU nodes. It installs the software components needed to run GPU-accelerated applications on Kubernetes and manages their lifecycle.

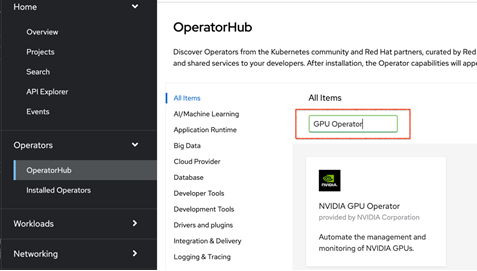

In the OpenShift Container Platform web console, navigate to Operators > OperatorHub from the side menu and ensure All Items is selected.

In Operators > OperatorHub, search for the NVIDIA GPU Operator.

Note

For additional information, see the Red Hat OpenShift Container Platform documentation.

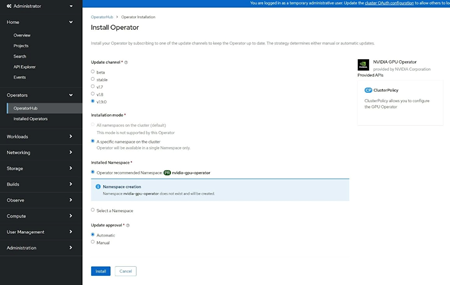

Select the NVIDIA GPU Operator and click Install. On the subsequent screen, click Install again.

The Install Operator dialog is displayed. Click Install.

Note

Here, you can select the namespace in which to deploy the GPU Operator. The suggested namespace is nvidia-gpu-operator. Under Select a Namespace, you can choose any existing namespace or create a new one.

If you install in a namespace other than nvidia-gpu-operator, the GPU Operator does not automatically enable namespace monitoring, and Prometheus does not collect its metrics and alerts. If only trusted operators are installed in this namespace, you can enable namespace monitoring manually with this command:

$ oc label ns/$NAMESPACE_NAME openshift.io/cluster-monitoring=true
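If you create the namespace from a manifest rather than in the web console, you can set the same label declaratively. The following is a minimal sketch, not part of the documented procedure. The namespace name my-gpu-operator is a hypothetical placeholder, so substitute the namespace you actually selected.

```yaml
# Sketch: a Namespace carrying the cluster-monitoring label.
# "my-gpu-operator" is a hypothetical name used for illustration only.
apiVersion: v1
kind: Namespace
metadata:
  name: my-gpu-operator
  labels:
    # Label values must be strings, so "true" is quoted.
    openshift.io/cluster-monitoring: "true"
```

Assuming the manifest is saved as namespace.yaml (an illustrative file name), apply it with oc apply -f namespace.yaml. As with the command above, add this label only if every operator installed in the namespace is trusted.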