User-Centric Timing for KV Cache Benchmarking


Use user-centric timing when you need to:

- Space each user’s turns num_users / QPS seconds apart, enabling controlled cache TTL testing

Imagine a customer support chatbot serving 15 concurrent users. Each user:

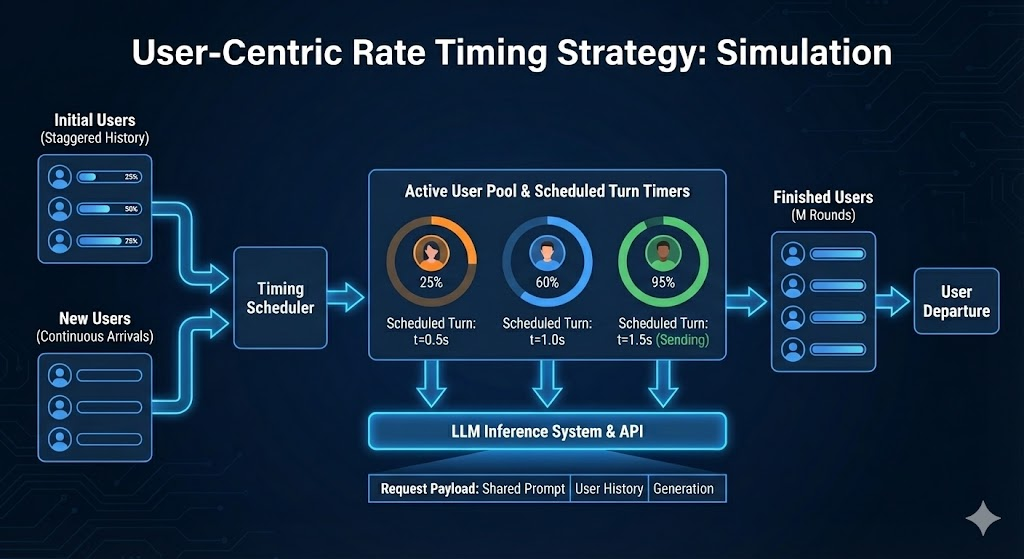

User-centric timing recreates this pattern with controlled, consistent timing. You can test whether your KV cache retains entries for exactly 15 seconds, 30 seconds, or any specific gap—something request-rate mode doesn’t guarantee because continuation turns are issued at the next available rate interval rather than after a fixed per-user delay.

This configures 15 simulated users with sessions averaging 20 turns:

In request-rate mode, after a turn completes, the next turn is queued and issued at the next rate interval. This means per-user turn gaps vary depending on when the previous turn finished relative to the rate clock—making it hard to test specific cache TTL thresholds.

User-centric timing solves this with fixed per-user turn gaps:

The gap between each user’s requests is num_users / QPS seconds, where QPS is the rate given to --user-centric-rate.
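A minimal Python sketch of that relationship, reconstructed from the worked examples in this guide (Gap = 15 / 4.0 = 3.75 seconds). The function name `turn_gap_seconds` is illustrative and not part of the tool:

```python
def turn_gap_seconds(num_users: int, qps: float) -> float:
    """Seconds between consecutive requests from the same simulated user.

    Assumes the relationship gap = num_users / QPS used in this guide's examples.
    """
    if num_users <= 0 or qps <= 0:
        raise ValueError("num_users and qps must be positive")
    return num_users / qps


if __name__ == "__main__":
    print(turn_gap_seconds(15, 4.0))  # 3.75
    print(turn_gap_seconds(15, 0.5))  # 30.0
```

Raising the rate or lowering the user count both shorten the gap, which is why the two settings have to be chosen together when targeting a specific cache TTL.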

User-centric mode uses “virtual history” to simulate steady-state behavior immediately. Instead of all users starting at turn 0 simultaneously, users are assigned virtual “ages” at startup—creating an immediate mix of new users and continuations that simulates joining an already-running system.
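One way to picture virtual history is the sketch below. It is an illustration under stated assumptions, not the tool's actual algorithm: it assumes first requests are staggered evenly at 1 / QPS intervals across one gap, and that each user's virtual age is a random number of already-completed turns. The names `assign_virtual_ages`, `first_send_offset_s`, and `virtual_turns_done` are hypothetical.

```python
import random


def assign_virtual_ages(num_users: int, qps: float, session_turns: int, seed: int = 0):
    """Give each user a start offset and a pretend number of completed turns.

    Assumption (not confirmed by the tool): offsets are spread evenly over one
    per-user gap, and virtual ages are drawn uniformly from [0, session_turns).
    """
    rng = random.Random(seed)
    users = []
    for i in range(num_users):
        users.append(
            {
                "user": i,
                # Even stagger: user i first sends i / qps seconds after start.
                "first_send_offset_s": i / qps,
                # Age 0 behaves like a new user; age > 0 behaves like a continuation.
                "virtual_turns_done": rng.randrange(session_turns),
            }
        )
    return users


if __name__ == "__main__":
    for user in assign_virtual_ages(num_users=15, qps=1.0, session_turns=20)[:3]:
        print(user)
```

With staggered offsets, the aggregate request rate is already at the target from the first second, instead of ramping up from a synchronized burst at turn 0.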

When a response takes longer than the turn gap, the scheduler:

This avoids burst load from catching up to the original schedule.
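The no-catch-up idea can be sketched as follows. This is a simplified model that assumes a late turn is sent as soon as its predecessor's response arrives and that later turns are spaced from that new send time. The function `simulate_sends` is hypothetical and does not reflect the tool's internals.

```python
def simulate_sends(gap: float, latencies: list[float], start: float = 0.0) -> list[float]:
    """Return one user's send times, given the response latency of each turn.

    Assumption: the next turn goes out at the later of (previous send + gap)
    and (previous response arrival). Missed slots are dropped, not replayed.
    """
    sends = [start]
    for latency in latencies:
        response_done = sends[-1] + latency
        on_schedule = sends[-1] + gap
        sends.append(max(on_schedule, response_done))
    return sends


if __name__ == "__main__":
    # Gap of 10 s; the second response takes 25 s, longer than the gap.
    print(simulate_sends(10.0, [2.0, 25.0, 2.0]))  # [0.0, 10.0, 35.0, 45.0]
```

In this model the slots at 20 s and 30 s on the original schedule are skipped rather than fired back-to-back once the slow response returns.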

For effective KV cache benchmarking, configure prompts to create realistic prefix sharing patterns:

Note: In multi-turn conversations, previous turns (inputs + responses) also accumulate in the request, growing the total prompt size with each turn. The user context prompt is synthetic padding separate from this accumulated history—both contribute to the total context length.
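To make the growth concrete, here is a rough token-count sketch. It assumes fixed per-turn input and output lengths and ignores chat-template overhead. All parameter names are illustrative rather than tool options.

```python
def prompt_tokens_at_turn(
    turn: int,
    shared_system_tokens: int,
    user_context_tokens: int,
    input_tokens_per_turn: int,
    output_tokens_per_turn: int,
) -> int:
    """Approximate prompt size for a 0-indexed turn of one user's session.

    Assumption: every earlier turn contributes its input and its response to
    the history, and the current turn adds one new input on top.
    """
    history = turn * (input_tokens_per_turn + output_tokens_per_turn)
    return shared_system_tokens + user_context_tokens + history + input_tokens_per_turn


if __name__ == "__main__":
    for turn in range(3):
        print(turn, prompt_tokens_at_turn(turn, 1000, 500, 100, 200))
    # 0 1600
    # 1 1900
    # 2 2200
```

Under these assumptions, everything except the newest input is a prefix the server has already seen for that user, which is the portion a KV cache can reuse if the entry survives the turn gap.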

Important: User-centric mode does NOT automatically limit concurrency. While the timing model spaces out requests, slow server responses can cause request buildup.

To prevent overwhelming the server, you can cap concurrency with --concurrency. If you set this, use a value at least equal to --num-users to avoid constraining user sessions.

Sample Output (Successful Run):

Test with higher QPS (shorter per-user gaps):

Sample Output (Successful Run):

Gap = 15 / 4.0 = 3.75 seconds between each user’s requests.

Test cache TTL limits with 30-second per-user gaps:

Sample Output (Successful Run):

Gap = 15 / 0.5 = 30 seconds between each user’s requests.
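When planning a TTL test it is often easier to start from the gap you want and solve for the rate. The sketch below inverts the same gap = num_users / QPS relationship. The helper names and the `cache_ttl_s` value are hypothetical, and real cache retention also depends on memory pressure and eviction policy, not only on a TTL.

```python
def rate_for_gap(num_users: int, target_gap_s: float) -> float:
    """Rate (QPS) that yields the target per-user gap, from gap = num_users / QPS."""
    if num_users <= 0 or target_gap_s <= 0:
        raise ValueError("num_users and target_gap_s must be positive")
    return num_users / target_gap_s


def gap_within_ttl(num_users: int, qps: float, cache_ttl_s: float) -> bool:
    """True if each user's next turn arrives before a TTL-based cache entry would expire."""
    return num_users / qps < cache_ttl_s


if __name__ == "__main__":
    print(rate_for_gap(15, 30.0))          # 0.5
    print(rate_for_gap(15, 3.75))          # 4.0
    print(gap_within_ttl(15, 0.5, 60.0))   # True: 30 s gap, 60 s TTL
    print(gap_within_ttl(15, 0.5, 20.0))   # False: 30 s gap, 20 s TTL
```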

With effective caching:

Without caching or cache misses:

- --user-centric-rate is set (not --request-rate)
- --num-users is specified

Possible causes:

Solutions:

- Try increasing --user-centric-rate or decreasing --num-users (either change shortens the per-user gap)
- Set --shared-system-prompt-length to enable prefix sharing
- Set --random-seed for reproducible dataset sampling
- Increase --benchmark-duration for more samples