NVIDIA Parabricks WDL/Nextflow Workflows

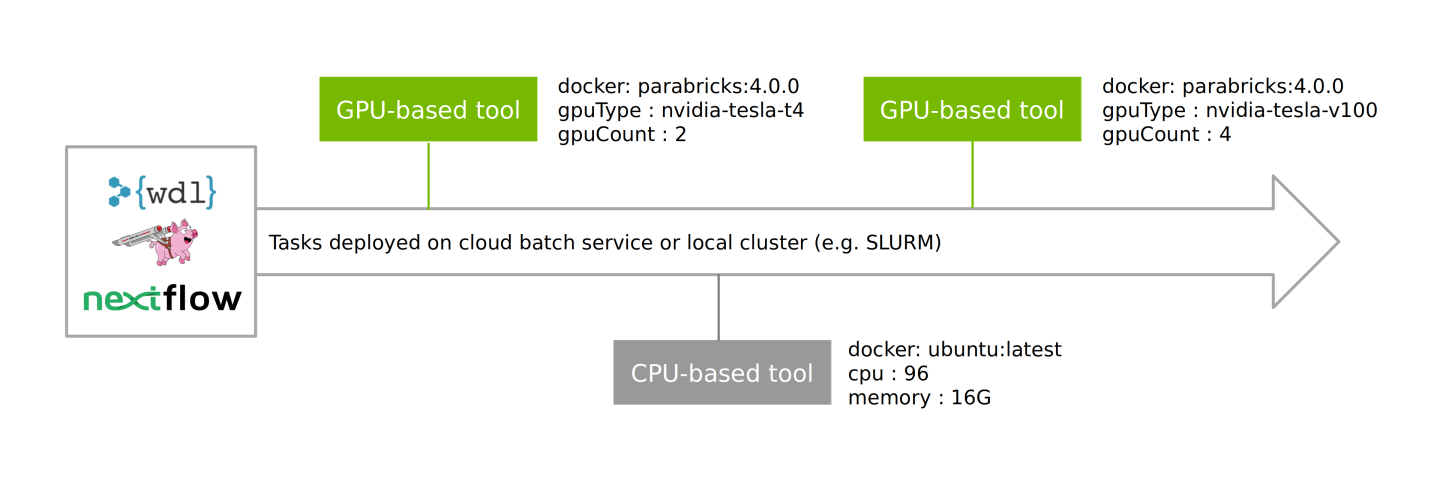

Parabricks containers are compatible with WDL and Nextflow, so you can build customized workflows that intertwine GPU- and CPU-powered tasks with different compute requirements and deploy them at scale.

WDL and Nextflow let you deploy workflows on cloud batch services as well as local clusters (e.g. SLURM) in a well-managed process, pulling a combination of Parabricks and third-party containers and running them on pre-defined nodes.
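As a rough illustration of mixing GPU and CPU tasks, the WDL sketch below chains a Parabricks `fq2bam` alignment step with a CPU-only `samtools flagstat` step from a third-party container. It is a minimal sketch under stated assumptions, not one of the reference workflows: the task and input names, the reference tarball layout, and the resource sizes are hypothetical, and the `gpuType` and `gpuCount` runtime keys are backend-specific (Cromwell on Google Cloud understands them; other engines and backends may expect different attributes).

```wdl
version 1.0

# GPU task: align paired-end reads with Parabricks fq2bam.
task fq2bam {
    input {
        File fastq_1
        File fastq_2
        File ref_tarball       # hypothetical: tarball holding the FASTA and its BWA index
        String ref_name        # hypothetical: FASTA file name inside the tarball
        String pb_docker       # Parabricks container image, supplied by the user
        Int n_gpu = 2          # assumed GPU count, adjust to your instance
    }
    command <<<
        set -euo pipefail
        tar xf ~{ref_tarball}
        pbrun fq2bam \
            --ref ~{ref_name} \
            --in-fq ~{fastq_1} ~{fastq_2} \
            --out-bam sample.bam \
            --num-gpus ~{n_gpu}
    >>>
    output {
        File bam = "sample.bam"
    }
    runtime {
        docker: pb_docker
        cpu: 24                      # assumed sizing
        memory: "120 GB"             # assumed sizing
        gpuType: "nvidia-tesla-t4"   # backend-specific attribute (assumption)
        gpuCount: n_gpu              # backend-specific attribute (assumption)
    }
}

# CPU task: summarize alignment statistics with a third-party container.
task flagstat {
    input {
        File bam
        String samtools_docker   # any image that provides samtools
    }
    command <<<
        set -euo pipefail
        samtools flagstat ~{bam} > flagstat.txt
    >>>
    output {
        File stats = "flagstat.txt"
    }
    runtime {
        docker: samtools_docker
        cpu: 2
        memory: "4 GB"
    }
}

workflow align_and_qc {
    input {
        File fastq_1
        File fastq_2
        File ref_tarball
        String ref_name
        String pb_docker
        String samtools_docker
    }
    call fq2bam {
        input:
            fastq_1 = fastq_1,
            fastq_2 = fastq_2,
            ref_tarball = ref_tarball,
            ref_name = ref_name,
            pb_docker = pb_docker
    }
    call flagstat {
        input:
            bam = fq2bam.bam,
            samtools_docker = samtools_docker
    }
    output {
        File bam = fq2bam.bam
        File stats = flagstat.stats
    }
}
```

Because each task declares its own container and `runtime` block, the workflow engine can schedule the alignment step on a GPU node and the statistics step on a small CPU node without any change to the workflow logic.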

For further information on running these workflows, and to see the open-source reference workflows, visit the Parabricks Workflows repository. The repository also includes recommended instance configurations for deploying the GPU-based tools in the cloud, and the workflows can be easily forked and edited for your own purposes.