High Availability

This documentation is part of NVIDIA DGX BasePOD: Deployment Guide Featuring NVIDIA DGX A100 Systems.

Warning

The # prompt indicates commands that you execute as the root user on a head node. The % prompt indicates commands that you execute within cmsh.

Configure High Availability

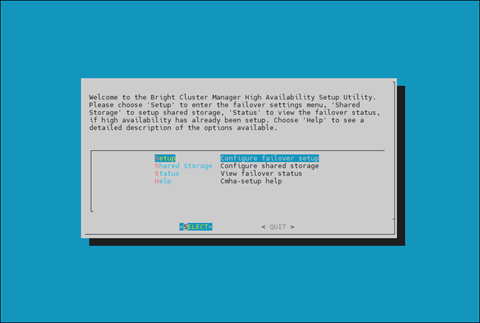

This section covers how to configure high availability (HA) using the cmha-setup CLI wizard.

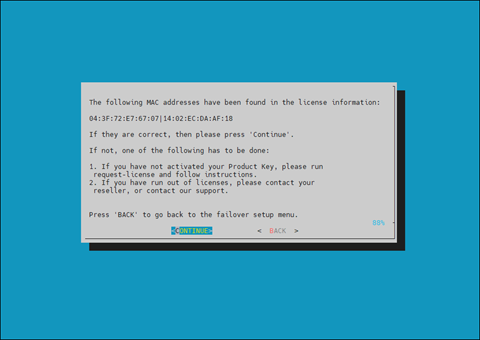

Ensure that both head nodes are licensed.

We provided the MAC address for the secondary head when we installed the cluster license. For details, see Cluster Configuration Steps.

    % main licenseinfo | grep ^MAC
    MAC address / Cloud ID 04:3F:72:E7:67:07|14:02:EC:DA:AF:18
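The license check can also be scripted. The following is a minimal sketch, assuming the MAC line has the format shown in the sample output (one MAC address per head node, separated by |). The function name license_mac_count is hypothetical and is not part of Base Command Manager.

```sh
# Hypothetical helper: count the MAC addresses on the "MAC address" line
# of `main licenseinfo` output read from stdin.
# Assumes the line format shown in the sample output.
license_mac_count() {
    grep '^MAC' \
        | grep -oE '([0-9A-Fa-f]{2}:){5}[0-9A-Fa-f]{2}' \
        | wc -l \
        | tr -d ' '
}

# Example (as root on a head node):
#   cmsh -c "main licenseinfo" | license_mac_count
```

A result of 2 suggests that the license covers both head nodes.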

Configure the NFS shared storage.

Mounts configured in fsmounts will be automatically mounted by the CMDaemon.

    % device
    % use master
    % fsmounts
    % add /nfs/general
    % set device 10.227.48.252:/var/nfs/general
    % set filesystem nfs
    % commit
    % show
    Parameter                    Value
    ---------------------------- ------------------------------------------------
    Device                       10.227.48.252:/var/nfs/general
    Revision
    Filesystem                   nfs
    Mountpoint                   /nfs/general
    Dump                         no
    RDMA                         no
    Filesystem Check             NONE
    Mount options                defaults

Verify that the shared storage is mounted.

    # mount | grep '/nfs/general'
    10.227.48.252:/var/nfs/general on /nfs/general type nfs4 (rw,relatime,vers=4.2,rsize=1048576,wsize=1048576,namlen=255,hard,proto=tcp,timeo=600,retrans=2,sec=sys,clientaddr=10.130.12210.227.48_lock=none,addr=10.130.122.252)10.227.48
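For use in a script, the same check can return an exit status instead of printing the mount line. This is a sketch only: nfs_mounted is a hypothetical helper name, and it assumes the usual "source on mountpoint type fstype (options)" layout of mount output.

```sh
# Hypothetical helper: succeed if the mountpoint given as $1 appears in
# `mount` output (read from stdin) with a filesystem type starting with "nfs".
nfs_mounted() {
    awk -v mp="$1" '
        $2 == "on" && $3 == mp && $4 == "type" && $5 ~ /^nfs/ { found = 1 }
        END { exit !found }
    '
}

# Example (as root on a head node):
#   mount | nfs_mounted /nfs/general && echo "shared storage is mounted"
```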

Verify that the head node has power control over the cluster nodes.

    % device
    % power -c dgx,k8s-master status
    [basepod-head1->device]% power -c dgx,k8s-master status
    ipmi0 .................... [ ON ] dgx01
    ipmi0 .................... [ ON ] dgx02
    ipmi0 .................... [ ON ] dgx03
    ipmi0 .................... [ ON ] dgx04
    ipmi0 .................... [ ON ] knode01
    ipmi0 .................... [ ON ] knode02
    ipmi0 .................... [ ON ] knode03
    [basepod-head1->device]%

Power off the cluster nodes. The cluster nodes must be powered off before configuring HA.

    % power -c k8s-master,dgx off
    ipmi0 .................... [ OFF ] knode01
    ipmi0 .................... [ OFF ] knode02
    ipmi0 .................... [ OFF ] knode03
    ipmi0 .................... [ OFF ] dgx01
    ipmi0 .................... [ OFF ] dgx02
    ipmi0 .................... [ OFF ] dgx03
    ipmi0 .................... [ OFF ] dgx04
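Because HA setup requires every cluster node to be off, it can help to list any node that is still in the wrong power state. The sketch below assumes the power output layout shown in the sample (device, dots, bracketed state, node name). The helper name nodes_not_in_state is hypothetical.

```sh
# Hypothetical helper: print the nodes whose power state differs from $1.
# Reads `power ... status` output from stdin; lines that do not match the
# "ipmi0 ..... [ STATE ] node" layout (such as prompts) are ignored.
nodes_not_in_state() {
    awk -v want="$1" '$3 == "[" && $5 == "]" && $4 != want { print $6 }'
}

# Example (as root on a head node):
#   cmsh -c "power -c k8s-master,dgx status" | nodes_not_in_state OFF
```

Empty output means that all listed nodes are in the expected state.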

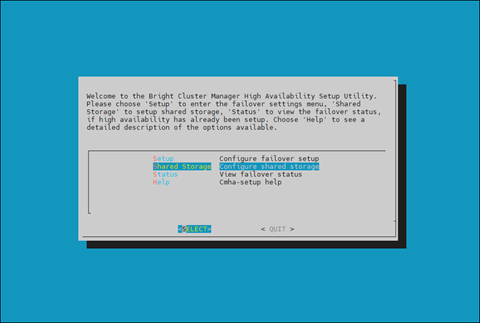

Start the cmha-setup CLI wizard as the root user on the primary head node.

    # cmha-setup

Choose Setup and then select SELECT.

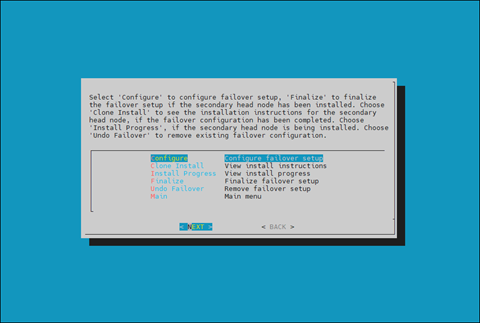

Choose Configure and then select NEXT.

Verify that the cluster license information found by the wizard is correct and then select CONTINUE.

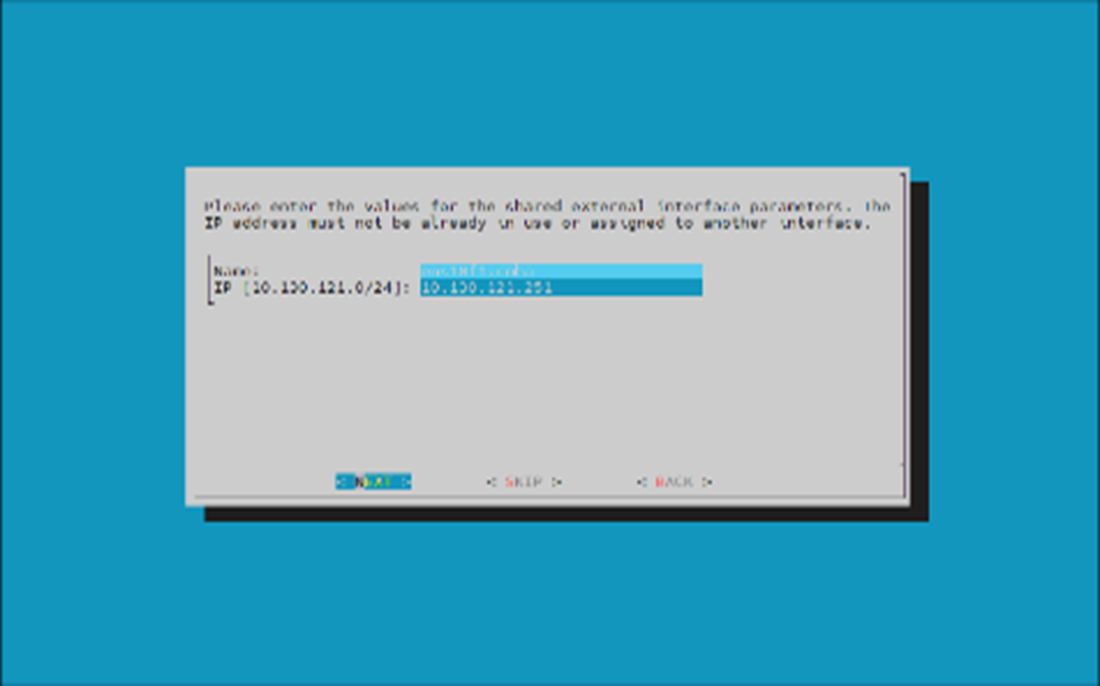

Configure an external virtual IP address to be used by the active head node in the HA configuration and then select NEXT.

This is the IP address that should always be used to access the active head node.

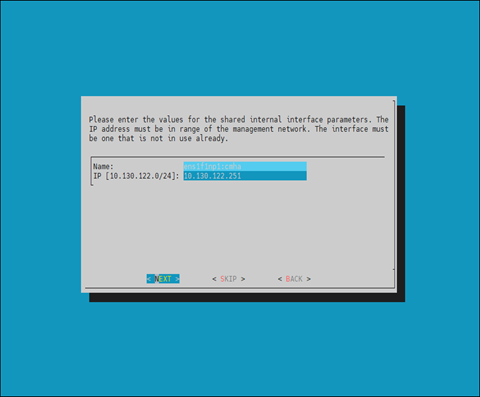

Provide an internal virtual IP address that will be used by the active head node in the HA configuration and then select NEXT.

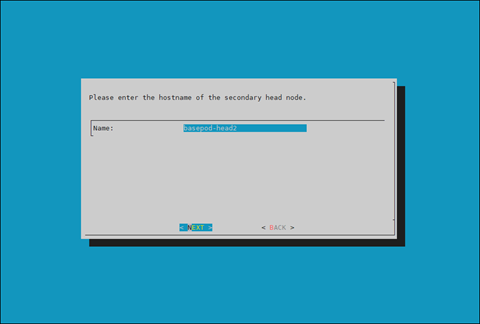

Provide the name of the secondary head node and then select NEXT.

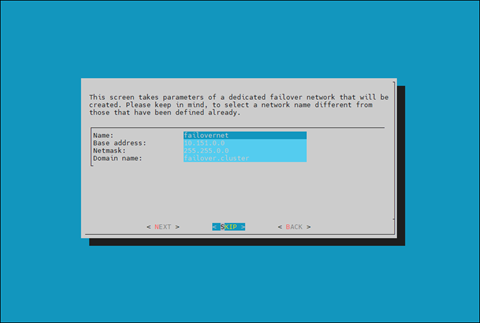

DGX BasePOD uses the internal network as the failover network, so select SKIP.

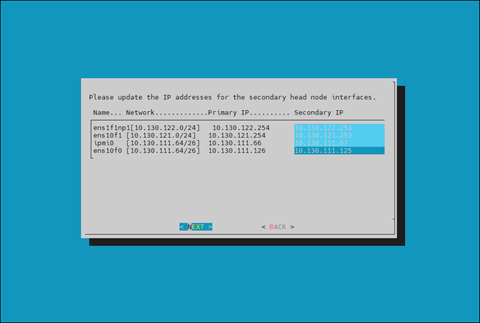

Configure the IP addresses for the secondary head node and then select NEXT.

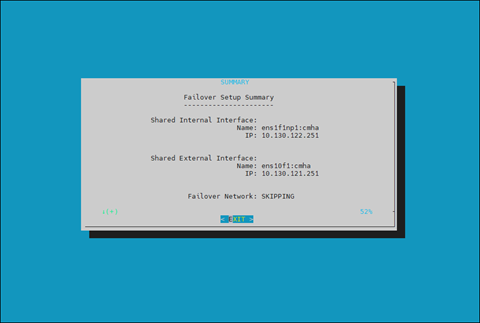

Review the summary of the configuration and then select NEXT.

This screen shows the VIP that will be assigned to the internal and external interfaces.

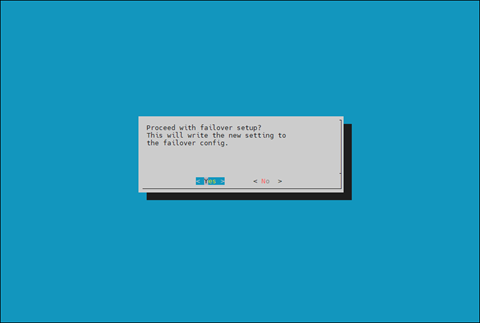

Select Yes to proceed with the failover configuration.

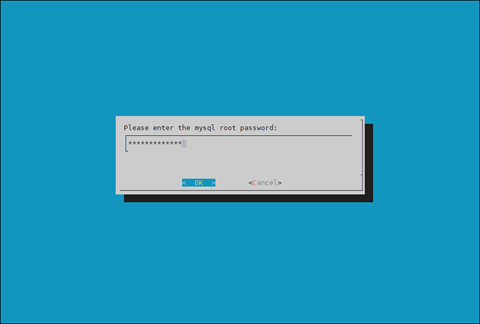

Enter the MySQL root password and then select OK. This should be the same as the root password.

The wizard implements the first steps in the HA configuration. If all the steps show OK, press ENTER to continue. The progress is shown below:

    Initializing failover setup on master.............. [ OK ]
    Updating shared internal interface................. [ OK ]
    Updating shared external interface................. [ OK ]
    Updating extra shared internal interfaces.......... [ OK ]
    Cloning head node.................................. [ OK ]
    Updating secondary master interfaces............... [ OK ]
    Updating Failover Object........................... [ OK ]
    Restarting cmdaemon................................ [ OK ]
    Press any key to continue

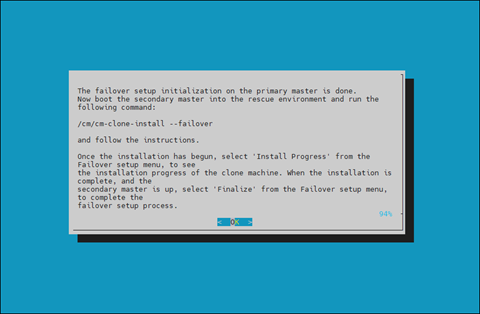

When the failover setup installation on the primary master is complete, select OK to exit the wizard.

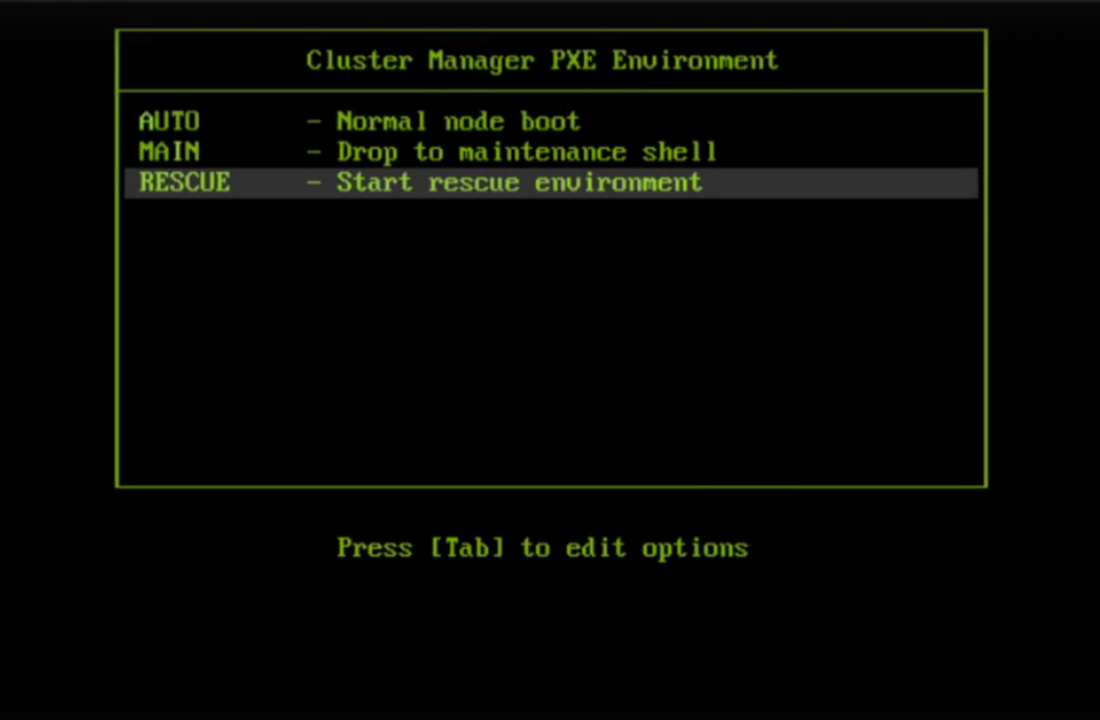

PXE boot the secondary head node and then select RESCUE from the GRUB menu.

Because this is the initial boot of this node, it must be started outside of Base Command Manager, using either the BMC or the physical power button.

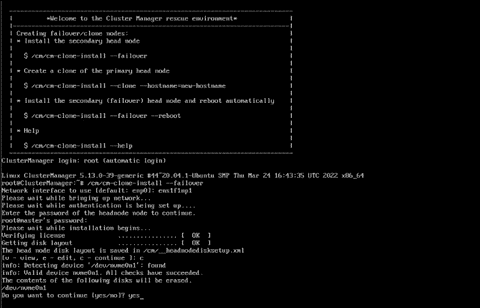

After the secondary head node has booted into the rescue environment, run the /cm/cm-clone-install --failover command, then enter yes when prompted.

The secondary head node will be cloned from the primary.

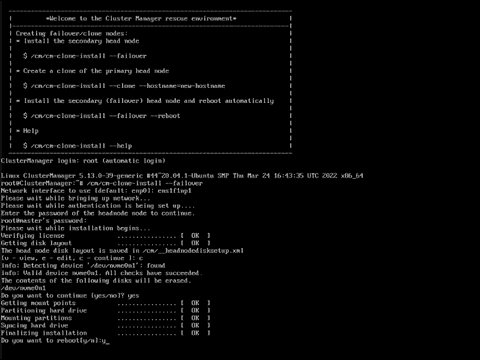

When cloning is completed, enter y to reboot the secondary head node.

The secondary must boot from its hard drive. PXE boot should not be enabled.

Wait for the secondary head node to reboot and then continue the HA setup procedure on the primary head node.

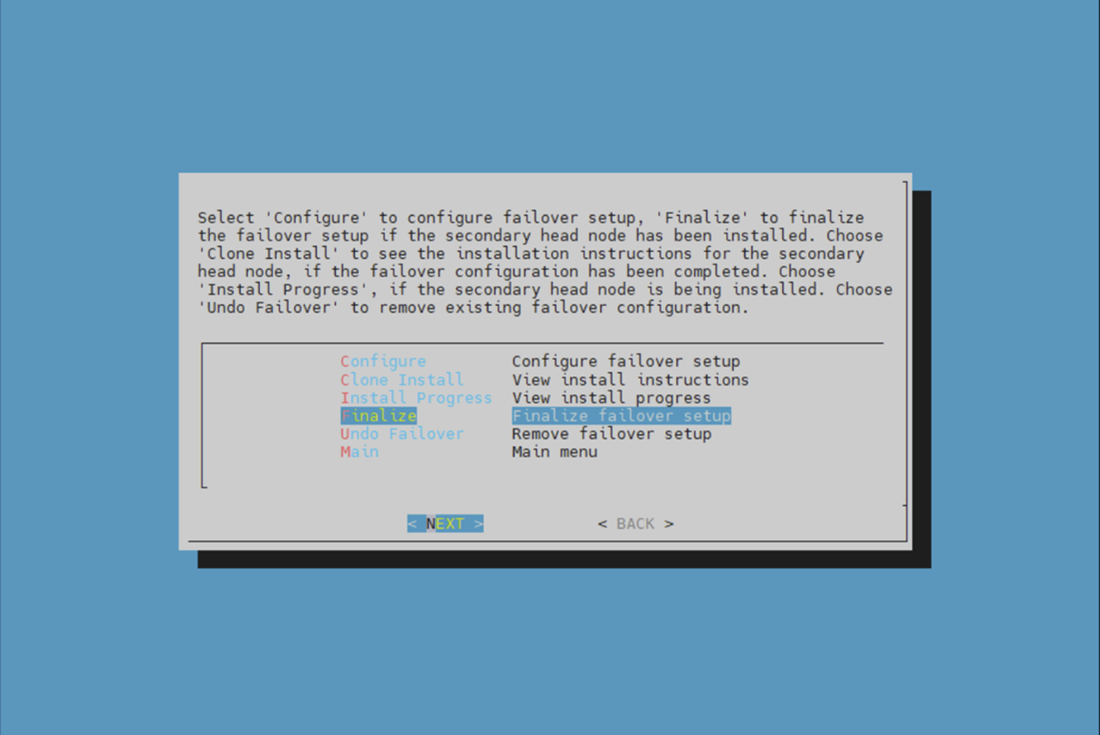

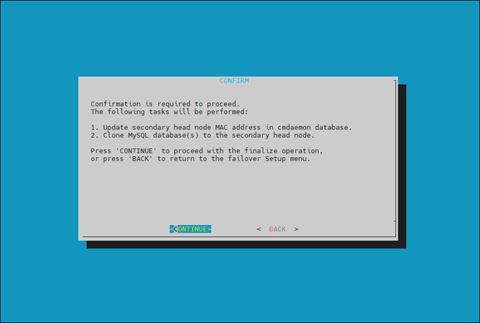

Choose finalize from the cmha-setup menu and then select NEXT.

This will clone the MySQL database from the primary to the secondary head node.

Select CONTINUE on the confirmation screen.

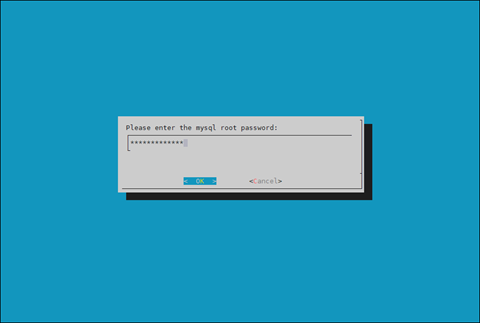

Enter the MySQL root password and then select OK. This should be the same as the root password.

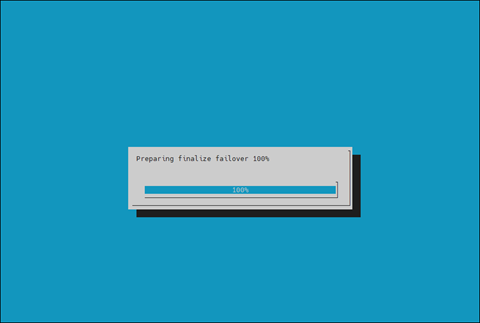

The cmha-setup wizard continues. Press ENTER to continue when prompted.

The progress is shown below:

    Updating secondary master mac address.............. [ OK ]
    Initializing failover setup on basepod-head2....... [ OK ]
    Stopping cmdaemon.................................. [ OK ]
    Cloning cmdaemon database.......................... [ OK ]
    Checking database consistency...................... [ OK ]
    Starting cmdaemon, chkconfig services.............. [ OK ]
    Cloning workload manager databases................. [ OK ]
    Cloning additional databases....................... [ OK ]
    Update DB permissions.............................. [ OK ]
    Checking for dedicated failover network............ [ OK ]
    Press any key to continue

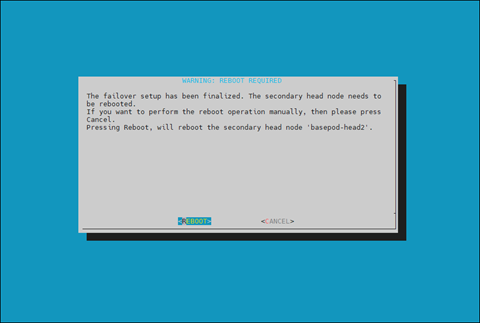

Select REBOOT when the WARNING: REBOOT REQUIRED screen is shown.

Wait for the secondary head node to reboot before continuing.

The secondary head node is now UP.

    % device list -f hostname:20,category:12,ip:20,status:15
    hostname (key)       category   ip                   status
    -------------------- ---------- -------------------- ---------------
    basepod-head1                   10.227.48.254        [ UP ]
    basepod-head2                   10.227.48.253        [ UP ]
    knode01              k8s-master 10.227.48.9          [ DOWN ]
    knode02              k8s-master 10.227.48.10         [ DOWN ]
    knode03              k8s-master 10.227.48.11         [ DOWN ]
    dgx01                dgx        10.227.48.5          [ DOWN ]
    dgx02                dgx        10.227.48.6          [ DOWN ]
    dgx03                dgx        10.227.48.7          [ DOWN ]
    dgx04                dgx        10.227.48.8          [ DOWN ]
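When waiting for the secondary head node in a script, the status column can be extracted for a single host. This sketch assumes the bracketed status layout shown in the listing and that cmsh accepts the mode and command chained with a semicolon. The helper name node_status is hypothetical.

```sh
# Hypothetical helper: print the status (for example UP or DOWN) of the
# host given as $1, reading `device list` output from stdin.
# The status is taken from the first bracketed field on the host's line,
# so an empty category column does not matter.
node_status() {
    awk -v host="$1" '
        $1 == host {
            for (i = 2; i < NF; i++)
                if ($i == "[") { print $(i + 1); exit }
        }
    '
}

# Example (as root on the primary head node):
#   cmsh -c "device; list -f hostname:20,category:12,ip:20,status:15" \
#       | node_status basepod-head2
```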

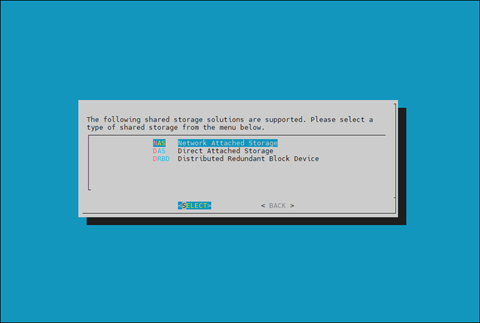

Choose Shared Storage from the cmha-setup menu and select SELECT.

In this final HA configuration step, cmha-setup copies the /cm/shared and /home directories to the shared storage and configures both head nodes and all cluster nodes to mount it.

Choose NAS and then select SELECT.

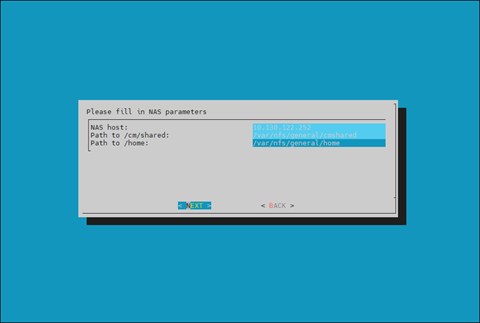

Choose /cm/shared and /home and then select NEXT.

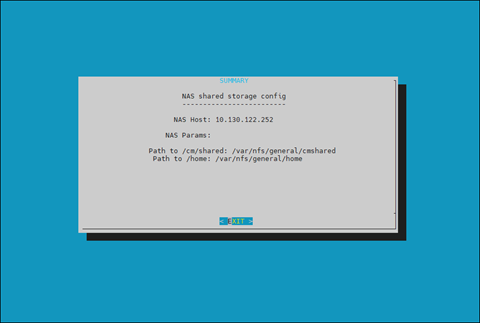

Provide the IP address of the NAS host and the paths on the shared storage that the /cm/shared and /home directories should be copied to, and then select NEXT.

In this case, /var/nfs/general is exported, so the /cm/shared directory will be copied to 10.227.48.252:/var/nfs/general/cmshared, and it will be mounted over /cm/shared on the cluster nodes.

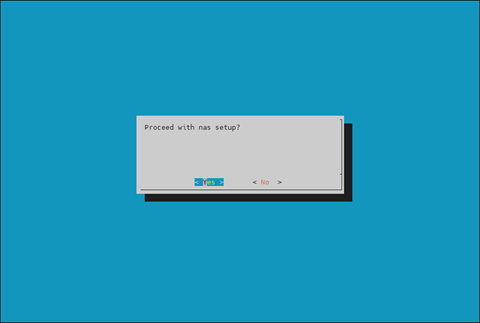

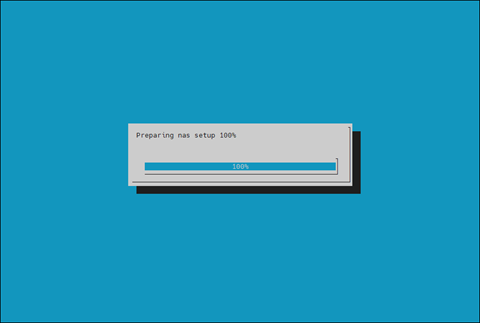

The wizard shows a summary of the information that it has collected. Press ENTER to continue.

Select YES when prompted to proceed with the setup.

The cmha-setup wizard proceeds with its work. When it completes, press ENTER to finish the HA setup.

The progress is shown below:

    Copying NAS data................................... [ OK ]
    Mount NAS storage.................................. [ OK ]
    Remove old fsmounts................................ [ OK ]
    Add new fsmounts................................... [ OK ]
    Remove old fsexports............................... [ OK ]
    Write NAS mount/unmount scripts.................... [ OK ]
    Copy mount/unmount scripts......................... [ OK ]
    Press any key to continue

cmha-setup is now complete. Select EXIT to return to the shell prompt.

Verify the High Availability Setup

Run the cmha status command to verify that the failover configuration is correct and working as expected.

Note that the command tests the configuration from both directions: from the primary head node to the secondary, and from the secondary to the primary. The active head node is indicated by an asterisk.

    # cmha status
    Node Status: running in active mode

    basepod-head1* -> basepod-head2
    mysql [ OK ]
    ping [ OK ]
    status [ OK ]

    basepod-head2 -> basepod-head1*
    mysql [ OK ]
    ping [ OK ]
    status [ OK ]
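The status output can be checked from a script, for example before and after a manual failover. The sketch below assumes the layout shown in the sample output. The helper names are hypothetical, and treating every value other than OK as a failure is an assumption, because this guide does not show how cmha reports a failed check.

```sh
# Hypothetical helper: print the active head node, which is the name
# marked with an asterisk on a "nodeA -> nodeB" line.
ha_active_head() {
    awk '$2 == "->" {
        for (i = 1; i <= 3; i += 2)
            if ($i ~ /\*$/) { sub(/\*$/, "", $i); print $i; exit }
    }'
}

# Hypothetical helper: print every check line (mysql, ping, status)
# whose bracketed result is not OK. Empty output means all checks passed.
ha_failed_checks() {
    awk 'NF == 4 && $2 == "[" && $4 == "]" && $3 != "OK"'
}

# Example (as root on a head node):
#   cmha status | ha_active_head
#   cmha status | ha_failed_checks
```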

Verify that the /cm/shared and /home directories are being mounted from the NAS server.

    # mount
    . . . some output omitted . . .
    10.227.48.252:/var/nfs/general/cmshared on /cm/shared type nfs4 (rw,relatime,vers=4.2,rsize=32768,wsize=32768,namlen=255,hard,proto=tcp,timeo=600,retrans=2,sec=sys,clientaddr=10.130.12210.227.48_lock=none,addr=10.130.122.252)10.227.48
    10.227.48.252:/var/nfs/general/home on /home type nfs4 (rw,relatime,vers=4.2,rsize=32768,wsize=32768,namlen=255,hard,proto=tcp,timeo=600,retrans=2,sec=sys,clientaddr=10.130.12210.227.48_lock=none,addr=10.130.122.252)10.227.48
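To confirm in one step that both directories come from the NAS rather than from a local disk, the source of each mount can be compared with the NAS address. This is a sketch under the assumption that mount prints "server:/path on mountpoint ..."; the helper names mount_source and served_by_nas are hypothetical.

```sh
# Hypothetical helper: print the source (server:/path) of the first
# mount found on the mountpoint given as $1.
mount_source() {
    awk -v mp="$1" '$2 == "on" && $3 == mp { print $1; exit }'
}

# Hypothetical helper: succeed only if every listed mountpoint is mounted
# from the NAS host given as $1. The `mount` output is read once from stdin.
served_by_nas() {
    nas="$1"; shift
    out=$(cat)
    for mp in "$@"; do
        src=$(printf '%s\n' "$out" | mount_source "$mp")
        case "$src" in
            "$nas":*) ;;
            *) echo "$mp is not mounted from $nas" >&2; return 1 ;;
        esac
    done
}

# Example (as root on a head node):
#   mount | served_by_nas 10.227.48.252 /cm/shared /home
```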

Log in to the head node that is to be made active and run cmha makeactive.

    # ssh basepod-head2
    # cmha makeactive
    =========================================================================
    This is the passive head node. Please confirm that this node should become
    the active head node. After this operation is complete, the HA status of
    the head nodes will be as follows:

    basepod-head2 will become active head node (current state: passive)
    basepod-head1 will become passive head node (current state: active)
    =========================================================================

    Continue(c)/Exit(e)? c

    Initiating failover.............................. [ OK ]

    basepod-head2 is now active head node, makeactive successful

Run the cmha status command again to verify that the secondary head node has become the active head node.

    # cmha status
    Node Status: running in active mode

    basepod-head2* -> basepod-head1
    mysql [ OK ]
    ping [ OK ]
    status [ OK ]

    basepod-head1 -> basepod-head2*
    mysql [ OK ]
    ping [ OK ]
    status [ OK ]

Manually fail over back to the primary head node.

    # ssh basepod-head1
    # cmha makeactive

    ===========================================================================
    This is the passive head node. Please confirm that this node should become
    the active head node. After this operation is complete, the HA status of
    the head nodes will be as follows:

    basepod-head1 will become active head node (current state: passive)
    basepod-head2 will become passive head node (current state: active)
    ===========================================================================

    Continue(c)/Exit(e)? c

    Initiating failover.............................. [ OK ]

    basepod-head1 is now active head node, makeactive successful

Run the cmha status command again to verify that the primary head node has become the active head node.

    # cmha status
    Node Status: running in active mode

    basepod-head1* -> basepod-head2
    mysql [ OK ]
    ping [ OK ]
    status [ OK ]

    basepod-head2 -> basepod-head1*
    mysql [ OK ]
    ping [ OK ]
    status [ OK ]

Power on the cluster nodes.

    # cmsh -c "power -c k8s-master,dgx on"
    ipmi0 .................... [ ON ] knode01
    ipmi0 .................... [ ON ] knode02
    ipmi0 .................... [ ON ] knode03
    ipmi0 .................... [ ON ] dgx01
    ipmi0 .................... [ ON ] dgx02
    ipmi0 .................... [ ON ] dgx03
    ipmi0 .................... [ ON ] dgx04

(Optional) Configure Jupyter High Availability

If Jupyter was deployed on the primary head node before HA was configured, configure the Jupyter service to run on the active head node.

    % device
    % use basepod-head1
    % services
    % use cm-jupyterhub
    % show
    Parameter                        Value
    -------------------------------- --------------------------------------------
    Revision
    Service                          cm-jupyterhub
    Run if                           ALWAYS
    Monitored                        yes
    Autostart                        yes
    Timeout                          -1
    Belongs to role                  yes
    Sickness check script
    Sickness check script timeout    10
    Sickness check interval          60

Set the runif parameter to active.

    % set runif active
    % commit

    % show
    Parameter                        Value
    -------------------------------- --------------------------------------------
    Revision
    Service                          cm-jupyterhub
    Run if                           ACTIVE
    Monitored                        yes
    Autostart                        yes
    Timeout                          -1
    Belongs to role                  yes
    Sickness check script
    Sickness check script timeout    10
    Sickness check interval          60

Configure the Jupyter service on the secondary head node.

    % device
    % use basepod-head2
    % services
    % use cm-jupyterhub
    % set runif active
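To confirm the resulting setting on both head nodes, the Run if value can be read back from the show output. This is a sketch only: service_runif is a hypothetical helper name, and the example assumes that the cmsh commands used in this section can be chained with semicolons in a single cmsh -c call.

```sh
# Hypothetical helper: print the "Run if" value from the output of the
# cmsh service `show` command, read from stdin.
service_runif() {
    awk '$1 == "Run" && $2 == "if" { print $3; exit }'
}

# Example (as root on a head node; command chaining is an assumption):
#   for head in basepod-head1 basepod-head2; do
#       cmsh -c "device; use $head; services; use cm-jupyterhub; show" \
#           | service_runif
#   done
```

Both head nodes should report ACTIVE once the changes have been committed.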