Introduction#

This installation guide provides step-by-step instructions for deploying and configuring the complete NVIDIA Mission Control 2.3 software stack on NVIDIA DGX B200/B300 and GB200/GB300 NVL72 systems. It covers the following:

Installation and configuration of all software components required to enable full NVIDIA Mission Control functionality

Software dependencies for each feature

Installation and deployment of the features themselves

Verification and testing procedures to ensure proper feature functionality

For more information about control plane hardware requirements, refer to https://apps.nvidia.com/PID/ContentLibraries/Detail?id=1137731&srch=nmc%20hardware

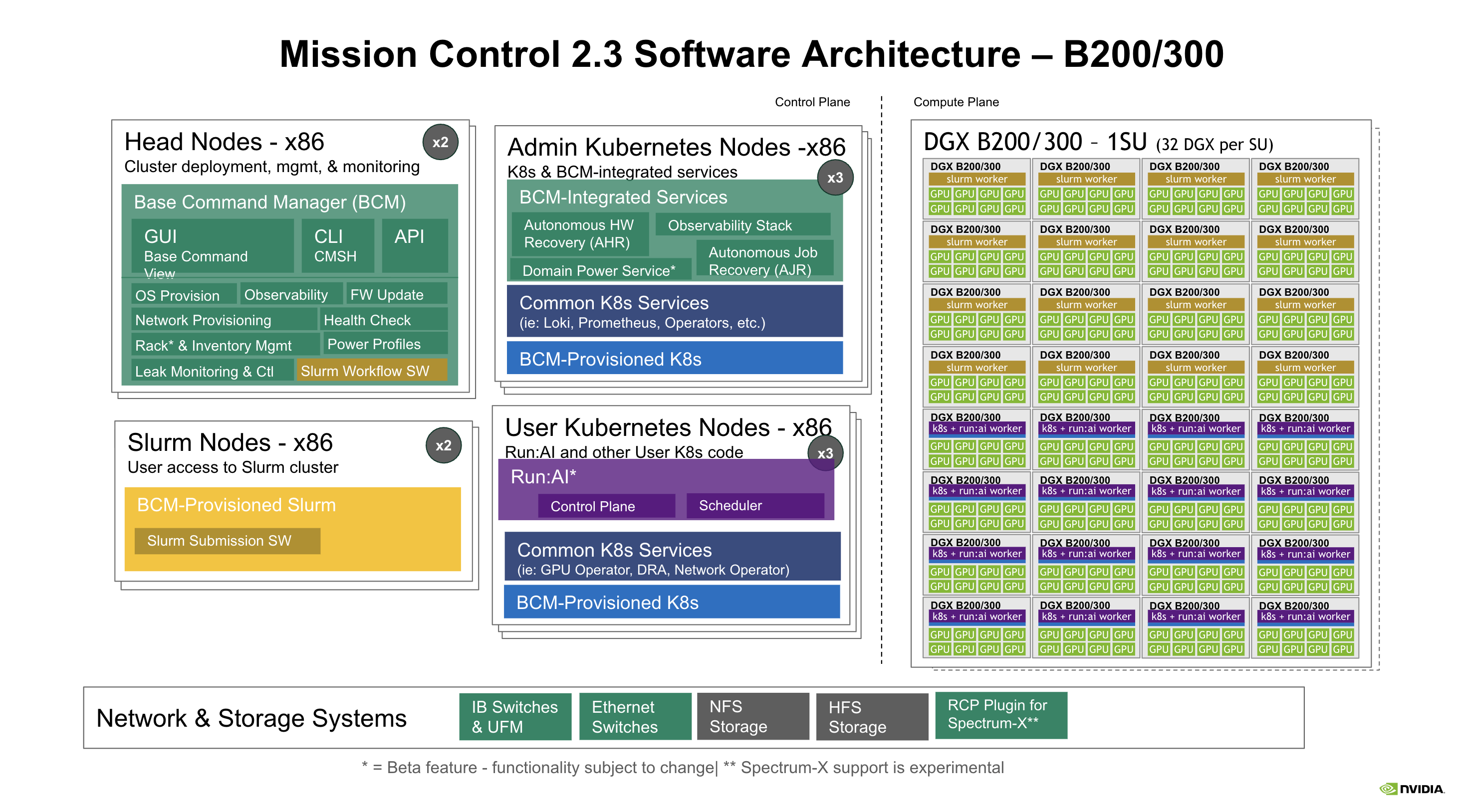

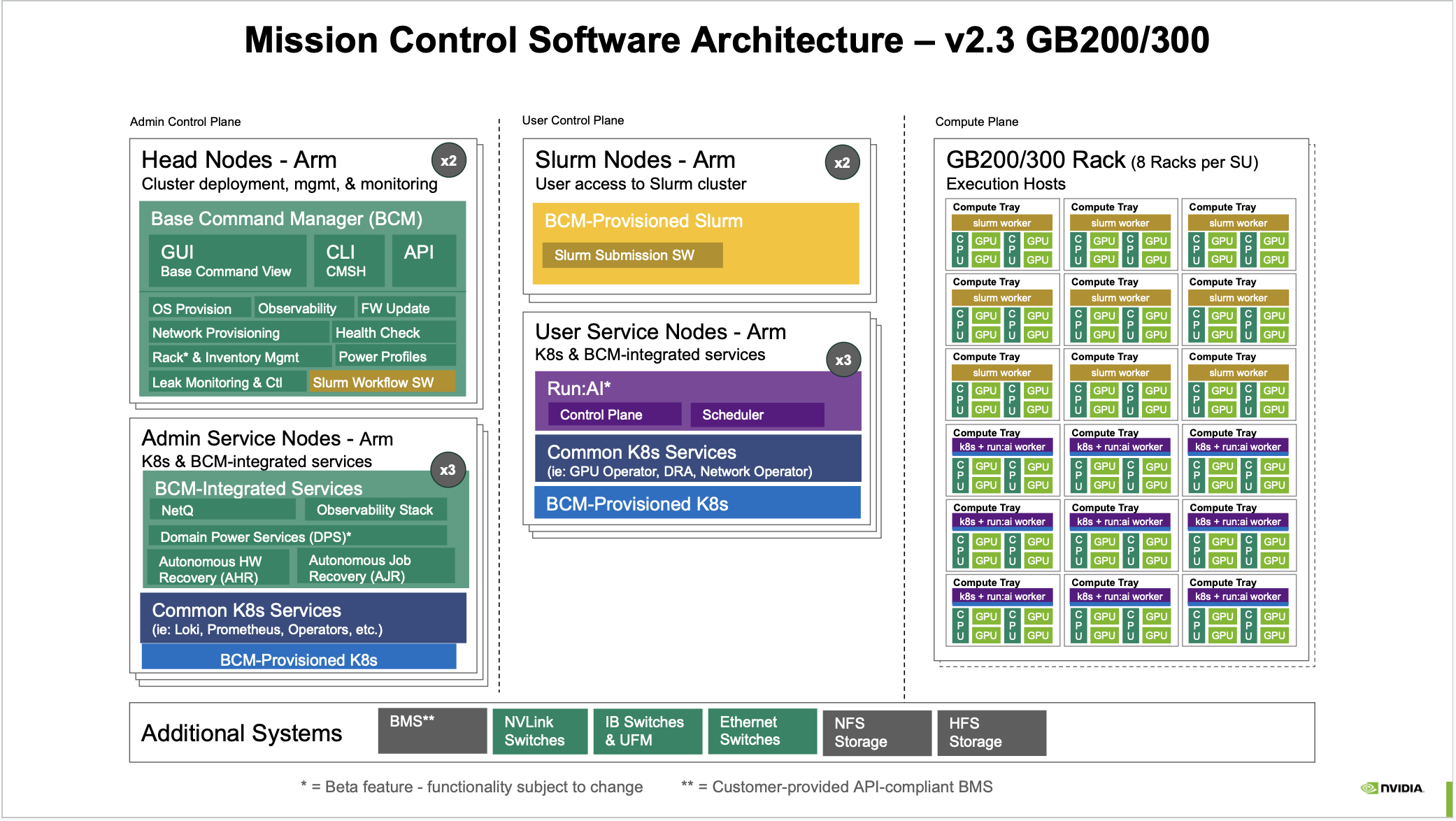

An overview of Mission Control is shown in the following figures.

Figure 1 Figure 1: Mission Control 2.3 Software Architecture – B200/300#

Figure 2 Figure 2: Mission Control Software Architecture – v2.3 GB200/300#

Assumptions and Prerequisites#

Before installing the NVIDIA Mission Control software, confirm that the following conditions are met in BCM 11, as outlined in the NVIDIA Mission Control Management Plane and Rack Setup Installation Guide:

All networks are defined and all switches (in-band and out-of-band) are “Up”

All control plane nodes are configured and “Up”:

slogin (Slurm login nodes)

K8s-admin (Admin Kubernetes nodes)

K8s-user (User Kubernetes nodes)

High Availability (HA) is configured and failover is verified

For GB200/GB300 systems, the GB200/GB300 NVL72 rack setup is complete and the following items are verified:

All NVLink switch chips are online with NMX-C/T enabled

NVLink switch leader is assigned

Each GB200/GB300 NVL72 rack contains 9 NVLink switch trays

All 18 compute trays per rack are provisioned and in an “Up” state

Power control is established at rack, compute tray, and NVLink switch tray levels

NFS setup is complete and available

A valid BCM license with Mission Control enabled is installed
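The per-rack items in this checklist lend themselves to a simple automated check. The following is a minimal sketch, not a BCM tool: the `RackStatus` class, its field names, and the example rack name are hypothetical, and the values would need to be collected from your own inventory. Only the expected counts (9 NVLink switch trays and 18 compute trays per rack) come from the prerequisites above.

```python
from dataclasses import dataclass
from typing import List

# Per-rack counts stated in the prerequisites above.
EXPECTED_NVLINK_SWITCH_TRAYS = 9
EXPECTED_COMPUTE_TRAYS_UP = 18


@dataclass
class RackStatus:
    """Hypothetical snapshot of one NVL72 rack; not a BCM data structure."""
    name: str
    nvlink_switch_trays: int
    compute_trays_up: int
    nvlink_leader_assigned: bool


def rack_problems(rack: RackStatus) -> List[str]:
    """Return a list of unmet prerequisites for one rack (empty if none)."""
    problems = []
    if rack.nvlink_switch_trays != EXPECTED_NVLINK_SWITCH_TRAYS:
        problems.append(
            f"{rack.name}: expected {EXPECTED_NVLINK_SWITCH_TRAYS} "
            f"NVLink switch trays, found {rack.nvlink_switch_trays}"
        )
    if rack.compute_trays_up != EXPECTED_COMPUTE_TRAYS_UP:
        problems.append(
            f"{rack.name}: expected {EXPECTED_COMPUTE_TRAYS_UP} "
            f"compute trays Up, found {rack.compute_trays_up}"
        )
    if not rack.nvlink_leader_assigned:
        problems.append(f"{rack.name}: no NVLink switch leader assigned")
    return problems


# Example with made-up values: one compute tray is not yet Up.
example = RackStatus("rack-01", nvlink_switch_trays=9,
                     compute_trays_up=17, nvlink_leader_assigned=True)
for problem in rack_problems(example):
    print(problem)
```

An empty result for every rack indicates that the rack-level counts match the checklist. The remaining items (networks, HA failover, NFS, licensing) still need to be verified separately.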

Mission Control Components – DGX B200/B300#

The following describes the Mission Control 2.3 architecture for DGX B200/B300 systems (1 Scaling Unit = 32 DGX nodes). All control plane nodes are x86-based.

Control Plane#

The B200/B300 architecture separates management responsibilities across four groups of x86 control plane nodes: head nodes, admin Kubernetes nodes, Slurm nodes, and user Kubernetes nodes.

Head Nodes – x86 (×2, Cluster deployment, management, and monitoring)

Runs Base Command Manager (BCM) providing:

GUI (Base Command View), CLI (CMSH), and API interfaces

OS provisioning

Observability

Firmware (FW) Update

Network provisioning

Health Check

Rack and inventory management

Power Profiles

Leak monitoring and control

Slurm Workflow Software

Admin Kubernetes Nodes – x86 (×3, K8s and BCM-integrated services)

BCM-Integrated Services:

NVIDIA Mission Control-autonomous hardware recovery

Observability Stack

Domain Power Service* (Early Preview)

NVIDIA Mission Control-autonomous job recovery

Common K8s Services (e.g., Loki, Prometheus, Operators)

BCM-Provisioned K8s

Slurm Nodes – x86 (×2, User access to Slurm cluster)

BCM-Provisioned Slurm

Slurm Submission Software

User Kubernetes Nodes – x86 (×3, run:ai and other user K8s workloads)

run:ai

Common K8s Services (e.g., GPU Operator, DRA, Network Operator)

BCM-Provisioned K8s
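For planning purposes, the four control plane node groups above can be summarized as a small data structure. This is an illustrative sketch only: the `NodeGroup` class and the `B200_CONTROL_PLANE` name are hypothetical, while the group names, node counts, and purposes are taken from the list above.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class NodeGroup:
    """Hypothetical record describing one control plane node group."""
    name: str
    count: int
    purpose: str


# Node groups and counts as listed for DGX B200/B300 (all x86).
B200_CONTROL_PLANE: List[NodeGroup] = [
    NodeGroup("Head Nodes", 2, "Cluster deployment, management, and monitoring"),
    NodeGroup("Admin Kubernetes Nodes", 3, "K8s and BCM-integrated services"),
    NodeGroup("Slurm Nodes", 2, "User access to Slurm cluster"),
    NodeGroup("User Kubernetes Nodes", 3, "run:ai and other user K8s workloads"),
]


def total_nodes(groups: List[NodeGroup]) -> int:
    """Sum the node counts across all groups."""
    return sum(group.count for group in groups)


for group in B200_CONTROL_PLANE:
    print(f"{group.name}: x{group.count} ({group.purpose})")
print("Total control plane nodes:", total_nodes(B200_CONTROL_PLANE))  # 10
```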

Compute Plane#

DGX B200/B300 – 1 SU (32 DGX per SU)

Each DGX B200/B300 node runs as either a Slurm worker or a K8s + run:ai worker, depending on its assigned role. Each node contains 8 GPUs.
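Because each node is assigned to exactly one workload manager, the GPU capacity available to Slurm and to K8s + run:ai follows directly from the role split. The sketch below illustrates the arithmetic; the node names, the role labels `"slurm"` and `"k8s"`, and the 24/8 split are made-up examples, not recommended values. Only the figures of 32 nodes per SU and 8 GPUs per node come from this guide.

```python
from collections import Counter
from typing import Dict

NODES_PER_SU = 32   # 1 SU = 32 DGX nodes
GPUS_PER_NODE = 8   # each DGX B200/B300 node contains 8 GPUs


def gpus_by_role(roles: Dict[str, str]) -> Dict[str, int]:
    """Map each role label to the number of GPUs assigned to it."""
    node_counts = Counter(roles.values())
    return {role: n * GPUS_PER_NODE for role, n in node_counts.items()}


# Hypothetical assignment: first 24 nodes to Slurm, remaining 8 to K8s + run:ai.
roles = {
    f"dgx-{i:02d}": ("slurm" if i < 24 else "k8s")
    for i in range(NODES_PER_SU)
}

print(gpus_by_role(roles))                 # {'slurm': 192, 'k8s': 64}
print(sum(gpus_by_role(roles).values()))   # 256 GPUs in one SU
```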

Network and Storage Systems#

InfiniBand (IB) Switches and UFM

Ethernet Switches

NFS Storage

High-Speed Storage

RCP Plugin for Spectrum-X** (experimental)

Note

* Domain Power Service is an Early Preview feature – functionality subject to change.

** Spectrum-X support is experimental.

Mission Control Components – GB200/GB300 NVL72#

The following describes the Mission Control 2.3 architecture for GB200/GB300 NVL72 systems (1 Scaling Unit = 8 Racks). All control plane nodes are Arm-based.

Admin Control Plane#

Head Nodes – Arm (×2, Cluster deployment, management, and monitoring)

Runs Base Command Manager (BCM) providing:

GUI (Base Command View), CLI (CMSH), and API interfaces

OS provisioning

Observability

Firmware (FW) Update

Network provisioning

Health Check

Rack and inventory management

Power Profiles

Leak monitoring and control

Slurm Workflow Software

Admin Service Nodes – Arm (×3, K8s and BCM-integrated services)

BCM-Integrated Services:

NetQ

Observability Stack

Domain Power Service (DPS)* (Early Preview)

NVIDIA Mission Control-autonomous hardware recovery

NVIDIA Mission Control-autonomous job recovery

Common K8s Services (e.g., Loki, Prometheus, Operators)

BCM-Provisioned K8s

User Control Plane#

Slurm Nodes – Arm (×2, User access to Slurm cluster)

BCM-Provisioned Slurm

Slurm Submission Software

User Service Nodes – Arm (×3, run:ai and other user K8s workloads)

run:ai* (Early Preview):

Control Plane

Scheduler

Common K8s Services (e.g., GPU Operator, DRA, Network Operator)

BCM-Provisioned K8s

Compute Plane#

GB200/GB300 Rack – 8 Racks per SU

Execution hosts composed of Compute Trays

Each Compute Tray contains CPU (C), GPU (U), and integrated memory

Trays run as either Slurm workers or K8s + run:ai workers depending on assigned role
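The size of a GB200/GB300 deployment can be estimated from the per-rack figures in this guide. The following sketch is a back-of-the-envelope calculation, not an official sizing tool. The function name `su_capacity` is hypothetical, and the figure of 72 GPUs per rack is an assumption inferred from the "NVL72" product name rather than stated in this section.

```python
from typing import Dict

RACKS_PER_SU = 8                    # 1 Scaling Unit = 8 racks
COMPUTE_TRAYS_PER_RACK = 18         # from the rack prerequisites
NVLINK_SWITCH_TRAYS_PER_RACK = 9    # from the rack prerequisites
GPUS_PER_RACK = 72                  # assumption: inferred from the "NVL72" name


def su_capacity(num_sus: int = 1) -> Dict[str, int]:
    """Return rack, tray, and GPU totals for the given number of SUs."""
    racks = RACKS_PER_SU * num_sus
    return {
        "racks": racks,
        "compute_trays": racks * COMPUTE_TRAYS_PER_RACK,
        "nvlink_switch_trays": racks * NVLINK_SWITCH_TRAYS_PER_RACK,
        "gpus": racks * GPUS_PER_RACK,
    }


print(su_capacity(1))
# {'racks': 8, 'compute_trays': 144, 'nvlink_switch_trays': 72, 'gpus': 576}
```

Under the same assumption, each compute tray holds 72 / 18 = 4 GPUs.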

Additional Systems#

The control plane integrates with the following additional infrastructure components:

BMS (Building Management Service) – Customer-provided API-compliant BMS**

NVLink Switches

InfiniBand (IB) Switches and UFM

Ethernet Switches

NFS Storage

High-Speed Storage

Note

* Domain Power Service (DPS) and run:ai on GB200/GB300 are Early Preview features – functionality subject to change.

** BMS must be a customer-provided, API-compliant Building Management Service.

Key Features#

NVIDIA Mission Control 2.3 provides the following key capabilities:

Supports both training and inference workloads across DGX B200/B300 and GB200/GB300 NVL72 platforms

Provides centralized management for configuration and observability through BCM GUI, CLI, and API

Delivers a standardized, scalable, and secure control plane for all supported NVIDIA systems

Includes NVIDIA Mission Control-autonomous hardware recovery and NVIDIA Mission Control-autonomous job recovery for resilient, self-healing operations

Integrates run:ai and Slurm workload managers for flexible GPU orchestration

Supports Domain Power Service (DPS) for power-aware workload management (Early Preview)

Provides unified observability through NetQ for GB200/GB300 systems

Offers RCP Plugin for Spectrum-X for B200/B300 networking (experimental)