Virtual GPU Software User Guide

Documentation for administrators that explains how to install and configure NVIDIA Virtual GPU Manager, configure virtual GPU software in pass-through mode, and install drivers on guest operating systems.

NVIDIA vGPU software is a graphics virtualization platform that provides virtual machines (VMs) with access to NVIDIA GPU technology.

1.1. How NVIDIA vGPU Software Is Used

NVIDIA vGPU software can be used in several ways.

1.1.1. NVIDIA vGPU

NVIDIA Virtual GPU (vGPU™) enables multiple virtual machines (VMs) to have simultaneous, direct access to a single physical GPU, using the same NVIDIA graphics drivers that are deployed on non-virtualized operating systems. By doing this, NVIDIA vGPU provides VMs with unparalleled graphics performance and application compatibility, together with the cost-effectiveness and scalability brought about by sharing a GPU among multiple workloads.

For more information, see Installing and Configuring NVIDIA Virtual GPU Manager.

1.1.2. GPU Pass-Through

In GPU pass-through mode, an entire physical GPU is directly assigned to one VM, bypassing the NVIDIA Virtual GPU Manager. In this mode of operation, the GPU is accessed exclusively by the NVIDIA driver running in the VM to which it is assigned. The GPU is not shared among VMs.

For more information, see Using GPU Pass-Through.

1.1.3. Bare-Metal Deployment

In a bare-metal deployment, you can use NVIDIA vGPU software graphics drivers with Quadro vDWS and GRID Virtual Applications licenses to deliver remote virtual desktops and applications. If you intend to use Tesla boards without a hypervisor for this purpose, use NVIDIA vGPU software graphics drivers, not other NVIDIA drivers.

To use NVIDIA vGPU software drivers for a bare-metal deployment, complete these tasks:

- Install the driver on the physical host.

  For instructions, see Installing the NVIDIA vGPU Software Graphics Driver.

- License any NVIDIA vGPU software that you are using.

  For instructions, see Virtual GPU Client Licensing User Guide.

- Configure the platform for remote access.

  To use graphics features with Tesla GPUs, you must use a supported remoting solution, for example, RemoteFX, XenApp, VNC, or similar technology.

- Use the display settings feature of the host OS to configure the Tesla GPU as the primary display.

  NVIDIA Tesla generally operates as a secondary device on bare-metal platforms.

- If the system has multiple display adapters, disable display devices connected through adapters that are not from NVIDIA.

  You can use the display settings feature of the host OS or the remoting solution for this purpose. On NVIDIA GPUs, including Tesla GPUs, a default display device is enabled.

  Users can launch applications that require NVIDIA GPU technology for enhanced user experience only after displays that are driven by NVIDIA adapters are enabled.

1.2. How This Guide Is Organized

Virtual GPU Software User Guide is organized as follows:

- This chapter introduces the architecture and features of NVIDIA vGPU software.

- Installing and Configuring NVIDIA Virtual GPU Manager provides a step-by-step guide to installing and configuring vGPU on supported hypervisors.

- Using GPU Pass-Through explains how to configure a GPU for pass-through on supported hypervisors.

- Installing the NVIDIA vGPU Software Graphics Driver explains how to install the NVIDIA vGPU software graphics driver on Windows and Linux operating systems.

- Licensing an NVIDIA vGPU explains how to license NVIDIA vGPU licensed products on Windows and Linux operating systems.

- Modifying a VM's NVIDIA vGPU Configuration explains how to remove a VM’s vGPU configuration and modify GPU assignments for vGPU-enabled VMware vSphere VMs.

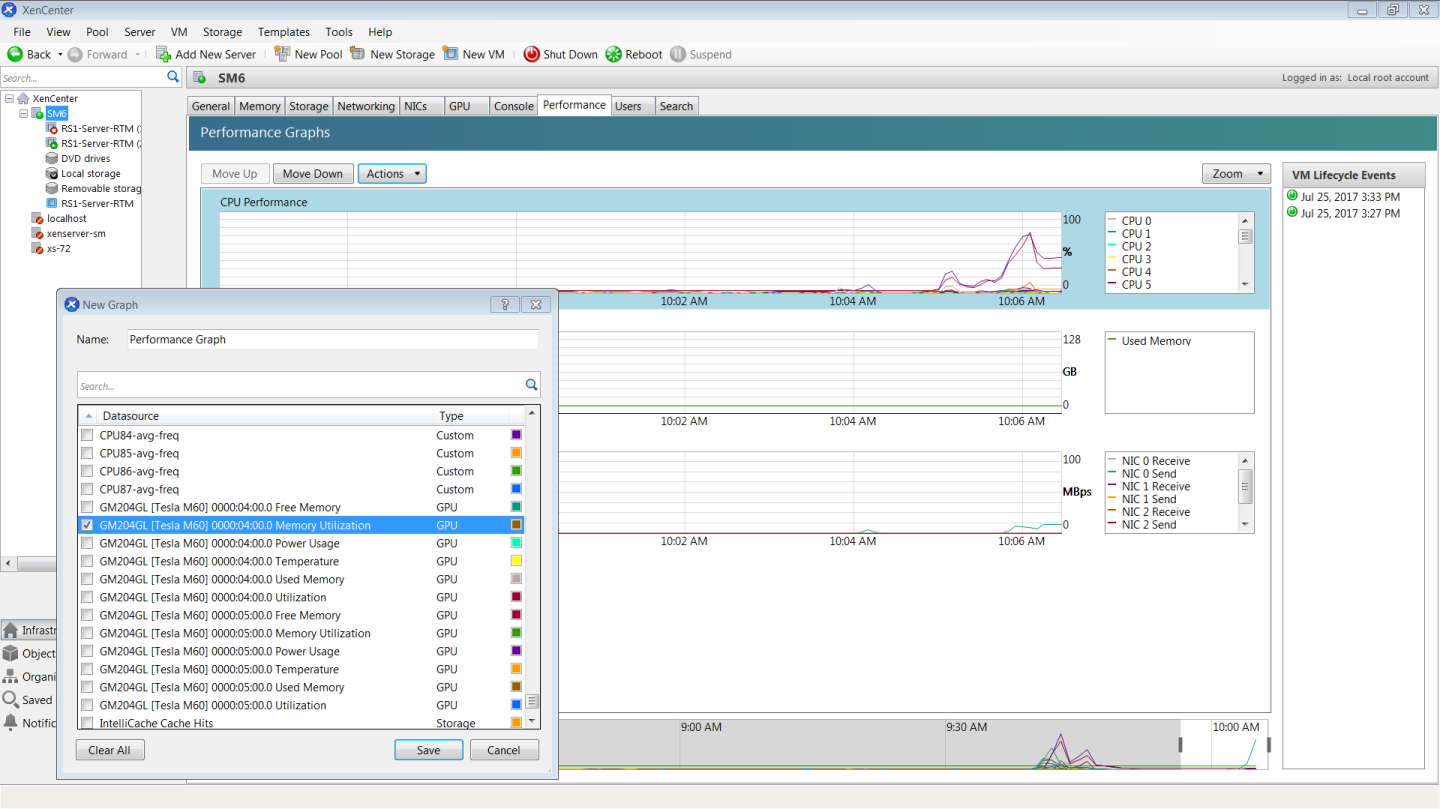

- Monitoring GPU Performance covers vGPU performance monitoring on XenServer.

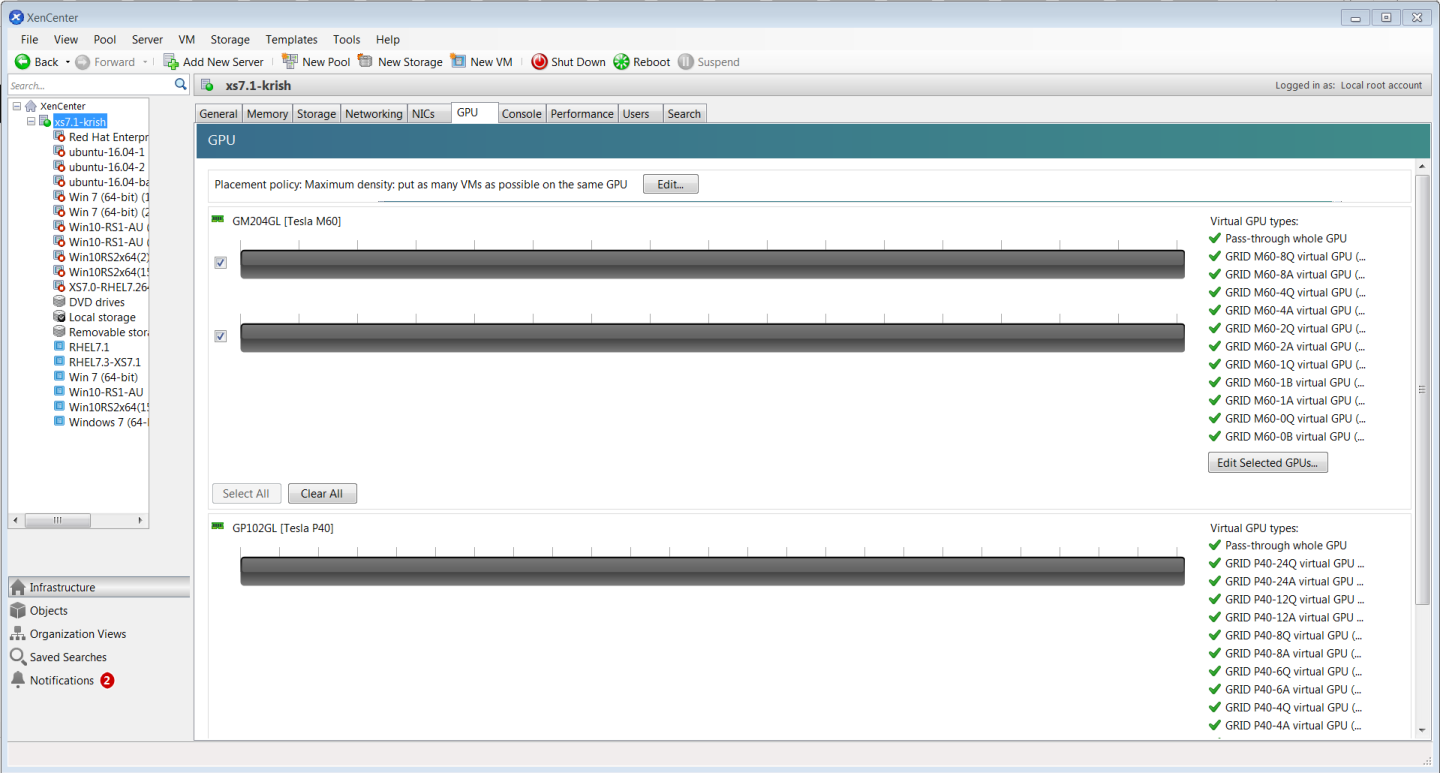

- XenServer vGPU Management covers vGPU management on XenServer.

- XenServer Performance Tuning covers vGPU performance optimization on XenServer.

- Troubleshooting provides guidance on troubleshooting.

1.3. NVIDIA vGPU Architecture

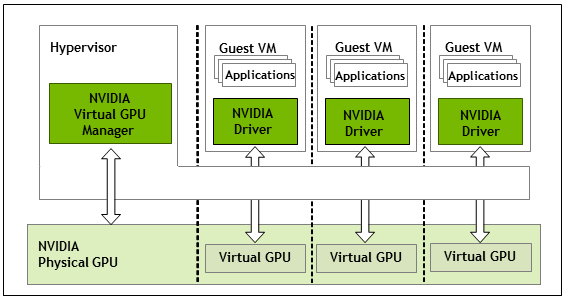

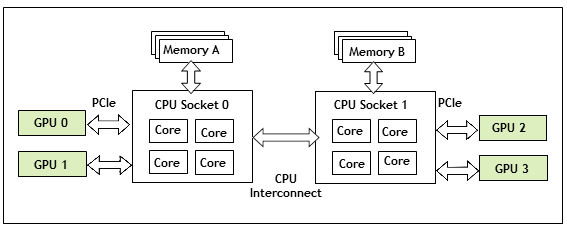

The high-level architecture of NVIDIA vGPU is illustrated in Figure 1. Under the control of the NVIDIA Virtual GPU Manager running under the hypervisor, NVIDIA physical GPUs are capable of supporting multiple virtual GPU devices (vGPUs) that can be assigned directly to guest VMs.

Guest VMs use NVIDIA vGPUs in the same manner as a physical GPU that has been passed through by the hypervisor: an NVIDIA driver loaded in the guest VM provides direct access to the GPU for performance-critical fast paths, and a paravirtualized interface to the NVIDIA Virtual GPU Manager is used for management operations that are not performance-critical.

Figure 1. NVIDIA vGPU System Architecture

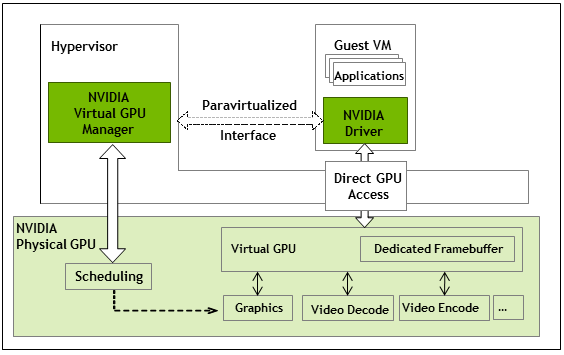

Each NVIDIA vGPU is analogous to a conventional GPU, having a fixed amount of GPU framebuffer, and one or more virtual display outputs or “heads”. The vGPU’s framebuffer is allocated out of the physical GPU’s framebuffer at the time the vGPU is created, and the vGPU retains exclusive use of that framebuffer until it is destroyed.

All vGPUs resident on a physical GPU share access to the GPU’s engines, including the graphics (3D), video decode, and video encode engines.
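The frame buffer rule above can be illustrated with a small bookkeeping model. This is a hypothetical sketch, not NVIDIA Virtual GPU Manager code: the `PhysicalGpu` class and its methods are illustrative names, the 8192-Mbyte size assumes one Tesla M60 physical GPU, and shared access to the graphics, decode, and encode engines is not modeled.

```python
class PhysicalGpu:
    """Hypothetical model of frame buffer bookkeeping on one physical GPU."""

    def __init__(self, framebuffer_mb: int) -> None:
        self.framebuffer_mb = framebuffer_mb
        self._allocations = {}  # vGPU id -> frame buffer reserved for it (Mbytes)
        self._next_id = 0

    @property
    def free_mb(self) -> int:
        """Frame buffer not yet reserved by any vGPU."""
        return self.framebuffer_mb - sum(self._allocations.values())

    def create_vgpu(self, vgpu_fb_mb: int) -> int:
        # The vGPU's frame buffer is reserved out of the physical frame buffer at creation time.
        if vgpu_fb_mb > self.free_mb:
            raise RuntimeError("not enough free frame buffer for this vGPU")
        vgpu_id = self._next_id
        self._next_id += 1
        self._allocations[vgpu_id] = vgpu_fb_mb
        return vgpu_id

    def destroy_vgpu(self, vgpu_id: int) -> None:
        # The reservation is held exclusively until the vGPU is destroyed.
        del self._allocations[vgpu_id]


gpu = PhysicalGpu(8192)  # assumption: 8192 Mbytes, one Tesla M60 physical GPU
first = gpu.create_vgpu(4096)
second = gpu.create_vgpu(4096)
print(gpu.free_mb)  # 0: a third 4096-Mbyte vGPU would raise RuntimeError
gpu.destroy_vgpu(first)
print(gpu.free_mb)  # 4096
```

Because the reservation is fixed at creation, free frame buffer changes only when a vGPU is created or destroyed, never while it runs.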

Figure 2. NVIDIA vGPU Internal Architecture

1.4. Supported GPUs

NVIDIA vGPU is available as a licensed product on supported Tesla GPUs. For a list of recommended server platforms and supported GPUs, consult the release notes for supported hypervisors at NVIDIA Virtual GPU Software Documentation.

1.4.1. Virtual GPU Types

The number of physical GPUs on a board depends on the board. Each physical GPU can support several different types of virtual GPU. Each virtual GPU type has a fixed amount of frame buffer, a fixed number of supported display heads, and a fixed maximum resolution. Virtual GPU types are grouped into series according to the class of workload at which they are targeted. Each series is identified by the last letter of the vGPU type name.

- Q-series virtual GPU types are targeted at designers and power users.

- B-series virtual GPU types are targeted at power users.

- A-series virtual GPU types are targeted at virtual applications users.

The number after the board type in the vGPU type name denotes the amount of frame buffer that is allocated to a vGPU of that type. For example, a vGPU of type M60-2Q is allocated 2048 Mbytes of frame buffer on a Tesla M60 board.
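The naming convention described above can be decoded mechanically. The following is a minimal sketch, assuming the pattern inferred from the type names in this guide's tables (board, hyphen, frame buffer digits, series letter, and an optional trailing number as in M60-2B4); `parse_vgpu_type` and `VgpuType` are hypothetical names, not part of any NVIDIA tool.

```python
import re
from typing import NamedTuple

# Assumed naming pattern, inferred from the type names in this guide's tables:
# <board>-<frame buffer digits><series letter>[optional digits], e.g. M60-2Q, P100C-1B, M60-2B4.
VGPU_TYPE_PATTERN = re.compile(r"^(?P<board>[A-Z0-9]+)-(?P<fb>\d+)(?P<series>[QBA])\d*$")


class VgpuType(NamedTuple):
    board: str
    framebuffer_mb: int
    series: str


def parse_vgpu_type(name: str) -> VgpuType:
    match = VGPU_TYPE_PATTERN.match(name)
    if match is None:
        raise ValueError(f"unrecognized vGPU type name: {name!r}")
    gbytes = int(match.group("fb"))
    # In this guide's tables, a frame buffer digit of 0 (for example, M60-0Q) means 512 Mbytes.
    framebuffer_mb = 512 if gbytes == 0 else gbytes * 1024
    return VgpuType(match.group("board"), framebuffer_mb, match.group("series"))


print(parse_vgpu_type("M60-2Q"))   # VgpuType(board='M60', framebuffer_mb=2048, series='Q')
print(parse_vgpu_type("M60-2B4"))  # VgpuType(board='M60', framebuffer_mb=2048, series='B')
```

The series is taken from the last letter rather than the last character, so types such as M60-2B4, which end in a digit, still parse as B-series.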

Due to their differing resource requirements, the maximum number of vGPUs that can be created simultaneously on a physical GPU varies according to the vGPU type. For example, a Tesla M60 board can support up to 4 M60-2Q vGPUs on each of its two physical GPUs, for a total of 8 vGPUs, but only 2 M60-4Q vGPUs, for a total of 4 vGPUs.
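The Tesla M60 figures above, and every row of the vGPU type tables in this guide, are consistent with a simple relationship: the maximum number of vGPUs per physical GPU equals the physical GPU's frame buffer divided by the vGPU type's frame buffer. This relationship is inferred from the tables rather than stated by NVIDIA, so treat the tables as authoritative. The sketch below assumes 8192 Mbytes per Tesla M60 physical GPU; `max_vgpus` is a hypothetical helper.

```python
from typing import Tuple


def max_vgpus(physical_fb_mb: int, vgpu_fb_mb: int, gpus_per_board: int) -> Tuple[int, int]:
    """Return (maximum vGPUs per physical GPU, maximum vGPUs per board).

    Rule inferred from the vGPU type tables, not taken from NVIDIA documentation:
    each physical GPU's frame buffer is divided among vGPUs of one type.
    """
    per_gpu = physical_fb_mb // vgpu_fb_mb
    return per_gpu, per_gpu * gpus_per_board


# Assumption: one Tesla M60 physical GPU has 8192 Mbytes (the frame buffer of M60-8Q).
M60_GPU_FB_MB = 8192
M60_GPUS_PER_BOARD = 2

print(max_vgpus(M60_GPU_FB_MB, 2048, M60_GPUS_PER_BOARD))  # (4, 8) for M60-2Q
print(max_vgpus(M60_GPU_FB_MB, 4096, M60_GPUS_PER_BOARD))  # (2, 4) for M60-4Q
```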

NVIDIA vGPU is a licensed product on all supported GPU boards. A software license is required to enable all vGPU features within the guest VM. The type of license required depends on the vGPU type.

- Q-series virtual GPU types require a Quadro vDWS license.

- B-series virtual GPU types require a GRID Virtual PC license but can also be used with a Quadro vDWS license.

- A-series vGPU types require a GRID Virtual Applications license.
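The series-to-license mapping above can be expressed as a lookup table. This is a minimal sketch; the `ALLOWED_LICENSES` dictionary and `license_enables` function are hypothetical names, and the edition strings are copied from the list above.

```python
# Series letter -> license editions that enable it, as listed above.
ALLOWED_LICENSES = {
    "Q": {"Quadro vDWS"},
    "B": {"GRID Virtual PC", "Quadro vDWS"},
    "A": {"GRID Virtual Applications"},
}


def license_enables(series: str, license_edition: str) -> bool:
    """Return True if license_edition enables all vGPU features for the given series."""
    return license_edition in ALLOWED_LICENSES.get(series, set())


print(license_enables("B", "Quadro vDWS"))      # True
print(license_enables("Q", "GRID Virtual PC"))  # False
```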

NVIDIA vGPUs with less than 1 Gbyte of frame buffer support only 1 virtual display head on a Windows 10 guest OS.
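A hypothetical helper applying the Windows 10 display-head rule above. `usable_display_heads` is an illustrative name, `max_heads` is assumed to come from the Virtual Display Heads column of the tables for the vGPU type, and the sketch models only this one rule.

```python
def usable_display_heads(framebuffer_mb: int, max_heads: int, guest_os: str) -> int:
    """Return how many virtual display heads a guest can use (hypothetical helper)."""
    # On a Windows 10 guest, vGPUs with less than 1 Gbyte (1024 Mbytes) of
    # frame buffer support only one virtual display head.
    if guest_os == "Windows 10" and framebuffer_mb < 1024:
        return min(max_heads, 1)
    return max_heads


print(usable_display_heads(512, 2, "Windows 10"))   # 1, for example M60-0Q
print(usable_display_heads(1024, 2, "Windows 10"))  # 2, for example M60-1Q
```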

1.4.1.1. Tesla M60 Virtual GPU Types

Physical GPUs per board: 2

| Virtual GPU Type | Intended Use Case | Frame Buffer (Mbytes) | Virtual Display Heads | Maximum Resolution per Display Head | Maximum vGPUs per GPU | Maximum vGPUs per Board | Required License Edition |
|---|---|---|---|---|---|---|---|
| M60-8Q | Designer | 8192 | 4 | 4096×2160 | 1 | 2 | Quadro vDWS |
| M60-4Q | Designer | 4096 | 4 | 4096×2160 | 2 | 4 | Quadro vDWS |
| M60-2Q | Designer | 2048 | 4 | 4096×2160 | 4 | 8 | Quadro vDWS |
| M60-1Q | Power User, Designer | 1024 | 2 | 4096×2160 | 8 | 16 | Quadro vDWS |
| M60-0Q | Power User, Designer | 512 | 2 | 2560×1600 | 16 | 32 | Quadro vDWS |
| M60-2B | Power User | 2048 | 2 | 4096×2160 | 4 | 8 | GRID Virtual PC or Quadro vDWS |
| Since 6.2: M60-2B4 | Power User | 2048 | 4 | 2560×1600 | 4 | 8 | GRID Virtual PC or Quadro vDWS |
| M60-1B | Power User | 1024 | 4 | 2560×1600 | 8 | 16 | GRID Virtual PC or Quadro vDWS |
| M60-0B | Power User | 512 | 2 | 2560×1600 | 16 | 32 | GRID Virtual PC or Quadro vDWS |
| M60-8A | Virtual Application User | 8192 | 1 | 1280×1024 | 1 | 2 | GRID Virtual Applications |
| M60-4A | Virtual Application User | 4096 | 1 | 1280×1024 | 2 | 4 | GRID Virtual Applications |
| M60-2A | Virtual Application User | 2048 | 1 | 1280×1024 | 4 | 8 | GRID Virtual Applications |
| M60-1A | Virtual Application User | 1024 | 1 | 1280×1024 | 8 | 16 | GRID Virtual Applications |

1.4.1.2. Tesla M10 Virtual GPU Types

Physical GPUs per board: 4

| Virtual GPU Type | Intended Use Case | Frame Buffer (Mbytes) | Virtual Display Heads | Maximum Resolution per Display Head | Maximum vGPUs per GPU | Maximum vGPUs per Board | Required License Edition |
|---|---|---|---|---|---|---|---|
| M10-8Q | Designer | 8192 | 4 | 4096×2160 | 1 | 4 | Quadro vDWS |
| M10-4Q | Designer | 4096 | 4 | 4096×2160 | 2 | 8 | Quadro vDWS |
| M10-2Q | Designer | 2048 | 4 | 4096×2160 | 4 | 16 | Quadro vDWS |
| M10-1Q | Power User, Designer | 1024 | 2 | 4096×2160 | 8 | 32 | Quadro vDWS |
| M10-0Q | Power User, Designer | 512 | 2 | 2560×1600 | 16 | 64 | Quadro vDWS |
| M10-2B | Power User | 2048 | 2 | 4096×2160 | 4 | 16 | GRID Virtual PC or Quadro vDWS |
| Since 6.2: M10-2B4 | Power User | 2048 | 4 | 2560×1600 | 4 | 16 | GRID Virtual PC or Quadro vDWS |
| M10-1B | Power User | 1024 | 4 | 2560×1600 | 8 | 32 | GRID Virtual PC or Quadro vDWS |
| M10-0B | Power User | 512 | 2 | 2560×1600 | 16 | 64 | GRID Virtual PC or Quadro vDWS |
| M10-8A | Virtual Application User | 8192 | 1 | 1280×1024 | 1 | 4 | GRID Virtual Applications |
| M10-4A | Virtual Application User | 4096 | 1 | 1280×1024 | 2 | 8 | GRID Virtual Applications |
| M10-2A | Virtual Application User | 2048 | 1 | 1280×1024 | 4 | 16 | GRID Virtual Applications |
| M10-1A | Virtual Application User | 1024 | 1 | 1280×1024 | 8 | 32 | GRID Virtual Applications |

1.4.1.3. Tesla M6 Virtual GPU Types

Physical GPUs per board: 1

| Virtual GPU Type | Intended Use Case | Frame Buffer (Mbytes) | Virtual Display Heads | Maximum Resolution per Display Head | Maximum vGPUs per GPU | Maximum vGPUs per Board | Required License Edition |
|---|---|---|---|---|---|---|---|
| M6-8Q | Designer | 8192 | 4 | 4096×2160 | 1 | 1 | Quadro vDWS |
| M6-4Q | Designer | 4096 | 4 | 4096×2160 | 2 | 2 | Quadro vDWS |
| M6-2Q | Designer | 2048 | 4 | 4096×2160 | 4 | 4 | Quadro vDWS |
| M6-1Q | Power User, Designer | 1024 | 2 | 4096×2160 | 8 | 8 | Quadro vDWS |
| M6-0Q | Power User, Designer | 512 | 2 | 2560×1600 | 16 | 16 | Quadro vDWS |
| M6-2B | Power User | 2048 | 2 | 4096×2160 | 4 | 4 | GRID Virtual PC or Quadro vDWS |
| Since 6.2: M6-2B4 | Power User | 2048 | 4 | 2560×1600 | 4 | 4 | GRID Virtual PC or Quadro vDWS |
| M6-1B | Power User | 1024 | 4 | 2560×1600 | 8 | 8 | GRID Virtual PC or Quadro vDWS |
| M6-0B | Power User | 512 | 2 | 2560×1600 | 16 | 16 | GRID Virtual PC or Quadro vDWS |
| M6-8A | Virtual Application User | 8192 | 1 | 1280×1024 | 1 | 1 | GRID Virtual Applications |
| M6-4A | Virtual Application User | 4096 | 1 | 1280×1024 | 2 | 2 | GRID Virtual Applications |
| M6-2A | Virtual Application User | 2048 | 1 | 1280×1024 | 4 | 4 | GRID Virtual Applications |
| M6-1A | Virtual Application User | 1024 | 1 | 1280×1024 | 8 | 8 | GRID Virtual Applications |

1.4.1.4. Tesla P100 PCIe 12GB Virtual GPU Types

Physical GPUs per board: 1

| Virtual GPU Type | Intended Use Case | Frame Buffer (Mbytes) | Virtual Display Heads | Maximum Resolution per Display Head | Maximum vGPUs per GPU | Maximum vGPUs per Board | Required License Edition |
|---|---|---|---|---|---|---|---|
| P100C-12Q | Designer | 12288 | 4 | 4096×2160 | 1 | 1 | Quadro vDWS |
| P100C-6Q | Designer | 6144 | 4 | 4096×2160 | 2 | 2 | Quadro vDWS |
| P100C-4Q | Designer | 4096 | 4 | 4096×2160 | 3 | 3 | Quadro vDWS |
| P100C-2Q | Designer | 2048 | 4 | 4096×2160 | 6 | 6 | Quadro vDWS |
| P100C-1Q | Power User, Designer | 1024 | 2 | 4096×2160 | 12 | 12 | Quadro vDWS |
| P100C-2B | Power User | 2048 | 2 | 4096×2160 | 6 | 6 | GRID Virtual PC or Quadro vDWS |
| Since 6.2: P100C-2B4 | Power User | 2048 | 4 | 2560×1600 | 6 | 6 | GRID Virtual PC or Quadro vDWS |
| P100C-1B | Power User | 1024 | 4 | 2560×1600 | 12 | 12 | GRID Virtual PC or Quadro vDWS |
| P100C-12A | Virtual Application User | 12288 | 1 | 1280×1024 | 1 | 1 | GRID Virtual Applications |
| P100C-6A | Virtual Application User | 6144 | 1 | 1280×1024 | 2 | 2 | GRID Virtual Applications |
| P100C-4A | Virtual Application User | 4096 | 1 | 1280×1024 | 3 | 3 | GRID Virtual Applications |
| P100C-2A | Virtual Application User | 2048 | 1 | 1280×1024 | 6 | 6 | GRID Virtual Applications |
| P100C-1A | Virtual Application User | 1024 | 1 | 1280×1024 | 12 | 12 | GRID Virtual Applications |

1.4.1.5. Tesla P100 PCIe 16GB Virtual GPU Types

Physical GPUs per board: 1

| Virtual GPU Type | Intended Use Case | Frame Buffer (Mbytes) | Virtual Display Heads | Maximum Resolution per Display Head | Maximum vGPUs per GPU | Maximum vGPUs per Board | Required License Edition |
|---|---|---|---|---|---|---|---|
| P100-16Q | Designer | 16384 | 4 | 4096×2160 | 1 | 1 | Quadro vDWS |
| P100-8Q | Designer | 8192 | 4 | 4096×2160 | 2 | 2 | Quadro vDWS |
| P100-4Q | Designer | 4096 | 4 | 4096×2160 | 4 | 4 | Quadro vDWS |
| P100-2Q | Designer | 2048 | 4 | 4096×2160 | 8 | 8 | Quadro vDWS |
| P100-1Q | Power User, Designer | 1024 | 2 | 4096×2160 | 16 | 16 | Quadro vDWS |
| P100-2B | Power User | 2048 | 2 | 4096×2160 | 8 | 8 | GRID Virtual PC or Quadro vDWS |
| Since 6.2: P100-2B4 | Power User | 2048 | 4 | 2560×1600 | 8 | 8 | GRID Virtual PC or Quadro vDWS |
| P100-1B | Power User | 1024 | 4 | 2560×1600 | 16 | 16 | GRID Virtual PC or Quadro vDWS |
| P100-16A | Virtual Application User | 16384 | 1 | 1280×1024 | 1 | 1 | GRID Virtual Applications |
| P100-8A | Virtual Application User | 8192 | 1 | 1280×1024 | 2 | 2 | GRID Virtual Applications |
| P100-4A | Virtual Application User | 4096 | 1 | 1280×1024 | 4 | 4 | GRID Virtual Applications |
| P100-2A | Virtual Application User | 2048 | 1 | 1280×1024 | 8 | 8 | GRID Virtual Applications |
| P100-1A | Virtual Application User | 1024 | 1 | 1280×1024 | 16 | 16 | GRID Virtual Applications |

1.4.1.6. Tesla P100 SXM2 Virtual GPU Types

Physical GPUs per board: 1

| Virtual GPU Type | Intended Use Case | Frame Buffer (Mbytes) | Virtual Display Heads | Maximum Resolution per Display Head | Maximum vGPUs per GPU | Maximum vGPUs per Board | Required License Edition |
|---|---|---|---|---|---|---|---|
| P100X-16Q | Designer | 16384 | 4 | 4096×2160 | 1 | 1 | Quadro vDWS |
| P100X-8Q | Designer | 8192 | 4 | 4096×2160 | 2 | 2 | Quadro vDWS |
| P100X-4Q | Designer | 4096 | 4 | 4096×2160 | 4 | 4 | Quadro vDWS |
| P100X-2Q | Designer | 2048 | 4 | 4096×2160 | 8 | 8 | Quadro vDWS |
| P100X-1Q | Power User, Designer | 1024 | 2 | 4096×2160 | 16 | 16 | Quadro vDWS |
| P100X-2B | Power User | 2048 | 2 | 4096×2160 | 8 | 8 | GRID Virtual PC or Quadro vDWS |
| Since 6.2: P100X-2B4 | Power User | 2048 | 4 | 2560×1600 | 8 | 8 | GRID Virtual PC or Quadro vDWS |
| P100X-1B | Power User | 1024 | 4 | 2560×1600 | 16 | 16 | GRID Virtual PC or Quadro vDWS |
| P100X-16A | Virtual Application User | 16384 | 1 | 1280×1024 | 1 | 1 | GRID Virtual Applications |
| P100X-8A | Virtual Application User | 8192 | 1 | 1280×1024 | 2 | 2 | GRID Virtual Applications |
| P100X-4A | Virtual Application User | 4096 | 1 | 1280×1024 | 4 | 4 | GRID Virtual Applications |
| P100X-2A | Virtual Application User | 2048 | 1 | 1280×1024 | 8 | 8 | GRID Virtual Applications |
| P100X-1A | Virtual Application User | 1024 | 1 | 1280×1024 | 16 | 16 | GRID Virtual Applications |

1.4.1.7. Tesla P40 Virtual GPU Types

Physical GPUs per board: 1

| Virtual GPU Type | Intended Use Case | Frame Buffer (Mbytes) | Virtual Display Heads | Maximum Resolution per Display Head | Maximum vGPUs per GPU | Maximum vGPUs per Board | Required License Edition |
|---|---|---|---|---|---|---|---|
| P40-24Q | Designer | 24576 | 4 | 4096×2160 | 1 | 1 | Quadro vDWS |
| P40-12Q | Designer | 12288 | 4 | 4096×2160 | 2 | 2 | Quadro vDWS |
| P40-8Q | Designer | 8192 | 4 | 4096×2160 | 3 | 3 | Quadro vDWS |
| P40-6Q | Designer | 6144 | 4 | 4096×2160 | 4 | 4 | Quadro vDWS |
| P40-4Q | Designer | 4096 | 4 | 4096×2160 | 6 | 6 | Quadro vDWS |
| P40-3Q | Designer | 3072 | 4 | 4096×2160 | 8 | 8 | Quadro vDWS |
| P40-2Q | Designer | 2048 | 4 | 4096×2160 | 12 | 12 | Quadro vDWS |
| P40-1Q | Power User, Designer | 1024 | 2 | 4096×2160 | 24 | 24 | Quadro vDWS |
| P40-2B | Power User | 2048 | 2 | 4096×2160 | 12 | 12 | GRID Virtual PC or Quadro vDWS |
| Since 6.2: P40-2B4 | Power User | 2048 | 4 | 2560×1600 | 12 | 12 | GRID Virtual PC or Quadro vDWS |
| P40-1B | Power User | 1024 | 4 | 2560×1600 | 24 | 24 | GRID Virtual PC or Quadro vDWS |
| P40-24A | Virtual Application User | 24576 | 1 | 1280×1024 | 1 | 1 | GRID Virtual Applications |
| P40-12A | Virtual Application User | 12288 | 1 | 1280×1024 | 2 | 2 | GRID Virtual Applications |
| P40-8A | Virtual Application User | 8192 | 1 | 1280×1024 | 3 | 3 | GRID Virtual Applications |
| P40-6A | Virtual Application User | 6144 | 1 | 1280×1024 | 4 | 4 | GRID Virtual Applications |
| P40-4A | Virtual Application User | 4096 | 1 | 1280×1024 | 6 | 6 | GRID Virtual Applications |
| P40-3A | Virtual Application User | 3072 | 1 | 1280×1024 | 8 | 8 | GRID Virtual Applications |
| P40-2A | Virtual Application User | 2048 | 1 | 1280×1024 | 12 | 12 | GRID Virtual Applications |
| P40-1A | Virtual Application User | 1024 | 1 | 1280×1024 | 24 | 24 | GRID Virtual Applications |

1.4.1.8. Tesla P6 Virtual GPU Types

Physical GPUs per board: 1

| Virtual GPU Type | Intended Use Case | Frame Buffer (Mbytes) | Virtual Display Heads | Maximum Resolution per Display Head | Maximum vGPUs per GPU | Maximum vGPUs per Board | Required License Edition |
|---|---|---|---|---|---|---|---|
| P6-16Q | Designer | 16384 | 4 | 4096×2160 | 1 | 1 | Quadro vDWS |
| P6-8Q | Designer | 8192 | 4 | 4096×2160 | 2 | 2 | Quadro vDWS |
| P6-4Q | Designer | 4096 | 4 | 4096×2160 | 4 | 4 | Quadro vDWS |
| P6-2Q | Designer | 2048 | 4 | 4096×2160 | 8 | 8 | Quadro vDWS |
| P6-1Q | Power User, Designer | 1024 | 2 | 4096×2160 | 16 | 16 | Quadro vDWS |
| P6-2B | Power User | 2048 | 2 | 4096×2160 | 8 | 8 | GRID Virtual PC or Quadro vDWS |
| Since 6.2: P6-2B4 | Power User | 2048 | 4 | 2560×1600 | 8 | 8 | GRID Virtual PC or Quadro vDWS |
| P6-1B | Power User | 1024 | 4 | 2560×1600 | 16 | 16 | GRID Virtual PC or Quadro vDWS |
| P6-16A | Virtual Application User | 16384 | 1 | 1280×1024 | 1 | 1 | GRID Virtual Applications |
| P6-8A | Virtual Application User | 8192 | 1 | 1280×1024 | 2 | 2 | GRID Virtual Applications |
| P6-4A | Virtual Application User | 4096 | 1 | 1280×1024 | 4 | 4 | GRID Virtual Applications |
| P6-2A | Virtual Application User | 2048 | 1 | 1280×1024 | 8 | 8 | GRID Virtual Applications |
| P6-1A | Virtual Application User | 1024 | 1 | 1280×1024 | 16 | 16 | GRID Virtual Applications |

1.4.1.9. Tesla P4 Virtual GPU Types

Physical GPUs per board: 1

| Virtual GPU Type | Intended Use Case | Frame Buffer (Mbytes) | Virtual Display Heads | Maximum Resolution per Display Head | Maximum vGPUs per GPU | Maximum vGPUs per Board | Required License Edition |
|---|---|---|---|---|---|---|---|
| P4-8Q | Designer | 8192 | 4 | 4096×2160 | 1 | 1 | Quadro vDWS |
| P4-4Q | Designer | 4096 | 4 | 4096×2160 | 2 | 2 | Quadro vDWS |
| P4-2Q | Designer | 2048 | 4 | 4096×2160 | 4 | 4 | Quadro vDWS |
| P4-1Q | Power User, Designer | 1024 | 2 | 4096×2160 | 8 | 8 | Quadro vDWS |
| P4-2B | Power User | 2048 | 2 | 4096×2160 | 4 | 4 | GRID Virtual PC or Quadro vDWS |
| Since 6.2: P4-2B4 | Power User | 2048 | 4 | 2560×1600 | 4 | 4 | GRID Virtual PC or Quadro vDWS |
| P4-1B | Power User | 1024 | 4 | 2560×1600 | 8 | 8 | GRID Virtual PC or Quadro vDWS |
| P4-8A | Virtual Application User | 8192 | 1 | 1280×1024 | 1 | 1 | GRID Virtual Applications |
| P4-4A | Virtual Application User | 4096 | 1 | 1280×1024 | 2 | 2 | GRID Virtual Applications |
| P4-2A | Virtual Application User | 2048 | 1 | 1280×1024 | 4 | 4 | GRID Virtual Applications |
| P4-1A | Virtual Application User | 1024 | 1 | 1280×1024 | 8 | 8 | GRID Virtual Applications |

1.4.1.10. Tesla V100 SXM2 Virtual GPU Types

Physical GPUs per board: 1

| Virtual GPU Type | Intended Use Case | Frame Buffer (Mbytes) | Virtual Display Heads | Maximum Resolution per Display Head | Maximum vGPUs per GPU | Maximum vGPUs per Board | Required License Edition |
|---|---|---|---|---|---|---|---|
| V100X-16Q | Designer | 16384 | 4 | 4096×2160 | 1 | 1 | Quadro vDWS |
| V100X-8Q | Designer | 8192 | 4 | 4096×2160 | 2 | 2 | Quadro vDWS |
| V100X-4Q | Designer | 4096 | 4 | 4096×2160 | 4 | 4 | Quadro vDWS |
| V100X-2Q | Designer | 2048 | 4 | 4096×2160 | 8 | 8 | Quadro vDWS |
| V100X-1Q | Power User, Designer | 1024 | 2 | 4096×2160 | 16 | 16 | Quadro vDWS |
| V100X-2B | Power User | 2048 | 2 | 4096×2160 | 8 | 8 | GRID Virtual PC or Quadro vDWS |
| Since 6.2: V100X-2B4 | Power User | 2048 | 4 | 2560×1600 | 8 | 8 | GRID Virtual PC or Quadro vDWS |
| V100X-1B | Power User | 1024 | 4 | 2560×1600 | 16 | 16 | GRID Virtual PC or Quadro vDWS |
| V100X-16A | Virtual Application User | 16384 | 1 | 1280×1024 | 1 | 1 | GRID Virtual Applications |
| V100X-8A | Virtual Application User | 8192 | 1 | 1280×1024 | 2 | 2 | GRID Virtual Applications |
| V100X-4A | Virtual Application User | 4096 | 1 | 1280×1024 | 4 | 4 | GRID Virtual Applications |
| V100X-2A | Virtual Application User | 2048 | 1 | 1280×1024 | 8 | 8 | GRID Virtual Applications |
| V100X-1A | Virtual Application User | 1024 | 1 | 1280×1024 | 16 | 16 | GRID Virtual Applications |

1.4.1.11. Tesla V100 SXM2 32GB Virtual GPU Types

Physical GPUs per board: 1

| Virtual GPU Type | Intended Use Case | Frame Buffer (Mbytes) | Virtual Display Heads | Maximum Resolution per Display Head | Maximum vGPUs per GPU | Maximum vGPUs per Board | Required License Edition |
|---|---|---|---|---|---|---|---|
| V100DX-32Q | Designer | 32768 | 4 | 4096×2160 | 1 | 1 | Quadro vDWS |
| V100DX-16Q | Designer | 16384 | 4 | 4096×2160 | 2 | 2 | Quadro vDWS |
| V100DX-8Q | Designer | 8192 | 4 | 4096×2160 | 4 | 4 | Quadro vDWS |
| V100DX-4Q | Designer | 4096 | 4 | 4096×2160 | 8 | 8 | Quadro vDWS |
| V100DX-2Q | Designer | 2048 | 4 | 4096×2160 | 16 | 16 | Quadro vDWS |
| V100DX-1Q | Power User, Designer | 1024 | 2 | 4096×2160 | 32 | 32 | Quadro vDWS |
| V100DX-2B | Power User | 2048 | 2 | 4096×2160 | 16 | 16 | GRID Virtual PC or Quadro vDWS |
| Since 6.2: V100DX-2B4 | Power User | 2048 | 4 | 2560×1600 | 16 | 16 | GRID Virtual PC or Quadro vDWS |
| V100DX-1B | Power User | 1024 | 4 | 2560×1600 | 32 | 32 | GRID Virtual PC or Quadro vDWS |
| V100DX-32A | Virtual Application User | 32768 | 1 | 1280×1024 | 1 | 1 | GRID Virtual Applications |
| V100DX-16A | Virtual Application User | 16384 | 1 | 1280×1024 | 2 | 2 | GRID Virtual Applications |
| V100DX-8A | Virtual Application User | 8192 | 1 | 1280×1024 | 4 | 4 | GRID Virtual Applications |
| V100DX-4A | Virtual Application User | 4096 | 1 | 1280×1024 | 8 | 8 | GRID Virtual Applications |
| V100DX-2A | Virtual Application User | 2048 | 1 | 1280×1024 | 16 | 16 | GRID Virtual Applications |
| V100DX-1A | Virtual Application User | 1024 | 1 | 1280×1024 | 32 | 32 | GRID Virtual Applications |

1.4.1.12. Tesla V100 PCIe Virtual GPU Types

Physical GPUs per board: 1

| Virtual GPU Type | Intended Use Case | Frame Buffer (Mbytes) | Virtual Display Heads | Maximum Resolution per Display Head | Maximum vGPUs per GPU | Maximum vGPUs per Board | Required License Edition |
|---|---|---|---|---|---|---|---|
| V100-16Q | Designer | 16384 | 4 | 4096×2160 | 1 | 1 | Quadro vDWS |
| V100-8Q | Designer | 8192 | 4 | 4096×2160 | 2 | 2 | Quadro vDWS |
| V100-4Q | Designer | 4096 | 4 | 4096×2160 | 4 | 4 | Quadro vDWS |
| V100-2Q | Designer | 2048 | 4 | 4096×2160 | 8 | 8 | Quadro vDWS |
| V100-1Q | Power User, Designer | 1024 | 2 | 4096×2160 | 16 | 16 | Quadro vDWS |
| V100-2B | Power User | 2048 | 2 | 4096×2160 | 8 | 8 | GRID Virtual PC or Quadro vDWS |
| Since 6.2: V100-2B4 | Power User | 2048 | 4 | 2560×1600 | 8 | 8 | GRID Virtual PC or Quadro vDWS |
| V100-1B | Power User | 1024 | 4 | 2560×1600 | 16 | 16 | GRID Virtual PC or Quadro vDWS |
| V100-16A | Virtual Application User | 16384 | 1 | 1280×1024 | 1 | 1 | GRID Virtual Applications |
| V100-8A | Virtual Application User | 8192 | 1 | 1280×1024 | 2 | 2 | GRID Virtual Applications |
| V100-4A | Virtual Application User | 4096 | 1 | 1280×1024 | 4 | 4 | GRID Virtual Applications |
| V100-2A | Virtual Application User | 2048 | 1 | 1280×1024 | 8 | 8 | GRID Virtual Applications |
| V100-1A | Virtual Application User | 1024 | 1 | 1280×1024 | 16 | 16 | GRID Virtual Applications |

1.4.1.13. Tesla V100 PCIe 32GB Virtual GPU Types

Physical GPUs per board: 1

| Virtual GPU Type | Intended Use Case | Frame Buffer (Mbytes) | Virtual Display Heads | Maximum Resolution per Display Head | Maximum vGPUs per GPU | Maximum vGPUs per Board | Required License Edition |
|---|---|---|---|---|---|---|---|
| V100D-32Q | Designer | 32768 | 4 | 4096×2160 | 1 | 1 | Quadro vDWS |
| V100D-16Q | Designer | 16384 | 4 | 4096×2160 | 2 | 2 | Quadro vDWS |
| V100D-8Q | Designer | 8192 | 4 | 4096×2160 | 4 | 4 | Quadro vDWS |
| V100D-4Q | Designer | 4096 | 4 | 4096×2160 | 8 | 8 | Quadro vDWS |
| V100D-2Q | Designer | 2048 | 4 | 4096×2160 | 16 | 16 | Quadro vDWS |
| V100D-1Q | Power User, Designer | 1024 | 2 | 4096×2160 | 32 | 32 | Quadro vDWS |
| V100D-2B | Power User | 2048 | 2 | 4096×2160 | 16 | 16 | GRID Virtual PC or Quadro vDWS |
| Since 6.2: V100D-2B4 | Power User | 2048 | 4 | 2560×1600 | 16 | 16 | GRID Virtual PC or Quadro vDWS |
| V100D-1B | Power User | 1024 | 4 | 2560×1600 | 32 | 32 | GRID Virtual PC or Quadro vDWS |
| V100D-32A | Virtual Application User | 32768 | 1 | 1280×1024 | 1 | 1 | GRID Virtual Applications |
| V100D-16A | Virtual Application User | 16384 | 1 | 1280×1024 | 2 | 2 | GRID Virtual Applications |
| V100D-8A | Virtual Application User | 8192 | 1 | 1280×1024 | 4 | 4 | GRID Virtual Applications |
| V100D-4A | Virtual Application User | 4096 | 1 | 1280×1024 | 8 | 8 | GRID Virtual Applications |
| V100D-2A | Virtual Application User | 2048 | 1 | 1280×1024 | 16 | 16 | GRID Virtual Applications |
| V100D-1A | Virtual Application User | 1024 | 1 | 1280×1024 | 32 | 32 | GRID Virtual Applications |

1.4.1.14. Tesla V100 FHHL Virtual GPU Types

Physical GPUs per board: 1

| Virtual GPU Type | Intended Use Case | Frame Buffer (Mbytes) | Virtual Display Heads | Maximum Resolution per Display Head | Maximum vGPUs per GPU | Maximum vGPUs per Board | Required License Edition |
|---|---|---|---|---|---|---|---|
| V100L-16Q | Designer | 16384 | 4 | 4096×2160 | 1 | 1 | Quadro vDWS |
| V100L-8Q | Designer | 8192 | 4 | 4096×2160 | 2 | 2 | Quadro vDWS |
| V100L-4Q | Designer | 4096 | 4 | 4096×2160 | 4 | 4 | Quadro vDWS |
| V100L-2Q | Designer | 2048 | 4 | 4096×2160 | 8 | 8 | Quadro vDWS |
| V100L-1Q | Power User, Designer | 1024 | 2 | 4096×2160 | 16 | 16 | Quadro vDWS |
| V100L-2B | Power User | 2048 | 2 | 4096×2160 | 8 | 8 | GRID Virtual PC or Quadro vDWS |
| Since 6.2: V100L-2B4 | Power User | 2048 | 4 | 2560×1600 | 8 | 8 | GRID Virtual PC or Quadro vDWS |
| V100L-1B | Power User | 1024 | 4 | 2560×1600 | 16 | 16 | GRID Virtual PC or Quadro vDWS |
| V100L-16A | Virtual Application User | 16384 | 1 | 1280×1024 | 1 | 1 | GRID Virtual Applications |
| V100L-8A | Virtual Application User | 8192 | 1 | 1280×1024 | 2 | 2 | GRID Virtual Applications |
| V100L-4A | Virtual Application User | 4096 | 1 | 1280×1024 | 4 | 4 | GRID Virtual Applications |
| V100L-2A | Virtual Application User | 2048 | 1 | 1280×1024 | 8 | 8 | GRID Virtual Applications |
| V100L-1A | Virtual Application User | 1024 | 1 | 1280×1024 | 16 | 16 | GRID Virtual Applications |

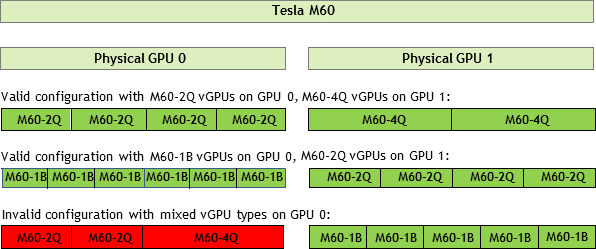

1.4.2. Homogeneous Virtual GPUs

This release of NVIDIA vGPU supports only homogeneous virtual GPUs. At any given time, the virtual GPUs resident on a single physical GPU must be all of the same type. However, this restriction doesn’t extend across physical GPUs on the same card. Different physical GPUs on the same card may host different types of virtual GPU at the same time, provided that the vGPU types on any one physical GPU are the same.

For example, a Tesla M60 card has two physical GPUs, and can support several types of virtual GPU. Figure 3 shows the following examples of valid and invalid virtual GPU configurations on Tesla M60:

- A valid configuration with M60-2Q vGPUs on GPU 0 and M60-4Q vGPUs on GPU 1

- A valid configuration with M60-1B vGPUs on GPU 0 and M60-2Q vGPUs on GPU 1

- An invalid configuration with mixed vGPU types on GPU 0

Figure 3. Example vGPU Configurations on Tesla M60
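The homogeneity rule can be checked with a few lines of code. This is a hypothetical sketch (`is_valid_configuration` is an illustrative name) that represents a card as a mapping from physical GPU index to the vGPU types resident on it; it checks only homogeneity, not frame buffer capacity.

```python
from typing import Dict, List


def is_valid_configuration(card: Dict[int, List[str]]) -> bool:
    """Return True if every physical GPU on the card hosts vGPUs of only one type.

    `card` maps a physical GPU index to the vGPU types resident on it; different
    physical GPUs may host different types.
    """
    return all(len(set(vgpu_types)) <= 1 for vgpu_types in card.values())


# The three Tesla M60 examples listed above.
print(is_valid_configuration({0: ["M60-2Q"] * 4, 1: ["M60-4Q"] * 2}))  # True
print(is_valid_configuration({0: ["M60-1B"] * 8, 1: ["M60-2Q"] * 4}))  # True
print(is_valid_configuration({0: ["M60-2Q", "M60-4Q"], 1: []}))        # False
```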

1.5. Guest VM Support

NVIDIA vGPU supports Windows and Linux guest VM operating systems. The supported vGPU types depend on the guest VM OS.

For details of the supported releases of Windows and Linux, and for further information on supported configurations, see the driver release notes for your hypervisor at NVIDIA Virtual GPU Software Documentation.

1.5.1. Windows Guest VM Support

Windows guest VMs are supported on all NVIDIA vGPU types.

1.5.2. Linux Guest VM Support

64-bit Linux guest VMs are supported only on Q-series and B-series NVIDIA vGPUs.
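The guest OS rules in this section reduce to a small predicate. This is a minimal sketch under assumed names: `guest_supported` and its string arguments are hypothetical, and the specific supported Windows and Linux releases are listed only in the release notes.

```python
def guest_supported(guest_family: str, series: str, is_64_bit: bool = True) -> bool:
    """Return True if a guest of this OS family can use a vGPU of the given series letter."""
    if guest_family == "Windows":
        # Windows guests are supported on all vGPU types.
        return series in {"Q", "B", "A"}
    if guest_family == "Linux":
        # Only 64-bit Linux guests, and only on Q-series and B-series vGPUs.
        return is_64_bit and series in {"Q", "B"}
    return False


print(guest_supported("Windows", "A"))                 # True
print(guest_supported("Linux", "A"))                   # False
print(guest_supported("Linux", "Q", is_64_bit=False))  # False
```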

1.6. NVIDIA vGPU Software Features

NVIDIA vGPU software includes Quadro vDWS, GRID Virtual PC, and GRID Virtual Applications.

1.6.1. API Support on NVIDIA vGPU

NVIDIA vGPU includes support for the following APIs:

- DirectX 12, Direct2D, and DirectX Video Acceleration (DXVA)

- OpenGL 4.5

- NVIDIA vGPU software SDK (remote graphics acceleration)

- Vulkan 1.0

1.6.2. NVIDIA CUDA Toolkit and OpenCL Support on NVIDIA vGPU Software

OpenCL and CUDA applications are supported on the following NVIDIA vGPU types:

- The 8Q vGPU type on Tesla M6, Tesla M10, and Tesla M60 GPUs

- All Q-series vGPU types on the following GPUs:

  - Tesla P4

  - Tesla P6

  - Tesla P40

  - Tesla P100 SXM2

  - Tesla P100 PCIe 16 GB

  - Tesla P100 PCIe 12 GB

  - Tesla V100 SXM2

  - Tesla V100 PCIe

  - Tesla V100 FHHL
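The support list above can be encoded as a lookup on the vGPU type name. This sketch assumes the board prefixes used in this guide's type tables map to the GPUs listed (P100X for Tesla P100 SXM2, P100 for Tesla P100 PCIe 16 GB, P100C for Tesla P100 PCIe 12 GB, V100X for Tesla V100 SXM2, V100 for Tesla V100 PCIe, V100L for Tesla V100 FHHL). The list does not name the 32GB V100 variants (V100DX, V100D), so the sketch returns False for them; check the release notes for your release. `supports_cuda` is a hypothetical name.

```python
# Board prefixes (from this guide's vGPU type names) whose Q-series types all support CUDA and OpenCL.
ALL_Q_SERIES_BOARDS = {"P4", "P6", "P40", "P100X", "P100", "P100C", "V100X", "V100", "V100L"}
# Boards on which only the 8Q type supports CUDA and OpenCL.
EIGHT_Q_ONLY_BOARDS = {"M6", "M10", "M60"}


def supports_cuda(vgpu_type: str) -> bool:
    """Return True if CUDA and OpenCL applications are supported on this vGPU type."""
    board, _, profile = vgpu_type.partition("-")
    if board in EIGHT_Q_ONLY_BOARDS:
        return profile == "8Q"
    # Only Q-series profiles (ending in "Q") qualify on the other listed boards.
    return board in ALL_Q_SERIES_BOARDS and profile.endswith("Q")


print(supports_cuda("M60-8Q"))   # True
print(supports_cuda("M60-4Q"))   # False
print(supports_cuda("P40-24Q"))  # True
print(supports_cuda("P40-2B"))   # False
```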

NVIDIA vGPU does not support the following NVIDIA CUDA Toolkit features:

- Unified Memory

- Dynamic page retirement

- Error-correcting code (ECC)

- Peer-to-peer

- GPUDirect remote direct memory access (RDMA)

- Development tools such as IDEs, debuggers, profilers, and utilities as listed under CUDA Toolkit Major Components in CUDA Toolkit 9.1 Release Notes for Windows, Linux, and Mac OS

These features are supported in GPU pass-through mode and in bare-metal deployments.

For more information about NVIDIA CUDA Toolkit, see CUDA Toolkit 9.1 Documentation.

1.6.3. Additional Quadro vDWS Features

In addition to the features of GRID Virtual PC and GRID Virtual Applications, Quadro vDWS provides the following features:

- Workstation-specific graphics features and accelerations

- Certified drivers for professional applications

- GPU pass-through for workstation or professional 3D graphics

In pass-through mode, Quadro vDWS supports up to four virtual display heads at 4K resolution.

The process for installing and configuring NVIDIA Virtual GPU Manager depends on the hypervisor that you are using. After you complete this process, you can install the display drivers for your guest OS and license any NVIDIA vGPU software licensed products that you are using.

2.1. Prerequisites for Using NVIDIA vGPU

Before proceeding, ensure that these prerequisites are met:

- You have a server platform that is capable of hosting your chosen hypervisor and NVIDIA GPUs that support NVIDIA vGPU software.

- One or more NVIDIA GPUs that support NVIDIA vGPU software are installed in your server platform.

- You have downloaded the NVIDIA vGPU software package for your chosen hypervisor, which consists of the following software:

  - NVIDIA Virtual GPU Manager for your hypervisor

  - NVIDIA vGPU software graphics drivers for supported guest operating systems

- The following software is installed according to the instructions in the software vendor's documentation:

  - Your chosen hypervisor, for example, Citrix XenServer, Red Hat Enterprise Linux KVM, Red Hat Virtualization (RHV), or VMware vSphere Hypervisor (ESXi)

  - The software for managing your chosen hypervisor, for example, the Citrix XenCenter management GUI or VMware vCenter Server

  - The virtual desktop software that you will use with virtual machines (VMs) running NVIDIA Virtual GPU, for example, Citrix XenDesktop or VMware Horizon

  Note: If you are using VMware vSphere Hypervisor (ESXi), ensure that the ESXi host on which you will configure a VM with NVIDIA vGPU is not a member of a VMware Distributed Resource Scheduler (DRS) cluster.

- A VM to be enabled with vGPU is created.

  Note: Multiple vGPUs in a VM are not supported.

- Your chosen guest OS is installed in the VM.

For information about supported hardware and software, and any known issues for this release of NVIDIA vGPU software, refer to the Release Notes for your chosen hypervisor:

- Virtual GPU Software for Citrix XenServer Release Notes

- Virtual GPU Software for Red Hat Enterprise Linux with KVM Release Notes

- Virtual GPU Software for VMware vSphere Release Notes

2.2. Switching the Mode of a Tesla M60 or M6 GPU

Tesla M60 and M6 GPUs support compute mode and graphics mode. NVIDIA vGPU requires GPUs that support both modes to operate in graphics mode.

Only Tesla M60 and M6 GPUs require and support mode switching. Other GPUs that support NVIDIA vGPU do not require or support mode switching.

Recent Tesla M60 GPUs and M6 GPUs are supplied in graphics mode. However, your GPU might be in compute mode if it is an older Tesla M60 GPU or M6 GPU, or if its mode has previously been changed.

If your GPU supports both modes but is in compute mode, you must use the gpumodeswitch tool to change the mode of the GPU to graphics mode. If you are unsure which mode your GPU is in, use the gpumodeswitch tool to find out the mode.

For more information, see gpumodeswitch User Guide.

2.3. Installing and Configuring the NVIDIA Virtual GPU Manager for Citrix XenServer

The following topics step you through the process of setting up a single Citrix XenServer VM to use NVIDIA vGPU. After the process is complete, you can install the graphics driver for your guest OS and license any NVIDIA vGPU software licensed products that you are using.

These setup steps assume familiarity with the XenServer skills covered in XenServer Basics.

2.3.1. Installing and Updating the NVIDIA Virtual GPU Manager for XenServer

The NVIDIA Virtual GPU Manager runs in XenServer’s dom0. The NVIDIA Virtual GPU Manager for Citrix XenServer is supplied as an RPM file and as a Supplemental Pack.

NVIDIA Virtual GPU Manager and Guest VM drivers must be matched from the same main driver branch. If you update vGPU Manager to a release from another driver branch, guest VMs will boot with vGPU disabled until their guest vGPU driver is updated to match the vGPU Manager version. Consult Virtual GPU Software for Citrix XenServer Release Notes for further details.

2.3.1.1. Installing the RPM package for XenServer

The RPM file must be copied to XenServer’s dom0 prior to installation (see Copying files to dom0).

- Use the rpm command to install the package:

[root@xenserver ~]# rpm -iv NVIDIA-vGPU-xenserver-7.0-390.113.x86_64.rpm
Preparing packages for installation...
NVIDIA-vGPU-xenserver-7.0-390.113
[root@xenserver ~]#

- Reboot the XenServer platform:

[root@xenserver ~]# shutdown -r now
Broadcast message from root (pts/1) (Fri Oct 12 14:24:11 2020):
The system is going down for reboot NOW!
[root@xenserver ~]#

2.3.1.2. Updating the RPM Package for XenServer

If an existing NVIDIA Virtual GPU Manager is already installed on the system and you want to upgrade, follow these steps:

- Shut down any VMs that are using NVIDIA vGPU.

- Install the new package by using the -U option of the rpm command to upgrade from the previously installed package:

[root@xenserver ~]# rpm -Uv NVIDIA-vGPU-xenserver-7.0-390.113.x86_64.rpm
Preparing packages for installation...
NVIDIA-vGPU-xenserver-7.0-390.113
[root@xenserver ~]#

Note: You can query the version of the currently installed NVIDIA Virtual GPU Manager package by using the rpm -q command:

[root@xenserver ~]# rpm -q NVIDIA-vGPU-xenserver-7.0-390.113
[root@xenserver ~]#

If an existing NVIDIA GRID package is already installed and you don't select the upgrade (-U) option when installing a newer GRID package, the rpm command returns many conflict errors:

Preparing packages for installation...
file /usr/bin/nvidia-smi from install of NVIDIA-vGPU-xenserver-7.0-390.113.x86_64 conflicts with file from package NVIDIA-vGPU-xenserver-7.0-390.94.x86_64
file /usr/lib/libnvidia-ml.so from install of NVIDIA-vGPU-xenserver-7.0-390.113.x86_64 conflicts with file from package NVIDIA-vGPU-xenserver-7.0-390.94.x86_64
...

- Reboot the XenServer platform:

[root@xenserver ~]# shutdown -r now
Broadcast message from root (pts/1) (Fri Oct 12 14:24:11 2020):
The system is going down for reboot NOW!
[root@xenserver ~]#
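Because installing a newer package without -U produces conflict errors, a script can choose the rpm option by first checking whether the package is already installed. The following is a minimal POSIX shell sketch; the function name choose_rpm_flag is hypothetical, and it assumes that the installed package is named NVIDIA-vGPU-xenserver, as in the examples above.

```shell
#!/bin/sh
# Sketch: pick -iv (fresh install) or -Uv (upgrade) for the vGPU Manager RPM.
# Assumes the installed package name is NVIDIA-vGPU-xenserver (as in the
# examples above); adjust the name for other platforms.

# Print the rpm option to use for the package whose base name is $1.
choose_rpm_flag() {
    if rpm -q "$1" >/dev/null 2>&1; then
        printf '%s\n' "-Uv"   # a package with this name is installed: upgrade it
    else
        printf '%s\n' "-iv"   # nothing installed yet: plain install
    fi
}

# Example usage in dom0:
#   flag=$(choose_rpm_flag NVIDIA-vGPU-xenserver)
#   rpm "$flag" NVIDIA-vGPU-xenserver-7.0-390.113.x86_64.rpm
```

The function only inspects the RPM database. You still need to shut down VMs that use vGPU before an upgrade and reboot afterward, as described in the steps above.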

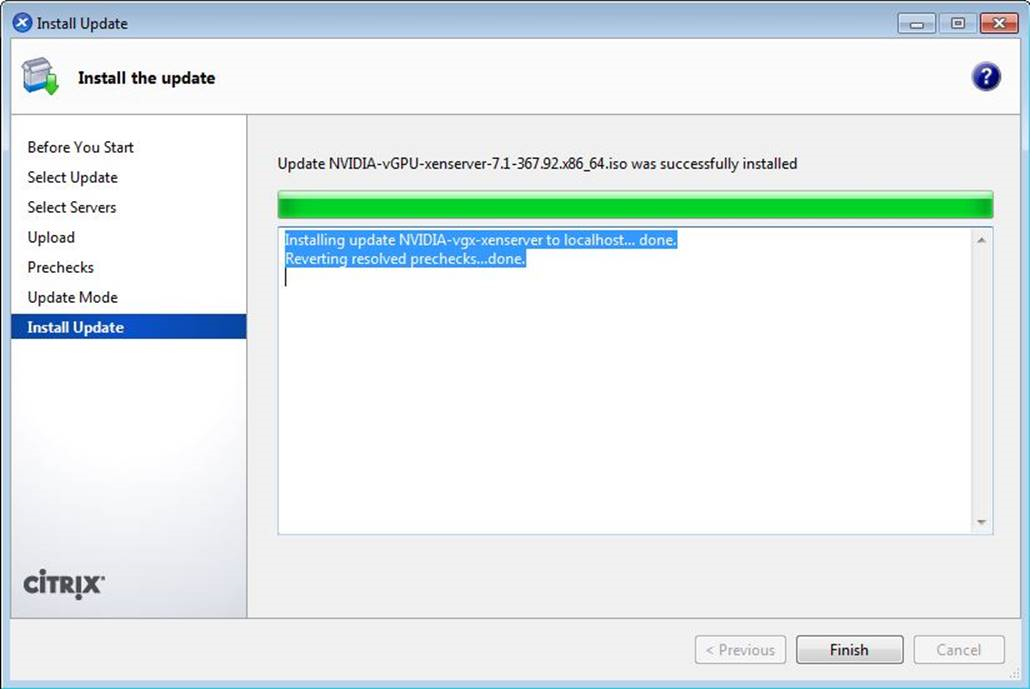

2.3.1.3. Installing or Updating the Supplemental Pack for XenServer

XenCenter can be used to install or update Supplemental Packs on XenServer hosts. The NVIDIA Virtual GPU Manager supplemental pack is provided as an ISO.

- Select Install Update from the Tools menu.

- Read the instructions in the Before You Start section and click Next.

- Click Select update or supplemental pack from disk on the Select Update section and open NVIDIA’s XenServer Supplemental Pack ISO.

Figure 4. NVIDIA vGPU Manager supplemental pack selected in XenCenter

- Click Next on the Select Update section.

- In the Select Servers section, select all the XenServer hosts on which the Supplemental Pack should be installed and click Next.

- Click Next on the Upload section once the Supplemental Pack has been uploaded to all the XenServer hosts.

- Click Next on the Prechecks section.

- Click Install Update on the Update Mode section.

- Click Finish on the Install Update section.

Figure 5. Successful installation of NVIDIA vGPU Manager supplemental pack

2.3.1.4. Verifying the Installation of the NVIDIA vGPU Software for XenServer Package

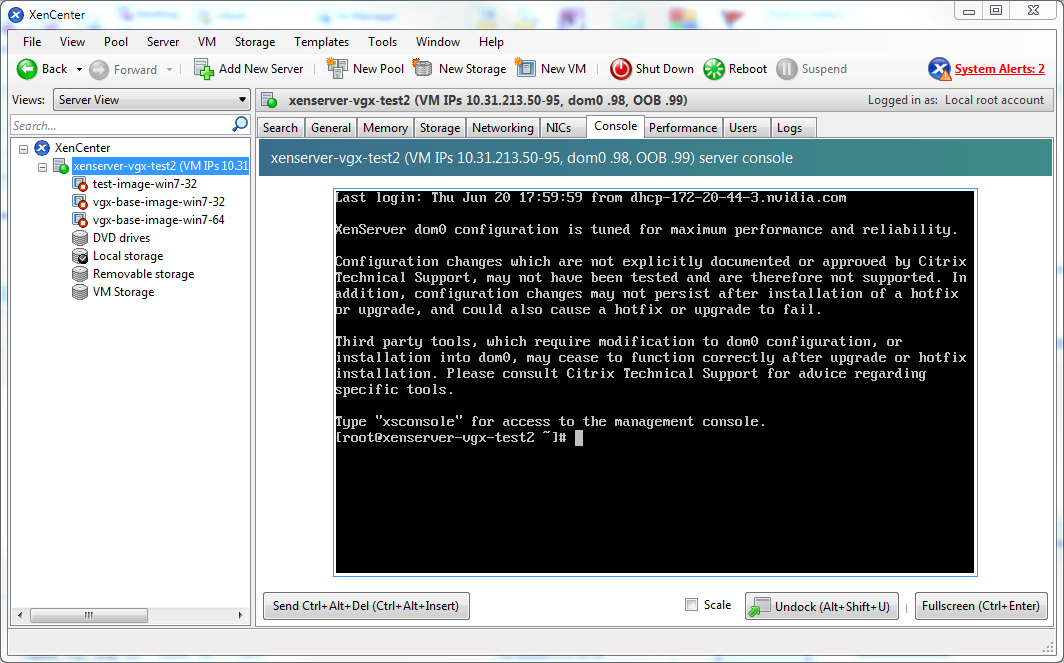

After the XenServer platform has rebooted, verify the installation of the NVIDIA vGPU software package for XenServer.

- Verify that the NVIDIA vGPU software package is installed and loaded correctly by checking for the NVIDIA kernel driver in the list of kernel loaded modules.

[root@xenserver ~]# lsmod | grep nvidia
nvidia 9522927 0
i2c_core 20294 2 nvidia,i2c_i801
[root@xenserver ~]#

- Verify that the NVIDIA kernel driver can successfully communicate with the NVIDIA physical GPUs in your system by running the nvidia-smi command. The nvidia-smi command is described in more detail in NVIDIA System Management Interface nvidia-smi.

Running the nvidia-smi command should produce a listing of the GPUs in your platform.

[root@xenserver ~]# nvidia-smi
Fri Oct 12 18:46:50 2020
+-----------------------------------------------------------------------------+
| NVIDIA-SMI 390.113                Driver Version: 390.113                   |
|-------------------------------+----------------------+----------------------+
| GPU  Name        Persistence-M| Bus-Id        Disp.A | Volatile Uncorr. ECC |
| Fan  Temp  Perf  Pwr:Usage/Cap|         Memory-Usage | GPU-Util  Compute M. |
|===============================+======================+======================|
|   0  Tesla M60           On   | 00000000:05:00.0 Off |                  Off |
| N/A   25C    P8    24W / 150W |     13MiB /  8191MiB |      0%      Default |
+-------------------------------+----------------------+----------------------+
|   1  Tesla M60           On   | 00000000:06:00.0 Off |                  Off |
| N/A   24C    P8    24W / 150W |     13MiB /  8191MiB |      0%      Default |
+-------------------------------+----------------------+----------------------+
|   2  Tesla M60           On   | 00000000:86:00.0 Off |                  Off |
| N/A   25C    P8    25W / 150W |     13MiB /  8191MiB |      0%      Default |
+-------------------------------+----------------------+----------------------+
|   3  Tesla M60           On   | 00000000:87:00.0 Off |                  Off |
| N/A   28C    P8    24W / 150W |     13MiB /  8191MiB |      0%      Default |
+-------------------------------+----------------------+----------------------+

+-----------------------------------------------------------------------------+
| Processes:                                                       GPU Memory |
|  GPU       PID   Type   Process name                             Usage      |
|=============================================================================|
|  No running processes found                                                 |
+-----------------------------------------------------------------------------+
[root@xenserver ~]#

If nvidia-smi fails to run or doesn’t produce the expected output for all the NVIDIA GPUs in your system, see Troubleshooting for troubleshooting steps.
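Checking the table by eye becomes tedious on hosts with many GPUs. As a hedged sketch, the shorter listing printed by nvidia-smi -L (one line per GPU, beginning with "GPU <index>:") can be counted and compared with the number of GPUs that you installed. The helper names count_listed_gpus and expect_gpu_count are hypothetical.

```shell
#!/bin/sh
# Sketch: count the GPUs that nvidia-smi reports and compare the count with
# the number you expect. Input is the output of "nvidia-smi -L", which prints
# one line per GPU beginning with "GPU <index>:".

# Print the number of GPU lines read from stdin.
count_listed_gpus() {
    grep -c '^GPU [0-9][0-9]*:'
}

# Return non-zero (with a message on stderr) if the number of GPUs read from
# stdin differs from the expected count given as $1.
expect_gpu_count() {
    found=$(count_listed_gpus)
    if [ "$found" -ne "$1" ]; then
        echo "expected $1 GPUs, nvidia-smi listed $found" >&2
        return 1
    fi
}

# Example (the sample output above shows four GPUs):
#   nvidia-smi -L | expect_gpu_count 4
```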

2.3.2. 6.0 Only: Configuring vGPU Migration with XenMotion for Citrix XenServer

Since 6.1: vGPU migration is enabled by default and this task is not required.

NVIDIA vGPU software supports XenMotion for VMs that are configured with vGPU. XenMotion enables you to move a running VM from one physical host machine to another host with very little disruption or downtime and no loss of data. For a VM that is configured with vGPU, the vGPU is migrated with the VM to an NVIDIA GPU on the other host. The NVIDIA GPUs on both host machines must be of the same type.

For details about which Citrix XenServer versions, NVIDIA GPUs, and guest OS releases support XenMotion with vGPU, see Virtual GPU Software for Citrix XenServer Release Notes.

Perform this task in the XenServer dom0 shell on each physical host machine for which you want to configure vGPU migration.

Before configuring vGPU migration for a host, ensure that the current NVIDIA Virtual GPU Manager for XenServer package is installed on the host.

For best performance, follow these guidelines:

- Use shared storage, such as NFS, iSCSI, or Fibre Channel.

If shared storage is not used, migration can take a very long time because the virtual disk (vDISK) must also be migrated.

- Use 10 Gb networking.

- Set the registry key that is required to enable vGPU migration by adding the following entry to the /etc/modprobe.d/nvidia.conf file.

options nvidia NVreg_RegistryDwords="RMEnableVgpuMigration=1"

- Reboot the XenServer platform.

[root@xenserver ~]# shutdown -r now

If you want to unset the registry entry that is required to enable vGPU migration, prefix the line in /etc/modprobe.d/nvidia.conf with the comment character #. Then reboot the XenServer platform.
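If you manage several hosts, editing the file by hand is error-prone. The following sketch adds the migration line, comments it out, or restores it. The function name set_vgpu_migration is hypothetical, and the sketch assumes that the line appears exactly as shown above; it does not merge the setting with any other NVreg_RegistryDwords options that might already be in the file.

```shell
#!/bin/sh
# Sketch: enable or disable the vGPU migration registry key in a modprobe
# configuration file. Reboot XenServer afterwards for the change to apply.
MIGRATION_LINE='options nvidia NVreg_RegistryDwords="RMEnableVgpuMigration=1"'

# Usage: set_vgpu_migration on|off [conf-file]
set_vgpu_migration() {
    conf=${2:-/etc/modprobe.d/nvidia.conf}
    touch "$conf" || return 1
    tmp="$conf.tmp.$$"
    # Copy the file without any existing copy of the line, commented or not.
    grep -v -F -x -e "$MIGRATION_LINE" -e "#$MIGRATION_LINE" "$conf" > "$tmp"
    case "$1" in
        on)  printf '%s\n' "$MIGRATION_LINE" >> "$tmp" ;;
        off) printf '#%s\n' "$MIGRATION_LINE" >> "$tmp" ;;
        *)   rm -f "$tmp"
             echo "usage: set_vgpu_migration on|off [conf-file]" >&2
             return 2 ;;
    esac
    # Replace the original file with the edited copy.
    mv "$tmp" "$conf"
}
```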

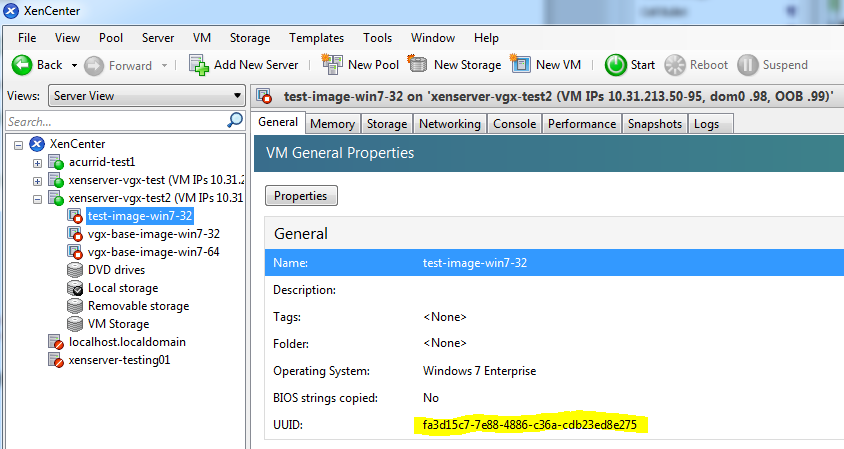

2.3.3. Configuring a Citrix XenServer VM with Virtual GPU

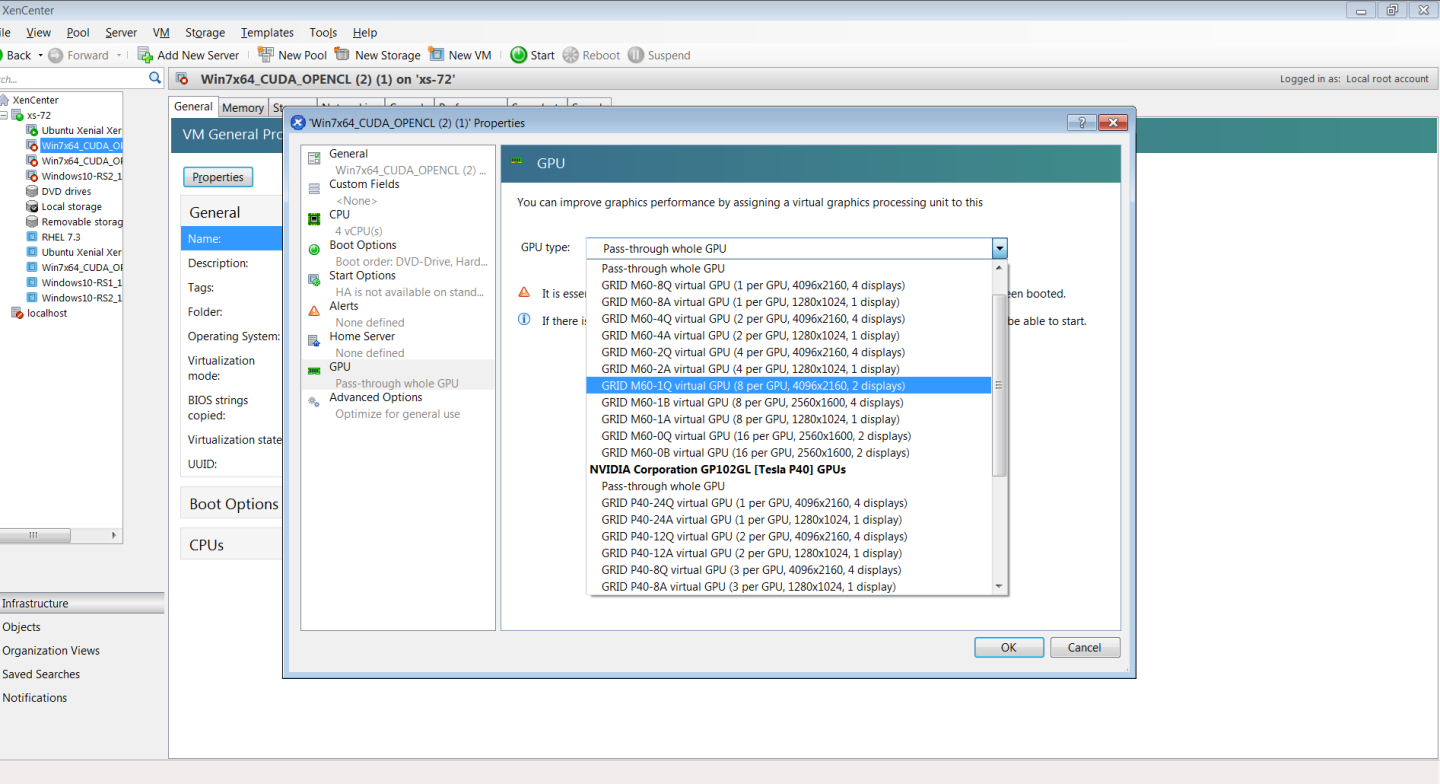

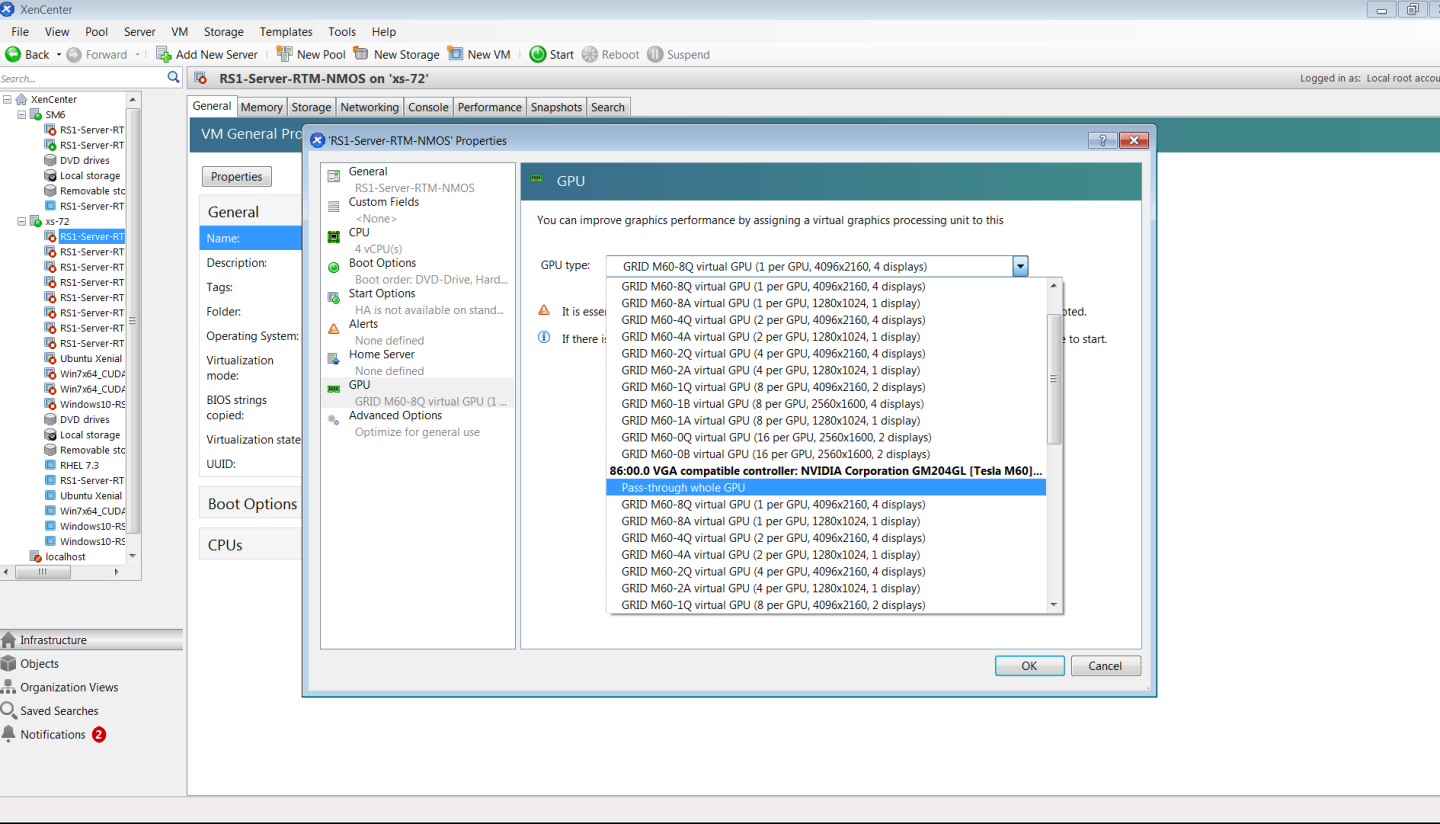

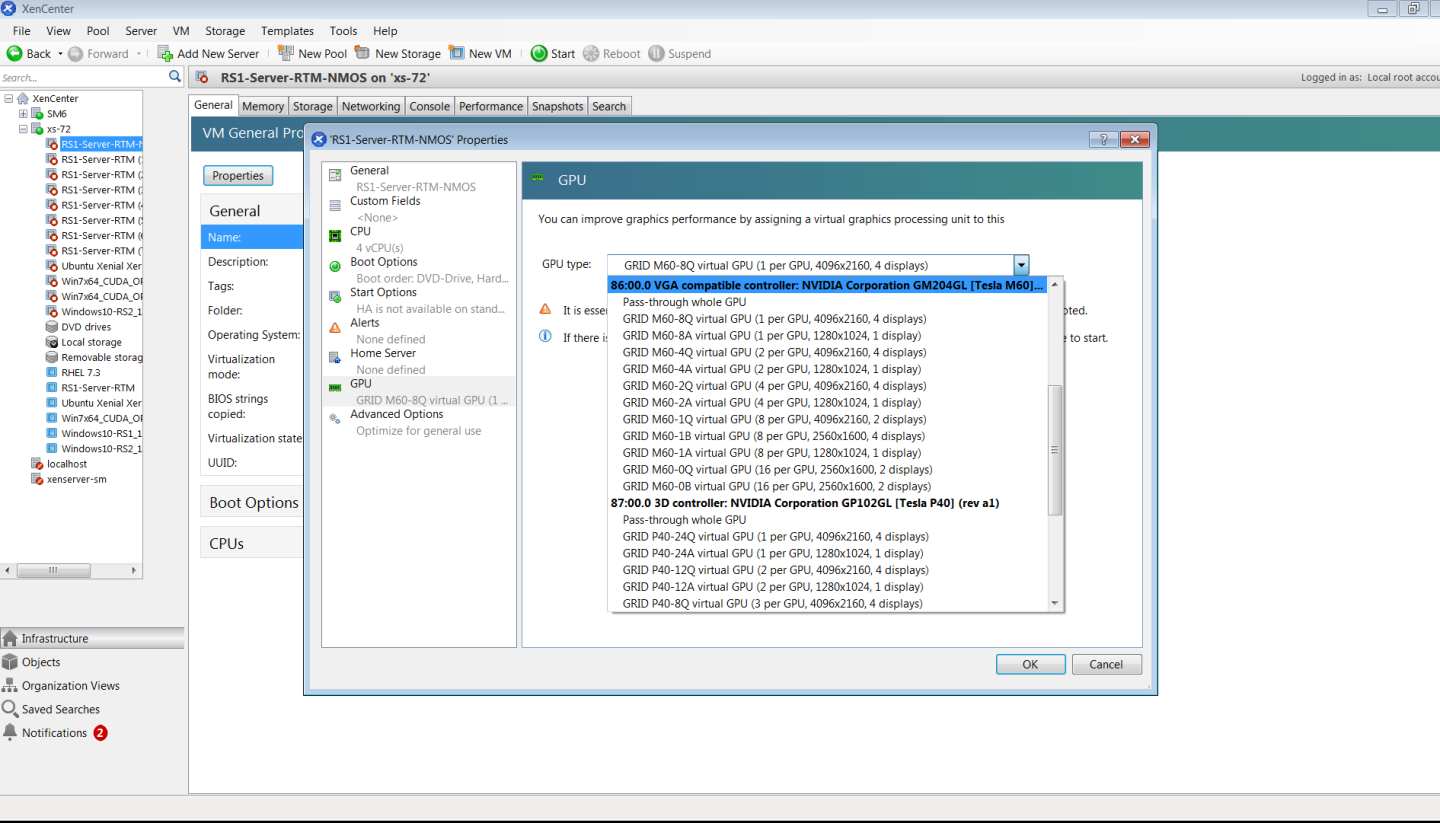

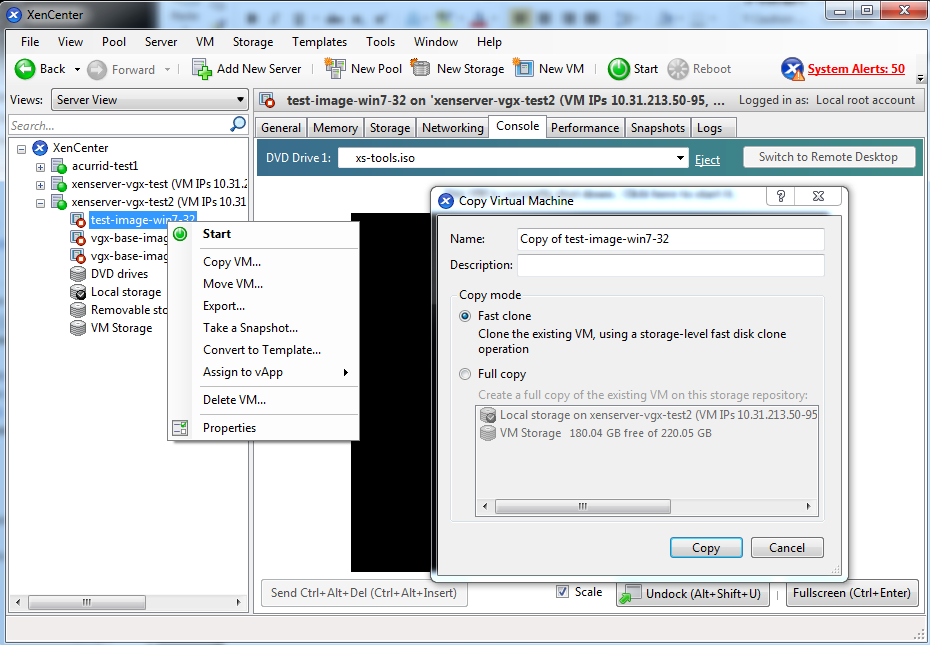

XenServer supports configuration and management of virtual GPUs using XenCenter, or the xe command line tool that is run in a XenServer dom0 shell. Basic configuration using XenCenter is described in the following sections. Command line management using xe is described in XenServer vGPU Management.

- Ensure the VM is powered off.

- Right-click the VM in XenCenter, select Properties to open the VM’s properties, and select the GPU property. The available GPU types are listed in the GPU type drop-down list:

Figure 6. Using Citrix XenCenter to configure a VM with a vGPU

After you have configured a XenServer VM with a vGPU, start the VM, either from XenCenter or by using xe vm-start in a dom0 shell. You can view the VM’s console in XenCenter.

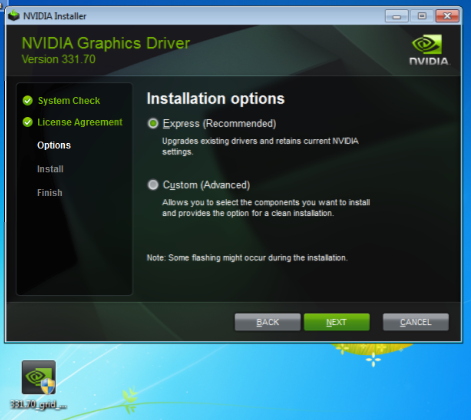

After the VM has booted, install the NVIDIA vGPU software graphics driver as explained in Installing the NVIDIA vGPU Software Graphics Driver.

2.4. Installing the Virtual GPU Manager Package for Linux KVM

NVIDIA vGPU software for Linux Kernel-based Virtual Machine (KVM) (Linux KVM) is intended only for use with supported versions of Linux KVM hypervisors. For details about which Linux KVM hypervisor versions are supported, see Virtual GPU Software for Generic Linux with KVM Release Notes.

If you are using Red Hat Enterprise Linux KVM, follow the instructions in Since 6.1: Installing and Configuring the NVIDIA Virtual GPU Manager for Red Hat Enterprise Linux KVM or RHV.

Before installing the Virtual GPU Manager package for Linux KVM, ensure that the following prerequisites are met:

- The following packages are installed on the Linux KVM server:

  - The x86_64 build of the GNU Compiler Collection (GCC)

  - Linux kernel headers

- The package file is copied to a directory in the file system of the Linux KVM server.

If the Nouveau driver for NVIDIA graphics cards is present, disable it before installing the package.
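One common way to disable Nouveau, assumed here rather than prescribed by this guide, is a modprobe blacklist file followed by an initramfs rebuild and a reboot. In this sketch, the file name blacklist-nouveau.conf and the helper names are assumptions; on RHEL-family systems the initramfs is typically rebuilt with dracut.

```shell
#!/bin/sh
# Sketch: detect Nouveau and write a modprobe blacklist for it.
# The file name is an assumption; check your distribution's conventions.
NOUVEAU_CONF=${NOUVEAU_CONF:-/etc/modprobe.d/blacklist-nouveau.conf}

# Succeeds if a module named exactly "nouveau" appears in lsmod output on stdin.
nouveau_loaded() {
    awk '$1 == "nouveau" { found = 1 } END { exit !found }'
}

# Write the blacklist file (run as root).
write_nouveau_blacklist() {
    printf '%s\n' 'blacklist nouveau' 'options nouveau modeset=0' > "$NOUVEAU_CONF"
}

# Example usage:
#   if lsmod | nouveau_loaded; then
#       write_nouveau_blacklist
#       dracut --force        # rebuild the initramfs (RHEL-family systems)
#       systemctl reboot
#   fi
```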

- Change to the directory on the Linux KVM server that contains the package file.

# cd package-file-directory

- package-file-directory

- The path to the directory that contains the package file.

- Make the package file executable.

# chmod +x package-file-name

- package-file-name

- The name of the file that contains the Virtual GPU Manager package for Linux KVM, for example NVIDIA-Linux-x86_64-390.42-vgpu-kvm.run.

- Run the package file as the root user.

# sh ./package-file-name

- Accept the license agreement to continue with the installation.

- When installation has completed, select OK to exit the installer.

- Reboot the Linux KVM server.

# systemctl reboot

2.5. Since 6.1: Installing and Configuring the NVIDIA Virtual GPU Manager for Red Hat Enterprise Linux KVM or RHV

The following topics step you through the process of setting up a single Red Hat Enterprise Linux Kernel-based Virtual Machine (KVM) or Red Hat Virtualization (RHV) VM to use NVIDIA vGPU.

Red Hat Enterprise Linux KVM and RHV use the same Virtual GPU Manager package, but are configured with NVIDIA vGPU in different ways.

For RHV, follow this sequence of instructions:

- Installing the NVIDIA Virtual GPU Manager for Red Hat Enterprise Linux KVM or RHV

- Adding a vGPU to a Red Hat Virtualization (RHV) VM

For Red Hat Enterprise Linux KVM, follow this sequence of instructions:

- Installing the NVIDIA Virtual GPU Manager for Red Hat Enterprise Linux KVM or RHV

- Getting the BDF and Domain of a GPU on Red Hat Enterprise Linux KVM

- Creating an NVIDIA vGPU on Red Hat Enterprise Linux KVM

- Adding a vGPU to a Red Hat Enterprise Linux KVM VM

- Setting vGPU Plugin Parameters on Red Hat Enterprise Linux KVM

After the process is complete, you can install the graphics driver for your guest OS and license any NVIDIA vGPU software licensed products that you are using.

If you are using a generic Linux KVM hypervisor, follow the instructions in Installing the Virtual GPU Manager Package for Linux KVM.

2.5.1. Installing the NVIDIA Virtual GPU Manager for Red Hat Enterprise Linux KVM or RHV

The NVIDIA Virtual GPU Manager for Red Hat Enterprise Linux KVM and Red Hat Virtualization (RHV) is provided as a .rpm file.

NVIDIA Virtual GPU Manager and Guest VM drivers must be matched from the same main driver branch. If you update vGPU Manager to a release from another driver branch, guest VMs will boot with vGPU disabled until their guest vGPU driver is updated to match the vGPU Manager version. Consult Virtual GPU Software for Red Hat Enterprise Linux with KVM Release Notes for further details.

2.5.1.1. Installing the Virtual GPU Manager Package for Red Hat Enterprise Linux KVM or RHV

Before installing the RPM package for Red Hat Enterprise Linux KVM or RHV, ensure that the sshd service on the Red Hat Enterprise Linux KVM or RHV server is configured to permit root login. If the Nouveau driver for NVIDIA graphics cards is present, disable it before installing the package. For instructions, see How to disable the Nouveau driver and install the Nvidia driver in RHEL 7 (Red Hat subscription required).

- Securely copy the RPM file from the system where you downloaded the file to the Red Hat Enterprise Linux KVM or RHV server.

- From a Windows system, use a secure copy client such as WinSCP.

- From a Linux system, use the scp command.

- Use secure shell (SSH) to log in as root to the Red Hat Enterprise Linux KVM or RHV server.

# ssh root@kvm-server

- kvm-server

- The host name or IP address of the Red Hat Enterprise Linux KVM or RHV server.

- Change to the directory on the Red Hat Enterprise Linux KVM or RHV server to which you copied the RPM file.

# cd rpm-file-directory

- rpm-file-directory

- The path to the directory to which you copied the RPM file.

- Use the rpm command to install the package.

# rpm -iv NVIDIA-vGPU-rhel-7.5-390.113.x86_64.rpm
Preparing packages for installation...
NVIDIA-vGPU-rhel-7.5-390.113
#

- Reboot the Red Hat Enterprise Linux KVM or RHV server.

# systemctl reboot

2.5.1.2. Verifying the Installation of the NVIDIA vGPU Software for Red Hat Enterprise Linux KVM or RHV

After the Red Hat Enterprise Linux KVM or RHV server has rebooted, verify the installation of the NVIDIA vGPU software package for Red Hat Enterprise Linux KVM or RHV.

- Verify that the NVIDIA vGPU software package is installed and loaded correctly by checking for the VFIO drivers in the list of kernel loaded modules.

# lsmod | grep vfio
nvidia_vgpu_vfio 27099 0
nvidia 12316924 1 nvidia_vgpu_vfio
vfio_mdev 12841 0
mdev 20414 2 vfio_mdev,nvidia_vgpu_vfio
vfio_iommu_type1 22342 0
vfio 32331 3 vfio_mdev,nvidia_vgpu_vfio,vfio_iommu_type1
#

- Verify that the libvirtd service is active and running.

# service libvirtd status

- Verify that the NVIDIA kernel driver can successfully communicate with the NVIDIA physical GPUs in your system by running the nvidia-smi command. The nvidia-smi command is described in more detail in NVIDIA System Management Interface nvidia-smi.

Running the nvidia-smi command should produce a listing of the GPUs in your platform.

# nvidia-smi
Fri Oct 12 18:46:50 2020
+-----------------------------------------------------------------------------+
| NVIDIA-SMI 390.113                Driver Version: 390.113                   |
|-------------------------------+----------------------+----------------------+
| GPU  Name        Persistence-M| Bus-Id        Disp.A | Volatile Uncorr. ECC |
| Fan  Temp  Perf  Pwr:Usage/Cap|         Memory-Usage | GPU-Util  Compute M. |
|===============================+======================+======================|
|   0  Tesla M60           On   | 0000:85:00.0     Off |                  Off |
| N/A   23C    P8    23W / 150W |     13MiB /  8191MiB |      0%      Default |
+-------------------------------+----------------------+----------------------+
|   1  Tesla M60           On   | 0000:86:00.0     Off |                  Off |
| N/A   29C    P8    23W / 150W |     13MiB /  8191MiB |      0%      Default |
+-------------------------------+----------------------+----------------------+
|   2  Tesla P40           On   | 0000:87:00.0     Off |                  Off |
| N/A   21C    P8    18W / 250W |     53MiB / 24575MiB |      0%      Default |
+-------------------------------+----------------------+----------------------+

+-----------------------------------------------------------------------------+
| Processes:                                                       GPU Memory |
|  GPU       PID   Type   Process name                             Usage      |
|=============================================================================|
|  No running processes found                                                 |
+-----------------------------------------------------------------------------+
#

If nvidia-smi fails to run or doesn’t produce the expected output for all the NVIDIA GPUs in your system, see Troubleshooting for troubleshooting steps.
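The lsmod check in the first verification step can be scripted. This sketch, with the hypothetical names REQUIRED_MODULES and missing_vgpu_modules, reads the full lsmod output and reports any of the six modules from that example that are absent. Matching is on the exact module name, so nvidia is not satisfied by nvidia_vgpu_vfio. Module names can differ on other kernel versions, so treat the list as an assumption to adjust.

```shell
#!/bin/sh
# Sketch: report which vGPU-related kernel modules are missing.
# The list mirrors the lsmod example above; adjust it for your kernel.
REQUIRED_MODULES="nvidia nvidia_vgpu_vfio vfio vfio_iommu_type1 mdev vfio_mdev"

# Reads lsmod output on stdin, prints each missing module name, and returns
# non-zero if any module is missing.
missing_vgpu_modules() {
    # Module names are in the first column; skip the "Module" header line.
    loaded=$(awk '$1 != "Module" { print $1 }')
    rc=0
    for m in $REQUIRED_MODULES; do
        if ! printf '%s\n' "$loaded" | grep -qx "$m"; then
            printf '%s\n' "$m"
            rc=1
        fi
    done
    return $rc
}

# Example usage on the host:
#   lsmod | missing_vgpu_modules || echo "some vGPU modules are not loaded" >&2
```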

2.5.2. Adding a vGPU to a Red Hat Virtualization (RHV) VM

Ensure that the VM to which you want to add the vGPU is shut down.

- Determine the mediated device type (mdev_type) identifiers of the vGPU types available on the RHV host.

# vdsm-client Host hostdevListByCaps
...
"mdev": {
  "nvidia-155": {
    "name": "GRID M10-2B",
    "available_instances": "4"
  },
  "nvidia-36": {
    "name": "GRID M10-0Q",
    "available_instances": "16"
  },
...

This example shows the mdev_type identifiers of the following vGPU types:

  - For the GRID M10-2B vGPU type, the mdev_type identifier is nvidia-155.

  - For the GRID M10-0Q vGPU type, the mdev_type identifier is nvidia-36.

- Note the mdev_type identifier of the vGPU type that you want to add.

- Log in to the RHV Administration Portal.

- From the Main Navigation Menu, choose Compute > Virtual Machines > virtual-machine-name.

- virtual-machine-name

- The name of the virtual machine to which you want to add the vGPU.

- Click Edit.

- In the Edit Virtual Machine window that opens, click Show Advanced Options and in the list of options, select Custom Properties.

- From the drop-down list, select mdev_type.

- In the text field, type the mdev_type identifier of the vGPU type that you want to add and click OK.

After adding a vGPU to an RHV VM, start the VM.

After the VM has booted, install the NVIDIA vGPU software graphics driver as explained in Installing the NVIDIA vGPU Software Graphics Driver.

2.5.3. Getting the BDF and Domain of a GPU on Red Hat Enterprise Linux KVM

Sometimes when configuring a physical GPU for use with NVIDIA vGPU software, you must find out which directory in the sysfs file system represents the GPU. This directory is identified by the domain, bus, slot, and function of the GPU.

For more information about the directory in the sysfs file system that represents a physical GPU, see NVIDIA vGPU Information in the sysfs File System.

- Obtain the PCI device bus/device/function (BDF) of the physical GPU.

# lspci | grep NVIDIA

The NVIDIA GPUs listed in this example have the PCI device BDFs 06:00.0 and 07:00.0.

# lspci | grep NVIDIA
06:00.0 VGA compatible controller: NVIDIA Corporation GM204GL [Tesla M10] (rev a1)
07:00.0 VGA compatible controller: NVIDIA Corporation GM204GL [Tesla M10] (rev a1)

- Obtain the full identifier of the GPU from its PCI device BDF.

# virsh nodedev-list --cap pci| grep transformed-bdf

- transformed-bdf

- The PCI device BDF of the GPU with the colon and the period replaced with underscores, for example, 06_00_0.

This example obtains the full identifier of the GPU with the PCI device BDF 06:00.0.

# virsh nodedev-list --cap pci| grep 06_00_0
pci_0000_06_00_0

- Obtain the domain, bus, slot, and function of the GPU from the full identifier of the GPU.

# virsh nodedev-dumpxml full-identifier| egrep 'domain|bus|slot|function'

- full-identifier

- The full identifier of the GPU that you obtained in the previous step, for example, pci_0000_06_00_0.

This example obtains the domain, bus, slot, and function of the GPU with the PCI device BDF 06:00.0.

# virsh nodedev-dumpxml pci_0000_06_00_0| egrep 'domain|bus|slot|function'
<domain>0x0000</domain>
<bus>0x06</bus>
<slot>0x00</slot>
<function>0x0</function>
<address domain='0x0000' bus='0x06' slot='0x00' function='0x0'/>
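The conversion between a PCI address and the virsh node device name is mechanical, so it can be scripted. A minimal sketch with hypothetical function names, assuming domain 0000 when only a BDF such as 06:00.0 is given:

```shell
#!/bin/sh
# Sketch: convert between domain:bus:slot.function addresses and the
# pci_DDDD_BB_SS_F names listed by "virsh nodedev-list --cap pci".

# 06:00.0 or 0000:06:00.0 -> pci_0000_06_00_0
bdf_to_nodedev() {
    addr=$1
    case "$addr" in
        *:*:*) ;;                # already includes a domain
        *) addr="0000:$addr" ;;  # assume domain 0000
    esac
    # Replace every colon and period with an underscore.
    printf 'pci_%s\n' "$addr" | tr ':.' '__'
}

# pci_0000_06_00_0 -> 0000:06:00.0
nodedev_to_address() {
    printf '%s\n' "${1#pci_}" |
        sed 's/^\([^_]*\)_\([^_]*\)_\([^_]*\)_\([^_]*\)$/\1:\2:\3.\4/'
}

# Example usage:
#   virsh nodedev-dumpxml "$(bdf_to_nodedev 06:00.0)" | egrep 'domain|bus|slot|function'
```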

2.5.4. Creating an NVIDIA vGPU on Red Hat Enterprise Linux KVM

For each vGPU that you want to create, perform this task in a Linux command shell on the Red Hat Enterprise Linux KVM host.

The mdev device file that you create to represent the vGPU does not persist when the host is rebooted and must be re-created after the host is rebooted. If necessary, you can use standard features of the operating system to automate the re-creation of this device file when the host is booted, for example, by writing a custom script that is executed when the host is rebooted.

Before you begin, ensure that you have the domain, bus, slot, and function of the GPU on which you are creating the vGPU. For instructions, see Getting the BDF and Domain of a GPU on Red Hat Enterprise Linux KVM.

- Change to the mdev_supported_types directory for the physical GPU.

# cd /sys/class/mdev_bus/domain\:bus\:slot.function/mdev_supported_types/

- domain

- bus

- slot

- function

- The domain, bus, slot, and function of the GPU, without the 0x prefix.

This example changes to the mdev_supported_types directory for the GPU with the domain 0000 and PCI device BDF 06:00.0.

# cd /sys/bus/pci/devices/0000\:06\:00.0/mdev_supported_types/

- Find out which subdirectory of mdev_supported_types contains registration information for the vGPU type that you want to create.

# grep -l "vgpu-type" nvidia-*/name

- vgpu-type

- The vGPU type, for example, M10-2Q.

This example shows that the registration information for the M10-2Q vGPU type is contained in the nvidia-41 subdirectory of mdev_supported_types.

# grep -l "M10-2Q" nvidia-*/name
nvidia-41/name

- Confirm that you can create an instance of the vGPU type on the physical GPU.

# cat subdirectory/available_instances

- subdirectory

- The subdirectory that you found in the previous step, for example, nvidia-41.

The number of available instances must be at least 1. If the number is 0, either an instance of another vGPU type already exists on the physical GPU, or the maximum number of allowed instances has already been created.

This example shows that four more instances of the M10-2Q vGPU type can be created on the physical GPU.

# cat nvidia-41/available_instances
4

- Generate a correctly formatted universally unique identifier (UUID) for the vGPU.

# uuidgen
aa618089-8b16-4d01-a136-25a0f3c73123

- Write the UUID that you obtained in the previous step to the create file in the registration information directory for the vGPU type that you want to create.

# echo "uuid" > subdirectory/create

- uuid

- The UUID that you generated in the previous step, which will become the UUID of the vGPU that you want to create.

- subdirectory

- The registration information directory for the vGPU type that you want to create, for example, nvidia-41.

This example creates an instance of the M10-2Q vGPU type with the UUID aa618089-8b16-4d01-a136-25a0f3c73123.

# echo "aa618089-8b16-4d01-a136-25a0f3c73123" > nvidia-41/create

An mdev device file for the vGPU is added to the parent physical device directory of the vGPU. The vGPU is identified by its UUID.

The /sys/bus/mdev/devices/ directory contains a symbolic link to the mdev device file.

- Confirm that the vGPU was created.

# ls -l /sys/bus/mdev/devices/
total 0
lrwxrwxrwx. 1 root root 0 Nov 24 13:33 aa618089-8b16-4d01-a136-25a0f3c73123 -> ../../../devices/pci0000:00/0000:00:03.0/0000:03:00.0/0000:04:09.0/0000:06:00.0/aa618089-8b16-4d01-a136-25a0f3c73123
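The steps above can be combined into one function, sketched here under stated assumptions: the function name create_vgpu is hypothetical; the vGPU type is matched against each name file either exactly or as its last word (assuming a name file such as "GRID M10-2Q", by analogy with the RHV output earlier); and SYSFS_ROOT exists only so that the function can be tried against a scratch directory. Because the mdev device does not persist across reboots, a function like this could be called from a boot-time script.

```shell
#!/bin/sh
# Sketch: create one vGPU of a given type on a physical GPU.
# Usage: create_vgpu domain:bus:slot.function vgpu-type [uuid]
create_vgpu() {
    root=${SYSFS_ROOT:-/sys}
    types="$root/bus/pci/devices/$1/mdev_supported_types"
    [ -d "$types" ] || { echo "no mdev_supported_types for $1" >&2; return 1; }

    # Find the nvidia-* subdirectory whose name file matches the vGPU type.
    typedir=""
    for d in "$types"/nvidia-*; do
        [ -f "$d/name" ] || continue
        name=$(cat "$d/name")
        case "$name" in
            "$2"|*" $2") typedir=$d; break ;;
        esac
    done
    [ -n "$typedir" ] || { echo "vGPU type $2 not offered by $1" >&2; return 1; }

    # At least one instance of the type must still be available.
    if [ "$(cat "$typedir/available_instances")" -lt 1 ]; then
        echo "no available instances of $2 on $1" >&2
        return 1
    fi

    # Use the supplied UUID, or generate one with uuidgen.
    uuid=${3:-$(uuidgen)}
    echo "$uuid" > "$typedir/create" || return 1
    printf '%s\n' "$uuid"
}

# Example usage:
#   create_vgpu 0000:06:00.0 M10-2Q
```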

2.5.5. Adding a vGPU to a Red Hat Enterprise Linux KVM VM

Ensure that the following prerequisites are met:

- The VM to which you want to add the vGPU is shut down.

- The vGPU that you want to add has been created as explained in Creating an NVIDIA vGPU on Red Hat Enterprise Linux KVM.

You can add a vGPU to a Red Hat Enterprise Linux KVM VM by using any of the following tools:

- The virsh command

- The QEMU command line

After adding a vGPU to a Red Hat Enterprise Linux KVM VM, start the VM.

# virsh start vm-name

- vm-name

- The name of the VM that you added the vGPU to.

After the VM has booted, install the NVIDIA vGPU software graphics driver as explained in Installing the NVIDIA vGPU Software Graphics Driver.

2.5.5.1. Adding a vGPU to a Red Hat Enterprise Linux KVM VM by Using virsh

- In virsh, open for editing the XML file of the VM that you want to add the vGPU to.

# virsh edit vm-name

- vm-name

- The name of the VM that you want to add the vGPU to.

- Add a device entry in the form of an address element inside the source element to add the vGPU to the guest VM.

<devices>
...
  <hostdev mode='subsystem' type='mdev' model='vfio-pci'>
    <source>
      <address uuid='uuid'/>
    </source>
  </hostdev>
</devices>

- uuid

- The UUID that was assigned to the vGPU when the vGPU was created.

This example adds a device entry for the vGPU with the UUID aa618089-8b16-4d01-a136-25a0f3c73123.

<devices>
...
  <hostdev mode='subsystem' type='mdev' model='vfio-pci'>
    <source>
      <address uuid='aa618089-8b16-4d01-a136-25a0f3c73123'/>
    </source>
  </hostdev>
</devices>
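A mistyped UUID in the XML, such as one with a dropped leading character, surfaces only when the VM fails to start. The following hedged sketch validates the UUID format and prints the hostdev element; the function name vgpu_hostdev_xml is hypothetical. You can paste its output into virsh edit, or save it to a file and attach it with virsh attach-device vm-name file --config.

```shell
#!/bin/sh
# Sketch: print the <hostdev> element for a vGPU after checking that the
# argument is a UUID in 8-4-4-4-12 hexadecimal form.
vgpu_hostdev_xml() {
    if ! printf '%s\n' "$1" |
        grep -Eqx '[0-9a-fA-F]{8}(-[0-9a-fA-F]{4}){3}-[0-9a-fA-F]{12}'; then
        echo "not a valid UUID: $1" >&2
        return 1
    fi
    # Unquoted EOF so that $1 is expanded inside the here-document.
    cat <<EOF
<hostdev mode='subsystem' type='mdev' model='vfio-pci'>
  <source>
    <address uuid='$1'/>
  </source>
</hostdev>
EOF
}
```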

2.5.5.2. Adding a vGPU to a Red Hat Enterprise Linux KVM VM by Using the QEMU Command Line

Add the following options to the QEMU command line:

-device vfio-pci,sysfsdev=/sys/bus/mdev/devices/vgpu-uuid -uuid vm-uuid

- vgpu-uuid

- The UUID that was assigned to the vGPU when the vGPU was created.

- vm-uuid

- The UUID that was assigned to the VM when the VM was created.

This example adds the vGPU with the UUID aa618089-8b16-4d01-a136-25a0f3c73123 to the VM with the UUID ebb10a6e-7ac9-49aa-af92-f56bb8c65893.

-device vfio-pci,sysfsdev=/sys/bus/mdev/devices/aa618089-8b16-4d01-a136-25a0f3c73123 -uuid ebb10a6e-7ac9-49aa-af92-f56bb8c65893
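When QEMU is launched from a script, the two options can be generated, and the vGPU checked, before the VM starts. A minimal sketch; qemu_vgpu_args and SYSFS_ROOT are hypothetical, and the check only confirms that the vGPU exists in sysfs, not that another VM is not already using it.

```shell
#!/bin/sh
# Sketch: print the QEMU options that attach vGPU $1 to the VM with UUID $2.
# SYSFS_ROOT only changes where existence is checked; the emitted path is
# always under /sys.
qemu_vgpu_args() {
    root=${SYSFS_ROOT:-/sys}
    if [ ! -e "$root/bus/mdev/devices/$1" ]; then
        echo "vGPU $1 not found under $root/bus/mdev/devices" >&2
        return 1
    fi
    printf '%s\n' "-device vfio-pci,sysfsdev=/sys/bus/mdev/devices/$1 -uuid $2"
}
```

Expand the result unquoted so that the shell splits it into separate arguments, for example qemu-system-x86_64 ... $(qemu_vgpu_args vgpu-uuid vm-uuid).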

2.5.6. Setting vGPU Plugin Parameters on Red Hat Enterprise Linux KVM

Plugin parameters for a vGPU control the behavior of the vGPU, such as the frame rate limiter (FRL) configuration in frames per second or whether console virtual network computing (VNC) for the vGPU is enabled. The VM to which the vGPU is assigned is started with these parameters.

For each vGPU for which you want to set plugin parameters, perform this task in a Linux command shell on the Red Hat Enterprise Linux KVM host.

- Change to the nvidia subdirectory of the mdev device directory that represents the vGPU.

# cd /sys/bus/mdev/devices/uuid/nvidia

- uuid

- The UUID of the vGPU, for example, aa618089-8b16-4d01-a136-25a0f3c73123.

- Write the plugin parameters that you want to set to the vgpu_params file in the directory that you changed to in the previous step.

# echo "plugin-config-params" > vgpu_params

- plugin-config-params

- A comma-separated list of parameter-value pairs, where each pair is of the form parameter-name=value.

This example disables frame rate limiting and console VNC for a vGPU.

# echo "frame_rate_limiter=0, disable_vnc=1" > vgpu_params

To clear any vGPU plugin parameters that were set previously, write a space to the vgpu_params file for the vGPU.

# echo " " > vgpu_params
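A small wrapper keeps the comma-separated format consistent across hosts. This is a sketch only: set_vgpu_params and SYSFS_ROOT are hypothetical names, and the function joins the parameter-value pairs with commas, following the description of plugin-config-params above. Calling it with no pairs writes a single space, which clears previously set parameters.

```shell
#!/bin/sh
# Sketch: write plugin parameters for one vGPU.
# Usage: set_vgpu_params uuid [parameter-name=value ...]
set_vgpu_params() {
    params_file="${SYSFS_ROOT:-/sys}/bus/mdev/devices/$1/nvidia/vgpu_params"
    shift
    if [ $# -eq 0 ]; then
        params=" "                           # a space clears the parameters
    else
        params=$(IFS=,; printf '%s' "$*")    # join the pairs with commas
    fi
    printf '%s\n' "$params" > "$params_file"
}

# Example: disable frame rate limiting and console VNC.
#   set_vgpu_params aa618089-8b16-4d01-a136-25a0f3c73123 frame_rate_limiter=0 disable_vnc=1
```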

2.5.7. Deleting a vGPU on Red Hat Enterprise Linux KVM

For each vGPU that you want to delete, perform this task in a Linux command shell on the Red Hat Enterprise Linux KVM host.

Before you begin, ensure that the following prerequisites are met:

- You have the domain, bus, slot, and function of the GPU where the vGPU that you want to delete resides. For instructions, see Getting the BDF and Domain of a GPU on Red Hat Enterprise Linux KVM.

- The VM to which the vGPU is assigned is shut down.

- Change to the mdev_supported_types directory for the physical GPU.

# cd /sys/class/mdev_bus/domain\:bus\:slot.function/mdev_supported_types/

- domain

- bus

- slot

- function

- The domain, bus, slot, and function of the GPU, without the 0x prefix.

This example changes to the mdev_supported_types directory for the GPU with the PCI device BDF 06:00.0.

# cd /sys/bus/pci/devices/0000\:06\:00.0/mdev_supported_types/

- Change to the subdirectory of mdev_supported_types that contains registration information for the vGPU.

# cd `find . -type d -name uuid`

- uuid

- The UUID of the vGPU, for example, aa618089-8b16-4d01-a136-25a0f3c73123.

- Write the value 1 to the remove file in the registration information directory for the vGPU that you want to delete.

# echo "1" > remove

Note: On the Red Hat Virtualization (RHV) kernel, if you try to remove a vGPU device while its VM is running, the vGPU device might not be removed even if the remove file has been written to successfully. To confirm that the vGPU device has been removed, check that the UUID of the vGPU is no longer present in the sysfs file system.
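The following sketch writes to the remove file through the /sys/bus/mdev/devices/uuid link described in the creation steps, instead of locating the vGPU under mdev_supported_types with find; both paths are assumed to lead to the same mdev device. It then applies the check from the note: if the UUID is still present after the write, it reports failure. delete_vgpu and SYSFS_ROOT are hypothetical names.

```shell
#!/bin/sh
# Sketch: remove vGPU $1 and confirm that it is gone from sysfs.
delete_vgpu() {
    dev="${SYSFS_ROOT:-/sys}/bus/mdev/devices/$1"
    [ -e "$dev/remove" ] || { echo "vGPU $1 not found" >&2; return 1; }
    echo 1 > "$dev/remove" || return 1
    # The write can succeed without removing the device, so check again.
    if [ -e "$dev" ]; then
        echo "vGPU $1 is still present; is its VM still running?" >&2
        return 1
    fi
}

# Example usage (shut down the VM first):
#   delete_vgpu aa618089-8b16-4d01-a136-25a0f3c73123
```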

2.5.8. Preparing a GPU Configured for Pass-Through for Use with vGPU

The mode in which a physical GPU is being used determines the Linux kernel module to which the GPU is bound. If you want to switch the mode in which a GPU is being used, you must unbind the GPU from its current kernel module and bind it to the kernel module for the new mode. After binding the GPU to the correct kernel module, you can then configure it for vGPU.

A physical GPU that is passed through to a VM is bound to the vfio-pci kernel module. A physical GPU that is bound to the vfio-pci kernel module can be used only for pass-through. To enable the GPU to be used for vGPU, the GPU must be unbound from the vfio-pci kernel module and bound to the nvidia kernel module.

Before you begin, ensure that you have the domain, bus, slot, and function of the GPU that you are preparing for use with vGPU. For instructions, see Getting the BDF and Domain of a GPU on Red Hat Enterprise Linux KVM.

1. Determine the kernel module to which the GPU is bound by running the lspci command with the -k option on the NVIDIA GPUs on your host.

   # lspci -d 10de: -k

   The Kernel driver in use: field indicates the kernel module to which the GPU is bound.

   The following example shows that the NVIDIA Tesla M60 GPU with BDF 06:00.0 is bound to the vfio-pci kernel module and is being used for GPU pass-through.

   06:00.0 VGA compatible controller: NVIDIA Corporation GM204GL [Tesla M60] (rev a1)
       Subsystem: NVIDIA Corporation Device 115e
       Kernel driver in use: vfio-pci

2. Unbind the GPU from the vfio-pci kernel module.

   a. Change to the sysfs directory that represents the vfio-pci kernel module.

      # cd /sys/bus/pci/drivers/vfio-pci

   b. Write the domain, bus, slot, and function of the GPU to the unbind file in this directory.

      # echo domain:bus:slot.function > unbind

      In this command, domain, bus, slot, and function are the domain, bus, slot, and function of the GPU, without a 0x prefix.

      This example writes the domain, bus, slot, and function of the GPU with the domain 0000 and PCI device BDF 06:00.0.

      # echo 0000:06:00.0 > unbind

3. Bind the GPU to the nvidia kernel module.

   a. Change to the sysfs directory that contains the PCI device information for the physical GPU.

      # cd /sys/bus/pci/devices/domain\:bus\:slot.function

      In this command, domain, bus, slot, and function are the domain, bus, slot, and function of the GPU, without a 0x prefix.

      This example changes to the sysfs directory that contains the PCI device information for the GPU with the domain 0000 and PCI device BDF 06:00.0.

      # cd /sys/bus/pci/devices/0000\:06\:00.0

   b. Write the kernel module name nvidia to the driver_override file in this directory.

      # echo nvidia > driver_override

   c. Change to the sysfs directory that represents the nvidia kernel module.

      # cd /sys/bus/pci/drivers/nvidia

   d. Write the domain, bus, slot, and function of the GPU to the bind file in this directory.

      # echo domain:bus:slot.function > bind

      In this command, domain, bus, slot, and function are the domain, bus, slot, and function of the GPU, without a 0x prefix.

      This example writes the domain, bus, slot, and function of the GPU with the domain 0000 and PCI device BDF 06:00.0.

      # echo 0000:06:00.0 > bind
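The unbind, driver_override, and bind steps can be combined into one function. This is a minimal sketch under stated assumptions. The names rebind_to_nvidia and SYSFS_ROOT are hypothetical, and SYSFS_ROOT is a test override that stays empty on a real host. The function must run as root. It reads the device's driver symbolic link in sysfs to decide what to do, and it handles only a GPU that is currently bound to vfio-pci or is not bound to any driver.

```sh
#!/bin/sh
# Sketch: switch a GPU from vfio-pci (pass-through) to nvidia (vGPU) via sysfs.
# Hypothetical names: rebind_to_nvidia, SYSFS_ROOT (empty on a real host, or a
# mock directory for testing). Run as root with the GPU's VM shut down.

rebind_to_nvidia() {
    bdf="$1"                          # e.g. 0000:06:00.0, no 0x prefix
    pci="${SYSFS_ROOT}/sys/bus/pci"
    dev="$pci/devices/$bdf"

    if [ ! -d "$dev" ]; then
        echo "no PCI device $bdf" >&2
        return 1
    fi

    # The device's "driver" entry is a symbolic link to its current driver.
    current=""
    if [ -L "$dev/driver" ]; then
        current=$(basename "$(readlink "$dev/driver")")
    fi

    if [ "$current" = "nvidia" ]; then
        echo "$bdf is already bound to nvidia"
        return 0
    fi
    if [ -n "$current" ] && [ "$current" != "vfio-pci" ]; then
        echo "$bdf is bound to $current; this sketch only handles vfio-pci" >&2
        return 1
    fi

    # Step 2: unbind from vfio-pci (skipped if the GPU has no driver).
    if [ "$current" = "vfio-pci" ]; then
        echo "$bdf" > "$pci/drivers/vfio-pci/unbind" || return 1
    fi

    # Step 3: prefer the nvidia driver for this device, then bind it.
    echo nvidia > "$dev/driver_override" || return 1
    echo "$bdf" > "$pci/drivers/nvidia/bind"
}
```

For example, rebind_to_nvidia 0000:06:00.0 performs steps 2 and 3 for the Tesla M60 GPU in the example.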

You can now configure the GPU for vGPU as explained in Since 6.1: Installing and Configuring the NVIDIA Virtual GPU Manager for Red Hat Enterprise Linux KVM or RHV.
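Before you configure vGPU, you can confirm that the rebinding took effect by running lspci -d 10de: -k again and checking that the Kernel driver in use: field now shows nvidia. The following sketch extracts that field for one GPU. The function name driver_in_use is hypothetical. The sketch assumes the output layout shown in step 1: an unindented device line that starts with the short BDF, followed by indented detail lines.

```sh
#!/bin/sh
# Sketch: print the "Kernel driver in use" value for one GPU from `lspci -k`
# output read on standard input. Hypothetical name: driver_in_use.
# Assumes an unindented device line starting with the short BDF (as lspci
# prints it, e.g. 06:00.0), followed by indented detail lines.

driver_in_use() {
    awk -v bdf="$1" '
        # A new device block starts on every unindented line.
        /^[^ \t]/ { in_dev = ($1 == bdf) }
        in_dev && /Kernel driver in use:/ {
            sub(/.*Kernel driver in use:[ \t]*/, "")
            print
            exit
        }
    '
}
```

For example, lspci -d 10de: -k | driver_in_use 06:00.0 should print nvidia after the GPU has been bound to the nvidia kernel module. It prints nothing if the GPU has no driver.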

2.5.9. NVIDIA vGPU Information in the sysfs File System

Information about the NVIDIA vGPU types supported by each physical GPU in a Red Hat Enterprise Linux KVM host is stored in the sysfs file system.

All physical GPUs on the host are registered with the mdev kernel module. Information about the physical GPUs and the vGPU types that can be created on each physical GPU is stored in directories and files under the /sys/class/mdev_bus/ directory.

The sysfs directory for each physical GPU is at the following locations:

- /sys/bus/pci/devices/

- /sys/class/mdev_bus/

In both locations, the entry for each GPU is a symbolic link to the real directory for the PCI device in the sysfs file system.
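You can check this on a host by resolving both entries for a GPU and comparing the results. The following sketch does that with readlink -f. The names same_sysfs_dir and SYSFS_ROOT are hypothetical, and SYSFS_ROOT is a test override that stays empty on a real host. The /sys/class/mdev_bus/ entry exists only for GPUs that are registered with the mdev kernel module.

```sh
#!/bin/sh
# Sketch: confirm that a GPU's entries under /sys/bus/pci/devices/ and
# /sys/class/mdev_bus/ resolve to the same real sysfs directory.
# Hypothetical names: same_sysfs_dir, SYSFS_ROOT (test override; empty on a host).

same_sysfs_dir() {
    bdf="$1"                                   # e.g. 0000:06:00.0
    pci_entry="${SYSFS_ROOT}/sys/bus/pci/devices/$bdf"
    mdev_entry="${SYSFS_ROOT}/sys/class/mdev_bus/$bdf"

    # Both entries must exist; readlink -f alone would accept a missing last component.
    [ -d "$pci_entry" ] && [ -d "$mdev_entry" ] || return 1

    a=$(readlink -f "$pci_entry")
    b=$(readlink -f "$mdev_entry")
    [ "$a" = "$b" ] || return 1
    echo "$a"                                  # print the shared real directory
}
```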

The organization of the sysfs directory for each physical GPU is as follows:

/sys/class/mdev_bus/
|-parent-physical-device
  |-mdev_supported_types
    |-nvidia-vgputype-id
      |-available_instances
      |-create
      |-description
      |-device_api
      |-devices
      |-name

- parent-physical-device

  Each physical GPU on the host is represented by a subdirectory of the /sys/class/mdev_bus/ directory.

  The name of each subdirectory is as follows:

  domain\:bus\:slot.function

  Here, domain, bus, slot, and function are the domain, bus, slot, and function of the GPU, for example, 0000\:06\:00.0.

  Each directory is a symbolic link to the real directory for PCI devices in the sysfs file system. For example:

  # ll /sys/class/mdev_bus/
  total 0
  lrwxrwxrwx. 1 root root 0 Dec 12 03:20 0000:05:00.0 -> ../../devices/pci0000:00/0000:00:03.0/0000:03:00.0/0000:04:08.0/0000:05:00.0
  lrwxrwxrwx. 1 root root 0 Dec 12 03:20 0000:06:00.0 -> ../../devices/pci0000:00/0000:00:03.0/0000:03:00.0/0000:04:09.0/0000:06:00.0
  lrwxrwxrwx. 1 root root 0 Dec 12 03:20 0000:07:00.0 -> ../../devices/pci0000:00/0000:00:03.0/0000:03:00.0/0000:04:10.0/0000:07:00.0
  lrwxrwxrwx. 1 root root 0 Dec 12 03:20 0000:08:00.0 -> ../../devices/pci0000:00/0000:00:03.0/0000:03:00.0/0000:04:11.0/0000:08:00.0

- mdev_supported_types

  After the Virtual GPU Manager is installed on the host and the host has been rebooted, a directory named mdev_supported_types is created under the sysfs directory for each physical GPU. The mdev_supported_types directory contains a subdirectory for each vGPU type that the physical GPU supports. The name of each subdirectory is nvidia-vgputype-id, where vgputype-id is an unsigned integer serial number. For example:

  # ll mdev_supported_types/
  total 0
  drwxr-xr-x 3 root root 0 Dec 6 01:37 nvidia-35
  drwxr-xr-x 3 root root 0 Dec 5 10:43 nvidia-36
  drwxr-xr-x 3 root root 0 Dec 5 10:43 nvidia-37
  drwxr-xr-x 3 root root 0 Dec 5 10:43 nvidia-38
  drwxr-xr-x 3 root root 0 Dec 5 10:43 nvidia-39
  drwxr-xr-x 3 root root 0 Dec 5 10:43 nvidia-40
  drwxr-xr-x 3 root root 0 Dec 5 10:43 nvidia-41
  drwxr-xr-x 3 root root 0 Dec 5 10:43 nvidia-42
  drwxr-xr-x 3 root root 0 Dec 5 10:43 nvidia-43
  drwxr-xr-x 3 root root 0 Dec 5 10:43 nvidia-44
  drwxr-xr-x 3 root root 0 Dec 5 10:43 nvidia-45

- nvidia-vgputype-id

  Each directory represents an individual vGPU type and contains the following files and directories:

  - available_instances

    This file contains the number of instances of this vGPU type that can still be created. This file is updated any time a vGPU of this type is created on or removed from the physical GPU.

    Note: When a vGPU is created, the content of the available_instances file for all other vGPU types on the physical GPU is set to 0. This behavior enforces the requirement that all vGPUs on a physical GPU must be of the same type.

  - create

    This file is used for creating a vGPU instance. A vGPU instance is created by writing the UUID of the vGPU to this file. The file is write-only.

  - description

    This file contains the following details of the vGPU type:

    - The maximum number of virtual display heads that the vGPU type supports
    - The frame rate limiter (FRL) configuration in frames per second
    - The frame buffer size in Mbytes
    - The maximum resolution per display head
    - The maximum number of vGPU instances per physical GPU

    For example:

    # cat description
    num_heads=4, frl_config=60, framebuffer=2048M, max_resolution=4096x2160, max_instance=4

  - device_api

    This file contains the string vfio_pci to indicate that a vGPU is a PCI device.

  - devices

    This directory contains all the mdev devices that are created for the vGPU type. For example:

    # ll devices
    total 0
    lrwxrwxrwx 1 root root 0 Dec 6 01:52 aa618089-8b16-4d01-a136-25a0f3c73123 -> ../../../aa618089-8b16-4d01-a136-25a0f3c73123

  - name

    This file contains the name of the vGPU type. For example:

    # cat name
    GRID M10-2Q
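The name, available_instances, and create files are enough to inspect and use a physical GPU from a script. The following minimal sketch lists each vGPU type with its name and remaining instances, and creates a vGPU by writing a UUID to the create file of a chosen type. The names list_vgpu_types, create_vgpu, and SYSFS_ROOT are hypothetical, and SYSFS_ROOT is a test override that stays empty on a real host. The sketch assumes that uuidgen (from util-linux) is installed for the case where no UUID is supplied.

```sh
#!/bin/sh
# Sketch: list the vGPU types of one physical GPU and create a vGPU of a chosen
# type through the sysfs files described above.
# Hypothetical names: list_vgpu_types, create_vgpu, SYSFS_ROOT (test override;
# empty on a real host). uuidgen (util-linux) is used only if no UUID is given.

list_vgpu_types() {
    types="${SYSFS_ROOT}/sys/class/mdev_bus/$1/mdev_supported_types"
    for t in "$types"/nvidia-*; do
        [ -d "$t" ] || continue
        # type directory <TAB> vGPU type name <TAB> instances still available
        printf '%s\t%s\t%s\n' "$(basename "$t")" "$(cat "$t/name")" \
            "$(cat "$t/available_instances")"
    done
}

create_vgpu() {
    t="${SYSFS_ROOT}/sys/class/mdev_bus/$1/mdev_supported_types/$2"
    uuid="${3:-$(uuidgen)}"

    avail=$(cat "$t/available_instances" 2>/dev/null)
    case "$avail" in ''|*[!0-9]*) avail=0 ;; esac

    # After one vGPU exists, other types report 0 here, because all vGPUs on a
    # physical GPU must be of the same type.
    if [ "$avail" -eq 0 ]; then
        echo "no instances of $2 available on $1" >&2
        return 1
    fi

    echo "$uuid" > "$t/create" || return 1
    echo "$uuid"
}
```

For example, list_vgpu_types 0000:06:00.0 prints one line per vGPU type. create_vgpu 0000:06:00.0 nvidia-35 prints the UUID of the new vGPU, which should then appear in that type's devices directory.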

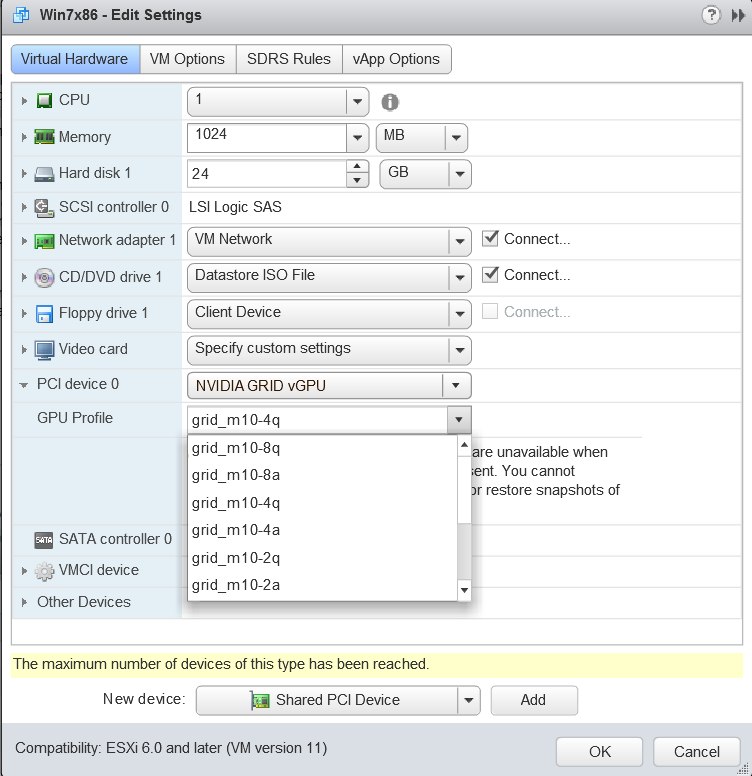

2.6. Installing and Configuring the NVIDIA Virtual GPU Manager for VMware vSphere

You can use the NVIDIA Virtual GPU Manager for VMware vSphere to set up a VMware vSphere VM to use NVIDIA vGPU or VMware vSGA. The vGPU Manager vSphere Installation Bundles (VIBs) for VMware vSphere 6.5 and later provide vSGA and vGPU functionality in a single VIB. For VMware vSphere 6.0, vSGA and vGPU functionality are provided in separate vGPU Manager VIBs.

For NVIDIA vGPU, follow this sequence of instructions:

- Installing and Updating the NVIDIA Virtual GPU Manager for vSphere

- 6.0 Only: Configuring Suspend and Resume for VMware vSphere

- Changing the Default Graphics Type in VMware vSphere 6.5 and Later

- Configuring a vSphere VM with NVIDIA vGPU

After configuring a vSphere VM to use NVIDIA vGPU, you can install the NVIDIA vGPU software graphics driver for your guest OS and license any NVIDIA vGPU software licensed products that you are using.

For VMware vSGA, follow this sequence of instructions:

- Installing and Updating the NVIDIA Virtual GPU Manager for vSphere

- Configuring a vSphere VM with VMware vSGA

Installation of the NVIDIA vGPU software graphics driver for the guest OS is not required for vSGA.

2.6.1. Installing and Updating the NVIDIA Virtual GPU Manager for vSphere

The NVIDIA Virtual GPU Manager runs on the ESXi host. It is provided in the following formats:

- As a VIB file, which must be copied to the ESXi host and then installed

- As an offline bundle that you can import manually as explained in Import Patches Manually in the VMware vSphere documentation

NVIDIA Virtual GPU Manager and guest VM drivers must be from the same main driver branch. If you update vGPU Manager to a release from another driver branch, guest VMs will boot with vGPU disabled until their guest vGPU driver is updated to match the vGPU Manager version. Consult Virtual GPU Software for VMware vSphere Release Notes for further details.

2.6.1.1. Installing the NVIDIA Virtual GPU Manager Package for vSphere

To install the vGPU Manager VIB, you need to access the ESXi host via the ESXi Shell or SSH. Refer to VMware’s documentation on how to enable ESXi Shell or SSH for an ESXi host.

Before proceeding with the vGPU Manager installation, make sure that all VMs are powered off and the ESXi host is placed in maintenance mode. Refer to VMware’s documentation on how to place an ESXi host in maintenance mode.

- Use the esxcli command to install the vGPU Manager package:

  [root@esxi:~] esxcli software vib install -v directory/NVIDIA-vGPU-VMware_ESXi_6.0_Host_Driver_390.113-1OEM.600.0.0.2159203.vib
  Installation Result
     Message: Operation finished successfully.
     Reboot Required: false
     VIBs Installed: NVIDIA-vGPU-VMware_ESXi_6.0_Host_Driver_390.113-1OEM.600.0.0.2159203
     VIBs Removed:
     VIBs Skipped:

directory is the absolute path to the directory that contains the VIB file. You must specify the absolute path even if the VIB file is in the current working directory.

- Reboot the ESXi host and remove it from maintenance mode.
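Because esxcli needs an absolute path to the VIB file, a small wrapper can make relative paths absolute before calling it. The following sketch, for the ESXi Shell, shows this under stated assumptions. The helper name install_vgpu_vib is hypothetical. The sketch assumes that esxcli system maintenanceMode get prints Enabled or Disabled. It calls only the esxcli software vib install and update commands shown in this section.

```sh
#!/bin/sh
# Sketch for the ESXi Shell: install or update the vGPU Manager VIB, always
# passing esxcli an absolute path. Hypothetical name: install_vgpu_vib.
# Assumes "esxcli system maintenanceMode get" prints Enabled or Disabled.

install_vgpu_vib() {
    action="$1"   # install or update
    vib="$2"      # path to the .vib file, relative or absolute

    case "$action" in
        install|update) ;;
        *) echo "usage: install_vgpu_vib install|update path/to/file.vib" >&2
           return 1 ;;
    esac

    # esxcli requires an absolute path, even for a file in the current directory.
    case "$vib" in
        /*) abs="$vib" ;;
        *)  abs="$(pwd)/$vib" ;;
    esac

    if [ ! -f "$abs" ]; then
        echo "VIB not found: $abs" >&2
        return 1
    fi

    # The procedure requires maintenance mode (and all VMs powered off).
    if [ "$(esxcli system maintenanceMode get)" != "Enabled" ]; then
        echo "place the host in maintenance mode first" >&2
        return 1
    fi

    esxcli software vib "$action" -v "$abs"
}
```

After the command succeeds, you still need to reboot the host and take it out of maintenance mode as described in the procedure.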

2.6.1.2. Updating the NVIDIA Virtual GPU Manager Package for vSphere

Update the vGPU Manager VIB package if you want to install a new version of NVIDIA Virtual GPU Manager on a system where an existing version is already installed.

To update the vGPU Manager VIB, you need to access the ESXi host via the ESXi Shell or SSH. Refer to VMware’s documentation on how to enable ESXi Shell or SSH for an ESXi host.

Before proceeding with the vGPU Manager update, make sure that all VMs are powered off and the ESXi host is placed in maintenance mode. Refer to VMware’s documentation on how to place an ESXi host in maintenance mode.

- Use the esxcli command to update the vGPU Manager package:

  [root@esxi:~] esxcli software vib update -v directory/NVIDIA-vGPU-VMware_ESXi_6.0_Host_Driver_390.113-1OEM.600.0.0.2159203.vib
  Installation Result
     Message: Operation finished successfully.
     Reboot Required: false
     VIBs Installed: NVIDIA-vGPU-VMware_ESXi_6.0_Host_Driver_390.113-1OEM.600.0.0.2159203
     VIBs Removed: NVIDIA-vGPU-VMware_ESXi_6.0_Host_Driver_390.94-1OEM.600.0.0.2159203
     VIBs Skipped:

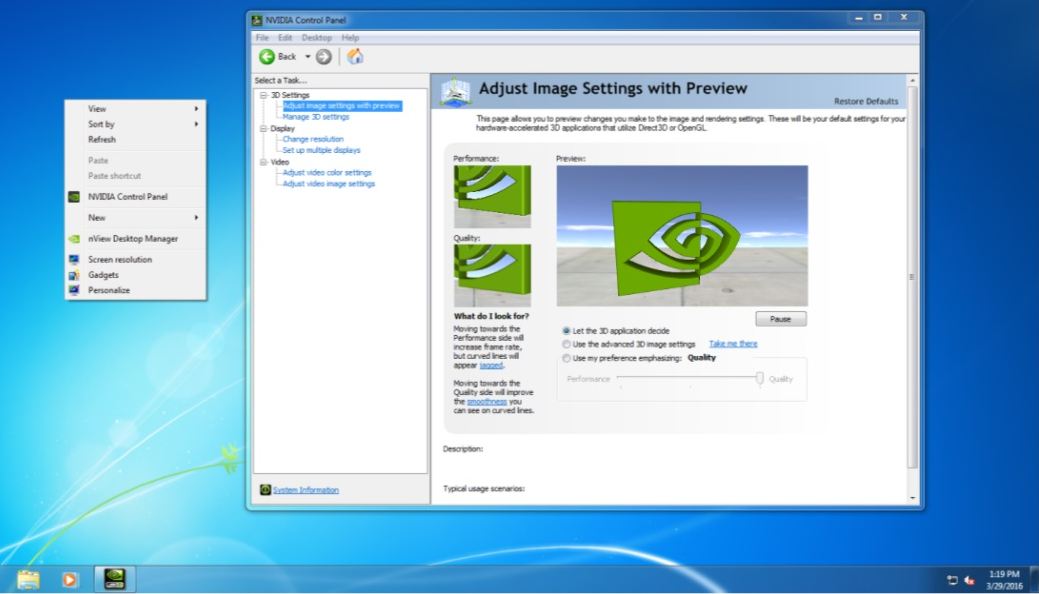

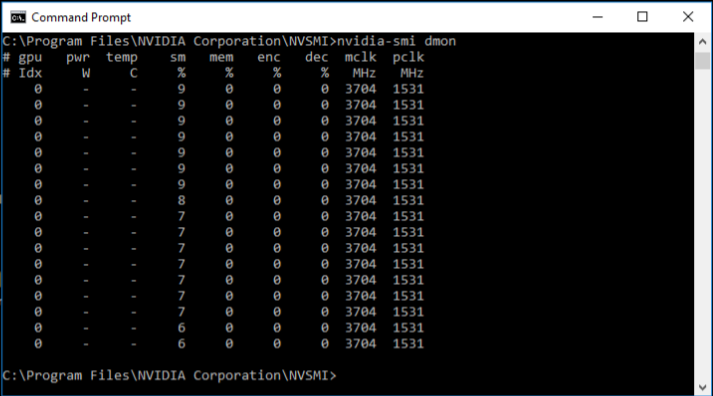

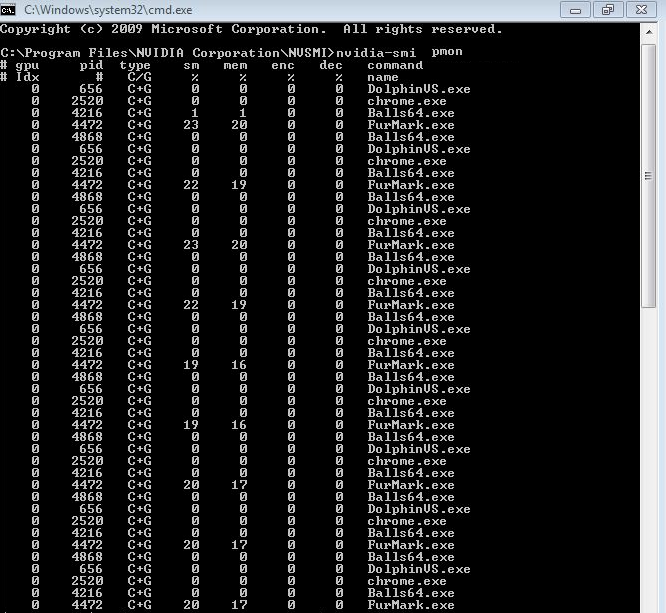

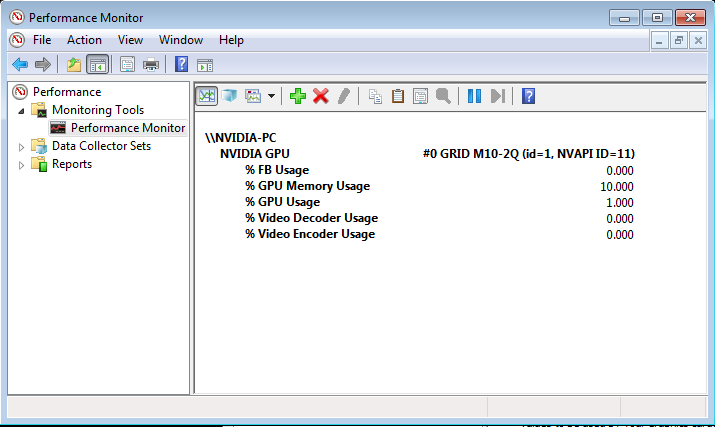

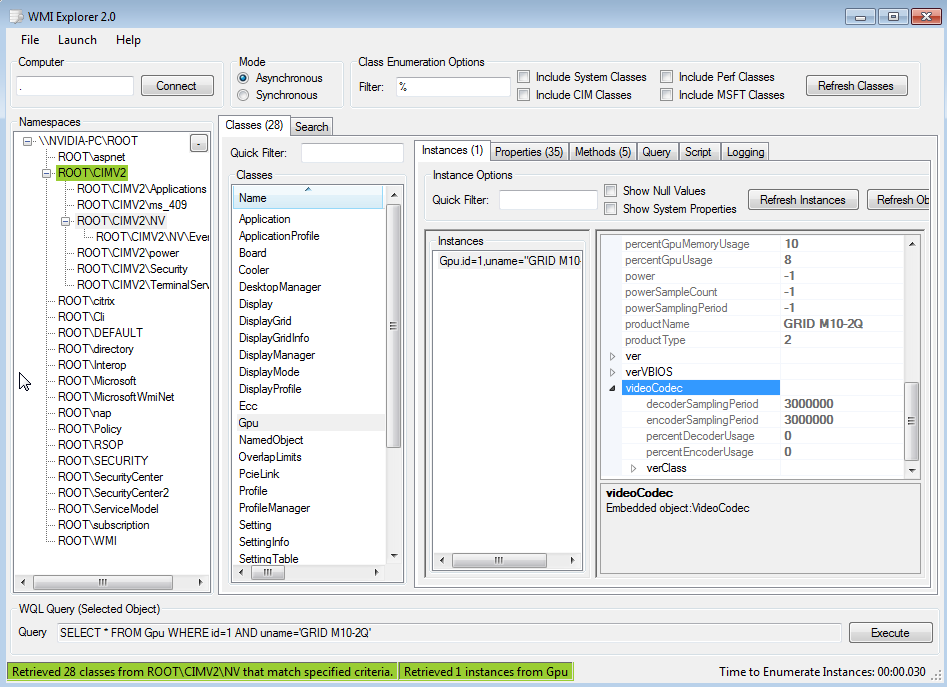

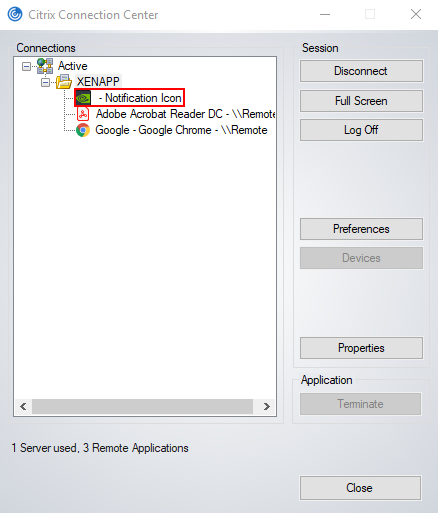

directory is the absolute path to the directory that contains the VIB file.
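The result that esxcli prints shows which VIB was installed and which was removed. This is useful to record when you update from one vGPU Manager release to another. The following sketch summarizes that result when the output is piped into it. The names vib_field and summarize_vib_result are hypothetical. The sketch assumes the indented Name: value layout shown above, and it treats any Message that contains "successfully" as success, because the wording changes when a reboot is required.

```sh
#!/bin/sh
# Sketch: summarize the result printed by "esxcli software vib install" or
# "esxcli software vib update" (read from standard input).
# Hypothetical names: vib_field, summarize_vib_result.

# Print the value of one "Name: value" field from esxcli output on stdin.
vib_field() {
    sed -n "s/^[[:space:]]*$1:[[:space:]]*//p"
}

summarize_vib_result() {
    out=$(cat)
    msg=$(printf '%s\n' "$out" | vib_field "Message")
    installed=$(printf '%s\n' "$out" | vib_field "VIBs Installed")
    removed=$(printf '%s\n' "$out" | vib_field "VIBs Removed")
    echo "installed: ${installed:-none}"
    echo "removed: ${removed:-none}"
    # Success messages vary (for example, when a reboot is required), so
    # accept any message that contains "successfully".
    case "$msg" in
        *successfully*) return 0 ;;
        *) return 1 ;;
    esac
}
```

For example, esxcli software vib update -v /absolute/path/to/file.vib | summarize_vib_result prints the installed and removed VIB names. It returns a nonzero status if the operation did not report success.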