Features

The application binary name for the combined cuPHY-CP + cuPHY is cuphycontroller. When cuphycontroller starts, it reads static configuration from configuration yaml files. This section describes the fields in the yaml files.

l2adapter_filename

Feature Status: MFL

This field contains the filename of the YAML-format configuration file for the L2 adapter.

low_priority_core

Feature Status: MFL

CPU core shared by all low-priority threads. An isolated CPU core is preferred; a non-isolated core can be used, provided no other heavy-load task runs on it.

nic_tput_alert_threshold_mbps

Feature Status: MFL

This parameter is used to monitor NIC throughput. The units are Mbps, e.g. 85000 = 85 Gbps. This value is close to the maximum throughput that can be achieved with accurate send scheduling on a 100 Gbps link. A gRPC client (reference: $cuBB_SDK/cuPHY-CP/cuphyoam/examples/test_grpc_push_notification_client.cpp) needs to be implemented to receive the alert.
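The unit conversion described above can be sketched as follows. This is only an illustration: the helper names are hypothetical and not part of the Aerial SDK, and the 100 Gbps link rate is taken from the description.

```python
def mbps_to_gbps(mbps: int) -> float:
    """Convert a throughput value in Mbps to Gbps."""
    return mbps / 1000.0


def threshold_fraction_of_link(threshold_mbps: int, link_gbps: float) -> float:
    """Return the alert threshold as a fraction of the nominal link rate."""
    if link_gbps <= 0:
        raise ValueError("link_gbps must be positive")
    return mbps_to_gbps(threshold_mbps) / link_gbps


if __name__ == "__main__":
    threshold = 85000  # example nic_tput_alert_threshold_mbps value from the text
    print("threshold in Gbps:", mbps_to_gbps(threshold))
    print("fraction of a 100 Gbps link:", threshold_fraction_of_link(threshold, 100.0))
```

A helper like this can be useful when choosing a threshold for a link rate other than 100 Gbps.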

cuphydriver_config

Feature Status: MFL

This container holds configuration for cuphydriver

standalone

Feature Status: MFL

0 - run cuphydriver integrated with other cuPHY-CP components

1 - run cuphydriver in standalone mode (no l2adapter, etc)

validation

Feature Status: MFL

Enables additional validation checks at run-time

0 - Disabled

1 - Enabled

num_slots

Feature Status: MFL

Number of slots to run in the cuphydriver standalone test.

log_level

Feature Status: MFL

cuPHYDriver log level: DBG, INFO, ERROR.

profiler_sec

Feature Status: MFL

Number of seconds to run the cuda profiling tool.

dpdk_thread

Feature Status: MFL

Sets the CPU core used by the primary DPDK thread. It does not have to be an isolated core. The DPDK thread itself defaults to SCHED_FIFO with priority 95.

dpdk_verbose_logs

Feature Status: MFL

Enable maximum log level in DPDK

0 - Disable

1 - Enable

accu_tx_sched_res_ns

Feature Status: MFL

Sets the accuracy of the accurate transmit scheduling, in units of nanoseconds.

accu_tx_sched_disable

Feature Status: MFL

Disable accurate TX scheduling.

0 - packets are sent according to the TX timestamp

1 - packets are sent whenever it is convenient

fh_stats_dump_cpu_core

Feature Status: MFL

Sets the CPU core used by the FH stats logging thread. It does not have to be an isolated core. The default FH stats polling interval is currently 500 ms.

pdump_client_thread

Feature Status: MFL

CPU core to use for pdump client. Set to -1 to disable fronthaul RX traffic PCAP capture.

See:

https://doc.dpdk.org/guides/howto/packet_capture_framework.html

aerial-fh README.md

mps_sm_pusch

Feature Status: MFL

Number of SMs for PUSCH channel

mps_sm_pucch

Feature Status: MFL

Number of SMs for PUCCH channel

mps_sm_prach

Feature Status: MFL

Number of SMs for PRACH channel

mps_sm_ul_order

Feature Status: MFL

Number of SMs for UL order kernel

mps_sm_pdsch

Feature Status: MFL

Number of SMs for PDSCH channel

mps_sm_pdcch

Feature Status: MFL

Number of SMs for PDCCH channel

mps_sm_pbch

Feature Status: MFL

Number of SMs for PBCH channel

mps_sm_srs

Feature Status: MFL

Number of SMs for SRS channel

mps_sm_gpu_comms

Feature Status: MFL

Number of SMs for GPU comms

nics

Feature Status: MFL

Container for NIC configuration parameters

nic

Feature Status: MFL

PCIe bus address of the NIC port

mtu

Feature Status: MFL

Maximum transmission size, in bytes, supported by the Fronthaul U-plane and C-plane

cpu_mbufs

Feature Status: MFL

Number of preallocated DPDK memory buffers (mbufs) used for Ethernet packets.

uplane_tx_handles

Feature Status: MFL

The number of pre-allocated transmit handles which link the U-plane prepare() and transmit() functions.

txq_count

Feature Status: MFL

NIC transmit queue count.

Must be large enough to handle all cells attached to this NIC port.

Each cell uses one TXQ for C-plane and txq_count_uplane TXQs for U-plane.
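The sizing rule above can be sketched as a small calculation. The function name is hypothetical, and the sketch assumes every cell attached to the port uses the same txq_count_uplane value; treat the result as a lower bound rather than a recommended setting.

```python
def min_txq_count(num_cells: int, txq_count_uplane: int) -> int:
    """Lower bound on txq_count for one NIC port.

    Each cell uses one TXQ for C-plane plus txq_count_uplane TXQs for U-plane.
    """
    if num_cells < 0 or txq_count_uplane < 0:
        raise ValueError("counts must be non-negative")
    return num_cells * (1 + txq_count_uplane)


if __name__ == "__main__":
    # Hypothetical deployment: 4 cells on the port, 2 U-plane TXQs per cell.
    print(min_txq_count(num_cells=4, txq_count_uplane=2))
```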

rxq_count

Feature Status: MFL

Receive queue count

This value must be large enough to handle all cells attached to this NIC port.

Each cell uses one RXQ to receive all uplink traffic

txq_size

Feature Status: MFL

Number of packets which can fit in each transmit queue

rxq_size

Feature Status: MFL

Number of packets which can be buffered in each receive queue

gpu

Feature Status: MFL

CUDA device to receive uplink packets from this NIC port.

gpus

Feature Status: MFL

List of GPU device IDs. In order to use gpudirect, the GPU must be on the same PCIe root complex as the NIC. In order to maximize performance, the GPU should be on the same PCIe switch as the NIC. Currently only the first entry in the list is used.

workers_ul

Feature Status: MFL

List of pinned CPU cores used for uplink worker threads.

workers_dl

Feature Status: MFL

List of pinned CPU cores used for downlink worker threads.

debug_worker

Feature Status: MFL

For performance debugging, set this to a free CPU core; it is used together with the enable_*_tracing logs.

prometheus_thread

Feature Status: MFL

Pinned CPU core for updating NIC metrics once per second

start_section_id_srs

Feature Status: MFL

ORAN CUS start section ID for the SRS channel.

start_section_id_prach

Feature Status: MFL

ORAN CUS start section ID for the PRACH channel.

enable_ul_cuphy_graphs

Feature Status: MFL

Enable UL processing with CUDA graphs.

enable_dl_cuphy_graphs

Feature Status: MFL

Enable DL processing with CUDA graphs.

section_3_time_offset

Feature Status: MFL

Time offset, in units of nanoseconds, for the PRACH channel.

ul_order_timeout_cpu_ns

Feature Status: MFL

Timeout, in units of nanoseconds, for the uplink order kernel to receive any U-plane packets for this slot.

ul_order_timeout_gpu_ns

Feature Status: MFL

Timeout, in units of nanoseconds, for the order kernel to complete execution on the GPU

pusch_sinr

Feature Status: MFL

Enable PUSCH SINR calculation (0 by default)

pusch_rssi

Feature Status: MFL

Enable PUSCH RSSI calculation (0 by default)

pusch_tdi

Feature Status: MFL

Enable PUSCH TDI processing (0 by default)

pusch_cfo

Feature Status: MFL

Enable PUSCH CFO calculations (0 by default)

pusch_dftsofdm

Feature Status: MFL

DFT-s-OFDM enable/disable flag: 0 - disable, 1 - enable

pusch_to

Feature Status: MFL

It is only used for timing offset reporting to L2. If the timing offset estimate is not used by L2, it can be disabled.

pusch_select_eqcoeffalgo

Feature Status: MFL

Algorithm selector for PUSCH noise interference estimation and channel equalization. The following values are supported:

0 - Regularized zero-forcing (RZF)

1 - Diagonal MMSE regularization

2 - Minimum Mean Square Error - Interference Rejection Combining (MMSE-IRC)

3 - MMSE-IRC with RBLW covariance shrinkage

4 - MMSE-IRC with OAS covariance shrinkage

pusch_select_chestalgo

Feature Status: MFL

Channel estimation algorithm selection: 0 - legacy MMSE, 1 - multi-stage MMSE with delay estimation

pusch_tbsizecheck

Feature Status: MFL

TB size verification enable/disable flag: 0 - disable, 1 - enable

pusch_deviceGraphLaunchEn

Feature Status: MFL

Static flag to allow device graph launch in PUSCH

pusch_waitTimeOutPreEarlyHarqUs

Feature Status: MFL

Timeout threshold in microseconds for receiving OFDM symbols for PUSCH early-HARQ processing.

pusch_waitTimeOutPostEarlyHarqUs

Feature Status: MFL

Timeout threshold in microseconds for receiving OFDM symbols for PUSCH non-early-HARQ processing (essentially all the PUSCH symbols).

puxch_polarDcdrListSz

Feature Status: MFL

List size used in List Decoding of Polar codes

enable_cpu_task_tracing

Feature Status: MFL

The flag is used to trace and instrument DL/UL CPU tasks running on existing cuphydriver cores.

enable_prepare_tracing

Feature Status: MFL

Enables tracing of the U-plane packet preparation kernel durations and end times. Requires the debug worker to be enabled.

enable_dl_cqe_tracing

Feature Status: MFL

Enables tracing of DL CQEs (debug feature to check for DL U-plane packets’ timing at the NIC)

ul_rx_pkt_tracing_level

Feature Status: MFL

This YAML parameter can be set to one of three values:

0 (default, recommended) - Only keeps count of the early/on-time/late packet counters per slot, as seen by the DU (reorder kernel), for the uplink U-plane packets.

1 - Also captures and logs the earliest/latest packet timestamp per symbol per slot, as seen by the DU.

2 - Also captures and logs the timestamp of each packet received per symbol per slot, as seen by the DU.

split_ul_cuda_streams

Feature Status: MFL

Keep the default of 0. When enabled, this allows back-to-back UL slots to overlap their processing. Keep it disabled to maintain the performance of the first UL slot in every group of 2.

aggr_obj_non_avail_th

Feature Status: MFL

Keep the default value at 5. This parameter sets the threshold for successive non-availability of L1 objects (an L1 object can be interpreted as the L1 handler needed to schedule PHY compute tasks on the GPU). Unavailability could imply that the execution timeline is falling behind the expected L1 timeline budget.

dl_wait_th_ns

Feature Status: MFL

This parameter is used for error handling in the event of GPU failure. Please keep defaults.

sendCPlane_timing_error_th_ns

Feature Status: MFL

Keep the default value at 50000 (50 us). The threshold is used as a check for the proximity of the current time during C-plane task’s execution to the actual scheduled C-plane packet’s transmission time. Meeting the threshold check would result in C-plane packet transmission being dropped for the slot.

pusch_forcedNumCsi2Bits

Feature Status: MFL

Debug feature: if > 0, overrides the number of PUSCH CSI-P2 bits for all CSI-P2 UCIs with the non-zero value provided. Setting it to 0 is recommended.

mMIMO_enable

Feature Status: MFL

Keep at default of 0. This flag is used for future capability.

enable_srs

Feature Status: MFL

Enable/disable SRS

ue_mode

Feature Status: MFL

Flag for the spectral efficiency feature (should be enabled in the RU-side YAML to emulate UE operation)

cplane_disable

Feature Status: MFL

Disable C-plane for all cells

0 - Enable C-plane

1 - Disable C-plane

cells

Feature Status: MFL

List of containers of cell parameters

name

Feature Status: MFL

Name of the cell

cell_id

Feature Status: MFL

ID of the cell.

src_mac_addr

Feature Status: MFL

Source MAC address for U-plane and C-plane packets. Set to 00:00:00:00:00:00 to use the MAC address of the NIC port in use.

dst_mac_addr

Feature Status: MFL

Destination MAC address for U-plane and C-plane packets

nic

Feature Status: MFL

gNB NIC port to which the cell is attached

Must match the ‘nic’ key value in one of the elements of the ‘nics’ list

vlan

Feature Status: MFL

VLAN ID used for C-plane and U-plane packets

pcp

Feature Status: MFL

QoS priority codepoint used for C-plane and U-plane Ethernet packets

txq_count_uplane

Feature Status: MFL

Number of transmit queues used for U-plane

eAxC_id_ssb_pbch

Feature Status: MFL

List of eAxC IDs to use for SSB/PBCH

eAxC_id_pdcch

Feature Status: MFL

List of eAxC IDs to use for PDCCH

eAxC_id_pdsch

Feature Status: MFL

List of eAxC IDs to use for PDSCH

eAxC_id_csirs

Feature Status: MFL

List of eAxC IDs to use for CSI-RS

eAxC_id_pusch

Feature Status: MFL

List of eAxC IDs to use for PUSCH

eAxC_id_pucch

Feature Status: MFL

List of eAxC IDs to use for PUCCH

eAxC_id_srs

Feature Status: MFL

List of eAxC IDs to use for SRS

eAxC_id_prach

Feature Status: MFL

List of eAxC IDs to use for PRACH

compression_bits

Feature Status: MFL

Number of bits used for each RE on DL U-plane channels.

decompression_bits

Feature Status: MFL

Number of bits used per RE on uplink U-plane channels.

fs_offset_dl

Feature Status: MFL

Downlink U-plane scaling per ORAN CUS 6.1.3

exponent_dl

Feature Status: MFL

Downlink U-plane scaling per ORAN CUS 6.1.3

ref_dl

Feature Status: MFL

Downlink U-plane scaling per ORAN CUS 6.1.3

fs_offset_ul

Feature Status: MFL

Uplink U-plane scaling per ORAN CUS 6.1.3

exponent_ul

Feature Status: MFL

Uplink U-plane scaling per ORAN CUS 6.1.3

max_amp_ul

Feature Status: MFL

Maximum full scale amplitude used in uplink U-plane scaling per ORAN CUS 6.1.3

mu

Feature Status: MFL

3GPP subcarrier spacing index ‘mu’

0 - 15 kHz

1 - 30 kHz

2 - 60 kHz

3 - 120 kHz

4 - 240 kHz
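The mapping above follows the 3GPP numerology rule that the subcarrier spacing is 15 kHz multiplied by 2 to the power of mu. A minimal sketch, with hypothetical function names, that also derives the slot duration (a 1 ms subframe holds 2^mu slots):

```python
def subcarrier_spacing_khz(mu: int) -> int:
    """Subcarrier spacing in kHz for numerology index mu (0..4)."""
    if mu not in range(5):
        raise ValueError("mu must be in 0..4")
    return 15 * (2 ** mu)


def slot_duration_us(mu: int) -> float:
    """Slot duration in microseconds: a 1 ms subframe contains 2**mu slots."""
    if mu not in range(5):
        raise ValueError("mu must be in 0..4")
    return 1000.0 / (2 ** mu)


if __name__ == "__main__":
    for mu in range(5):
        print(mu, subcarrier_spacing_khz(mu), "kHz", slot_duration_us(mu), "us")
```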

T1a_max_up_ns

Feature Status: MFL

Scheduled timing advance before time-zero for downlink U-plane egress from DU, per ORAN CUS.

T1a_max_cp_ul_ns

Feature Status: MFL

Scheduled timing advance before time-zero for uplink C-plane egress from DU, per ORAN CUS.

Ta4_min_ns

Feature Status: MFL

Start of DU reception window after time-zero, per ORAN CUS

Ta4_max_ns

Feature Status: MFL

End of DU reception window after time-zero, per ORAN CUS

Tcp_adv_dl_ns

Feature Status: MFL

Downlink C-plane timing advance ahead of U-plane, in units of nanoseconds, per ORAN CUS.
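One way to read the timing fields above is as offsets relative to time-zero (T0) of a slot. The sketch below is an interpretation of the field descriptions only, not the cuphydriver implementation; the class name, function names, and numeric values are hypothetical.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class CellTiming:
    """Subset of the per-cell timing fields, all in nanoseconds."""
    t1a_max_up_ns: int   # DL U-plane advance before T0
    tcp_adv_dl_ns: int   # DL C-plane advance ahead of the U-plane
    ta4_min_ns: int      # start of DU reception window after T0
    ta4_max_ns: int      # end of DU reception window after T0


def dl_uplane_egress_ns(t0_ns: int, t: CellTiming) -> int:
    """Scheduled DL U-plane egress time: T0 minus T1a_max_up."""
    return t0_ns - t.t1a_max_up_ns


def dl_cplane_egress_ns(t0_ns: int, t: CellTiming) -> int:
    """DL C-plane egress, assumed to lead the U-plane by Tcp_adv_dl."""
    return dl_uplane_egress_ns(t0_ns, t) - t.tcp_adv_dl_ns


def ul_rx_window_ns(t0_ns: int, t: CellTiming) -> Tuple[int, int]:
    """DU reception window for UL U-plane packets as (start, end)."""
    return (t0_ns + t.ta4_min_ns, t0_ns + t.ta4_max_ns)


if __name__ == "__main__":
    # Hypothetical values chosen only to make the arithmetic easy to follow.
    timing = CellTiming(345_000, 125_000, 50_000, 331_000)
    t0 = 1_000_000
    print(dl_uplane_egress_ns(t0, timing))
    print(dl_cplane_egress_ns(t0, timing))
    print(ul_rx_window_ns(t0, timing))
```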

ul_u_plane_tx_offset_ns

Feature Status: MFL

Used by the spectral efficiency feature (should be set in the RU-side YAML to offset the UL transmission start from T0)

pusch_prb_stride

Feature Status: MFL

Memory stride, in units of PRBs, for the PUSCH channel. Affects GPU memory layout.

prach_prb_stride

Feature Status: MFL

Memory stride, in units of PRBs, for the PRACH channel. Affects GPU memory layout.

srs_prb_stride

Feature Status: MFL

Memory stride, in units of PRBs, for the SRS. Affects GPU memory layout.

pusch_ldpc_max_num_itr_algo_type

Feature Status: MFL

0 - Fixed LDPC iteration count

1 - MCS-based LDPC iteration count

Recommended setting: pusch_ldpc_max_num_itr_algo_type:1

pusch_fixed_max_num_ldpc_itrs

Feature Status: MFL

Unused currently, intended to replace pusch_ldpc_n_iterations

pusch_ldpc_n_iterations

Feature Status: MFL

Iteration count used when the fixed LDPC iteration count option is selected (pusch_ldpc_max_num_itr_algo_type:0). Since the default value of pusch_ldpc_max_num_itr_algo_type is 1 (iteration count optimized based on MCS), pusch_ldpc_n_iterations is unused by default.

pusch_ldpc_algo_index

Feature Status: MFL

Algorithm index for LDPC decoder: 0 - automatic choice

pusch_ldpc_flags

Feature Status: MFL

pusch_ldpc_flags configures the LDPC decoder. pusch_ldpc_flags:2 selects an LDPC decoder that optimizes for throughput rather than latency, i.e. it processes more than one codeword (e.g. 2) at a time.

pusch_ldpc_use_half

Feature Status: MFL

Input data type of the LDPC decoder: 0 - single precision, 1 - half precision

pusch_nMaxPrb

Feature Status: MFL

This is for memory allocation of max PRB range of peak cells vs average cells.

ul_gain_calibration

Feature Status: MFL

UL Configured Gain used to convert dBFS to dBm. (default if unspecified: 48.68)

lower_guard_bw

Feature Status: MFL

Lower guard bandwidth, expressed in kHz. Used for deriving freqOffset for each RACH occasion. Default is 845.

tv_pusch

Feature Status: MFL

HDF5 file containing static configuration (filter coefficients, etc) for the PUSCH channel.

tv_prach

Feature Status: MFL

HDF5 file containing static configuration (filter coefficients, etc) for the PRACH channel.

pusch_ldpc_n_iterations

Feature Status: MFL

PUSCH LDPC channel coding iteration count

pusch_ldpc_early_termination

Feature Status: MFL

PUSCH LDPC channel coding early termination

0 - Disable

1 - Enable

workers_sched_priority

Feature Status: MFL

cuPHYDriver worker threads scheduling priority

dpdk_file_prefix

Feature Status: MFL

Shared data file prefix to use for the underlying DPDK process

wfreq

Feature Status: MFL

Filename containing the coefficients for channel estimation filters, in HDF5 (.h5) format

cell_group

Feature Status: MFL

Enable cuPHY cell groups

0 - disable

1 - enable

cell_group_num

Feature Status: Unassigned

Number of cells to be configured in L1 for the test

enable_h2d_copy_thread

Feature Status: Unassigned

Enable/disable offloading of the H2D copy in L2A to a separate copy thread

h2d_copy_thread_cpu_affinity

Feature Status: Unassigned

CPU core on which the H2D copy thread in L2A should run (applicable only if enable_h2d_copy_thread is 1)

h2d_copy_thread_sched_priority

Feature Status: Unassigned

Scheduling priority of the H2D copy thread in L2A (applicable only if enable_h2d_copy_thread is 1)

fix_beta_dl

Feature Status: Unassigned

Fix the beta_dl value for local tests with RU Emulator so that the output values are a byte match with the test vector (TV).

msg_type

Feature Status: MFL

Defines the L2/L1 interface API. Supported options are:

scf_fapi_gnb - Use the small cell forum API

phy_class

Feature Status: MFL

Same as msg_type.

tick_generator_mode

Feature Status: MFL

The SLOT.indication interval generator mode:

0 - poll + sleep. During each tick, the thread sleeps for some time to release the CPU core and avoid hanging the system, then polls the system time.

1 - sleep. Sleep to absolute timestamp, no polling.

2 - timer_fd. Start a timer and call epoll_wait() on the timer_fd.

allowed_fapi_latency

Feature Status: MFL

Maximum allowed latency of SLOT FAPI messages sent from L2 to L1. Messages that exceed it are ignored and dropped.

Unit: slot.

Default is 0, which means the L2 message must be received in the current slot.

allowed_tick_error

Feature Status: MFL

Allowed tick interval error. Unit: us

Tick interval error is printed as statistics. If the observed tick error exceeds the allowed value, the log is printed at Error level.

timer_thread_config

Feature Status: MFL

Configuration for the timer thread

name

Feature Status: MFL

Name of thread

cpu_affinity

Feature Status: MFL

Id of pinned CPU core used for timer thread.

sched_priority

Feature Status: MFL

Scheduling priority of timer thread.

message_thread_config

Feature Status: MFL

Configuration container for the L2/L1 message processing thread.

name

Feature Status: MFL

Name of thread

cpu_affinity

Feature Status: MFL

ID of pinned CPU core used for message thread.

sched_priority

Feature Status: MFL

Scheduling priority of message thread.

ptp

Feature Status: MFL

ptp configs for GPS_ALPHA, GPS_BETA

gps_alpha

Feature Status: MFL

GPS Alpha value for ORAN WG4 CUS section 9.7.2.

Default value = 0 if undefined.

gps_beta

Feature Status: MFL

GPS Beta value for ORAN WG4 CUS section 9.7.2.

Default value = 0 if undefined.

mu_highest

Feature Status: MFL

Highest supported mu, used for scheduling TTI tick rate

slot_advance

Feature Status: MFL

Timing advance ahead of time-zero, in units of slots, for L1 to notify L2 of a slot request.

enableTickDynamicSfnSlot

Feature Status: MFL

Enable dynamic slot/sfn

staticPucchSlotNum

Feature Status: MFL

Debugging parameter for testing against RU Emulator; sets a static PUCCH slot number

staticPuschSlotNum

Feature Status: MFL

Debugging parameter for testing against RU Emulator; sets a static PUSCH slot number

staticPdschSlotNum

Feature Status: MFL

Debugging parameter for testing against RU Emulator; sets a static PDSCH slot number

staticPdcchSlotNum

Feature Status: MFL

Debugging parameter for testing against RU Emulator; sets a static PDCCH slot number

staticCsiRsSlotNum

Feature Status: MFL

Debugging parameter for testing against RU Emulator; sets a static CSI-RS slot number

staticSsbPcid

Feature Status: MFL

Debugging parameter for testing against RU Emulator; sets a static SSB phycellId

staticSsbSFN

Feature Status: MFL

Debugging parameter for testing against RU Emulator; sets a static SSB SFN

instances

Feature Status: MFL

Container for cell instances

name

Feature Status: MFL

Name of the instance

layerMap

Feature Status: MFL

List of mappings to layers.

nvipc_config_file

Feature Status: MFL

Dedicated YAML configuration file for nvipc. Example: nvipc_multi_instances.yaml

transport

Feature Status: MFL

Configuration container for L2/L1 message transport parameters

type

Feature Status: MFL

Transport type. One of shm, dpdk or udp

udp_config

Feature Status: MFL

Configuration container for the udp transport type.

local_port

Feature Status: MFL

UDP port used by L1

remote_port

Feature Status: MFL

UDP port used by L2.

shm_config

Feature Status: MFL

Configuration container for the shared memory transport type.

primary

Feature Status: MFL

Indicates process is primary for shared memory access.

prefix

Feature Status: MFL

Prefix used in creating shared memory filename.

cuda_device_id

Feature Status: MFL

Set this parameter to a valid GPU device ID to enable CPU data memory pool allocation in host pinned memory. Set to -1 to disable this feature.

ring_len

Feature Status: MFL

Length, in bytes, of the ring used for shared memory transport.

mempool_size

Feature Status: MFL

Configuration container for the memory pools used in shared memory transport.

cpu_msg

Feature Status: MFL

Configuration container for the shared memory transport for CPU messages (i.e. L2/L1 FAPI messages)

buf_size

Feature Status: MFL

Buffer size in bytes

pool_len

Feature Status: MFL

Pool length in buffers.

cpu_data

Feature Status: MFL

Configuration container for the shared memory transport for CPU data elements (i.e. downlink and uplink transport blocks)

buf_size

Feature Status: MFL

Buffer size in bytes

pool_len

Feature Status: MFL

Pool length in buffers.

cuda_data

Feature Status: MFL

Configuration container for the shared memory transport for GPU data elements

buf_size

Feature Status: MFL

Buffer size in bytes

pool_len

Feature Status: MFL

Pool length in buffers.
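Since each pool is described by a buffer size in bytes and a length in buffers, the payload memory it reserves can be estimated as their product. The sketch below uses hypothetical function names and example values, and ignores any per-buffer or per-pool overhead the transport may add.

```python
from typing import Dict, Mapping


def pool_bytes(buf_size: int, pool_len: int) -> int:
    """Payload bytes reserved by one pool: buffer size times number of buffers."""
    if buf_size < 0 or pool_len < 0:
        raise ValueError("buf_size and pool_len must be non-negative")
    return buf_size * pool_len


def mempool_bytes(mempool_size: Mapping[str, Mapping[str, int]]) -> Dict[str, int]:
    """Payload bytes per pool for a mempool_size container given as nested dicts."""
    return {
        name: pool_bytes(pool["buf_size"], pool["pool_len"])
        for name, pool in mempool_size.items()
    }


if __name__ == "__main__":
    # Hypothetical values for illustration only, not recommended settings.
    example = {
        "cpu_msg": {"buf_size": 8192, "pool_len": 4096},
        "cpu_data": {"buf_size": 576000, "pool_len": 1024},
        "cuda_data": {"buf_size": 307200, "pool_len": 0},
    }
    sizes = mempool_bytes(example)
    for name, size in sizes.items():
        print(name, size, "bytes")
    print("total", sum(sizes.values()), "bytes")
```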

dpdk_config

Feature Status: MFL

Configurations for the DPDK over NIC transport type.

primary

Feature Status: MFL

Indicates process is primary for shared memory access.

prefix

Feature Status: MFL

The name used in creating shared memory files and searching DPDK memory pools

local_nic_pci

Feature Status: MFL

The NIC address or name used in IPC.

peer_nic_mac

Feature Status: MFL

The peer NIC MAC address; only needs to be set in the secondary process (L2/MAC).

cuda_device_id

Feature Status: MFL

Set this parameter to a valid GPU device ID to enable CPU data memory pool allocation in host pinned memory. Set to -1 to disable this feature.

need_eal_init

Feature Status: MFL

Whether nvipc needs to call rte_eal_init() to initialize the DPDK context. 1 - initialized by nvipc; 0 - initialized by another module in the same process.

lcore_id

Feature Status: MFL

The logical core number for the nvipc_nic_poll thread.

mempool_size

Feature Status: MFL

Configuration container for the memory pools used in shared memory transport.

cpu_msg

Feature Status: MFL

Configuration container for the shared memory transport for CPU messages (i.e. L2/L1 FAPI messages)

buf_size

Feature Status: MFL

Buffer size in bytes

pool_len

Feature Status: MFL

Pool length in buffers.

cpu_data

Feature Status: MFL

Configuration container for the shared memory transport for CPU data elements (i.e. downlink and uplink transport blocks)

buf_size

Feature Status: MFL

Buffer size in bytes

pool_len

Feature Status: MFL

Pool length in buffers.

cuda_data

Feature Status: MFL

Configuration container for the shared memory transport for GPU data elements

buf_size

Feature Status: MFL

Buffer size in bytes

pool_len

Feature Status: MFL

Pool length in buffers.

app_config

Feature Status: MFL

Configurations for all transport types, mostly used for debug.

grpc_forward

Feature Status: MFL

Whether to enable forwarding of nvipc messages, and how many messages to forward automatically from initialization. Here count = 0 means forwarding every message forever.

0: disabled;

1: enabled, but forwarding does not start at initialization;

-1: enabled, and forwarding starts at initialization with count = 0;

Other positive number: enabled, and forwarding starts at initialization with count = grpc_forward.
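The value encoding above can be made explicit with a small decoder. This is a sketch of the documented rules only; the function name and the returned tuple layout are hypothetical, and negative values other than -1 are rejected because the text does not define them.

```python
from typing import Optional, Tuple


def decode_grpc_forward(value: int) -> Tuple[bool, bool, Optional[int]]:
    """Decode grpc_forward into (enabled, starts_at_init, count).

    count is None when forwarding does not start at initialization;
    count == 0 means every message is forwarded forever.
    """
    if value == 0:
        return (False, False, None)
    if value == 1:
        return (True, False, None)
    if value == -1:
        return (True, True, 0)
    if value > 1:
        return (True, True, value)
    raise ValueError("grpc_forward value not covered by the documented rules")


if __name__ == "__main__":
    for v in (0, 1, -1, 50):
        print(v, decode_grpc_forward(v))
```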

debug_timing

Feature Status: MFL

For debug only.

Whether to record timestamp of allocating, sending, receiving, releasing of all nvipc messages.

pcap_enable

Feature Status: MFL

For debug only.

Whether to capture nvipc messages to pcap file.

pcap_cpu_core

Feature Status: MFL

CPU core of background pcap log save thread

pcap_cache_size_bits

Feature Status: MFL

Size of /dev/shm/${prefix}_pcap. If set to 29, size will be 2^29 = 512MB

pcap_file_size_bits

Feature Status: MFL

Max size of /dev/shm/${prefix}_pcap. If set to 31, size will be 2^31 = 2GB
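Both pcap size fields are given as a power-of-two exponent. A minimal sketch of the conversion, with hypothetical helper names:

```python
def size_bytes_from_bits(bits: int) -> int:
    """Size in bytes for a *_size_bits setting: 2 raised to the given exponent."""
    if bits < 0:
        raise ValueError("bits must be non-negative")
    return 1 << bits


def bytes_to_mib(num_bytes: int) -> float:
    """Convert bytes to MiB (1 MiB = 1024 * 1024 bytes)."""
    return num_bytes / (1024 * 1024)


if __name__ == "__main__":
    for name, bits in (("pcap_cache_size_bits", 29), ("pcap_file_size_bits", 31)):
        size = size_bytes_from_bits(bits)
        print(name, bits, size, "bytes =", bytes_to_mib(size), "MiB")
```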

pcap_max_data_size

Feature Status: MFL

Maximum DL/UL FAPI data size to capture, used to reduce the pcap size.

aerial_metrics_backend_address

Feature Status: MFL

Aerial Prometheus metrics backend address

pucch_dtx_thresholds

Feature Status: MFL

Array of scale factors for DTX Thresholds of each PUCCH format.

Default value if not present is 1.0, which means the thresholds are not scaled.

For PUCCH format 0 and 1, -100.0 will be replaced with 1.0.

Example:

pucch_dtx_thresholds: [-100.0, -100.0, 1.0, 1.0, -100.0]

pusch_dtx_thresholds

Feature Status: MFL

Scale factor for DTX Thresholds of UCI on PUSCH.

Default value if not present is 1.0, which means the threshold is not scaled.

Example:

pusch_dtx_thresholds: 1.0

enable_precoding

Feature Status: MFL

Enable/Disable Precoding PDUs to be parsed in L2Adapter.

Default value is 0

enable_precoding: 0/1

prepone_h2d_copy

Feature Status: MFL

Enable/Disable preponing of H2D copy in L2Adapter.

Default value is 1

prepone_h2d_copy: 0/1

enable_beam_forming

Feature Status: MFL

Enables/disables parsing of BeamIds in L2Adapter

Default value : 0

enable_beam_forming: 1

pusch_cfo (deprecated)

Feature Status: MFL

This field is deprecated in Aerial SDK 22.1.

Former description: Enable/Disable CFO correction for cuPHY’s PUSCH processing

Former default value: 0

Former example: pusch_cfo: 1

pusch_tdi (deprecated)

Feature Status: MFL

This field is deprecated in Aerial SDK 22.1.

Former description: Enable Time domain interpolation for cuPHY’s PUSCH

Former default value: 0

Former example: pusch_tdi: 1

dl_tb_loc

Feature Status: MFL

Transport block location inside the nvipc buffer

Default value is 1

dl_tb_loc: 0 # TB is located inline with nvipc’s msg buffer

dl_tb_loc: 1 # TB is located in nvipc’s CPU data buffer

dl_tb_loc: 2 # TB is located in nvipc’s GPU buffer

staticSsbSlotNum

Feature Status: MFL

Override the incoming slot number with the Yaml configured SlotNumber for SS/PBCH

Example

staticSsbSlotNum:10

The application binary name for the combined O-RU + UE emulator is ru-emulator. When ru-emulator starts, it reads static configuration from a configuration yaml file. This section describes the fields in the yaml file.

core_list

Feature Status: MFL

List of CPU cores that RU Emulator could use

nic_interface

Feature Status: MFL

PCIe address of the NIC to use, e.g. b5:00.1

peerethaddr

Feature Status: MFL

MAC address of cuPHYController port

nvlog_name

Feature Status: Unassigned

The nvlog instance name for ru-emulator. Detailed nvlog configurations are in nvlog_config.yaml

cell_configs

Cell configs agreed upon with DU

name

Feature Status: MFL

Cell string name (largely unused)

eth

Feature Status: MFL

Cell MAC address

compression_bits

Feature Status: MFL

Compression method for DL throughput packets

decompression_bits

Feature Status: MFL

Compression method for sending UL packets

flow_list

Feature Status: MFL

eAxC list

eAxC_prach_list

Feature Status: MFL

eAxC prach list

vlan

Feature Status: MFL

vlan to use for RX and TX

nic

Feature Status: Unassigned

Index of the nic to use in the nics list

tti

Feature Status: MFL

Slot indication interval

Deprecated: dl_compress_bits

Feature Status: MFL

compression bits for DL

Deprecated: ul_compress_bits

Feature Status: MFL

compression bits for UL

validate_dl_timing

Validate DL timing (need to be PTP synchronized)

Deprecated: dl_timing_delay_us

Feature Status: MFL

t1a_max_up from ORAN

timing_histogram

Feature Status: MFL

generate histogram

timing_histogram_bin_size

Feature Status: MFL

histogram bin size

Deprecated: dl_symbol_window_size

Feature Status: MFL

symbol accepted window size

oran_timing_info

dl_c_plane_timing_delay

Feature Status: Unassigned

t1a_max_up from ORAN

dl_c_plane_window_size

Feature Status: Unassigned

DL C Plane RX ontime window size

ul_c_plane_timing_delay

Feature Status: Unassigned

T1a_max_cp_ul from ORAN

ul_c_plane_window_size

Feature Status: Unassigned

UL C Plane RX ontime window size

dl_u_plane_timing_delay

Feature Status: Unassigned

T2a_max_up from ORAN

dl_u_plane_window_size

Feature Status: Unassigned

DL U Plane RX ontime window size

ul_u_plane_tx_offset

Feature Status: Unassigned

Ta4_min_up from ORAN

During run-time, Aerial components can be re-configured or queried for status via gRPC remote procedure calls (RPCs). The RPCs are defined in “protocol buffers” syntax, allowing support for clients written in any of the languages supported by gRPC and protocol buffers.

More information about gRPC may be found at: https://grpc.io/docs/what-is-grpc/core-concepts/

More information about protocol buffers may be found at: https://developers.google.com/protocol-buffers

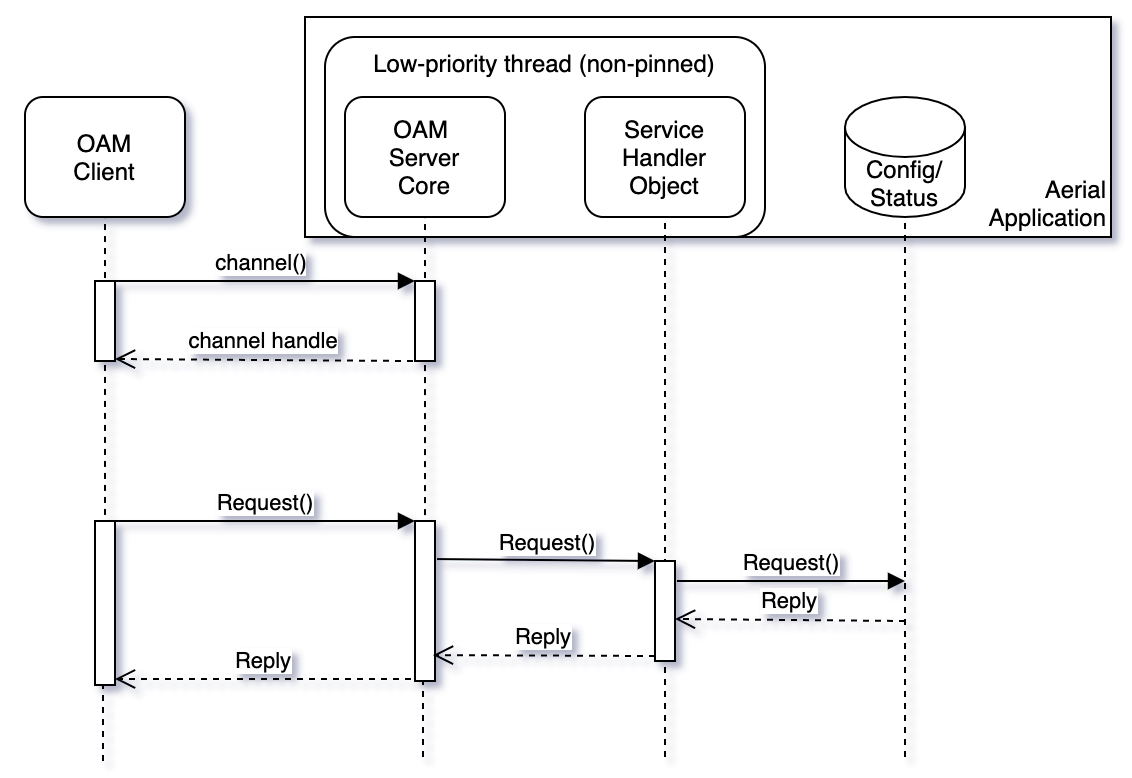

Simple Request/Reply Flow

Feature Status: MFL

Aerial applications support a request/reply flow using the gRPC framework with protobufs messages. At run-time, certain configuration items may be updated and certain status information may be queried. An external OAM client interfaces with the Aerial application acting as the gRPC server.

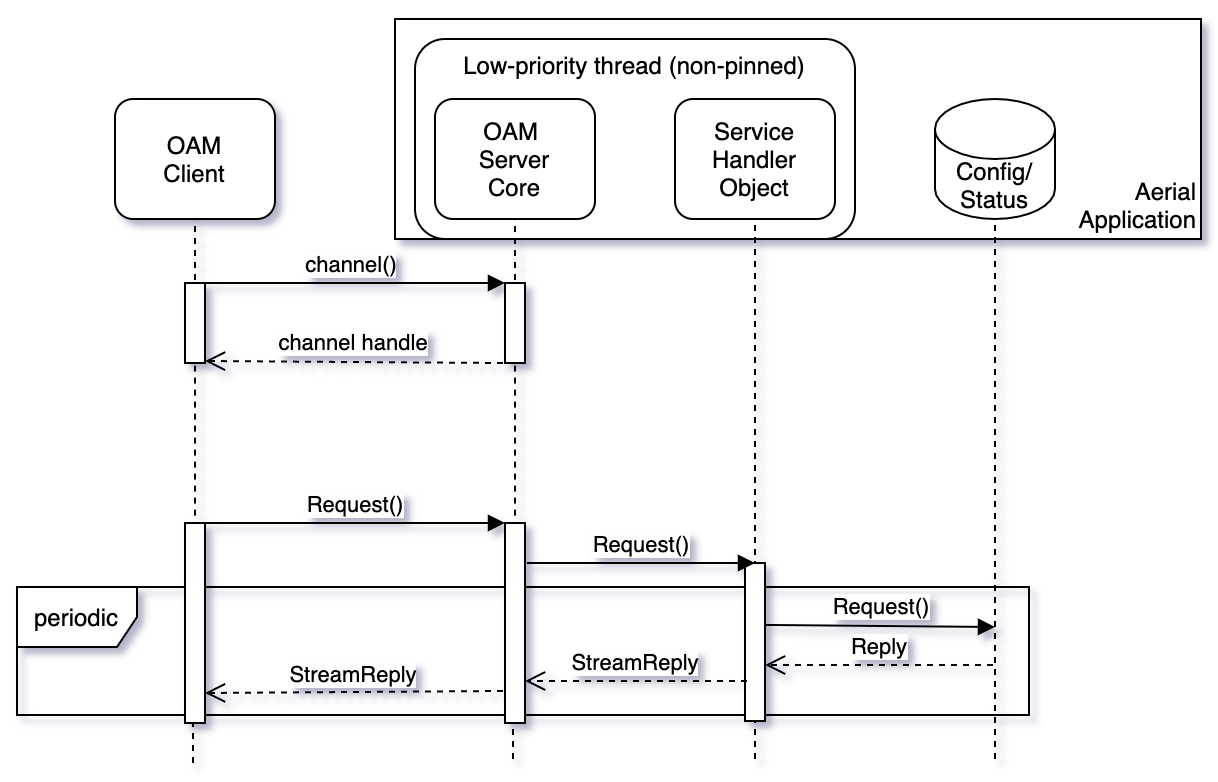

Streaming Request/Replies

Feature Status: MFL

Aerial applications support the gRPC streaming feature for sending (a)periodic status between client and server.

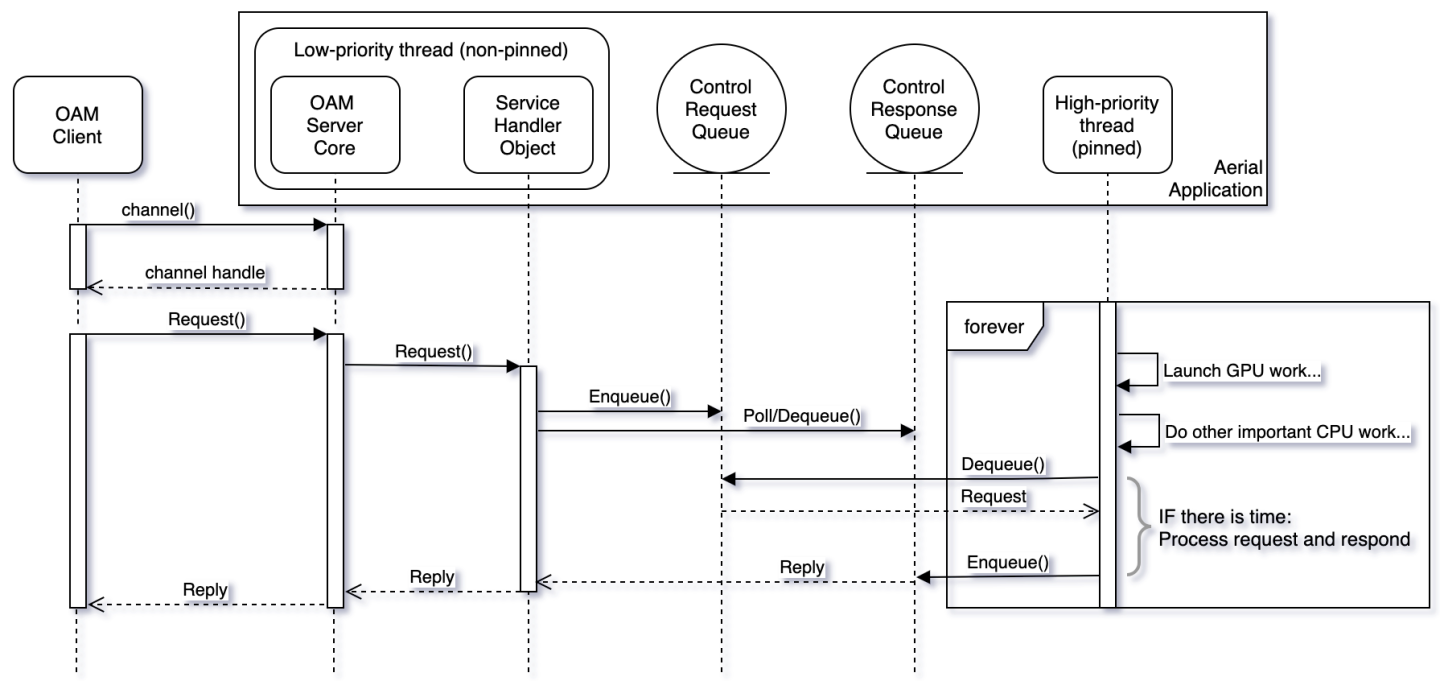

Asynchronous Interthread Communication

Feature Status: MFL

Certain request/reply scenarios require interaction with the high-priority CPU-pinned threads orchestrating GPU work. These interactions occur through Aerial-internal asynchronous queues, and requests are processed on a best effort basis which prioritizes the orchestration of GPU kernel launches and other L1 tasks.

Aerial Common Service definition

    /*
     * Copyright (c) 2021, NVIDIA CORPORATION. All rights reserved.
     *
     * NVIDIA CORPORATION and its licensors retain all intellectual property
     * and proprietary rights in and to this software, related documentation
     * and any modifications thereto. Any use, reproduction, disclosure or
     * distribution of this software and related documentation without an express
     * license agreement from NVIDIA CORPORATION is strictly prohibited.
     */

    syntax = "proto3";

    package aerial;

    service Common {
        rpc GetSFN (GenericRequest) returns (SFNReply) {}
        rpc GetCpuUtilization (GenericRequest) returns (CpuUtilizationReply) {}
        rpc SetPuschH5DumpNextCrc (GenericRequest) returns (DummyReply) {}
        rpc GetFAPIStream (FAPIStreamRequest) returns (stream FAPIStreamReply) {}
    }

    message GenericRequest {
        string name = 1;
    }

    message SFNReply {
        int32 sfn = 1;
        int32 slot = 2;
    }

    message DummyReply {
    }

    message CpuUtilizationPerCore {
        int32 core_id = 1;
        int32 utilization_x1000 = 2;
    }

    message CpuUtilizationReply {
        repeated CpuUtilizationPerCore core = 1;
    }

    message FAPIStreamRequest {
        int32 client_id = 1;
        int32 total_msgs_requested = 2;
    }

    message FAPIStreamReply {
        int32 client_id = 1;
        bytes msg_buf = 2;
        bytes data_buf = 3;
    }

rpc GetCpuUtilization

Feature Status: MFL

GetCpuUtilization returns a variable-length array of CPU utilization values, one per high-priority core.

CPU utilization is available through the Prometheus node exporter; however, the design approach used by Aerial high-priority threads results in a false 100% CPU core utilization per thread. This RPC allows retrieval of the actual CPU utilization of high-priority threads. High-priority threads are pinned to specific CPU cores.
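A client that receives a CpuUtilizationReply still has to interpret the utilization_x1000 field. The sketch below assumes, based only on the field name, that the value is the utilization as a fraction of one core multiplied by 1000 (so 1000 would mean 100%); this scaling is an assumption and should be checked against the SDK. The dataclass is a hypothetical stand-in for the generated protobuf message so that the example runs without gRPC stubs.

```python
from dataclasses import dataclass
from typing import Dict, Iterable


@dataclass
class CpuUtilizationPerCore:
    """Stand-in mirroring the fields of the protobuf message of the same name."""
    core_id: int
    utilization_x1000: int


def utilization_percent(cores: Iterable[CpuUtilizationPerCore]) -> Dict[int, float]:
    """Map core_id to utilization in percent, assuming x1000 scaling of a 0..1 fraction."""
    return {c.core_id: c.utilization_x1000 / 10.0 for c in cores}


if __name__ == "__main__":
    # Hypothetical reply contents for two pinned high-priority cores.
    reply = [CpuUtilizationPerCore(3, 250), CpuUtilizationPerCore(5, 875)]
    for core_id, pct in utilization_percent(reply).items():
        print("core", core_id, pct, "%")
```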

rpc GetFAPIStream

Feature Status: MFL

This RPC requests snooping of one or more SCF FAPI messages (up to an unlimited number). The snooped messages are delivered from the Aerial gRPC server to a third party client. See cuPHY-CP/cuphyoam/examples/aerial_get_l2msgs.py for an example client.

rpc TerminateCuphycontroller

Feature Status: MFL

This RPC message terminates cuPHYController with immediate effect.

rpc CellParamUpdateRequest

Feature Status: MFL

This RPC message updates cell configuration without stopping the cell. Message specification:

message CellParamUpdateRequest {

int32 cell_id = 1;

string dst_mac_addr = 2;

int32 vlan_tci = 3;

}

dst_mac_addr must be in ‘XX:XX:XX:XX:XX:XX’ format.

vlan_tci must contain the 16-bit TCI value of the 802.1Q tag.
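As an illustration of the two field formats, the following Python sketch checks a MAC address string and packs a 16-bit TCI from its 802.1Q subfields (3-bit PCP, 1-bit DEI, 12-bit VLAN ID). The helper names `is_valid_mac` and `make_vlan_tci` are hypothetical and are not part of the Aerial SDK; the sketch also assumes that each `XX` stands for two hexadecimal digits.

```python
import re

# Six colon-separated pairs of hex digits, e.g. 20:04:9B:9E:27:A3
_MAC_RE = re.compile(r"[0-9A-Fa-f]{2}(:[0-9A-Fa-f]{2}){5}")


def is_valid_mac(mac: str) -> bool:
    """Return True if mac is in XX:XX:XX:XX:XX:XX format (hypothetical helper)."""
    return _MAC_RE.fullmatch(mac) is not None


def make_vlan_tci(pcp: int, vlan_id: int, dei: int = 0) -> int:
    """Pack the 802.1Q TCI: PCP in bits 15-13, DEI in bit 12, VLAN ID in bits 11-0."""
    if not 0 <= pcp <= 7:
        raise ValueError("pcp must fit in 3 bits")
    if dei not in (0, 1):
        raise ValueError("dei must be 0 or 1")
    if not 0 <= vlan_id <= 0xFFF:
        raise ValueError("vlan_id must fit in 12 bits")
    return (pcp << 13) | (dei << 12) | vlan_id


if __name__ == "__main__":
    print(is_valid_mac("20:04:9B:9E:27:A3"))
    print(hex(make_vlan_tci(pcp=7, vlan_id=2)))
```

With pcp 7 and VLAN ID 2, the values used in the example cell configuration, the packed TCI is 0xE002.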

List of parameters supported by dynamic OAM via gRPC and CONFIG.request (M-plane)

| Parameter name | Configuration unit: across all cells/per cell config | Cell outage: in-service/out-of-service | OAM command | Note |

|---|---|---|---|---|

| ru_type | per cell config | out-of-service | cd $cuBB_SDK/build/cuPHY-CP/cuphyoam && python3 $cuBB_SDK/cuPHY-CP/cuphyoam/examples/aerial_cell_multi_attrs_update.py --server_ip $SERVER_IP --cell_id $CELL_ID --ru_type $RU_TYPE | $RU_TYPE: 1 for FXN_RU, 2 for FJT_RU, 3 for OTHER_RU (including ru_emulator) |

| nic | per cell config | out-of-service | cd $cuBB_SDK/build/cuPHY-CP/cuphyoam && python3 $cuBB_SDK/cuPHY-CP/cuphyoam/examples/aerial_cell_multi_attrs_update.py --server_ip $SERVER_IP --cell_id $CELL_ID --nic $NIC | NIC PCIe address. It has to be one of the NIC ports configured in the cuphycontroller yaml file |

| dst_mac_addr | per cell config | out-of-service | cd $cuBB_SDK/build/cuPHY-CP/cuphyoam && python3 $cuBB_SDK/cuPHY-CP/cuphyoam/examples/aerial_cell_multi_attrs_update.py --server_ip $SERVER_IP --cell_id $CELL_ID --dst_mac_addr $DST_MAC_ADDR --vlan_id $VLAN_ID --pcp $PCP | dst_mac_addr, vlan_id and pcp have to be updated together |

| vlan_id | per cell config | out-of-service | cd $cuBB_SDK/build/cuPHY-CP/cuphyoam && python3 $cuBB_SDK/cuPHY-CP/cuphyoam/examples/aerial_cell_multi_attrs_update.py --server_ip $SERVER_IP --cell_id $CELL_ID --dst_mac_addr $DST_MAC_ADDR --vlan_id $VLAN_ID --pcp $PCP | dst_mac_addr, vlan_id and pcp have to be updated together |

| pcp | per cell config | out-of-service | cd $cuBB_SDK/build/cuPHY-CP/cuphyoam && python3 $cuBB_SDK/cuPHY-CP/cuphyoam/examples/aerial_cell_multi_attrs_update.py --server_ip $SERVER_IP --cell_id $CELL_ID --dst_mac_addr $DST_MAC_ADDR --vlan_id $VLAN_ID --pcp $PCP | dst_mac_addr, vlan_id and pcp have to be updated together |

| compression_bits | per cell config | out-of-service | cd $cuBB_SDK/build/cuPHY-CP/cuphyoam && python3 $cuBB_SDK/cuPHY-CP/cuphyoam/examples/aerial_cell_multi_attrs_update.py --server_ip $SERVER_IP --cell_id $CELL_ID --compression_bits $COMPRESSION_BITS | |

| decompression_bits | per cell config | out-of-service | cd $cuBB_SDK/build/cuPHY-CP/cuphyoam && python3 $cuBB_SDK/cuPHY-CP/cuphyoam/examples/aerial_cell_multi_attrs_update.py --server_ip $SERVER_IP --cell_id $CELL_ID --decompression_bits $DECOMPRESSION_BITS | |

| exponent_dl | per cell config | out-of-service | cd $cuBB_SDK/build/cuPHY-CP/cuphyoam && python3 $cuBB_SDK/cuPHY-CP/cuphyoam/examples/aerial_cell_multi_attrs_update.py --server_ip $SERVER_IP --cell_id $CELL_ID --exponent_dl $EXPONENT_DL | |

| exponent_ul | per cell config | out-of-service | cd $cuBB_SDK/build/cuPHY-CP/cuphyoam && python3 $cuBB_SDK/cuPHY-CP/cuphyoam/examples/aerial_cell_multi_attrs_update.py --server_ip $SERVER_IP --cell_id $CELL_ID --exponent_ul $EXPONENT_UL | |

| pusch_prb_stride | per cell config | out-of-service | cd $cuBB_SDK/build/cuPHY-CP/cuphyoam && python3 $cuBB_SDK/cuPHY-CP/cuphyoam/examples/aerial_cell_multi_attrs_update.py --server_ip $SERVER_IP --cell_id $CELL_ID --pusch_prb_stride $PUSCH_PRB_STRIDE | |

| prach_prb_stride | per cell config | out-of-service | cd $cuBB_SDK/build/cuPHY-CP/cuphyoam && python3 $cuBB_SDK/cuPHY-CP/cuphyoam/examples/aerial_cell_multi_attrs_update.py --server_ip $SERVER_IP --cell_id $CELL_ID --prach_prb_stride $PRACH_PRB_STRIDE | |

| max_amp_ul | per cell config | out-of-service | cd $cuBB_SDK/build/cuPHY-CP/cuphyoam && python3 $cuBB_SDK/cuPHY-CP/cuphyoam/examples/aerial_cell_multi_attrs_update.py --server_ip $SERVER_IP --cell_id $CELL_ID --max_amp_ul $MAX_AMP_UL | |

| section_3_time_offset | per cell config | out-of-service | cd $cuBB_SDK/build/cuPHY-CP/cuphyoam && python3 $cuBB_SDK/cuPHY-CP/cuphyoam/examples/aerial_cell_multi_attrs_update.py --server_ip $SERVER_IP --cell_id $CELL_ID --section_3_time_offset $SECTION_3_TIME_OFFSET | |

| fh_distance_range | per cell config | out-of-service | cd $cuBB_SDK/build/cuPHY-CP/cuphyoam && python3 $cuBB_SDK/cuPHY-CP/cuphyoam/examples/aerial_cell_multi_attrs_update.py --server_ip $SERVER_IP --cell_id $CELL_ID --fh_distance_range $FH_DISTANCE_RANGE | $FH_DISTANCE_RANGE: 0 for 0~30km, 1 for 20~50km. Suppose the following are the default configs in the cuphycontroller yaml config file, which correspond to FH_DISTANCE_RANGE option 0 (0~30km): t1a_max_up_ns: d1; t1a_max_cp_ul_ns: d2; ta4_min_ns: d3; ta4_max_ns: d4. Updating FH_DISTANCE_RANGE to option 1 (20~50km) adjusts the values as follows: t1a_max_up_ns: d1+$FH_EXTENSION_DELAY_ADJUSTMENT; t1a_max_cp_ul_ns: d2+$FH_EXTENSION_DELAY_ADJUSTMENT; ta4_min_ns: d3+$FH_EXTENSION_DELAY_ADJUSTMENT; ta4_max_ns: d4+$FH_EXTENSION_DELAY_ADJUSTMENT. $FH_EXTENSION_DELAY_ADJUSTMENT is currently 100us and can be tuned in the source file ${cuBB_SDK}/cuPHY-CP/cuphydriver/include/constant.hpp#L207: static constexpr uint32_t FH_EXTENSION_DELAY_ADJUSTMENT = 100000;//100us |

| ul_gain_calibration | per cell config | in-service | cd $cuBB_SDK/build/cuPHY-CP/cuphyoam && python3 $cuBB_SDK/cuPHY-CP/cuphyoam/examples/aerial_cell_multi_attrs_update.py --server_ip $SERVER_IP --cell_id $CELL_ID --ul_gain_calibration $UL_GAIN_CALIBRATION | |

| lower_guard_bw | per cell config | out-of-service | cd $cuBB_SDK/build/cuPHY-CP/cuphyoam && python3 $cuBB_SDK/cuPHY-CP/cuphyoam/examples/aerial_cell_multi_attrs_update.py --server_ip $SERVER_IP --cell_id $CELL_ID --lower_guard_bw $LOWER_GUARD_BW | |

| ref_dl | per cell config | out-of-service | cd $cuBB_SDK/build/cuPHY-CP/cuphyoam && python3 $cuBB_SDK/cuPHY-CP/cuphyoam/examples/aerial_cell_multi_attrs_update.py --server_ip $SERVER_IP --cell_id $CELL_ID --ref_dl $REF_DL | |

| attenuation_db | per cell config | in-service | cd $cuBB_SDK/build/cuPHY-CP/cuphyoam && python3 $cuBB_SDK/cuPHY-CP/cuphyoam/examples/aerial_cell_param_attn_update.py $CELL_ID $ATTENUATION_DB | |

| gps_alpha | across all cells | out-of-service | cd $cuBB_SDK/build/cuPHY-CP/cuphyoam && python3 $cuBB_SDK/cuPHY-CP/cuphyoam/examples/aerial_cell_multi_attrs_update.py --server_ip $SERVER_IP --gps_alpha $GPS_ALPHA | All cells have to be in idle state before this parameter can be configured |

| gps_beta | across all cells | out-of-service | cd $cuBB_SDK/build/cuPHY-CP/cuphyoam && python3 $cuBB_SDK/cuPHY-CP/cuphyoam/examples/aerial_cell_multi_attrs_update.py --server_ip $SERVER_IP --gps_beta $GPS_BETA | All cells have to be in idle state before this parameter can be configured |

| prachRootSequenceIndex | per cell config | out-of-service | Via FAPI CONFIG.request. See section Dynamic PRACH Configuration and Init Sequence Test | |

| prachZeroCorrConf | per cell config | out-of-service | Via FAPI CONFIG.request. See section Dynamic PRACH Configuration and Init Sequence Test | |

| numPrachFdOccasions | per cell config | out-of-service | Via FAPI CONFIG.request. See section Dynamic PRACH Configuration and Init Sequence Test | |

| restrictedSetConfig | per cell config | out-of-service | Via FAPI CONFIG.request. See section Dynamic PRACH Configuration and Init Sequence Test | |

| prachConfigIndex | per cell config | out-of-service | Via FAPI CONFIG.request. See section Dynamic PRACH Configuration and Init Sequence Test | |

| K1 | per cell config | out-of-service | Via FAPI CONFIG.request. See section Dynamic PRACH Configuration and Init Sequence Test | |

In the OAM commands, use ‘localhost’ for $SERVER_IP when running on the DU server; otherwise, use the numeric IP address of the DU server. $CELL_ID is the M-plane ID, which starts from 1. The default values of the parameters can be found in the corresponding cuphycontroller yaml config file: $cuBB_SDK/cuPHY-CP/cuphycontroller/config/cuphycontroller_xxx.yaml
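The fh_distance_range adjustment described in the table above can be sketched in Python as follows. This is only an illustration of the arithmetic: the function name `apply_fh_distance_range` is hypothetical, and the sample defaults are the timing values from the example cuphycontroller config in this document rather than values read from a running system.

```python
# Value documented in the table above (100 us expressed in nanoseconds).
FH_EXTENSION_DELAY_ADJUSTMENT_NS = 100_000

# Timing keys that the table says are shifted when option 1 is selected.
ADJUSTED_KEYS = ("t1a_max_up_ns", "t1a_max_cp_ul_ns", "ta4_min_ns", "ta4_max_ns")


def apply_fh_distance_range(defaults: dict, fh_distance_range: int) -> dict:
    """Return the timing values for the given option (0: 0~30km, 1: 20~50km)."""
    if fh_distance_range not in (0, 1):
        raise ValueError("fh_distance_range must be 0 or 1")
    offset = FH_EXTENSION_DELAY_ADJUSTMENT_NS if fh_distance_range == 1 else 0
    return {key: defaults[key] + offset for key in ADJUSTED_KEYS}


if __name__ == "__main__":
    # Sample defaults for illustration only (d1..d4 in the table's notation).
    defaults = {
        "t1a_max_up_ns": 345000,
        "t1a_max_cp_ul_ns": 336000,
        "ta4_min_ns": 50000,
        "ta4_max_ns": 331000,
    }
    print(apply_fh_distance_range(defaults, 1))
```

Selecting option 0 returns the defaults unchanged, so switching back and forth between the two options is symmetric in this sketch.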

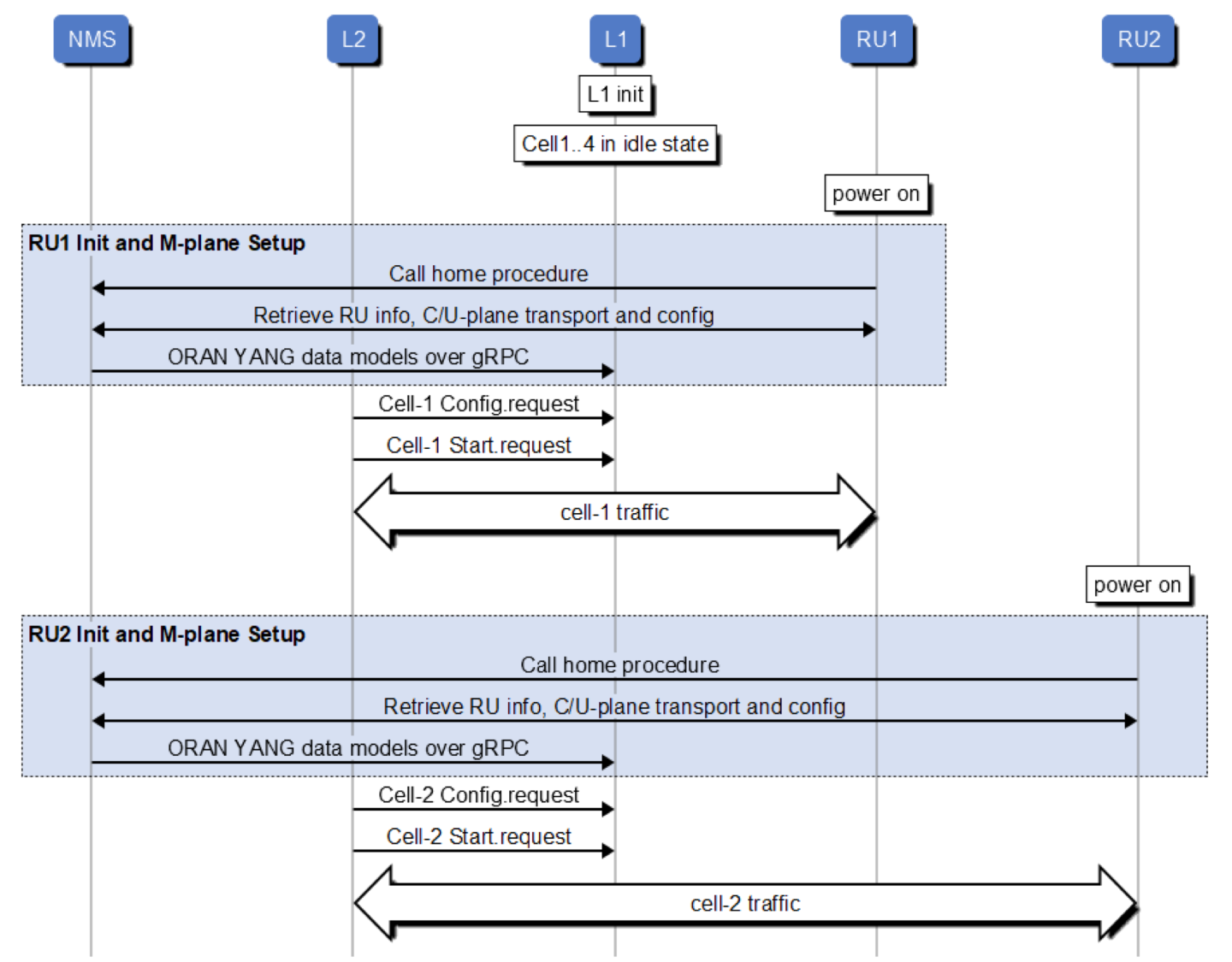

Aerial will support M-plane hybrid mode, which allows the NMS/SMO to use ORAN YANG data models to pass RU capabilities, C/U-plane transport config, and U-plane config to L1.

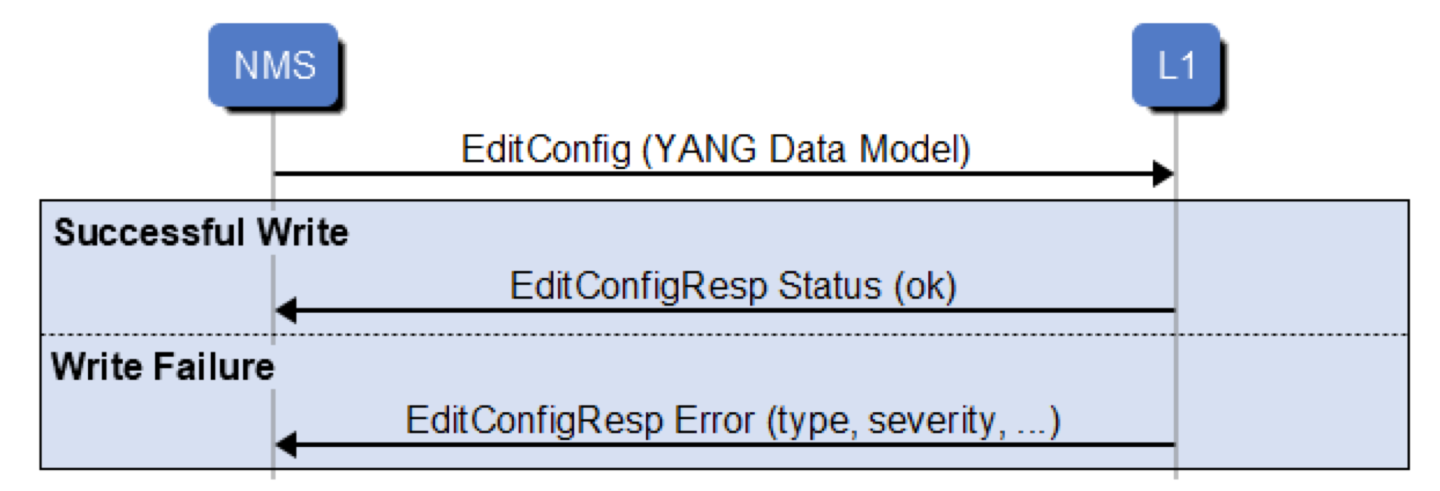

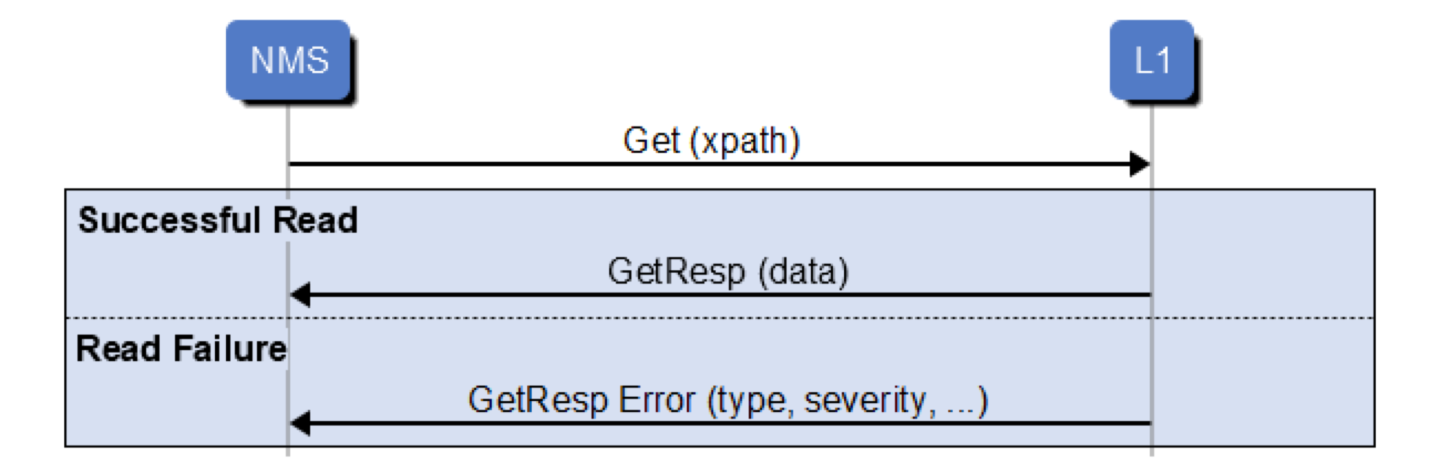

Here is the high-level sequence diagram (how the NMS/SMO retrieves or composes the ORAN YANG data model from the O-RU is out of scope of this document):

Data Model Procedures-Yang data tree write procedure

Data Model Procedures-Yang data tree read procedure

Data Model transfer APIs(gRPC ProtoBuf contract)

syntax = "proto3";

package p9_messages.v1;

service P9Messages {

rpc HandleMsg (Msg) returns (Msg) {}

}

message Msg

{

Header header = 1;

Body body = 2;

}

message Header

{

string msg_id = 1; // Message identifier to

// 1) Identify requests and notifications

// 2) Correlate requests and response

optional string oru_name = 2; // The name (identifier) of the O-RU, if present.

int32 vf_id = 3; // The identifier for the FAPI VF ID

int32 phy_id = 4; // The identifier for the FAPI PHY ID

optional int32 trp_id = 5; // The identifier PHY’s TRP, if any

}

message Body

{

oneof msg_body

{

Request request = 1;

Response response = 2;

}

}

message Request

{

oneof req_type

{

Get get = 1;

EditConfig edit_config = 2;

}

}

message Response

{

oneof resp_type

{

GetResp get_resp = 1;

EditConfigResp edit_config_resp = 2;

}

}

message Get { repeated bytes filter = 1; }

message GetResp

{

Status status_resp = 1;

bytes data = 2;

}

message EditConfig

{

bytes delta_config = 1; // List of Node changes with the associated operation to apply to the node

}

message EditConfigResp { Status status_resp = 1; }

message Error

{ // Type of error as defined in RFC 6241 section 4.3

string error_type = 1; // Error type defined in RFC 6241, Appendix B

string error_tag = 2; // Error tag defined in RFC 6241, Appendix B

string error_severity = 3; // Error severity defined in RFC 6241, Appendix B

string error_app_tag = 4; // Error app tag defined in RFC 6241, Appendix B

string error_path = 5; // Error path defined in RFC 6241, Appendix B

string error_message = 6; // Error message defined in RFC 6241, Appendix B

}

message Status

{

enum StatusCode

{

OK = 0;

ERROR_GENERAL = 1;

}

StatusCode status_code = 1;

repeated Error error = 2; // Optional: Error information

}

List of parameters supported by YANG Model

| Parameter name | Configuration unit: across all cells/per cell config | Cell outage: in-service/out-of-service | Description | YANG Model | xpath |

|---|---|---|---|---|---|

| o-du-mac-address | per cell config | out-of-service | DU side mac address, it will be translated to corresponding ‘nic’ internally | o-ran-uplane-conf.yang o-ran-processing-element.yang ietf-interfaces.yang | /processing-elements/ru-elements/transport-flow/eth-flow/o-du-mac-address |

| ru-mac-address | per cell config | out-of-service | mac address of the corresponding RU | o-ran-uplane-conf.yang o-ran-processing-element.yang ietf-interfaces.yang | /processing-elements/ru-elements/transport-flow/eth-flow/ru-mac-address |

| vlan-id | per cell config | out-of-service | vlan id | ietf-interfaces.yang o-ran-interfaces.yang o-ran-processing-element.yang | /processing-elements/ru-elements/transport-flow/eth-flow/vlan-id |

| pcp | per cell config | out-of-service | vlan priority level | ietf-interfaces.yang o-ran-interfaces.yang o-ran-processing-element.yang | /interfaces/interface/class-of-service/u-plane-marking |

| decompression_bits | per cell config | out-of-service | Indicate the bit length after compression. Value 9 and 14 for BFP, 16 for no compression | o-ran-uplane-conf.yang | /user-plane-configuration/low-level-tx-endpoints/compression/iq-bitwidth |

| compression_bits | per cell config | out-of-service | Indicate the bit length after compression. Value 9 and 14 for BFP, 16 for no compression | o-ran-uplane-conf.yang | /user-plane-configuration/low-level-rx-endpoints/compression/iq-bitwidth |

| exponent_dl | per cell config | out-of-service | | o-ran-uplane-conf.yang o-ran-compression-factors.yang | /user-plane-configuration/low-level-rx-endpoints/compression/exponent |

| exponent_ul | per cell config | out-of-service | | o-ran-uplane-conf.yang o-ran-compression-factors.yang | /user-plane-configuration/low-level-tx-endpoints/compression/exponent |

Reference examples

A client-side reference implementation is available at $cuBB_SDK/cuPHY-CP/cuphyoam/examples/p9_msg_client_grpc_test.cpp. The following examples show how to update and retrieve the related parameters.

Update ru-mac-address, vlan-id and pcp

#step 1: Edit $cuBB_SDK/cuPHY-CP/cuphyoam/examples/mac_vlan_pcp.xml and update ru_mac, vlan_id and pcp accordingly

#step 2: Run below cmd to do the provisioning

$cuBB_SDK/build/cuPHY-CP/cuphyoam/p9_msg_client_grpc_test --phy_id $mplane_id --cmd edit_config --xml_file $cuBB_SDK/cuPHY-CP/cuphyoam/examples/mac_vlan_pcp.xml

#step 3: Run below cmds to retrieve the config

$cuBB_SDK/build/cuPHY-CP/cuphyoam/p9_msg_client_grpc_test --phy_id $mplane_id --cmd get --xpath /o-ran-processing-element:processing-elements

$cuBB_SDK/build/cuPHY-CP/cuphyoam/p9_msg_client_grpc_test --phy_id $mplane_id --cmd get --xpath /ietf-interfaces:interfaces

Update o-du-mac-address(du nic port)

#step 1: Edit $cuBB_SDK/cuPHY-CP/cuphyoam/examples/nic_du_mac.xml and update du_mac which will be translated to corresponding nic port internally

#step 2: Run below cmd to do the provisioning

$cuBB_SDK/build/cuPHY-CP/cuphyoam/p9_msg_client_grpc_test --phy_id $mplane_id --cmd edit_config --xml_file $cuBB_SDK/cuPHY-CP/cuphyoam/examples/nic_du_mac.xml

#step 3: Run below cmd to retrieve the config

$cuBB_SDK/build/cuPHY-CP/cuphyoam/p9_msg_client_grpc_test --phy_id $mplane_id --cmd get --xpath /o-ran-processing-element:processing-elements

Update compression and decompression bits

#step 1: Edit $cuBB_SDK/cuPHY-CP/cuphyoam/examples/comp_decomp_bits.xml and update compression and decompression bits accordingly

#step 2: Run below cmd to do the provisioning

$cuBB_SDK/build/cuPHY-CP/cuphyoam/p9_msg_client_grpc_test --phy_id $mplane_id --cmd edit_config --xml_file $cuBB_SDK/cuPHY-CP/cuphyoam/examples/comp_decomp_bits.xml

#step 3: Run below cmd to retrieve the config

$cuBB_SDK/build/cuPHY-CP/cuphyoam/p9_msg_client_grpc_test --phy_id $mplane_id --cmd get --xpath /o-ran-uplane-conf:user-plane-configuration

Update dl and ul exponent

#step 1: Edit $cuBB_SDK/cuPHY-CP/cuphyoam/examples/dl_ul_exponent.xml and update dl and ul exponent accordingly

#step 2: Run below cmd to do the provisioning

$cuBB_SDK/build/cuPHY-CP/cuphyoam/p9_msg_client_grpc_test --phy_id $mplane_id --cmd edit_config --xml_file $cuBB_SDK/cuPHY-CP/cuphyoam/examples/dl_ul_exponent.xml

#step 3: Run below cmd to retrieve the config

$cuBB_SDK/build/cuPHY-CP/cuphyoam/p9_msg_client_grpc_test --phy_id $mplane_id --cmd get --xpath /o-ran-uplane-conf:user-plane-configuration

Log Levels

Feature Status: MFL

Nvlog supports the following log levels: Fatal, Error, Console, Warning, Info, Debug, and Verbose.

A Fatal log message results in process termination. For other log levels, the process continues execution. A typical deployment will send Fatal, Error, and Console levels to stdout. The Console level is for messages that are neither warnings nor errors but should still be printed to stdout.

nvlog

Feature Status: MFL

This yaml container contains parameters related to nvlog configuration, see nvlog_config.yaml

name

Feature Status: MFL

Used to create the shared memory log file. Shared memory handle is /dev/shm/${name}.log and temp logfile is named /tmp/${name}.log

primary

Feature Status: MFL

When several processes log to the same file, set the first process to start as primary and set the others as secondary.

shm_log_level

Feature Status: MFL

Sets the log level threshold for the high performance shared memory logger. Log messages with a level at or below this threshold will be sent to the shared memory logger.

Log levels: 0 - NONE, 1 - FATAL, 2 - ERROR, 3 - CONSOLE, 4 - WARNING, 5 - INFO, 6 - DEBUG, 7 - VERBOSE

Setting the log level to LOG_NONE means no logs will be sent to the shared memory logger.

console_log_level

Feature Status: MFL

Sets the log level threshold for printing to the console. Log messages with a level at or below this threshold will be printed to stdout.
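The threshold rule shared by shm_log_level and console_log_level can be sketched in Python as below. The numeric values are the ones listed for shm_log_level; applying the same numbering to console_log_level is an assumption, and the names `LogLevel` and `is_emitted` are hypothetical and do not come from nvlog.

```python
from enum import IntEnum


class LogLevel(IntEnum):
    """Numeric levels as listed for shm_log_level."""
    NONE = 0
    FATAL = 1
    ERROR = 2
    CONSOLE = 3
    WARNING = 4
    INFO = 5
    DEBUG = 6
    VERBOSE = 7


def is_emitted(message_level: LogLevel, threshold: LogLevel) -> bool:
    """A message passes when its level is at or below the threshold.

    A threshold of NONE lets nothing through, because every real message
    level is at least FATAL (1).
    """
    return LogLevel.FATAL <= message_level <= threshold


if __name__ == "__main__":
    print(is_emitted(LogLevel.ERROR, LogLevel.CONSOLE))
    print(is_emitted(LogLevel.INFO, LogLevel.CONSOLE))
```

With a console threshold of CONSOLE (3), Fatal, Error, and Console messages pass while Warning and the more verbose levels are filtered out, which matches the typical deployment described under Log Levels.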

max_file_size_bits

Feature Status: MFL

Defines the size of the rotating log file /var/log/aerial/${name}.log. Size = 2 ^ bits.

shm_cache_size_bits

Feature Status: MFL

Define the SHM cache file /dev/shm/${name}.log size. Size = 2 ^ bits.
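The Size = 2 ^ bits rule used by max_file_size_bits and shm_cache_size_bits amounts to a left shift. The following Python sketch assumes the resulting size is counted in bytes, which the text does not state explicitly; the function name `size_from_bits` and the sample value 21 are hypothetical.

```python
def size_from_bits(bits: int) -> int:
    """Return the size for a *_size_bits setting (Size = 2 ^ bits)."""
    if bits < 0:
        raise ValueError("bits must be non-negative")
    return 1 << bits


if __name__ == "__main__":
    # Hypothetical setting: 21 bits corresponds to 2097152 (2 MiB if the unit is bytes).
    print(size_from_bits(21))
```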

log_buf_size

Feature Status: MFL

Maximum length of the log string for a single call to the nvlog API.

max_threads

Feature Status: MFL

The maximum total number of threads using nvlog.

save_to_file

Feature Status: MFL

Whether to copy and save the SHM cache log to a rotating log file under the /var/log/aerial/ folder.

cpu_core_id

Feature Status: MFL

CPU core ID for the background log saving thread. -1 means the core is not pinned.

prefix_opts

Feature Status: MFL

bit5 - thread_id

bit4 - sequence number

bit3 - log level

bit2 - module type

bit1 - date

bit0 - time stamp

Refer to nvlog.h for more details.
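A prefix_opts value can be composed and decoded with ordinary bit operations. The Python sketch below uses the bit positions listed above and assumes that a set bit enables the corresponding prefix field; the names `PrefixOpt` and `enabled_fields` are hypothetical, and nvlog.h remains the authoritative definition.

```python
from enum import IntFlag


class PrefixOpt(IntFlag):
    """Bit positions as listed for prefix_opts."""
    TIME_STAMP = 1 << 0
    DATE = 1 << 1
    MODULE_TYPE = 1 << 2
    LOG_LEVEL = 1 << 3
    SEQUENCE_NUMBER = 1 << 4
    THREAD_ID = 1 << 5


def enabled_fields(prefix_opts: int) -> list:
    """Return the names of the prefix fields whose bits are set."""
    return [opt.name for opt in PrefixOpt if prefix_opts & opt]


if __name__ == "__main__":
    value = int(PrefixOpt.TIME_STAMP | PrefixOpt.LOG_LEVEL)
    print(value, enabled_fields(value))
```

For example, enabling only the time stamp (bit0) and the log level (bit3) gives the value 9.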

The OAM Metrics API is used internally by cuPHY-CP components to report metrics (counters, gauges, and histograms). The metrics are exposed via a Prometheus Aerial exporter.

Host Metrics

Feature Status: MFL

Host metrics are provided via the Prometheus node exporter. The node exporter provides many thousands of metrics about the host hardware and OS, including but not limited to:

CPU statistics

Disk statistics

Filesystem statistics

Memory statistics

Network statistics

Please refer to https://github.com/prometheus/node_exporter and https://prometheus.io/docs/guides/node-exporter/ for detailed documentation on the node exporter.

GPU Metrics

Feature Status: MFL

GPU hardware metrics are provided through the GPU Operator via the Prometheus dcgm exporter. The dcgm exporter provides many thousands of metrics about the GPU and PCIe bus connection, including but not limited to:

GPU hardware clock rates

GPU hardware temperatures

GPU hardware power consumption

GPU memory utilization

GPU hardware errors including ECC

PCIe throughput

Please refer to https://github.com/NVIDIA/gpu-operator for details on the GPU operator.

Please refer to https://github.com/NVIDIA/gpu-monitoring-tools for detailed documentation on the dcgm exporter.

An example grafana dashboard is available at https://grafana.com/grafana/dashboards/12239

Aerial Metric Naming Conventions

Feature Status: MFL

In addition to metrics available through the node exporter and dcgm exporter, Aerial exposes several application metrics.

Metric names are per https://prometheus.io/docs/practices/naming/ and will follow the format aerial_<component>_<sub-component>_<metric description>_<units>

Metric types are per https://prometheus.io/docs/concepts/metric_types/

The component and sub-component definitions are in the table below:

| Component | Sub-Component | Description |

|---|---|---|

| cuphycp | | cuPHY Control Plane application |

| | fapi | L2/L1 interface metrics |

| | cplane | Fronthaul C-plane metrics |

| | uplane | Fronthaul U-plane metrics |

| | net | Generic network interface metrics |

| cuphy | | cuPHY L1 library |

| | pbch | Physical Broadcast Channel metrics |

| | pdsch | Physical Downlink Shared Channel metrics |

| | pdcch | Physical Downlink Control Channel metrics |

| | pusch | Physical Uplink Shared Channel metrics |

| | pucch | Physical Uplink Control Channel metrics |

| | prach | Physical Random Access Channel metrics |

For each metric, the description, metric type and metric tags are provided. Tags are a way of providing granularity to metrics without creating new metrics.
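The naming format and the role of tags can be illustrated with a small Python sketch. The helpers `metric_name` and `sample_line` are hypothetical and are not part of the OAM Metrics API; `sample_line` renders one sample in the Prometheus text exposition format, where tags appear as labels, and it does not escape special characters in label values.

```python
def metric_name(component: str, sub_component: str, description: str, units: str = "") -> str:
    """Join the parts as aerial_<component>_<sub-component>_<metric description>_<units>."""
    parts = ["aerial", component, sub_component, description, units]
    # Skip empty parts so a metric without a unit suffix has no trailing underscore.
    return "_".join(p for p in parts if p)


def sample_line(name: str, tags: dict, value) -> str:
    """Render one sample as name{label="value",...} value."""
    labels = ",".join(f'{k}="{v}"' for k, v in sorted(tags.items()))
    return f"{name}{{{labels}}} {value}"


if __name__ == "__main__":
    name = metric_name("cuphycp", "net", "tx_accu_sched_clock_queue_jitter", "ns")
    print(name)
    # The sample value 42 and the cell number are made up for illustration.
    print(sample_line("aerial_cuphycp_slots_total", {"type": "UL", "cell": "1"}, 42))
```

Because the tags are carried as labels, the same metric name covers every cell and direction, and a query can filter or aggregate on the label values.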

Metrics exporter port

Feature Status: MFL

Aerial metrics are exported on port TBD

L2/L1 Interface Metrics

aerial_cuphycp_slots_total

Feature Status: MFL

Description: Counts the total number of processed slots

Metric type: counter

Metric tags:

type: “UL” or “DL”

cell: “cell number”

aerial_cuphycp_fapi_rx_packets

Feature Status: MFL

Description: Counts the total number of messages L1 receives from L2

Metric type: counter

Metric tags:

msg_type: “type of PDU”

cell: “cell number”

aerial_cuphycp_fapi_tx_packets

Feature Status: MFL

Description: Counts the total number of messages L1 transmits to L2

Metric type: counter

Metric tags:

msg_type: “type of PDU”

cell: “cell number”

Fronthaul Interface Metrics

aerial_cuphycp_cplane_tx_packets_total

Feature Status: MFL

Description: Counts the total number of C-plane packets transmitted by L1 over ORAN Fronthaul interface

Metric type: counter

Metric tags:

cell: “cell number”

aerial_cuphycp_cplane_tx_bytes_total

Feature Status: MFL

Description: Counts the total number of C-plane bytes transmitted by L1 over ORAN Fronthaul interface

Metric type: counter

Metric tags:

cell: “cell number”

aerial_cuphycp_uplane_rx_packets_total

Feature Status: MFL

Description: Counts the total number of U-plane packets received by L1 over ORAN Fronthaul interface

Metric type: counter

Metric tags:

cell: “cell number”

aerial_cuphycp_uplane_rx_bytes_total

Feature Status: MFL

Description: Counts the total number of U-plane bytes received by L1 over ORAN Fronthaul interface

Metric type: counter

Metric tags:

cell: “cell number”

aerial_cuphycp_uplane_tx_packets_total

Feature Status: MFL

Description: Counts the total number of U-plane packets transmitted by L1 over ORAN Fronthaul interface

Metric type: counter

Metric tags:

cell: “cell number”

aerial_cuphycp_uplane_tx_bytes_total

Feature Status: MFL

Description: Counts the total number of U-plane bytes transmitted by L1 over ORAN Fronthaul interface

Metric type: counter

Metric tags:

cell: “cell number”

aerial_cuphycp_uplane_lost_prbs_total

Feature Status: MFL

Description: Counts the total number of PRBs expected but not received by L1 over ORAN Fronthaul interface

Metric type: counter

Metric tags:

cell: “cell number”

channel: One of “prach” or “pusch”

NIC Metrics

aerial_cuphycp_net_rx_failed_packets_total

Feature Status: MFL

Description: Counts the total number of erroneous packets received

Metric type: counter

Metric tags:

nic: “nic port BDF address”

aerial_cuphycp_net_rx_nombuf_packets_total

Feature Status: MFL

Description: Counts the total number of receive packets dropped due to the lack of free mbufs

Metric type: Counter

Metric tags:

nic: “nic port BDF address”

aerial_cuphycp_net_rx_dropped_packets_total

Feature Status: MFL

Description: Counts the total number of receive packets dropped by the NIC hardware

Metric type: Counter

Metric tags:

nic: “nic port BDF address”

aerial_cuphycp_net_tx_failed_packets_total

Feature Status: MFL

Description: Counts the total number of instances a packet failed to transmit

Metric type: Counter

Metric tags:

nic: “nic port BDF address”

aerial_cuphycp_net_tx_accu_sched_missed_interrupt_errors_total

Feature Status: MFL

Description: Counts the total number of instances accurate send scheduling missed an interrupt

Metric type: Counter

Metric tags:

nic: “nic port BDF address”

aerial_cuphycp_net_tx_accu_sched_rearm_queue_errors_total

Feature Status: MFL

Description: Counts the total number of accurate send scheduling rearm queue errors

Metric type: Counter

Metric tags:

nic: “nic port BDF address”

aerial_cuphycp_net_tx_accu_sched_clock_queue_errors_total

Feature Status: MFL

Description: Counts the total number of accurate send scheduling clock queue errors

Metric type: Counter

Metric tags:

nic: “nic port BDF address”

aerial_cuphycp_net_tx_accu_sched_timestamp_past_errors_total

Feature Status: MFL

Description: Counts the total number of accurate send scheduling timestamp-in-the-past errors

Metric type: Counter

Metric tags:

nic: “nic port BDF address”

aerial_cuphycp_net_tx_accu_sched_timestamp_future_errors_total

Feature Status: MFL

Description: Counts the total number of accurate send scheduling timestamp-in-the-future errors

Metric type: Counter

Metric tags:

nic: “nic port BDF address”

aerial_cuphycp_net_tx_accu_sched_clock_queue_jitter_ns

Feature Status: MFL

Description: Current measurement of accurate send scheduling clock queue jitter, in units of nanoseconds

Metric type: Gauge

Metric tags:

nic: “nic port BDF address”

Details:

This gauge shows the TX scheduling timestamp jitter, i.e. how far each individual Clock Queue (CQ) completion is from UTC time.

If we set the CQ completion frequency to 2MHz (tx_pp=500), we might see the following completions:

cqe 0 at 0 ns

cqe 1 at 505 ns

cqe 2 at 996 ns

cqe 3 at 1514 ns

…

tx_pp_jitter is the time difference between two consecutive CQ completions
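The completion times listed above can be turned into consecutive intervals with a short Python sketch. It only illustrates the arithmetic on the sample timestamps: the function names are hypothetical, the 500 ns nominal period follows from the 2MHz completion frequency, and how the driver derives the reported gauge value from these intervals is not specified here.

```python
def consecutive_intervals(cqe_times_ns):
    """Time difference between each pair of consecutive CQ completions."""
    return [b - a for a, b in zip(cqe_times_ns, cqe_times_ns[1:])]


def deviation_from_period(cqe_times_ns, period_ns):
    """How far each interval is from the nominal completion period."""
    return [dt - period_ns for dt in consecutive_intervals(cqe_times_ns)]


if __name__ == "__main__":
    cqe_times_ns = [0, 505, 996, 1514]  # sample completions from the text
    print(consecutive_intervals(cqe_times_ns))
    print(deviation_from_period(cqe_times_ns, 500))
```

For the sample completions the intervals are 505, 491, and 518 ns, that is, 5, -9, and 18 ns away from the nominal 500 ns period.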

aerial_cuphycp_net_tx_accu_sched_clock_queue_wander_ns

Feature Status: MFL

Description: Current measurement of the divergence of Clock Queue (CQ) completions from UTC time over a longer time period (~8s)

Metric type: Gauge

Metric tags:

nic: “nic port BDF address”

Application Performance Metrics

aerial_cuphycp_slot_processing_duration_us

Feature Status: MFL

Description: Counts the total number of slots with GPU processing duration in each 250us-wide histogram bin

Metric type: Histogram

Metric tags:

cell: “cell number”

channel: one of “pbch”, “pdcch”, “pdsch”, “prach”, or “pusch”

le: histogram less-than-or-equal-to 250us-wide histogram bins, for 250, 500, …, 2000, +inf bins.
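In the Prometheus histogram type, buckets are cumulative: the bucket with a given le value counts every observation less than or equal to that bound. The Python sketch below shows how a set of slot durations would be distributed over the le bounds named above. It assumes the elided bounds are evenly spaced at 250us, as the 250us-wide bin description suggests; the function name and the sample durations are hypothetical.

```python
import math

# Upper bounds in microseconds: 250, 500, ..., 2000, then +inf.
BUCKET_BOUNDS_US = [250 * i for i in range(1, 9)] + [math.inf]


def cumulative_buckets(durations_us):
    """Count, for each bound, the observations less than or equal to it."""
    return {le: sum(1 for d in durations_us if d <= le) for le in BUCKET_BOUNDS_US}


if __name__ == "__main__":
    # Made-up slot processing durations in microseconds.
    samples = [120, 260, 499, 1900, 2500]
    for le, count in cumulative_buckets(samples).items():
        print(le, count)
```

The count for a single 250us-wide bin is obtained by subtracting the previous bucket's count from the current one, and the +inf bucket always equals the total number of observations.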

aerial_cuphycp_slot_processing_duration_us

Feature Status: Roadmap

Description: Counts the total number of slots with GPU processing duration in each 250us-wide histogram bin

Metric type: Histogram

Metric tags:

cell: “cell number”

channel: “pucch”

le: histogram less-than-or-equal-to 250us-wide histogram bins, for 250, 500, …, 2000, +inf bins.

PUSCH Metrics

aerial_cuphycp_slot_pusch_processing_duration_us

Feature Status: MFL

Description: Counts the total number of PUSCH slots with GPU processing duration in each 250us-wide histogram bin

Metric type: Histogram

Metric tags:

cell: “cell number”

le: histogram less-than-or-equal-to 250us-wide histogram bins, range 0 to 2000us.

aerial_cuphycp_pusch_rx_tb_bytes_total

Feature Status: MFL

Description: Counts the total number of transport block bytes received in the PUSCH channel

Metric type: Counter

Metric tags:

cell: “cell number”

aerial_cuphycp_pusch_rx_tb_total

Feature Status: MFL

Description: Counts the total number of transport blocks received in the PUSCH channel

Metric type: Counter

Metric tags:

cell: “cell number”

aerial_cuphycp_pusch_rx_tb_crc_error_total

Feature Status: MFL

Description: Counts the total number of transport blocks received with CRC errors in the PUSCH channel

Metric type: Counter

Metric tags:

cell: “cell number”

aerial_cuphycp_pusch_nrofuesperslot

Feature Status: MFL

Description: Counts the total number of UEs processed in each slot, per histogram bin, in the PUSCH channel

Metric type: Histogram

Metric tags:

cell: “cell number”

le: Histogram bin less-than-or-equal-to for 2, 4, …, 24, +inf bins.

PRACH Metrics

aerial_cuphy_prach_rx_preambles_total

Feature Status: MFL

Description: Counts the total number of detected preambles in PRACH channel

Metric type: Counter

Metric tags:

cell: “cell number”

PDSCH Metrics

aerial_cuphycp_slot_pdsch_processing_duration_us

Feature Status: MFL

Description: Counts the total number of PDSCH slots with GPU processing duration in each 250us-wide histogram bin

Metric type: Histogram

Metric tags:

cell: “cell number”

le: histogram less-than-or-equal-to 250us-wide histogram bins, range 0 to 2000us.

aerial_cuphy_pdsch_tx_tb_bytes_total

Feature Status: MFL

Description: Counts the total number of transport block bytes transmitted in the PDSCH channel

Metric type: Counter

Metric tags:

cell: “cell number”

aerial_cuphy_pdsch_tx_tb_total

Feature Status: MFL

Description: Counts the total number of transport blocks transmitted in the PDSCH channel

Metric type: Counter

Metric tags:

cell: “cell number”

aerial_cuphycp_pdsch_nrofuesperslot

Feature Status: MFL

Description: Counts the total number of UEs processed in each slot, per histogram bin, in the PDSCH channel

Metric type: Histogram

Metric tags:

cell: “cell number”

le: Histogram bin less-than-or-equal-to for 2, 4, …, 24, +inf bins.

Example cuphycontroller config

# Copyright (c) 2017-2020, NVIDIA CORPORATION. All rights reserved.

#

# Redistribution and use in source and binary forms, with or without modification, are permitted

# provided that the following conditions are met:

# * Redistributions of source code must retain the above copyright notice, this list of

# conditions and the following disclaimer.

# * Redistributions in binary form must reproduce the above copyright notice, this list of

# conditions and the following disclaimer in the documentation and/or other materials

# provided with the distribution.

# * Neither the name of the NVIDIA CORPORATION nor the names of its contributors may be used

# to endorse or promote products derived from this software without specific prior written

# permission.

#

# THIS SOFTWARE IS PROVIDED BY THE COPYRIGHT HOLDERS AND CONTRIBUTORS "AS IS" AND ANY EXPRESS OR

# IMPLIED WARRANTIES, INCLUDING, BUT NOT LIMITED TO, THE IMPLIED WARRANTIES OF MERCHANTABILITY AND

# FITNESS FOR A PARTICULAR PURPOSE ARE DISCLAIMED. IN NO EVENT SHALL NVIDIA CORPORATION BE LIABLE

# FOR ANY DIRECT, INDIRECT, INCIDENTAL, SPECIAL, EXEMPLARY, OR CONSEQUENTIAL DAMAGES (INCLUDING,

# BUT NOT LIMITED TO, PROCUREMENT OF SUBSTITUTE GOODS OR SERVICES; LOSS OF USE, DATA, OR PROFITS;

# OR BUSINESS INTERRUPTION) HOWEVER CAUSED AND ON ANY THEORY OF LIABILITY, WHETHER IN CONTRACT,

# STRICT LIABILITY, OR TORT (INCLUDING NEGLIGENCE OR OTHERWISE) ARISING IN ANY WAY OUT OF THE USE

# OF THIS SOFTWARE, EVEN IF ADVISED OF THE POSSIBILITY OF SUCH DAMAGE.

---

l2adapter_filename: l2_adapter_config_F08_R750.yaml

aerial_metrics_backend_address: 127.0.0.1:8081

# CPU core shared by all low-priority threads

low_priority_core: 23

nic_tput_alert_threshold_mbps: 85000

cuphydriver_config:

standalone: 0

validation: 0

num_slots: 8

log_level: DBG

profiler_sec: 0

dpdk_thread: 23

dpdk_verbose_logs: 0

accu_tx_sched_res_ns: 500

accu_tx_sched_disable: 0

fh_stats_dump_cpu_core: 23

pdump_client_thread: -1

mps_sm_pusch: 66

mps_sm_pucch: 16

mps_sm_prach: 2

mps_sm_ul_order: 16

mps_sm_pdsch: 102

mps_sm_pdcch: 10

mps_sm_pbch: 2

mps_sm_gpu_comms: 16

mps_sm_srs: 16

pdsch_fallback: 0

dpdk_file_prefix: cuphycontroller

nics:

- nic: 0000:cc:00.0

mtu: 1514

cpu_mbufs: 196608

uplane_tx_handles: 64

txq_count: 60

rxq_count: 20

txq_size: 8192

rxq_size: 16384

gpu: 0

gpus:

- 0

# Set GPUID to the GPU sharing the PCIe switch as NIC

# run nvidia-smi topo -m to find out which GPU

# Non-Hyperthreaded

workers_ul:

- 5

- 7

workers_dl:

- 11

- 13

- 15

debug_worker: -1

workers_sched_priority: 95

prometheus_thread: -1

start_section_id_srs: 3072

start_section_id_prach: 2048

enable_ul_cuphy_graphs: 1

enable_dl_cuphy_graphs: 1

ul_order_timeout_cpu_ns: 8000000

ul_order_timeout_log_interval_ns: 1000000000

ul_order_timeout_gpu_ns: 3000000

ul_order_timeout_gpu_log_enable: 0

cplane_disable: 0

gpu_init_comms_dl: 1

cell_group: 1

cell_group_num: 16

fix_beta_dl: 1

pusch_sinr: 2

pusch_rssi: 1

pusch_tdi: 1

pusch_cfo: 1

pusch_dftsofdm: 0

pusch_to: 1

pusch_select_eqcoeffalgo: 3

pusch_select_chestalgo: 1

pusch_tbsizecheck: 1

pusch_subSlotProcEn: 0

pusch_deviceGraphLaunchEn: 1

pusch_waitTimeOutPreEarlyHarqUs: 5000

pusch_waitTimeOutPostEarlyHarqUs: 5000

puxch_polarDcdrListSz: 8

enable_cpu_task_tracing: 0

enable_prepare_tracing: 0

enable_dl_cqe_tracing: 0

ul_rx_pkt_tracing_level: 0

enable_h2d_copy_thread: 1

h2d_copy_thread_cpu_affinity : 41

h2d_copy_thread_sched_priority : 95 # 0 = SCHED_OTHER; >0 = use this value as the priority

split_ul_cuda_streams: 0 # 1=Put UL slot 4 and slot 5 on different streams for DDDSUUDDDD pattern

serialize_pucch_pusch: 0 # 1=Force serialization of PUSCH/PUCCH

# Note: with early HARQ (EH), the order is PUSCH EH -> PUCCH -> PUSCH non-EH

# without early HARQ, the order is PUCCH -> all PUSCH processing

aggr_obj_non_avail_th: 5 # Threshold for consecutive non-availability of Aggregated objects(UL/DL) or DL/UL buffers

dl_wait_th_ns:

- 500000 #H2D copy wait threshold

- 4000000 #cuPHY DL channel wait threshold

sendCPlane_timing_error_th_ns : 50000

pusch_forcedNumCsi2Bits: 0

mMIMO_enable: 0

enable_srs: 0

ue_mode: 0

cells:

- name: O-RU 0

cell_id: 1

ru_type: 3

# Set to 00:00:00:00:00:00 to use the MAC address of the NIC port in use

src_mac_addr: 00:00:00:00:00:00

dst_mac_addr: 20:04:9B:9E:27:A3

nic: 0000:cc:00.0

vlan: 2

pcp: 7

txq_count_uplane: 1

eAxC_id_ssb_pbch: [8, 0, 1, 2]

eAxC_id_pdcch: [8, 0, 1, 2]

eAxC_id_pdsch: [8, 0, 1, 2]

eAxC_id_csirs: [8, 0, 1, 2]

eAxC_id_pusch: [8, 0, 1, 2]

eAxC_id_pucch: [8, 0, 1, 2]

eAxC_id_srs: [8, 0, 1, 2]

eAxC_id_prach: [15, 7, 0, 1]

compression_bits: 9

decompression_bits: 9

section_3_time_offset: 484

fs_offset_dl: 0

exponent_dl: 4

ref_dl: 0

fs_offset_ul: 0

exponent_ul: 4

max_amp_ul: 65504

mu: 1

T1a_max_up_ns: 345000

T1a_max_cp_ul_ns: 336000

Ta4_min_ns: 50000

Ta4_max_ns: 331000

Tcp_adv_dl_ns: 125000

ul_u_plane_tx_offset_ns: 280000

fh_len_range: 0

pusch_prb_stride: 273

prach_prb_stride: 12

srs_prb_stride: 12

pusch_ldpc_max_num_itr_algo_type: 1

pusch_fixed_max_num_ldpc_itrs: 10

pusch_ldpc_n_iterations: 7

pusch_ldpc_early_termination: 0

pusch_ldpc_algo_index: 0

pusch_ldpc_flags: 2

pusch_ldpc_use_half: 1

pusch_nMaxPrb: 273

ul_gain_calibration: 48.68

lower_guard_bw: 845

tv_pusch: cuPhyChEstCoeffs.h5

...
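The per-cell timing windows and the cuphydriver timeouts in the example above are all given in nanoseconds, which makes them hard to compare at a glance. As a rough aid, the sketch below converts a few of those values into microseconds and slot counts. It assumes the standard 5G NR slot duration of 1 ms / 2^mu (500 us for mu: 1). The helper names slot_duration_ns and describe are hypothetical and are not part of cuphycontroller.

```python
# Values copied from the example cuphycontroller yaml above (all in nanoseconds).
MU = 1
TIMINGS_NS = {
    "T1a_max_up_ns": 345000,
    "T1a_max_cp_ul_ns": 336000,
    "Ta4_min_ns": 50000,
    "Ta4_max_ns": 331000,
    "Tcp_adv_dl_ns": 125000,
    "ul_u_plane_tx_offset_ns": 280000,
    "ul_order_timeout_gpu_ns": 3000000,
    "ul_order_timeout_cpu_ns": 8000000,
}


def slot_duration_ns(mu: int) -> int:
    """Slot duration for numerology mu: 1 ms divided by 2**mu (assumed NR numerology)."""
    return 1_000_000 // (2 ** mu)


def describe(name: str, value_ns: int, mu: int) -> str:
    """Format one timing value in microseconds and in slots (hypothetical helper)."""
    slots = value_ns / slot_duration_ns(mu)
    return f"{name}: {value_ns / 1000:.0f} us = {slots:.2f} slots"


if __name__ == "__main__":
    for key, ns in TIMINGS_NS.items():
        print(describe(key, ns, MU))
```

With mu: 1, ul_order_timeout_cpu_ns: 8000000 works out to 16 slots and ul_order_timeout_gpu_ns: 3000000 to 6 slots.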

Example l2_adapter_config yaml file

# Copyright (c) 2017-2020, NVIDIA CORPORATION. All rights reserved.

#

# Redistribution and use in source and binary forms, with or without modification, are permitted

# provided that the following conditions are met:

# * Redistributions of source code must retain the above copyright notice, this list of

# conditions and the following disclaimer.

# * Redistributions in binary form must reproduce the above copyright notice, this list of

# conditions and the following disclaimer in the documentation and/or other materials

# provided with the distribution.

# * Neither the name of the NVIDIA CORPORATION nor the names of its contributors may be used

# to endorse or promote products derived from this software without specific prior written

# permission.

#

# THIS SOFTWARE IS PROVIDED BY THE COPYRIGHT HOLDERS AND CONTRIBUTORS "AS IS" AND ANY EXPRESS OR

# IMPLIED WARRANTIES, INCLUDING, BUT NOT LIMITED TO, THE IMPLIED WARRANTIES OF MERCHANTABILITY AND

# FITNESS FOR A PARTICULAR PURPOSE ARE DISCLAIMED. IN NO EVENT SHALL NVIDIA CORPORATION BE LIABLE

# FOR ANY DIRECT, INDIRECT, INCIDENTAL, SPECIAL, EXEMPLARY, OR CONSEQUENTIAL DAMAGES (INCLUDING,

# BUT NOT LIMITED TO, PROCUREMENT OF SUBSTITUTE GOODS OR SERVICES; LOSS OF USE, DATA, OR PROFITS;

# OR BUSINESS INTERRUPTION) HOWEVER CAUSED AND ON ANY THEORY OF LIABILITY, WHETHER IN CONTRACT,

# STRICT LIABILITY, OR TORT (INCLUDING NEGLIGENCE OR OTHERWISE) ARISING IN ANY WAY OUT OF THE USE

# OF THIS SOFTWARE, EVEN IF ADVISED OF THE POSSIBILITY OF SUCH DAMAGE.

---

#gnb_module

msg_type: scf_5g_fapi

phy_class: scf_5g_fapi

slot_advance: 3

# tick_generator_mode: 0 - poll + sleep; 1 - sleep; 2 - timer_fd

tick_generator_mode: 1

# Maximum allowed latency of SLOT FAPI messages sent from L2 to L1. Unit: slot

allowed_fapi_latency: 1

# Allowed tick interval error. Unit: us

allowed_tick_error: 10

timer_thread_config:

name: timer_thread

cpu_affinity: 29

sched_priority: 99

message_thread_config:

name: msg_processing

#core assignment

cpu_affinity: 29

# thread priority

sched_priority: 95

# Lowest TTI for Ticking

mu_highest: 1

dl_tb_loc: 1

enableTickDynamicSfnSlot: 0

staticPucchSlotNum: 0

staticPuschSlotNum: 0

staticPdcchSlotNum: 0

staticPdschSlotNum: 0

staticCsiRsSlotNum: 0

staticSsbPcid: -1

staticSsbSFN: -1

cell_group: 1

enable_precoding: 1

enable_beam_forming: 1

prepone_h2d_copy: 1

pucch_dtx_thresholds: [-100.0, -100.0, 1.0, 1.0, -100.0]

pusch_dtx_thresholds: 1.0

instances:

# PHY 0

-

name: scf_gnb_configure_module_0_instance_0

-

name: scf_gnb_configure_module_0_instance_1

-

name: scf_gnb_configure_module_0_instance_2

-

name: scf_gnb_configure_module_0_instance_3

-

name: scf_gnb_configure_module_0_instance_4

-

name: scf_gnb_configure_module_0_instance_5

-

name: scf_gnb_configure_module_0_instance_6

-

name: scf_gnb_configure_module_0_instance_7

-

name: scf_gnb_configure_module_0_instance_8

-

name: scf_gnb_configure_module_0_instance_9

-

name: scf_gnb_configure_module_0_instance_10

-

name: scf_gnb_configure_module_0_instance_11

-

name: scf_gnb_configure_module_0_instance_12

-

name: scf_gnb_configure_module_0_instance_13

-

name: scf_gnb_configure_module_0_instance_14

-

name: scf_gnb_configure_module_0_instance_15

-

name: scf_gnb_configure_module_0_instance_16

-

name: scf_gnb_configure_module_0_instance_17

-

name: scf_gnb_configure_module_0_instance_18

-

name: scf_gnb_configure_module_0_instance_19

# Dedicated yaml config file for nvipc. Example: nvipc_multi_instances.yaml

nvipc_config_file: null

# Transport settings for nvIPC

transport:

type: shm

udp_config:

local_port: 38556

remort_port: 38555

shm_config:

primary: 1

prefix: nvipc

cuda_device_id: 0

ring_len: 8192

mempool_size:

cpu_msg:

buf_size: 8192

pool_len: 4096

cpu_data:

buf_size: 576000

pool_len: 1024

cuda_data:

buf_size: 307200

pool_len: 0

gpu_data:

buf_size: 576000

pool_len: 0

dpdk_config:

primary: 1

prefix: nvipc

local_nic_pci: 0000:b5:00.0

peer_nic_mac: 00:00:00:00:00:00

cuda_device_id: 0

need_eal_init: 0

lcore_id: 11

mempool_size:

cpu_msg:

buf_size: 8192

pool_len: 4096

cpu_data:

buf_size: 576000

pool_len: 1024

cuda_data:

buf_size: 307200

pool_len: 0

app_config:

grpc_forward: 0

debug_timing: 0

pcap_enable: 0

pcap_cpu_core: 17 # CPU core of background pcap log save thread

pcap_cache_size_bits: 29 # 2^29 = 512MB, size of /dev/shm/${prefix}_pcap

pcap_file_size_bits: 31 # 2^31 = 2GB, max size of /dev/shm/${prefix}_pcap

pcap_max_data_size: 8000 # Max DL/UL FAPI data size to capture, to reduce pcap size.

...
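The mempool_size and pcap_*_size_bits entries in the example above determine how much memory the nvIPC transport may use. The sketch below works through the arithmetic for the shm_config values. It assumes that each pool holds pool_len buffers of buf_size bytes, so the footprint is their product; this is an interpretation of the field names, not something the configuration file states. The helper names pool_bytes and size_from_bits are hypothetical.

```python
# Values copied from shm_config.mempool_size and app_config in the example above.
MEMPOOLS = {
    "cpu_msg": {"buf_size": 8192, "pool_len": 4096},
    "cpu_data": {"buf_size": 576000, "pool_len": 1024},
    "cuda_data": {"buf_size": 307200, "pool_len": 0},
    "gpu_data": {"buf_size": 576000, "pool_len": 0},
}
PCAP_CACHE_SIZE_BITS = 29
PCAP_FILE_SIZE_BITS = 31

MIB = 1024 * 1024


def pool_bytes(pool: dict) -> int:
    """Assumed footprint of one pool: buf_size bytes per buffer times pool_len buffers."""
    return pool["buf_size"] * pool["pool_len"]


def size_from_bits(bits: int) -> int:
    """Size in bytes encoded as a power of two, as in the pcap_*_size_bits comments."""
    return 1 << bits


if __name__ == "__main__":
    for name, pool in MEMPOOLS.items():
        print(f"{name}: {pool_bytes(pool) / MIB:.1f} MiB")
    print(f"pcap cache: {size_from_bits(PCAP_CACHE_SIZE_BITS) // MIB} MiB")
    print(f"pcap file:  {size_from_bits(PCAP_FILE_SIZE_BITS) // MIB} MiB")
```

Under that assumption, cpu_msg takes 32 MiB and cpu_data 562.5 MiB, while the pools with pool_len: 0 take nothing. The pcap values match the inline comments: 2^29 bytes is 512 MiB and 2^31 bytes is 2048 MiB (2 GiB).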

Example ru-emulator configuration file

---

ru_emulator:

core_list: 1-19

# PCI Address of NIC interface used

nic_interface: b5:00.1

# MAC address of cuPHYController port in use

peerethaddr: 0c:42:a1:d1:d0:a1

# VLAN agreed upon with DU

vlan: 2

port: 0

# DPDK Configs

dpdk_burst: 32

dpdk_mbufs: 65536