Jetson Platform Services#

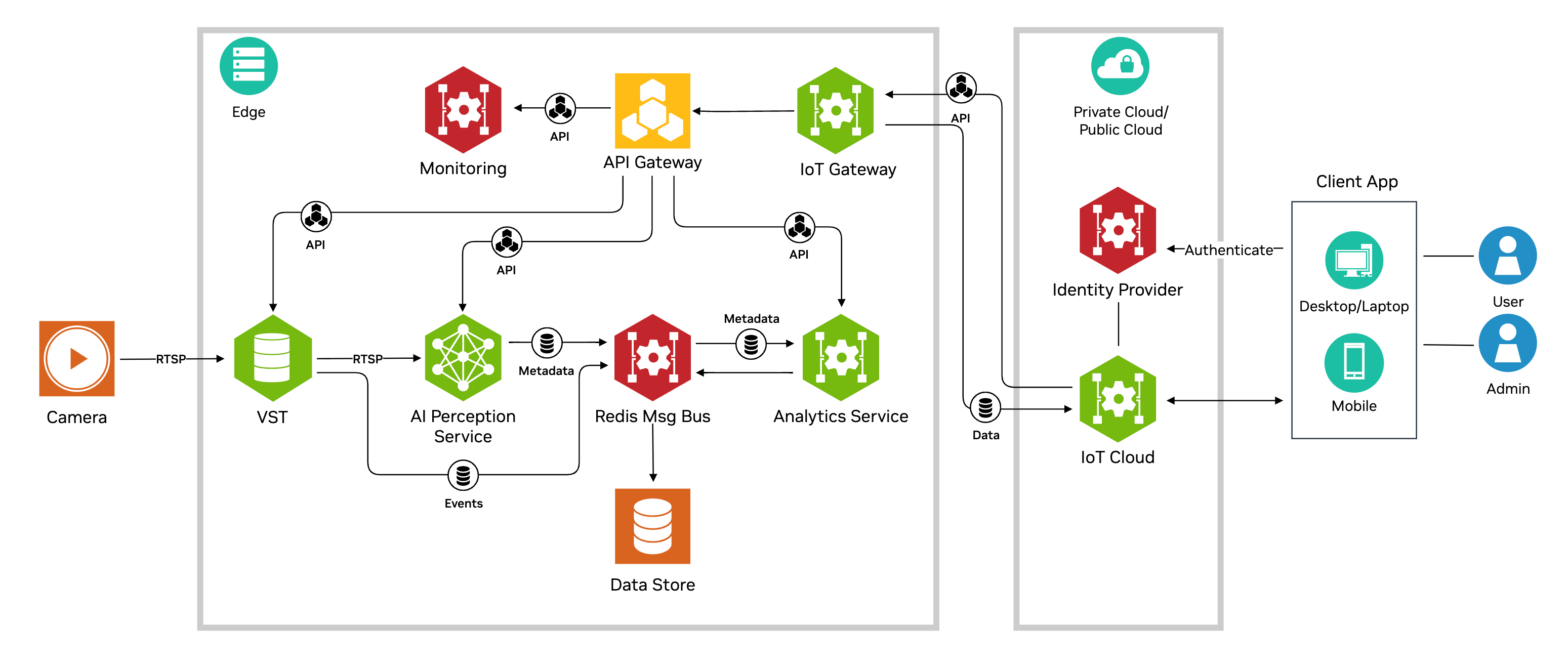

Jetson Platform Services simplify the development, deployment, and management of edge AI applications on NVIDIA Jetson. They provide a modular, extensible architecture that lets developers distill large, complex applications into smaller microservices with APIs for integration into other apps and services. At the core is a collection of AI services leveraging generative AI, deep learning, and analytics, which provide state-of-the-art capabilities including video analytics, video understanding and summarization, text-based prompting, zero-shot detection, and spatio-temporal analysis of object movement. Combined with existing pieces of the Metropolis ecosystem such as Video Storage Toolkit (VST) and DeepStream, these services provide building blocks for quickly assembling fully functional systems for vision and other edge AI applications.

Another objective is system maturity: the platform enables the functionality needed for productization, including security, monitoring and alerts, IoT/API gateways, and deployment, all available out of the box for developers to leverage.

Software Stack#

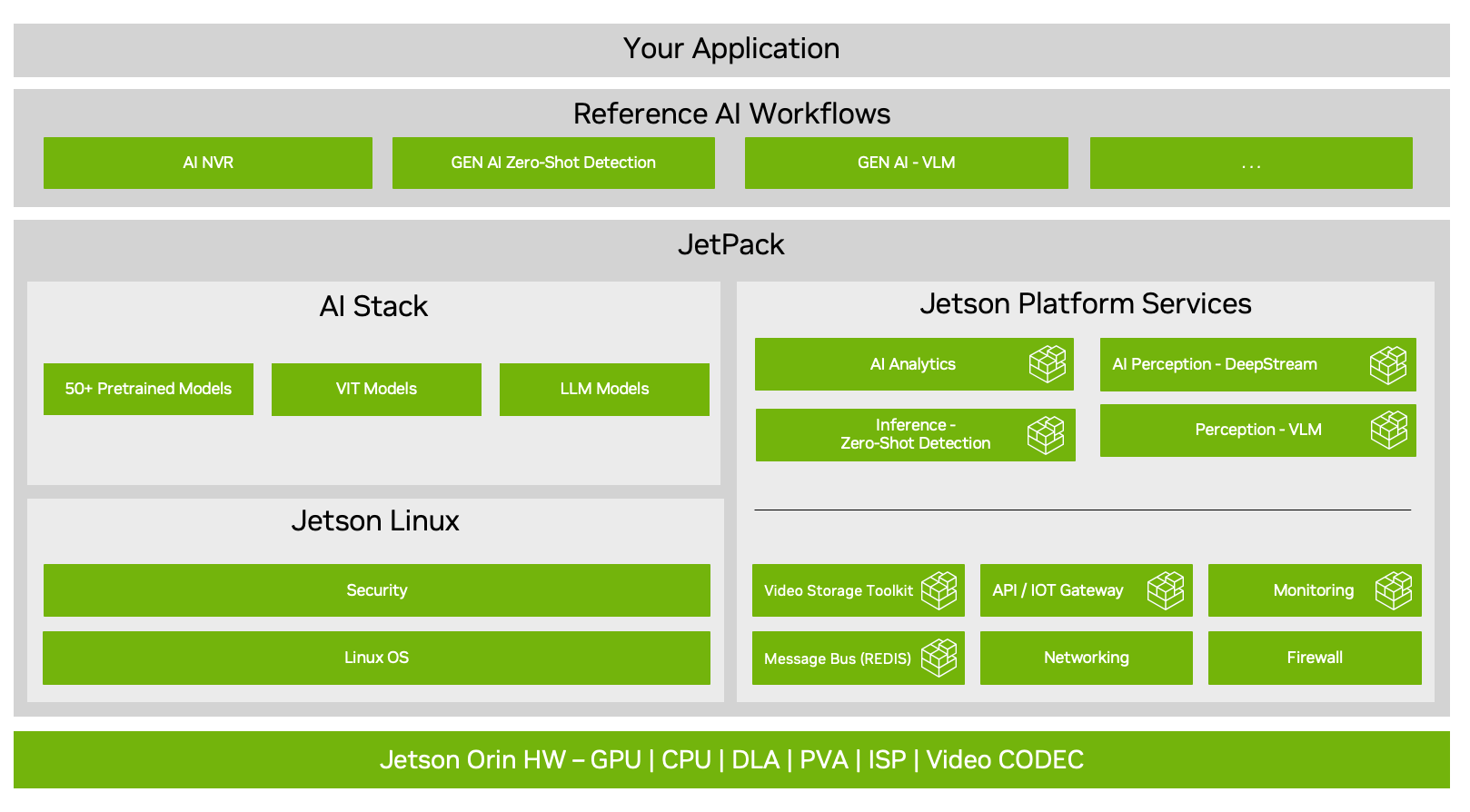

The accompanying diagram shows the Jetson software stack, including the new Jetson Platform Services layer. The stack is based on JetPack 6.1 GA and is supported on several Jetson Orin variants: Orin AGX, NX16, NX8, and Nano 8GB. It comprises a suite of pre-built, customizable services divided into two main classes: (a) AI Services and (b) Foundation Services. Reference AI workflows, included with the release, demonstrate how end-to-end applications can be assembled from the Platform Services.

AI Services#

AI Services provide out-of-the-box, optimized video processing and analytics capabilities that combine generative AI, deep learning, computer vision (tracking), and streaming analytics techniques, all exposed through standardized APIs. The services are containerized and can be integrated into a user application using a deployment framework such as Docker Compose, as illustrated by the reference workflows.

The AI Services are summarized in the table below:

| Name | Description | Features | Orin Platforms Supported |
|---|---|---|---|
| DeepStream Perception Service | Optimized video analytics pipelines implemented using NVIDIA DeepStream SDK | Multi-stream object detection and tracking using PeopleNet or YOLO (v8s) models | AGX, NX16, NX8, Nano |
| VLM Inference Service | Streaming Inference service using the VILA Video Language Model (VLM) based on generative AI | video question answering (VQA), video summarization and prompt based alerts | AGX, NX16, Nano (experimental) |
| Zero Shot Detection Inference Service | open world object detection | object detection for large collection of object classes without any prior training using the Nano OWL generative AI model customized for Jetson | AGX, NX16, Nano (experimental) |
| Grounding DINO (GDINO) | open vocabulary object detection | object detection supporting limitless category of objects using generative AI | AGX |
| VLM Video Summarization | API based video summarization | accurate, generalizable technique based on natural language interfaces for summarizing video files using video language models (VLMs) | AGX |
| Analytics AI Service | spatio temporal analysis of object movement in spaces | use of streaming analytics enabling use cases including line crossing, region of interest counting, trajectories and heatmaps | AGX, NX16, NX8, Nano |
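To make the line crossing use case in the table concrete, here is a minimal geometric sketch in Python. It is an illustration only, not the Analytics AI Service's implementation: the function names are hypothetical, and it assumes each tracked object yields a list of `(x, y)` positions in image coordinates.

```python
def side(line_start, line_end, point):
    """Cross-product sign: positive on one side of the line, negative on the other, 0 on it."""
    (x1, y1), (x2, y2), (px, py) = line_start, line_end, point
    return (x2 - x1) * (py - y1) - (y2 - y1) * (px - x1)


def crosses(line_start, line_end, prev, curr):
    """True when the move prev -> curr strictly crosses the tripwire segment."""
    d1 = side(line_start, line_end, prev)
    d2 = side(line_start, line_end, curr)
    d3 = side(prev, curr, line_start)
    d4 = side(prev, curr, line_end)
    # The endpoints of each segment must lie on opposite sides of the other.
    return d1 * d2 < 0 and d3 * d4 < 0


def count_crossings(line_start, line_end, trajectory):
    """Count tripwire crossings along one object's list of (x, y) positions."""
    return sum(
        crosses(line_start, line_end, a, b)
        for a, b in zip(trajectory, trajectory[1:])
    )
```

A production analytics service would typically also report crossing direction and handle points that land exactly on the line; this sketch ignores both for brevity.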

At the core of AI Services is the use of REST APIs, which provide a uniform way to configure the services and retrieve the results associated with them. Developers create end applications that address real-world use cases by building on top of these APIs. AI Service APIs can optionally be exposed outside the system through the API Gateway (a.k.a. Ingress) platform service (see below), which can be configured and used out of the box.
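As a rough illustration of this configure-then-retrieve pattern, the following Python sketch builds REST requests using only the standard library. The gateway address and the API paths are hypothetical placeholders, not the documented endpoints of any service; substitute the values from the relevant service's API reference.

```python
import json
from urllib.parse import urljoin
from urllib.request import Request, urlopen

# Assumption: placeholder address for the API Gateway on the Jetson device.
GATEWAY = "http://jetson.local:8080/"


def build_request(path, payload=None):
    """Return a GET request, or a POST with a JSON body when payload is given."""
    url = urljoin(GATEWAY, path)
    if payload is None:
        return Request(url, method="GET")
    body = json.dumps(payload).encode("utf-8")
    return Request(
        url,
        data=body,
        method="POST",
        headers={"Content-Type": "application/json"},
    )


def call(request, timeout=5.0):
    """Send the request and decode the JSON response body."""
    with urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


# Hypothetical usage: push a configuration, then read back results.
# call(build_request("svc/api/config", {"threshold": 0.5}))
# results = call(build_request("svc/api/results"))
```

Separating request construction from sending keeps the client logic easy to inspect without a device on the network.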

Foundation Services#

Foundation Services are domain-agnostic services that provide commonly needed system functionality, including:

Video Storage Toolkit: Enables camera discovery, video storage, hardware accelerated video decode and streaming

Redis (Message Bus): Provides a shared message bus and storage for microservices

Ingress (API Gateway): Enables a standard mechanism to present microservice APIs to clients

Storage: Provisions external storage and allocates to various microservices

Networking: Manages network interfaces for connecting to IP cameras

Monitoring: Visualization of system utilization and application metrics using dashboards

Firewall: Controls ingress and egress network traffic to the system

Reference Workflows#

Applications are built by configuring and instantiating the AI Services, which leverage the underlying Foundation Services, along with use-case-specific logic that interacts with the microservices through their APIs. REST APIs allow clients to interact with systems remotely and securely over HTTP. Jetson services and application logic run as containers on the system and are deployed as a bundle using Docker Compose. Containerized deployment allows software to be packaged and deployed independently and remotely.

This release provides the reference workflows listed below, which combine several pieces of the architecture to support end-to-end applications:

AI-enabled network video recorder (AI-NVR): performs real-time analytics on multiple streams using a combination of deep learning, computer vision, and streaming analytics, in addition to supporting traditional NVR capabilities such as stream capture, storage, and video streaming.

Video Insights Agent (VIA): performs real-time video summarization using generative AI.

These workflows integrate several microservices with application logic through containers as “app bundles”, which are deployed using the docker-compose utility.
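The deployment step can be scripted. The sketch below assumes a compose file for an app bundle already exists; the file name is a hypothetical placeholder, and the helper names are illustrative. It uses the standard `-f` and `up -d` options, which are common to both the `docker compose` plugin and the standalone `docker-compose` utility.

```python
import subprocess


def compose_up_command(compose_file, use_plugin=True):
    """Build the command line that starts a bundle in detached mode."""
    base = ["docker", "compose"] if use_plugin else ["docker-compose"]
    return base + ["-f", compose_file, "up", "-d"]


def deploy(compose_file, use_plugin=True):
    """Start the bundle; raises CalledProcessError if the command fails."""
    subprocess.run(compose_up_command(compose_file, use_plugin), check=True)


# Hypothetical usage on the device:
# deploy("compose.yaml", use_plugin=False)
```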

Reference Cloud#

Reference Cloud, which was previously included in Jetson Platform Services, is not part of the v2.0 release. Reference cloud deployments from prior releases may also be incompatible with the device software in the v2.0 release.

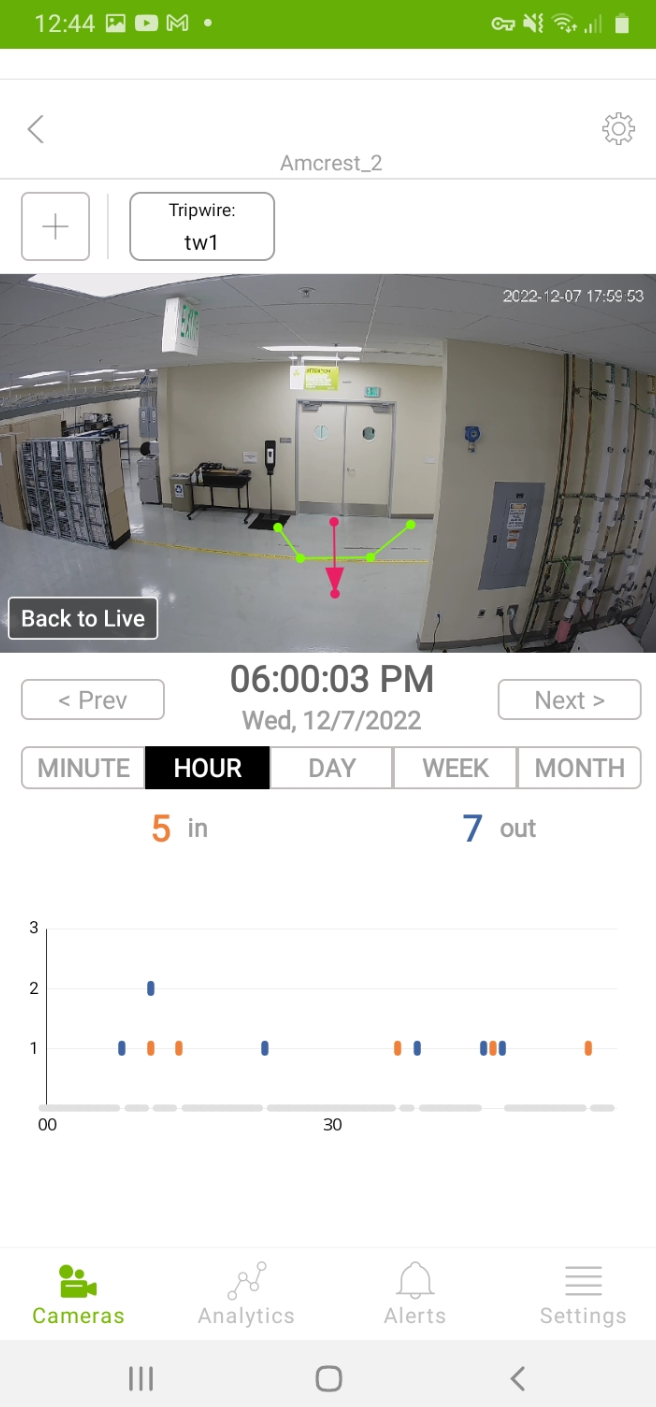

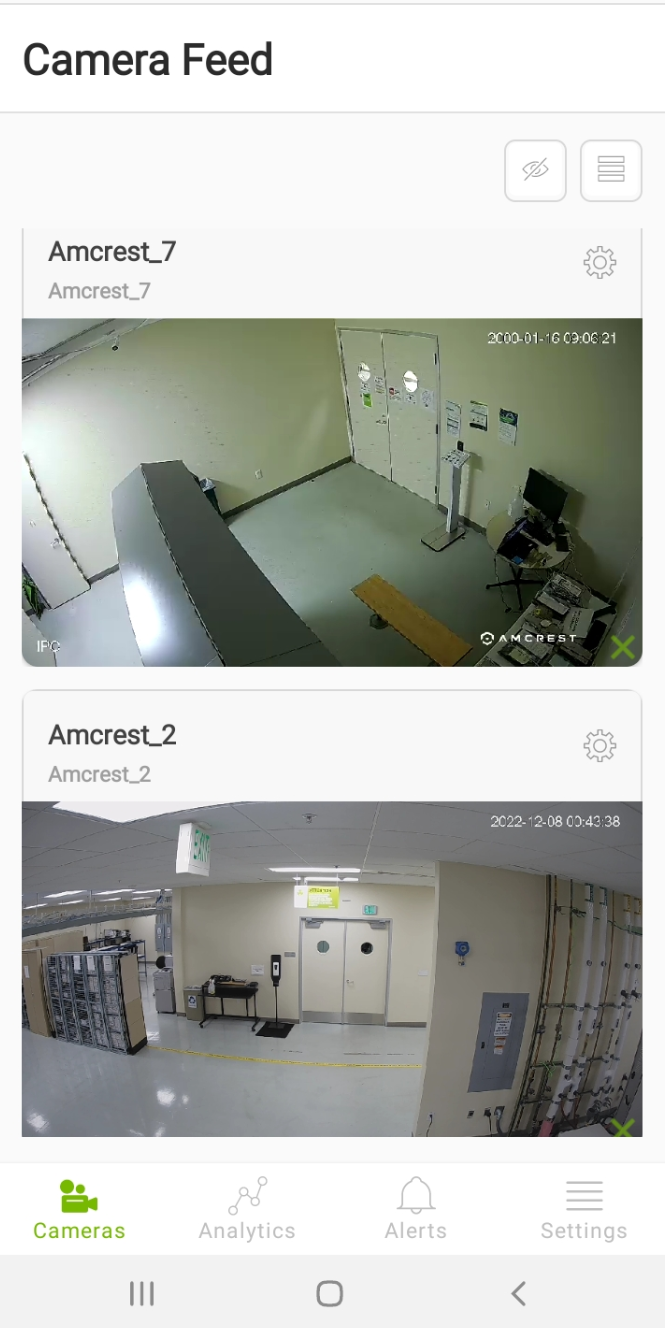

Reference Mobile Application#

The release includes a reference Android application that shows how an end-user client can interact with a system running both the AI-NVR and VIA workflows. The app demonstrates remote access to capabilities of the underlying software through APIs, including: live and recorded video streaming through WebRTC, supported by VST; analytics creation, visualization, and alerts, supported by the analytics inference service (see the accompanying images); and chat-with-video capability, supported by VIA. The app interacts with the system solely through REST APIs.

Disaggregation#

In its canonical form, the software stack has both AI Services and Foundation Services installed in full to provide complete functionality. The general philosophy, however, is that pieces of the stack can be installed in a disaggregated, piecemeal manner as specific use cases require. Developers choose microservices based on the functionality relevant to their use case and on whether they bring custom functionality of their own. For instance, developers bringing their own non-DeepStream inference can use VST to ingest camera video and feed the resulting RTSP streams into their custom AI software.

Documentation Organization#

The rest of the documentation is organized as follows. We recommend reading it in this order.

The Quick Start Guide provides instructions for getting started with deploying the Jetson Platform Services stack on Orin hardware, showcasing streaming video, inference and analytics capabilities.

Refer to NVIDIA AI Services for Jetson for an overview of the various Inference Services, along with a detailed description of each.

Refer to the Overview for a description of Platform Services that provide a collection of general, reusable Linux services necessary for building mature, production grade systems on Jetson.

VST: Specifically, refer to Video Storage Toolkit (VST) for camera discovery, video storage, and video streaming.

Refer to AI NVR for a description of extending the ‘Quick Start’ deployment to build a performant, mature AI application in the form of a Network Video Recorder using the platform.

Refer to the AI-NVR Mobile Application User Guide for a rich Android-based client that interacts with the AI-NVR and VIA systems to view video, chat with the video, and create and view analytics and alerts.