Introduction

Mellanox SB77X0/SB78X0 switch systems provide the highest performing fabric solution in a 1U form factor, delivering up to 7.2Tb/s of non-blocking bandwidth with sub-90ns port-to-port latency. These systems are the industry's most cost-effective building blocks for embedded systems and storage that require low port density. Whether measured by price-to-performance or energy-to-performance, these systems offer superior performance, power efficiency and space efficiency, reducing capital and operating expenses and providing the best return on investment.

Powerful servers combined with high-performance storage and applications that use increasingly complex computations are causing data bandwidth requirements to spiral upward. As servers are deployed with next generation processors, High-Performance Computing (HPC) environments and Enterprise Data Centers (EDC) need every last bit of bandwidth delivered with Mellanox's EDR InfiniBand systems.

Built with Mellanox's latest SwitchIB™ InfiniBand EDR 100Gb/s switch device, these standalone systems are an ideal choice for top-of-rack leaf connectivity or for building small to extremely large clusters. They enable efficient computing with features such as static routing, adaptive routing, and advanced congestion management, which maximize the effective fabric bandwidth by eliminating congestion.

The SB7700/SB7800, equipped with a dual-core x86 CPU, comes with an onboard subnet manager, enabling simple, out-of-the-box fabric bring-up for up to 2048 nodes.

SB7700/SB7800 switches run the same MLNX-OS® software package as Mellanox FDR products to deliver complete chassis management of the firmware, power supplies, fans and ports.

Mellanox's edge systems can also be coupled with Mellanox's Unified Fabric Manager (UFM®) software for managing scale-out InfiniBand computing environments. UFM enables data center operators to efficiently provision, monitor and operate the modern data center fabric. UFM boosts application performance and ensures that the fabric is up and running at all times.

InfiniBand systems are available as internally managed or externally managed. Internally managed systems include a CPU that runs the management software (MLNX-OS®), as well as management ports that carry management traffic into the system. Externally managed systems come without the CPU and management ports, and are managed using firmware tools.

The SB7780 InfiniBand router is based on the SwitchIB™ switch ASIC and offers 36 fully flexible EDR 100Gb/s ports, which can be split among six different subnets. The InfiniBand router brings two major enhancements to the Mellanox switch portfolio:

- Increases resiliency by segregating the data center’s network into several subnets, with each subnet running its own subnet manager, effectively isolating each subnet from the others’ availability or instability.
- Enables scaling of the fabric up to a virtually unlimited number of nodes.

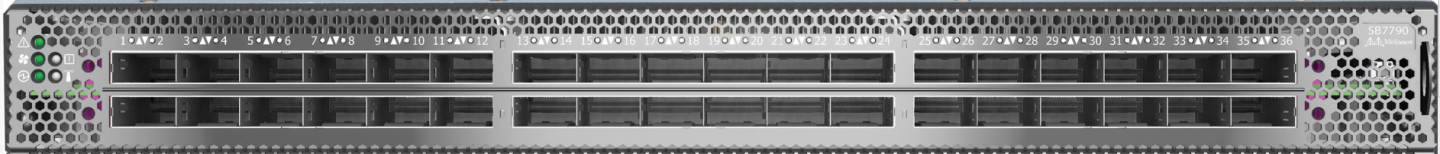

SB77X0/SB78X0 Front Side View

SB7700/SB7780/SB7800* Rear Side View

SB7790/SB7890 Rear Side View

*The SB7800 models include one MGT port.

The table below describes maximum throughput and interface speed per system model.

| System Model | EDR 100Gb/s QSFP28 Interfaces | Max Throughput |
| --- | --- | --- |
| SB7700 | 36 | 7.2Tb/s |
| SB7790 | 36 | 7.2Tb/s |
| SB7800 | 36 | 7.2Tb/s |
| SB7890 | 36 | 7.2Tb/s |
| SB7780 | 36 | 7.2Tb/s |
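
The maximum throughput figure can be related to the port count with a short calculation. The sketch below assumes that the 7.2Tb/s value counts both directions of every full-duplex port, which is how aggregate switch throughput is commonly quoted; that reading is an assumption and is not stated in this document. The function and constant names are illustrative only.

```python
# Sketch: relate port count and port speed to aggregate throughput.
# Assumption (not stated in this manual): the quoted figure counts
# transmit and receive traffic on every full-duplex port.

PORTS = 36               # EDR QSFP28 interfaces per system (from the table)
PORT_SPEED_GBPS = 100    # EDR line rate per port, per direction
DIRECTIONS = 2           # transmit + receive (assumed)


def aggregate_throughput_tbps(ports=PORTS,
                              speed_gbps=PORT_SPEED_GBPS,
                              directions=DIRECTIONS):
    """Return the aggregate throughput in Tb/s."""
    return ports * speed_gbps * directions / 1000


if __name__ == "__main__":
    print(f"{aggregate_throughput_tbps():.1f} Tb/s")
```

Under that assumption, 36 ports at 100Gb/s in each direction give 7200Gb/s, which matches the 7.2Tb/s listed for every model.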

The table below lists the various management interfaces and available replacement parts per system model.

| System Model | USB | MGT | I²C* | Console* | Replaceable PSU | Replaceable Fan |
| --- | --- | --- | --- | --- | --- | --- |
| SB7700 | Rear | Rear (2 ports) | Rear | Rear | Yes | Yes |
| SB7790 | N/A | N/A | Rear | N/A | Yes | Yes |
| SB7800 | Rear | Rear (1 port) | Rear | Rear | Yes | Yes |
| SB7890 | N/A | N/A | Rear | N/A | Yes | Yes |
| SB7780 | Rear | Rear (2 ports) | Rear | Rear | Yes | Yes |

*The same connector is used for the I²C and the console interfaces.
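
For inventory or provisioning scripts, the management interface table can be captured as a small lookup structure. The following is a minimal sketch, not a Mellanox tool: the `ManagementProfile` class, the `PROFILES` dictionary and the `is_internally_managed` helper are hypothetical names, and the rule that a model with at least one MGT port is internally managed is an inference from the description of internally and externally managed systems given earlier.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ManagementProfile:
    """One row of the management interface table (hypothetical type)."""
    usb: bool              # rear USB port present
    mgt_ports: int         # number of rear MGT ports (0 means N/A)
    i2c: bool              # rear I2C interface present
    console: bool          # rear console interface present
    replaceable_psu: bool
    replaceable_fan: bool


# Values transcribed from the table; "N/A" is encoded as False or 0.
PROFILES = {
    "SB7700": ManagementProfile(usb=True, mgt_ports=2, i2c=True, console=True,
                                replaceable_psu=True, replaceable_fan=True),
    "SB7790": ManagementProfile(usb=False, mgt_ports=0, i2c=True, console=False,
                                replaceable_psu=True, replaceable_fan=True),
    "SB7800": ManagementProfile(usb=True, mgt_ports=1, i2c=True, console=True,
                                replaceable_psu=True, replaceable_fan=True),
    "SB7890": ManagementProfile(usb=False, mgt_ports=0, i2c=True, console=False,
                                replaceable_psu=True, replaceable_fan=True),
    "SB7780": ManagementProfile(usb=True, mgt_ports=2, i2c=True, console=True,
                                replaceable_psu=True, replaceable_fan=True),
}


def is_internally_managed(model: str) -> bool:
    """Assumed rule: a model with at least one MGT port is internally managed."""
    return PROFILES[model].mgt_ports > 0


# Models without MGT ports, which this sketch treats as externally managed.
externally_managed = sorted(m for m in PROFILES if not is_internally_managed(m))

if __name__ == "__main__":
    print(externally_managed)
```

Encoding "N/A" as `False` or `0` keeps the checks simple; a script that needs to distinguish "absent" from "unknown" would want a richer type.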

For a full feature list, please refer to the systems’ product briefs at www.mellanox.com.

The list of certifications (such as EMC, Safety and others) per system for different regions of the world is located on the Mellanox website at http://www.mellanox.com/page/environmental_compliance.