Video Summarization Workflow#

The Video Summarization Workflow enables analysis and summarization of long-form video content that exceeds standard VLM context limitations.

Capabilities

Use Cases

Automated incident report generation

Event detection in extended video archives

Shift summaries and daily activity reports

Technical Approach

Standard VLMs are limited to processing short video clips. This workflow segments long videos, analyzes each segment with a VLM, and synthesizes the results into a coherent summary.

Estimated Deployment Time: 15-20 minutes

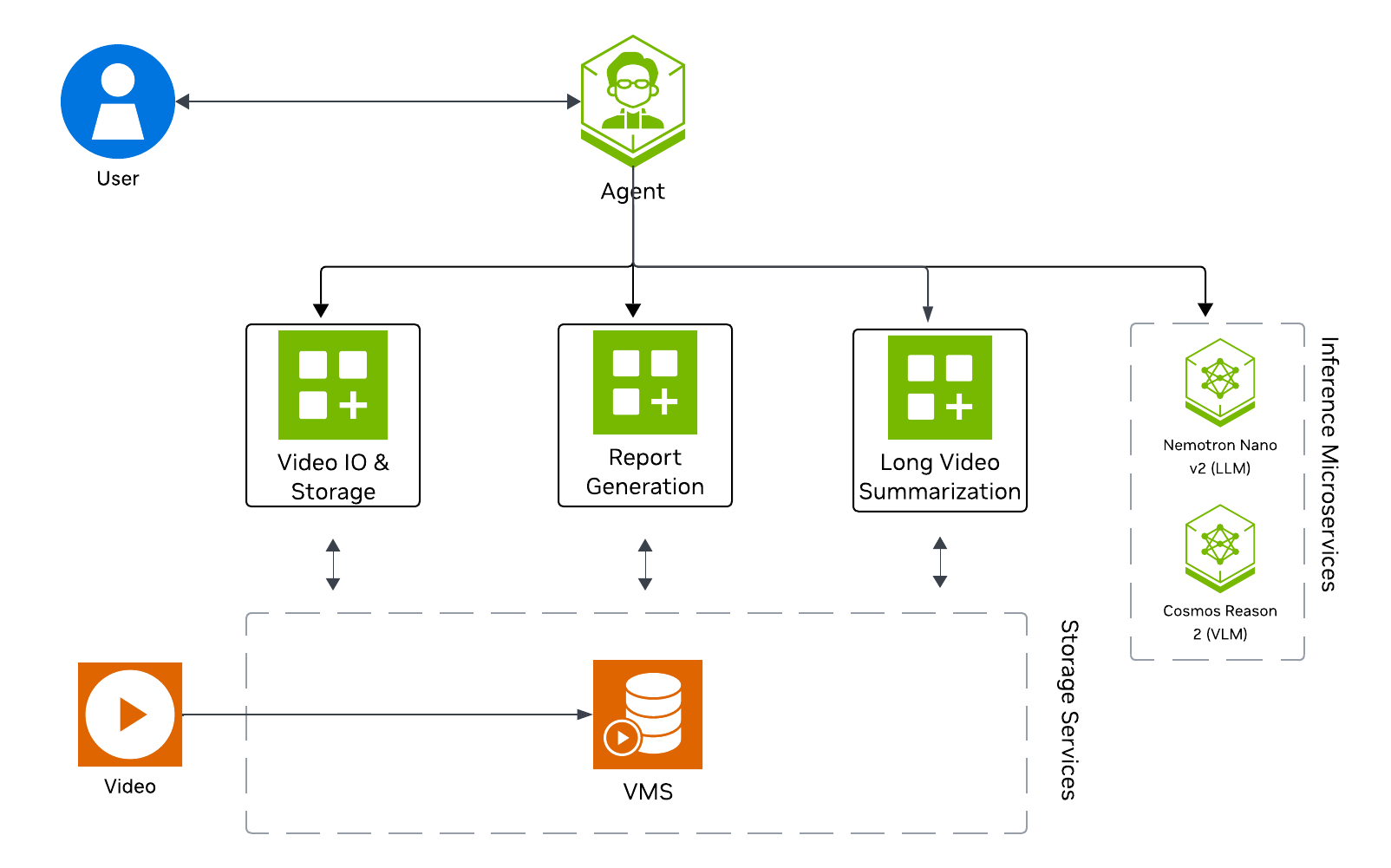

The following diagram illustrates the video summarization architecture:

Key Features of the Vision Agent with Long Video Summarization:

Quickly generate an overall summary within seconds, offering a high-level narrative of the video.

Formulate timestamped highlights of the video based on user-defined events.

Processes uploaded video files (minutes to hours in duration)

Generates narrative summaries of video content

Returns results through the AI agent interface

What’s being deployed#

VSS Agent: Agent service that orchestrates tool calls and model inference to answer questions and generate outputs

VSS Agent UI: Web UI with chat, video upload, and different views

VSS Video IO & Storage (VIOS): Video ingestion, recording, and playback services used by the agent for video access and management

Nemotron LLM (NIM): LLM inference service used for reasoning, tool selection, and response generation

Cosmos Reason 2 (NIM): Vision-language model with physical reasoning capabilities

VSS Long Video Summarization: Microservice for segmenting and summarizing long-form video content

ELK (without Logstash): Elasticsearch, and Kibana stack for log storage and analysis

Phoenix: Observability and telemetry service for agent workflow monitoring

Prerequisites#

Before you begin, ensure all of the prerequisites are met. See Prerequisites for more details.

Deploy#

Note

For instructions on downloading sample data and the deployment package, see Download Sample Data and Deployment Package in the Quickstart guide.

Skip to Step 1: Deploy the Agent if you have already downloaded and deployed another agent workflow.

Step 1: Deploy the Agent#

Based on your GPU, run the following command to deploy the agent:

deployments/dev-profile.sh up -p lvs

deployments/dev-profile.sh up -p lvs -H RTX6000PROBW

deployments/dev-profile.sh up -p lvs -H L40S

This deployment uses the following defaults:

Host IP: Primary IP from

ip routeLLM mode: local_shared

VLM mode: local_shared

LLM model: nvidia-nemotron-nano-9b-v2

VLM model: cosmos-reason2-8b

This command will download the necessary containers from the NGC Docker registry and start the agent. Depending on your network speed, this may take a few minutes.

Note

NGC API Key: The deployment requires an NGC CLI API key. You can either set it as an environment variable (export NGC_CLI_API_KEY='your_ngc_api_key') or pass it as a command-line argument using -k 'your_ngc_api_key'.

Note

For advanced deployment options such as hardware profiles, NIM configurations, and model selection, see Advanced Deployment Options.

Once complete, check that all the containers are running and healthy:

docker ps

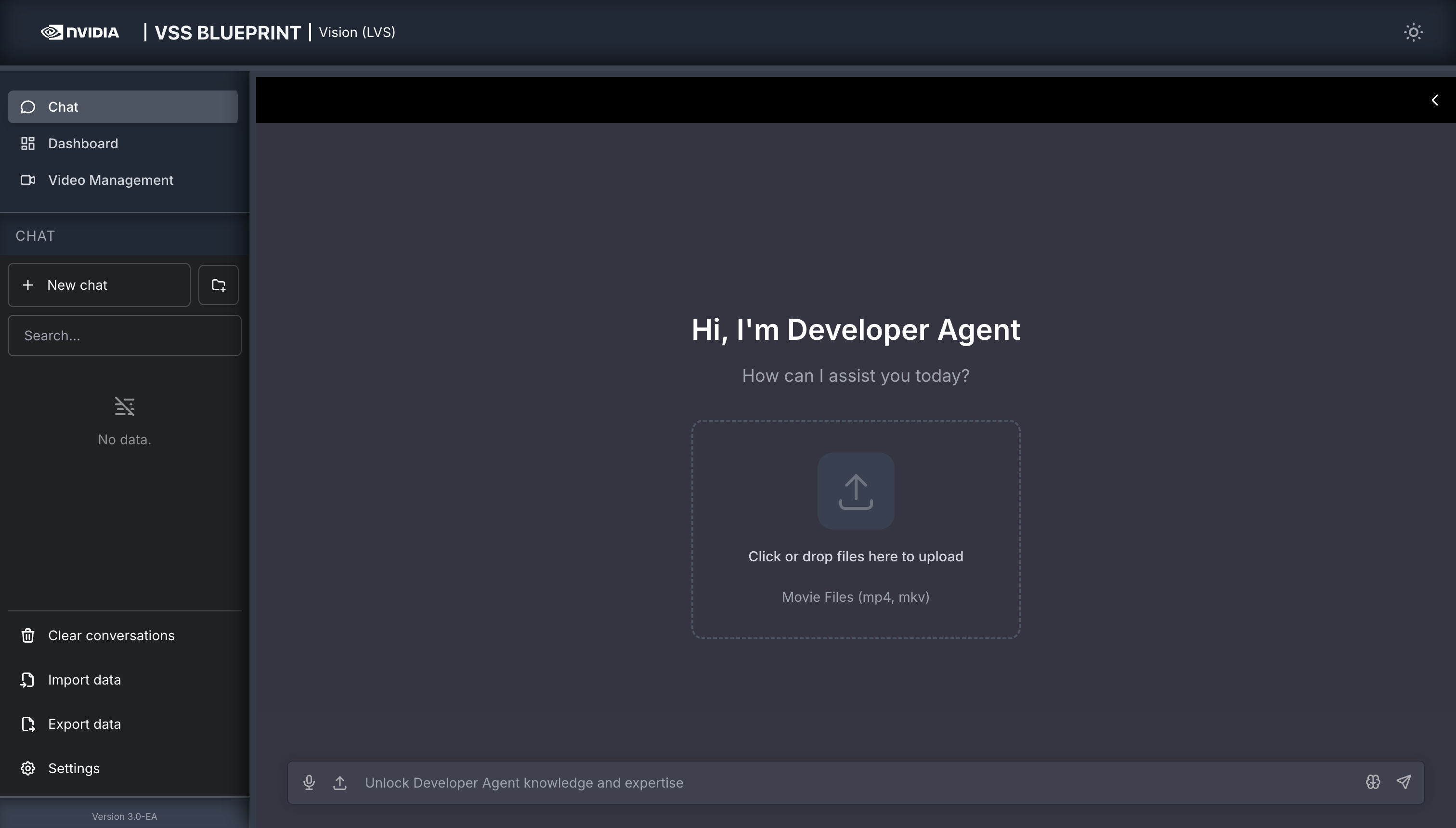

Once all the containers are running, you can access the agent UI at http://<HOST_IP>:3000/.

Step 2: Upload a video#

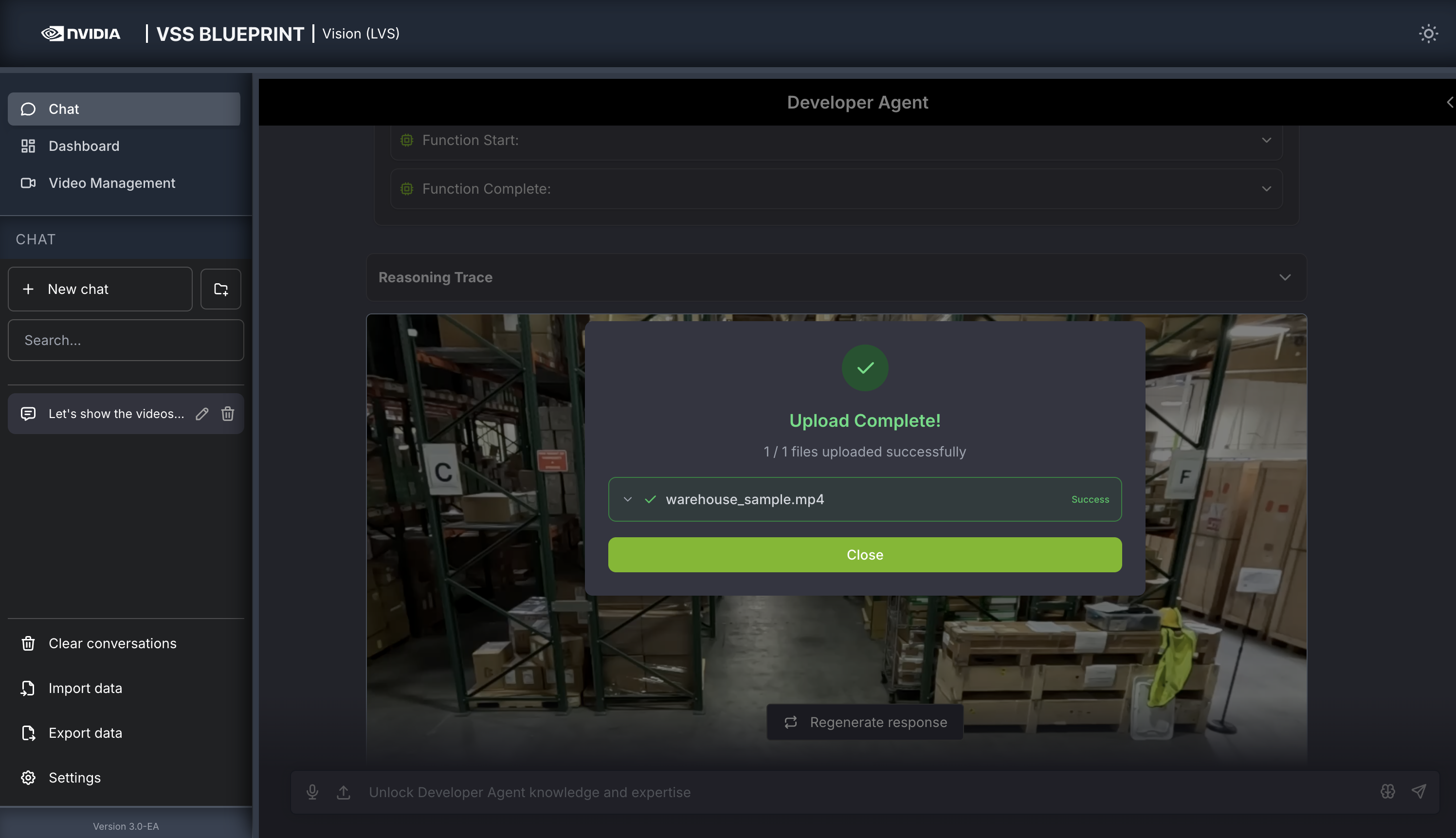

In the chat interface, drag and drop the video warehouse_sample.mp4 into the chat window.

Once the video is uploaded, the agent will respond with a message indicating that the video has been uploaded.

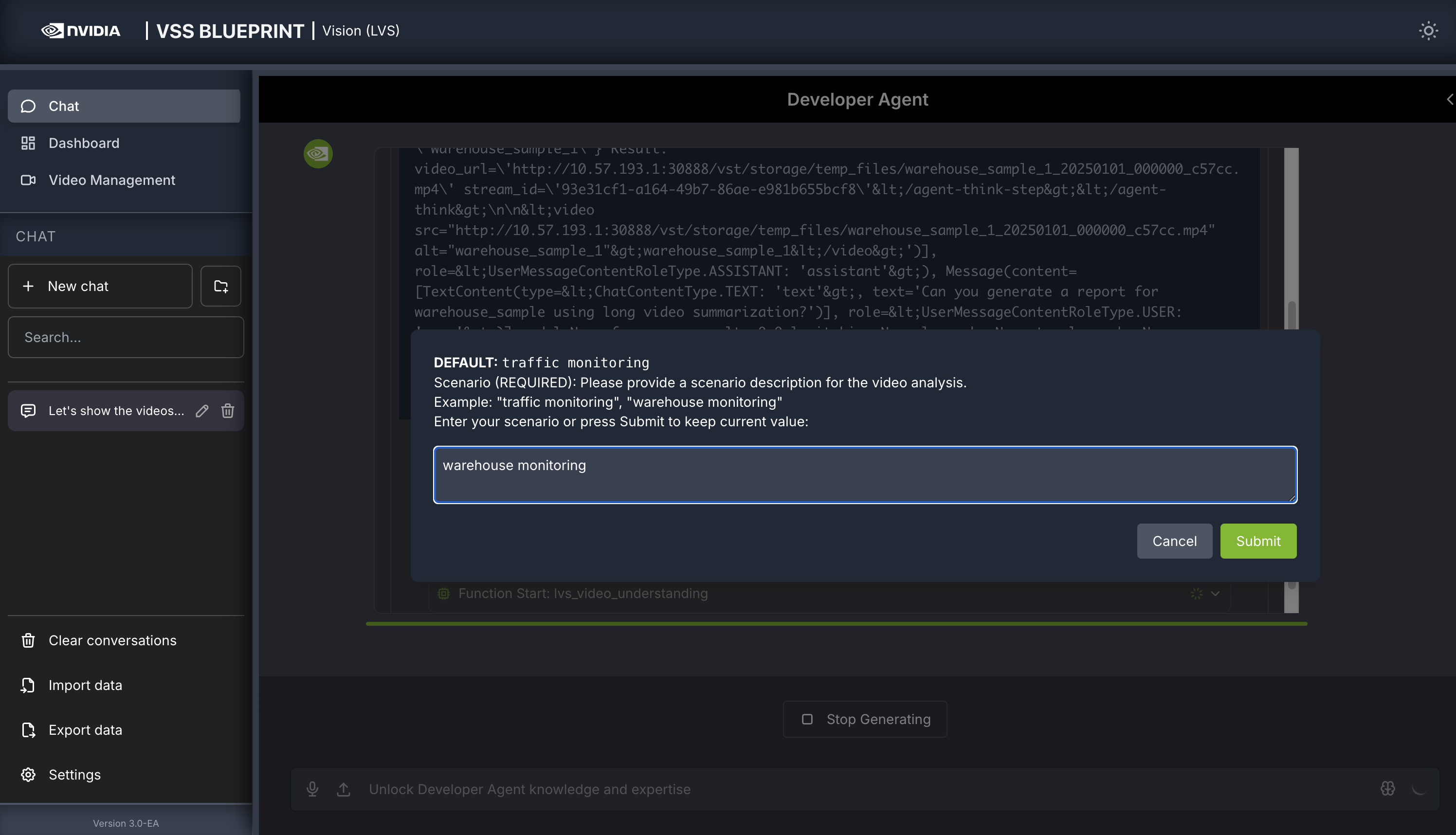

Step 3: Generate a report#

You can now ask the agent to generate a report about the video. Here is an example prompt:

Can you generate a report for warehouse_sample using long video summarization?

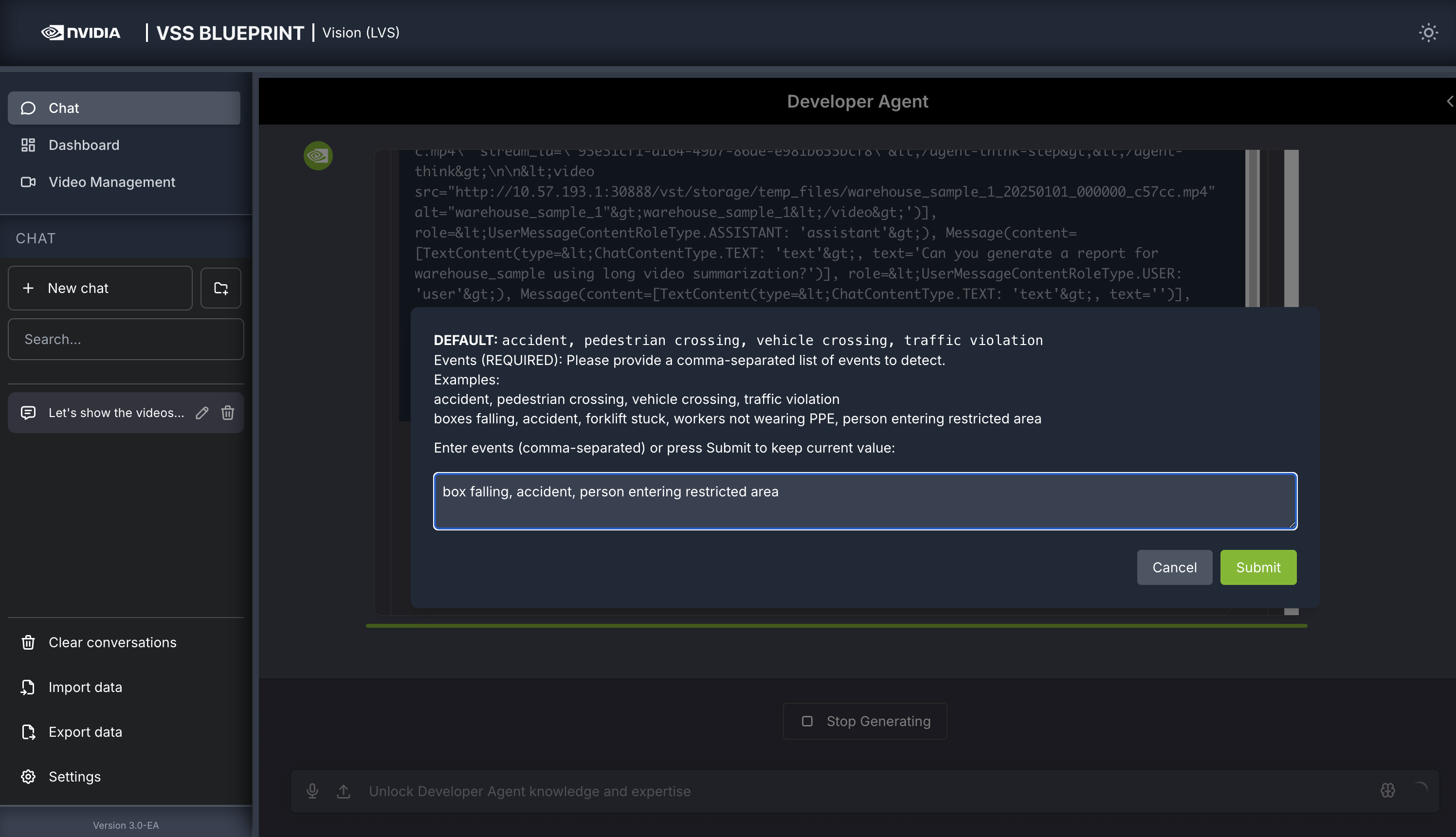

The agent will prompt you with 3 dialog windows to customize the LVS microservice parameters:

- Scenario

Describe the monitoring context. For example:

warehouse monitoring

- Events

List events of interest to track. For example:

box falling, accident, person entering restricted area

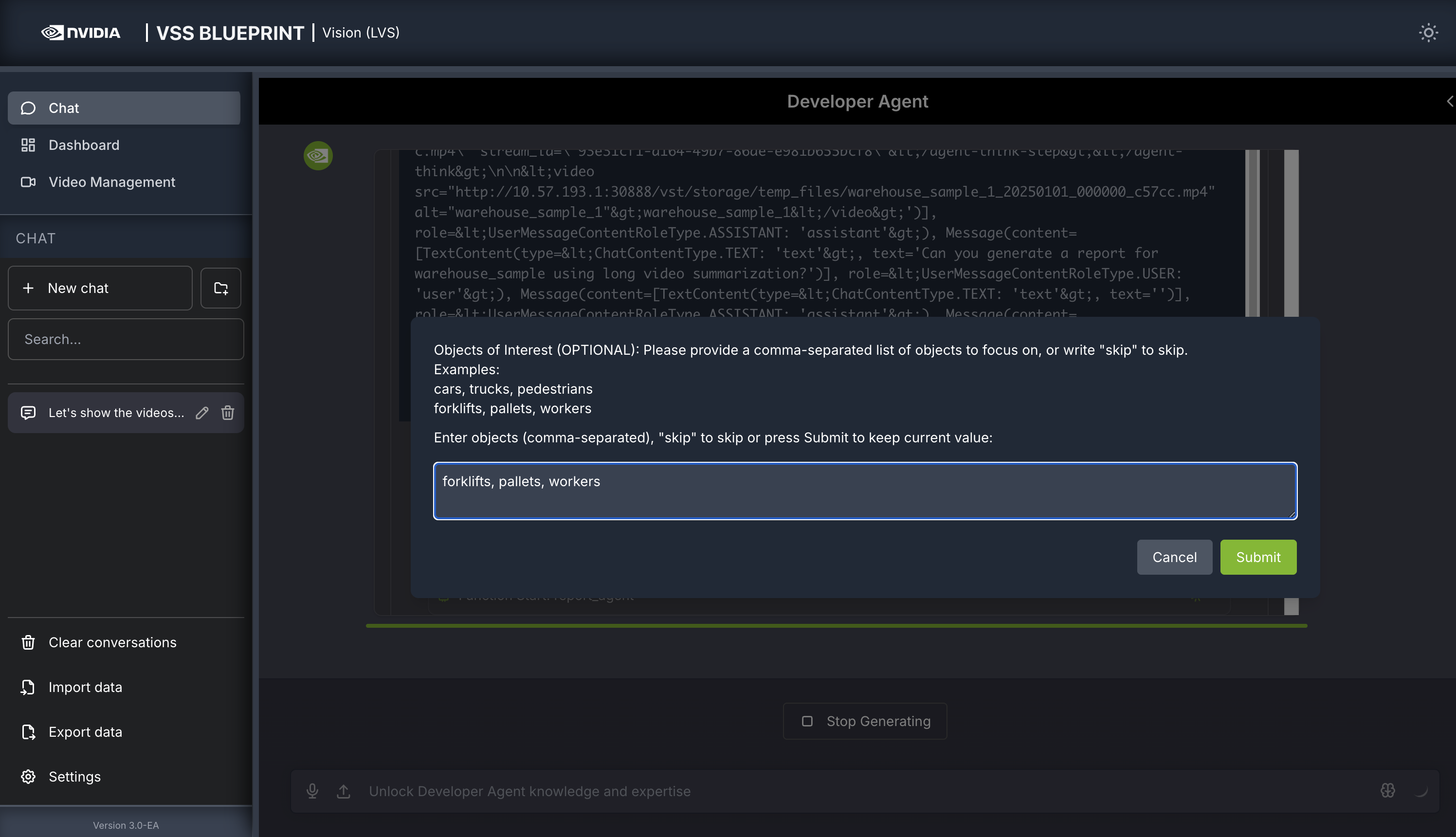

- Objects of Interest

Specify objects to monitor. For example:

forklifts, pallets, workers

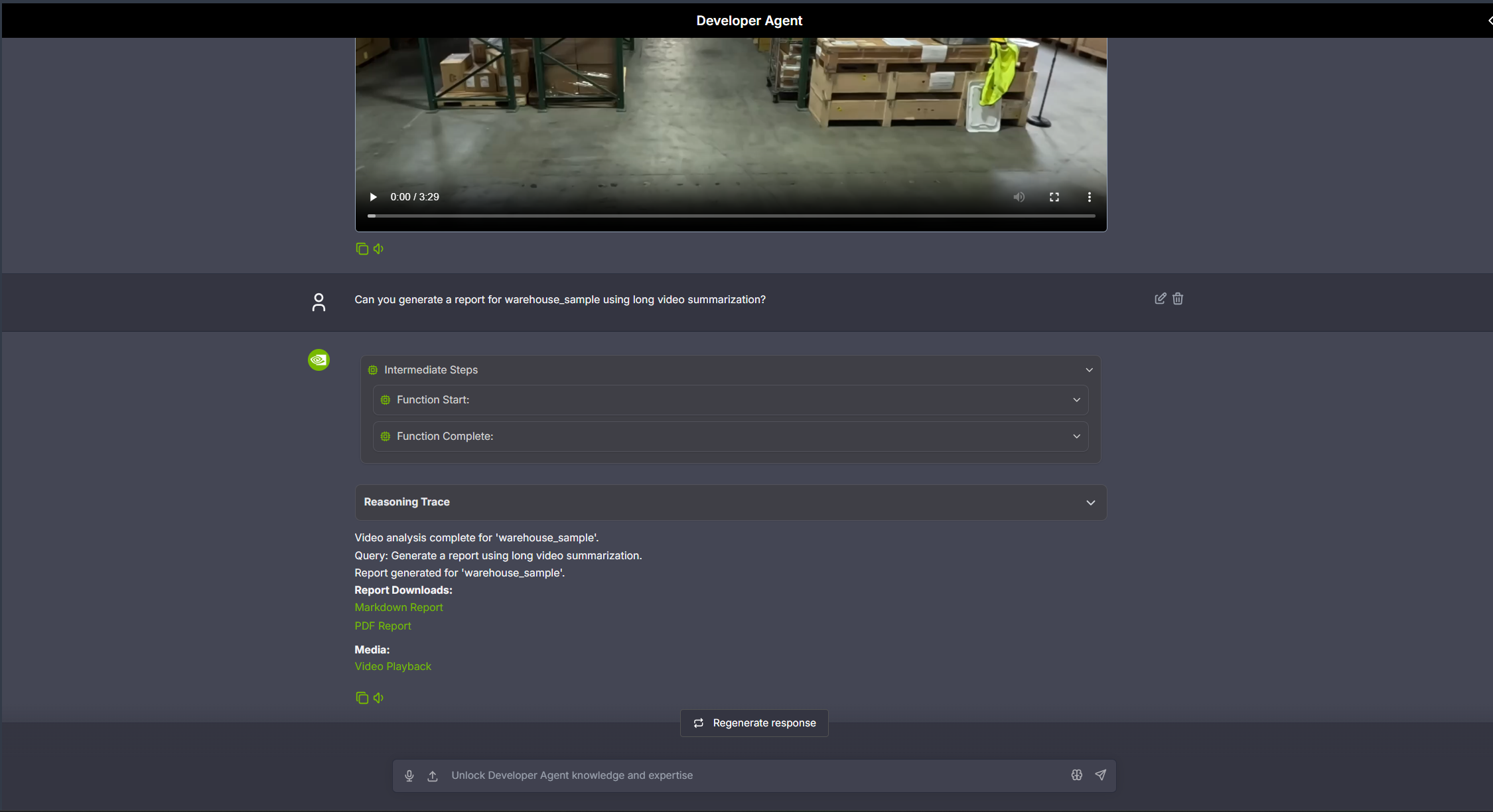

The agent will show the intermediate steps of the agent’s reasoning while the response is being generated and then output the final answer. You can download the report in PDF format by clicking on “PDF Report” in the agent’s response:

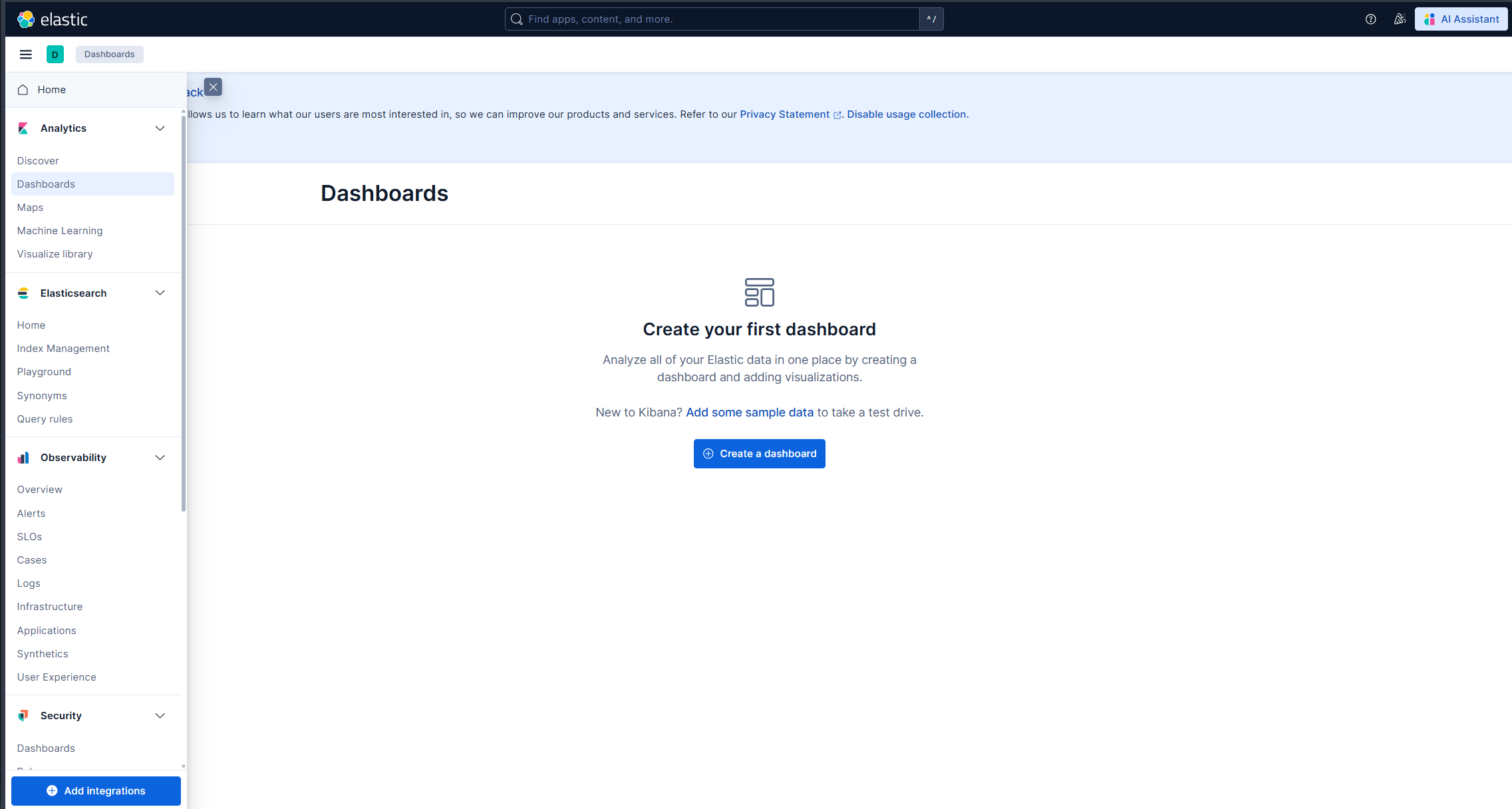

Step 4: Search for specific events in the Dashboard#

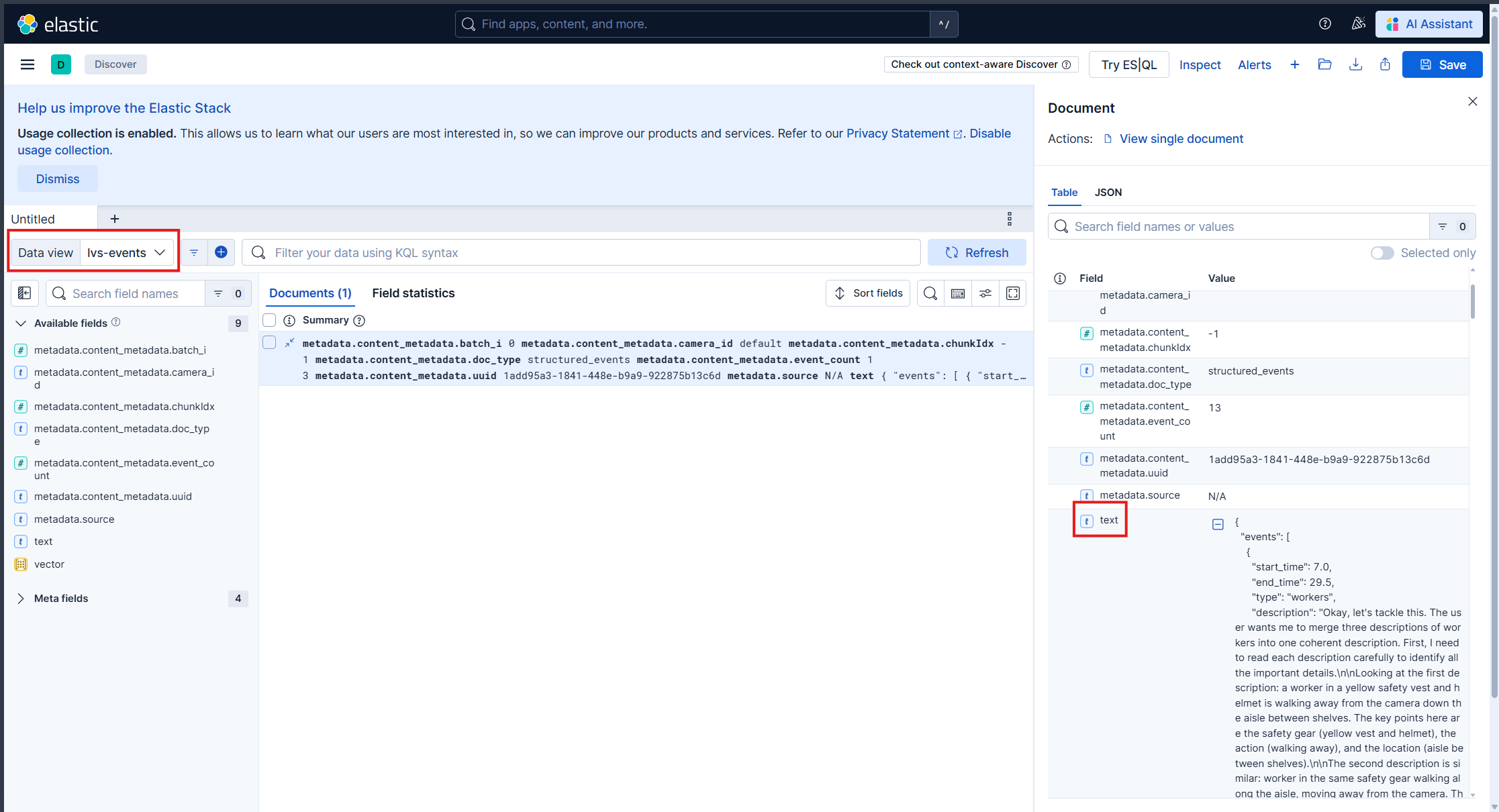

On the left sidebar, click on “Dashboard” to open the elastic dashboard in the main window. From the meniu icon, choose the “Discover” tab.

Set lvs-events in the Data view dropdown. Here you can see for each query, the events that were detected in the video. When you click on a row item, a panel will open in the right side with details about the backend request and the event.

Step 5: Teardown the Agent#

To teardown the agent, run the following command:

deployments/dev-profile.sh down

This command will stop and remove the agent containers.

Next steps#

Once you’ve familiarized yourself with the long video summarization workflow, you can explore adding other agent workflows, such as search and alerting.

Additionally, you can dive deeper into the agent tools for video management, report generation, and video understanding.

Appendix: Advanced Deployment Options#

The deployment script supports additional configuration options for advanced use cases. To view all available options, run:

deployments/dev-profile.sh --help

Hardware Profile#

Specify the hardware profile to optimize for your GPU:

deployments/dev-profile.sh up -p lvs -H RTX6000PROBW

Available hardware profiles: H100 (default), L40S, RTX6000PROBW

Host IP Configuration#

Manually specify the host IP address:

deployments/dev-profile.sh up -p lvs -i '<HOST_IP>'

Default: Primary IP from ip route

Externally Accessible IP#

Optionally specify an externally accessible IP address for services that need to be reached from outside the host:

deployments/dev-profile.sh up -p lvs -e '<EXTERNALLY_ACCESSIBLE_IP>'

LLM and VLM Configuration#

Configure the LLM and VLM (NVIDIA Inference Microservice) modes independently:

deployments/dev-profile.sh up -p lvs --llm-mode local --vlm-mode local

Available modes:

local_shared(default): Shared local NIM instancelocal: Dedicated local NIM instanceremote: Use remote NIM endpoints

Constraint: Both --llm-mode and --vlm-mode must be local_shared, or neither can be local_shared.

For remote LLM and VLM, specify the base URLs:

deployments/dev-profile.sh up -p lvs \

--llm-mode remote \

--vlm-mode remote \

--llm-base-url https://your-llm-endpoint.com \

--vlm-base-url https://your-vlm-endpoint.com

Note

To deploy your own remote NIM endpoint, refer to the NVIDIA NIM Deployment Guide for instructions on setting up NIM on your infrastructure.

Model Selection#

Specify custom LLM and VLM models:

deployments/dev-profile.sh up -p lvs \

--llm llama-3.3-nemotron-super-49b-v1.5 \

--vlm cosmos-reason2-8b

Available LLM models: nvidia-nemotron-nano-9b-v2, nemotron-3-nano, llama-3.3-nemotron-super-49b-v1.5, gpt-oss-20b

Available VLM models: cosmos-reason1-7b, cosmos-reason2-8b, qwen3-vl-8b-instruct

Note

Only the default models nvidia-nemotron-nano-9b-v2 (LLM) and cosmos-reason2-8b (VLM) have been verified on local and local_shared NIM modes.

Device Assignment#

Assign specific GPU devices for LLM and VLM:

deployments/dev-profile.sh up -p lvs \

--llm-device-id 0 \

--vlm-device-id 1

Note: --llm-device-id is not allowed if --llm-mode is remote. --vlm-device-id is not allowed if --vlm-mode is local_shared or remote.

Custom VLM Weights#

The VSS Blueprint supports using custom VLM weights to enhance video summarization capabilities for specific domains or scenarios.

Download Custom Weights

Before using custom weights, you need to download them from NGC or Hugging Face. For detailed instructions on downloading custom weights, see the Custom VLM Weights section in Prerequisites.

Deploy with Custom Weights

Once you have downloaded custom weights to a local directory, specify the path when deploying:

deployments/dev-profile.sh up -p lvs \

--vlm-custom-weights /path/to/custom/weights

Skip Custom Weights

To deploy without custom weights and use the default model weights (no download, no environment variable set):

deployments/dev-profile.sh up -p lvs \

--vlm-custom-weights None

Dry Run#

To preview the deployment commands without executing them:

deployments/dev-profile.sh up -p lvs -d

Note: The -d or --dry-run flag is also available for the down command.

Known Issues#

LLM Reasoning Toggle: Toggling the LLM reasoning option in the chat window input box does not take effect. This limitation will be revisited in the next release.

For additional known issues and limitations, see:

Agent Known Issues - VSS Agent known issues and limitations

Known Issues - VSS Agent UI known issues

Troubleshooting#

When encountering issues with the LVS workflow:

View container logs - See Viewing Container Logs for instructions on viewing and analyzing container logs

Navigate the Phoenix UI - See Navigating the Phoenix UI for step-by-step guidance on viewing traces and debugging agent workflows

Check known issues - Review Agent Known Issues (agent) and Known Issues (UI) for documented limitations and workarounds