Graph Execution Framework (GXF)

Holoscan GXF applications are built as compute graphs on top of GXF. This design provides modularity at the application level, since existing entities can be swapped or updated without recompiling any extensions or the application itself.

These are the key terms used throughout this guide:

Each node in the graph is known as an entity

Each edge in the graph is known as a connection

Each entity is a collection of components

Each component performs a specific set of subtasks in that entity

The implementation of a component’s task is known as a codelet

Codelets are grouped in extensions

Similarly, the componentization of the entity itself allows for even more isolated changes. For example, if an entity has an input, an output, and a compute component, we can update the compute component without changing the input or the output.
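To make the idea of swapping a single component concrete, here is a minimal sketch in plain Python. It is not GXF code: the Entity class, its run() method, and the three component names are hypothetical stand-ins chosen only for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Entity:
    """Hypothetical entity: a named collection of components."""
    name: str
    components: dict = field(default_factory=dict)

    def run(self, value):
        # Pass the value through input -> compute -> output.
        data = self.components["input"](value)
        data = self.components["compute"](data)
        return self.components["output"](data)


entity = Entity("example", {"input": float, "compute": lambda x: x * 2, "output": str})
first = entity.run("3")

# Replace only the compute component; input and output are untouched.
entity.components["compute"] = lambda x: x + 1
second = entity.run("3")

print(first, second)  # 6.0 4.0
```

Only the compute entry changes between the two runs, which is the kind of isolated update the paragraph above describes.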

At its core, GXF provides a very thin API with a plug-in model to load in custom extensions. Applications built on top of GXF are composed of components. The primary component is a Codelet that provides an interface for start(), tick(), and stop() functions. Configuration parameters are bound within the registerInterface() function.
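The lifecycle described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the GXF API: the Codelet base class here is a stand-in, register_interface() simply stores a dictionary where the real registerInterface() registers parameters with the framework, and Scale and run() are hypothetical names.

```python
from abc import ABC, abstractmethod


class Codelet(ABC):
    """Illustrative stand-in for a GXF codelet; not the real GXF class."""

    def register_interface(self, params: dict) -> None:
        """Bind configuration parameters (plays the role of registerInterface())."""
        self.params = dict(params)

    def start(self) -> None:
        """Called once before the first tick."""

    @abstractmethod
    def tick(self) -> None:
        """Called each time the scheduler runs the codelet."""

    def stop(self) -> None:
        """Called once after the last tick."""


class Scale(Codelet):
    """Hypothetical codelet that multiplies queued values by a factor."""

    def start(self) -> None:
        self.inbox = [1.0, 2.0, 3.0]
        self.outbox = []

    def tick(self) -> None:
        self.outbox.append(self.inbox.pop(0) * self.params["factor"])

    def stop(self) -> None:
        self.stopped = True


def run(codelet: Codelet, params: dict, ticks: int) -> Codelet:
    """Drive the lifecycle: bind parameters, start, tick N times, stop."""
    codelet.register_interface(params)
    codelet.start()
    for _ in range(ticks):
        codelet.tick()
    codelet.stop()
    return codelet


scale = run(Scale(), {"factor": 2.0}, ticks=3)
print(scale.outbox)  # [2.0, 4.0, 6.0]
```

In a real application the scheduler, not a fixed loop, decides when tick() is called.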

In addition to the Codelet class, there are several others providing the underpinnings of GXF:

Scheduler and Scheduling Terms: components that determine how and when the tick() of a Codelet executes. This can be single or multithreaded, support conditional execution, asynchronous scheduling, and other custom behavior.

Memory Allocator: provides a system for allocating a large contiguous memory pool up front and then reusing regions as needed. Memory can be pinned to the device (enabling zero-copy between Codelets when messages are not modified), pinned to the host, or customized for other behavior.

Receivers, Transmitters, and Message Router: a message passing system between Codelets that supports zero-copy.

Tensor: the common message type is a tensor. It provides a simple abstraction for numeric data that can be allocated, serialized, sent between Codelets, etc. Tensors can be rank 1 to 7 supporting a variety of common data types like arrays, vectors, matrices, multi-channel images, video, regularly sampled time-series data, and higher dimensional constructs popular with deep learning flows.

Parameters: configuration variables that specify constants used by the Codelet. They are loaded from the application YAML file and can be modified without recompiling.
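As a rough illustration of the "allocate once, reuse regions" idea behind the memory allocator, the following toy pool reserves one contiguous buffer and hands out fixed-size blocks from it. The BlockPool class and its acquire(), view(), and release() methods are hypothetical; the real GXF allocators have a different interface and can place memory on the device.

```python
class BlockPool:
    """Toy fixed-size block pool; illustrative only, not a GXF allocator."""

    def __init__(self, block_size: int, num_blocks: int) -> None:
        self.block_size = block_size
        # One contiguous buffer, allocated up front.
        self._buffer = bytearray(block_size * num_blocks)
        self._free = list(range(num_blocks))

    def acquire(self) -> int:
        """Reserve a free block and return its index."""
        if not self._free:
            raise MemoryError("pool exhausted")
        return self._free.pop()

    def view(self, index: int) -> memoryview:
        """Return a zero-copy view onto the block's bytes."""
        start = index * self.block_size
        return memoryview(self._buffer)[start:start + self.block_size]

    def release(self, index: int) -> None:
        """Return a block to the pool so it can be reused."""
        self._free.append(index)


pool = BlockPool(block_size=16, num_blocks=2)
first = pool.acquire()
second = pool.acquire()
pool.view(first)[:4] = b"\x01\x02\x03\x04"

try:
    pool.acquire()
    exhausted = False
except MemoryError:
    exhausted = True

pool.release(first)
reused = pool.acquire()
print(exhausted, reused == first)  # True True
```

No new memory is allocated after construction; a third request fails until a block is released, and the released block is the one handed out next.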

Let us look at an example of a Holoscan entity to understand its general anatomy. We start with the entity definition for an image format converter entity named format_converter_entity, shown below.

Listing 20 An example GXF Application YAML snippet

%YAML 1.2
---
# other entities declared
---
name: format_converter_entity
components:
  - name: in_tensor
    type: nvidia::gxf::DoubleBufferReceiver
  - type: nvidia::gxf::MessageAvailableSchedulingTerm
    parameters:
      receiver: in_tensor
      min_size: 1
  - name: out_tensor
    type: nvidia::gxf::DoubleBufferTransmitter
  - type: nvidia::gxf::DownstreamReceptiveSchedulingTerm
    parameters:
      transmitter: out_tensor
      min_size: 1
  - name: pool
    type: nvidia::gxf::BlockMemoryPool
    parameters:
      storage_type: 1
      block_size: 4919040 # 854 * 480 * 3 (channels) * 4 (bytes per float32 element)
      num_blocks: 2
  - name: format_converter_component
    type: nvidia::holoscan::formatconverter::FormatConverter
    parameters:
      in: in_tensor
      out: out_tensor
      out_tensor_name: source_video
      out_dtype: "float32"
      scale_min: 0.0
      scale_max: 255.0
      pool: pool
---
# other entities declared
---
components:
  - name: input_connection
    type: nvidia::gxf::Connection
    parameters:
      source: upstream_entity/output
      target: format_converter_entity/in_tensor
---
components:
  - name: output_connection
    type: nvidia::gxf::Connection
    parameters:
      source: format_converter_entity/out_tensor
      target: downstream_entity/input
---
name: scheduler
components:
  - type: nvidia::gxf::GreedyScheduler

Above:

The entity format_converter_entity receives a message in its in_tensor receiver from an upstream entity upstream_entity, as declared in the input_connection.

The received message is passed to the format_converter_component component, which converts the tensor element precision from uint8 to float32 and scales any input in the [0, 255] intensity range.

The format_converter_component component finally places the result in the out_tensor transmitter so that it is made available to a downstream entity (downstream_entity, as declared in output_connection).

The Connection components tie the inputs and outputs of various components together, in the above case upstream_entity/output -> format_converter_entity/in_tensor and format_converter_entity/out_tensor -> downstream_entity/input.

The scheduler entity declares a GreedyScheduler “system component” which orchestrates the execution of the entities declared in the graph. In the specific case of GreedyScheduler, entities are scheduled to run exclusively: no more than one entity can run at any given time.
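Connection endpoints are written as entity/component, so a mistyped entity name leaves an edge pointing at nothing. The sketch below checks endpoints against a hand-written summary of the graph. The check_connections() helper and the dictionary layout are assumptions made for illustration, and the component names of upstream_entity and downstream_entity are inferred from the connection strings; GXF performs its own validation when it loads the application.

```python
def check_connections(entities: dict, connections: list) -> list:
    """Return the endpoints that do not name a declared entity/component pair."""
    missing = []
    for source, target in connections:
        for endpoint in (source, target):
            entity, _, component = endpoint.partition("/")
            if component not in entities.get(entity, set()):
                missing.append(endpoint)
    return missing


# Hypothetical summary of the graph: entity name -> names of its components.
entities = {
    "upstream_entity": {"output"},
    "format_converter_entity": {
        "in_tensor", "out_tensor", "pool", "format_converter_component",
    },
    "downstream_entity": {"input"},
}
connections = [
    ("upstream_entity/output", "format_converter_entity/in_tensor"),
    ("format_converter_entity/out_tensor", "downstream_entity/input"),
]

ok = check_connections(entities, connections)
bad = check_connections(
    entities, [("upstream_entity/output", "format_converter/in_tensor")]
)
print(ok)   # []
print(bad)  # ['format_converter/in_tensor']
```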

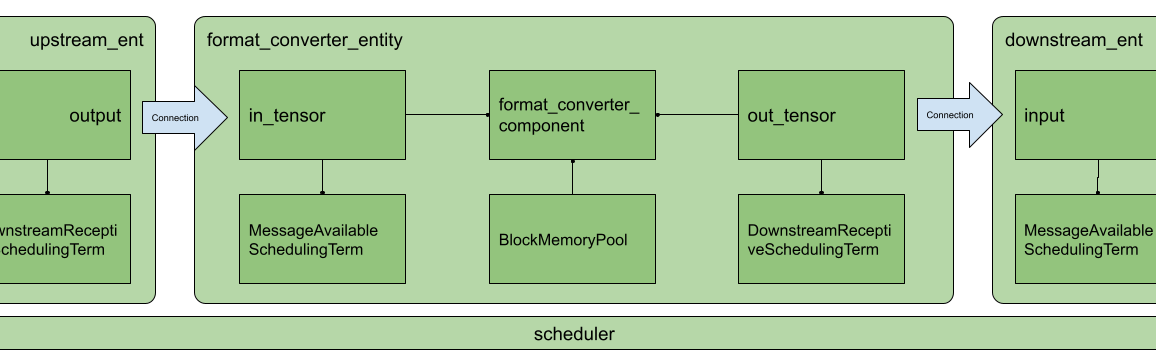

The YAML snippet above can be visually represented as follows.

Fig. 25 Arrangement of components and entities in a Holoscan application

In the image, as in the YAML, you will notice the use of MessageAvailableSchedulingTerm, DownstreamReceptiveSchedulingTerm, and BlockMemoryPool. These are components that play a “supporting” role to in_tensor, out_tensor, and format_converter_component components respectively. Specifically:

MessageAvailableSchedulingTerm is a component that takes a Receiver (in this case the DoubleBufferReceiver named in_tensor) and alerts the graph Executor that a message is available. This alert triggers format_converter_component.

DownstreamReceptiveSchedulingTerm is a component that takes a Transmitter (in this case the DoubleBufferTransmitter named out_tensor) and alerts the graph Executor that the receiver connected to it can accept a new message.

BlockMemoryPool provides two blocks of almost 5 MB each, allocated on the GPU device, and is used by format_converter_entity to allocate the output tensor where the converted data will be placed within the format converter component.

Together these components allow the entity to perform a specific function and coordinate communication with other entities in the graph via the declared scheduler.
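The block_size value in the pool definition follows directly from the frame geometry given in its comment. The short calculation below reproduces it. The variable names are ours, and the 4-byte element size is taken from the float32 output type.

```python
import struct

width, height, channels = 854, 480, 3
bytes_per_element = struct.calcsize("<f")  # a float32 value occupies 4 bytes

block_size = width * height * channels * bytes_per_element
num_blocks = 2

print(block_size)                  # 4919040
print(round(block_size / 1e6, 2))  # 4.92
print(block_size * num_blocks)     # 9838080
```

Each block therefore holds exactly one converted 854 x 480 RGB frame, and the pool reserves just under 10 MB in total.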

More generally, an entity can be thought of as a collection of components where components can be passed to one another to perform specific subtasks (e.g. event triggering or message notification, format conversion, memory allocation), and an application as a graph of entities.

The scheduler is a component of type nvidia::gxf::System which orchestrates the execution of the components in each entity at application runtime based on triggering rules.

Entities communicate with one another via messages which may contain one or more payloads. Messages are passed and received via a component of type nvidia::gxf::Queue from which both nvidia::gxf::Receiver and nvidia::gxf::Transmitter are derived. Every entity that receives and transmits messages has at least one receiver and one transmitter queue.

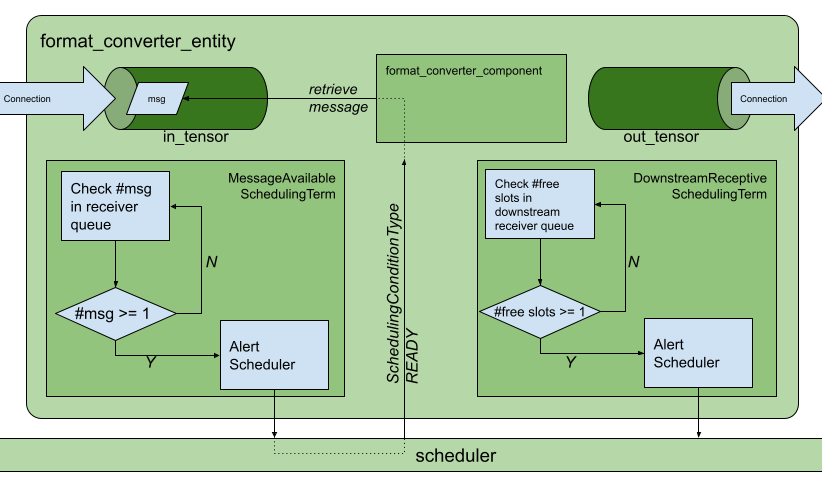

Holoscan uses the nvidia::gxf::SchedulingTerm component to coordinate data access and component orchestration for a Scheduler which invokes execution through the tick() function in each Codelet.

A SchedulingTerm defines a specific condition that is used by an entity to let the scheduler know when it’s ready for execution.

In the above example, we used a MessageAvailableSchedulingTerm to trigger the execution of the components waiting for data from the in_tensor receiver queue, namely format_converter_component.

Listing 21 MessageAvailableSchedulingTerm

- type: nvidia::gxf::MessageAvailableSchedulingTerm
  parameters:
    receiver: in_tensor
    min_size: 1
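The condition expressed by this scheduling term can be mimicked with a plain queue. The sketch below is a toy model, not the GXF implementation: the MessageAvailable class and its ready() method are hypothetical, and a deque stands in for the in_tensor receiver queue.

```python
from collections import deque


class MessageAvailable:
    """Toy scheduling term: ready once the receiver holds min_size messages."""

    def __init__(self, receiver: deque, min_size: int = 1) -> None:
        self.receiver = receiver
        self.min_size = min_size

    def ready(self) -> bool:
        return len(self.receiver) >= self.min_size


in_tensor = deque()
term = MessageAvailable(in_tensor, min_size=1)

before = term.ready()        # queue is empty, nothing to process
in_tensor.append("frame-0")  # an upstream entity delivers a message
after = term.ready()

print(before, after)  # False True
```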

Similarly, DownstreamReceptiveSchedulingTerm checks whether the receiver queue connected to the out_tensor transmitter can accept at least min_size more messages. If it can, the term reports the entity as ready to the scheduler, and the message the entity publishes is placed in the receiver queue of the downstream entity. If, however, the downstream entity has a full receiver queue, the entity is not scheduled until space frees up, which is how back-pressure is handled.

Listing 22 DownstreamReceptiveSchedulingTerm

- type: nvidia::gxf::DownstreamReceptiveSchedulingTerm
  parameters:
    transmitter: out_tensor
    min_size: 1
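Back-pressure can be illustrated with a bounded queue. This toy model assumes the term is satisfied while the downstream receiver has at least min_size free slots; the DownstreamReceptive class, its capacity argument, and ready() are hypothetical names, and the real component inspects the receiver connected to the given transmitter.

```python
from collections import deque


class DownstreamReceptive:
    """Toy term: ready while the downstream receiver has min_size free slots."""

    def __init__(self, downstream: deque, capacity: int, min_size: int = 1) -> None:
        self.downstream = downstream
        self.capacity = capacity
        self.min_size = min_size

    def ready(self) -> bool:
        return self.capacity - len(self.downstream) >= self.min_size


downstream_input = deque()
term = DownstreamReceptive(downstream_input, capacity=2)

sent = 0
while term.ready():  # keep publishing until the receiver is full
    downstream_input.append(f"frame-{sent}")
    sent += 1
blocked = not term.ready()

downstream_input.popleft()  # the downstream entity consumes one message
unblocked = term.ready()

print(sent, blocked, unblocked)  # 2 True True
```

The producer stalls as soon as the consumer falls behind and resumes when a slot is freed, so no message is dropped.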

If we were to draw the entity in Fig. 25 in greater detail it would look something like the following.

Fig. 26 Receive and transmit Queues and SchedulingTerms in entities.

Up to this point, we have covered the “entity component system” at a high level and shown the functional parts of an entity, namely, the messaging queues and the scheduling terms that support the execution of components in the entity. To complete the picture, the next section covers the anatomy and lifecycle of a component, and how to handle events within it.

Please follow the Developing Holoscan GXF Extensions section first for a detailed explanation of the GXF extension development process.

For our application, we create the directory apps/my_recorder_app_gxf with the application definition file my_recorder_gxf.yaml. The my_recorder_gxf.yaml application is as follows:

Listing 23 apps/my_recorder_app_gxf/my_recorder_gxf.yaml

%YAML 1.2
---
name: replayer
components:
  - name: output
    type: nvidia::gxf::DoubleBufferTransmitter
  - name: allocator
    type: nvidia::gxf::UnboundedAllocator
  - name: component_serializer
    type: nvidia::gxf::StdComponentSerializer
    parameters:
      allocator: allocator
  - name: entity_serializer
    type: nvidia::holoscan::stream_playback::VideoStreamSerializer # inheriting from nvidia::gxf::EntitySerializer
    parameters:
      component_serializers: [component_serializer]
  - type: nvidia::holoscan::stream_playback::VideoStreamReplayer
    parameters:
      transmitter: output
      entity_serializer: entity_serializer
      boolean_scheduling_term: boolean_scheduling
      directory: "/workspace/test_data/endoscopy/video"
      basename: "surgical_video"
      frame_rate: 0 # as specified in timestamps
      repeat: false # default: false
      realtime: true # default: true
      count: 0 # default: 0 (no frame count restriction)
  - name: boolean_scheduling
    type: nvidia::gxf::BooleanSchedulingTerm
  - type: nvidia::gxf::DownstreamReceptiveSchedulingTerm
    parameters:
      transmitter: output
      min_size: 1
---
name: recorder
components:
  - name: input
    type: nvidia::gxf::DoubleBufferReceiver
  - name: allocator
    type: nvidia::gxf::UnboundedAllocator
  - name: component_serializer
    type: nvidia::gxf::StdComponentSerializer
    parameters:
      allocator: allocator
  - name: entity_serializer
    type: nvidia::holoscan::stream_playback::VideoStreamSerializer # inheriting from nvidia::gxf::EntitySerializer
    parameters:
      component_serializers: [component_serializer]
  - type: MyRecorder
    parameters:
      receiver: input
      serializer: entity_serializer
      out_directory: "/tmp"
      basename: "tensor_out"
  - type: nvidia::gxf::MessageAvailableSchedulingTerm
    parameters:
      receiver: input
      min_size: 1
---
components:
  - name: input_connection
    type: nvidia::gxf::Connection
    parameters:
      source: replayer/output
      target: recorder/input
---
name: scheduler
components:
  - name: clock
    type: nvidia::gxf::RealtimeClock
  - name: greedy_scheduler
    type: nvidia::gxf::GreedyScheduler
    parameters:
      clock: clock

Above:

The replayer reads data from the /workspace/test_data/endoscopy/video/surgical_video.gxf_[index|entities] files, deserializes the binary data to a nvidia::gxf::Tensor using VideoStreamSerializer, and puts the data on an output message in the replayer/output transmitter queue.

The input_connection component connects the replayer/output transmitter queue to the recorder/input receiver queue.

The recorder reads the data in the input receiver queue, uses its entity_serializer (a VideoStreamSerializer) to convert the received nvidia::gxf::Tensor to a binary stream, and outputs to the /tmp/tensor_out.gxf_[index|entities] location specified in the parameters.

The scheduler component, while not explicitly connected to the application-specific entities, performs the orchestration of the components discussed in the Data Flow and Triggering Rules section.
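To see how the pieces interact at runtime, the following toy loop plays the role of a greedy scheduler for the replayer and recorder: it runs at most one entity per step and stops when no entity is ready. Everything here is a simplification made for illustration. run_greedy(), the single shared queue, and the replayer_enabled flag (standing in for the BooleanSchedulingTerm) are hypothetical, and the real GreedyScheduler is considerably more involved.

```python
from collections import deque


def run_greedy(frames: int, capacity: int = 1) -> tuple:
    """Run a toy replayer -> recorder graph, one entity at a time."""
    queue = deque()          # stands in for the recorder/input receiver queue
    remaining = frames       # frames the toy replayer still has to emit
    replayer_enabled = True  # plays the role of the BooleanSchedulingTerm
    recorded, trace = [], []

    while True:
        # Replayer: needs its boolean term enabled and room downstream.
        if replayer_enabled and len(queue) < capacity:
            if remaining == 0:
                replayer_enabled = False  # end of file: stop ticking
                trace.append("replayer:stop")
            else:
                queue.append(frames - remaining)
                remaining -= 1
                trace.append("replayer:tick")
        # Recorder: needs at least one message available.
        elif len(queue) >= 1:
            recorded.append(queue.popleft())
            trace.append("recorder:tick")
        else:
            break  # no entity is ready, so the scheduler stops
    return recorded, trace


recorded, trace = run_greedy(frames=2)
print(recorded)  # [0, 1]
print(trace[-1])  # replayer:stop
```

The loop ends once the replayer has disabled itself and the recorder has drained its queue, that is, when no entity is left that could become ready.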

Note the use of the entity_serializer in our newly built recorder. This component is declared separately in the entity

- name: entity_serializer
  type: nvidia::holoscan::stream_playback::VideoStreamSerializer # inheriting from nvidia::gxf::EntitySerializer
  parameters:
    component_serializers: [component_serializer]

and passed into MyRecorder via the serializer parameter which we exposed in the extension development section (Declare the Parameters to Expose at the Application Level).

- type: MyRecorder
  parameters:
    receiver: input
    serializer: entity_serializer
    out_directory: "/tmp"
    basename: "tensor_out"

For our app to be able to load (and also compile where necessary) the extensions required at runtime, we need to declare a CMake file apps/my_recorder_app_gxf/CMakeLists.txt as follows.

Listing 24 apps/my_recorder_app_gxf/CMakeLists.txt

list(APPEND APP_COMMON_EXTENSIONS
  GXF::std
  GXF::cuda
  GXF::multimedia
  GXF::serialization
)

create_gxe_application(
  NAME my_recorder_gxf
  YAML my_recorder_gxf.yaml
  EXTENSIONS
    ${APP_COMMON_EXTENSIONS}
    my_recorder
    stream_playback
)

# Support automatic datasets download at build time
# Create a CMake target for the my recorder test
add_custom_target(my_recorder_gxf ALL)

# Download the associated dataset if needed
if(HOLOSCAN_DOWNLOAD_DATASETS)
  add_dependencies(my_recorder_gxf endoscopy_data)
endif()

In the declaration of create_gxe_application we list:

the my_recorder component declared in the CMake file of the extension development section, under the EXTENSIONS argument

the existing stream_playback Holoscan extension, which reads data from disk

We also create a dependency between my_recorder_gxf and endoscopy_data targets so that it downloads endoscopy test data when building the application.

To make our newly built application discoverable by the build, in the root of the repository, we add the following line

add_subdirectory(my_recorder_app_gxf)

to apps/CMakeLists.txt.

We now have a minimal working application to test the integration of our newly built MyRecorder extension.

To run our application in a local development container:

Follow the instructions under the Using a Development Container section, steps 1-5 (try clearing the CMake cache by removing the build folder before compiling). You can execute the following commands to build:

./run install_gxf
./run build
# ./run clear_cache # if you want to clear build/install/cache folders

Our test application can now be run using the command

./apps/my_recorder_app_gxf/my_recorder_gxf

from inside the development container. (You can execute ./run launch to run the development container.)

@LINUX:/workspace/holoscan-sdk/build$ ./apps/my_recorder_app_gxf/my_recorder_gxf
2022-08-24 04:46:47.333 INFO gxf/gxe/gxe.cpp@230: Creating context
2022-08-24 04:46:47.339 INFO gxf/gxe/gxe.cpp@107: Loading app: 'apps/my_recorder_app_gxf/my_recorder_gxf.yaml'
2022-08-24 04:46:47.339 INFO gxf/std/yaml_file_loader.cpp@117: Loading GXF entities from YAML file 'apps/my_recorder_app_gxf/my_recorder_gxf.yaml'...
2022-08-24 04:46:47.340 INFO gxf/gxe/gxe.cpp@291: Initializing...
2022-08-24 04:46:47.437 INFO gxf/gxe/gxe.cpp@298: Running...
2022-08-24 04:46:47.437 INFO gxf/std/greedy_scheduler.cpp@170: Scheduling 2 entities
2022-08-24 04:47:14.829 INFO /workspace/holoscan-sdk/gxf_extensions/stream_playback/video_stream_replayer.cpp@144: Reach end of file or playback count reaches to the limit. Stop ticking.
2022-08-24 04:47:14.829 INFO gxf/std/greedy_scheduler.cpp@329: Scheduler stopped: Some entities are waiting for execution, but there are no periodic or async entities to get out of the deadlock.
2022-08-24 04:47:14.829 INFO gxf/std/greedy_scheduler.cpp@353: Scheduler finished.
2022-08-24 04:47:14.829 INFO gxf/gxe/gxe.cpp@320: Deinitializing...
2022-08-24 04:47:14.863 INFO gxf/gxe/gxe.cpp@327: Destroying context
2022-08-24 04:47:14.863 INFO gxf/gxe/gxe.cpp@333: Context destroyed.

A successful run (it takes about 30 secs) will result in output files (tensor_out.gxf_index and tensor_out.gxf_entities in /tmp) that match the original input files (surgical_video.gxf_index and surgical_video.gxf_entities under test_data/endoscopy/video) exactly.

@LINUX:/workspace/holoscan-sdk/build$ ls -al /tmp/
total 821384
drwxrwxrwt 1 root root      4096 Aug 24 04:37 .
drwxr-xr-x 1 root root      4096 Aug 24 04:36 ..
drwxrwxrwt 2 root root      4096 Aug 11 21:42 .X11-unix
-rw-r--r-- 1 1000 1000    729309 Aug 24 04:47 gxf_log
-rw-r--r-- 1 1000 1000 840054484 Aug 24 04:47 tensor_out.gxf_entities
-rw-r--r-- 1 1000 1000     16392 Aug 24 04:47 tensor_out.gxf_index

@LINUX:/workspace/holoscan-sdk/build$ ls -al ../test_data/endoscopy/video/
total 839116
drwxr-xr-x 2 1000 1000      4096 Aug 24 02:08 .
drwxr-xr-x 4 1000 1000      4096 Aug 24 02:07 ..
-rw-r--r-- 1 1000 1000  19164125 Jun 17 16:31 raw.mp4
-rw-r--r-- 1 1000 1000 840054484 Jun 17 16:31 surgical_video.gxf_entities
-rw-r--r-- 1 1000 1000     16392 Jun 17 16:31 surgical_video.gxf_index
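The listings show that the recorded files have the same sizes as the originals. To confirm that they match byte for byte, a comparison along the following lines could be used. This is a sketch: recordings_match() is a hypothetical helper, and the demo runs on small placeholder files in a temporary directory rather than on the real data under test_data/endoscopy/video and /tmp.

```python
import filecmp
import tempfile
from pathlib import Path


def recordings_match(src_dir: Path, src_base: str, out_dir: Path, out_base: str) -> bool:
    """Byte-compare the .gxf_index and .gxf_entities files of two recordings."""
    return all(
        filecmp.cmp(
            src_dir / f"{src_base}.{ext}",
            out_dir / f"{out_base}.{ext}",
            shallow=False,  # compare file contents, not only os.stat() data
        )
        for ext in ("gxf_index", "gxf_entities")
    )


# Self-contained demo with placeholder files.
with tempfile.TemporaryDirectory() as tmp:
    src, out = Path(tmp, "src"), Path(tmp, "out")
    src.mkdir()
    out.mkdir()
    for ext, payload in (("gxf_index", b"index"), ("gxf_entities", b"entities")):
        (src / f"surgical_video.{ext}").write_bytes(payload)
        (out / f"tensor_out.{ext}").write_bytes(payload)
    same = recordings_match(src, "surgical_video", out, "tensor_out")

    # Corrupt one output file and compare again.
    (out / "tensor_out.gxf_index").write_bytes(b"something else")
    differs = not recordings_match(src, "surgical_video", out, "tensor_out")

print(same, differs)  # True True
```

For the real run, the call would take the test data directory and /tmp with the base names surgical_video and tensor_out.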