Overview

NVIDIA Clara is an open, scalable computing platform that enables development of medical imaging applications for hybrid (embedded, on-premise, or cloud) computing environments to create intelligent instruments and automated healthcare workflows.

The Clara Deploy SDK provides a set of GPU-accelerated libraries for computing, graphics, and AI; example applications for image processing and rendering; and computational workflows for CT, MRI, and ultrasound data. All of these use Docker-based containers and Kubernetes, either to virtualize medical imaging workflows by connecting to a PACS or to scale applications for any medical instrument.

This documentation explains how to get started with the Clara Deploy SDK. It describes an example application, workflow generation, and the Clara containers, and it also includes release notes. Developers deploying or developing on Clara should have at least a basic understanding of the related technologies, including Docker, Kubernetes, Helm, and the TensorRT Inference Server (TRTIS).

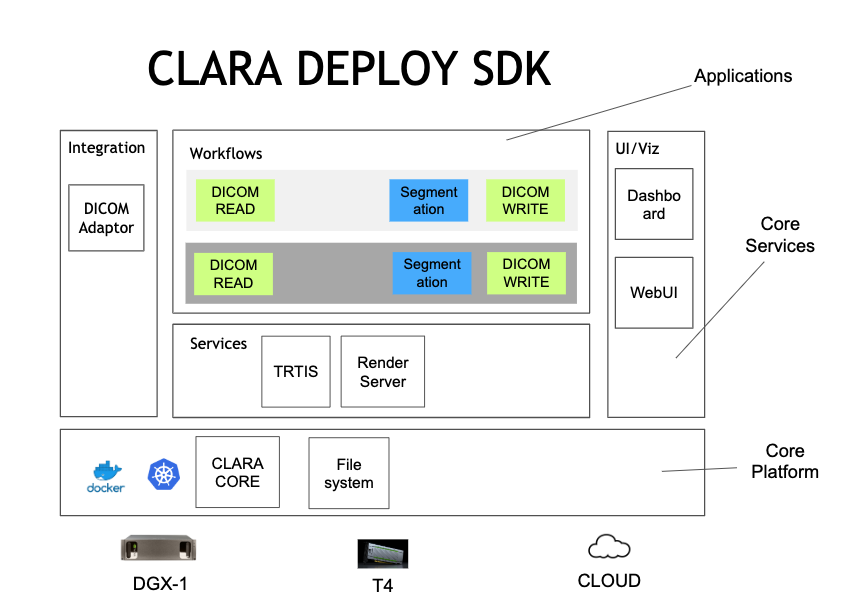

The Clara Deploy SDK is a collection of containers that work together to provide an end-to-end medical image processing workflow. The overall ecosystem can run on different cloud providers or on local hardware with Pascal or newer NVIDIA GPUs. The Clara Deploy SDK consists of the Core Platform, Core Services, Integrations, and Applications, as shown in the diagram below:

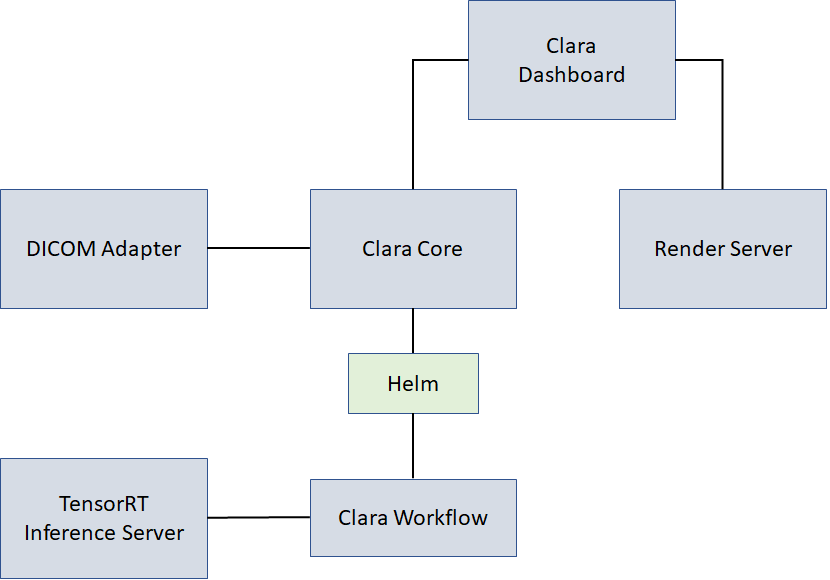

The Clara Deploy SDK is run via a Helm chart. The different components communicate with each other via the paths seen in the diagram below:

The Clara Core component is the central part of the Clara Deploy SDK and controls all aspects of Clara payloads, workflows, jobs, and results. It performs the following tasks:

- Accepts workflows and executes them as jobs.
- Deploys workflow containers via Helm when they are needed, and removes them when their resources are needed elsewhere.
- Serves as the single source of truth for all system state.
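To make the "single source of truth" role concrete, here is a minimal sketch of a state store that accepts workflows as jobs and owns every job state transition. The class names, states, and allowed transitions (`CoreStateStore`, `JobState`, PENDING to RUNNING to COMPLETED/FAILED) are hypothetical and are not the Clara Core API; Helm-based container deployment is not modeled.

```python
from dataclasses import dataclass, field
from enum import Enum
from itertools import count


class JobState(Enum):
    # Hypothetical job states, chosen for illustration only.
    PENDING = "pending"
    RUNNING = "running"
    COMPLETED = "completed"
    FAILED = "failed"


@dataclass
class Job:
    job_id: int
    workflow: str
    payload_id: str
    state: JobState = JobState.PENDING


@dataclass
class CoreStateStore:
    """Hypothetical single source of truth for jobs (not the Clara Core API)."""
    jobs: dict = field(default_factory=dict)
    _ids: count = field(default_factory=lambda: count(1))

    def submit(self, workflow: str, payload_id: str) -> Job:
        # Accepting a workflow creates a new job bound to one payload.
        job = Job(next(self._ids), workflow, payload_id)
        self.jobs[job.job_id] = job
        return job

    def transition(self, job_id: int, new_state: JobState) -> None:
        # Every state change goes through the store, so all components
        # that read from it see the same view of the system.
        allowed = {
            JobState.PENDING: {JobState.RUNNING},
            JobState.RUNNING: {JobState.COMPLETED, JobState.FAILED},
        }
        job = self.jobs[job_id]
        if new_state not in allowed.get(job.state, set()):
            raise ValueError(f"illegal transition {job.state} -> {new_state}")
        job.state = new_state
```

Centralizing transitions in one object means an illegal update (for example, restarting a completed job) is rejected in one place rather than checked separately by each component.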

DICOM Adapter is the integration point between the hospital PACS and the Clara Deploy SDK. In a typical Clara Deploy SDK deployment, it is the first data interface to Clara. DICOM Adapter receives DICOM data, places the data in a payload, and triggers a workflow. When the workflow has produced a result, DICOM Adapter moves the result to a PACS.
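The four steps DICOM Adapter performs can be sketched as a small pipeline. This is a minimal model under stated assumptions: `DicomAdapterSketch`, `flush_to_payload`, and the `trigger_workflow` callback are hypothetical names, DICOM instances are treated as opaque bytes, and an in-memory list stands in for the destination PACS. It does not implement DICOM networking.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Payload:
    payload_id: str
    instances: List[bytes]  # received DICOM objects, treated as opaque bytes


@dataclass
class DicomAdapterSketch:
    """Hypothetical model of receive -> payload -> trigger -> push to PACS."""
    trigger_workflow: Callable[[Payload], List[bytes]]
    pacs_archive: List[bytes] = field(default_factory=list)  # stand-in for a PACS
    _received: List[bytes] = field(default_factory=list)

    def receive(self, instance: bytes) -> None:
        # Step 1: accept DICOM data sent from the hospital PACS.
        self._received.append(instance)

    def flush_to_payload(self, payload_id: str) -> None:
        # Step 2: place everything received so far into a payload.
        payload = Payload(payload_id, list(self._received))
        self._received.clear()
        # Step 3: trigger a workflow on the payload and wait for its results.
        results = self.trigger_workflow(payload)
        # Step 4: move the results to the PACS.
        self.pacs_archive.extend(results)
```

For example, `DicomAdapterSketch(trigger_workflow=lambda p: [b"result:" + x for x in p.instances])` models a workflow that produces one result per received instance.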

A Clara Workflow is a collection of containers that are configured to work together to execute a medical image processing task. Clara publishes an API that allows any container to be added to a workflow in the Clara Deploy SDK. These are Docker containers based on nvidia-docker, whose applications have been enhanced to support the Clara Container Workflow Driver.

The TensorRT Inference Server (TRTIS) is an inference solution optimized for NVIDIA GPUs. It provides an inference service via an HTTP or gRPC endpoint.

Clara Dashboard is the interface for monitoring an instance of the Clara Deploy SDK. It performs the following tasks:

- Displays renderings of the output images.
- Displays the status of running jobs.

Render Server provides visualization of medical data.

The Clara I/O model is designed to follow standards that Kubernetes can execute and scale. Payloads and results are kept separate for two reasons: a payload is preserved so that its job can be restarted if it fails or is interrupted by a higher-priority job, and the same inputs can be reused in multiple workflows.

- Payloads (inputs) are presented as read-only volumes.
- Jobs are executions of a workflow on a payload.
- Results (outputs and scratch space) are read-write volumes, each paired one-to-one with the job that created it.
- Datasets (reusable inputs) are read-only volumes.
- Ports provide application I/O.
- Workflow configurations are Helm charts.
- Runtime container configuration is passed via a YAML file.
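The volume rules above can be modeled in a few lines: inputs are exposed read-only so several jobs can share them, and each job gets exactly one read-write result volume. This is a minimal in-memory sketch, not how Clara mounts Kubernetes volumes; `ReadOnlyVolume`, `ResultVolume`, `JobRegistry`, and `make_read_only` are hypothetical names, and Python's `MappingProxyType` stands in for a read-only mount.

```python
from dataclasses import dataclass
from types import MappingProxyType
from typing import Callable, Dict, Mapping


@dataclass(frozen=True)
class ReadOnlyVolume:
    """Payloads and datasets: inputs that jobs may read but never modify."""
    name: str
    files: Mapping[str, bytes]


class ResultVolume:
    """Read-write output and scratch space owned by exactly one job."""

    def __init__(self, job_id: str) -> None:
        self.job_id = job_id
        self.files: Dict[str, bytes] = {}

    def write(self, path: str, data: bytes) -> None:
        self.files[path] = data


def make_read_only(name: str, files: Mapping[str, bytes]) -> ReadOnlyVolume:
    # Copy first, then wrap in a read-only view, so neither the caller
    # nor any job can change the stored inputs.
    return ReadOnlyVolume(name, MappingProxyType(dict(files)))


class JobRegistry:
    """Pairs each job with its own result volume (one-to-one)."""

    def __init__(self) -> None:
        self._results: Dict[str, ResultVolume] = {}

    def start_job(
        self,
        job_id: str,
        payload: ReadOnlyVolume,
        workflow: Callable[[ReadOnlyVolume, ResultVolume], None],
    ) -> ResultVolume:
        if job_id in self._results:
            raise ValueError(f"job {job_id} already has a result volume")
        result = ResultVolume(job_id)
        self._results[job_id] = result
        # The workflow reads the shared payload and writes only to its own result.
        workflow(payload, result)
        return result
```

Because the payload is never written, a failed or preempted job can simply be started again on the same payload, and two different jobs can consume it without interfering with each other.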