DeepStream GPU Video Source Plugin

The DeepStream SDK is based on the GStreamer framework and provides a collection of proprietary GStreamer plugins that offer GPU-accelerated features for optimizing video analytics applications.

While DeepStream provides plugins for a wide variety of use cases, a new plugin may be required to integrate DeepStream with custom data input, output, or processing algorithms. For Clara Holoscan, which provides high-performance edge computing platforms for medical video analytics applications, one of the most common requirements is the ability to capture video data from an external hardware device so that the data can be processed efficiently on the GPU using DeepStream or other proprietary algorithms. For example, an ultrasound device may need to input raw data directly into NVIDIA GPU memory so that it can be beamformed on the GPU before being passed through a model in DeepStream for AI inference and annotation.

Due to the proprietary nature of third-party hardware devices and processing algorithms, it is not possible for NVIDIA to provide a generic GStreamer plugin that works with all third-party products, and it is generally the partner’s responsibility to develop their own plugins. That said, we recognize that writing GStreamer plugins can be difficult for developers without GStreamer experience, and that making these plugins work with GPU buffers and integrate with DeepStream complicates the process further.

To help partners get started with writing GPU and DeepStream-enabled GStreamer plugins, the Clara Holoscan SDK includes a GPU Video Test Source GStreamer plugin, nvvideotestsrc. This sample plugin produces a stream of GPU buffers that are allocated, filled with a test pattern using CUDA, and then output by the plugin such that they can be consumed by downstream DeepStream components. When consumed only by other DeepStream or GPU-enabled plugins, this means that the buffers can go from end-to-end directly in GPU memory, avoiding any copies to/from system memory throughout the pipeline.

The DeepStream GPU Video Test Source Plugin is automatically installed with the Clara Holoscan SDK. To test this plugin, the following command can be used.

$ gst-launch-1.0 nvvideotestsrc ! nveglglessink

This command, using the default nvvideotestsrc plugin parameters, will bring up a window showing an SMPTE color bars test pattern.

Fig. 4 nvvideotestsrc plugin SMPTE color bars pattern

Additional test patterns are available, some of which include animation. The

pattern that is generated by the plugin can be configured using the

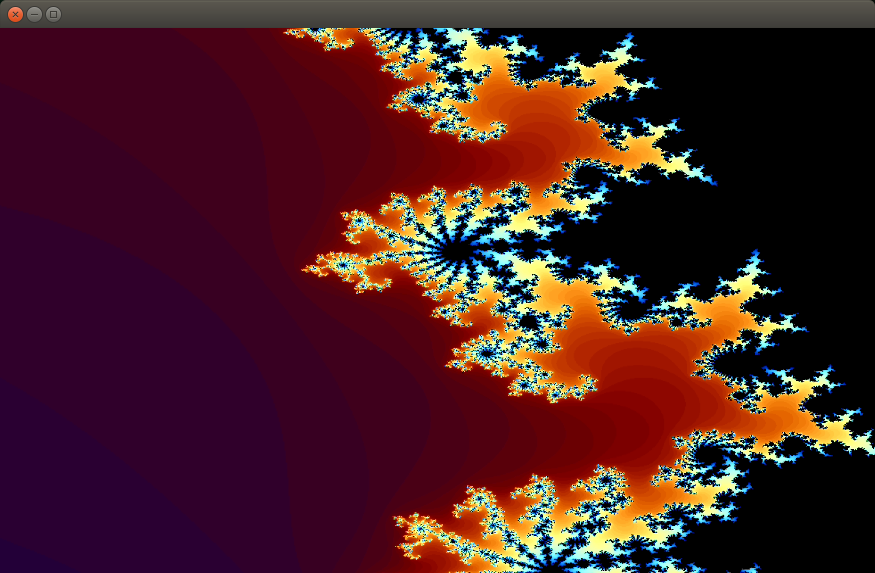

pattern parameter. For example, to generate a Mandelbrot set pattern,

which animates to zoom in and out of the image, the following command can

be used.

$ gst-launch-1.0 nvvideotestsrc pattern=mandelbrot ! nveglglessink

Fig. 5 nvvideotestsrc plugin Mandelbrot set pattern

Unless a downstream component requests a specific video resolution or framerate, the plugin defaults to a 1280x720 stream at 60FPS. To request a specific resolution or framerate, GStreamer caps can be provided to the gst-launch-1.0 command between nvvideotestsrc and the downstream plugin. For example, the following command will tell the plugin to generate a 1920x1080 stream at 30FPS.

$ gst-launch-1.0 nvvideotestsrc ! 'video/x-raw(memory:NVMM), format=NV12, width=1920, height=1080, framerate=30/1' ! nveglglessink
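When the pipeline is built from an application rather than from the shell, the same caps string can be assembled programmatically. The following is a minimal sketch only: `build_nvmm_caps` is a hypothetical helper that is not part of the plugin or the SDK, and it simply formats the caps string shown in the command above for a given resolution and framerate.

```c
#include <assert.h>
#include <stddef.h>
#include <stdio.h>
#include <string.h>

/* Hypothetical helper (not part of the plugin or the SDK): writes the caps
 * string used in the gst-launch-1.0 command above into `out`.
 * Returns 0 on success, or -1 if an argument is invalid or the buffer is
 * too small to hold the complete string. */
int build_nvmm_caps(char *out, size_t out_size,
                    int width, int height, int fps_num, int fps_den)
{
    if (out == NULL || out_size == 0 ||
        width <= 0 || height <= 0 || fps_num <= 0 || fps_den <= 0) {
        return -1;
    }

    /* snprintf returns the length it wanted to write, so a value that is
     * greater than or equal to out_size means the string was truncated. */
    int n = snprintf(out, out_size,
                     "video/x-raw(memory:NVMM), format=NV12, "
                     "width=%d, height=%d, framerate=%d/%d",
                     width, height, fps_num, fps_den);

    return (n < 0 || (size_t)n >= out_size) ? -1 : 0;
}
```

Calling `build_nvmm_caps(caps, sizeof caps, 1920, 1080, 30, 1)` fills `caps` with the string used in the command above. In a GStreamer application, a string like this could then be passed to a function such as `gst_caps_from_string`.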

If any of these commands fail, make sure that the DISPLAY environment variable is set to the ID of the X11 display you are using, e.g.:

$ export DISPLAY=:0

The source code for the GPU Video Test Source plugin is installed to the following path.

/opt/nvidia/clara-holoscan-sdk/clara-holoscan-gstnvvideotestsrc

The plugin is compiled and installed from this location during the Clara Holoscan SDK installation. If any changes to the plugin source code are made, the plugin can be recompiled and installed with the following.

$ cd /opt/nvidia/clara-holoscan-sdk/clara-holoscan-gstnvvideotestsrc/build

$ sudo make && sudo make install

A primary use for the Clara Holoscan SDK is to develop edge devices that capture video frames from an external device into GPU memory for further processing and analytics. In this case, it is recommended that the device drivers for the external device take advantage of GPUDirect RDMA so that data from the device is copied directly into GPU memory without first passing through system memory. Assuming the device drivers support RDMA, a GStreamer plugin can then use this RDMA support to accelerate DeepStream and GPU-enabled pipelines.

There are a number of resources that can be helpful for developers to add GPUDirect RDMA support to their DeepStream and GPU-enabled Clara Holoscan products.

The jetson-rdma-picoevb GPUDirect RDMA sample drivers and applications.

This sample provides a minimal hardware-based demonstration of GPUDirect RDMA including both the kernel drivers and userspace applications required to interface with an inexpensive and off-the-shelf FPGA board (PicoEVB). This is a good starting point for learning how to add RDMA support to your device drivers.

The nvvideotestsrc plugin.

The source code for this plugin provides some basic placeholders and documentation that help point developers in the right direction when wanting to add RDMA support to a GStreamer source plugin. Specifically, the gst_nv_video_test_src_fill method, which is the GStreamer callback responsible for filling the GPU buffers, contains the following.

    gst_buffer_map(buffer, &map, GST_MAP_READWRITE);

    // The memory of a GstBuffer allocated by an NvDsBufferPool contains
    // the NvBufSurface descriptor which then describes the actual GPU
    // buffer allocation(s) in its surfaceList.
    NvBufSurface *surf = (NvBufSurface*)map.data;

    // Use CUDA to fill the GPU buffer with a test pattern.
    //
    // NOTE: In this source, we currently only fill the GPU buffer using CUDA.
    //       This source could be modified to fill the buffer instead with other
    //       mechanisms such as mapped CPU writes or RDMA transfers:
    //
    //   1) To use mapped CPU writes, the GPU buffer could be mapped into
    //      the CPU address space using NvBufSurfaceMap.
    //
    //   2) To use RDMA transfers from another hardware device, the GPU
    //      address for the buffer(s) (i.e. the `dataPtr` member of the
    //      NvBufSurfaceParams) could be passed here to the device driver
    //      that is responsible for performing the RDMA transfer into the
    //      buffer. Details on how RDMA to NVIDIA GPUs can be performed by
    //      device drivers is provided in a demonstration application
    //      available at https://github.com/NVIDIA/jetson-rdma-picoevb.
    //
    // For more details on the NvBufSurface API, see nvbufsurface.h
    //
    gst_nv_video_test_src_cuda_prepare(self, surf->surfaceList);
    self->cuda_fill_image(self);

    // Set the numFilled field of the surface with the number of surfaces
    // that have been filled (always 1 in this example).
    // This metadata is required by other downstream DeepStream plugins.
    surf->numFilled = 1;

    gst_buffer_unmap(buffer, &map);
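Option 2 in the comment above, handing the buffer address to a device driver, can be sketched as follows. This is an illustration under stated assumptions and not the plugin's code. `SketchSurface` and `SketchSurfaceParams` are simplified stand-ins for the real types declared in nvbufsurface.h and mirror only the members relevant here. `sketch_rdma_capture` is a hypothetical driver entry point; it is simulated with `memset` on ordinary memory so that the sketch compiles and runs without a GPU or capture hardware.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* Simplified stand-ins for the real types in nvbufsurface.h (assumption:
 * only the members needed for this sketch are reproduced). */
typedef struct {
    void  *dataPtr;   /* buffer address; a GPU address in the real API */
    size_t dataSize;  /* size of the buffer in bytes */
} SketchSurfaceParams;

typedef struct {
    unsigned int         numFilled;    /* number of surfaces that were filled */
    SketchSurfaceParams *surfaceList;  /* per-surface buffer descriptions */
} SketchSurface;

/* Hypothetical driver call. A real driver would program the device to DMA
 * one frame into `dst`; here the transfer is simulated by writing a
 * constant byte into host memory. */
static int sketch_rdma_capture(void *dst, size_t size)
{
    if (dst == NULL || size == 0) {
        return -1;
    }
    memset(dst, 0x80, size);
    return 0;
}

/* Mirrors the shape of the fill callback: pass the buffer address to the
 * driver, then record how many surfaces were filled. */
int sketch_fill(SketchSurface *surf)
{
    if (surf == NULL || surf->surfaceList == NULL) {
        return -1;
    }

    SketchSurfaceParams *params = &surf->surfaceList[0];
    if (sketch_rdma_capture(params->dataPtr, params->dataSize) != 0) {
        surf->numFilled = 0;
        return -1;
    }

    /* One surface was filled, matching the single-surface case above. */
    surf->numFilled = 1;
    return 0;
}
```

The point of the sketch is the ordering: the transfer into the buffer completes first, and `numFilled` is set only once the buffer holds valid data, since downstream DeepStream plugins rely on that field.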

The AJA Video Systems NTV2 SDK and GStreamer plugin.

The use of AJA Video Systems hardware with Clara Holoscan platforms is documented in the AJA Video Systems section. This setup requires the installation of the AJA NTV2 SDK and Drivers and the AJA GStreamer plugin. These components are both open source and provide real world examples of video capture device drivers and a GStreamer plugin that use GPUDirect RDMA, respectively.

In the case of the device driver support within the NTV2 SDK, all of the RDMA support is enabled at compile time using the AJA_RDMA flag, and so the RDMA specifics within the driver can be located using the #ifdef AJA_RDMA directives within the source code.

In the case of the GStreamer plugin, the RDMA support was added to the plugin by this change: Add NVMM RDMA support for NVIDIA GPU buffer output.
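For readers unfamiliar with compile-time feature flags such as AJA_RDMA, the general pattern looks like the sketch below. It is a generic illustration and is not taken from the NTV2 SDK: `SKETCH_RDMA` is a hypothetical flag standing in for AJA_RDMA, and `sketch_transfer_mode` is a hypothetical function.

```c
#include <assert.h>
#include <string.h>

/* SKETCH_RDMA is a hypothetical flag standing in for AJA_RDMA. Building
 * with -DSKETCH_RDMA selects the RDMA branch; building without it selects
 * the fallback branch. Only one branch is compiled into the binary. */
const char *sketch_transfer_mode(void)
{
#ifdef SKETCH_RDMA
    /* RDMA-specific code would live here. */
    return "rdma";
#else
    /* Fallback path that stages data through system memory. */
    return "system-memory";
#endif
}
```

Because every RDMA-specific section is wrapped this way, searching the driver source for the flag name finds all of the relevant code.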