NVIDIA DOCA Developer Guide

This document details the recommended steps to set up an NVIDIA DOCA development environment.

This guide is intended for software developers aiming to modify existing NVIDIA® DOCA applications or develop their own DOCA-based software.

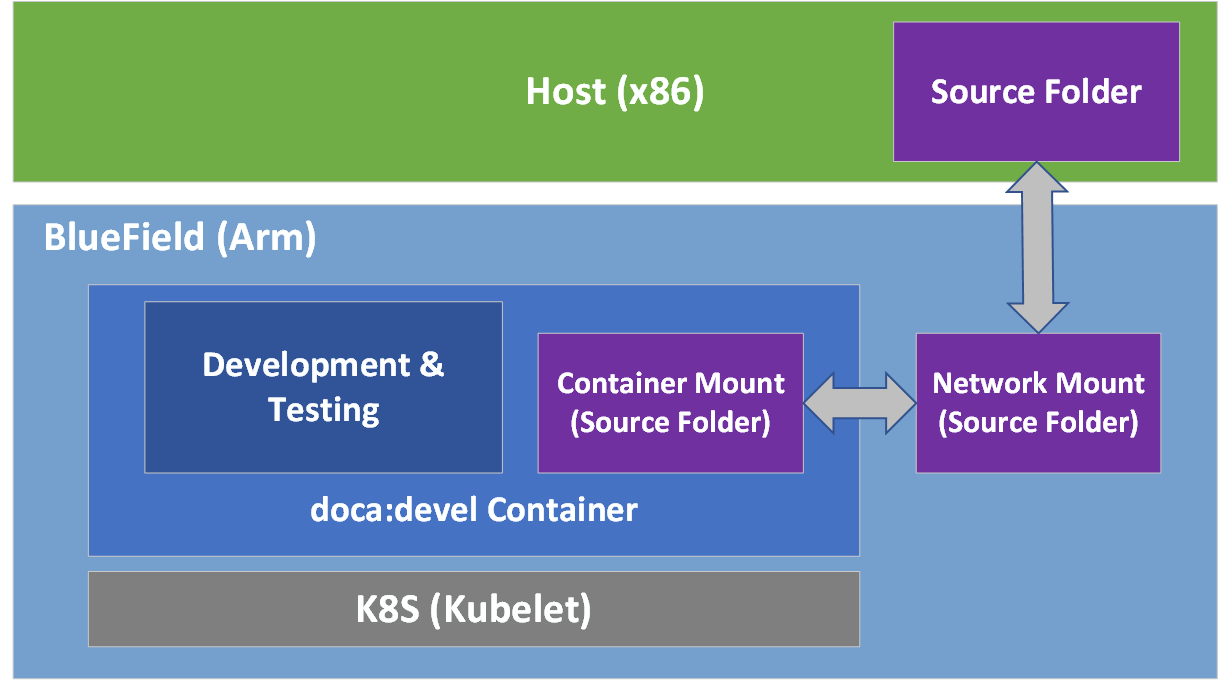

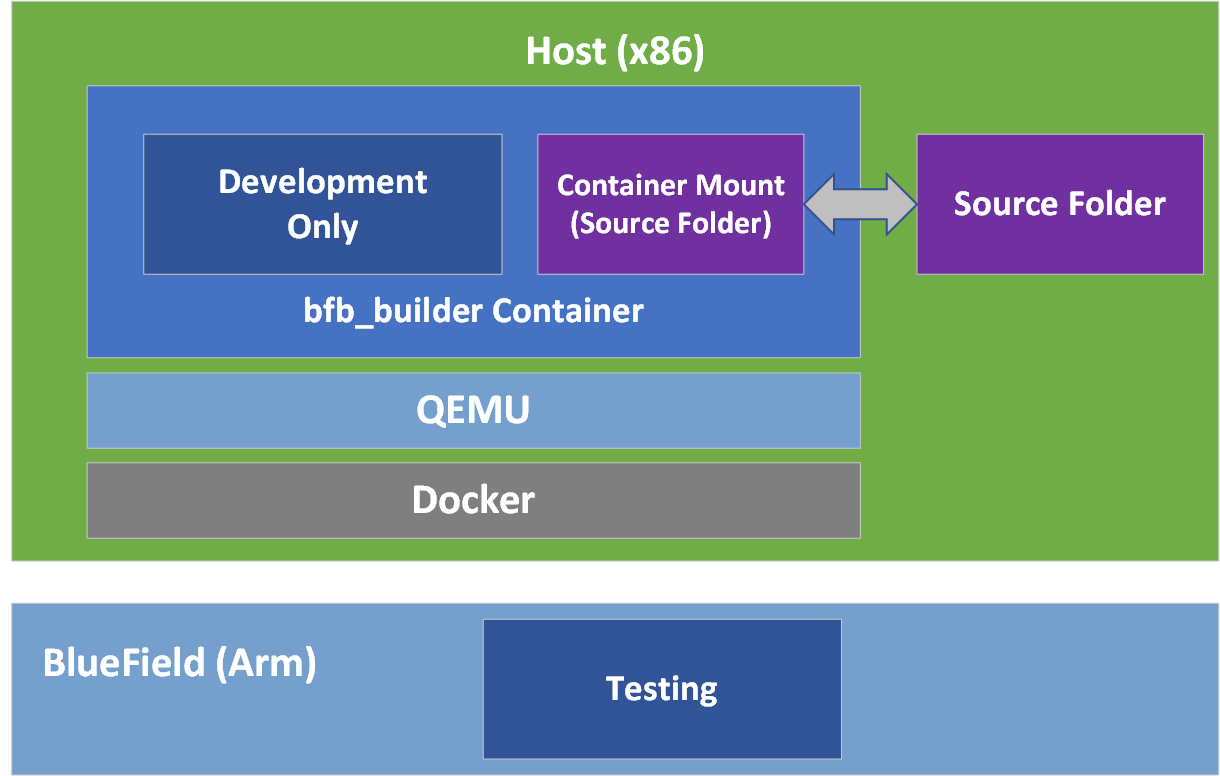

For steps to install DOCA on NVIDIA® BlueField® DPU, refer to the DOCA Installation Guide. This guide focuses on the recommended flow for developing DOCA-based software, and will target two main environments:

- BlueField DPU is accessible and can be used during the development and testing process

- BlueField DPU is inaccessible, and the development happens on the host/a different server

It is recommended to follow the former case, leveraging the DPU during the development and testing process.

2.1. Setup

A DOCA development container is provided as part of the DOCA base image containers, and it is recommended to deploy it on top of the DPU. The doca:devel container can be found on NGC, and the full instructions for deploying the DOCA container on the DPU can be found in the DOCA Container User Guide.

The development container allows developers to develop and test their DOCA-based software in a developer-friendly environment that comes pre-shipped with a set of handy development tools. In contrast to the BlueField OS that is meant to be an efficient runtime environment for DOCA products, the development container is focused on improving the development experience and is designed for that purpose.

2.2. Development

It is recommended to do the development within the doca:devel container.

That said, some developers prefer different integrated development environments (IDEs) or development tools, and sometimes will prefer working using a graphical IDE, at least until it is time to compile the code. As such, the recommendation is to mount a network share to the DPU (refer to "Useful CLI Commands" for more information) and configure it in the container's .yaml file. This way the code is used by the graphical IDE and is directly accessible inside the development container.

| State | Shared folder entry |
| --- | --- |
| Before | Shared folder is commented out. |
| After | Shared folder is active. |

The container's .yaml file is sensitive to indentation. Make sure to use only spaces (' '), and to keep each indentation level at a width of 2 space characters.
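A stray tab or an odd-width indent is easy to miss, so a quick check before deploying the file can help. The sketch below is a hypothetical helper (the name check_yaml_indent is an assumption and is not part of DOCA) that flags lines indented with tabs or with an odd number of spaces. It is a heuristic only and does not parse YAML.

```shell
#!/usr/bin/env bash
# Hypothetical helper, not part of DOCA: flag indentation that breaks the
# "spaces only, 2 per level" rule. Offending lines are printed with their
# line numbers. This is a text heuristic, not a YAML parser.
check_yaml_indent() {
  file="$1"
  status=0
  tab="$(printf '\t')"
  # Leading whitespace that contains a tab character.
  if grep -n "^[[:space:]]*${tab}" "$file"; then
    echo "error: tab characters found in indentation" >&2
    status=1
  fi
  # An odd number of leading spaces before the first non-space character.
  if grep -nE '^( {2})* [^ ]' "$file"; then
    echo "error: indentation is not a multiple of 2 spaces" >&2
    status=1
  fi
  return "$status"
}
```

For example, `check_yaml_indent <container-file>.yaml` returns a non-zero status if any offending line is found.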

Having the same code folder accessible from the IDE and the container helps prevent edge cases where the compilation fails due to a typo in the code, but the typo is only fixed locally within the container and not propagated to the main source folder.

2.3. Testing

The container is marked as "privileged", so it can directly access the hardware capabilities of the BlueField DPU. This means that once the tested program compiles successfully, it can be tested directly from within the container, without the need to copy it to the DPU and run it there.

2.4. Publishing

Once the program passes the testing phase, it should be prepared for deployment. While some proof-of-concept (POC) programs are just copied "as-is" in their binary form, most deployments will probably be in the form of a package (.deb/.rpm) or a container.

The binary package can be constructed as-is inside the current doca:devel container, or as part of a CI pipeline that uses the same development container.

For the construction of a container to ship the developed software, it is recommended to use a multi-staged build that ships the software on top of the runtime-oriented DOCA base images:

- doca:base-rt – slim DOCA runtime environment

- doca:full-rt – full DOCA runtime environment similar to BlueField OS

The runtime DOCA base images, alongside more details about their structure, can be found under the same NGC page that hosts the doca:devel image.

For a multi-staged build, it is recommended to compile the software inside the doca:devel container, and later copy it to one of the runtime container images. All relevant images must be pulled directly from NGC (using docker pull) to the container registry of the DPU.
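As an illustration of the multi-staged flow described above, the following sketch writes a two-stage Dockerfile to a given folder. Several details are assumptions rather than part of this guide: the helper name write_multistage_dockerfile, the binary name my_doca_app, and the use of Meson and Ninja as the build system. The image references are passed as build arguments, so no registry path is assumed.

```shell
#!/usr/bin/env bash
# Hypothetical helper: write a two-stage Dockerfile into the given folder.
write_multistage_dockerfile() {
  out_dir="$1"
  cat > "$out_dir/Dockerfile" <<'EOF'
# Image references are supplied at build time (see the usage note).
ARG DEVEL_IMAGE
ARG RUNTIME_IMAGE

# Stage 1: compile inside the development image.
FROM ${DEVEL_IMAGE} AS builder
WORKDIR /src
COPY . /src
# Assumption: the project builds with Meson and Ninja.
RUN meson setup /tmp/build && ninja -C /tmp/build

# Stage 2: ship only the compiled binary on top of a runtime image.
FROM ${RUNTIME_IMAGE}
# Assumption: my_doca_app is a placeholder binary name.
COPY --from=builder /tmp/build/my_doca_app /usr/local/bin/my_doca_app
ENTRYPOINT ["/usr/local/bin/my_doca_app"]
EOF
}
```

After calling the helper on the source folder, the image could be built with something like `docker build --build-arg DEVEL_IMAGE=<doca:devel reference> --build-arg RUNTIME_IMAGE=<doca:base-rt reference> -t my_doca_app .`, where both references are the images pulled from NGC.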

3.1. Setup

If the development process needs to be done without access to a BlueField DPU, the recommendation is to use a QEMU-based deployment of a container on top of a regular x86 server. The development container for the host will be a "bfb_builder" development container which comes as part of the SDK Manager during installation of DOCA on the host.

- Make sure Docker is installed on your host. Run:

docker version

If it is not installed, visit the official Install Docker Engine webpage for installation instructions.

- Install QEMU on the host.

Note:

This step is for x86 hosts only. If you are working on an aarch64 host, move to the next step.

- For an Ubuntu host, run:

sudo apt-get install qemu binfmt-support qemu-user-static

sudo docker run --rm --privileged multiarch/qemu-user-static --reset -p yes

- For a CentOS/RHEL 7.x host, run:

sudo yum install epel-release

sudo yum install qemu-system-arm

- For a CentOS 8.0/8.2 host, run:

sudo yum install epel-release

sudo yum install qemu-kvm

- For a Fedora host, run:

sudo yum install qemu-system-aarch64
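The per-distribution choices above can be summarized in a small lookup helper. This is a sketch only: the function name qemu_install_cmds is hypothetical, and it prints the commands listed in this guide rather than running them. It assumes the distribution ID and version follow the ID and VERSION_ID fields of /etc/os-release.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print (not run) the QEMU installation commands
# listed in this guide for a given distribution ID and version.
qemu_install_cmds() {
  id="$1"
  major="${2%%.*}"   # keep only the major version, e.g. "8.2" -> "8"
  case "$id:$major" in
    ubuntu:*)
      echo "sudo apt-get install qemu binfmt-support qemu-user-static"
      echo "sudo docker run --rm --privileged multiarch/qemu-user-static --reset -p yes"
      ;;
    centos:7|rhel:7)
      echo "sudo yum install epel-release"
      echo "sudo yum install qemu-system-arm"
      ;;
    centos:8)
      echo "sudo yum install epel-release"
      echo "sudo yum install qemu-kvm"
      ;;
    fedora:*)
      echo "sudo yum install qemu-system-aarch64"
      ;;
    *)
      echo "no QEMU instructions in this guide for: $id $2" >&2
      return 1
      ;;
  esac
}
```

On a host that provides /etc/os-release, a possible usage is `. /etc/os-release && qemu_install_cmds "$ID" "$VERSION_ID"`.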

- If you are using CentOS or Fedora on the host, verify that qemu-aarch64.conf exists. Run:

$ cat /etc/binfmt.d/qemu-aarch64.conf

If the file is missing, create it (root privileges are required to write under /etc). Run:

echo ":qemu-aarch64:M::\x7fELF\x02\x01\x01\x00\x00\x00\x00\x00\x00\x00\x00\x00\x02\x00\xb7:\xff\xff\xff\xff\xff\xff\xff\xfc\xff\xff\xff\xff\xff\xff\xff\xff\xfe\xff\xff:/usr/bin/qemu-aarch64-static:" > /etc/binfmt.d/qemu-aarch64.conf

- If you are using CentOS or Fedora on the host, restart system binfmt. Run:

$ sudo systemctl restart systemd-binfmt
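The CentOS/Fedora check for qemu-aarch64.conf can be made repeatable with a small helper that creates the file only when it is absent or empty. This is a sketch under stated assumptions: the function name ensure_binfmt_conf is hypothetical, and the registration string is copied from the echo command above. The path is a parameter so the helper can be tried on a scratch file first.

```shell
#!/usr/bin/env bash
# Registration line copied from this guide. Single quotes keep the
# backslash escapes literal, as binfmt expects them in the file.
BINFMT_LINE=':qemu-aarch64:M::\x7fELF\x02\x01\x01\x00\x00\x00\x00\x00\x00\x00\x00\x00\x02\x00\xb7:\xff\xff\xff\xff\xff\xff\xff\xfc\xff\xff\xff\xff\xff\xff\xff\xff\xfe\xff\xff:/usr/bin/qemu-aarch64-static:'

# Hypothetical helper: create the binfmt configuration file only if it is
# missing or empty. Writing to the default path requires root privileges.
ensure_binfmt_conf() {
  conf="${1:-/etc/binfmt.d/qemu-aarch64.conf}"
  if [ -s "$conf" ]; then
    echo "exists: $conf"
  else
    printf '%s\n' "$BINFMT_LINE" > "$conf"
    echo "created: $conf"
  fi
}
```

When run as root with no argument, the helper targets /etc/binfmt.d/qemu-aarch64.conf. The systemd-binfmt restart shown above is still needed afterwards for the registration to take effect.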

- Load the docker image.

- Make sure the docker service is started. Run:

systemctl daemon-reload

systemctl start docker

- Go to the location where the tar file is saved and run the following command from the host:

sudo docker load -i <filename>

For example:

sudo docker load -i bfb_builder_ubuntu20.04-5.3-1.0.0.0-3.6.0.11699-1.tar

Note: The loading process may take a while. After the image is loaded, you can find its ID using the docker images command.

- Run the docker image:

sudo docker run -v <source-code-folder>:<dest-folder-on-docker> --privileged -it -e container=docker <image-name/ID>

For example, if the source code folder is /<...>/buildEnv, the destination folder on the docker is /app, and the image is the one downloaded in the previous step, the command will look like this:

sudo docker run -v /<...>/buildEnv:/app --privileged -it -e container=docker doca_v1.11_bluefield_os_ubuntu_20.04-mlnx-5.4

Or, if you use a loaded image with the ID 185c50ecb31d, the command will be:

sudo docker run -v /<...>/buildEnv:/app --privileged -it -e container=docker 185c50ecb31d

After running this command, you get a shell inside the container where you can build your project using the regular build commands.

Note: Make sure you map a folder that everyone has write privileges to. Otherwise, the docker will not be able to write the output file to it.

Note: The folder will be mapped to the destination folder. In this example, the folder /app inside the docker will be mapped to /<...>/buildEnv.
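The write-privileges requirement and the docker run command can be combined into a short preparation script. The sketch below is one possible approach, not the guide's prescribed method: the function names prepare_shared_dir and docker_run_cmd are hypothetical, and granting access with chmod a+rwx is an assumption about how to satisfy the write-privileges note. The second helper only prints the command so that it can be reviewed before it is run.

```shell
#!/usr/bin/env bash
# Hypothetical helper: create the shared folder if needed and give every
# user read, write, and execute access to it.
prepare_shared_dir() {
  mkdir -p "$1" && chmod a+rwx "$1"
}

# Hypothetical helper: print (not run) the docker run command from this
# guide for a source folder, a destination folder, and an image name or ID.
# Paths containing spaces are not handled by this sketch.
docker_run_cmd() {
  printf 'sudo docker run -v %s:%s --privileged -it -e container=docker %s\n' \
    "$1" "$2" "$3"
}
```

For example, `prepare_shared_dir <source-code-folder>` followed by `docker_run_cmd <source-code-folder> /app <image-name/ID>` prints the command to run on the host.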

3.2. Development

Much like the development phase when using a BlueField DPU explained above, it is recommended to develop within the container running on top of QEMU.

3.3. Testing

While the compilation can be performed on top of the container, testing the compiled software must be done on top of a BlueField-2 DPU. This is because the QEMU environment emulates an aarch64 architecture, but it does not emulate the hardware devices present on the BlueField DPU. Therefore, the tested program will not be able to access the devices needed for its successful execution, thus mandating that the testing is done on top of a physical DPU.

3.4. Publishing

The publishing process is similar to the publishing process when using a BlueField DPU. The only difference is that the containers are deployed on top of QEMU, which emulates the aarch64 architecture.

Copyright

© 2022 NVIDIA Corporation & affiliates. All rights reserved.