Forecasting with HEAVY.AI and Prophet

See the Prophet project documentation to learn more and stay up to date.

Prophet is an open-source library from Facebook that provides basic forecasting capabilities for time series data. HEAVY.AI includes Prophet in its data science foundation. With the Ibis backend for HEAVY.AI, you can create time series inputs into Prophet for quick forecasts. Combined with distributed execution frameworks like Dask or Ray, forecasts can be generated in parallel for multiple time series. In addition, you can use Altair to visualize forecast results and load forecast outputs back to HEAVY.AI so they can be used in Heavy Immerse.

The following example shows how to generate a simple Prophet forecast using Ibis to extract data from HEAVY.AI, use Altair to visualize the forecast results, and then use Dask or Ray to create forecasts in parallel for multiple time series.

Using Ibis with Prophet

Let’s use the flights dataset to create a forecast. The example uses a HEAVY.AI server, but you can use any dataset that has a date or timestamp as a key attribute. Connect via Ibis.
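A minimal connection sketch follows. It assumes the ibis-heavyai backend package is installed (it registers `ibis.heavyai`), and every value in `HEAVYAI_PARAMS` is a placeholder for your own server settings. The helper name `connect_heavyai` is hypothetical.

```python
# Placeholder connection settings (assumptions); replace with your server's values.
HEAVYAI_PARAMS = {
    "host": "localhost",
    "port": 6274,
    "user": "admin",
    "password": "HyperInteractive",
    "database": "heavyai",
}


def connect_heavyai(**overrides):
    """Open an Ibis connection to HEAVY.AI, overriding defaults as needed."""
    # Imported here so the settings can be inspected without ibis installed.
    import ibis

    params = {**HEAVYAI_PARAMS, **overrides}
    return ibis.heavyai.connect(**params)
```

Calling `con = connect_heavyai(host="my-server")` would then give a connection object whose `con.table(...)` method returns Ibis table expressions.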

First, predict daily arrival delays for a single airport, given the annual history of arrival delays for that airport. To do this, create an Ibis expression that shapes data into the format expected by Prophet.

For simplicity, Prophet always expects a time series to be a two-column dataset of the form (date, value). With Ibis, you can specify an expression that aliases column names and performs additional transformations on date/time fields.

Now, create a Python function to extract time series data for a specific airport. The function uses Ibis and returns an expression that evaluates to the average daily arrival delay for a given city. (The columns are named ds and y, as expected by Prophet.)
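One way such a function could look is sketched below. The table name `flights_2008_7M` and the columns `dest_city`, `arr_timestamp`, and `arrdelay` are assumptions about the flights dataset, and passing the connection `con` as an explicit argument is a choice made for this sketch.

```python
def get_delay_ts(con, city, unit="D"):
    """Build an Ibis expression: average arrival delay per period for one city.

    Table and column names are assumptions about the flights dataset.
    """
    flights = con.table("flights_2008_7M")
    flights = flights.filter(flights.dest_city == city)
    # Truncate the arrival timestamp to the chosen unit ("D" = day, "h" = hour)
    # and alias the result to ds, the date column name Prophet expects.
    period = flights.arr_timestamp.truncate(unit).name("ds")
    # Average the arrival delay per period and alias it to y.
    return flights.group_by(period).aggregate(flights.arrdelay.mean().name("y"))
```

The function only builds an expression. Calling `.execute()` on the result, for example `get_delay_ts(con, "New York").execute()`, runs the query and returns a pandas dataframe.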

Try it to verify the output. You can customize the function to generate the time series granularity you want; for example, hourly delays instead of daily.

Now, create a simple forecast. Feed the time series above directly to Prophet and have it generate a forecast. In this case, you set the appropriate parameters to consider seasonality at a yearly level.
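The forecasting step can be sketched as follows, assuming the `prophet` package (older releases were published as `fbprophet`). The helper name `forecast_delays` and the 30-day horizon standing in for "a month" are choices made for this illustration.

```python
def forecast_delays(ts_df, periods=30):
    """Fit Prophet on a ds/y dataframe and forecast `periods` days ahead."""
    # Imported here so Prophet is only required when a forecast is actually run.
    from prophet import Prophet

    model = Prophet(yearly_seasonality=True)  # consider yearly seasonality
    model.fit(ts_df)
    # Extend the history by `periods` days (daily frequency is the default).
    future = model.make_future_dataframe(periods=periods)
    # The result includes yhat, yhat_lower, and yhat_upper for each ds.
    return model.predict(future)
```

The input `ts_df` is the executed time series from the previous step, that is, a pandas dataframe with the columns ds and y.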

This generates a pandas dataframe with a forward-looking forecast for a month, based on past data for the year 2008 for New York City.

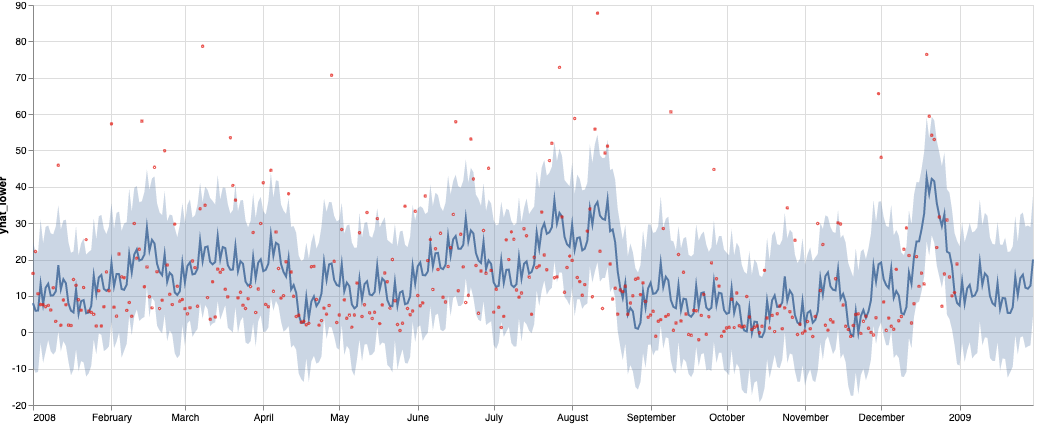

Finally, to show how Ibis can support multiple backends, including pandas as an Ibis backend, build an Altair chart for the forecast output dataframe to see what the forecast looks like. Use Altair’s compound chart functionality to overlay the original Ibis expression for the past data with the error bounds and the forecast trend line.
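A layered chart along these lines is sketched below. To stay small, it takes two pandas dataframes (the executed history and the Prophet output) rather than Ibis expressions, which is a simplification of the approach described above. The function name `forecast_chart` is hypothetical.

```python
def forecast_chart(history_df, forecast_df):
    """Overlay the error band, the forecast line, and the observed points."""
    import altair as alt

    # Shaded area between the lower and upper forecast bounds.
    band = alt.Chart(forecast_df).mark_area(opacity=0.3).encode(
        x="ds:T", y="yhat_lower:Q", y2="yhat_upper"
    )
    # Forecast trend line.
    line = alt.Chart(forecast_df).mark_line().encode(x="ds:T", y="yhat:Q")
    # Observed past values.
    points = alt.Chart(history_df).mark_circle(size=15).encode(x="ds:T", y="y:Q")
    # The + operator layers the charts on shared axes.
    return band + line + points
```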

The generated chart shows the past data and extends out by a month to show predicted arrival delay.

The above forecast is for a single city. If you want to create arrival delay forecasts for multiple cities in parallel, you can use frameworks like Dask or Ray that allow distributed execution.

Parallelizing the Forecasts

In the example above, the function get_delay_ts returns a single time series for a city. You may want to compute arrival delay forecasts for a large number of cities. Each Prophet forecast takes approximately 1-2 seconds.

Let’s see how many forecasts you need to generate:
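A sketch of that count, assuming the same hypothetical table and column names as before (`flights_2008_7M`, `dest_city`) and an Ibis connection `con`:

```python
def count_cities(con):
    """Return the number of distinct destination cities, one forecast each."""
    flights = con.table("flights_2008_7M")  # assumed table name
    # nunique() builds a COUNT(DISTINCT ...) expression; execute() runs it.
    return flights.dest_city.nunique().execute()
```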

At about 1-2 seconds per Prophet forecast, running these serially could take 5 to 10 minutes. Because the time series of arrival delays for each city is independent of the others, parallelization can speed up the process.
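The estimate can be checked with a few lines of arithmetic. The sketch uses the figure of 288 cities quoted in the Dask section of this document; the helper name `serial_minutes` is hypothetical.

```python
def serial_minutes(n_series, seconds_per_forecast):
    """Total wall-clock minutes when forecasts run one after another."""
    return n_series * seconds_per_forecast / 60


n_cities = 288  # city count quoted in the Dask section
low = serial_minutes(n_cities, 1)   # 1 second per forecast
high = serial_minutes(n_cities, 2)  # 2 seconds per forecast
print(f"{low:.1f} to {high:.1f} minutes")  # prints "4.8 to 9.6 minutes"
```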

Using Dask

Use Dask to parallelize this operation. First, make sure you have Dask installed in your Python environment:

Now, modify the method slightly to get the time series of daily arrival delays for a city. Make the city an optional parameter, and group by the city.
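The modified function might look like the sketch below. As before, the table and column names are assumptions about the flights dataset, and the explicit `con` argument is a choice made for this illustration.

```python
def get_delay_ts(con, city=None):
    """Average daily arrival delay per city; all cities when city is None."""
    flights = con.table("flights_2008_7M")  # assumed table name
    if city is not None:
        flights = flights.filter(flights.dest_city == city)
    day = flights.arr_timestamp.truncate("D").name("ds")
    # Grouping by city as well keeps one (ds, y) series per city in the result.
    return flights.group_by([flights.dest_city, day]).aggregate(
        flights.arrdelay.mean().name("y")
    )
```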

Try this modified method to see if it works correctly without a parameter for the city name.

Now, use Dask to parallelize this operation.

You are ready to run parallelized forecasting. You have materialized the data for all cities in a single call, split the resulting dataframe by city, and handed it off to Dask to compute the forecast, returning an array with all the forecasts.
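The steps just described can be sketched as follows. The function name `forecast_all_dask` is hypothetical, `all_df` stands for the materialized dataframe with `dest_city`, ds, and y columns, and `forecast_fn` is any function that turns one city's dataframe into a forecast. Starting a local cluster with one single-threaded worker per core is an assumption about the setup.

```python
def forecast_all_dask(all_df, forecast_fn, n_workers=16):
    """Run forecast_fn once per city in parallel on a local Dask cluster."""
    import dask
    from dask.distributed import Client

    # Starts a local cluster; its dashboard is normally served on port 8787.
    client = Client(n_workers=n_workers, threads_per_worker=1)
    try:
        # One lazy task per city; nothing runs until compute() is called.
        tasks = [
            dask.delayed(forecast_fn)(city_df)
            for _, city_df in all_df.groupby("dest_city")
        ]
        # compute() executes all tasks on the cluster and returns a tuple.
        return list(dask.compute(*tasks))
    finally:
        client.close()
```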

Once you start the run, you can examine the Dask UI at localhost:8787 (assuming you can access it on the running machine), where you can see the entire process. With 16 cores (as specified in the call to Dask), forecasts for 288 cities complete in approximately 75-90 seconds, which is 3-6x faster than running them serially.
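As a rough sanity check on that speedup, the sketch below combines the figures quoted in this section: 288 cities, 1-2 seconds per serial forecast, and 75-90 seconds for the parallel run. It only bounds the ratio and is not a benchmark.

```python
n_cities = 288
serial_s = (n_cities * 1, n_cities * 2)  # 288 s to 576 s when run serially
parallel_s = (75, 90)                    # observed parallel run time

# Least favorable pairing: fastest serial run against slowest parallel run.
slowest = min(serial_s) / max(parallel_s)
# Most favorable pairing: slowest serial run against fastest parallel run.
fastest = max(serial_s) / min(parallel_s)
print(f"{slowest:.1f}x to {fastest:.1f}x")  # prints "3.2x to 7.7x"
```

The quoted 3-6x range is roughly consistent with these bounds.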

Using Ray

Next, let’s use Ray, a distributed execution engine for Python. Ray is similar to Dask, but more specifically targeted at machine learning and deep learning workflows, and can be used to parallelize existing Python code. To get started, install Ray.

Next, modify the methods used previously for use with Ray. In particular, declare the Prophet forecasting method to be a Ray remote method, which allows Ray to execute it on a cluster or a set of cores in parallel.
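A sketch of the Ray version follows. It wraps the forecasting function with `ray.remote(...)`, which has the same effect as decorating it with `@ray.remote`. The name `forecast_all_ray` is hypothetical, and `all_df` and `forecast_fn` have the same meaning as in the Dask sketch: a dataframe with `dest_city`, ds, and y columns, and a function that forecasts one city's dataframe.

```python
def forecast_all_ray(all_df, forecast_fn, num_cpus=16):
    """Run forecast_fn once per city in parallel using Ray remote tasks."""
    import ray

    # Start a local Ray runtime; ignore the call if Ray is already running.
    ray.init(num_cpus=num_cpus, ignore_reinit_error=True)
    # Turn the plain function into a Ray remote function.
    remote_forecast = ray.remote(forecast_fn)
    # .remote() schedules a task and immediately returns a future.
    futures = [
        remote_forecast.remote(city_df)
        for _, city_df in all_df.groupby("dest_city")
    ]
    # ray.get() blocks until every task has finished and returns the results.
    return ray.get(futures)
```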

This starts a Ray distributed computation, similar to Dask. Ray is slightly faster for this same task.