Quick Start Guide

Get NVIDIA AI Enterprise up and running in 30–60 minutes. This guide walks you through account activation and software installation on bare metal, virtualized, or public cloud infrastructure.

What You’ll Accomplish

By the end of this guide, you’ll have:

Activated your NVIDIA Enterprise Account and accessed the NGC Catalog

Installed NVIDIA AI Enterprise software components

Verified GPU-accelerated containers are working

Next Steps

After completing this guide, see Where to Go Next for directions on exploring your license, browsing software components, and finding additional resources.

Before You Begin

Before you start, ensure the following prerequisites are met:

Hardware Requirements

NVIDIA-Certified Server Platform that supports NVIDIA AI Enterprise

One or more supported NVIDIA GPUs installed in your server

Important

The NVIDIA-Certified Systems requirement does not apply to GB200 NVL4, GB200 NVL72, and GB300 NVL72 systems. For these platforms, NVIDIA-Qualified server status is the prerequisite for NVIDIA AI Enterprise support. For more information, refer to the NVIDIA Qualified Systems Catalog.

Software & Licensing

Valid NVIDIA AI Enterprise software subscription

For bundled GPUs (such as NVIDIA H100 PCIe), activate your license before installation

Additional Information

Refer to the NVIDIA AI Enterprise Release Notes for supported hardware, software versions, and known issues.

Attention

Already have an account? Skip directly to Installing NVIDIA AI Enterprise Software Components.

Note

The following instructions do not apply to NVIDIA DGX systems. Refer to NVIDIA DGX Systems for DGX-specific documentation.

Activating the Accounts for NVIDIA AI Enterprise

Do you already have an NVIDIA Enterprise Account?

Yes, I have an account → Skip to Installing NVIDIA AI Enterprise Software Components.

No, I need to create an account → Choose your scenario below.

Choose Your Scenario

First-time purchase - You received an order confirmation with an NVIDIA Entitlement Certificate. Follow the Register link in the certificate to create your NVIDIA Enterprise Account.

Evaluation to purchased license - You have two options:

Link your existing account - Use the same email address from your evaluation account. Follow Linking an Evaluation Account.

Create a new account - Use a different email address. Follow Creating your NVIDIA Enterprise Account.

Your Order Confirmation Message

After your order for NVIDIA AI Enterprise is processed, you’ll receive an order confirmation message with your NVIDIA Entitlement Certificate attached. The certificate contains your product activation keys and provides instructions for using it.

If you’re a data center administrator, follow the instructions in the NVIDIA Entitlement Certificate. Otherwise, forward your order confirmation message, including the attached NVIDIA Entitlement Certificate, to a data center administrator in your organization.

What Your NVIDIA Enterprise Account Provides

Your NVIDIA Enterprise Account provides login access to the following components through the NVIDIA Application Hub:

NVIDIA NGC - Access to all enterprise software, services, and management tools included in NVIDIA AI Enterprise

NVIDIA Enterprise Support Portal - Access to support services for NVIDIA AI Enterprise

NVIDIA Licensing Portal - Access to your entitlements and options for managing your NVIDIA AI Enterprise license servers. Installing and managing NVIDIA AI Enterprise license servers is a prerequisite for deploying NVIDIA vGPU for Compute.

Creating your NVIDIA Enterprise Account

Prerequisites

Have your order confirmation message with the NVIDIA Entitlement Certificate ready

Choose a unique email address (if creating a new account separate from an evaluation account)

Steps

Follow the Register link in the instructions for using your NVIDIA Entitlement Certificate.

Fill out the NVIDIA Enterprise Account Registration page form and click REGISTER. A message confirming that your account has been created appears, and you receive an email at the address you provided instructing you to log in to your account on the NVIDIA Application Hub.

Open the email instructing you to log in to your account and click Log In.

On the NVIDIA Application Hub Login page that opens, type the email address you provided in the text-entry field and click Sign In.

On the Create Your Account page that opens, provide and confirm a password for the account and click Create Account. A message prompting you to verify your email address appears, and a verification email is sent to the address you provided.

Open the email instructing you to verify your email address and click Verify Email Address. A message confirming your email address appears.

Your account is now active. Continue to Installing NVIDIA AI Enterprise Software Components.

Linking an Evaluation Account to an NVIDIA Enterprise Account for Purchased Licenses

Link your evaluation account to purchased licenses by registering with the same email address you used for your evaluation account. To create a separate account instead, see Creating your NVIDIA Enterprise Account and use a different email address.

Follow the Register link in the instructions for using the NVIDIA Entitlement Certificate for your purchased licenses.

Fill out the NVIDIA Enterprise Account Registration page form, specifying the email address with which you created your existing account, and click Register.

When a message stating that your email address is already linked to an evaluation account is displayed, click LINK TO NEW ACCOUNT.

Log in to the NVIDIA Licensing Portal with the credentials for your existing account.

Installing NVIDIA AI Enterprise Software Components

NVIDIA AI Enterprise components are distributed through the NVIDIA NGC Catalog. The following resources are available:

NVIDIA AI Enterprise Software Suite - AI development tools and use case applications

NVIDIA AI Enterprise Infra 7 collection - Infrastructure and workload management components

NVIDIA Omniverse - OpenUSD-based development platform for building industrial digitalization and physical AI applications

NVIDIA Run:ai collection - AI workload orchestration and GPU scheduling platform (self-hosted)

Note

The NVIDIA AI Enterprise license includes NVIDIA Run:ai self-hosted deployments only. Run:ai SaaS is not included in the NVIDIA AI Enterprise license and remains a separate offering.

Accessing the NVIDIA AI Enterprise Application Software

The application layer of NVIDIA AI Enterprise is intended for data scientists, ML engineers, and developers who build, optimize, and deploy AI models and applications on top of the infrastructure.

The NVIDIA AI Enterprise Software Suite includes AI frameworks, NIM microservices, and pretrained models distributed through the NVIDIA NGC Catalog.

Before downloading any NVIDIA AI Enterprise software assets, ensure you have signed in to NVIDIA NGC from the NVIDIA NGC Sign In page.

Go to NVIDIA AI Enterprise Supported on NVIDIA NGC to view the NVIDIA AI Enterprise Software Suite.

Browse the NVIDIA AI Enterprise Software Suite to find software assets.

Click an asset to learn more about it or download it.

Accessing the NVIDIA AI Enterprise Infrastructure Software

The infrastructure layer of NVIDIA AI Enterprise is intended for cluster administrators and IT operations teams who need to set up and manage the GPU-accelerated platform that AI workloads run on.

The NVIDIA AI Enterprise Infra collection includes infrastructure management and orchestration software such as GPU Operator, Network Operator, and device plugins needed to prepare and scale your AI-ready infrastructure.

Before downloading any NVIDIA AI Enterprise software assets, ensure you have signed in to NVIDIA NGC from the NVIDIA NGC Sign In page.

Go to the NVIDIA AI Enterprise Infra 7 collection on NVIDIA NGC.

Click the Artifacts tab and select the resource.

Click Download and choose to download the resource using a direct download in the browser, the displayed wget command, or the NVIDIA NGC CLI.

Accessing NVIDIA Omniverse

NVIDIA Omniverse is included with your NVIDIA AI Enterprise license. It provides a supported environment for building and deploying OpenUSD-based applications and workflows, including industrial digitalization, simulation, and physical AI.

Before downloading NVIDIA Omniverse software, ensure you have signed in to NVIDIA NGC from the NVIDIA NGC Sign In page.

Go to the NVIDIA Omniverse on NGC page on NVIDIA NGC.

Browse the available components, including Omniverse Kit SDK, Nucleus, and enterprise applications.

Click an asset to learn more about it or download it.

For more information about NVIDIA Omniverse, refer to the NVIDIA Omniverse documentation.

Accessing NVIDIA Run:ai

NVIDIA Run:ai is an AI workload orchestration and GPU scheduling platform. It enables dynamic allocation of GPU resources across AI workloads, maximizing cluster utilization and accelerating time to results.

Note

The NVIDIA AI Enterprise license includes NVIDIA Run:ai self-hosted deployments. NVIDIA Run:ai SaaS is not included in the NVIDIA AI Enterprise license and remains a separate offering.

NVIDIA Run:ai self-hosted is distributed through its own dedicated collection on the NVIDIA NGC Catalog, separate from the NVIDIA AI Enterprise Infra collection.

Before downloading NVIDIA Run:ai software, ensure you have signed in to NVIDIA NGC from the NVIDIA NGC Sign In page.

Go to the NVIDIA Run:ai Self-Hosted collection on NVIDIA NGC.

Follow the steps in the NVIDIA Run:ai Self-Hosted Installation Guide.

Accessing Container Images from NGC#

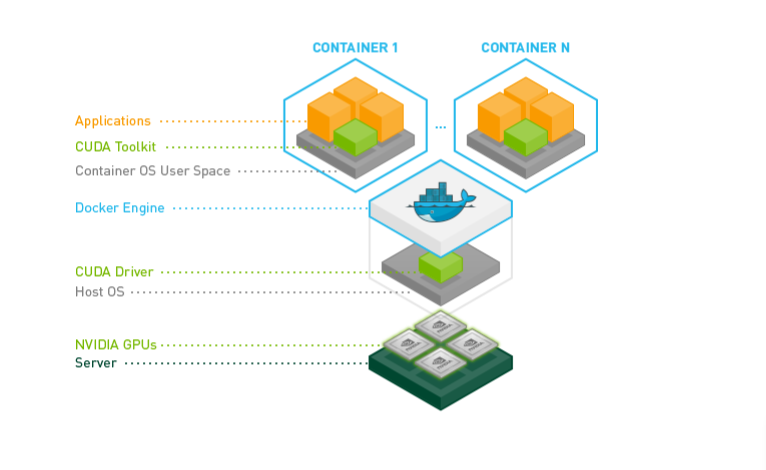

NVIDIA AI Enterprise software is distributed as container images through the NVIDIA NGC Catalog. Each container image contains the complete software stack, including CUDA libraries, cuDNN, Magnum IO components, TensorRT, and the framework.

To pull a container image, copy the Docker pull command from the component's listing in the NGC Catalog and run it on the host machine.

For the complete list of supported container images, refer to NVIDIA AI Enterprise NGC supported software.
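Pulling from the nvcr.io registry generally requires authenticating Docker with an NGC API key first. The following is a minimal sketch, assuming the key is exported in an environment variable named NGC_API_KEY; that variable name and the ngc_docker_login function are illustrative and not part of any NVIDIA tool. For API-key logins, NGC expects the literal username $oauthtoken.

```shell
#!/bin/sh
# Hypothetical helper: log Docker in to the NGC registry (nvcr.io) with an API key.
# Assumes the key is in the NGC_API_KEY environment variable (illustrative name).
ngc_docker_login() {
    if [ -z "${NGC_API_KEY:-}" ]; then
        echo "error: NGC_API_KEY is not set" >&2
        return 1
    fi
    # '$oauthtoken' is single-quoted so the shell passes it through literally;
    # NGC expects this fixed username when the password is an API key.
    # --password-stdin keeps the key out of the process list and shell history.
    printf '%s' "$NGC_API_KEY" | docker login nvcr.io --username '$oauthtoken' --password-stdin
}
```

After a successful login, run the docker pull command copied from the component's catalog listing.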

Choose Your Deployment Type

Select the deployment type that matches your infrastructure. Each option includes installation instructions.

| Category | Description | Getting Started |
|---|---|---|
| Bare Metal | Direct installation on physical servers with dedicated GPUs | Installing NVIDIA AI Enterprise on Bare Metal Ubuntu |
| Virtualized | Deployment on VMware vSphere using NVIDIA vGPU for Compute | Installing NVIDIA AI Enterprise on VMware vSphere |
| Public Cloud | Deployment on cloud platforms (AWS, Azure, GCP, OCI, Alibaba, Tencent) | Installing NVIDIA AI Enterprise on Microsoft Azure |

Note

For comprehensive deployment guides covering prerequisites, installation, configuration, and advanced scenarios, see the Planning & Deployment section on the NVIDIA AI Enterprise Docs Hub.

Installing NVIDIA AI Enterprise on Bare Metal Ubuntu

This section provides instructions for a bare metal, single-node deployment of NVIDIA AI Enterprise using Docker on NVIDIA-Certified Systems (or NVIDIA-Qualified Systems for GB200 NVL4, GB200 NVL72, and GB300 NVL72).

Prerequisites

To enable NVIDIA GPU acceleration for compute and AI workloads running in data centers:

Download the NVIDIA GPU data center drivers from NVIDIA Drivers.

In the Manual Driver Search section, select Data Center/Tesla, your architecture type, and Linux 64-bit, and then download the .run file.

Installing NVIDIA AI Enterprise using the TRD Driver

Installation of the NVIDIA AI Enterprise software driver for Linux requires:

Compiler toolchain

Kernel headers

Prerequisites

Ensure you follow the CUDA pre-installation steps.

Note

If you prefer to use a Debian package to install CUDA, refer to the Debian instructions.
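Before running the installer, it can help to confirm that the compiler toolchain and kernel headers listed above are present. The following is a minimal sketch for Ubuntu, assuming the headers for the running kernel are linked at /lib/modules/<release>/build, which is where the linux-headers-<release> package normally places them. The function names are hypothetical.

```shell
#!/bin/sh
# Hypothetical preflight check for the driver .run installer on Ubuntu.

# Report whether a command is on PATH; return non-zero if it is missing.
check_command() {
    if command -v "$1" >/dev/null 2>&1; then
        echo "OK: $1 found"
    else
        echo "MISSING: $1"
        return 1
    fi
}

# Headers for a kernel release are normally linked at
# /lib/modules/<release>/build once linux-headers-<release> is installed.
check_kernel_headers() {
    release=${1:-$(uname -r)}
    kdir="/lib/modules/$release/build"
    if [ -d "$kdir" ]; then
        echo "OK: kernel headers at $kdir"
    else
        echo "MISSING: kernel headers at $kdir (try: sudo apt-get install linux-headers-$release)"
        return 1
    fi
}

# Run every check so that all problems are reported at once.
preflight() {
    status=0
    check_command gcc || status=1
    check_command make || status=1
    check_kernel_headers || status=1
    return "$status"
}
```

For example, run `preflight || echo "Install the missing prerequisites first."` before copying the driver package to the host.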

Steps

Log in to the system and update the package lists.

```shell
sudo apt-get update
```

Install the GCC compiler and the make tool in the terminal.

```shell
sudo apt-get install build-essential
```

Copy the NVIDIA AI Enterprise Linux driver package, for example, NVIDIA-Linux-x86_64-xxx.xx.xx.run, to the host machine where you are installing the driver.

Where xxx.xx.xx is the driver version for the current NVIDIA AI Enterprise release.

Navigate to the directory containing the NVIDIA driver .run file. Then, add execute permission to the file using the chmod command.

```shell
sudo chmod +x NVIDIA-Linux-x86_64-xxx.xx.xx.run
```

From a console shell, run the driver installer as the root user and accept the defaults.

```shell
sudo sh ./NVIDIA-Linux-x86_64-xxx.xx.xx.run
```

Reboot the system.

```shell
sudo reboot
```

After the system has rebooted, confirm that your NVIDIA GPU device appears in the output from nvidia-smi.

```shell
# Verify GPU driver installation and GPU detection
nvidia-smi
```

Expected output: a GPU information table showing the driver version, CUDA version, and GPU name. If no output appears or you see "command not found", verify that the driver installation completed successfully.

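To confirm that the running driver is the one from the package you copied, you can compare the version embedded in the .run filename with the version nvidia-smi reports. The following is a sketch, assuming the filename follows the NVIDIA-Linux-x86_64-<version>.run pattern shown above; nvidia-smi --query-gpu=driver_version --format=csv,noheader prints one version line per GPU. The helper names and the NVIDIA_SMI override (used only to substitute a stub when testing) are hypothetical.

```shell
#!/bin/sh
# Hypothetical helpers to confirm the installed driver matches the .run package.

# NVIDIA-Linux-x86_64-<version>.run -> <version>
version_from_runfile() {
    name=$(basename "$1")
    case "$name" in
        NVIDIA-Linux-x86_64-*.run) ;;
        *) echo "unexpected package name: $name" >&2; return 1 ;;
    esac
    v=${name#NVIDIA-Linux-x86_64-}
    echo "${v%.run}"
}

# Driver version reported by nvidia-smi (first GPU; all GPUs share one driver).
# NVIDIA_SMI can name another command for testing (illustrative).
installed_driver_version() {
    "${NVIDIA_SMI:-nvidia-smi}" --query-gpu=driver_version --format=csv,noheader | head -n 1
}

verify_driver() {
    expected=$(version_from_runfile "$1") || return 1
    actual=$(installed_driver_version 2>/dev/null)
    if [ "$expected" = "$actual" ]; then
        echo "OK: driver $actual is installed"
    else
        echo "MISMATCH: package $expected, installed ${actual:-none}"
        return 1
    fi
}
```

After the reboot, run verify_driver with the name of the package you installed, for example `verify_driver NVIDIA-Linux-x86_64-xxx.xx.xx.run` with the real version filled in.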
Installing the NVIDIA Container Toolkit

The NVIDIA Container Toolkit enables building and running GPU-accelerated Docker containers. It includes a libnvidia-container library and utilities that configure containers to use NVIDIA GPUs automatically. For more information, refer to the NVIDIA Container Toolkit documentation.

Installation Steps

Install Docker - Install Docker Engine on Ubuntu. Refer to the Install Docker Engine on Ubuntu documentation for detailed instructions.

Install the NVIDIA Container Toolkit - Configure the NVIDIA Container Toolkit package repository and install the toolkit. Refer to the Installing the NVIDIA Container Toolkit documentation for step-by-step instructions.

Configure the Docker container runtime - Configure the Docker container runtime to use NVIDIA Container Runtime. Refer to the Configuration documentation.
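For the runtime configuration step, the NVIDIA Container Toolkit ships the nvidia-ctk utility. The following sketch assumes the toolkit package is already installed as described in the linked documentation. It wraps the two configuration commands in a hypothetical run helper that only prints them when DRY_RUN is set, so you can review them before making changes.

```shell
#!/bin/sh
# Print commands instead of executing them when DRY_RUN is non-empty.
run() {
    if [ -n "${DRY_RUN:-}" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

configure_docker_runtime() {
    # Fail early if the toolkit CLI is missing (skipped in dry-run mode).
    if [ -z "${DRY_RUN:-}" ] && ! command -v nvidia-ctk >/dev/null 2>&1; then
        echo "error: nvidia-ctk not found; install the NVIDIA Container Toolkit first" >&2
        return 1
    fi
    # Registers the nvidia runtime in Docker's daemon configuration
    # (/etc/docker/daemon.json by default).
    run sudo nvidia-ctk runtime configure --runtime=docker || return 1
    # Docker reads daemon.json at startup, so restart the daemon to apply it.
    run sudo systemctl restart docker
}
```

Run `DRY_RUN=1 configure_docker_runtime` to preview the commands, then `configure_docker_runtime` to apply them.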

Verification Steps

Run nvidia-smi inside a container to confirm that Docker can access the GPUs. The NVIDIA Container Runtime mounts nvidia-smi and the driver libraries from the host into the container, so a plain ubuntu image is sufficient for this check.

```shell
# Test GPU access from a container (uses all available GPUs)
# --rm: automatically remove the container after it exits
# --runtime=nvidia: use the NVIDIA Container Runtime
# --gpus all: expose all GPUs to the container
sudo docker run --rm --runtime=nvidia --gpus all ubuntu nvidia-smi
```

Note

When you use a CUDA Toolkit container image, as in the following steps, choose an image whose CUDA version does not exceed the CUDA version supported by your driver (shown as CUDA Version in the nvidia-smi output). For example, if your driver supports CUDA 12.x, use a CUDA 12.x or earlier container image. For a list of available images, refer to nvidia/cuda on Docker Hub.

Troubleshooting

If you encounter nvidia-container-cli: initialization error, verify the following:

The NVIDIA driver is loaded: nvidia-smi runs successfully on the host.

The container toolkit is installed: which nvidia-container-cli prints a path.

The Docker daemon has been restarted after configuring the runtime:

```shell
sudo systemctl restart docker
```

Start a GPU-enabled container on any two available GPUs.

```shell
docker run --runtime=nvidia --gpus 2 nvidia/cuda:xx.x.x-base-ubuntu22.04 nvidia-smi
```

Where xx.x.x in nvidia/cuda:xx.x.x-base-ubuntu22.04 is a supported CUDA Toolkit version.

Start a GPU-enabled container on two specific GPUs identified by their index numbers.

```shell
docker run --runtime=nvidia --gpus '"device=1,2"' nvidia/cuda:xx.x.x-base-ubuntu22.04 nvidia-smi
```

Where xx.x.x in nvidia/cuda:xx.x.x-base-ubuntu22.04 is a supported CUDA Toolkit version. The outer single quotes preserve the inner double quotes, which Docker needs to read the comma-separated device list as a single value.

Start a GPU-enabled container on two specific GPUs, with one GPU identified by its UUID and the other by its index number.

```shell
docker run --runtime=nvidia --gpus '"device=UUID-ABCDEF,1"' nvidia/cuda:xx.x.x-base-ubuntu22.04 nvidia-smi
```

Where UUID-ABCDEF is a placeholder for a GPU UUID as listed by nvidia-smi -L, and xx.x.x in nvidia/cuda:xx.x.x-base-ubuntu22.04 is a supported CUDA Toolkit version.

Specify a GPU capability for the container.

```shell
docker run --runtime=nvidia --gpus all,capabilities=utility nvidia/cuda:xx.x.x-base-ubuntu22.04 nvidia-smi
```

Where xx.x.x in nvidia/cuda:xx.x.x-base-ubuntu22.04 is a supported CUDA Toolkit version.
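The CUDA version rule for container images described in the verification steps can be checked before you pull an image. The following is a minimal sketch, assuming the driver's highest supported CUDA version is the CUDA Version value in the nvidia-smi header and that the image tag starts with the CUDA version, as in nvidia/cuda:xx.x.x-base-ubuntu22.04. The function names and the NVIDIA_SMI override are hypothetical, and the check applies only the conservative rule stated above, not the full CUDA compatibility policy.

```shell
#!/bin/sh
# Hypothetical helpers for the rule: a CUDA image must not be newer than the
# CUDA version reported by the driver.

# version_le A B: succeed if dotted version A <= B (missing parts count as 0).
version_le() {
    awk -v a="$1" -v b="$2" 'BEGIN {
        na = split(a, x, "."); nb = split(b, y, ".")
        n = (na > nb) ? na : nb
        for (i = 1; i <= n; i++) {
            xi = (i <= na) ? x[i] + 0 : 0
            yi = (i <= nb) ? y[i] + 0 : 0
            if (xi < yi) exit 0
            if (xi > yi) exit 1
        }
        exit 0
    }'
}

# Extract the CUDA version from an image reference:
# nvidia/cuda:12.2.0-base-ubuntu22.04 -> 12.2.0
cuda_tag_version() {
    tag=${1##*:}
    echo "${tag%%-*}"
}

# Read the "CUDA Version: X.Y" value from the nvidia-smi header.
# NVIDIA_SMI can name another command for testing (illustrative).
driver_cuda_version() {
    "${NVIDIA_SMI:-nvidia-smi}" | sed -n 's/.*CUDA Version: *\([0-9][0-9.]*\).*/\1/p' | head -n 1
}

# image_fits_driver IMAGE DRIVER_CUDA
image_fits_driver() {
    img_v=$(cuda_tag_version "$1")
    if version_le "$img_v" "$2"; then
        echo "OK: CUDA $img_v image fits driver CUDA $2"
    else
        echo "TOO NEW: CUDA $img_v image exceeds driver CUDA $2"
        return 1
    fi
}
```

On the host, a check might look like `image_fits_driver nvidia/cuda:xx.x.x-base-ubuntu22.04 "$(driver_cuda_version)"` with a real tag in place of xx.x.x. The comparison is numeric per component, so 12.10 is treated as newer than 12.9.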

Obtaining NVIDIA Base Command Manager

NVIDIA Base Command Manager provides cluster provisioning, workload management, and infrastructure monitoring for bare metal deployments.

Before obtaining NVIDIA Base Command Manager, ensure you have activated your NVIDIA Enterprise Account, as explained in Activating the Accounts for NVIDIA AI Enterprise.

Email your entitlement certificate to sw-bright-sales-ops@NVIDIA.onmicrosoft.com to request your NVIDIA Base Command Manager product keys. After your entitlement certificate has been reviewed, you will receive a product key. Generate a license key for the number of licenses you purchased.

Download NVIDIA Base Command Manager for your operating system.

Follow the steps in the NVIDIA Base Command Manager Installation Manual to create and license your head node.

For more information, refer to the Base Command Manager product manuals.

Installing NVIDIA AI Enterprise on VMware vSphere

The NVIDIA AI Enterprise VMware Deployment Guide offers detailed information for deploying NVIDIA AI Enterprise on a third-party NVIDIA-Certified System running VMware vSphere using NVIDIA vGPU for Compute.

Prerequisites

NVIDIA Virtual GPU Manager

NVIDIA vGPU for Compute Guest Driver

NVIDIA License System

To download the NVIDIA vGPU for Compute software drivers, follow the instructions for Accessing the NVIDIA AI Enterprise Infrastructure Software.

The NVIDIA AI Enterprise license entitles customers to download NVIDIA vGPU for Compute software through NVIDIA NGC. For instructions on accessing NGC, refer to Installing NVIDIA AI Enterprise Software Components. Deploying NVIDIA AI Enterprise with vGPU requires a valid license served by the NVIDIA License System, so install and configure the NVIDIA License System before deployment.

NVIDIA License System Overview


The NVIDIA License System serves a pool of floating licenses to licensed NVIDIA software products. The pool is configured with licenses obtained from the NVIDIA Licensing Portal.

Service Instance Types:

Cloud License Service (CLS): Hosted on the NVIDIA Licensing Portal

Delegated License Service (DLS): Hosted on-premises at a location accessible from your private network

An NVIDIA vGPU for Compute client VM with a network connection obtains a license by leasing it from an NVIDIA License System service instance. The service instance serves the license to the client over the network from a pool of floating licenses obtained from the NVIDIA Licensing Portal. The license is returned to the service instance when the licensed client no longer requires the license.

To activate NVIDIA vGPU for Compute, software licensing must be configured on the vGPU client VM so that it acquires a license when it boots. Until a license is acquired, NVIDIA vGPU for Compute VMs run at reduced capability.

Documentation:

NVIDIA Licensing Quick Start Guide - Instructions for configuring an express Cloud License Service (CLS) instance and verifying license status

NVIDIA License System User Guide - Detailed instructions for installing, configuring, and managing the NVIDIA License System, including Delegated License Service (DLS) instances
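On a vGPU for Compute client VM, you can check whether a license has been acquired from the output of nvidia-smi -q, which on vGPU guests typically includes a License Status field. The following is a minimal sketch that extracts that field; the exact field layout is an assumption based on typical nvidia-smi -q output and should be confirmed against your driver version. The helper names and the NVIDIA_SMI override are hypothetical.

```shell
#!/bin/sh
# Hypothetical helpers for checking the license state of a vGPU client VM.

# Print the value of the first "License Status" line from `nvidia-smi -q`
# output read on stdin; prints nothing if the field is absent.
license_status() {
    sed -n 's/^[[:space:]]*License Status[[:space:]]*:[[:space:]]*//p' | head -n 1
}

# Succeed only when the reported status begins with "Licensed".
# NVIDIA_SMI can name another command for testing (illustrative).
is_licensed() {
    case "$("${NVIDIA_SMI:-nvidia-smi}" -q 2>/dev/null | license_status)" in
        Licensed*) return 0 ;;
        *) return 1 ;;
    esac
}
```

For example, `if is_licensed; then echo "license acquired"; else echo "not licensed yet"; fi` can run after the VM boots. A status such as "Unlicensed" does not match the Licensed* pattern, so it is reported as not licensed.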

Installing NVIDIA AI Enterprise on Microsoft Azure

NVIDIA AI Enterprise can be run on Amazon Web Services (AWS), Google Cloud, Microsoft Azure, Oracle Cloud Infrastructure (OCI), Alibaba Cloud, and Tencent Cloud.

This section provides minimal instructions for deploying NVIDIA AI Enterprise on Microsoft Azure using the NVIDIA AI Enterprise VMI. For other cloud providers, refer to the Planning & Deployment section on the NVIDIA AI Enterprise Docs Hub.

Installing NVIDIA AI Enterprise on Microsoft Azure using the NVIDIA AI Enterprise VMI

The NVIDIA AI Enterprise On-Demand VMI launches a GPU-accelerated virtual machine instance in minutes, with preinstalled software to accelerate machine learning, deep learning, data science, and HPC workloads.

The On-Demand VMI is preconfigured with the following software:

Ubuntu Operating System

NVIDIA GPU Data Center Driver

Docker-ce

NVIDIA Container Toolkit

CSP CLI, NGC CLI

Miniconda, JupyterLab, Git

Token Activation Script

Getting started with NVIDIA AI Enterprise in your Enterprise (On-Demand) VMI cloud instance requires two steps:

Authorize the VMI cloud instance with NVIDIA NGC by copying the provided instance ID token into the Activate Subscription page on NGC. This part of the process has four steps:

Get an identity token from the VMI.

Activate your NVIDIA AI Enterprise subscription with the token.

Generate an API key to access the catalog.

Put the API key on the VMI.

Follow the NGC Catalog access instructions to complete this step.

Pull and run NVIDIA AI Enterprise containers. Refer to the corresponding section of the Cloud Deployment Guide for instructions on pulling and running NGC container images through the NVIDIA NGC Catalog.

Detailed instructions on installing NVIDIA AI Enterprise on the public cloud can be found in the Microsoft Azure Overview.

Deployment Types

This section provides links to comprehensive deployment documentation for each supported environment. Choose the deployment option that matches your infrastructure.

| Type | Description |
|---|---|
| Bare Metal | Direct installation on physical servers with dedicated GPUs |
| Virtualized | Deployment on VMware vSphere using NVIDIA vGPU for Compute or GPU Passthrough |
| Public Cloud | Deployment on cloud platforms (AWS, Azure, GCP, OCI, Alibaba, Tencent) |

For comprehensive deployment guides covering prerequisites, installation, configuration, and advanced scenarios, refer to the Planning & Deployment section on the NVIDIA AI Enterprise Docs Hub.

Where to Go Next

After completing the Quick Start Guide, use the following resources to continue your NVIDIA AI Enterprise journey:

| If you need to… | Go to | Why |
|---|---|---|
| Start: Get started from scratch (account, drivers, first workload) | This Document | Guides you through account activation, software installation, and running your first AI workload |
| Discover: Browse available software components | | Complete catalog of Application and Infrastructure Layer components with links to NGC Catalog entries |
| Research: Choose a release branch or check version compatibility | | Defines branch types (FB, PB, LTSB, Infrastructure), support periods, and includes the Interactive Lifecycle and Compatibility Explorer |
| Plan: Plan a deployment architecture | Planning & Deployment on the NVIDIA AI Enterprise Docs Hub | Reference architectures, sizing guides, and deployment blueprints |
| Validate: Validate your infrastructure stack | | Confirm that your NVIDIA GPU driver, NVIDIA GPU Operator, NVIDIA Network Operator, and NVIDIA Run:ai versions are compatible before deploying |
| Application layer: Deploy or configure NVIDIA Omniverse | | Standalone documentation site; NVIDIA Omniverse is included in your NVIDIA AI Enterprise license but is documented separately |
| Kubernetes operators: Deploy NVIDIA GPU Operator, NVIDIA Network Operator, or NVIDIA DPU Operator (DPF) | NVIDIA GPU Operator Documentation · NVIDIA Network Operator Documentation · NVIDIA DOCA Platform Framework (DPF) Documentation | Standalone documentation sites for Kubernetes operator installation and configuration |
| AI frameworks and NIMs: Set up NVIDIA NIM, NVIDIA NeMo, or other AI frameworks | NVIDIA NIM Documentation · NVIDIA NeMo Documentation · NVIDIA NGC Catalog | Standalone documentation sites; the NVIDIA NGC Catalog lists all supported software |
| Infrastructure orchestration: Deploy NVIDIA Run:ai | | Standalone documentation site; NVIDIA Run:ai self-hosted is included in your NVIDIA AI Enterprise license (NVIDIA Run:ai SaaS is separate) |
| Support: Check licensing or open a support case | Support on the NVIDIA AI Enterprise Docs Hub | NVIDIA Enterprise Support Services portal for licensing and technical support |