AIS configuration comprises:

Cluster-wide (global) configuration is protected, namely: checksummed, versioned, and safely replicated. In effect, global config defines cluster-wide defaults inherited by each node joining the cluster.

Local config includes:

Majority of the configuration knobs can be changed at runtime (and at any time). A few read-only variables are explicitly marked in the source; any attempt to modify those at runtime will return “read-only” error message.

For the most part, commands to view and update (CLI, cluster, node) configuration can be found here.

The same document also contains a brief theory of operation, command descriptions, numerous usage examples and more.

Important: as an input, CLI accepts both plain text and JSON-formatted values. For the latter, make sure to embed the (JSON value) argument into single quotes, e.g.:

However, plain-text updating is more common, e.g.:

To show the current cluster config in plain text and JSON:

And the same in JSON:

Note: some config values are read-only or otherwise protected and can be only listed, e.g.:

See also:

Configuring AIS cluster for production requires a careful consideration. First and foremost, there are assorted performance related recommendations.

Optimal performance settings will always depend on your (hardware, network) environment. Speaking of networking, AIS supports 3 (three) logical networks and will, therefore, benefit, performance-wise, if provisioned with up to 3 isolated physical networks or VLANs. The logical networks are:

with the corresponding JSON names, respectively:

hostnamehostname_intra_controlhostname_intra_dataAll aistore nodes - both ais targets and ais gateways - can be deployed as multi-homed servers. But of course, the capability is mostly important and relevant for the targets that may be required (and expected) to move a lot of traffic, as fast as possible.

Building up on the previous section’s example, here’s how it may look:

Note: additional NICs can be added (or removed) transparently for users, i.e. without requiring (or causing) any other changes.

The example above may serve as a simple illustration whereby t[fbarswQP] becomes a multi-homed device equally utilizing all 3 (three) IPv4 interfaces

The first thing to keep in mind is that there are 3 (three) separate, and separately maintained, pieces:

Specifically:

To show and/or change global config, simply type one of:

Typically, when we deploy a new AIS cluster, we use configuration template that contains all the defaults - see, for example, JSON template. Configuration sections in this template, and the knobs within those sections, must be self-explanatory, and the majority of those, except maybe just a few, have pre-assigned default values.

As stated above, each node in the cluster inherits global configuration with the capability to override the latter locally.

There are also node-specific settings, such as:

Since AIS supports n-way mirroring and erasure coding, we typically recommend not using LVMs and hardware RAIDs.

See also:

###Example: use --type option to show only local config

Example:

In the DEFAULT column above hyphen (-) indicates that the corresponding value is inherited and, as far as the node CCDpt8088, remains unchanged.

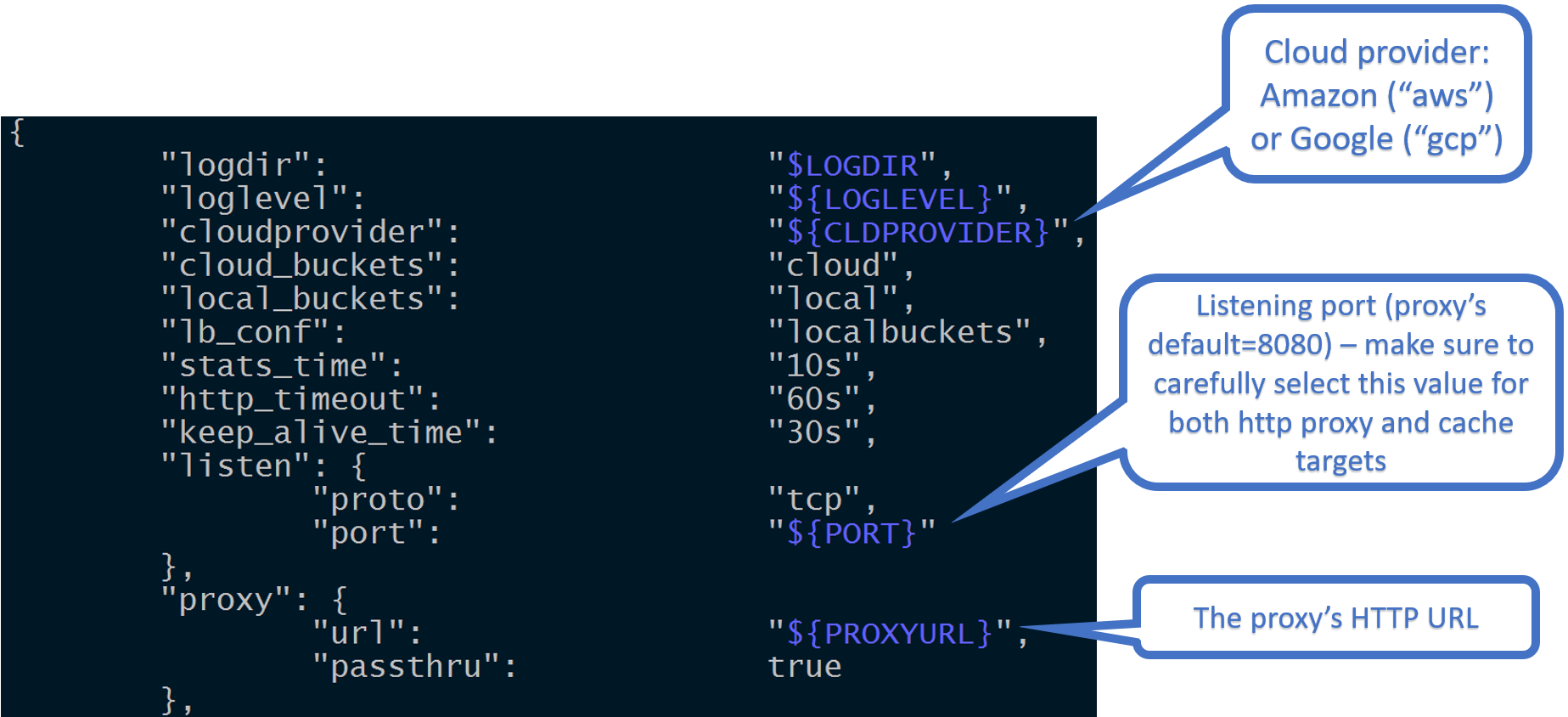

The picture illustrates one section of the configuration template that, in part, includes listening port:

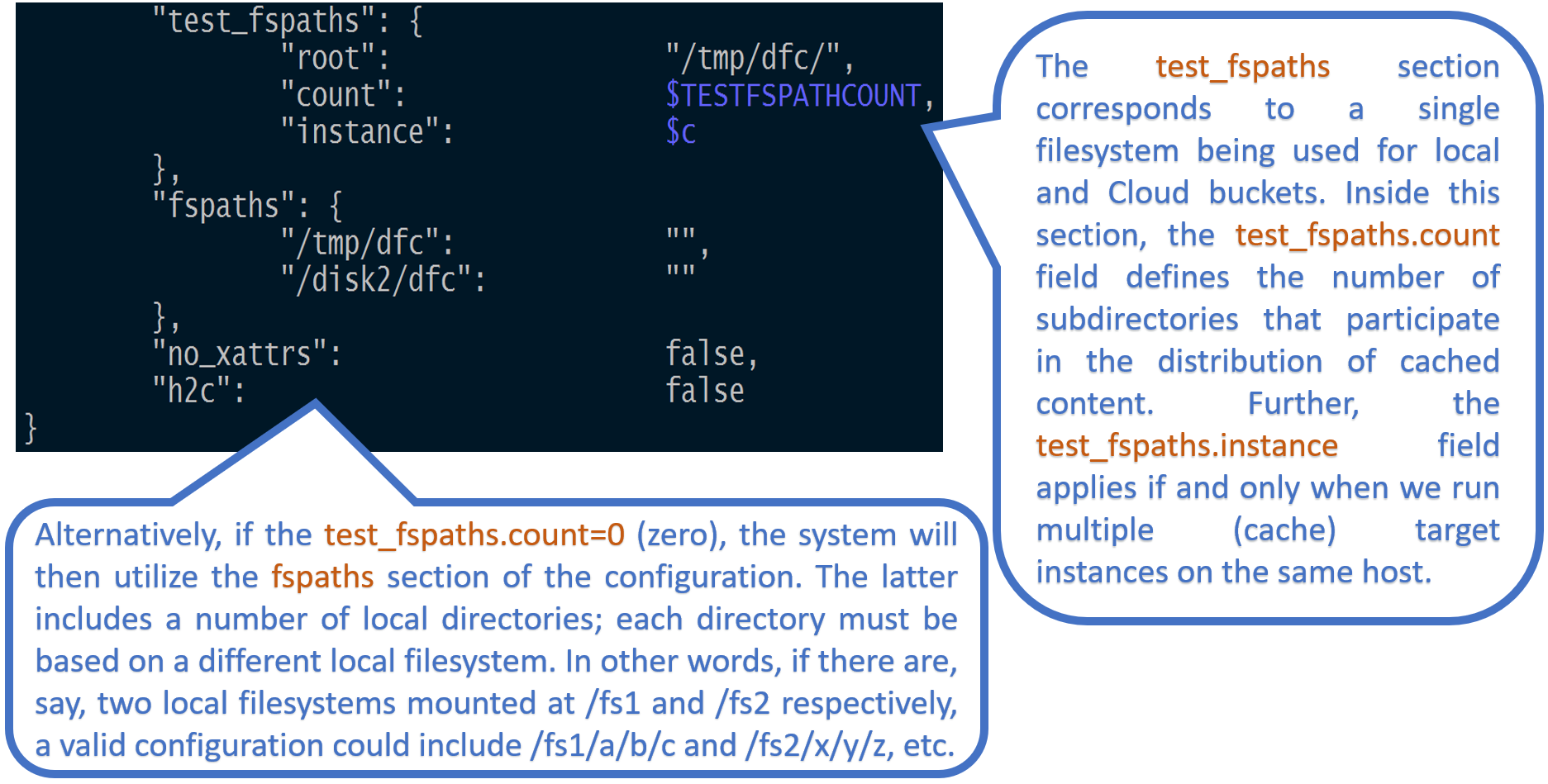

Further, test_fspaths section (see below) corresponds to a single local filesystem being partitioned between both local and Cloud buckets. In other words, the test_fspaths configuration option is intended strictly for development.

In production, we use an alternative configuration called fspaths: the section of the config that includes a number of local directories, whereby each directory is based on a different local filesystem solely utilizing one or more non shared disks.

For fspath and mountpath terminology and details, please see section Managing Mountpaths in this document.

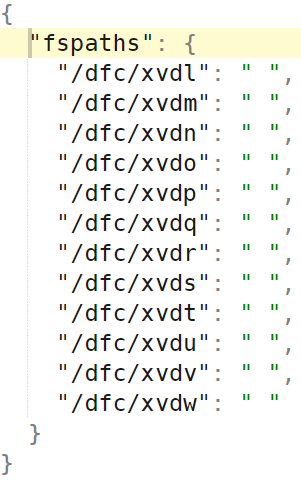

An example of 12 fspaths (and 12 local filesystems) follows below:

See also:

First, some basic facts:

/v1/daemon in its URL path./v1/cluster in its path.Both

daemonandclusterare the two RESTful resource abstractions supported by the API. Please see AIS API for naming conventions, RESTful resources, as well as API reference and details.

This will display all configuration sections and all the named knobs - i.e., configuration variables and their current values.

Most configuration options can be updated either on an individual (target or proxy) daemon, or the entire cluster. Some configurations are “overridable” and can be configured on a per-daemon basis. Some of these are shown in the table below.

For examples and alternative ways to format configuration-updating requests, please see the examples below.

Following is a table-summary that contains a subset of all settable knobs:

NOTE (May 2022): this table is somewhat outdated and must be revisited.

AIS command-line allows to override configuration at AIS node’s startup. For example:

As shown above, the CLI option in-question is: confjson.

Its value is a JSON-formatted map of string names and string values.

By default, the config provided in config_custom will be persisted on the disk.

To make it transient either add -transient=true flag or add additional JSON entry:

Another example. To override locally-configured address of the primary proxy, run:

To achieve the same on temporary basis, add -transient=true as follows:

Please see AIS command-line for other command-line options and details.

Configuration option fspaths specifies the list of local mountpath directories. Each configured fspath is, simply, a local directory that provides the basis for AIS mountpath.

In regards non-sharing of disks between mountpaths: for development we make an exception, such that multiple mountpaths are actually allowed to share a disk and coexist within a single filesystem. This is done strictly for development convenience, though.

AIStore REST API makes it possible to list, add, remove, enable, and disable a fspath (and, therefore, the corresponding local filesystem) at runtime. Filesystem’s health checker (FSHC) monitors the health of all local filesystems: a filesystem that “accumulates” I/O errors will be disabled and taken out, as far as the AIStore built-in mechanism of object distribution. For further details about FSHC, please refer to FSHC readme.

To make sure that AIStore does not utilize xattrs, configure:

checksum.type=noneversioning.enabled=true, andwrite_policy.md=neverfor all targets in AIStore cluster.

Or, simply update global configuration (to have those cluster-wide defaults later inherited by all newly created buckets).

This can be done via the common configuration “part” that’d be further used to deploy the cluster.

Extended attributes can be disabled on per bucket basis. To do this, turn off saving metadata to disks (CLI):

Disable extended attributes only if you need fast and temporary storage. Without xattrs, a node loses its objects after the node reboots. If extended attributes are disabled globally when deploying a cluster, node IDs are not permanent and a node can change its ID after it restarts.

To switch from HTTP protocol to an encrypted HTTPS, configure net.http.use_https=true and modify net.http.server_crt and net.http.server_key values so they point to your TLS certificate and key files respectively (see AIStore configuration).

The following HTTPS topics are also covered elsewhere:

Default installation enables filesystem health checker component called FSHC. FSHC can be also disabled via section “fshc” of the configuration.

When enabled, FSHC gets notified on every I/O error upon which it performs extensive checks on the corresponding local filesystem. One possible outcome of this health-checking process is that FSHC disables the faulty filesystems leaving the target with one filesystem less to distribute incoming data.

Please see FSHC readme for further details.

In addition to user-accessible public network, AIStore will optionally make use of the two other networks:

The way the corresponding config may look in production (e.g.) follows:

The fact that there are 3 logical networks is not a “limitation” - not a requirement to specifically have 3. Using the example above, here’s a small deployment-time change to run a single one:

Ideally though, production clusters are deployed over 3 physically different and isolated networks, whereby intense data traffic, for instance, does not introduce additional latency for the control one, etc.

Separately, there’s a multi-homing capability motivated by the fact that today’s server systems may often have, say, two 50Gbps network adapters. To deliver the entire 100Gbps without LACP trunking and (static) teaming, we could simply have something like:

No other changes. Just add the second NIC - second IPv4 addr 10.50.56.206 above, and that’s all.

The following assumes that G and T are the (hostname:port) of one of the deployed gateways (in a given AIS cluster) and one of the targets, respectively.

or, same:

Notice the two alternative ways to form the requests.

AIS CLI is an integrated management-and-monitoring command line tool. The following CLI command sequence, first - finds out all AIS knobs that contain substring “time” in their names, second - modifies list_timeout from 2 minutes to 5 minutes, and finally, displays the modified value:

The example above demonstrates cluster-wide configuration update but note: single-node updates are also supported.

periodic.stats_time = 1 minute, periodic.iostat_time_long = 4 secondsAIS configuration includes a section called disk. The disk in turn contains several knobs - one of those knobs is disk.iostat_time_long, another - disk.disk_util_low_wm. To update one or both of those named variables on all or one of the clustered nodes, you could:

disk.iostat_time_long = 3 seconds, disk.disk_util_low_wm = 40 percent on daemon with ID target1