AIStore + HuggingFace: Distributed Downloads for Large-Scale Machine Learning

Machine learning teams increasingly rely on large datasets from HuggingFace to power their models. But traditional download tools struggle with terabyte-scale datasets containing thousands of files, creating bottlenecks that slow development cycles.

This post introduces AIStore’s new HuggingFace download integration, which enables efficient downloads of large datasets with parallel batch jobs.

Sequential downloads create significant bottlenecks when dealing with complex datasets that have hundreds of thousands of files distributed across multiple directories.

AIStore addresses this by parallelizing downloads within each target using multiple workers (one per mountpath), batching jobs based on file size, and collecting file metadata in parallel. This approach leverages the network throughput from each individual target to the HuggingFace servers.

The following examples assume an active AIStore cluster. If the destination buckets (e.g., ais://datasets, ais://models) don’t exist, they will be created automatically with default properties.

AIStore’s CLI includes HuggingFace-specific flags for the ais download command that handle distributed operations behind the scenes.

The system relies on two techniques to improve download performance: job batching and concurrent metadata collection.

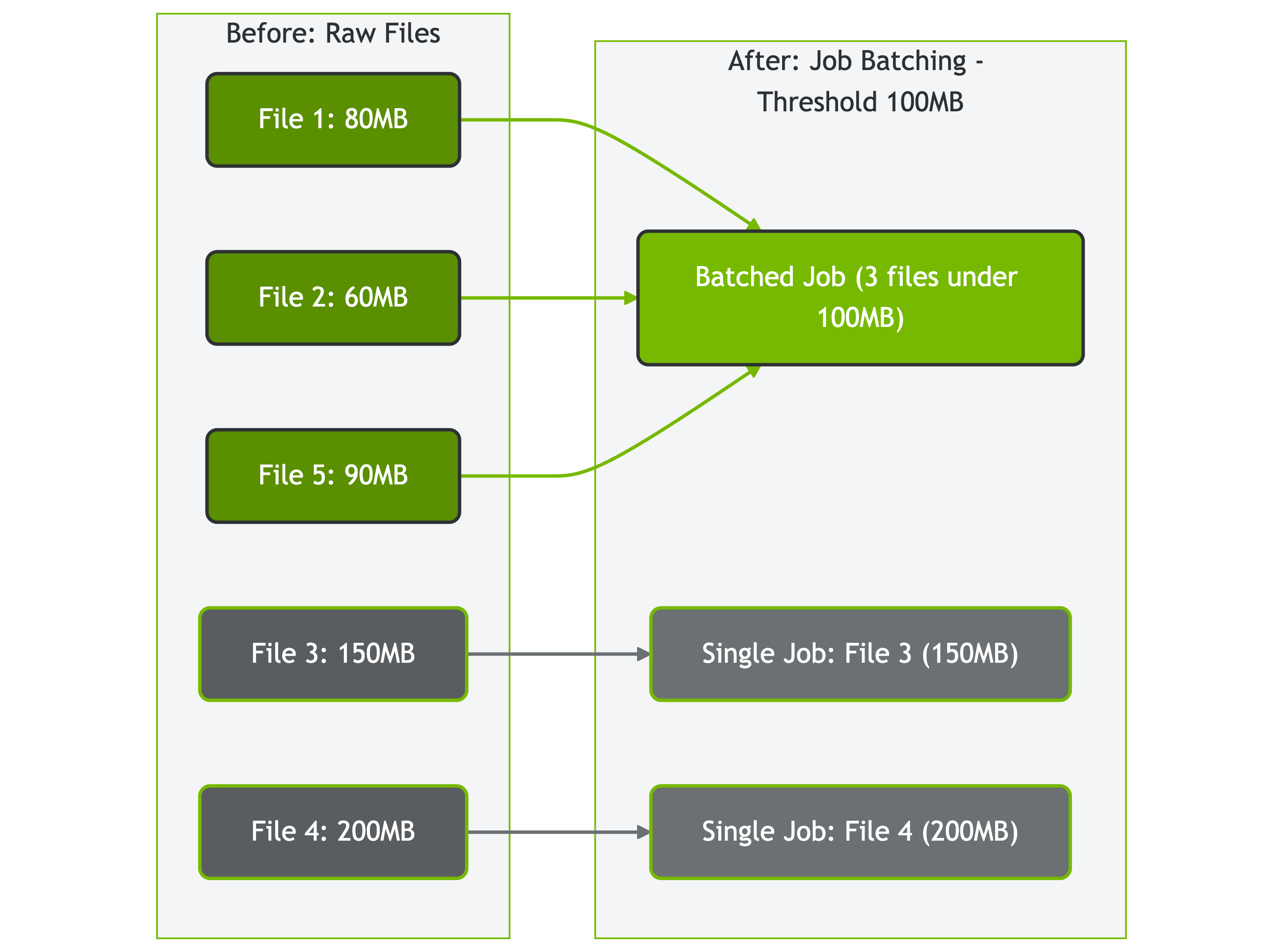

Job batching sorts files into two groups according to a configurable size threshold.

Figure: How AIStore batches files based on size threshold (100MB in this example)
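
To make the batching idea concrete, here is a minimal Python sketch. It is not AIStore’s implementation: the `DownloadJob` type, the `plan_jobs` function, and the `max_batch_files` parameter are hypothetical names, and the policy itself (one job per file above the threshold, shared jobs for everything else) is an assumption chosen for illustration. Only the two-group split and the 100MB example threshold come from the description above.

```python
from dataclasses import dataclass
from typing import Dict, List

MB = 1024 * 1024


@dataclass
class DownloadJob:
    """One unit of work handed to a download worker (hypothetical type)."""
    files: List[str]
    total_bytes: int


def plan_jobs(sizes: Dict[str, int], threshold: int = 100 * MB,
              max_batch_files: int = 3) -> List[DownloadJob]:
    """Split files into per-file jobs (large) and shared jobs (small)."""
    jobs: List[DownloadJob] = []
    small: List[str] = []
    for name, size in sorted(sizes.items()):
        if size > threshold:
            # Assumption: a file above the threshold gets a job of its own.
            jobs.append(DownloadJob([name], size))
        else:
            small.append(name)
    # Assumption: files at or below the threshold share jobs,
    # at most `max_batch_files` per job.
    for i in range(0, len(small), max_batch_files):
        chunk = small[i:i + max_batch_files]
        jobs.append(DownloadJob(chunk, sum(sizes[n] for n in chunk)))
    return jobs
```

With a 500MB file, a 150MB file, and two files under 100MB, this plan yields three jobs: one for each large file and a single shared job for the two small ones.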

Before downloading files, AIStore makes parallel HEAD requests to the HuggingFace API to collect file metadata (like file sizes) concurrently rather than sequentially. This reduces setup time for datasets with many files.
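
The concurrent metadata step can be sketched in a few lines of Python. This is an illustration of the general technique, not AIStore’s code: `head_size` and `collect_sizes` are hypothetical helpers, the worker count is arbitrary, and we assume the server reports the file size in a `Content-Length` header.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, Iterable
from urllib.request import Request, urlopen


def head_size(url: str, timeout: float = 10.0) -> int:
    """Return the size a server reports for `url` via a HEAD request."""
    req = Request(url, method="HEAD")
    with urlopen(req, timeout=timeout) as resp:
        # Assumption: the response carries Content-Length; -1 marks "unknown".
        return int(resp.headers.get("Content-Length", -1))


def collect_sizes(urls: Iterable[str],
                  fetch: Callable[[str], int] = head_size,
                  max_workers: int = 16) -> Dict[str, int]:
    """Fetch sizes for all URLs concurrently and map each URL to its size."""
    url_list = list(urls)
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map preserves input order, so zip pairs each URL with its size.
        sizes = list(pool.map(fetch, url_list))
    return dict(zip(url_list, sizes))
```

Because each HEAD request spends most of its time waiting on the network, a thread pool is enough to overlap them. The `fetch` argument is injectable so the collection logic can be exercised without touching a real server.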

Let’s walk through an example downloading a machine learning dataset and processing it with ETL operations:

For this walkthrough, we’ll create and use three buckets:

- ais://deepvs - for the initial dataset download
- ais://ml-dataset - for ETL-processed files
- ais://ml-dataset-parsed - for the final parsed dataset

If these buckets don’t exist, they will be created automatically with default properties.

At this point, you have several options:

Why transform? HuggingFace datasets often have complex paths or formats that benefit from standardization. This walkthrough demonstrates ETL transformations for file organization (consistent naming) and format conversion (Parquet → JSON for framework compatibility).
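
To give a feel for what “consistent naming” can mean, here is a small Python sketch of one possible renaming rule. It is a plain function, not an AIStore ETL container, and both the `standardize_name` helper and the flattening rule are hypothetical. The actual transformation depends on how you want your bucket organized.

```python
import re
from pathlib import PurePosixPath


def standardize_name(path: str) -> str:
    """Flatten a nested object path into one lowercase, dash-separated name."""
    p = PurePosixPath(path)
    # Drop the extension from the last component; it is re-attached at the end.
    parts = list(p.parts[:-1]) + [p.stem]
    slug = "-".join(parts).lower()
    # Replace anything outside [a-z0-9-] with a dash, then collapse repeats.
    slug = re.sub(r"[^a-z0-9-]+", "-", slug)
    slug = re.sub(r"-{2,}", "-", slug).strip("-")
    return slug + p.suffix.lower()
```

Under this rule, a nested path such as `Default/Train/0000.parquet` becomes `default-train-0000.parquet`.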

Note: ETL operations require AIStore to be deployed on Kubernetes. See ETL documentation for deployment requirements and setup instructions.

Before applying transformations, initialize the required ETL containers:

AIStore integrates seamlessly with popular ML frameworks. Here’s how to use the processed dataset in your training pipeline:
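
As a framework-agnostic sketch of the reading side, the generator below turns stored objects into training records. Everything here is an assumption made for illustration: `iter_records` is a hypothetical helper, `read_object` stands in for whatever call you use to fetch an object’s bytes from AIStore, and the parsed files are assumed to hold one JSON record per line.

```python
import json
from typing import Callable, Dict, Iterable, Iterator


def iter_records(object_names: Iterable[str],
                 read_object: Callable[[str], bytes]) -> Iterator[Dict]:
    """Yield one parsed record per non-empty JSON line across all objects."""
    for name in object_names:
        # `read_object` is a placeholder for the real object read.
        payload = read_object(name).decode("utf-8")
        for line in payload.splitlines():
            if line.strip():
                yield json.loads(line)
```

A generator like this can be wrapped in your framework’s iterable dataset abstraction, so records stream into the training loop without first copying the whole dataset to local disk.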

The HuggingFace integration opens up some practical areas for expansion:

Download and Transform API: AIStore supports combining download and ETL transformation in a single API call, eliminating the two-step process shown in the walkthrough. This allows downloading HuggingFace datasets with immediate transformation (e.g., Parquet → JSON) in one operation. CLI integration for this functionality is in development.

Additional Dataset Formats: Beyond the current Parquet support, HuggingFace datasets are available in multiple formats that teams commonly need:

AIStore’s HuggingFace integration addresses common dataset download bottlenecks in machine learning workflows. Job batching and concurrent metadata collection enable efficient, parallel downloads of terabyte-scale datasets that would otherwise overwhelm traditional tools. Once stored in AIStore, teams can leverage local ETL operations to transform and prepare data without additional network transfers. This approach provides a streamlined path from raw downloads to training-ready datasets, eliminating the typical download-wait-process cycle that slows ML development.

AIStore Core Documentation

ETL (Extract, Transform, Load) Resources

External Resources