Algorithm Selection and Reproducible Builds

By default, TensorRT’s optimizer chooses the algorithms that globally minimize the engine’s execution time. It does this by timing each implementation. When implementations have similar timings, system noise can determine which one is chosen on a particular run of the builder. Different implementations typically accumulate floating-point values in a different order, and two implementations can use different algorithms or even run at different precisions. As a result, different invocations of the builder typically do not produce engines that return bit-identical results.
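To see why accumulation order alone can change results, consider the following minimal sketch. It uses plain Python floats (FP64) rather than TensorRT kernels, and the values are chosen purely for illustration. The same effect applies to FP32 and FP16 accumulation inside an engine.

```python
# Floating-point addition is not associative, so two kernels that sum the
# same values in a different order can return different bits.
values = [0.1, 0.2, 0.3]

left_to_right = (values[0] + values[1]) + values[2]
right_to_left = values[0] + (values[1] + values[2])

print(left_to_right == right_to_left)  # False
print(left_to_right, right_to_left)
```

The two sums differ only in the last bit, which is enough to break bit-identical comparisons between engines built with different tactics.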

Sometimes it is important to have a deterministic build or to recreate an earlier build’s algorithm choices. Earlier versions of TensorRT met these requirements through IAlgorithmSelector. Current versions use the editable timing cache instead.

When you build the engine for the first time, supply the BuilderFlag::kEDITABLE_TIMING_CACHE flag to TensorRT to enable the editable cache, and enable and retain the logs and cache files. The logs provide the name, key, available tactics, and selected tactic for each model layer. The cache file records the decisions made by TensorRT.

The next time you build the same engine, supply the same flags and use ITimingCache::update to edit the cache, assigning the tactics you want to specific layers. Then pass the cache to TensorRT, which uses the newly assigned tactics during the build. Unlike with IAlgorithmSelector, only one tactic can be assigned to each layer.

Strongly Typed Networks

By default, TensorRT autotunes tensor types to generate the fastest engine. This can result in accuracy loss when model accuracy requires a layer to run with higher precision than TensorRT chooses. One approach is to use the ILayer::setPrecision and ILayer::setOutputType APIs to control a layer’s I/O types and, hence, its execution precision. This approach works, but figuring out which layers must be run at high precision to get the best accuracy can be challenging.

An alternative approach is to specify low-precision use in the model itself, for example with AutoCast and Quantization from Model-Optimizer, and have TensorRT adhere to those precision specifications. TensorRT will still autotune over different data layouts to find an optimal set of kernels for the network.

When you specify that a network is strongly typed, TensorRT infers a type for each intermediate and output tensor using the rules in the operator type specification. TensorRT adheres to these inferred types while building the engine.

Because types are not autotuned, an engine built from a strongly typed network can be slower than one where TensorRT chooses tensor types. However, the build time can improve because fewer kernel alternatives are evaluated.

Strongly typed networks are not supported with DLA.

You can create a strongly typed network as follows:

IBuilder* builder = ...;
INetworkDefinition* network = builder->createNetworkV2(1U << static_cast<uint32_t>(NetworkDefinitionCreationFlag::kSTRONGLY_TYPED));

builder = trt.Builder(...)
network = builder.create_network(1 << int(trt.NetworkDefinitionCreationFlag.STRONGLY_TYPED))

For strongly typed networks, the layer APIs setPrecision and setOutputType are not permitted, nor are the builder precision flags kFP16, kBF16, kFP8, kINT8, kINT4, and kFP4. The builder flag kTF32 is permitted as it controls TF32 Tensor Core usage for FP32 types rather than controlling the use of TF32 data types.

Reduced Precision in Weakly-Typed Networks

Network-Level Control of Precision

By default, TensorRT works with 32-bit precision but can also execute operations using 16-bit floating-point and 8-bit quantized types. Using lower precision requires less memory and enables faster computation.
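The memory side of that trade-off, and the rounding cost that comes with it, can be illustrated without TensorRT. The sketch below uses Python's struct module to store the same illustrative weights as FP32 and as FP16. The weight values are made up for the example.

```python
import struct

# Illustrative weights only: 0.1, 0.2, ..., 100.0
weights = [0.1 * i for i in range(1, 1001)]

fp32_bytes = struct.pack(f"<{len(weights)}f", *weights)  # 4 bytes per value
fp16_bytes = struct.pack(f"<{len(weights)}e", *weights)  # 2 bytes per value

# Round-trip through FP16 to measure the rounding error the smaller format adds.
fp16_values = struct.unpack(f"<{len(weights)}e", fp16_bytes)
max_rel_error = max(abs(h - w) / w for h, w in zip(fp16_values, weights))

print(len(fp32_bytes), len(fp16_bytes))  # 4000 2000
print(max_rel_error)                     # bounded by 2**-11 for normal FP16 values
```

Halving the storage costs up to about three decimal digits of precision per value, which is why some layers need to stay at higher precision.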

Reduced precision support depends on your hardware (refer to Hardware and Precision). You can query the builder to check which precisions a platform supports:

if (builder->platformHasFastFp16()) { ... }

if builder.platform_has_fast_fp16:
    ...

Setting flags in the builder configuration informs TensorRT that it can select lower-precision implementations:

config->setFlag(BuilderFlag::kFP16);

config.set_flag(trt.BuilderFlag.FP16)

The precision flags, including FP16, BF16, INT8, and TF32, can be enabled independently. TensorRT will still choose a higher-precision kernel if it results in a lower runtime or if no low-precision implementation exists.

When TensorRT chooses a precision for a layer, it automatically converts weights as necessary to run the layer.

While using FP16 and TF32 precisions is relatively straightforward, working with INT8 adds additional complexity. For more information, refer to the Working with Quantized Types section.

Note that even if the precision flags are enabled, the engine’s input/output bindings default to FP32. Refer to the I/O Formats section for information on how to set the data types and formats of the input/output bindings.

Layer-Level Control of Precision

The builder flags provide permissive, coarse-grained control. However, sometimes, part of a network requires a higher dynamic range or is sensitive to numerical precision. You can constrain the input and output types on a per-layer basis:

layer->setPrecision(DataType::kHALF);

layer.precision = trt.float16

This provides a preferred type (here, DataType::kHALF) for the inputs and outputs.

You can also set preferred types for the layer’s outputs:

layer->setOutputType(out_tensor_index, DataType::kFLOAT);

layer.set_output_type(out_tensor_index, trt.float32)

The computation will use the same floating-point type as the inputs. Most TensorRT implementations have the same floating-point types for input and output; however, Convolution, Deconvolution, and FullyConnected can support quantized INT8 input and unquantized FP16 or FP32 output, as sometimes working with higher-precision outputs from quantized inputs is necessary to preserve accuracy.
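As a rough illustration of why an unquantized output from quantized inputs can preserve accuracy, the sketch below computes one dot product with INT8 operands and compares a floating-point output against an output rounded back to INT8. The scales and values are hypothetical, and symmetric per-tensor quantization is an assumption of this example rather than a description of a specific TensorRT kernel.

```python
# Hypothetical symmetric quantization scales; real values would come from
# calibration or from Q/DQ nodes in the model.
INPUT_SCALE = 0.02
WEIGHT_SCALE = 0.01
OUTPUT_SCALE = 0.05

x_q = [127, -50, 33]  # INT8 activations
w_q = [12, 100, -7]   # INT8 weights

# Integer products are accumulated exactly in a wide integer.
acc = sum(x * w for x, w in zip(x_q, w_q))

# Unquantized output: rescale the accumulator to a floating-point value.
y_float = acc * INPUT_SCALE * WEIGHT_SCALE

# Quantized output: round to the INT8 grid defined by OUTPUT_SCALE, then clamp.
y_q = max(-128, min(127, round(y_float / OUTPUT_SCALE)))
y_requantized = y_q * OUTPUT_SCALE

print(acc, y_float, y_requantized)
```

The floating-point output keeps the value implied by the integer accumulator, while the requantized output snaps to the nearest multiple of the output scale.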

Setting the precision constraint hints to TensorRT that it should select a layer implementation whose inputs and outputs match the preferred types, inserting reformat operations if the outputs of the previous layer and the inputs to the next layer do not match the requested types. Note that TensorRT will only be able to select an implementation with these types if they are also enabled using the flags in the builder configuration.

By default, TensorRT chooses such an implementation only if it results in a higher-performance network. If another implementation is faster, TensorRT will use it and issue a warning. You can override this behavior by preferring the type constraints in the builder configuration.

config->setFlag(BuilderFlag::kPREFER_PRECISION_CONSTRAINTS);

config.set_flag(trt.BuilderFlag.PREFER_PRECISION_CONSTRAINTS)

If the constraints are preferred, TensorRT obeys them unless there is no implementation with the preferred precision constraints, in which case it issues a warning and uses the fastest available implementation.

To change the warning to an error, use OBEY instead of PREFER:

config->setFlag(BuilderFlag::kOBEY_PRECISION_CONSTRAINTS);

config.set_flag(trt.BuilderFlag.OBEY_PRECISION_CONSTRAINTS)
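The difference between the default behavior, PREFER, and OBEY can be summarized with a toy decision function. This is only a model of the rules described above, not TensorRT's actual selection logic. The function name, the candidate tuples, and the timings are all hypothetical.

```python
def choose_implementation(candidates, preferred, mode="default"):
    """Toy model of the precision-constraint rules described in the text.

    candidates: list of (name, dtype, time_ms) tuples with made-up timings.
    Returns (chosen_name, warning_or_None). Raises RuntimeError in "obey"
    mode when no candidate has the preferred type.
    """
    fastest = min(candidates, key=lambda c: c[2])
    matching = [c for c in candidates if c[1] == preferred]

    if mode == "default":
        # Speed wins; a constraint that loses only produces a warning.
        if fastest[1] == preferred:
            return fastest[0], None
        return fastest[0], "constraint ignored: a faster implementation exists"

    if matching:
        # PREFER and OBEY both honor the constraint when it can be met.
        return min(matching, key=lambda c: c[2])[0], None
    if mode == "prefer":
        return fastest[0], "constraint ignored: no matching implementation"
    raise RuntimeError("no implementation satisfies the precision constraint")


kernels = [("fp16_kernel", "fp16", 1.0), ("fp32_kernel", "fp32", 1.5)]
print(choose_implementation(kernels, "fp32"))            # fp16_kernel, with a warning
print(choose_implementation(kernels, "fp32", "prefer"))  # fp32_kernel, no warning
```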

sampleINT8API illustrates the use of reduced precision with these APIs.

Precision constraints are optional—you can query whether a constraint has been set using layer->precisionIsSet() in C++ or layer.precision_is_set in Python. If a precision constraint is not set, the result returned from layer->getPrecision() in C++ or reading the precision attribute in Python is not meaningful. Output type constraints are similarly optional.

If no constraints are set using the ILayer::setPrecision or ILayer::setOutputType API, then BuilderFlag::kPREFER_PRECISION_CONSTRAINTS and BuilderFlag::kOBEY_PRECISION_CONSTRAINTS are ignored. In that case, TensorRT can choose any precision or output type for a layer from those the builder flags allow.

Note that the ITensor::setType() API does not set the precision constraint of a tensor unless it is one of the input/output tensors of the network. Also, there is a distinction between layer->setOutputType() and layer->getOutput(i)->setType(). The former is an optional type constraining the implementation TensorRT will choose for a layer. The latter specifies the type of a network’s input/output and is ignored if the tensor is not a network input/output. If they are different, TensorRT will insert a cast to ensure that both specifications are respected. If you call setOutputType() for a layer that produces a network output, you should generally configure the corresponding network output to have the same type.

TF32

TensorRT allows the use of TF32 Tensor Cores by default. When computing inner products, such as during convolution or matrix multiplication, TF32 execution does the following:

Rounds the FP32 multiplicands to FP16 precision but keeps the FP32 dynamic range.

Computes an exact product of the rounded multiplicands.

Accumulates the products in an FP32 sum.
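The three steps above can be emulated in software to get a feel for the numerics. The sketch below is an approximation under stated assumptions: it rounds to 10 explicit mantissa bits using round-to-nearest-even (the hardware rounding mode is an assumption here), handles finite inputs only, and models FP32 accumulation by rounding each partial sum to FP32. The helper names are hypothetical.

```python
import struct


def to_fp32(x: float) -> float:
    """Round a Python float (FP64) to the nearest FP32 value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]


def round_to_tf32(x: float) -> float:
    """Keep FP32's 8 exponent bits but only 10 explicit mantissa bits."""
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    # Round to nearest, ties to even, on the 13 low mantissa bits being dropped.
    bits += 0x0FFF + ((bits >> 13) & 1)
    bits &= 0xFFFFE000
    return struct.unpack("<f", struct.pack("<I", bits))[0]


def tf32_dot(a, b):
    """Inner product with TF32-rounded multiplicands and FP32 accumulation."""
    total = 0.0
    for x, y in zip(a, b):
        product = round_to_tf32(x) * round_to_tf32(y)  # exact in FP64
        total = to_fp32(total + product)               # emulated FP32 sum
    return total


print(round_to_tf32(1.0001))                 # 1.0: the low mantissa bits are lost
print(tf32_dot([1.0, 2.0], [3.0, 4.0]))      # 11.0
print(round_to_tf32(1e30))                   # still finite, unlike FP16
```

The last line shows the point of the format: a value far outside the FP16 range survives with FP16-like precision.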

TF32 Tensor Cores can speed up networks using FP32, typically with no loss of accuracy. TF32 is more robust than FP16 for models that require a high dynamic range (HDR) for weights or activations.

There is no guarantee that TF32 Tensor Cores are used, and there is no way to force the implementation to use them—TensorRT can fall back to FP32 at any time and always falls back if the platform does not support TF32. However, you can disable their use by clearing the TF32 builder flag.

config->clearFlag(BuilderFlag::kTF32);

config.clear_flag(trt.BuilderFlag.TF32)

Setting the environment variable NVIDIA_TF32_OVERRIDE=0 when building an engine disables the use of TF32 despite setting BuilderFlag::kTF32. When set to 0, this environment variable overrides any defaults or programmatic configuration of NVIDIA libraries, so they never accelerate FP32 computations with TF32 Tensor Cores. This is meant to be a debugging tool only, and no code outside NVIDIA libraries should change the behavior based on this environment variable. Any other setting besides 0 is reserved for future use.

Warning

Setting the environment variable NVIDIA_TF32_OVERRIDE to a different value when running the engine can cause unpredictable precision/performance effects. It is best left unset when an engine is run.
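One way to respect both points when debugging is to set the variable only in the environment of the process that builds the engine, leaving the parent process untouched. The sketch below shows that scoping with Python's subprocess module. The child command is a stand-in that merely echoes the variable. A real build command would take its place, and nothing here is a TensorRT API.

```python
import os
import subprocess
import sys

# Copy the current environment and disable TF32 only for the child process.
build_env = dict(os.environ, NVIDIA_TF32_OVERRIDE="0")

# Stand-in for a real engine build command (hypothetical); it only reports
# the value the child process sees.
build_cmd = [
    sys.executable,
    "-c",
    "import os; print(os.environ.get('NVIDIA_TF32_OVERRIDE'))",
]

result = subprocess.run(
    build_cmd, env=build_env, capture_output=True, text=True, check=True
)
print(result.stdout.strip())  # 0
```

Because os.environ itself is never modified, code that later runs the engine in the parent process does not inherit the override.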

Note

Unless your application requires the higher dynamic range provided by TF32, FP16 will be a better solution since it almost always yields faster performance.

BF16

TensorRT supports the bfloat16 (brain float) floating point format on NVIDIA Ampere and later architectures. Like other precisions, it can be enabled using the corresponding builder flag:

config->setFlag(BuilderFlag::kBF16);

config.set_flag(trt.BuilderFlag.BF16)

Note that not all layers support bfloat16. For more information, refer to the TensorRT Operator documentation.

Control of Computational Precision

Sometimes, it is desirable to control the internal precision of the computation in addition to setting the input and output precisions for an operator. TensorRT selects the computational precision by default based on the layer input type and global performance considerations.

There are two layers where TensorRT provides additional capabilities to control computational precision:

The INormalizationLayer provides a setPrecision method to control the precision of accumulation. By default, to avoid overflow errors, TensorRT accumulates in FP32, even in mixed precision mode, regardless of builder flags. You can use this method to specify FP16 accumulation instead.

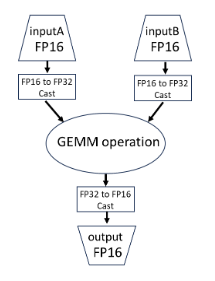

For the IMatrixMultiplyLayer, TensorRT, by default, selects accumulation precision based on the input types and performance considerations. However, the accumulation type is guaranteed to have a range at least as great as the input types. When using strongly typed mode, you can enforce FP32 precision for FP16 GEMMs by casting the inputs to FP32. TensorRT recognizes this pattern and fuses the casts with the GEMM, resulting in a single kernel with FP16 inputs and FP32 accumulation.
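The overflow risk that motivates FP32 accumulation in both layers can be reproduced with a small emulation. The sketch below sums squares, as a normalization or an inner product would, once with every partial sum rounded to FP16 and once rounded to FP32. The helpers are hypothetical and emulate rounding in software. They do not call TensorRT.

```python
import math
import struct


def to_fp16(x: float) -> float:
    """Round to FP16; values beyond the FP16 range become infinity."""
    try:
        return struct.unpack("<e", struct.pack("<e", x))[0]
    except OverflowError:
        return math.copysign(math.inf, x)


def to_fp32(x: float) -> float:
    """Round to FP32."""
    return struct.unpack("<f", struct.pack("<f", x))[0]


def sum_of_squares(values, rounder):
    """Accumulate v*v, rounding every partial sum with `rounder`."""
    total = 0.0
    for v in values:
        total = rounder(total + v * v)
    return total


# 512 inputs that are exactly representable in FP16; the true result is 131072.
values = [16.0] * 512

print(sum_of_squares(values, to_fp16))  # inf: the running sum exceeds the FP16 maximum of 65504
print(sum_of_squares(values, to_fp32))  # 131072.0
```

The inputs and each individual product fit comfortably in FP16. Only the accumulator overflows, which is why the accumulation type matters independently of the input type.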