Overview

The NVIDIA TAO Toolkit is used with NVIDIA pre-trained models to create custom Computer Vision (CV) and Conversational AI models with the user’s own data. Training AI models using TAO Toolkit does not require expertise in AI or deep learning. A simplified Command Line Interface (CLI) abstracts away AI framework complexity, enabling users to build production-quality AI models using a simple spec file and one of the NVIDIA pre-trained models. With a basic understanding of deep learning and minimal to zero coding required, TAO Toolkit users are able to:

Fine-tune models for CV use cases such as object detection, image classification, segmentation, CR, and key point estimation using NVIDIA pre-trained CV models.

Fine-tune models for Conversational AI use cases such as Automatic Speech Recognition (ASR) or Natural Language Processing (NLP) using NVIDIA pre-trained Conversational AI models.

Add new classes to an existing pre-trained model.

Re-train a model to adapt to different use cases.

Use the model pruning capability on CV models to reduce the overall size of the model.

TAO Toolkit users create custom AI models by modifying the training hyperparameters defined in each spec file. This guide includes sample spec files and parameter definitions for all models supported by TAO. Refer to the corresponding Computer Vision or Conversational AI section for the desired model. Along with creating accurate AI models, the TAO Toolkit can also optimize models for inference to achieve the highest throughput for deployment.

What is Transfer Learning?

Transfer learning is the process of transferring learned features from one application to another. It is a commonly used training technique where a model trained on one task is re-trained for use on a different task. This works surprisingly well as many of the early layers in a neural network are the same for similar tasks. For example, many of the early layers in a convolutional neural network used for a CV model are primarily used to identify outlines, curves, and other features in an image. The learned features from these layers can be applied to similar tasks carrying out the same identification in other domains. With transfer learning, less data is required to accurately train a model compared to training that same model from scratch. To learn more about transfer learning, read this blog.
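The idea of reusing early layers can be illustrated with a small, self-contained sketch. It is not TAO code: the frozen weights, the toy dataset, and every function name below are assumptions made up for illustration. A fixed feature extractor stands in for the pre-trained early layers, and only a new logistic-regression head is trained on the new task.

```python
import math

# Hypothetical "pre-trained" early layers: a fixed linear map followed by ReLU.
FROZEN_WEIGHTS = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.0, -1.0]]

# Hypothetical new task: label is 1 when the first coordinate is positive.
NEW_TASK_DATA = [((1.0, 0.5), 1), ((0.8, -0.3), 1), ((-1.0, 0.2), 0), ((-0.7, -0.6), 0)]


def extract_features(x, frozen_weights):
    """Apply the frozen layers; these weights are never updated."""
    return [max(0.0, sum(w * xi for w, xi in zip(row, x))) for row in frozen_weights]


def predict(x, frozen_weights, head, bias):
    """Probability of class 1 from the frozen features and the trainable head."""
    feats = extract_features(x, frozen_weights)
    z = sum(h * f for h, f in zip(head, feats)) + bias
    return 1.0 / (1.0 + math.exp(-z))


def mean_loss(data, frozen_weights, head, bias):
    """Average binary cross-entropy over the dataset."""
    total = 0.0
    for x, y in data:
        p = predict(x, frozen_weights, head, bias)
        total -= y * math.log(p) + (1 - y) * math.log(1 - p)
    return total / len(data)


def train_head(data, frozen_weights, epochs=200, lr=0.1):
    """Train only the new head with plain stochastic gradient descent."""
    head = [0.0] * len(frozen_weights)
    bias = 0.0
    for _ in range(epochs):
        for x, y in data:
            feats = extract_features(x, frozen_weights)
            error = predict(x, frozen_weights, head, bias) - y
            head = [h - lr * error * f for h, f in zip(head, feats)]
            bias -= lr * error
    return head, bias


head, bias = train_head(NEW_TASK_DATA, FROZEN_WEIGHTS)
```

Because only the head is updated, the number of trainable parameters is small, which is one intuition for why transfer learning can work with less data than training from scratch.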

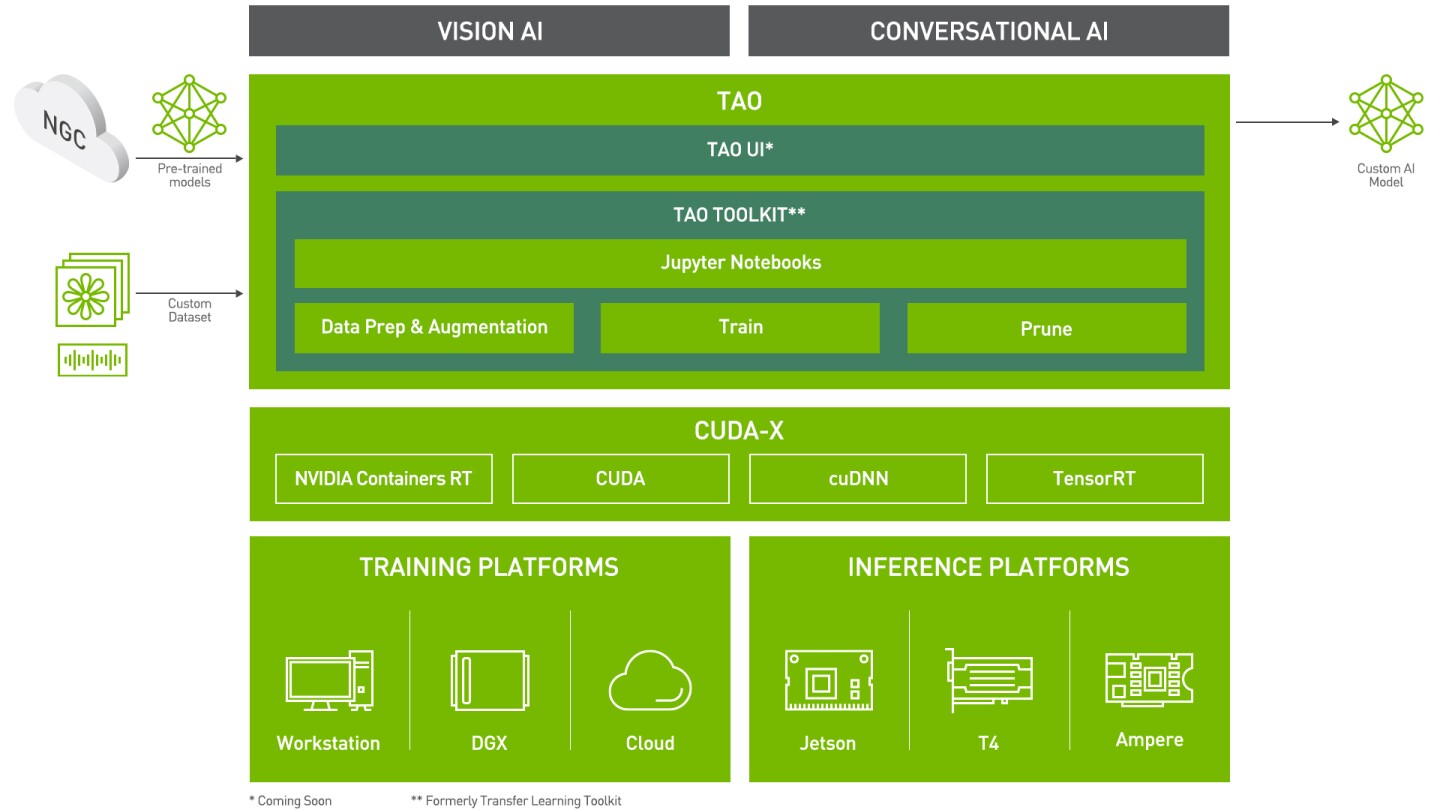

TAO Toolkit is a Python package hosted on the NVIDIA Python Package Index. It interacts with lower-level TAO Docker containers available from the NVIDIA GPU Accelerated Container Registry (NGC); TAO containers come pre-installed with all dependencies required for training. The CLI is run from Jupyter notebooks packaged inside each Docker container and consists of a few simple commands such as data augmentation, train, evaluate, infer, prune, and export. The output of the TAO workflow is a trained model that can be deployed for inference on NVIDIA devices using DeepStream, TensorRT, Riva, and the TAO CV Inference Pipeline.

The TAO application layer is built on top of CUDA-X which contains all the lower level NVIDIA libraries. These include NVIDIA Container Runtime for GPU acceleration, CUDA and cuDNN for deep learning (DL) operations, and TensorRT for optimization and generating TensorRT compatible models for deployment. TensorRT is the NVIDIA inference optimization and runtime engine. Models that are generated with TAO are completely accelerated for TensorRT, so users can expect maximum inference performance without any extra effort.

With TAO Toolkit, users can train CV models for tasks such as object detection, image classification, instance and semantic segmentation, optical character recognition (OCR), facial landmark estimation, gaze estimation, and more. The vision modality is used to extract insights from images or videos. For more information about all the CV models, refer to the Computer Vision section of this documentation.

Users can also train Conversational AI models. Conversational AI refers to a set of technologies that enable building systems capable of holding a free-flowing conversation. Building Conversational AI systems and applications is generally hard, and tailoring them to the needs of your enterprise is even harder. If you are interested in using TAO for Conversational AI applications, you can find more details in the ASR and NLP sections of this documentation. To learn more about deployment with Riva, refer to the Riva documentation and the section on Integrating TAO Trained Models to Riva; there you can find more details on how to use Riva for deploying Conversational AI models trained using TAO.

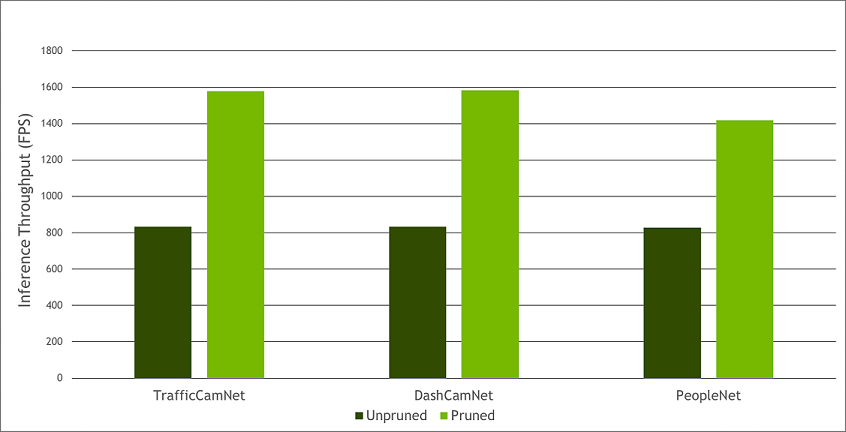

Model pruning is one of the key differentiators for TAO Toolkit. Pruning involves removing nodes in the neural network that contribute less to the overall accuracy of the model. With pruning, users are able to reduce the overall size of the model significantly, resulting in a much lower memory footprint and higher inference throughput, both of which are very important for edge deployment. Pruning is currently supported on most CV models but not on Conversational AI models. The following graph provides an example of performance gains achieved when going from an unpruned CV model to a pruned CV model (inference was run on an NVIDIA T4; TrafficCamNet, DashCamNet, and PeopleNet are three of the custom pre-trained models that are available on NGC).

Pruned vs Unpruned Performance

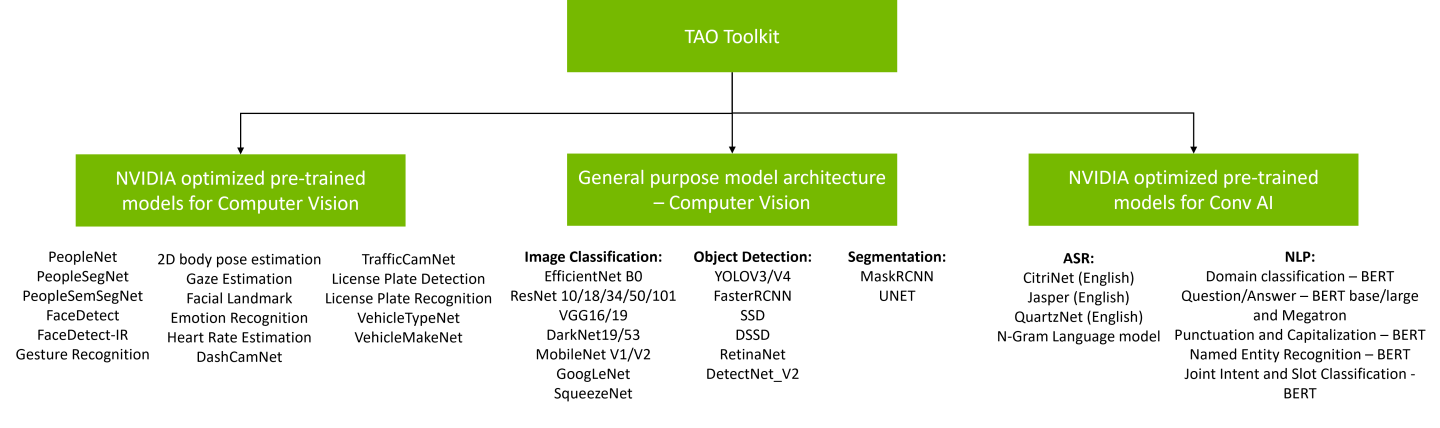
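To make the pruning idea described above concrete, here is a minimal sketch of magnitude-based filter pruning. The text does not say which criterion TAO uses to decide how much a node contributes, so the L2-norm ranking, the normalized threshold, and the function names are assumptions chosen for illustration.

```python
import math


def l2_norm(weights):
    """Euclidean norm of one filter's flattened weights."""
    return math.sqrt(sum(w * w for w in weights))


def prune_filters(filters, threshold):
    """Keep filters whose norm, relative to the largest norm, meets the threshold.

    Returns the surviving filters and their original indices.
    """
    norms = [l2_norm(f) for f in filters]
    largest = max(norms)
    if largest == 0.0:
        # Degenerate layer: nothing can be ranked, so keep everything.
        return list(filters), list(range(len(filters)))
    keep = [i for i, n in enumerate(norms) if n / largest >= threshold]
    return [filters[i] for i in keep], keep


# Hypothetical layer with four filters of two weights each.
layer = [[3.0, 4.0], [0.1, 0.0], [0.0, 2.5], [0.3, 0.4]]
pruned, kept_indices = prune_filters(layer, threshold=0.3)
```

In this toy layer the two filters with small norms are dropped, halving the layer. A higher threshold removes more filters and makes the model smaller, at the cost of a larger accuracy drop that has to be recovered by re-training.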

There are two types of pre-trained models that users can start with:

Purpose-built pre-trained models. These are highly accurate models that are trained on thousands of data inputs for a specific task. These domain-focused models can either be used directly for inference or be used with TAO Toolkit for transfer learning on the user’s own dataset.

General purpose vision models. The pre-trained weights for these models merely act as a starting point to build more complex models. For computer vision use cases, these pre-trained weights are trained on the Open Images dataset, and they provide a much better starting point for training than random initialization of weights.

Users are able to choose from 100+ permutations of model architecture and backbone with the general purpose vision models. For more information on finetuning models for conversational AI use cases, see the pretrained models section for Conversational AI.

* New in TAO Toolkit 3.0-21.08 GA

Purpose-built models are built for high accuracy and performance. These models can be deployed out of the box for applications in smart city, retail, public safety, healthcare, and others or can also be used to re-train with user’s own data. All models are trained on thousands of proprietary images and can achieve very high accuracy on NVIDIA test data. More information about each of these models is available in individual model cards. Typical use cases and some model KPIs are provided in the table below. PeopleNet can be used for detecting and counting people in smart buildings, retail, hospitals, etc. For smart traffic applications, TrafficCamNet and DashCamNet can be used to detect and track vehicles on the road.

| Model Name | Network Architecture | Number of classes | Accuracy | Use Case |
|---|---|---|---|---|
| | DetectNet_v2-ResNet18 | 4 | 84% mAP | Detect and track cars. |
| | DetectNet_v2-ResNet18/34 | 3 | 84% mAP | People counting, heatmap generation, social distancing. |
| | DetectNet_v2-ResNet18 | 4 | 80% mAP | Identify objects from a moving object. |
| | DetectNet_v2-ResNet18 | 1 | 96% mAP | Detect face in a dark environment with IR camera. |
| | ResNet18 | 20 | 91% mAP | Classifying car models. |
| | ResNet18 | 6 | 96% mAP | Classifying type of cars as coupe, sedan, truck, etc. |
| | MaskRCNN-ResNet50 | 1 | 85% mAP | Creates segmentation masks around people, provides pixel |
| | Vanilla Unet Dynamic | 2 | 92% mIOU | Creates semantic segmentation masks for people. |
| | DetectNet_v2-ResNet18 | 1 | 98% mAP | Detecting and localizing License plates on vehicles |
| | Tuned ResNet18 | 36(US) / 68(CH) | 97%(US)/99%(CH) | Recognize License plates numbers |
| | Four branch AlexNet based model | NA | 6.5 RMSE | Detects person’s eye gaze |
| | Recombinator networks | NA | 6.1 pixel error | Estimates key points on person’s face |
| | Two branch model with attention | NA | 0.7 BPM | Estimates person’s heartrate from RGB video |
| | ResNet18 | 6 | 0.85 F1 score | Recognize hand gestures |
| | 5 Fully Connected Layers | 6 | 0.91 F1 score | Recognize facial Emotion |
| | DetectNet_v2-ResNet18 | 1 | 85.3 mAP | Detect faces from RGB or grayscale image |
| | Single shot bottom-up | 18 | 56.1% mAP* | Estimates body key points for persons in the image |

* The accuracy reported for BodyPoseNet is based on a model trained using the COCO dataset. To reproduce the same accuracy, use the sample notebook.

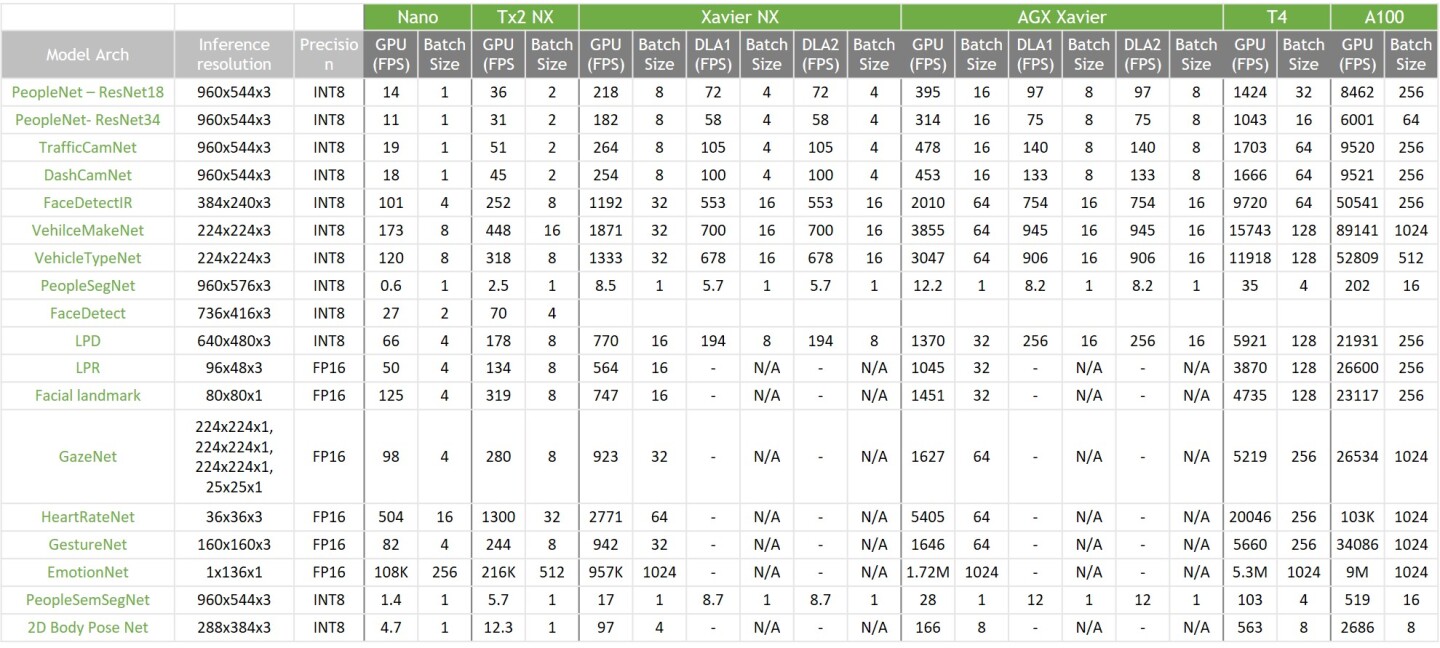

The performance of these pretrained models across various NVIDIA platforms is summarized in the table below. The numbers in the table are inference performance measured using the trtexec tool in TensorRT samples.

Performance Table of Pre-trained Models

With the general purpose models, users can train an image classification model, an object detection model, or an instance or semantic segmentation model. For classification, users can train using one of 15 available architectures, such as ResNet, EfficientNet, VGG, MobileNet, GoogLeNet, SqueezeNet, or DarkNet. For object detection tasks, users can choose from the popular YOLOv3/v4, FasterRCNN, SSD, RetinaNet, and DSSD architectures, as well as NVIDIA’s own DetectNet_v2 architecture. Finally, users can use MaskRCNN for instance segmentation or UNet for semantic segmentation. This gives users the flexibility and control to build AI models for any number of applications, from smaller lightweight models for edge GPUs to larger models for more complex tasks. For all the permutations and combinations, refer to the table below and see the Open Model Architectures section.

ImageClassification |

Object Detection |

Instance Segmentation |

Semantic Segmentation |

|||||||

Backbone |

DetectNet_V2 |

FasterRCNN |

SSD |

YOLOv3 |

RetinaNet |

DSSD |

YOLOv4 |

MaskRCNN |

UNet |

|

ResNet10/18/34/50/101 |

Yes |

Yes |

Yes |

Yes |

Yes |

Yes |

Yes |

Yes |

Yes |

Yes |

VGG 16/19 |

Yes |

Yes |

Yes |

Yes |

Yes |

Yes |

Yes |

Yes |

Yes |

|

GoogLeNet |

Yes |

Yes |

Yes |

Yes |

Yes |

Yes |

Yes |

Yes |

||

MobileNet V1/V2 |

Yes |

Yes |

Yes |

Yes |

Yes |

Yes |

Yes |

Yes |

||

SqueezeNet |

Yes |

Yes |

Yes |

Yes |

Yes |

Yes |

Yes |

|||

DarkNet 19/53 |

Yes |

Yes |

Yes |

Yes |

Yes |

Yes |

Yes |

Yes |

||

CSPDarkNet 19/53 |

Yes |

Yes |

||||||||

Efficientnet B0 |

Yes |

Yes |

Yes |

Yes |

Yes |

Yes |

Yes |

|||

Efficientnet B1* |

Yes |

Yes |

||||||||

* New in TAO Toolkit 3.0-21.08 GA

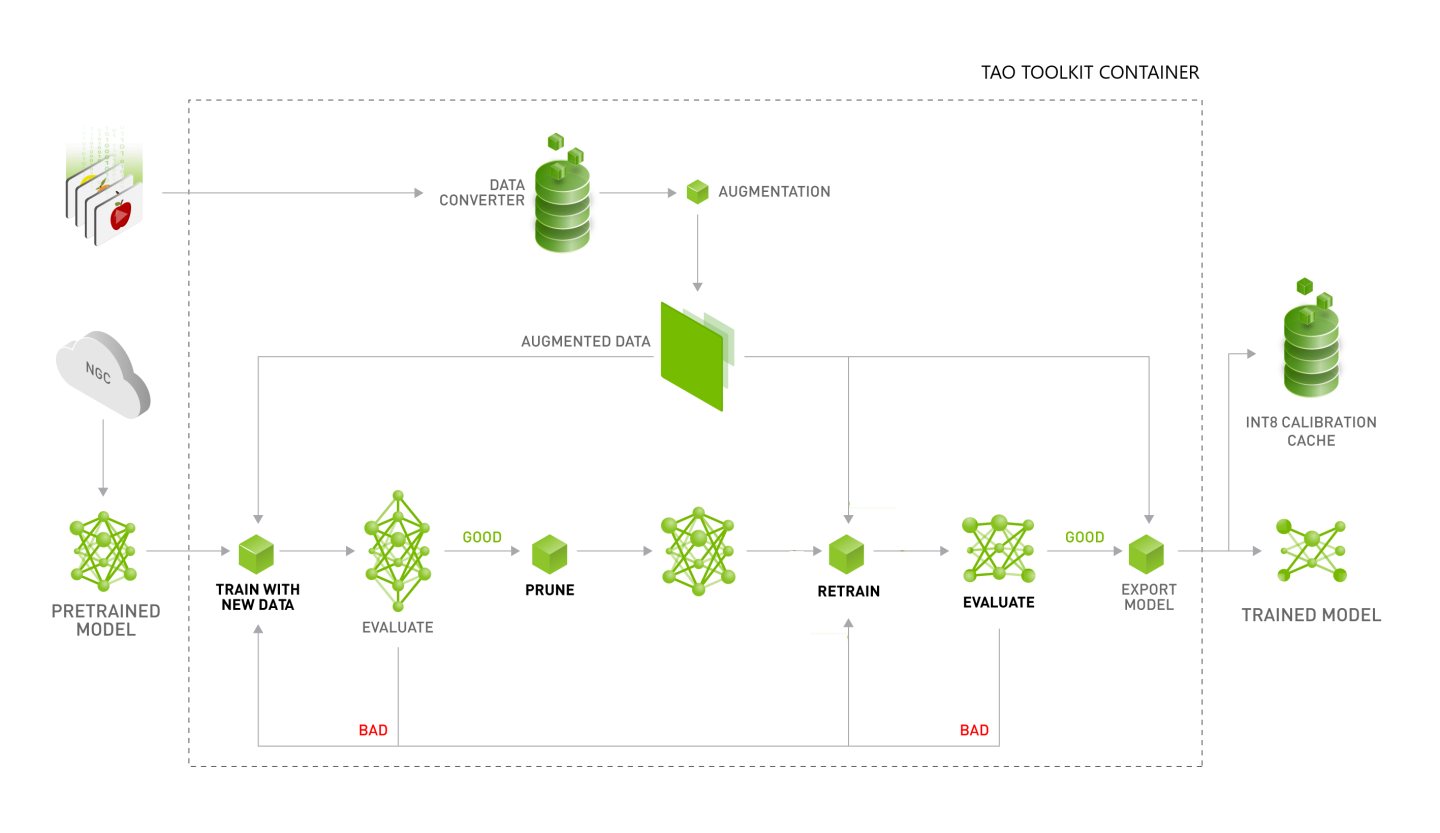

The goal of TAO is to train and fine-tune a model using the user’s own dataset. In the workflow diagram shown below, a user typically starts with a pre-trained model from NGC: either a highly accurate purpose-built model or just the pre-trained weights of the architecture of their choice. The other input is the user’s own dataset. The dataset is fed into the data converter, which can augment the data while training to introduce variations in the dataset. This is very important in training, as the data variation improves the overall quality of the model and helps prevent overfitting. Users can also do offline augmentation with TAO, where the dataset is augmented before training. See the Offline Data Augmentation section for more information.

Once the dataset is prepared and augmented, the next step in the training process is to start training. The training hyperparameters are chosen through the spec file. More information about these hyperparameters can be found in the respective model section below. After the first training phase, users evaluate the model against a test set to see how the model works on the data it has never seen before. Once the model is deemed accurate, the next step is model pruning. If accuracy is not as expected, then the user might have to tune some hyperparameters and re-train. Training is a very iterative process, so you might have to try a few times before converging on the right model.

In model pruning, TAO algorithmically removes neurons from the neural network that do not contribute significantly to the overall accuracy. Model pruning is only supported on CV models, so if you are training a Conversational AI model, you can skip this step and go directly to model export. Pruning typically reduces the accuracy of the model, so the next step after pruning is to re-train the model on the same dataset to recover the lost accuracy. After re-training, the user evaluates the model on the same test set. If the accuracy is back to what it was before pruning, the user can move on to the model export step, confident in both the accuracy and the inference performance of the model. The exported model is in ‘.etlt’ format, which can be deployed directly on any NVIDIA GPU using DeepStream and TensorRT. In the export step, users can optionally generate an INT8 calibration cache that is used to quantize the floating-point weights to integers. Running inference at INT8 precision can provide more than 2x performance over FP16 or FP32 precision without sacrificing the accuracy of the model. To learn more about model export and deployment, see the Exporting the model and Deploying to DeepStream sections for different object detection models.
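The INT8 quantization mentioned above can be illustrated with a simplified sketch of symmetric quantization. This is only an assumption-laden illustration: the scale here is taken from the largest absolute value in the list, whereas a real calibration step derives ranges from representative data, and the function names are invented for this example.

```python
def quantize_int8(values):
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(v) for v in values)
    # Assumed rule: the largest magnitude maps to 127; avoid a zero scale.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the integer representation."""
    return [q * scale for q in quantized]


# Hypothetical weights, not taken from any real model.
weights = [-2.54, 0.0, 1.0, 2.54]
q_weights, scale = quantize_int8(weights)
restored = dequantize(q_weights, scale)
```

Each restored value differs from the original by at most half a quantization step, which gives a rough sense of why well-calibrated INT8 inference can preserve accuracy while using smaller, faster integer arithmetic.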

Once exported, any computer vision model in TAO may be deployed to a TensorRT optimized engine using the TAO converter. You may refer to this section for more information about the TAO converter and the TensorRT version matrix.

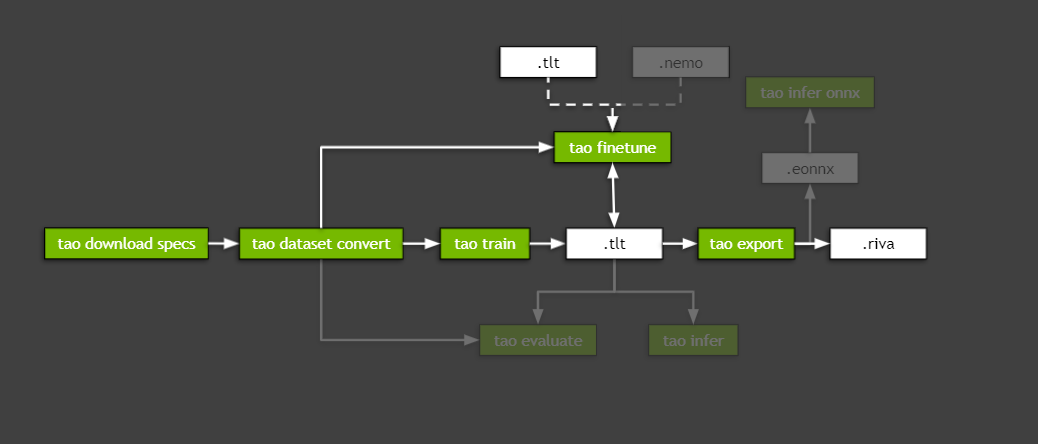

The TAO ConvAI workflow is very similar to the TAO computer vision workflow shown in the last section, except that pruning is not available for ConvAI at this time and retraining has a specific command called finetune.

Exporting a ConvAI model is also slightly different: users can export a .riva file, which can be deployed to Riva, or a .eonnx file, which can be used by the infer_onnx command. Currently, exporting a ConvAI model to a TensorRT engine file is not supported.
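The difference between the two workflows can be summarized in a short sketch. The stage names are informal labels for the steps described in the text, not exact CLI commands, and the function and dictionary names are hypothetical.

```python
# Export formats named in the text for each modality.
EXPORT_FORMATS = {
    "cv": [".etlt"],
    "convai": [".riva", ".eonnx"],
}


def tao_workflow(modality):
    """Return the ordered stages described in the text for a model type."""
    if modality == "cv":
        # CV models are pruned, then re-trained to recover accuracy.
        return ["train", "evaluate", "prune", "retrain", "evaluate", "export"]
    if modality == "convai":
        # Pruning is not available; retraining uses the finetune command.
        return ["train", "evaluate", "finetune", "evaluate", "export"]
    raise ValueError(f"unknown modality: {modality!r}")


cv_stages = tao_workflow("cv")
convai_stages = tao_workflow("convai")
```

Comparing the two lists shows that the ConvAI path simply omits the prune stage and swaps the re-train stage for finetune, with a different set of export formats at the end.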

To learn more about how to use TAO, read the technical blogs, which provide step-by-step guides to training with TAO:

Learn to Train with PeopleNet and other pre-trained models using TAO.

Learn how to train Instance segmentation model using MaskRCNN with TAO.

Learn how to improve INT8 accuracy using Quantization aware training (QAT) with TAO.

Learn how to Create a real time license plate detection and recognition app

Learn how to Prepare state of the art models for classification and object detection with TAO

Learn more on Building and Deploying Conversational AI models Using the NVIDIA TAO Toolkit

Learn how to train and optimize 2D body pose estimation model with TAO - 2D Pose Estimation Part 1 | 2D Pose Estimation Part 2.

If you have any questions when using TAO Toolkit to train a model and deploy to Riva or DeepStream, please log them at the