Agentic Evaluation Flow#

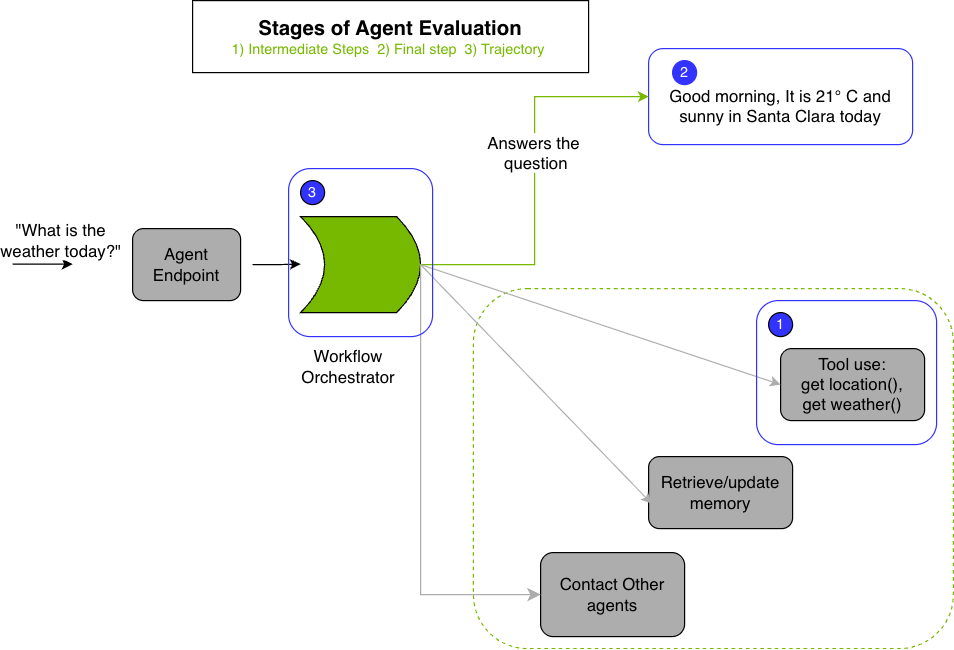

Agentic evaluation flow assesses the performance of agent-based or multi-step reasoning models, especially in scenarios requiring planning, tool use, and iterative reasoning.

Key stages of agent workflow evaluation:

1. Intermediate Steps Evaluation. Assesses the correctness of intermediate steps during agent execution:

Tool Use: Validates that the agent invoked the right tools with correct arguments at each step. See Tool Call Accuracy for implementation details.

Retriever Models: Assesses effectiveness of document retrieval pipelines using retriever evaluations. See Retriever Evaluation Flow.

2. Final-Step Evaluation. Evaluates the quality of the agent’s final output using:

Agent Goal Accuracy: Measures whether the agent successfully completed the requested task. See Agent Goal Accuracy with Reference and Agent Goal Accuracy without Reference.

Topic Adherence: Assesses how well the agent maintained focus on the assigned topic throughout the conversation. See Topic Adherence.

Custom Metrics: For domain-specific or custom evaluation criteria, use LLM-as-a-Judge with the `data` task type to evaluate agent outputs against custom metrics.

3. Trajectory Evaluation. Evaluates the agent’s decision-making process by analyzing the entire sequence of actions taken to accomplish a goal. This includes assessing whether the agent chose appropriate tools in the correct order. See Trajectory Evaluation for configuration details.

Prerequisites#

Before running Agentic evaluations, ensure you have:

Dataset Requirements:

Uploaded your dataset to NeMo Data Store using Hugging Face CLI or SDK (for custom datasets)

Registered your dataset in NeMo Entity Store using the Dataset APIs (for custom datasets)

Set up or selected an existing `dataset` evaluation target

Formatted your data with cached outputs (pre-generated model responses)

The dataset must be in JSONL format to enable RAGAS metrics calculation

Model Configuration:

Judge LLM configured for evaluation metrics (required for most agentic tasks - Tool Call Accuracy is the exception)

Proper task-specific data fields (varies by agentic task type)

Tip

For a complete dataset creation walkthrough, see the dataset management tutorials or follow the end-to-end evaluation example.

Note

Performance Tuning: You can improve evaluation performance by setting config.params.parallelism to control the number of concurrent requests. A typical default value is 16, but you may need to adjust based on your model’s capacity and rate limits.

| Option | Use Case | Data Format | Example |
|---|---|---|---|
| Topic Adherence | Measures topic focus in multi-turn conversations | user_input, reference_topics | “Is the agent’s answer about ‘technology’?” |
| Tool Call Accuracy | Evaluates tool/function call correctness | user_input (with tool_calls) | “Did the agent call the restaurant booking tool with correct args?” |
| Agent Goal Accuracy with Reference | Assesses goal completion with reference | user_input, response, reference | “Did the agent book a table as requested?” |
| Agent Goal Accuracy without Reference | Assesses goal completion without reference | user_input, response | “Did the agent complete the requested task?” |
| Answer Accuracy | Checks factual correctness | user_input, response, reference | “Did the agent answer ‘Paris’ for ‘What is the capital of France’?” |
| Trajectory Evaluation | Evaluates decision-making across action sequence | question, generated_answer, answer, intermediate_steps (NAT format) | “Did the agent choose appropriate tools in the right order?” |
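As a rough illustration of the Data Format column, the following sketch checks that each record carries the fields a task expects before you upload the dataset. The `missing_fields` helper and the field list passed to it are assumptions made for this example, not part of the Evaluator API; take the actual field names from the table and from the task sections on this page.

```python
import json


def missing_fields(jsonl_text, required):
    """Return {line_number: [missing field names]} for incomplete records.

    Hypothetical helper for illustration; not part of the Evaluator SDK.
    """
    problems = {}
    for line_number, line in enumerate(jsonl_text.splitlines(), start=1):
        if not line.strip():
            continue  # skip blank lines
        record = json.loads(line)
        missing = [name for name in required if name not in record]
        if missing:
            problems[line_number] = missing
    return problems


# Two sample Topic Adherence records; the second lacks reference_topics.
sample = "\n".join([
    json.dumps({"user_input": [{"content": "hi", "type": "human"}],
                "reference_topics": ["technology"]}),
    json.dumps({"user_input": [{"content": "hi", "type": "human"}]}),
])

print(missing_fields(sample, ["user_input", "reference_topics"]))
```

Running a check like this locally is cheaper than discovering a malformed record after a job has started.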

Example Job Execution#

You can execute an Evaluation Job using either the Python SDK or cURL as follows, replacing <my-eval-config> with configs shown on this page:

Note

See Job Target and Configuration Matrix for details on target / config compatibility.

v2 API, Python SDK:

    from nemo_microservices import NeMoMicroservices

    client = NeMoMicroservices(
        base_url="http(s)://<your evaluator service endpoint>"
    )

    # Replace <my-eval-config> with one of the configs shown on this page.
    job = client.v2.evaluation.jobs.create(
        spec={
            "target": {
                "type": "dataset",
                "name": "my-target-dataset-1",
                "namespace": "my-organization",
                "dataset": {
                    "files_url": "hf://datasets/<my-dataset-namespace>/<my-dataset-name>/<my-dataset-file-path>"
                }
            },
            "config": <my-eval-config>
        }
    )

v2 API, cURL:

    curl -X "POST" "$EVALUATOR_BASE_URL/v2/evaluation/jobs" \
      -H 'accept: application/json' \
      -H 'Content-Type: application/json' \
      -d '
      {
        "spec": {
          "target": {
            "type": "dataset",
            "name": "my-target-dataset-1",
            "namespace": "my-organization",
            "dataset": {
              "files_url": "hf://datasets/<my-dataset-namespace>/<my-dataset-name>/<my-dataset-file-path>"
            }
          },
          "config": <my-eval-config>
        }
      }'

v1 API, Python SDK:

    from nemo_microservices import NeMoMicroservices

    client = NeMoMicroservices(
        base_url="http(s)://<your evaluator service endpoint>"
    )

    # Replace <my-eval-config> with one of the configs shown on this page.
    job = client.evaluation.jobs.create(
        namespace="my-organization",
        target={
            "type": "dataset",
            "name": "my-target-dataset-1",
            "namespace": "my-organization",
            "dataset": {
                "files_url": "hf://datasets/<my-dataset-namespace>/<my-dataset-name>/<my-dataset-file-path>"
            }
        },
        config=<my-eval-config>
    )

v1 API, cURL:

    curl -X "POST" "$EVALUATOR_BASE_URL/v1/evaluation/jobs" \
      -H 'accept: application/json' \
      -H 'Content-Type: application/json' \
      -d '
      {
        "namespace": "my-organization",
        "target": {
          "type": "dataset",
          "name": "my-target-dataset-1",
          "namespace": "my-organization",
          "dataset": {
            "files_url": "hf://datasets/<my-dataset-namespace>/<my-dataset-name>/<my-dataset-file-path>"
          }
        },
        "config": <my-eval-config>
      }'

For a full example, see Run an Academic LM Harness Eval.
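The v2 request body can also be assembled programmatically, which is convenient when you submit one job per config. This is a minimal sketch: `build_job_spec` is a hypothetical helper, and the dataset path in the usage lines is a placeholder. It only builds the dictionary; passing the result to client.v2.evaluation.jobs.create(spec=...) works as in the SDK call in this section.

```python
def build_job_spec(config, files_url,
                   name="my-target-dataset-1", namespace="my-organization"):
    """Build the `spec` payload for a v2 evaluation job with a dataset target.

    Hypothetical helper: it mirrors the structure of the examples on this page.
    """
    return {
        "target": {
            "type": "dataset",
            "name": name,
            "namespace": namespace,
            "dataset": {"files_url": files_url},
        },
        "config": config,
    }


# Tool Call Accuracy needs no judge, so its config is the shortest one.
config = {"type": "agentic", "tasks": {"task1": {"type": "tool_call_accuracy"}}}

# Placeholder path; substitute your own dataset namespace, name, and file.
spec = build_job_spec(config, "hf://datasets/my-organization/my-dataset/data.jsonl")
print(spec["target"]["dataset"]["files_url"])
```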

Limitations#

Agentic evaluation only works with `dataset` targets or `cached_outputs` (deprecated).

Each metric is computed in its own job, and a job can contain only one task. Different metrics can’t be computed on the same dataset or in the same job, because the metrics require different dataset formats.

For judge model requirements and constraints, refer to LLM-as-a-Judge Limitations.
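Because each job accepts a single task, evaluating several metrics means submitting several jobs. One way to organize this, sketched below under the assumption that you keep your task definitions in one dictionary, is to expand them into one config per task. `split_into_single_task_configs` is a hypothetical helper, and each resulting config still needs its own dataset in the matching format.

```python
def split_into_single_task_configs(tasks, params=None):
    """Return one agentic config per task, keyed by task name."""
    configs = {}
    for task_name, task in tasks.items():
        config = {"type": "agentic", "tasks": {task_name: task}}
        if params:
            config["params"] = dict(params)  # copy so configs share no state
        configs[task_name] = config
    return configs


tasks = {
    "tool_calls": {"type": "tool_call_accuracy"},
    # Judge settings are left empty here for brevity; fill them in for real use.
    "goal": {"type": "goal_accuracy_without_reference", "params": {"judge": {}}},
}

configs = split_into_single_task_configs(tasks, params={"parallelism": 16})
for name, config in configs.items():
    print(name, list(config["tasks"]))
```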

Topic Adherence#

Topic Adherence requires a judge model.

Note

Datasets must be in JSONL (.jsonl) format. The data examples here are shown as formatted JSON to make the structure clear.

Example configuration:

    {
      "type": "agentic",
      "params": {
        "parallelism": 16
      },
      "tasks": {
        "task1": {
          "type": "topic_adherence",
          "params": {
            "metric_mode": "f1",
            "judge": {
              "model": {
                "api_endpoint": {
                  "url": "<nim_url>",
                  "model_id": "meta/llama-3.1-70b-instruct",
                  "api_key": "<OPTIONAL_JUDGE_API_KEY>"
                },
                "prompt": {
                  "inference_params": {
                    "temperature": 0.1,
                    "max_tokens": 1024,
                    "max_retries": 10,
                    "request_timeout": 10
                  }
                }
              },
              "extra": {
                "judge_sanity_check": true
              }
            }
          }
        }
      }
    }

Example configuration with a reasoning judge model:

    {
      "type": "agentic",
      "params": {
        "parallelism": 16
      },
      "tasks": {
        "task1": {
          "type": "topic_adherence",
          "params": {
            "metric_mode": "f1",
            "judge": {
              "model": {
                "api_endpoint": {
                  "url": "<nim_url>",
                  "model_id": "nvidia/llama-3.3-nemotron-super-49b-v1",
                  "api_key": "<OPTIONAL_JUDGE_API_KEY>"
                },
                "prompt": {
                  "system_prompt": "'detailed thinking on'",
                  "reasoning_params": {
                    "end_token": "</think>"
                  },
                  "inference_params": {
                    "temperature": 0.1,
                    "max_tokens": 1024,
                    "max_retries": 10,
                    "request_timeout": 10
                  }
                }
              }
            }
          }
        }
      }
    }

Example input data:

    {
      "user_input": [
        {"content": "how to keep healthy?", "type": "human"},
        {"content": "Sure. Eat more fruit", "type": "ai"}
      ],
      "reference_topics": ["technology"]
    }
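Since the dataset file itself must be JSONL, each record goes on a single line. The sketch below serializes the Topic Adherence record using only the Python standard library; the `to_jsonl` helper name is an assumption for this example.

```python
import json


def to_jsonl(records):
    """Serialize records as JSONL: one compact JSON object per line."""
    return "".join(
        json.dumps(record, ensure_ascii=False) + "\n" for record in records
    )


records = [
    {
        "user_input": [
            {"content": "how to keep healthy?", "type": "human"},
            {"content": "Sure. Eat more fruit", "type": "ai"},
        ],
        "reference_topics": ["technology"],
    }
]

text = to_jsonl(records)
print(text, end="")
```

Write `text` to a `.jsonl` file before uploading it to the data store.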

Example results:

    {
      "tasks": {
        "task1": {
          "metrics": {
            "topic_adherence(mode=f1)": {
              "scores": {
                "topic_adherence(mode=f1)": {
                  "value": 0.53
                }
              }
            }
          }
        }
      }
    }
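Results use the same nesting for every agentic task: task, then metric, then score. A small sketch for flattening that structure, assuming the results have already been loaded into a dictionary shaped like the Topic Adherence result (`flatten_scores` is a hypothetical helper, not an SDK function):

```python
def flatten_scores(results):
    """Map (task, metric, score name) to its numeric value."""
    flat = {}
    for task_name, task in results.get("tasks", {}).items():
        for metric_name, metric in task.get("metrics", {}).items():
            for score_name, score in metric.get("scores", {}).items():
                flat[(task_name, metric_name, score_name)] = score["value"]
    return flat


results = {
    "tasks": {
        "task1": {
            "metrics": {
                "topic_adherence(mode=f1)": {
                    "scores": {"topic_adherence(mode=f1)": {"value": 0.53}}
                }
            }
        }
    }
}

for key, value in flatten_scores(results).items():
    print(key, value)
```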

Agent Goal Accuracy with Reference#

Agent Goal Accuracy with Reference requires a judge model.

Note

Datasets must be in JSONL (.jsonl) format. The data examples here are shown as formatted JSON to make the structure clear.

Example configuration:

    {
      "type": "agentic",
      "tasks": {
        "task1": {
          "type": "goal_accuracy_with_reference",
          "params": {
            "judge": {
              "model": {
                "api_endpoint": {
                  "url": "<nim_url>",
                  "model_id": "meta/llama-3.3-70b-instruct",
                  "api_key": "<OPTIONAL_JUDGE_API_KEY>"
                },
                "prompt": {
                  "inference_params": {
                    "max_tokens": 1024,
                    "max_retries": 10,
                    "request_timeout": 10,
                    "temperature": 0.1
                  }
                }
              }
            }
          }
        }
      }
    }

Example configuration with a reasoning judge model:

    {
      "type": "agentic",
      "tasks": {
        "task1": {
          "type": "goal_accuracy_with_reference",
          "params": {
            "judge": {
              "model": {
                "api_endpoint": {
                  "url": "<nim_url>",
                  "model_id": "nvidia/llama-3.3-nemotron-super-49b-v1",
                  "api_key": "<OPTIONAL_JUDGE_API_KEY>"
                },
                "prompt": {
                  "system_prompt": "'detailed thinking on'",
                  "reasoning_params": {
                    "end_token": "</think>"
                  },
                  "inference_params": {
                    "max_tokens": 1024,
                    "max_retries": 10,
                    "request_timeout": 10,
                    "temperature": 0.1
                  }
                }
              }
            }
          }
        }
      }
    }

Example input data:

    {
      "user_input": [
        { "content": "Hey, book a table at the nearest best Chinese restaurant for 8:00pm", "type": "user" },
        { "content": "Sure, let me find the best options for you.", "type": "assistant", "tool_calls": [ { "name": "restaurant_search", "args": { "cuisine": "Chinese", "time": "8:00pm" } } ] },
        { "content": "Found a few options: 1. Golden Dragon, 2. Jade Palace", "type": "tool" },
        { "content": "I found some great options: Golden Dragon and Jade Palace. Which one would you prefer?", "type": "assistant" },
        { "content": "Let's go with Golden Dragon.", "type": "user" },
        { "content": "Great choice! I'll book a table for 8:00pm at Golden Dragon.", "type": "assistant", "tool_calls": [ { "name": "restaurant_book", "args": { "name": "Golden Dragon", "time": "8:00pm" } } ] },
        { "content": "Table booked at Golden Dragon for 8:00pm.", "type": "tool" },
        { "content": "Your table at Golden Dragon is booked for 8:00pm. Enjoy your meal!", "type": "assistant" },
        { "content": "thanks", "type": "user" }
      ],
      "reference": "Table booked at one of the chinese restaurants at 8 pm"
    }

Example results:

    {
      "tasks": {
        "task1": {
          "metrics": {
            "agent_goal_accuracy": {
              "scores": {
                "agent_goal_accuracy": {
                  "value": 1.0
                }
              }
            }
          }
        }
      }
    }

Agent Goal Accuracy without Reference#

Agent Goal Accuracy without Reference requires a judge model.

Note

Datasets must be in JSONL (.jsonl) format. The data examples here are shown as formatted JSON to make the structure clear.

Example configuration:

    {
      "type": "agentic",
      "tasks": {
        "task1": {
          "type": "goal_accuracy_without_reference",
          "params": {
            "judge": {
              "model": {
                "api_endpoint": {
                  "url": "<nim_url>",
                  "model_id": "meta/llama-3.3-70b-instruct",
                  "api_key": "<OPTIONAL_JUDGE_API_KEY>"
                },
                "prompt": {
                  "inference_params": {
                    "max_tokens": 1024,
                    "max_retries": 10,
                    "request_timeout": 10,
                    "temperature": 0.1
                  }
                }
              }
            }
          }
        }
      }
    }

Example input data:

    {
      "user_input": [
        { "content": "Set a reminder for my dentist appointment tomorrow at 2pm", "type": "user" },
        { "content": "I'll set that reminder for you.", "type": "assistant", "tool_calls": [ { "name": "set_reminder", "args": { "title": "Dentist appointment", "date": "tomorrow", "time": "2pm" } } ] },
        { "content": "Reminder set successfully.", "type": "tool" },
        { "content": "Your reminder for the dentist appointment tomorrow at 2pm has been set.", "type": "assistant" }
      ]
    }

Example results:

    {
      "tasks": {
        "task1": {
          "metrics": {
            "agent_goal_accuracy": {
              "scores": {
                "agent_goal_accuracy": {
                  "value": 1.0
                }
              }
            }
          }
        }
      }
    }

Tool Call Accuracy#

Note

Datasets must be in JSONL (.jsonl) format. The data examples here are shown as formatted JSON to make the structure clear.

Example configuration:

    {
      "type": "agentic",
      "tasks": {
        "task1": {
          "type": "tool_call_accuracy"
        }
      }
    }

Example input data:

    {
      "user_input": [
        {"content": "What's the weather like in New York right now?", "type": "human"},
        {"content": "The current temperature in New York is 75°F and it's partly cloudy.", "type": "ai", "tool_calls": [{"name": "weather_check", "args": {"location": "New York"}}]},
        {"content": "Can you translate that to Celsius?", "type": "human"},
        {"content": "Let me convert that to Celsius for you.", "type": "ai", "tool_calls": [{"name": "temperature_conversion", "args": {"temperature_fahrenheit": 75}}]},
        {"content": "75°F is approximately 23.9°C.", "type": "tool"},
        {"content": "75°F is approximately 23.9°C.", "type": "ai"}
      ],
      "reference_tool_calls": [
        {"name": "weather_check", "args": {"location": "New York"}},
        {"name": "temperature_conversion", "args": {"temperature_fahrenheit": 75}}
      ]
    }
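To make the comparison concrete, the sketch below collects the tool calls from the messages in user_input and checks them against reference_tool_calls by exact match, in order. This is a deliberately simplified stand-in, not the scoring logic the service uses; `collect_tool_calls` and `exact_match_ratio` are hypothetical names, and the message contents are shortened.

```python
def collect_tool_calls(user_input):
    """Gather tool calls from all messages, in conversation order."""
    calls = []
    for message in user_input:
        calls.extend(message.get("tool_calls", []))
    return calls


def exact_match_ratio(predicted, reference):
    """Fraction of positions where predicted and reference calls are identical.

    Simplified illustration only; the service's scoring may differ.
    """
    longest = max(len(predicted), len(reference))
    if longest == 0:
        return 0.0
    matches = sum(1 for p, r in zip(predicted, reference) if p == r)
    return matches / longest


record = {
    "user_input": [
        {"content": "What's the weather like in New York right now?", "type": "human"},
        {"content": "...", "type": "ai",
         "tool_calls": [{"name": "weather_check", "args": {"location": "New York"}}]},
        {"content": "Can you translate that to Celsius?", "type": "human"},
        {"content": "...", "type": "ai",
         "tool_calls": [{"name": "temperature_conversion",
                         "args": {"temperature_fahrenheit": 75}}]},
    ],
    "reference_tool_calls": [
        {"name": "weather_check", "args": {"location": "New York"}},
        {"name": "temperature_conversion", "args": {"temperature_fahrenheit": 75}},
    ],
}

predicted = collect_tool_calls(record["user_input"])
score = exact_match_ratio(predicted, record["reference_tool_calls"])
print(score)
```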

Example results:

    {
      "tasks": {
        "task1": {
          "metrics": {
            "tool_call_accuracy": {
              "scores": {
                "tool_call_accuracy": {
                  "value": 1.0
                }
              }
            }
          }
        }
      }
    }

Answer Accuracy#

Answer Accuracy requires a judge model.

Note

Datasets must be in JSONL (.jsonl) format. The data examples here are shown as formatted JSON to make the structure clear.

Example configuration:

    {
      "type": "agentic",
      "tasks": {
        "task1": {
          "type": "answer_accuracy",
          "params": {
            "judge": {
              "extra": {
                "judge_sanity_check": false
              },
              "model": {
                "api_endpoint": {
                  "url": "<nim_url>",
                  "model_id": "meta/llama-3.1-70b-instruct",
                  "api_key": "<OPTIONAL_API_KEY>"
                },
                "prompt": {
                  "inference_params": {
                    "temperature": 1,
                    "max_tokens": 1024,
                    "max_retries": 10,
                    "request_timeout": 10,
                    "stop": ["<|end_of_text|>", "<|eot|>"]
                  }
                }
              }
            }
          }
        }
      }
    }

Example configuration with a reasoning judge model:

    {
      "type": "agentic",
      "tasks": {
        "task1": {
          "type": "answer_accuracy",
          "params": {
            "judge": {
              "extra": {
                "judge_sanity_check": false
              },
              "model": {
                "api_endpoint": {
                  "url": "<nim_url>",
                  "model_id": "nvidia/llama-3.3-nemotron-super-49b-v1",
                  "api_key": "<OPTIONAL_JUDGE_API_KEY>"
                },
                "prompt": {
                  "system_prompt": "'detailed thinking on'",
                  "reasoning_params": {
                    "end_token": "</think>"
                  },
                  "inference_params": {
                    "temperature": 0.1,
                    "max_tokens": 1024,
                    "max_retries": 10,
                    "request_timeout": 10
                  }
                }
              }
            }
          }
        }
      }
    }

Example input data (JSONL, one record per line):

    {"user_input": "What is the capital of France?", "response": "Paris", "reference": "Paris"}
    {"user_input": "Who wrote 'Pride and Prejudice'?", "response": "Jane Austen", "reference": "Jane Austen"}
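The two Answer Accuracy records are already in JSONL form, one object per line. A minimal sketch of parsing such content with the standard library, for example to inspect a dataset before uploading it (`parse_jsonl` is a hypothetical helper):

```python
import json


def parse_jsonl(text):
    """Parse JSONL text into a list of dicts, ignoring blank lines."""
    return [json.loads(line) for line in text.splitlines() if line.strip()]


text = (
    '{"user_input": "What is the capital of France?", "response": "Paris", "reference": "Paris"}\n'
    '{"user_input": "Who wrote \'Pride and Prejudice\'?", "response": "Jane Austen", "reference": "Jane Austen"}\n'
)

records = parse_jsonl(text)
for record in records:
    print(record["user_input"], "->", record["response"])
```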

Example results:

    {
      "tasks": {
        "task1": {
          "metrics": {
            "answer_accuracy": {
              "scores": {
                "answer_accuracy": {
                  "value": 1.0
                }
              }
            }
          }
        }
      }
    }

Trajectory Evaluation#

Trajectory evaluation assesses the agent’s decision-making process by analyzing the entire sequence of actions (trajectory) the agent took to accomplish a goal. This metric uses LangChain’s TrajectoryEvalChain to evaluate whether the agent’s choices were appropriate given the available tools and the task at hand.

Important

NAT Format Requirement: This metric requires data in the NVIDIA NeMo Agent Toolkit (NAT) format, with intermediate_steps containing detailed event traces.

Trajectory evaluation requires a judge model.

Example configuration:

    {
      "type": "agentic",
      "tasks": {
        "task1": {
          "type": "trajectory_evaluation",
          "params": {
            "parallelism": 4,
            "trajectory_used_tools": "wikipedia_search,current_datetime,code_generation,dummy_custom_tool",
            "trajectory_custom_tools": {
              "dummy_custom_tool": "Do nothing. This tool is for test only",
              "code_generation": "Useful to generate Python code. For any questions about code generation, you must only use this tool!",
              "wikipedia_search": "Tool that retrieves relevant contexts from wikipedia search for the given question.\n\n Args:\n _type (str): The type of the object.\n max_results (int): Description unavailable. Defaults to 2."
            },
            "judge": {
              "model": {
                "api_endpoint": {
                  "url": "<nim_url>",
                  "model_id": "nvidia/llama-3.1-nemotron-ultra-253b-v1",
                  "api_key": "<OPTIONAL_JUDGE_API_KEY>"
                },
                "prompt": {
                  "inference_params": {
                    "temperature": 0.0,
                    "max_tokens": 32768,
                    "max_retries": 10,
                    "request_timeout": 60
                  }
                }
              }
            }
          }
        }
      }
    }

Each data entry must follow the NeMo Agent Toolkit format, with intermediate_steps containing event traces:

    {
      "question": "What are LLMs",
      "generated_answer": "LLMs, or Large Language Models, are a type of artificial intelligence designed to process and generate human-like language. They are trained on vast amounts of text data and can be fine-tuned for specific tasks or guided by prompt engineering.",
      "answer": "LLMs stand for Large Language Models, which are a type of machine learning model designed for natural language processing tasks such as language generation.",
      "intermediate_steps": [
        {
          "payload": {
            "event_type": "LLM_END",
            "name": "meta/llama-3.1-70b-instruct",
            "data": {
              "input": "\nPrevious conversation history:\n\n\nQuestion: What are LLMs\n",
              "output": "Thought: I need to find information about LLMs to answer this question.\n\nAction: wikipedia_search\nAction Input: {'question': 'LLMs'}\n\n"
            }
          }
        },
        {
          "payload": {
            "event_type": "TOOL_END",
            "name": "wikipedia_search",
            "data": {
              "input": "{'question': 'LLMs'}",
              "output": "<Document source=\"https://en.wikipedia.org/wiki/Large_language_model\" page=\"\"/>\nA large language model (LLM) is a language model trained with self-supervised machine learning..."
            }
          }
        },
        {
          "payload": {
            "event_type": "LLM_END",
            "name": "meta/llama-3.1-70b-instruct",
            "data": {
              "input": "...",
              "output": "Thought: I now know the final answer\n\nFinal Answer: LLMs, or Large Language Models, are a type of artificial intelligence..."
            }
          }
        }
      ]
    }
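When preparing or debugging trajectory data, it can help to list the tools an entry actually used. The sketch below reads the TOOL_END events from intermediate_steps, following the field layout of the data entry in this section; `tools_in_trajectory` is a hypothetical helper, the sample entry is abbreviated, and all other event types are simply ignored.

```python
def tools_in_trajectory(entry):
    """Return the names of tools invoked in an entry, in call order."""
    names = []
    for step in entry.get("intermediate_steps", []):
        payload = step.get("payload", {})
        if payload.get("event_type") == "TOOL_END":
            names.append(payload.get("name"))
    return names


# Abbreviated entry: the "data" fields are left empty for brevity.
entry = {
    "question": "What are LLMs",
    "intermediate_steps": [
        {"payload": {"event_type": "LLM_END",
                     "name": "meta/llama-3.1-70b-instruct", "data": {}}},
        {"payload": {"event_type": "TOOL_END",
                     "name": "wikipedia_search", "data": {}}},
        {"payload": {"event_type": "LLM_END",
                     "name": "meta/llama-3.1-70b-instruct", "data": {}}},
    ],
}

used = tools_in_trajectory(entry)
print(used)
```

The resulting names can be compared against the tools you declare in the task configuration.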

Example results:

    {
      "tasks": {
        "task1": {
          "metrics": {
            "trajectory_evaluation": {
              "scores": {
                "trajectory_evaluation": {
                  "value": 0.85
                }
              }
            }
          }
        }
      }
    }

Required Configuration Parameters#

trajectory_used_tools (string, required)

Comma-separated list of tool names that were available to the agent during execution. This helps the evaluator understand what tools the agent had at its disposal.

Example: "wikipedia_search,current_datetime,code_generation,dummy_custom_tool"

trajectory_custom_tools (object, optional)

JSON object mapping custom tool names to their descriptions. Required for any tools that are not part of the NeMo Agent Toolkit default functions. This helps the judge LLM understand the purpose of each custom tool.

Example:

    {
      "dummy_custom_tool": "Do nothing. This tool is for test only",
      "code_generation": "Useful to generate Python code. For any questions about code generation, you must only use this tool!"
    }
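A minimal sketch of assembling both parameters from Python data, assuming you keep tool names in a list. `trajectory_params` is a hypothetical helper; its check that every described custom tool also appears in the used-tools list is a convenience of this sketch rather than a documented requirement.

```python
def trajectory_params(used_tools, custom_tools=None):
    """Build the trajectory-specific task params from a list and a dict."""
    custom_tools = custom_tools or {}
    unknown = sorted(set(custom_tools) - set(used_tools))
    if unknown:
        raise ValueError(f"custom tools not listed in used_tools: {unknown}")
    # The service expects a single comma-separated string of tool names.
    params = {"trajectory_used_tools": ",".join(used_tools)}
    if custom_tools:
        params["trajectory_custom_tools"] = dict(custom_tools)
    return params


params = trajectory_params(
    ["wikipedia_search", "current_datetime", "code_generation", "dummy_custom_tool"],
    {"dummy_custom_tool": "Do nothing. This tool is for test only"},
)
print(params["trajectory_used_tools"])
```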

Judge Schema#

Refer to Judge Model Configuration. Set the judge under tasks.<task-name>.params.judge in each agentic task that requires one.
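The judge blocks in the task examples share one shape, so they can be generated from a few inputs. The following sketch assumes only the fields that appear in the configs on this page; `make_judge` is a hypothetical helper, and its defaults copy the values used in those examples.

```python
def make_judge(url, model_id, api_key=None, **inference_params):
    """Build a judge block shaped like the examples on this page."""
    params = {
        "temperature": 0.1,
        "max_tokens": 1024,
        "max_retries": 10,
        "request_timeout": 10,
    }
    params.update(inference_params)  # caller overrides win over defaults
    api_endpoint = {"url": url, "model_id": model_id}
    if api_key:
        api_endpoint["api_key"] = api_key
    return {
        "model": {
            "api_endpoint": api_endpoint,
            "prompt": {"inference_params": params},
        }
    }


judge = make_judge("<nim_url>", "meta/llama-3.3-70b-instruct", request_timeout=60)
task = {"type": "goal_accuracy_without_reference", "params": {"judge": judge}}
print(task["params"]["judge"]["model"]["prompt"]["inference_params"])
```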

Metrics#

Agentic evaluation uses RAGAS metrics to score agent outputs. RAGAS is a library for evaluating retrieval-augmented generation and agentic workflows using standardized, research-backed metrics.

Each task reports the metric selected in the job configuration, such as topic adherence, tool call accuracy, agent goal accuracy, or answer accuracy.

| Metric Name | Description | Value Range | Notes |
|---|---|---|---|
| topic_adherence(mode=f1) | Measures how well the agent sticks to the assigned topic (F1 mode) | 0.0–1.0 | Requires judge LLM |
| tool_call_accuracy | Accuracy of tool call predictions | 0.0–1.0 | No judge LLM required |
| agent_goal_accuracy (with reference) | Accuracy in achieving the agent’s goal with reference | 0.0–1.0 | Requires judge LLM |
| agent_goal_accuracy (without reference) | Accuracy in achieving the agent’s goal without reference | 0.0–1.0 | Requires judge LLM |
| answer_accuracy | Accuracy of the agent’s answer | 0.0–1.0 | Requires judge LLM |
| trajectory_evaluation | Evaluates the agent’s decision-making process across the entire action sequence | 0.0–1.0 | Requires judge LLM; NeMo Agent Toolkit format only |