CannyEdgeDetector

Overview

The Canny edge detector is a widely used edge detection algorithm in computer vision and image processing. Its purpose is to identify and extract the boundaries of objects within an image by detecting significant intensity changes, which correspond to edges.

Processing Steps

Noise Reduction: The input image is first smoothed using a Gaussian filter to reduce noise. This step is not included in this operator.

Gradient Calculation: A Sobel filter is used to calculate the intensity gradients of the image. Both the magnitude and the direction (angle) of the gradient are computed at each pixel.

Non-Maximum Suppression: To thin out the edges and retain only the most significant ones, non-maximum suppression is applied. This step keeps only the local maxima in the gradient direction, resulting in one-pixel-wide edges.

Double Thresholding: Two thresholds are applied to classify edge pixels as strong, weak, or non-edges.

Edge Tracking by Hysteresis: Weak edges are included in the final output only if they are connected to strong edges. This linking process ensures that true edges are preserved while isolated noise-induced responses are discarded. This step is not included in this operator in the current release.
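The gradient calculation, non-maximum suppression, and double thresholding steps described above can be illustrated with a minimal pure-Python sketch. It is not the operator's implementation: the fixed 3x3 kernel, the L2 gradient norm, the four-bin direction quantization, the strict `>` threshold comparisons, and the label values `NON_EDGE`, `WEAK`, and `STRONG` are all assumptions chosen for illustration.

```python
import math

# Hypothetical labels; the operator's actual U8 encoding is not specified here.
NON_EDGE, WEAK, STRONG = 0, 1, 2

SOBEL_X = ((-1, 0, 1), (-2, 0, 2), (-1, 0, 1))
SOBEL_Y = ((-1, -2, -1), (0, 0, 0), (1, 2, 1))
# (dy, dx) step along the gradient for direction bins 0, 45, 90, 135 degrees.
STEPS = ((0, 1), (1, 1), (1, 0), (1, -1))


def gradients(img):
    """3x3 Sobel gradients with zero padding: returns (magnitude, direction bin)."""
    h, w = len(img), len(img[0])

    def px(y, x):
        return img[y][x] if 0 <= y < h and 0 <= x < w else 0

    mag = [[0.0] * w for _ in range(h)]
    ang = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            gx = gy = 0
            for j in range(3):
                for i in range(3):
                    p = px(y + j - 1, x + i - 1)
                    gx += SOBEL_X[j][i] * p
                    gy += SOBEL_Y[j][i] * p
            mag[y][x] = math.hypot(gx, gy)  # L2 norm (an assumption)
            # Fold the angle into [0, 180) and quantize it into four bins.
            deg = math.degrees(math.atan2(gy, gx)) % 180.0
            ang[y][x] = int((deg + 22.5) // 45) % 4
    return mag, ang


def nms_and_threshold(mag, ang, low, high):
    """Keep local maxima along the gradient, then label them strong or weak."""
    h, w = len(mag), len(mag[0])

    def m(y, x):  # repeat the border pixel outside the image
        return mag[min(max(y, 0), h - 1)][min(max(x, 0), w - 1)]

    out = [[NON_EDGE] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            dy, dx = STEPS[ang[y][x]]
            v = mag[y][x]
            # Strict on one side so a two-pixel plateau keeps a single pixel.
            if v > m(y - dy, x - dx) and v >= m(y + dy, x + dx):
                if v > high:
                    out[y][x] = STRONG
                elif v > low:
                    out[y][x] = WEAK
    return out


# Vertical step edge: dark left half, bright right half.
image = [[0, 0, 0, 200, 200, 200] for _ in range(5)]
magnitude, direction = gradients(image)
labels = nms_and_threshold(magnitude, direction, low=100, high=300)
```

Because the sketch pads the input with zeros, the bright right border also produces a gradient response, so border pixels can be labeled as edges.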

Implementation Details

Limitations

This implementation supports only U8 images. For the Sobel filter, only kernel sizes of 3x3, 5x5, and 7x7 are available. Since edge tracking by hysteresis is not included in this operator in the current release, the output is a U8 image that indicates the edge type of each pixel (strong, weak, or non-edge). Once edge tracking by hysteresis is applied, the output should be an image that contains only the detected edges.
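As an illustration of how a downstream step could turn the edge-type map into a final edge image, the following sketch applies edge tracking by hysteresis with a breadth-first search. It is not the reference implementation mentioned in this document. The label values (`NON_EDGE`, `WEAK`, `STRONG`), the 8-connected neighborhood, and the 0/255 output convention are assumptions.

```python
from collections import deque

# Hypothetical labels; check the operator's actual U8 encoding before reuse.
NON_EDGE, WEAK, STRONG = 0, 1, 2


def hysteresis(classes):
    """Return a 0/255 edge map: strong pixels plus weak pixels linked to them."""
    h, w = len(classes), len(classes[0])
    edges = [[0] * w for _ in range(h)]
    queue = deque()

    # Seed the search with every strong pixel.
    for y in range(h):
        for x in range(w):
            if classes[y][x] == STRONG:
                edges[y][x] = 255
                queue.append((y, x))

    # Grow outward through 8-connected weak pixels.
    while queue:
        y, x = queue.popleft()
        for dy in (-1, 0, 1):
            for dx in (-1, 0, 1):
                ny, nx = y + dy, x + dx
                if (0 <= ny < h and 0 <= nx < w
                        and classes[ny][nx] == WEAK and edges[ny][nx] == 0):
                    edges[ny][nx] = 255
                    queue.append((ny, nx))
    return edges
```

Weak pixels that are never reached from a strong pixel stay at 0, which is how isolated noise responses are discarded.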

Implementation Structure

The implementation is organized into two memory stages. The first stage computes the intensity and direction of the image gradients. The second stage performs non-maximum suppression (NMS) and double thresholding. To reduce VMEM usage, the buffers of the two stages are overlaid using unions, and the intermediate results (gradient intensity and direction) are written back to main memory. Since both stages are compute-bound, the latency of reading and writing these intermediate results can be hidden by pipelining DMA transfers with VPU processing.

Dataflows

Six RasterDataFlows are used for input, output, and intermediate results. RasterDataFlow can handle cases where the image size is not a multiple of the tile size.
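To make the tail-tile case concrete, the sketch below enumerates the tiles of an image whose size is not a multiple of the tile size. It only illustrates the arithmetic and does not use the RasterDataFlow API. The function name `tile_grid` and the 64x32 tile size are hypothetical.

```python
def tile_grid(width, height, tile_w, tile_h):
    """Yield (x, y, w, h) for each tile, clipping the tail tiles to the image."""
    for y in range(0, height, tile_h):
        for x in range(0, width, tile_w):
            yield x, y, min(tile_w, width - x), min(tile_h, height - y)


# 64x32 is a hypothetical tile size, not the one used by the operator.
tiles = list(tile_grid(1920, 1080, 64, 32))
```

With these assumed dimensions, the width divides evenly into tiles but the height does not, so the last tile row is shorter than the others.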

The input raster dataflow pads the image boundary with zeros; the padding size equals the Sobel filter radius. The input image tiles are stored in a circular buffer in VMEM, which reduces redundant computation on the halo regions that overlap between adjacent tiles.

In the second stage, the input gradient intensity is padded using the boundary pixel extension (BPE) padding mode with a padding size of 1. The gradient intensity tiles are also stored in a circular buffer in VMEM. The input gradient direction has no halo: its dataflow explicitly sets the halo size to 0 and the region of interest (ROI) to the image size rounded up to a multiple of the tile size. When the image size is not a multiple of the tile size, the tail tile in a tile row or column is padded with zeros, which represent the horizontal direction, so that these padded pixels do not affect the NMS results for valid image pixels in the tail tiles.
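The two padding modes used by the dataflows can be compared with a small sketch. It assumes that BPE repeats the nearest boundary pixel and that the Sobel filter radius is the kernel size divided by two, rounded down. The helper names `pad` and `sobel_radius` are hypothetical and are not part of any dataflow API.

```python
def pad(img, size, mode):
    """Pad a 2-D list by `size` pixels on every side.

    mode="zero" fills with zeros; mode="bpe" repeats the nearest boundary pixel.
    """
    h, w = len(img), len(img[0])
    out = []
    for y in range(-size, h + size):
        row = []
        for x in range(-size, w + size):
            if 0 <= y < h and 0 <= x < w:
                row.append(img[y][x])
            elif mode == "zero":
                row.append(0)
            elif mode == "bpe":
                # Clamp the coordinates to the closest valid pixel.
                row.append(img[min(max(y, 0), h - 1)][min(max(x, 0), w - 1)])
            else:
                raise ValueError(f"unknown mode: {mode}")
        out.append(row)
    return out


def sobel_radius(kernel_size):
    """Halo needed on each side of a tile for an odd kernel size (3, 5, or 7)."""
    return kernel_size // 2


# First stage: zero padding sized by the Sobel radius.
stage1_input = pad([[1, 2], [3, 4]], sobel_radius(3), "zero")
# Second stage: BPE padding of size 1 on the gradient intensity.
stage2_input = pad([[1, 2], [3, 4]], 1, "bpe")
```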

Output Demo

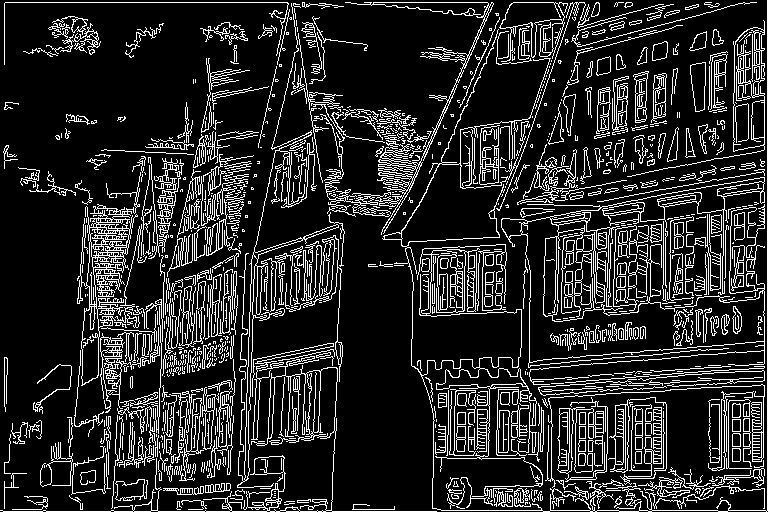

Although this operator does not include edge tracking by hysteresis, Figure 1 shows the detected edges produced by combining this operator with the reference implementation of edge tracking by hysteresis. The image on the left is the input, and the image on the right shows the corresponding detected edges. The threshold for strong edges is 300 and the threshold for weak edges is 100.

Figure 1: Sample input image (left) and output results (right).

Performance

Execution Time is the average time required to execute the operator on a single VPU core.

Note that each PVA contains two VPU cores, which can operate in parallel to process two streams simultaneously, or reduce execution time by approximately half by splitting the workload between the two cores.

Total Power represents the average total power consumed by the module when the operator is executed concurrently on both VPU cores.

For detailed information on interpreting the performance table below and understanding the benchmarking setup, see Performance Benchmark.

| ImageSize | DataType | KernelSize | Execution Time | Submit Latency | Total Power |
|---|---|---|---|---|---|
| 1920x1080 | U8 | 3x3 | 1.996ms | 0.022ms | 13.135W |
| 1920x1080 | U8 | 5x5 | 2.253ms | 0.021ms | 12.933W |
| 1920x1080 | U8 | 7x7 | 2.255ms | 0.022ms | 12.933W |

Reference

[1] https://docs.opencv.org/4.x/da/d22/tutorial_py_canny.html