Multitask Image Classification

Multitask classification expects a directory of images and two CSV files: one for training labels and one for validation labels. The image directory must contain every image used for training and validation, and it may contain additional images. Only the images listed in the training CSV file are used during training, and only those listed in the validation CSV file are used during validation.

The data structure should look like the following:

|--dataset_root:
    |--images
        |--1.jpg
        |--2.jpg
        |--3.jpg
        |--4.jpg
        |--5.jpg
        |--6.jpg
    |--train.csv
    |--val.csv
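Because only images listed in a CSV are used, a filename that is listed but missing from the image directory is worth catching before training. The following is a minimal sketch of such a check, assuming standard comma-separated files with an fname column; the helper name find_missing_images is hypothetical and not part of TAO.

```python
import csv
from pathlib import Path


def find_missing_images(csv_path, image_dir):
    """Return the fname entries of a label CSV that have no file in image_dir."""
    image_dir = Path(image_dir)
    missing = []
    with open(csv_path, newline="") as handle:
        for row in csv.DictReader(handle):
            # Each row names one image; it must exist under the image directory.
            if not (image_dir / row["fname"]).is_file():
                missing.append(row["fname"])
    return missing
```

Calling it once with train.csv and once with val.csv covers both splits; an empty list means every listed image is present.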

The training and validation CSV files contain the labels for the training and validation images. Both CSVs must have the same format: the first column must be named fname and hold the image filename. If you have N tasks, you need N additional columns, each with the task name as its column name. Every image (row in the CSV) must have exactly one label in each task cell. An example train.csv with three classification tasks (color, type, and size) looks like this:

fname | color | type | size |
1.jpg | Blue | 1 | Big |
2.jpg | Red | 1 | Small |
3.jpg | Red | 0 | Small |
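The format rules above (fname first, one column per task, exactly one label per cell) can be checked mechanically. This is a sketch only, assuming a comma-delimited file; validate_label_csv is a hypothetical helper, not a TAO function.

```python
import csv
import io


def validate_label_csv(handle):
    """Check a multitask label CSV; return (task_names, classes_seen_per_task)."""
    reader = csv.reader(handle)
    header = next(reader)
    if not header or header[0] != "fname":
        raise ValueError("first column must be 'fname'")
    tasks = header[1:]
    if not tasks:
        raise ValueError("at least one task column is required")
    classes = {task: set() for task in tasks}
    for line_no, row in enumerate(reader, start=2):
        if not row:
            continue  # tolerate blank lines
        if len(row) != len(header):
            raise ValueError(f"line {line_no}: expected {len(header)} cells, got {len(row)}")
        for task, label in zip(tasks, row[1:]):
            if not label.strip():
                raise ValueError(f"line {line_no}: empty label for task '{task}'")
            classes[task].add(label.strip())
    return tasks, classes


# The example table from this section, written as CSV text.
example = io.StringIO(
    "fname,color,type,size\n1.jpg,Blue,1,Big\n2.jpg,Red,1,Small\n3.jpg,Red,0,Small\n"
)
tasks, classes = validate_label_csv(example)
print(tasks)                      # ['color', 'type', 'size']
print(sorted(classes["color"]))   # ['Blue', 'Red']
```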

Note: Currently, multitask image classification supports only RGB training, so the trained model always has 3 input channels. To run inference on grayscale images, load each image as RGB with the same values in all three channels. The training script handles grayscale training images the same way.
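To make "same values in all channels" concrete, here is a small pure-Python sketch that replicates each gray value into three channels. The function name gray_to_rgb is hypothetical. If you load images with Pillow, Image.open(path).convert("RGB") performs the equivalent replication for single-channel images.

```python
def gray_to_rgb(gray_rows):
    """Turn an H x W grid of gray values into an H x W grid of (R, G, B) tuples.

    Every channel receives the same value, as the note above requires.
    """
    return [[(value, value, value) for value in row] for row in gray_rows]


print(gray_to_rgb([[0, 128], [255, 64]]))
# [[(0, 0, 0), (128, 128, 128)], [(255, 255, 255), (64, 64, 64)]]
```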

Here is an example of a specification file for multitask classification:

random_seed: 42
model_config {
  arch: "resnet"
  n_layers: 101
  use_batch_norm: True
  use_bias: False
  all_projections: False
  use_pooling: True
  use_imagenet_head: True
  resize_interpolation_method: BICUBIC
  input_image_size: "3,224,224"
}
training_config {
  batch_size_per_gpu: 16
  checkpoint_interval: 10
  num_epochs: 80
  enable_qat: false
  learning_rate {
    soft_start_annealing_schedule {
      min_learning_rate: 5e-5
      max_learning_rate: 2e-2
      soft_start: 0.15
      annealing: 0.8
    }
  }
  regularizer {
    type: L1
    weight: 3e-5
  }
  optimizer {
    adam {
      epsilon: 1e-7
      beta1: 0.9
      beta2: 0.999
      amsgrad: false
    }
  }
  pretrain_model_path: "EXPERIMENT_DIR/resnet_101.hdf5"
}
dataset_config {
  image_directory_path: "EXPERIMENT_DIR/data/images"
  train_csv_path: "EXPERIMENT_DIR/data/train.csv"
  val_csv_path: "EXPERIMENT_DIR/data/val.csv"
}

Model Config

The table below describes the configurable parameters in the model_config.

Parameter | Datatype | Default | Description | Supported Values |
all_projections | bool | | For templates with shortcut connections, this parameter defines whether or not all shortcuts should be instantiated with 1x1 projection layers irrespective of whether there is a change in stride across the input and output. | True or False (only to be used in ResNet templates) |
arch | string | | This defines the architecture of the backbone feature extractor used for training. | |
n_layers | int | | Depth of the feature extractor for scalable templates. | |
use_pooling | Boolean | | Choose between using strided convolutions or MaxPooling while downsampling. When True, MaxPooling is used to downsample; however, for the object detection network, NVIDIA recommends setting this to False and using strided convolutions. | True or False |
use_batch_norm | Boolean | | Boolean variable to use batch normalization layers or not. | True or False |
| float (repeated) | – | This parameter defines which blocks may be frozen from the instantiated feature extractor template, and is different for different feature extractor templates. | |
| Boolean | | You can choose to freeze the Batch Normalization layers in the model during training. | True or False |
input_image_size | String | | The dimension of the input layer of the model. Images in the dataset will be resized to this shape by the dataloader when fed to the model for training. | 3,X,Y, where X,Y >=16 and X,Y are integers. |
resize_interpolation_method | enum | | The interpolation method for resizing the input images. | BILINEAR, BICUBIC |
use_imagenet_head | Boolean | | Whether or not to use the header layers as in the original implementation on ImageNet. Set this to True to reproduce the accuracy on ImageNet as in the literature. If set to False, a Dense layer will be used for the header, which can be different from the literature. | True or False |
| float | | Dropout rate for Dropout layers in the model. This is only valid for VGG and SqueezeNet. | Float in the interval [0, 1) |
batch_norm_config | proto message | – | Parameters for BatchNormalization layers. | – |
activation | proto message | – | Parameters for the activation functions in the model. | – |
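The input_image_size constraint in the table (3,X,Y with integer X,Y >= 16) is easy to get wrong by hand. A minimal sketch of a pre-flight check follows; parse_input_image_size is a hypothetical helper, and the check mirrors only the constraint stated in the table.

```python
def parse_input_image_size(value):
    """Parse a string such as "3,224,224" and enforce 3,X,Y with X,Y >= 16."""
    parts = [part.strip() for part in value.split(",")]
    if len(parts) != 3:
        raise ValueError("expected three comma-separated integers")
    channels, x, y = (int(part) for part in parts)  # int() rejects non-integers
    if channels != 3:
        raise ValueError("the first dimension must be 3")
    if x < 16 or y < 16:
        raise ValueError("X and Y must both be at least 16")
    return channels, x, y


print(parse_input_image_size("3,224,224"))  # (3, 224, 224)
```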

BatchNormalization Parameters

The parameter batch_norm_config defines parameters for BatchNormalization layers in the model (momentum and epsilon).

Parameter | Datatype | Default | Description | Supported Values |
momentum | float | | Momentum of BatchNormalization layers. | float in the interval (0, 1), usually close to 1.0. |
epsilon | float | | Epsilon to avoid zero division. | float that is close to 0.0. |

Activation functions

The parameter activation defines the parameters for activation functions in the model.

Parameter | Datatype | Default | Description | Supported Values |
| String | – | Type of the activation function. | Only |

Training Config

The training configuration (training_config) defines the parameters needed for training, evaluation, and inference. Details are summarized in the table below.

Field | Description | Data Type and Constraints | Recommended/Typical Value |
batch_size_per_gpu | The batch size for each GPU; the effective batch size is | Unsigned int, positive | – |
checkpoint_interval | The number of training epochs per one model checkpoint/validation | Unsigned int, positive | 10 |
num_epochs | The number of epochs to train the network | Unsigned int, positive. | – |
enable_qat | A flag to enable/disable quantization-aware training | Boolean | – |
learning_rate | This parameter supports one | Message type | – |
regularizer | This parameter configures the regularizer to use while training and contains the following nested parameters: | Message type | L1 (Note: NVIDIA suggests using the L1 regularizer when training a network before pruning, as L1 regularization makes the network weights more prunable.) |
optimizer | The optimizer can be The optimizer parameters are the same as those in Keras. | Message type | – |
pretrain_model_path | The path to the pretrained model, if any. At most, one | String | – |
resume_model_path | The path to the TAO checkpoint model to resume training, if any. At most, one | String | – |
pruned_model_path | The path to the TAO pruned model for re-training, if any. At most, one | String | – |

The learning rate is automatically scaled with the number of GPUs used during training; that is, the effective learning rate is learning_rate * n_gpu.
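The scaling rule is simple arithmetic, but it matters when moving a spec between machines with different GPU counts. The sketch below applies the stated formula and also inverts it; both function names are hypothetical, and the inverse is just algebra on the formula above, not a documented TAO feature.

```python
def effective_learning_rate(learning_rate, n_gpu):
    """Effective rate according to the rule above: learning_rate * n_gpu."""
    if n_gpu < 1:
        raise ValueError("n_gpu must be at least 1")
    return learning_rate * n_gpu


def spec_value_for_target(target_rate, n_gpu):
    """Spec value that yields target_rate as the effective rate on n_gpu GPUs."""
    if n_gpu < 1:
        raise ValueError("n_gpu must be at least 1")
    return target_rate / n_gpu


# With max_learning_rate: 2e-2 from the example spec:
for n_gpu in (1, 2, 4):
    print(n_gpu, effective_learning_rate(2e-2, n_gpu))
```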

Dataset Config

Parameter | Datatype | Description |
image_directory_path | string | Path to the image directory |
train_csv_path | string | Path to the training CSV file |
val_csv_path | string | Path to the validation CSV file |

Use the tao multitask_classification train command to tune a pre-trained model:

tao multitask_classification train -e <spec file>
                                   -k <encoding key>
                                   -r <result directory>
                                   [--gpus <num GPUs>]
                                   [--gpu_index <gpu_index>]
                                   [--use_amp]
                                   [--log_file <log_file_path>]
                                   [-h]

Required Arguments

-r, --results_dir: Path to a folder where the experiment outputs should be written.
-k, --key: User-specific encoding key to save or load a .tlt model.
-e, --experiment_spec_file: Path to the experiment spec file.

Optional Arguments

--gpus: Number of GPUs to use and processes to launch for training. The default value is 1.
--gpu_index: The GPU indices used to run the training. You can specify which GPUs to use when the machine has multiple GPUs installed.
--use_amp: A flag to enable AMP training.
--log_file: Path to the log file. Defaults to stdout.
-h, --help: Print the help message.

See the Specification File for Multitask Classification section for more details.

Here’s an example of using the tao multitask_classification train command:

tao multitask_classification train -e /workspace/tlt_drive/spec/spec.cfg -r /workspace/output -k $YOUR_KEY
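When training is launched from a script, building the argument list programmatically avoids quoting mistakes. This is a sketch under stated assumptions: build_train_command is a hypothetical helper, it uses only flags listed above, and it leaves out --gpu_index because the exact value syntax is not described here.

```python
import shlex


def build_train_command(spec_file, results_dir, key, gpus=None,
                        use_amp=False, log_file=None):
    """Assemble the argv list for `tao multitask_classification train`."""
    cmd = ["tao", "multitask_classification", "train",
           "-e", str(spec_file), "-r", str(results_dir), "-k", key]
    if gpus is not None:
        cmd += ["--gpus", str(gpus)]
    if use_amp:
        cmd.append("--use_amp")
    if log_file is not None:
        cmd += ["--log_file", str(log_file)]
    return cmd


cmd = build_train_command("/workspace/tlt_drive/spec/spec.cfg",
                          "/workspace/output", "my_key", gpus=2)
print(shlex.join(cmd))
# To actually launch training: subprocess.run(cmd, check=True)
```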

After training a model with the experiment config file by following the steps above, the next step is to evaluate it on a test set to measure its accuracy. The TAO Toolkit includes the tao multitask_classification evaluate command for this purpose.

The multitask_classification app computes per-task evaluation loss and accuracy as metrics.
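For readers who want to reproduce the per-task accuracy figure from their own predictions, here is an illustrative sketch. It is not the TAO implementation; per_task_accuracy and its input layout (one dict of task name to label per image) are assumptions made for the example.

```python
def per_task_accuracy(ground_truth, predictions):
    """Return {task: fraction of images whose predicted label matches}.

    Both arguments are lists of dicts mapping task name -> label,
    given in the same image order.
    """
    if not ground_truth or len(ground_truth) != len(predictions):
        raise ValueError("need two non-empty lists of equal length")
    total = len(ground_truth)
    return {
        task: sum(t[task] == p[task] for t, p in zip(ground_truth, predictions)) / total
        for task in ground_truth[0]
    }


truth = [{"color": "Blue", "size": "Big"}, {"color": "Red", "size": "Small"}]
preds = [{"color": "Blue", "size": "Small"}, {"color": "Red", "size": "Small"}]
print(per_task_accuracy(truth, preds))  # {'color': 1.0, 'size': 0.5}
```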

When training is complete, the model is stored in the output directory of your choice in $OUTPUT_DIR. Evaluate a model using the tao multitask_classification evaluate command:

tao multitask_classification evaluate -e <experiment_spec_file>
                                      -k <key>
                                      -m <model>
                                      [--gpu_index <gpu_index>]
                                      [--log_file <log_file>]
                                      [-h]

Required Arguments

-e, --experiment_spec_file: Path to the experiment spec file.
-k, --key: Provide the encryption key to decrypt the model.
-m, --model: Provide the path to the trained model.

Optional Arguments

-h, --help: Show this help message and exit.
--gpu_index: The GPU indices used to run the evaluation. You can specify which GPUs to use when the machine has multiple GPUs installed.
--log_file: Path to the log file. Defaults to stdout.

Running inference on a labeled dataset produces confusion matrices that show where the model makes mistakes.

TAO offers a command to easily generate confusion matrices for all tasks:

tao multitask_classification confmat -i <img_root>
                                     -l <target_csv>
                                     -k <key>
                                     -m <model>
                                     [--gpu_index <gpu_index>]
                                     [--log_file <log_file>]
                                     [-h]

Required Arguments

-i, --img_root: Path to the image directory.
-l, --target_csv: Path to the ground truth label CSV file.
-k, --key: Provide the encryption key to decrypt the model.
-m, --model: Provide the path to the trained model.

Optional Arguments

-h, --help: Show this help message and exit.
--gpu_index: The GPU indices used to run the confmat command. You can specify which GPUs to use when the machine has multiple GPUs installed.
--log_file: Path to the log file. Defaults to stdout.
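To read the output of confmat it helps to recall how a confusion matrix is built for one task. The following sketch is illustrative only and does not reproduce TAO's output format; the function name and the row/column convention (rows are ground truth, columns are predictions) are choices made for this example.

```python
from collections import Counter


def confusion_matrix(true_labels, predicted_labels):
    """Return (classes, matrix) where matrix[i][j] counts images whose
    ground truth is classes[i] and whose prediction is classes[j]."""
    classes = sorted(set(true_labels) | set(predicted_labels))
    counts = Counter(zip(true_labels, predicted_labels))
    matrix = [[counts[(t, p)] for p in classes] for t in classes]
    return classes, matrix


classes, matrix = confusion_matrix(
    ["Red", "Red", "Blue", "Blue"],   # ground truth for the "color" task
    ["Red", "Blue", "Blue", "Blue"],  # model predictions
)
print(classes)  # ['Blue', 'Red']
print(matrix)   # [[2, 0], [1, 1]]
```

Off-diagonal entries are the mistakes: here one Red image was predicted as Blue.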

The tao multitask_classification inference command runs inference on a specified image.

Execute tao multitask_classification inference on a multitask classification model trained on TAO Toolkit.

tao multitask_classification inference -m <model> -i <image> -k <key> -cm <classmap> [--gpu_index <gpu_index>] [--log_file <log_file>] [-h]

Here are the arguments of the tao multitask_classification inference tool:

Required arguments

-m, --model: Path to the pretrained model (TAO model).
-i, --image: A single image file for inference.
-k, --key: Key to load the model.
-cm, --class_map: The JSON file that specifies the class index and label mapping for each task.

Optional arguments

-h, --help: Show this help message and exit.
--gpu_index: The GPU indices used to run the inference. You can specify which GPUs to use when the machine has multiple GPUs installed.
--log_file: Path to the log file. Defaults to stdout.

A classmap (-cm) is required; it should be a byproduct (class_mapping.json) of your training process.
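The class map is what turns a predicted class index back into a label for each task. The exact layout of class_mapping.json is not described in this section, so the sketch below assumes a hypothetical layout with a "class_mapping" object that maps each task name to an index-to-label dictionary. Inspect your own file and adjust the keys accordingly.

```python
import json


def decode_predictions(class_map, scores_per_task):
    """Map the highest-scoring index of each task to its label.

    class_map: parsed JSON, ASSUMED to look like
        {"class_mapping": {"<task>": {"0": "<label>", "1": "<label>"}}}
    scores_per_task: {"<task>": [score_for_index_0, score_for_index_1, ...]}
    """
    decoded = {}
    for task, scores in scores_per_task.items():
        best_index = max(range(len(scores)), key=scores.__getitem__)
        decoded[task] = class_map["class_mapping"][task][str(best_index)]
    return decoded


# Hypothetical class map content, parsed from a JSON string for illustration.
class_map = json.loads(
    '{"class_mapping": {"color": {"0": "Blue", "1": "Red"},'
    ' "size": {"0": "Big", "1": "Small"}}}'
)
print(decode_predictions(class_map, {"color": [0.1, 0.9], "size": [0.7, 0.3]}))
# {'color': 'Red', 'size': 'Big'}
```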

Pruning removes parameters from the model to reduce the model size without compromising the integrity of the model itself. Use the tao multitask_classification prune command to prune a model.

The tao multitask_classification prune command includes these parameters:

tao multitask_classification prune -m <model>
                                   -o <output_file>
                                   -k <key>
                                   [-n <normalizer>]
                                   [-eq <equalization_criterion>]
                                   [-pg <pruning_granularity>]
                                   [-pth <pruning threshold>]
                                   [-nf <min_num_filters>]
                                   [-el <excluded_list>]
                                   [--gpu_index <gpu_index>]
                                   [--log_file <log_file>]
                                   [-h]

Required Arguments

-m, --model: Path to the pretrained model.
-o, --output_file: Path to the output checkpoints.
-k, --key: Key to load a .tlt model.

Optional Arguments

-h, --help: Show this help message and exit.
-n, --normalizer: max to normalize by dividing each norm by the maximum norm within a layer; L2 to normalize by dividing by the L2 norm of the vector comprising all kernel norms. (default: max)
-eq, --equalization_criterion: Criteria to equalize the stats of inputs to an elementwise op layer, or depth-wise convolutional layer. This parameter is useful for ResNet and MobileNet. Options are arithmetic_mean, geometric_mean, union, and intersection. (default: union)
-pg, --pruning_granularity: Number of filters to remove at a time (default: 8)
-pth: Threshold to compare normalized norm against (default: 0.1)
-nf, --min_num_filters: Minimum number of filters to keep per layer (default: 16)
-el, --excluded_layers: List of excluded_layers. Examples: -el item1 item2 (default: [])
--gpu_index: The GPU indices used to run the pruning. You can specify which GPUs to use when the machine has multiple GPUs installed.
--log_file: Path to the log file. Defaults to stdout.
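To build intuition for how the normalizer, threshold, granularity, and minimum-filter options interact, here is a simplified single-layer sketch. It is not TAO's pruning algorithm: the function name is hypothetical, equalization across layers is ignored, and rounding the kept count up to a multiple of the granularity is an assumption made for illustration.

```python
import math


def select_filters_to_keep(norms, normalizer="max", threshold=0.1,
                           granularity=8, min_num_filters=16):
    """Return sorted indices of the filters a simplified pruner would keep."""
    if normalizer == "max":
        scale = max(norms)                             # largest norm in the layer
    elif normalizer == "L2":
        scale = math.sqrt(sum(n * n for n in norms))   # L2 norm of all kernel norms
    else:
        raise ValueError(f"unknown normalizer: {normalizer}")
    if scale == 0:
        return list(range(len(norms)))                 # nothing to rank; keep all
    normalized = [n / scale for n in norms]

    # Count filters at or above the threshold, then apply the floor and granularity.
    n_keep = sum(1 for value in normalized if value >= threshold)
    n_keep = max(n_keep, min_num_filters)
    n_keep = math.ceil(n_keep / granularity) * granularity  # assumed: round up
    n_keep = min(n_keep, len(norms))

    ranked = sorted(range(len(norms)), key=lambda i: normalized[i], reverse=True)
    return sorted(ranked[:n_keep])


# 32 filters: 4 strong ones and 28 near-zero ones, with the default options.
kept = select_filters_to_keep([1.0] * 4 + [0.01] * 28)
print(len(kept))  # 16
```

Only 4 filters pass the threshold in this example, yet 16 are kept because of the min_num_filters floor.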

After pruning, the model needs to be retrained. See Re-training the Pruned Model for more details.

After the model has been pruned, there might be a slight decrease in accuracy. This happens because some previously useful weights may have been removed. To regain the accuracy, NVIDIA recommends that you retrain the pruned model over the same dataset. To do this, use the tao multitask_classification train command as documented in Training the model, with an updated spec file that points to the newly pruned model as the pretrained model file.

Users are advised to turn off the regularizer in the training_config for classification to recover the accuracy when retraining a pruned model. You may do this by setting the regularizer type to NO_REG. All the other parameters may be retained in the spec file from the previous training.
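The two spec changes described above (regularizer type set to NO_REG, pretrained model path pointed at the pruned model) can be applied to the spec text with a small script. This is a sketch that assumes the spec is laid out like the example earlier on this page; prepare_retrain_spec is a hypothetical helper, and the pruned model path in the usage is a placeholder.

```python
import re


def prepare_retrain_spec(spec_text, pruned_model_path):
    """Return spec_text with regularizer type NO_REG and the new pretrained path."""
    # Replace the type inside the first regularizer { ... } block.
    spec_text, n_reg = re.subn(
        r"(regularizer\s*\{[^{}]*?\btype:\s*)\w+", r"\g<1>NO_REG", spec_text, count=1
    )
    # Point pretrain_model_path at the pruned model.
    spec_text, n_path = re.subn(
        r'(pretrain_model_path:\s*)"[^"]*"',
        lambda match: f'{match.group(1)}"{pruned_model_path}"',
        spec_text,
        count=1,
    )
    if n_reg != 1 or n_path != 1:
        raise ValueError("spec is missing a regularizer type or pretrain_model_path")
    return spec_text


spec = (
    "training_config {\n"
    "  regularizer {\n    type: L1\n    weight: 3e-5\n  }\n"
    '  pretrain_model_path: "EXPERIMENT_DIR/resnet_101.hdf5"\n'
    "}\n"
)
print(prepare_retrain_spec(spec, "EXPERIMENT_DIR/pruned_model.tlt"))
```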

Exporting the model decouples the training process from inference and allows conversion to TensorRT engines outside the TAO environment. TensorRT engines are specific to each hardware configuration and should be generated for each unique inference environment. The exported model may be used universally across training and deployment hardware. The exported model format is referred to as .etlt. Like .tlt, the .etlt model format is also an encrypted model format, and it uses the same key as the .tlt model that it is exported from. This key is required when deploying this model.

Exporting the Model

Here’s an example of the tao multitask_classification export command:

tao multitask_classification export
            -m <path to the .tlt model file generated by training>
            -k <key>
            -cm <classmap>
            [-o <path to output file>]
            [--cal_data_file <path to tensor file>]
            [--cal_cache_file <path to output calibration file>]
            [--data_type <data type for the TensorRT backend during export>]
            [--batches <number of batches to calibrate over>]
            [--max_batch_size <maximum trt batch size>]
            [--max_workspace_size <maximum workspace size>]
            [--batch_size <batch size for calibration data>]
            [--engine_file <path to the TensorRT engine file>]
            [--verbose]
            [--force_ptq]
            [--gpu_index <gpu_index>]
            [--log_file <log_file_path>]

Required Arguments

-m, --model: Path to the .tlt model file to be exported.
-k, --key: Key used to save the .tlt model file.
-cm, --class_map: The JSON file that specifies the class index and label mapping for each task.

A classmap (-cm) is required; it should be a byproduct (class_mapping.json) of your training process.

Optional Arguments

-o, --output_file: Path to save the exported model to. The default is ./<input_file>.etlt.
--data_type: Desired engine data type; generates a calibration cache if in INT8 mode. The options are {fp32, fp16, int8}. The default value is fp32. If using INT8, the following INT8 arguments are required.
-s, --strict_type_constraints: A Boolean flag to indicate whether or not to apply the TensorRT strict type constraints when building the TensorRT engine.
--gpu_index: The index of (discrete) GPUs used for exporting the model. You can specify the GPU index to run export if the machine has multiple GPUs installed. Note that export can only run on a single GPU.
--log_file: Path to the log file. Defaults to stdout.
-v, --verbose: Verbose log.

INT8 Export Mode Required Arguments

--cal_data_file: The tensorfile generated for calibrating the engine. This can also be an output file if used with --cal_image_dir.
--cal_image_dir: A directory of images to use for calibration.

The --cal_image_dir parameter for images applies the necessary preprocessing to generate a tensorfile at the path mentioned in the --cal_data_file parameter, which is in turn used for calibration. The number of generated batches in the tensorfile is obtained from the --batches parameter value, and the batch_size is obtained from the --batch_size parameter value. Ensure that the directory mentioned in --cal_image_dir has at least batch_size * batches number of images in it. The valid image extensions are .jpg, .jpeg, and .png. In this case, the input_dimensions of the calibration tensors are derived from the input layer of the .tlt model.
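The batch_size * batches requirement can be verified before starting an INT8 export. A minimal sketch follows; check_calibration_images is a hypothetical helper, the defaults of 8 and 10 follow the documented defaults of --batch_size and --batches, and matching only lowercase extensions is a conservative assumption.

```python
from pathlib import Path

VALID_EXTENSIONS = {".jpg", ".jpeg", ".png"}  # as listed above; lowercase only


def check_calibration_images(image_dir, batch_size=8, batches=10):
    """Return (found, required, enough) for a calibration image directory."""
    found = sum(
        1
        for path in Path(image_dir).iterdir()
        if path.is_file() and path.suffix in VALID_EXTENSIONS
    )
    required = batch_size * batches
    return found, required, found >= required
```

With the default values, the directory needs at least 8 * 10 = 80 valid images.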

INT8 Export Optional Arguments

--cal_cache_file: The path to save the calibration cache file to. The default value is ./cal.bin.
--batches: The number of batches to use for calibration and inference testing. The default value is 10.
--batch_size: The batch size to use for calibration. The default value is 8.
--max_batch_size: The maximum batch size of the TensorRT engine. The default value is 16.
--max_workspace_size: The maximum workspace size of the TensorRT engine. The default value is 1073741824 (1<<30).
--engine_file: The path to the serialized TensorRT engine file. Note that this file is hardware specific and cannot be generalized across GPUs. It is useful for quickly testing your model accuracy using TensorRT on the host. Because the TensorRT engine file is hardware specific, you cannot use this engine file for deployment unless the deployment GPU is identical to the training GPU.
--force_ptq: A Boolean flag to force post-training quantization on the exported .etlt model.

When exporting a model trained with QAT enabled, the tensor scale factors to calibrate the activations are peeled out of the model and serialized to a TensorRT-readable cache file defined by the cal_cache_file argument. However, note that the current version of QAT doesn't natively support DLA INT8 deployment on Jetson. To deploy this model on a Jetson with DLA INT8, use the --force_ptq flag for TensorRT post-training quantization to generate the calibration cache file.

The deep learning and computer vision models that you’ve trained can be deployed on edge devices, such as a Jetson Xavier or Jetson Nano, a discrete GPU, or in the cloud with NVIDIA GPUs. TAO Toolkit has been designed to integrate with DeepStream SDK, so models trained with TAO Toolkit will work out of the box with DeepStream SDK.

DeepStream SDK is a streaming analytic toolkit to accelerate building AI-based video analytic applications. This section will describe how to deploy your trained model to DeepStream SDK.

To deploy a model trained by TAO Toolkit to DeepStream we have two options:

Option 1: Integrate the .etlt model directly in the DeepStream app. The model file is generated by export.
Option 2: Generate a device-specific optimized TensorRT engine using tao-converter. The generated TensorRT engine file can also be ingested by DeepStream.

Machine-specific optimizations are done as part of the engine creation process, so a distinct engine should be generated for each environment and hardware configuration. If the TensorRT or CUDA libraries of the inference environment are updated (including minor version updates), or if a new model is generated, new engines need to be generated. Running an engine that was generated with a different version of TensorRT and CUDA is not supported and will cause unknown behavior that affects inference speed, accuracy, and stability, or it may fail to run altogether.

Option 1 is very straightforward. The .etlt file and calibration cache are directly used by DeepStream. DeepStream will automatically generate the TensorRT engine file and then run inference. TensorRT engine generation can take some time depending on the size of the model and the type of hardware. Engine generation can be done ahead of time with Option 2. With Option 2, the tao-converter is used to convert the .etlt file to a TensorRT engine; this file is then provided directly to DeepStream.

See the Exporting the Model section for more details on how to export a TAO model.

Generating an Engine Using tao-converter

The tao-converter tool is provided with the TAO Toolkit to facilitate the deployment of TAO-trained models on TensorRT and/or DeepStream. This section elaborates on how to generate a TensorRT engine using tao-converter.

For deployment platforms with an x86-based CPU and discrete GPUs, the tao-converter is distributed within the TAO docker. Therefore, we suggest using the docker to generate the engine. However, this requires that the user adhere to the same minor version of TensorRT as distributed with the docker. The TAO docker includes TensorRT version 8.0.

Instructions for x86

For an x86 platform with discrete GPUs, the default TAO package includes the tao-converter built for TensorRT 8.2.5.1 with CUDA 11.4 and CUDNN 8.2. However, for any other version of CUDA and TensorRT, please refer to the overview section for download. Once the tao-converter is downloaded, follow the instructions below to generate a TensorRT engine.

1. Unzip the zip file on the target machine.
2. Install the OpenSSL package using the command:
   sudo apt-get install libssl-dev
3. Export the following environment variables:
   $ export TRT_LIB_PATH="/usr/lib/x86_64-linux-gnu"
   $ export TRT_INC_PATH="/usr/include/x86_64-linux-gnu"
4. Run the tao-converter using the sample command below and generate the engine.

Make sure to follow the output node names as mentioned in the Exporting the Model section of the respective model.

Instructions for Jetson

For the Jetson platform, the tao-converter is available to download in the NVIDIA developer zone. You may choose the version you wish to download as listed in the overview section. Once the tao-converter is downloaded, please follow the instructions below to generate a TensorRT engine.

1. Unzip the zip file on the target machine.
2. Install the OpenSSL package using the command:
   sudo apt-get install libssl-dev
3. Export the following environment variables:
   $ export TRT_LIB_PATH="/usr/lib/aarch64-linux-gnu"
   $ export TRT_INC_PATH="/usr/include/aarch64-linux-gnu"
   For Jetson devices, TensorRT comes pre-installed with JetPack. If you are using an older JetPack, upgrade to JetPack-5.0DP.
4. Run the tao-converter using the sample command below and generate the engine.

Make sure to follow the output node names as mentioned in the Exporting the Model section of the respective model.

Using the tao-converter

tao-converter -k <encryption_key>
              -d <input_dimensions>
              -o <comma separated output nodes>
              [-c <path to calibration cache file>]
              [-e <path to output engine>]
              [-b <calibration batch size>]
              [-m <maximum batch size of the TRT engine>]
              [-t <engine datatype>]
              [-w <maximum workspace size of the TRT Engine>]
              [-i <input dimension ordering>]
              [-p <optimization_profiles>]
              [-s]
              [-u <DLA_core>]
              [-h]
              input_file

Required Arguments

input_file: Path to the .etlt model exported using export.
-k: The key used to encode the .tlt model during training.
-d: Comma-separated list of input dimensions that should match the dimensions used for tao multitask_classification export. Unlike tao multitask_classification export, this cannot be inferred from calibration data. This parameter is not required for new models introduced in TAO Toolkit v3.0 (for example, LPRNet, UNet, GazeNet, etc).
-o: Comma-separated list of output blob names that should match the printout when using tao multitask_classification export. The number of outputs equals the number of tasks.

Optional Arguments

-e: Path to save the engine to. The default value is ./saved.engine.
-t: Desired engine data type; generates a calibration cache if in INT8 mode. The default value is fp32. The options are {fp32, fp16, int8}.
-w: Maximum workspace size for the TensorRT engine. The default value is 1073741824 (1<<30).
-i: Input dimension ordering; all other TAO commands use NCHW. The default value is nchw. The options are {nchw, nhwc, nc}. For classification, this can be omitted (defaults to nchw).
-p: Optimization profiles for .etlt models with dynamic shape. Comma-separated list of optimization profile shapes in the format <input_name>,<min_shape>,<opt_shape>,<max_shape>, where each shape has the format <n>x<c>x<h>x<w>. Can be specified multiple times if there are multiple input tensors for the model. This is only useful for new models introduced in TAO v3.0. This parameter is not required for models that already existed in TAO v2.0.
-s: TensorRT strict type constraints. A Boolean to apply TensorRT strict type constraints when building the TensorRT engine.
-u: Use DLA core. Specifies the DLA core index when building the TensorRT engine on Jetson devices.

INT8 Mode Arguments

-c: Path to the calibration cache file, only used in INT8 mode. The default value is ./cal.bin.
-b: Batch size used during the export step for INT8 calibration cache generation (default: 8).
-m: Maximum batch size for the TensorRT engine (default: 16). If you run into an out-of-memory issue, decrease the batch size accordingly.
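As with training, the converter invocation can be assembled in a script so that the dimension string and the output-node list are formatted consistently. This is a sketch only: build_converter_command is a hypothetical helper, it uses only flags documented above, and the output node names in the usage are placeholders that must be replaced with the names printed by tao multitask_classification export.

```python
def build_converter_command(etlt_path, key, input_dims, output_nodes,
                            engine_path=None, data_type="fp32", cal_cache=None):
    """Assemble the argv list for tao-converter from the documented flags."""
    if data_type not in {"fp32", "fp16", "int8"}:
        raise ValueError("data_type must be fp32, fp16, or int8")
    cmd = [
        "tao-converter",
        "-k", key,
        "-d", ",".join(str(dim) for dim in input_dims),   # e.g. 3,224,224
        "-o", ",".join(output_nodes),                     # one output per task
    ]
    if engine_path is not None:
        cmd += ["-e", str(engine_path)]
    cmd += ["-t", data_type]
    if data_type == "int8" and cal_cache is not None:
        cmd += ["-c", str(cal_cache)]                     # only used in INT8 mode
    cmd.append(str(etlt_path))                            # input_file comes last
    return cmd


# "task_a" and "task_b" are placeholder output node names.
print(build_converter_command("model.etlt", "my_key", (3, 224, 224),
                              ["task_a", "task_b"], engine_path="model.engine"))
```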

Integrating the Model to DeepStream

There are 2 options to integrate models from TAO with DeepStream:

Option 1: Integrate the model (.etlt) with the encrypted key directly in the DeepStream app. The model file is generated by tao multitask_classification export.
Option 2: Generate a device-specific optimized TensorRT engine using tao-converter. The TensorRT engine file can also be ingested by DeepStream.

In order to integrate the models with DeepStream, you need the following:

Download and install DeepStream SDK. The installation instructions for DeepStream are provided in the DeepStream Development Guide.

An exported .etlt model file and optional calibration cache for INT8 precision.
A labels.txt file containing the labels for classes in the order in which the network produces outputs.
A sample config_infer_*.txt file to configure the nvinfer element in DeepStream. The nvinfer element handles everything related to TensorRT optimization and engine creation in DeepStream.

DeepStream SDK ships with an end-to-end reference application which is fully configurable. Users

can configure input sources, inference model and output sinks. The app requires a primary object

detection model, followed by an optional secondary classification model. The reference

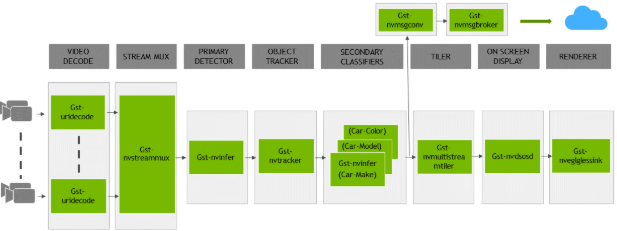

application is installed as deepstream-app. The graphic below shows the architecture of the

reference application.

There are typically 2 or more configuration files that are used with this app. In the install directory, the config files are located in samples/configs/deepstream-app or sample/configs/tlt_pretrained_models. The main config file configures all the high-level parameters in the pipeline above. This would set the input source and resolution, number of inferences, tracker and output sinks. The other supporting config files are for each individual inference engine. The inference-specific config files are used to specify models, inference resolution, batch size, number of classes and other customization. The main config file will call all the supporting config files. Here are some config files in samples/configs/deepstream-app for your reference.

source4_1080p_dec_infer-resnet_tracker_sgie_tiled_display_int8.txt: Main config file
config_infer_primary.txt: Supporting config file for primary detector in the pipeline above
config_infer_secondary_*.txt: Supporting config file for secondary classifier in the pipeline above

The deepstream-app will only work with the main config file. This file will most likely remain the same for all models and can be used directly from the DeepStream SDK with little to no change. Users will only have to modify or create config_infer_primary.txt and config_infer_secondary_*.txt.

Integrating a Multitask Image Classification Model

See Exporting The Model for more details on how to export a TAO model. After the model has been generated, you can use the DeepStream sample app provided in GitHub repository to integrate the exported model. The GitHub repository also provides a README file for adjustments needed to integrate a custom model you trained on your own dataset.