3D Multi Camera Detection and Tracking (Sparse4D)#

The Real Time Video Intelligence CV Microservice leverages NVIDIA DeepStream SDK to generate metadata for each stream that downstream microservices can use to generate spatial metrics and alerts.

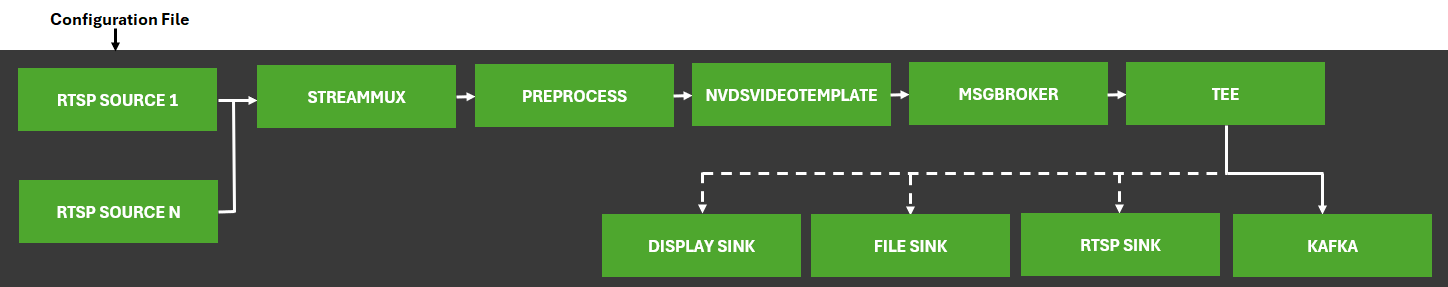

The microservice features metropolis-perception-app, a DeepStream pipeline that builds on the built-in deepstream-test5 app in the DeepStream SDK. This perception app provides a complete application that takes streaming video inputs, decodes the incoming streams, performs inference & tracking, and sends the metadata to other microservices using the defined Protobuf schema.

The application features a modular architecture that integrates preprocessing plugins and a custom video template plugin (Sparse4D) specifically designed for multi-object detection and tracking in three-dimensional space.

At its core, Sparse4D performs Bird's-Eye-View (BEV) 3D object detection and temporal tracking across multiple synchronized camera sensors within defined BEV groups. Refer to the TAO Sparse4D Finetuning page for more details on the model architecture and training process.

The pipeline ingests multi-camera video streams, aligns them spatially using calibrated projection matrices, and uses a feedback mechanism with temporal instance banking to maintain object identity across frames. Detection results include 3D position, orientation, velocity, and instance IDs, enabling multi-camera fusion.
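To make the role of a calibrated projection matrix concrete, the following is a minimal sketch of a pinhole projection from a 3D point to pixel coordinates. The `project_point` helper and the matrix values are hypothetical and chosen only for illustration; they are not taken from the microservice or its calibration file.

```python
from typing import Optional, Sequence, Tuple

Matrix3x4 = Sequence[Sequence[float]]


def project_point(P: Matrix3x4, xyz: Sequence[float]) -> Optional[Tuple[float, float]]:
    """Project a 3D point to pixel coordinates with a 3x4 projection matrix.

    Returns None when the point lies on or behind the camera plane.
    """
    x, y, z = xyz
    homogeneous = (x, y, z, 1.0)
    # Multiply the 3x4 matrix by the homogeneous point, one row at a time.
    u, v, w = (sum(p * h for p, h in zip(row, homogeneous)) for row in P)
    if w <= 0.0:
        return None
    return (u / w, v / w)


# Hypothetical camera: focal length 1000 px, principal point (480, 270),
# located at the world origin and looking down +Z (identity extrinsics).
P_EXAMPLE = [
    [1000.0, 0.0, 480.0, 0.0],
    [0.0, 1000.0, 270.0, 0.0],
    [0.0, 0.0, 1.0, 0.0],
]

pixel = project_point(P_EXAMPLE, (1.0, 0.5, 10.0))
print(pixel)
```

In a multi-camera setup, each camera has its own matrix, so the same 3D point maps to a different pixel in each view.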

For detailed information on all components, APIs, and customization options, refer to the Object Detection and Tracking documentation.

The diagram below shows the perception pipeline used in the microservice:

Configurations#

The Perception microservice requires several configuration files that control various aspects of the 3D multi-camera detection and tracking system. These files allow users to customize the system’s behavior according to their specific requirements.

Inference Configuration File#

The main configuration file for Sparse4D, config.yaml, handles properties related to model inference and controls the core functionality of the 3D detection and tracking system. The configuration parameters are organized into the following categories:

Inference Properties#

| Parameter | Description | Default Value |
|---|---|---|
|  | Path to the ONNX model file |  |
|  | Path to the TensorRT engine file |  |
|  | Path to the object class labels file |  |
|  | GPU ID to use for tensor operations |  |
|  | Sparse4D model batch size |  |
|  | Number of camera sensors (DeepStream batch size) |  |
|  | Enable FP16 precision (faster but less accurate) |  |
|  | Force rebuild of the engine even if it exists |  |
|  | Enable temporal feedback mechanism |  |
|  | Use partial batch for inference |  |
|  | Number of consecutive batches to be skipped for inference |  |

Calibration Properties#

| Parameter | Description | Default Value |
|---|---|---|
|  | Path to the calibration file |  |
|  | BEV group name for camera grouping |  |
|  | Calibration mode (synthetic or real) |  |
|  | Groups cameras for multi-view fusion in synthetic mode |  |
|  | The model is trained and inferred in BEV coordinates. This flag re-centers the 3D bounding boxes from the original OV coordinates to the BEV coordinates. The Perception microservice converts the final output back to OV coordinates. Keep this flag enabled. |  |

Preprocessing Properties#

| Parameter | Description | Default Value |
|---|---|---|
|  | Height of preprocessed images |  |
|  | Width of preprocessed images |  |
|  | Dictionary of image augmentation parameters | See below |

Augmentation Configuration:

aug_configs:
  resize: 0.5
  resize_dims: [960, 540]
  crop: [0, 0, 960, 540] # [x, y, width, height]
  flip: False
  rotate: 0
  rotate_3d: 0
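The augmentation values appear to be related: a `resize` factor of 0.5 applied to a 1920x1080 stream yields the listed `resize_dims` of 960x540, and the `crop` rectangle covers that whole resized frame. The sketch below checks that relationship. The 1920x1080 source resolution, the interpretation of `resize_dims` as the scaled source size, and the `check_aug_configs` helper are all assumptions made for illustration, not documented behavior of the plugin.

```python
# Values copied from the augmentation configuration above.
aug_configs = {
    "resize": 0.5,
    "resize_dims": [960, 540],
    "crop": [0, 0, 960, 540],  # [x, y, width, height]
    "flip": False,
    "rotate": 0,
    "rotate_3d": 0,
}


def check_aug_configs(cfg, source_width, source_height):
    """Return a list of human-readable problems (empty if none were found).

    Assumes resize_dims should equal the source resolution scaled by resize,
    and that the crop rectangle must fit inside resize_dims.
    """
    problems = []
    expected = [round(source_width * cfg["resize"]),
                round(source_height * cfg["resize"])]
    if list(cfg["resize_dims"]) != expected:
        problems.append(f"resize_dims {cfg['resize_dims']} != expected {expected}")
    x, y, w, h = cfg["crop"]
    resized_w, resized_h = cfg["resize_dims"]
    if x < 0 or y < 0 or x + w > resized_w or y + h > resized_h:
        problems.append(f"crop {cfg['crop']} does not fit in {cfg['resize_dims']}")
    return problems


# Assumed 1920x1080 input streams.
problems = check_aug_configs(aug_configs, 1920, 1080)
print(problems or "augmentation settings are self-consistent")
```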

Instance Bank Properties#

| Parameter | Description | Default Value |
|---|---|---|
|  | Number of anchors |  |
|  | Embedding dimensions |  |
|  | Path to anchor file |  |
|  | Anchor handler implementation |  |
|  | Number of temporal instances to track |  |
|  | Default time interval for temporal anchor projection |  |
|  | Confidence decay rate for temporal tracking |  |
|  | Enable anchor gradients |  |
|  | Enable feature gradients |  |
|  | Maximum time interval to maintain object identity |  |
|  | Re-identification dimensions |  |

Decoder Properties#

| Parameter | Description | Default Value |
|---|---|---|
|  | Maximum number of bounding boxes to output |  |
|  | Score threshold for decoding output |  |
|  | Number of threads for PyTorch |  |

Debugging Properties#

| Parameter | Description | Default Value |
|---|---|---|
|  | Logging level: info (all messages), warn (warnings & errors), error (errors only) |  |
|  | Display partial batch information |  |
|  | Whether to display tensor information |  |
|  | Whether to display bboxlist information |  |
|  | Maximum number of objects to display in bboxlist |  |
|  | Enable/disable frame dumping for debugging |  |
|  | Number of frames to dump from each batch |  |
|  | Whether to perform postprocessing (instance bank & decoder) on GPU |  |
|  | TensorRT logger severity level: 0=INTERNAL_ERROR, 1=ERROR, 2=WARNING, 3=INFO, 4=VERBOSE, 5=Show all messages |  |
|  | Enable TensorRT layer-wise profiler for performance analysis |  |

DeepStream Configuration File#

The DeepStream main configuration file (ds-main-config.txt) builds on the DeepStream test5 application configuration and provides essential settings for the overall pipeline. This file controls various aspects of the application, including source configuration, stream multiplexing, message broker settings, and visualization parameters.

Key Configuration Sections#

| Section | Purpose |
|---|---|
|  | Controls performance measurement settings and global application parameters |
|  | Defines input sources (RTSP streams), sensor IDs, and source management settings |
|  | Configures source attributes like latency handling and reconnection parameters |
|  | Sets stream multiplexer parameters for batch processing and timestamp handling |
|  | Configures various output sinks (visualization, messaging, file output) |
|  | Links to the preprocessing configuration file for input transformation |
|  | Specifies the custom video template plugin configuration for Sparse4D |

For a complete understanding of all configuration options, refer to the DeepStream SDK Documentation.

Preprocess Plugin Configuration File#

The gst-nvdspreprocess plugin performs all preprocessing operations such as resizing, scaling, cropping, format conversion, and normalization on incoming video frames before feeding them to the neural network. These preprocessing operations prepare the input data according to the specific requirements of the Sparse4D model. You can configure all these preprocessing operations via the preprocess configuration file ds-mtmc-preprocess-config.txt.

For detailed information about the preprocessing configuration parameters and options, refer to the DeepStream Preprocessing Plugin Documentation.

Custom Video Template Plugin Configuration File#

The ds-mtmc-videotemplate_custom_lib_config.txt file contains the configuration parameters for the custom video template plugin used by the Perception microservice:

gpu-id=0

customlib-name=/opt/nvidia/deepstream/deepstream-8.0/lib/libnvdsgst_sparse4d.so

The configuration specifies which GPU to use for processing and the path to the custom Sparse4D library that implements the 3D detection and tracking functionality.

Kafka Configuration File#

The ds-kafka-config.txt file contains the Kafka producer and consumer settings for the Perception microservice:

[message-broker]

partition-key = sensorId

The partition key setting ensures that messages from the same sensor go to the same Kafka partition, maintaining message ordering per camera stream.
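The ordering guarantee follows from key-based partitioning: a deterministic hash of the key selects the partition, so equal keys always land together. The sketch below illustrates the idea with `zlib.crc32` as a stand-in hash. The `pick_partition` helper, the partition count, and the sample messages are hypothetical; real Kafka clients apply their own partitioner, so actual partition numbers will differ.

```python
import zlib


def pick_partition(key: str, num_partitions: int) -> int:
    """Map a message key to a partition with a deterministic hash.

    crc32 is only a stand-in here; it is not a claim about which hash
    the broker client used by the microservice applies.
    """
    return zlib.crc32(key.encode("utf-8")) % num_partitions


# Hypothetical messages keyed by sensorId.
messages = [("Camera1", "frame 1"), ("Camera2", "frame 1"), ("Camera1", "frame 2")]
assignments = [(sensor_id, pick_partition(sensor_id, 3)) for sensor_id, _ in messages]

for sensor_id, partition in assignments:
    print(f"{sensor_id} -> partition {partition}")
```

Both `Camera1` messages map to the same partition, which is what preserves their relative order for consumers.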

Calibration File#

The calibration.json file defines camera calibration parameters essential for 3D spatial understanding. This file contains intrinsic and extrinsic camera parameters for each camera in the system. For detailed information about camera calibration parameters and setup, refer to the calibration documentation.

Labels File#

The labels.txt file contains the class labels that the model can detect along with class-wise confidence thresholds for filtering detections. Each entry in the file corresponds to a class that the model recognizes with its associated confidence threshold. Users can customize this file to match their specific detection requirements and fine-tune the detection sensitivity for each object class.

The file format uses a semicolon-separated list where each entry follows the pattern ClassName:ConfidenceThreshold:

Person:0.85;Fourier_GR1_T2_Humanoid:0.85;Agility_Digit_Humanoid:0.85;Nova_Carter:0.90;Transporter:0.90;Forklift:0.75;

These class labels represent common objects in industrial and warehouse environments that the Sparse4D model can detect and track in 3D space. Classes can be customized by training on domain-specific datasets using TAO Toolkit.
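A quick way to sanity-check an edited labels file is to parse it into a mapping from class name to threshold. The following is a minimal sketch of such a check based only on the format described above. The `parse_labels` helper is hypothetical and is not the plugin's own parser, and the requirement that thresholds fall between 0 and 1 is an assumption.

```python
def parse_labels(text: str) -> dict:
    """Parse 'ClassName:Threshold;ClassName:Threshold;...' into a dict."""
    thresholds = {}
    for entry in text.strip().split(";"):
        entry = entry.strip()
        if not entry:
            continue  # tolerate the trailing semicolon
        name, _, value = entry.rpartition(":")
        if not name:
            raise ValueError(f"missing ':' or class name in entry {entry!r}")
        threshold = float(value)
        # Assumption: confidence thresholds are probabilities in [0, 1].
        if not 0.0 <= threshold <= 1.0:
            raise ValueError(f"threshold out of range in entry {entry!r}")
        thresholds[name] = threshold
    return thresholds


sample = ("Person:0.85;Fourier_GR1_T2_Humanoid:0.85;Agility_Digit_Humanoid:0.85;"
          "Nova_Carter:0.90;Transporter:0.90;Forklift:0.75;")
labels = parse_labels(sample)
print(list(labels))
```

Because Python dictionaries preserve insertion order, `list(labels)` also shows the class order, which matters when the order must match the one used during training.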

Anchor File#

The /opt/nvidia/deepstream/deepstream-8.0/sources/sparse4d/_ov_kmeans900_v0.5.npy file contains the initial anchor parameters for the Sparse4D model.

Runtime Configuration Adjustments#

This section covers common configuration changes that users may need to make when adapting the Sparse4D pipeline for their specific deployment scenarios.

Modifying the Number of Input Streams#

When updating the number of input streams/cameras, you must update the batch size settings in multiple configuration files to ensure consistency. This step is critical for the proper operation of the Sparse4D pipeline.

For docker compose deployments, the related DeepStream configuration files are located in the following directory: deployments/warehouse/warehouse-3d-app/deepstream/configs/

Updating the Inference Configuration#

Modify the config.yaml file to update the number of sensors:

num_sensors: 4 # Change this to match number of camera streams

Updating the DeepStream Configuration#

Modify the ds-main-config.txt file to update source and batch size settings:

[source-list]

# Set num-source-bins to match the number of streams

num-source-bins=4

# Update the source URLs, sensor IDs and names accordingly

list=rtsp://server1:port/stream1;rtsp://server1:port/stream2;...

sensor-id-list=Camera1;Camera2;Camera3;...

sensor-name-list=Camera1;Camera2;Camera3;...

[streammux]

# Change batch-size to the number of streams

batch-size=4
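Because the stream count, URL list, and sensor lists must all agree, it can help to generate these entries from a single list of cameras. The sketch below renders only the keys shown above. The `render_source_sections` helper, the example URLs, and the camera names are hypothetical, and a real ds-main-config.txt contains additional keys and sections that this sketch does not produce.

```python
def render_source_sections(streams):
    """Render [source-list] and [streammux] entries for the given streams.

    streams: list of (rtsp_url, sensor_id, sensor_name) tuples.
    """
    count = len(streams)
    urls = ";".join(url for url, _, _ in streams)
    sensor_ids = ";".join(sensor_id for _, sensor_id, _ in streams)
    sensor_names = ";".join(name for _, _, name in streams)
    lines = [
        "[source-list]",
        f"num-source-bins={count}",
        f"list={urls}",
        f"sensor-id-list={sensor_ids}",
        f"sensor-name-list={sensor_names}",
        "",
        "[streammux]",
        f"batch-size={count}",
    ]
    return "\n".join(lines)


# Hypothetical two-camera setup.
cameras = [
    ("rtsp://example-host:8554/stream1", "Camera1", "Camera1"),
    ("rtsp://example-host:8554/stream2", "Camera2", "Camera2"),
]
rendered = render_source_sections(cameras)
print(rendered)
```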

Updating the Preprocess Configuration#

Modify the ds-mtmc-preprocess-config.txt file to update the network input shape:

# Update network-input-shape 1st value to match the number of streams

network-input-shape=4;3;540;960 # Change the first value to match number of camera streams
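Since the stream count is repeated in three files, a small consistency check can catch a missed update before deployment. The following sketch extracts the four values with regular expressions and compares them. The helper names are hypothetical, the inline strings stand in for the real file contents, and the parsing covers only the simple `key=value` and `key: value` forms shown in this section.

```python
import re


def _section(text: str, name: str) -> str:
    """Return the body of an INI-style [name] section, or '' if absent."""
    pattern = r"^\[" + re.escape(name) + r"\][ \t]*$(.*?)(?=^\[|\Z)"
    match = re.search(pattern, text, flags=re.MULTILINE | re.DOTALL)
    return match.group(1) if match else ""


def _leading_int(pattern: str, text: str, label: str) -> int:
    """Return the first integer captured by pattern, or raise if missing."""
    match = re.search(pattern, text, flags=re.MULTILINE)
    if match is None:
        raise ValueError(f"could not find {label}")
    return int(match.group(1))


def stream_counts(config_yaml: str, ds_main: str, preprocess: str) -> dict:
    """Collect every stream-count setting from the three config texts."""
    return {
        "num_sensors": _leading_int(
            r"^\s*num_sensors\s*:\s*(\d+)", config_yaml, "num_sensors"),
        "num-source-bins": _leading_int(
            r"^\s*num-source-bins\s*=\s*(\d+)",
            _section(ds_main, "source-list"), "num-source-bins"),
        "batch-size": _leading_int(
            r"^\s*batch-size\s*=\s*(\d+)",
            _section(ds_main, "streammux"), "batch-size"),
        "network-input-shape[0]": _leading_int(
            r"^\s*network-input-shape\s*=\s*(\d+)\s*;",
            preprocess, "network-input-shape"),
    }


# Stand-ins for config.yaml, ds-main-config.txt and ds-mtmc-preprocess-config.txt.
CONFIG_YAML = "num_sensors: 4  # Change this to match number of camera streams\n"
DS_MAIN = """[source-list]
# Set num-source-bins to match the number of streams
num-source-bins=4

[streammux]
# Change batch-size to the number of streams
batch-size=4
"""
PREPROCESS = "network-input-shape=4;3;540;960\n"

counts = stream_counts(CONFIG_YAML, DS_MAIN, PREPROCESS)
consistent = len(set(counts.values())) == 1
print(counts, "consistent" if consistent else "MISMATCH")
```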

Integrating a Sparse4D Model Checkpoint#

The Sparse4D plugin supports model swapping, allowing you to use different Sparse4D models based on your specific use case.

Model Compatibility Requirements#

Ensure your new model meets these requirements:

- Uses the same input tensor names and shapes as the plugin expects
- Has a compatible output format with the post-processing logic
- Follows the Sparse4D architecture pattern for 3D object detection

Updating Sparse4D Configuration#

1. Mount the model in docker compose & modify the onnx_file config in config.yaml to point to your new model:

# Mount your new model file in docker compose

volumes:

- $MDX_DATA_DIR/deepstream/models/your_new_model.onnx:/opt/nvidia/deepstream/deepstream-8.0/sources/sparse4d/your_new_model.onnx

# Update model file paths

onnx_file: "/opt/nvidia/deepstream/deepstream-8.0/sources/sparse4d/your_new_model.onnx"

# Force TensorRT engine rebuild for the new model

force_engine_rebuild: True

The Perception microservice will automatically build the TensorRT engine for the new model based on the above configuration changes.

2. Mount your new labels file in docker compose & modify the labels_file config in config.yaml to update the class labels.

Your new labels should be in the same order as your model was trained on.

# Mount your new labels file in docker compose: deployments/warehouse/warehouse-3d-app/warehouse-3d-app.yml

volumes:

- $MDX_SAMPLE_APPS_DIR/warehouse-3d-app/deepstream/label/your_new_labels.txt:/opt/nvidia/deepstream/deepstream-8.0/sources/sparse4d/your_new_labels.txt

# Update the labels file path

labels_file: "/opt/nvidia/deepstream/deepstream-8.0/sources/sparse4d/your_new_labels.txt"

# Update the class labels - Example ``your_new_labels.txt``

Person:0.85;Fourier_GR1_T2_Humanoid:0.85;Agility_Digit_Humanoid:0.85;Nova_Carter:0.90;Transporter:0.90;Forklift:0.75;

3. Mount your new anchor file in docker compose & modify the anchor config in config.yaml to reflect the correct initialized anchors.

Since model training & inference depend on the initialized anchor .npy file, you must use the matching anchor file for accurate detection & tracking.

If you finetune a model on a new scene or set of classes, you should use the new anchor file obtained from the TAO finetuning process.

# Mount your new anchor file in docker compose: deployments/warehouse/warehouse-3d-app/warehouse-3d-app.yml

volumes:

- $MDX_DATA_DIR/deepstream/anchor/your_new_anchors.npy:/opt/nvidia/deepstream/deepstream-8.0/sources/sparse4d/your_new_anchors.npy

# Update the anchor file path

anchor: "/opt/nvidia/deepstream/deepstream-8.0/sources/sparse4d/your_new_anchors.npy"

If the resolution is changed for the new model, set the NETWORK_WIDTH and NETWORK_HEIGHT environment variables to match the new model’s input resolution.

The sparse4d_setup.sh script automatically handles updating the required configuration parameters in different config files (config.yaml and ds-mtmc-preprocess-config.txt) based on these values.

# Example: Set environment variables for a model with 960x540 input resolution

export NETWORK_WIDTH=960

export NETWORK_HEIGHT=540
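To show how these two variables relate to the preprocess setting shown earlier, the sketch below derives a `network-input-shape` line from them. It assumes the shape is ordered as batch;channels;height;width with three channels, which matches the `4;3;540;960` example for a 960x540 model. The `network_input_shape` helper is hypothetical and is not the contents of sparse4d_setup.sh.

```python
import os


def network_input_shape(num_streams: int, env=None) -> str:
    """Build a network-input-shape line from NETWORK_WIDTH / NETWORK_HEIGHT.

    Assumes the layout batch;channels;height;width with 3 color channels.
    """
    env = os.environ if env is None else env
    width = int(env["NETWORK_WIDTH"])
    height = int(env["NETWORK_HEIGHT"])
    return f"network-input-shape={num_streams};3;{height};{width}"


# Pass the values explicitly here; omit env to read the real environment.
line = network_input_shape(4, {"NETWORK_WIDTH": "960", "NETWORK_HEIGHT": "540"})
print(line)
```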