2D Single Camera Detection and Tracking (RT-DETR)#

The Real Time Video Intelligence CV Microservice leverages NVIDIA DeepStream SDK to generate metadata for each stream that downstream microservices can use to generate spatial metrics and alerts.

The microservice features metropolis-perception-app, a DeepStream pipeline that builds on the built-in deepstream-test5 app in the DeepStream SDK. This perception app provides a complete application that takes streaming video inputs, decodes the incoming streams, performs inference and tracking, and sends the metadata to other microservices using the defined Protobuf schema.

The application features a modular architecture that integrates preprocessing plugins and RT-DETR (Real-Time Detection Transformer), specifically designed for 2D single-camera object detection and tracking in warehouse environments.

RT-DETR is an advanced real-time detection transformer that generates precise 2D bounding boxes for diverse objects including people, humanoid robots, autonomous vehicles, and warehouse equipment. The model features an EfficientViT/L2 backbone, pretrained on warehouse scene datasets for accurate 2D object detection in industrial environments. Refer to the TAO RT-DETR Finetuning page for more details on the model architecture and training process.

The pipeline ingests single-camera video streams, performs real-time 2D object detection, and uses the DeepStream tracker to maintain object identity across frames. Detection results include 2D bounding boxes, class labels, confidence scores, and instance IDs, which downstream microservices can use for tracking and analytics.

For detailed information on all components, APIs, and customization options, refer to the Object Detection and Tracking page.

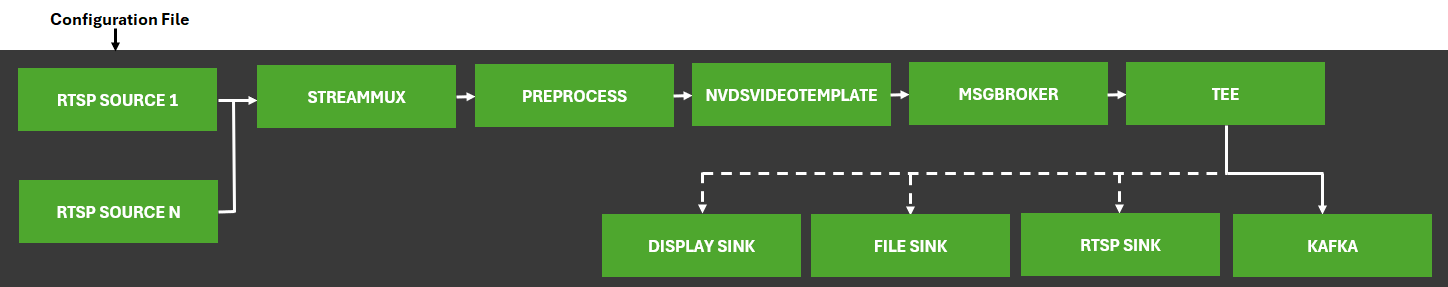

The diagram below shows the perception pipeline used in the microservice:

Configurations#

The Perception microservice requires several configuration files that control various aspects of the 2D single-camera detection and tracking system. These files allow users to customize the system’s behavior according to their specific requirements.

Inference Configuration File#

The main configuration file for RT-DETR, ds-ppl-analytics-pgie-config.yml, handles properties related to model inference and controls the core functionality of the 2D detection system. This YAML configuration file contains two main sections: property and class-attrs-all.

Model and Inference Properties#

| Parameter | Description | Default Value |
|---|---|---|
| onnx-file | Path to the ONNX model file | |
| model-engine-file | Path to the TensorRT engine file | |
| labelfile-path | Path to the object class labels file | |
| | GPU ID to use for inference | |
| | Precision mode (0=FP32, 1=INT8, 2=FP16) | |
| num-detected-classes | Number of object classes the model can detect | |
| | Inference interval (process every Nth frame) | |
| | Unique ID for the inference engine | |
| | Type of detection network (0=Detector) | |
| | Clustering algorithm (1=DBSCAN, 2=NMS, 3=DBSCAN+NMS, 4=None) | |
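The numeric codes in this table are easy to misread during a configuration review. The following minimal sketch maps the precision and clustering codes listed above to readable names. The dictionaries and the `describe_mode` function are hypothetical helpers for illustration and are not part of DeepStream.

```python
# Code-to-name mappings copied from the table descriptions above.
PRECISION_MODES = {0: "FP32", 1: "INT8", 2: "FP16"}
CLUSTER_MODES = {1: "DBSCAN", 2: "NMS", 3: "DBSCAN+NMS", 4: "None"}


def describe_mode(code: int, table: dict[int, str]) -> str:
    """Return the readable name for a numeric code, or raise on unknown codes."""
    if code not in table:
        raise ValueError(f"unsupported code {code}; expected one of {sorted(table)}")
    return table[code]
```

For example, `describe_mode(2, PRECISION_MODES)` returns `"FP16"`, and an unlisted code raises a `ValueError` instead of passing silently.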

Input/Output Properties#

| Parameter | Description | Default Value |
|---|---|---|
| | Scaling factor for preprocessing (1/255) | |
| | Input color format (0=RGB, 1=BGR) | |
| infer-dims | Input dimensions (C;H;W) | |
| | Maintain aspect ratio during preprocessing | |
| | Mean subtraction offsets for preprocessing | |
| | Enable output tensor metadata | |
| output-blob-names | Names of output tensors from the model | |
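As a worked illustration of the scaling factor and mean offsets, the sketch below applies the normalization that the DeepStream Gst-nvinfer documentation describes, y = scale * (x - mean), to a single RGB pixel. The zero offsets are an assumption made for illustration, since the default values are not shown here, and `normalize_pixel` is a hypothetical helper name.

```python
def normalize_pixel(pixel, scale=1.0 / 255.0, offsets=(0.0, 0.0, 0.0)):
    """Apply y = scale * (x - offset) to each channel of one pixel."""
    return tuple(scale * (value - offset) for value, offset in zip(pixel, offsets))


if __name__ == "__main__":
    # With zero offsets, 8-bit channel values are mapped into the range [0, 1].
    print(normalize_pixel((255, 128, 0)))
```

With a scale of 1/255 and zero offsets, the 8-bit value 255 maps to approximately 1.0 and 0 maps to 0.0.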

Detection and Filtering Properties#

| Parameter | Description | Default Value |
|---|---|---|
| pre-cluster-threshold | Detection confidence threshold | |
| topk | Maximum number of top detections to keep per class | |
| | Custom bounding box parsing function | |
| | Path to custom parsing library | |
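To make the effect of the confidence threshold and the per-class limit concrete, the following sketch filters a list of detections in plain Python. It illustrates the semantics only and is not DeepStream's implementation. The `(class_id, score, box)` tuple layout is hypothetical, the `>=` comparison at the threshold boundary is an assumption, and the values 0.5 and 20 are taken from the example configuration later on this page.

```python
from collections import defaultdict


def filter_detections(detections, threshold=0.5, topk=20):
    """Keep detections scoring at least `threshold`, at most `topk` per class.

    Each detection is a (class_id, score, box) tuple; this layout is hypothetical.
    """
    by_class = defaultdict(list)
    for det in detections:
        class_id, score, _box = det
        if score >= threshold:
            by_class[class_id].append(det)

    kept = []
    for class_id in sorted(by_class):
        # Highest-confidence detections first, then truncate to topk.
        ranked = sorted(by_class[class_id], key=lambda d: d[1], reverse=True)
        kept.extend(ranked[:topk])
    return kept
```

Raising the threshold trades recall for fewer false positives, while lowering the per-class limit caps the number of objects reported per class in crowded scenes.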

DeepStream Configuration File#

The DeepStream main configuration file (ds-main-config.txt) builds on the DeepStream test5 application configuration and provides essential settings for the overall pipeline. This file controls various aspects of the application, including source configuration, stream multiplexing, message broker settings, and visualization parameters.

Key Configuration Sections#

| Section | Purpose |
|---|---|
| | Controls performance measurement settings and global application parameters |
| [source-list] | Defines input sources (RTSP streams), sensor IDs, and source management settings |
| | Configures source attributes like latency handling and reconnection parameters |
| [streammux] | Sets stream multiplexer parameters for batch processing and timestamp handling |
| | Configures various output sinks (visualization, messaging, file output) |
| [primary-gie] | Specifies the RT-DETR inference engine configuration |
| [tracker] | Configures the object tracking component for maintaining object IDs across frames |

For a complete understanding of all configuration options, refer to the DeepStream SDK Documentation.

Tracker Configuration File#

The tracker configuration files configure the object tracking component that maintains object identity across frames. The tracker configuration consists of two files:

- Tracker plugin configuration in ds-main-config.txt under the [tracker] section
- Low-level tracker configuration in ds-nvdcf-accuracy-tracker-config.yml

RT-DETR performs detection, and the NvDCF (NVIDIA Discriminative Correlation Filter) tracker associates detections across time to generate consistent object IDs.

For detailed information about the tracker configuration parameters and options, refer to the DeepStream Tracker Plugin Documentation.

Tracker Plugin Configuration (in ds-main-config.txt)

| Parameter | Description | Default Value |
|---|---|---|
| | Enable or disable tracker | |
| | Frame width at which tracking is performed (must be multiple of 32) | |
| | Frame height at which tracking is performed (must be multiple of 32) | |
| | Low-level tracker library | |
| | Low-level NvDCF tracker configuration file | |
| | GPU ID to use for tracking | |
| | Enable batch processing for multiple streams | |
| | Enable past frame tracking for better accuracy | |
| | Display tracking ID in visualizations | |
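Because the tracker width and height must be multiples of 32, it can help to round a candidate resolution before writing it into the configuration. The helper below is a minimal sketch, and `round_up_to_multiple` is a hypothetical name rather than a DeepStream utility.

```python
def round_up_to_multiple(value: int, multiple: int = 32) -> int:
    """Round `value` up to the nearest multiple of `multiple`."""
    if value <= 0 or multiple <= 0:
        raise ValueError("value and multiple must be positive")
    return ((value + multiple - 1) // multiple) * multiple
```

For example, a height of 540 rounds up to 544, while 960 is already a multiple of 32 and stays unchanged.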

Low-Level Tracker Configuration (ds-nvdcf-accuracy-tracker-config.yml)

The NvDCF tracker configuration file contains advanced parameters for fine-tuning tracker behavior. Key configuration sections include:

- BaseConfig: Basic tracker confidence thresholds
- TargetManagement: Object lifecycle management (max targets, probation age, termination)
- TrajectoryManagement: Track association and re-identification settings
- DataAssociator: Data association algorithm parameters (IOU, visual similarity)
- StateEstimator: Kalman filter parameters for motion prediction
- VisualTracker: Visual feature extraction settings (HOG, color features)
- ReID: Re-identification network configuration (optional)
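To give a feel for the IOU term used in data association, the sketch below computes intersection over union for two boxes and pairs tracks with detections greedily. This is a deliberate simplification and not the NvDCF algorithm, which also uses visual similarity and state estimation. The `(x1, y1, x2, y2)` box layout, the `match_greedy` function, and the `min_iou` value of 0.3 are assumptions for illustration.

```python
def iou(box_a, box_b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    ix1, iy1 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix2, iy2 = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def match_greedy(tracks, detections, min_iou=0.3):
    """Greedily pair each track ID with its best unclaimed detection index."""
    pairs, used = {}, set()
    for track_id, track_box in tracks.items():
        best_idx, best_score = None, min_iou
        for idx, det_box in enumerate(detections):
            score = iou(track_box, det_box)
            if idx not in used and score >= best_score:
                best_idx, best_score = idx, score
        if best_idx is not None:
            pairs[track_id] = best_idx
            used.add(best_idx)
    return pairs
```

A track whose best overlap falls below `min_iou` is left unmatched, which is the situation where a production tracker would fall back on motion prediction or appearance features.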

For most use cases, the default NvDCF accuracy configuration provides robust tracking without modification.

Kafka Configuration File#

The ds-kafka-config.txt file contains the Kafka producer and consumer settings for the Perception microservice.

[message-broker]

partition-key = sensorId

The partition key setting ensures that messages from the same sensor go to the same Kafka partition, maintaining message ordering per camera stream.
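The ordering guarantee follows from keyed partitioning: a deterministic hash of the key selects the partition, so equal keys always land together. The sketch below demonstrates the idea with CRC32 as a stand-in hash. Kafka clients use their own partitioner implementations, so the actual partition numbers will differ, and `partition_for` is a hypothetical helper.

```python
import zlib


def partition_for(key: str, num_partitions: int) -> int:
    """Map a key to a partition index with a deterministic hash."""
    if num_partitions <= 0:
        raise ValueError("num_partitions must be positive")
    return zlib.crc32(key.encode("utf-8")) % num_partitions


if __name__ == "__main__":
    # Every message keyed by the same sensor ID maps to the same partition.
    for sensor_id in ("Camera1", "Camera2", "Camera1"):
        print(sensor_id, partition_for(sensor_id, 4))
```

Because Kafka preserves order only within a partition, keying by sensor ID keeps each camera's messages in sequence while still spreading different cameras across partitions.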

Labels File#

The ds-detector-labels.txt file contains the class labels that the RT-DETR model can detect. Each line in the file corresponds to a class that the model recognizes. Users can customize this file to match their specific detection requirements.

The file format uses a newline-separated list of object class names:

Person

Agility_Digit_Humanoid

Fourier_GR1_T2_Humanoid

Nova_Carter

Transporter

Forklift

Pallet

These class labels represent the 7 object categories that the RT-DETR warehouse model can detect:

Person: Human workers in warehouse environments

Agility_Digit_Humanoid: Agility Robotics Digit humanoid robot

Fourier_GR1_T2_Humanoid: Fourier Intelligence GR1_T2 humanoid robot

Nova_Carter: NVIDIA Nova Carter autonomous mobile robot

Transporter: Warehouse transport vehicles

Forklift: Industrial forklifts

Pallet: Warehouse pallets and cargo containers
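A labels file in this format can be turned into a class ID lookup with a few lines of Python. The sketch below assumes that class IDs are zero-based and follow line order, which is the usual convention for detector label files but is not stated explicitly here. `parse_labels` is a hypothetical helper.

```python
def parse_labels(text: str) -> dict[int, str]:
    """Map zero-based class IDs to label names, skipping blank lines."""
    names = [line.strip() for line in text.splitlines() if line.strip()]
    return dict(enumerate(names))


if __name__ == "__main__":
    sample = "Person\nAgility_Digit_Humanoid\nFourier_GR1_T2_Humanoid\n"
    for class_id, name in parse_labels(sample).items():
        print(class_id, name)
```

Under that assumption, `Person` corresponds to class ID 0 and `Pallet` to class ID 6 in the default file.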

Runtime Configuration Adjustments#

This section covers common configuration changes that users may need to make when adapting the RT-DETR pipeline for their specific deployment scenarios.

Modifying the Number of Input Streams#

When updating the number of input streams/cameras, you must update the batch size settings in the configuration files to ensure consistency. RT-DETR processes each camera stream independently, making it straightforward to scale to multiple cameras.

For Docker Compose deployments, the related DeepStream configuration files are in the following directory: deployments/warehouse/warehouse-2d-app/deepstream/configs/cnn-models/

Update DeepStream Configuration#

Modify the ds-main-config.txt file to update source and batch size settings:

[source-list]

# Set max-batch-size to the maximum number of streams you plan to use

max-batch-size=4

# Update the source URLs, sensor IDs and names accordingly

# Note: num-source-bins=0 enables dynamic source management via HTTP API

num-source-bins=0

use-nvmultiurisrcbin=1

http-ip=localhost

http-port=9000

# Example static configuration (uncomment if not using dynamic sources):

# num-source-bins=4

# list=rtsp://server1:port/stream1;rtsp://server1:port/stream2;...

# sensor-id-list=Camera1;Camera2;Camera3;Camera4

# sensor-name-list=Camera1;Camera2;Camera3;Camera4

[streammux]

# Change batch-size to the number of streams

batch-size=4

[primary-gie]

# Update batch-size to match streammux batch-size

batch-size=4

[tiled-display]

# If tiled display is enabled, update the rows and columns

# Set enable to 1 to turn on tiled display

enable=0

# Adjust rows and columns based on your stream count

rows=2

columns=2

Note

The default configuration uses dynamic source management (use-nvmultiurisrcbin=1), which allows adding/removing camera streams at runtime via HTTP API without restarting the application. The max-batch-size parameter sets the upper limit for concurrent streams.
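Since the batch size appears in three sections, a small consistency check can catch a missed update before the pipeline starts. The sketch below parses the configuration text with Python's standard `configparser` and compares the three values. Treating all three as required to be equal is an assumption based on the example above, and `check_batch_sizes` is a hypothetical helper, not a DeepStream tool.

```python
import configparser


def check_batch_sizes(config_text: str) -> dict[str, int]:
    """Return the batch-size settings and raise ValueError if they disagree."""
    parser = configparser.ConfigParser(interpolation=None, strict=False)
    parser.read_string(config_text)
    sizes = {
        "source-list/max-batch-size": parser.getint("source-list", "max-batch-size"),
        "streammux/batch-size": parser.getint("streammux", "batch-size"),
        "primary-gie/batch-size": parser.getint("primary-gie", "batch-size"),
    }
    if len(set(sizes.values())) != 1:
        raise ValueError(f"batch sizes differ: {sizes}")
    return sizes
```

To check a deployed file, read its contents with `open(path).read()` and pass the text to `check_batch_sizes`. A mismatch raises a `ValueError` that lists all three values.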

Integrating a New RT-DETR Model#

The RT-DETR pipeline supports model swapping, allowing you to use different RT-DETR models based on your specific use case, such as models fine-tuned on custom datasets via TAO Toolkit.

Model Compatibility Requirements#

Ensure your new model meets these requirements:

- Exported as ONNX format from TAO Toolkit with serialize_nvdsinfer: true
- Uses the same input dimensions (544x960 or compatible resolution)
- Has compatible output tensors (pred_boxes and pred_logits)
- Follows the RT-DETR architecture pattern for 2D object detection
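To illustrate why the output tensor layout matters, the sketch below decodes one query from pred_boxes and pred_logits into a pixel-space box and a class score. It assumes the common DETR-style conventions of normalized center-format boxes (cx, cy, w, h) and per-class sigmoid scores. Those conventions are assumptions here rather than something this page specifies, and `decode_query` is a hypothetical function, not the custom parser shipped with the microservice.

```python
import math


def decode_query(box_cxcywh, class_logits, net_width=960, net_height=544):
    """Convert one query's outputs to (class_id, score, (x1, y1, x2, y2)).

    Assumes normalized center-format boxes and per-class sigmoid scores.
    """
    cx, cy, w, h = box_cxcywh
    scores = [1.0 / (1.0 + math.exp(-logit)) for logit in class_logits]
    class_id = max(range(len(scores)), key=scores.__getitem__)
    box = (
        (cx - w / 2.0) * net_width,
        (cy - h / 2.0) * net_height,
        (cx + w / 2.0) * net_width,
        (cy + h / 2.0) * net_height,
    )
    return class_id, scores[class_id], box
```

A model whose outputs use different names, shapes, or box conventions would need a matching change in the parsing step, which is why compatible output tensors are listed as a requirement.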

Update RT-DETR Configuration#

1. Mount the model in Docker Compose and modify ds-ppl-analytics-pgie-config.yml to point to your new model:

# Mount your new model file in docker compose: deployments/warehouse/warehouse-2d-app/warehouse-2d-app.yml

volumes:

- $MDX_DATA_DIR/deepstream/models/your_new_rtdetr_model.onnx:/opt/storage/your_new_rtdetr_model.onnx

# Update ds-ppl-analytics-pgie-config.yml

property:

onnx-file: /opt/storage/your_new_rtdetr_model.onnx

model-engine-file: /opt/storage/your_new_rtdetr_model.onnx_b4_gpu0_fp16.engine

The Perception microservice will automatically build the TensorRT engine for the new model on first run.

2. Mount your new labels file in Docker Compose and set labelfile-path in ds-ppl-analytics-pgie-config.yml to point to it.

List the labels in the same order that was used when training the model.

# Mount your new labels file in docker compose: deployments/warehouse/warehouse-2d-app/warehouse-2d-app.yml

volumes:

- $MDX_SAMPLE_APPS_DIR/warehouse-2d-app/deepstream/configs/cnn-models/your_new_labels.txt:/opt/configs/your_new_labels.txt

# Update ds-ppl-analytics-pgie-config.yml

property:

labelfile-path: your_new_labels.txt

num-detected-classes: 7 # Update based on your number of classes

# Update the class labels. Example your_new_labels.txt:

Person

Agility_Digit_Humanoid

Fourier_GR1_T2_Humanoid

Nova_Carter

Transporter

Forklift

Pallet

3. Update the input dimensions if your model uses a different resolution:

# Update ds-ppl-analytics-pgie-config.yml

property:

infer-dims: 3;544;960 # Update C;H;W based on your model's input size

4. Adjust the detection threshold if needed:

# Update ds-ppl-analytics-pgie-config.yml

class-attrs-all:

pre-cluster-threshold: 0.5 # Adjust based on your model's performance

topk: 20 # Maximum detections to keep per class

5. Update the output blob names if your model uses different tensor names:

# Update ds-ppl-analytics-pgie-config.yml

property:

output-blob-names: pred_boxes;pred_logits # TAO RT-DETR default output names

After making these changes, restart the Perception microservice for the changes to take effect. For detailed instructions on fine-tuning RT-DETR models via TAO Toolkit, refer to the TAO RT-DETR Finetuning page.
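Before restarting, a quick sanity check of the edited values can save a failed startup. The sketch below parses an infer-dims string and verifies that the number of labels matches num-detected-classes. Both functions are hypothetical helpers that take plain strings and integers, so they make no assumption about how the YAML file is loaded.

```python
def parse_infer_dims(value: str) -> tuple[int, int, int]:
    """Parse a 'C;H;W' string such as '3;544;960' into integers."""
    parts = [int(part) for part in value.split(";")]
    if len(parts) != 3:
        raise ValueError(f"expected C;H;W, got {value!r}")
    channels, height, width = parts
    return channels, height, width


def check_label_count(labels_text: str, num_detected_classes: int) -> None:
    """Raise ValueError if the labels file and num-detected-classes disagree."""
    labels = [line.strip() for line in labels_text.splitlines() if line.strip()]
    if len(labels) != num_detected_classes:
        raise ValueError(
            f"{len(labels)} labels found, but num-detected-classes is {num_detected_classes}"
        )
```

For the default configuration, `parse_infer_dims("3;544;960")` returns `(3, 544, 960)`, and `check_label_count` passes when the labels file has seven non-empty lines and num-detected-classes is 7.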