Quickstart#

This guide walks you step by step through setting up and starting the Warehouse Blueprint.

The Warehouse Blueprint is a video analytics solution that supports real-time object detection, tracking, and analytics. It offers two message broker options to accommodate different deployment scenarios:

- Kafka: High-throughput message broker optimized for datacenter deployments with robust persistence and scalability.
- Redis Streams: Lightweight message broker ideal for edge deployments with minimal memory footprint and low-latency requirements.

Choose the message broker based on your deployment environment: Kafka for centralized datacenter installations, or Redis for distributed edge locations where resources are constrained.

Components Overview#

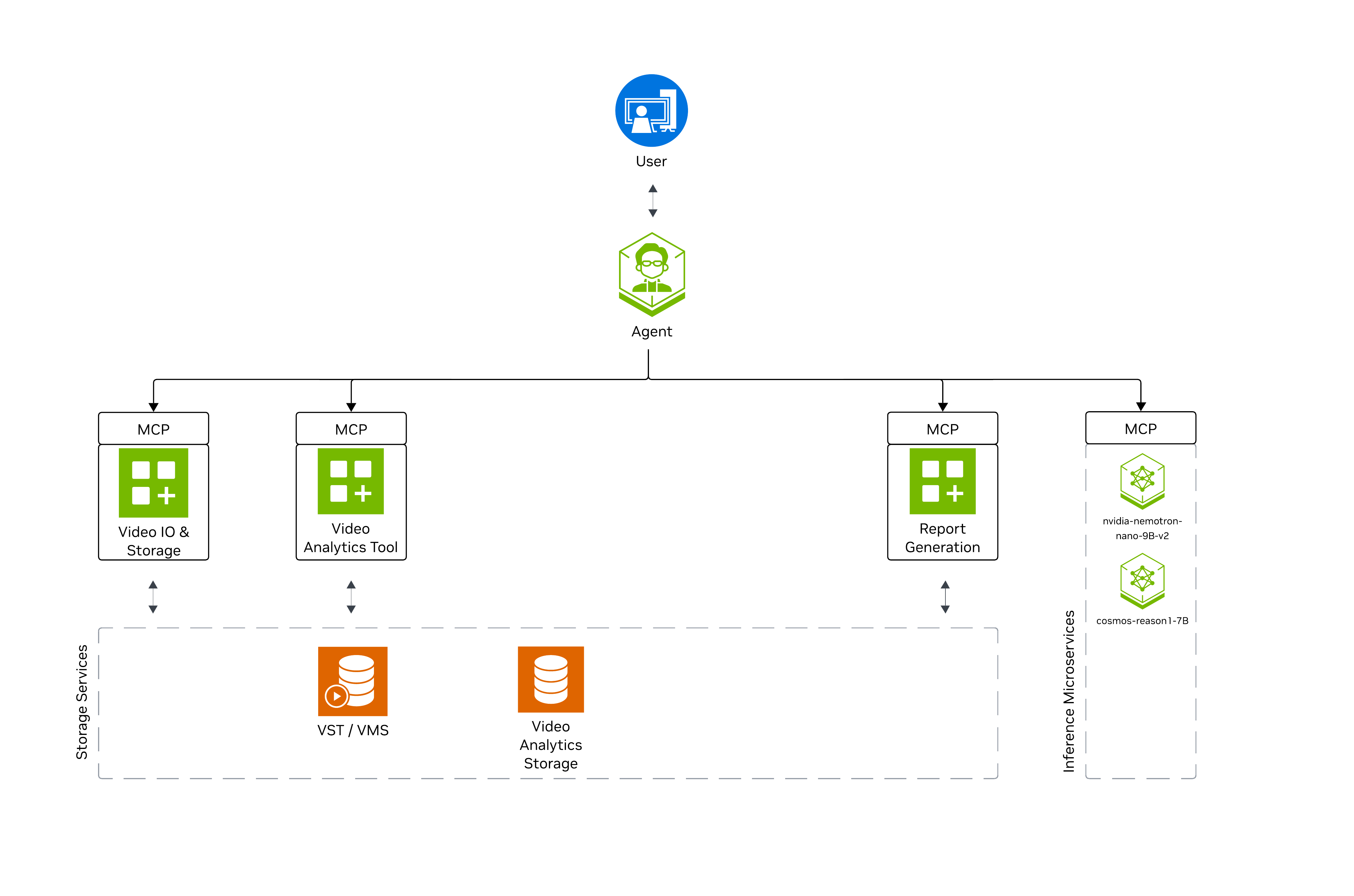

The high-level diagram above illustrates the components of the Warehouse Blueprint, which can be deployed as a 2D or 3D blueprint. The Agent communicates with the Blueprint’s data services through APIs.

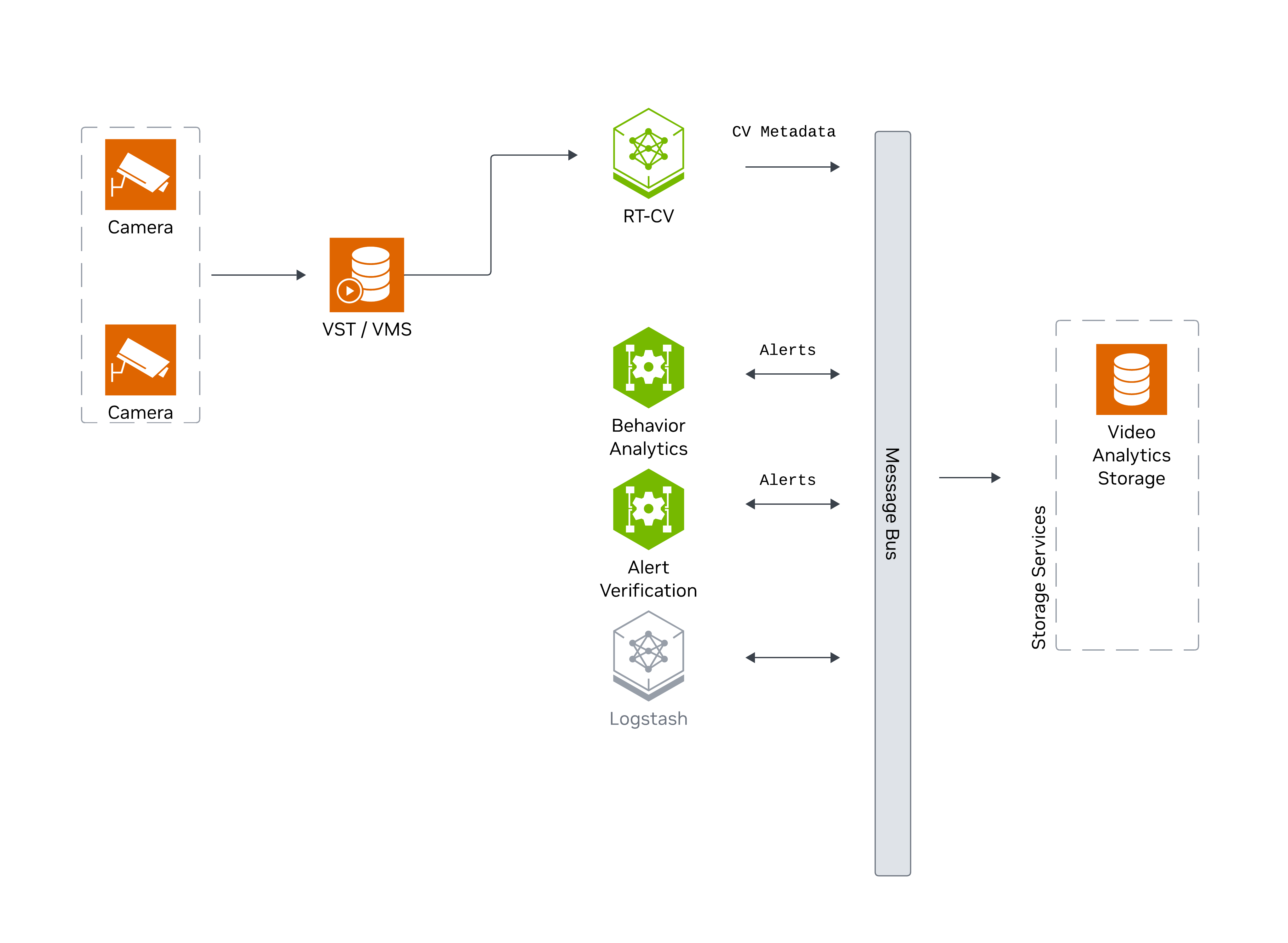

The following diagram shows the major components of the Warehouse Blueprint:

Camera streams are ingested by VST/VMS, processed by RT-CV (perception) and Behavior Analytics microservices, with CV metadata and alerts published to the Message Bus and persisted via Storage Services.

The following diagram shows how AI Agents interact with the Warehouse Blueprint:

The Agent orchestrates MCP servers for Video IO, Video Analytics, and Report Generation, leveraging Nemotron (LLM) and Cosmos-Reason (VLM) inference microservices.

Prerequisites#

Before you begin, ensure all of the prerequisites are met. See Prerequisites for more details.

Deployment Options#

The Warehouse Blueprint offers flexible deployment options with the following profiles:

- 2D Vision AI Profile - 2D detection and tracking (bp_wh_kafka, bp_wh_redis)
- 2D Vision AI with Agents Profile - 2D detection and tracking with VSS agent integration (bp_wh)
- 3D Vision AI Profile - 3D multi-camera detection, tracking, and analytics (bp_wh_kafka, bp_wh_redis)

| Profile Type | Features | Constraints | Supported GPUs |
|---|---|---|---|
| 2D Vision AI Profile | 2D single-camera detection, tracking, and analytics | Model trained on real-world data | RTX PRO 6000 BW, H100 NVL, H100 SXM HBM3, RTX A6000 ADA, L40S, L4, IGX-THOR, DGX-SPARK |
| 2D Vision AI with Agents Profile | 2D single-camera detection, tracking, analytics, and VSS agent integration | Model trained on real and synthetic data | RTX PRO 6000 BW, H100 NVL, H100 SXM HBM3, L40S (L40S only for remote LLM and VLM NIMs) |
| 3D Vision AI Profile | 3D multi-camera detection, tracking, and analytics | Model trained on synthetic data, recommended for simulated environments | RTX PRO 6000 BW, H100 NVL, H100 SXM HBM3, RTX A6000 ADA, L40S, L4, IGX-THOR, DGX-SPARK |

Supported GPU Hardware#

This section provides an overview of the hardware profiles supporting the various deployment options described in the previous section. Each profile is designed to meet specific use case performance and resource requirements, ensuring optimal operation of the blueprint.

The tables later in this section list the supported deployment options per GPU type for the 2D and 3D Warehouse Blueprint profiles.

Warehouse blueprints are optimized to work on a single supported GPU serving 4 real-time camera streams. When you choose to install the Agents, you have the following options:

- Download and locally run the required NVIDIA NIM services (requires additional GPU resources)
- Use a remote NVIDIA NIM endpoint or any OpenAI API compatible endpoint (no additional GPU required)

NVIDIA Certified GPU Servers and Workstations

| GPU Type | Number of streams | 2D Vision AI Profile (Number of GPUs) | 2D Vision AI with Agents Profile (Number of GPUs) |
|---|---|---|---|
| RTX PRO 6000 BW | 16 | 1 | 1 (remote LLM+VLM); 2 (local-shared LLM+VLM); 3 (local VLM+LLM, dedicated GPU each, default) |
| H100 (NVL, SXM HBM3) | 26 | 1 | 1 (remote LLM+VLM); 2 (local-shared LLM+VLM); 3 (local VLM+LLM, dedicated GPU each, default) |
| RTX A6000 ADA | 8 | 1 | 1 (remote LLM+VLM) |
| L40S | 12 | 1 | 1 (remote LLM+VLM) |
| L4 | 4 | 1 | 1 (remote LLM+VLM) |

Note

Recommended GPUs:

- 2D Vision AI Profile: RTX PRO 6000 BW, H100 NVL, H100 SXM HBM3, RTX A6000 ADA, L40S, L4
- 2D Vision AI with Agents Profile: RTX PRO 6000 BW, H100 NVL, H100 SXM HBM3, L40S

When you choose to install the agents, you have the following options:

- Download and locally run the required NVIDIA NIM services (requires additional GPU resources).
- Use L40S with remote LLM and VLM NIMs (no additional GPU required).
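The GPU counts in the 2D table above can be expressed as a small lookup, which may be handy in a provisioning script. This is only a sketch: the function name `agents_profile_gpus` and the NIM labels `remote`, `local-shared`, and `local-dedicated` are hypothetical names chosen for illustration, not values read by the blueprint.

```shell
# Hypothetical helper: print how many GPUs the 2D Vision AI with Agents Profile
# needs for a given GPU type and NIM placement, per the table above.
# Returns 1 when the combination is not listed in the table.
agents_profile_gpus() {
  gpu=$1
  nim=$2
  case "$gpu" in
    "RTX PRO 6000 BW"|"H100 NVL"|"H100 SXM HBM3")
      case "$nim" in
        remote) echo 1 ;;           # LLM and VLM served remotely
        local-shared) echo 2 ;;     # LLM and VLM share one extra GPU
        local-dedicated) echo 3 ;;  # LLM and VLM each get a GPU (default)
        *) return 1 ;;
      esac ;;
    "RTX A6000 ADA"|"L40S"|"L4")
      case "$nim" in
        remote) echo 1 ;;           # only remote NIMs are listed for these GPUs
        *) return 1 ;;
      esac ;;
    *) return 1 ;;
  esac
}

# Usage:
#   agents_profile_gpus "H100 NVL" local-shared
```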

| GPU Type | Number of Streams | FPS | 3D Vision AI Profile (Number of GPUs) |
|---|---|---|---|
| RTX PRO 6000 BW | 13 | 30 | 1 |
| H100 (NVL, SXM HBM3) | 12 | 30 | 1 |
| RTX A6000 ADA | 6 | 30 | 1 |
| L40S | 7 | 30 | 1 |
| L4 | 2 | 30 | 1 |
| IGX-THOR | 6 | 15 | 1 |
| DGX-SPARK | 5 | 15 | 1 |

Note

We recommend RTX PRO 6000 BW, H100 NVL, H100 SXM HBM3, RTX A6000 ADA, L40S, L4, IGX-THOR, DGX-SPARK GPUs for 3D Vision AI Profile.
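Before choosing a stream count for the 3D profile, it may help to compare it against the per-GPU limits in the table above. The sketch below encodes those limits; `max_streams_3d` and `fits_3d` are hypothetical helper names, and the GPU labels are the table labels rather than any value the blueprint itself reads.

```shell
# Hypothetical lookup: maximum 3D streams per GPU type, copied from the table above.
max_streams_3d() {
  case "$1" in
    "RTX PRO 6000 BW") echo 13 ;;
    "H100 NVL"|"H100 SXM HBM3") echo 12 ;;
    "RTX A6000 ADA") echo 6 ;;
    "L40S") echo 7 ;;
    "L4") echo 2 ;;
    "IGX-THOR") echo 6 ;;
    "DGX-SPARK") echo 5 ;;
    *) return 1 ;;
  esac
}

# Returns 0 if the requested stream count fits, 1 if it exceeds the limit,
# and 2 if the GPU type is not in the table.
fits_3d() {
  limit=$(max_streams_3d "$1") || return 2
  [ "$2" -le "$limit" ]
}

# Usage:
#   fits_3d "L40S" 4 && echo "4 streams fit on one L40S"
```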

Download Warehouse Artifacts#

This section guides you through the steps to download the Warehouse Blueprint artifacts.

Setup NGC Access#

```shell
# Set up NGC access (replace <NGC_CLI_API_KEY> with your own key)
export NGC_CLI_API_KEY=<NGC_CLI_API_KEY>
export NGC_CLI_ORG='nvidia'
```
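Before downloading, it can be useful to confirm that both variables are actually visible to the current shell. The following is a minimal sketch; `require_env` is a hypothetical helper and not part of the NGC CLI.

```shell
# Hypothetical helper: succeed only if every named environment variable is
# set to a non-empty value. Names of missing variables are printed to stderr.
require_env() {
  missing=0
  for name in "$@"; do
    # Look up the value of the variable whose name is stored in $name.
    eval "value=\${$name:-}"
    if [ -z "$value" ]; then
      echo "missing: $name" >&2
      missing=1
    fi
  done
  return "$missing"
}

# Usage (after the exports above):
#   require_env NGC_CLI_API_KEY NGC_CLI_ORG && echo "NGC access variables are set"
```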

Deployment Resources#

Refer to the Prerequisites section for the NGC CLI installation guide.

```shell
ngc \
  registry \
  resource \
  download-version \
  "nvidia/vss-warehouse/vss-warehouse-compose:3.0.0"

# OR manually download the tar file from NGC
# URL: https://catalog.ngc.nvidia.com/orgs/nvidia/teams/vss-warehouse/resources/vss-warehouse-compose?version=3.0.0

# Extract the package
cd vss-warehouse-compose_v3.0.0
tar -xvf deploy-warehouse-compose.tar.gz

# This is the path to the deployments directory. It is set in the warehouse/.env file as MDX_SAMPLE_APPS_DIR.
#MDX_SAMPLE_APPS_DIR="/path/to/deployments"
```
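If you prefer to script the edit to warehouse/.env rather than open it in an editor, something along these lines could work. It is a sketch under assumptions: `set_env_var` is a hypothetical helper, and it assumes the file uses plain `KEY="value"` lines as in the commented example above. A commented-out line such as `#MDX_SAMPLE_APPS_DIR=...` is left untouched and a new line is appended instead.

```shell
# Hypothetical helper: set KEY="VALUE" in an env file.
# Replaces every uncommented KEY=... line, or appends one if none exists.
set_env_var() {
  file=$1
  key=$2
  value=$3
  tmp="${file}.tmp.$$"
  awk -v k="$key" -v v="$value" '
    BEGIN { found = 0 }
    $0 ~ "^" k "=" { print k "=\"" v "\""; found = 1; next }
    { print }
    END { if (!found) print k "=\"" v "\"" }
  ' "$file" > "$tmp" && mv "$tmp" "$file"
}

# Usage (paths are placeholders):
#   set_env_var /path/to/deployments/warehouse/.env MDX_SAMPLE_APPS_DIR /path/to/deployments
```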

Warehouse App Data#

Warehouse App Data contains sample video datasets (real-world and synthetic), pre-trained TensorRT models (RT-DETR for 2D, Sparse4D for 3D, NvDCF tracker), camera calibration data, and configuration templates for deploying 2D and 3D warehouse profiles.

The package includes three video datasets (MP4 format, 1920x1080, 30 FPS):

- nv-warehouse-4cams (Real-world footage, 4 cameras, for 2D with Agents profile)
  - Camera Views: High-angle fixed cameras mounted above warehouse aisles capturing operational activities
  - Content: Storage aisles with tall metal racks holding palletized inventory, workers in safety vests (white and high-visibility yellow/green), cardboard boxes and shrink-wrapped pallets on shelves, forklift and pallet movement patterns
  - Duration: Approximately 10 minutes per camera (600 seconds)
- warehouse-loading-dock-3cams-synthetic (Omniverse-generated, 3 cameras, for 2D Kafka/Redis profiles)
  - Camera Views: Overhead cameras showing loading dock staging area from multiple angles
  - Content: Clean synthetic loading dock environment with loading bays, yellow-and-black striped safety zones marked on floor, cardboard boxes on pallets, forklift equipment
  - Duration: 4 minutes per camera (240 seconds)
- warehouse-4cams-20mx20m-synthetic (Omniverse-generated 3D, 4 cameras, 20x20m coverage, for 3D profiles)
  - Camera Views: Four overhead corner-mounted cameras providing overlapping coverage of 20mx20m warehouse floor space
  - Content: Organized 3D warehouse floor with numbered blue-outlined zones (1, 2, 3), palletized boxes arranged in designated areas, yellow forklifts, warehouse workers, yellow-and-black striped safety boundaries, blue safety barriers, equipment and inventory items
  - Duration: 5 minutes per camera (300 seconds)
  - Purpose: Multi-camera synchronized tracking for 3D spatial analytics and cross-camera object re-identification

Note

After extracting the package, set MDX_DATA_DIR in warehouse/.env to point to the extracted data directory.

```shell
ngc \
  registry \
  resource \
  download-version \
  "nvidia/vss-warehouse/vss-warehouse-app-data:3.0.0"

# OR manually download the tar file from NGC
# URL: https://catalog.ngc.nvidia.com/orgs/nvidia/teams/vss-warehouse/resources/vss-warehouse-app-data?version=3.0.0

# Extract the package
cd vss-warehouse-app-data_v3.0.0
tar -xvf vss-warehouse-app-data.tar.gz

# Set permissions
sudo chmod -R 777 /path/to/vss-warehouse-app-data

# This is the path to the data directory. It is set in the warehouse/.env file as MDX_DATA_DIR.
#MDX_DATA_DIR="/path/to/vss-warehouse-app-data"
```

Deploy Warehouse Blueprint#

Configure Environment settings#

This section explains the most commonly edited variables in deployments/warehouse/.env.

Deployment Selection#

Select the appropriate configuration based on your deployment profile:

| Profile | BP_PROFILE | SAMPLE_VIDEO_DATASET | NUM_STREAMS |
|---|---|---|---|
| 2D Vision AI Profile (MODE=2d) | | | 3 |
| 2D Vision AI with Agents Profile (MODE=2d) | | | 4 |
| 3D Vision AI Profile (MODE=3d) | | | 4 |

Hardware Profile Configuration#

Determine your HARDWARE_PROFILE by running nvidia-smi -L to identify your GPU model.

| GPU | HARDWARE_PROFILE Value |
|---|---|
| RTX PRO 6000 BW | |
| H100 (NVL, SXM HBM3) | |
| RTX A6000 ADA | |
| L40S | |
| L4 | |
| IGX-THOR | |
| DGX-SPARK | |
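To pick the matching row in the table above, you can reduce the `nvidia-smi -L` output to just the GPU model names. The sketch below assumes the usual one-line-per-GPU output of the form `GPU 0: <model> (UUID: ...)`; `gpu_models` is a hypothetical helper name.

```shell
# Hypothetical helper: read `nvidia-smi -L` output on stdin and print only
# the model name of each GPU, one per line. Lines in other formats are skipped.
gpu_models() {
  sed -n 's/^GPU [0-9][0-9]*: \(.*\) (UUID: .*)$/\1/p'
}

# Usage: list the distinct GPU models on this host with a count of each.
#   nvidia-smi -L | gpu_models | sort | uniq -c
```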

Deployment Paths and Services#

| Variable | Description |
|---|---|
| MDX_SAMPLE_APPS_DIR | (Required) Path to your deployments directory |
| MDX_DATA_DIR | (Required) Path to the extracted warehouse app data directory |
| HOST_IP | (Required) Host IP address of the machine where the blueprint is deployed |
| GOOGLE_MAPS_API_KEY | Required for map UI features. Applies to MODE="2d" only. Obtain from Google API key instructions |
| STREAM_TYPE | kafka or redis. Default: kafka. Use redis for bp_wh_redis profiles |
| PERCEPTION_TAG | Tag for RTVI CV container image. Use 3.0.0-<version> for x86_64/aarch64 (DGX-THOR), or 3.0.0-sbsa-<version> for aarch64 (DGX-SPARK) |
| NUM_STREAMS | Number of concurrent streams to process. Default: 4 |
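A quick pre-flight check of warehouse/.env can catch a missing required variable before the containers start. The sketch below is illustrative only: `env_value` and `check_env_file` are hypothetical helpers, they assume simple `KEY=value` or `KEY="value"` lines without trailing comments, and they treat an unset STREAM_TYPE as kafka, following the default listed in the table.

```shell
# Hypothetical helper: print the value of KEY from FILE, using the last
# uncommented KEY=... line and stripping one pair of surrounding quotes.
env_value() {
  sed -n "s/^$2=//p" "$1" | tail -n 1 | sed -e 's/^["'\'']//' -e 's/["'\'']$//'
}

# Hypothetical check: the three required variables must be non-empty and
# STREAM_TYPE, if present, must be kafka or redis.
check_env_file() {
  file=$1
  status=0
  for key in MDX_SAMPLE_APPS_DIR MDX_DATA_DIR HOST_IP; do
    if [ -z "$(env_value "$file" "$key")" ]; then
      echo "required variable not set: $key" >&2
      status=1
    fi
  done
  stream_type=$(env_value "$file" STREAM_TYPE)
  case "${stream_type:-kafka}" in
    kafka|redis) ;;
    *) echo "STREAM_TYPE must be kafka or redis, got: $stream_type" >&2
       status=1 ;;
  esac
  return "$status"
}

# Usage (path is a placeholder):
#   check_env_file /path/to/deployments/warehouse/.env && echo ".env looks complete"
```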

NIM Deployment Modes#

The following modes apply to the LLM_MODE and VLM_MODE environment variables.

| NIM | Required Settings | Description |
|---|---|---|
| | MODE=2d, BP_PROFILE=bp_wh, NGC_CLI_API_KEY | Download and run NIMs locally (requires additional GPU resources). Runs the LLM and VLM on different GPU devices. |
| | MODE=2d, BP_PROFILE=bp_wh, NGC_CLI_API_KEY | Download and run NIMs locally (requires additional GPU resources). Runs the LLM and VLM on a single GPU device. |
| | MODE=2d, BP_PROFILE=bp_wh, LLM_BASE_URL, VLM_BASE_URL, NVIDIA_API_KEY | Use remote NIM endpoints (no additional GPU resources required). Obtain a valid NVIDIA_API_KEY via https://build.nvidia.com/explore/discover or via the NVIDIA Developer Program website. |
| | MODE=2d/3d, BP_PROFILE=bp_wh_kafka/bp_wh_redis | Deploy the 2D or 3D Vision AI Profile without agents. NIM is not required for the bp_wh_kafka and bp_wh_redis profiles. |

Model Configuration#

| Variable | Description |
|---|---|
| LLM_NAME / VLM_NAME | Model selection for the agent when NIM is enabled |
| VLM_CUSTOM_WEIGHTS | (Optional) Path to custom VLM weights for local VLM NIM. |

Note

For the full set of variables (ports, agent settings, VST adaptor, calibration, etc.), use the inline comments in the shipped warehouse/.env.

For DGX-SPARK (SBSA), RTVI CV (Perception) and VST require separate container tags. Refer to warehouse/.env for the latest tags, which are commented out in the file: uncomment them for the deployment and make sure to comment out the default multi-arch tags.

Deploy the Blueprint#

Warning

Ensure warehouse/.env is configured for your deployment before proceeding. Review the Deployment Selection and environment settings sections above.

```shell
source /path/to/deployments/warehouse/.env
cd /path/to/deployments

# Docker login to the NGC docker registry
docker login \
  --username '$oauthtoken' \
  --password "${NGC_CLI_API_KEY}" \
  nvcr.io

# Start the blueprint
docker compose \
  --env-file warehouse/.env \
  up \
  --detach \
  --pull always \
  --force-recreate \
  --build
```

Note

Initialization of some components might take a while, especially the first time as large containers will be pulled.

Verify Deployment#

Verify that the containers are running:

```shell
docker ps
docker compose ls
```
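With many containers, scanning the `docker ps` output by eye is error-prone. One possible shortcut is to print only the containers whose status does not start with "Up". This is a sketch: `not_running` is a hypothetical helper, and the `|` separator in the format string is an arbitrary choice made here.

```shell
# Hypothetical helper: read "name|status" lines on stdin and print the names
# of containers whose status does not begin with "Up".
not_running() {
  awk -F '|' '$2 !~ /^Up/ { print $1 }'
}

# Usage: --all includes exited containers; an empty result means every
# container reports an "Up ..." status.
#   docker ps --all --format '{{.Names}}|{{.Status}}' | not_running
```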

Check to make sure streams were properly added to VST. To do so, navigate to the VST UI (see endpoint below) and check the Dashboard to confirm your streams are in a healthy state. If you do not see them there, check NVStreamer or your source to make sure they are active.

Check Perception FPS to make sure DeepStream is running properly. View the Perception logs by running the command below and looking for FPS lines in the logs. Ensure it is running at the desired FPS. If the FPS is lower than expected, make sure your GPU is not oversaturated.

```shell
# For 2D Vision AI Profile
docker logs -f perception-2d

# For 3D Vision AI Profile
docker logs -f perception-3d
```
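If you only want the most recent FPS lines instead of following the full log, a small filter like the one below may be enough. It is a sketch that assumes the relevant log lines contain the text "FPS" in some letter case; the exact log format is not specified here, and `fps_lines` is a hypothetical helper.

```shell
# Hypothetical helper: keep only lines mentioning "fps" (case-insensitive)
# and print the last N of them (default 5).
fps_lines() {
  grep -i 'fps' | tail -n "${1:-5}"
}

# Usage: docker logs writes container output to both stdout and stderr,
# so merge them before filtering.
#   docker logs --tail 500 perception-2d 2>&1 | fps_lines 3
```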

Check Kibana for traffic statistics and alerts. Go to the endpoint listed below and navigate to the dashboard.

Check the VSS UI (see endpoint below) and test a few prompts after the system has been up for a few minutes and a few alerts are present.

For detailed testing and validation steps, refer to:

Service Access Points#

Once deployed, the following service access points are available:

| Service | URL |
|---|---|
| VSS-UI (2D) | |
| Video-Analytics-UI (2D) | |
| Kibana-UI (2D/3D) | |
| NvStreamer-UI (2D/3D) | |
| VST-UI (2D/3D) | |
| Phoenix-UI (Telemetry) (2D/3D) (optional) | |

Note

The Phoenix UI is only available when agent telemetry is enabled. For how to enable telemetry and inspect traces, see VSS Agents Observability.

Observability stack access details (Grafana, Prometheus, and exporters) are documented in Observability.

| Service | Access Point |
|---|---|
| Video-Analytics-API | |
| VST-MCP | |
| VA-MCP | |
| LLM-NIM | |
| VLM-NIM | |

Teardown the Deployment#

To stop and remove the Warehouse Blueprint deployment:

```shell
# Stop the running deployment
docker compose --env-file warehouse/.env down

# Alternatively, remove all containers, images, and volumes
docker compose --env-file warehouse/.env down -v --rmi all

# Remove all dangling volumes (-r, a GNU xargs option, skips the rm call when the list is empty)
docker volume ls -q -f "dangling=true" | xargs -r docker volume rm

# Clean up all data (run from the deployments directory)
bash ./cleanup_all_datalog.sh -b warehouse
```

Customization#

The Blueprint supports several levels of customization, including but not limited to adding new cameras and updating models. For details, refer to the following sections under the Blueprint deep dive pages: