RT-DETR

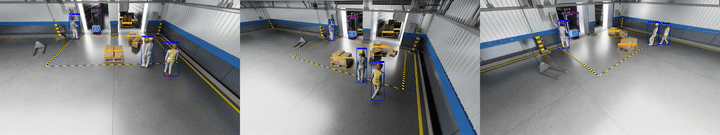

TAO RT-DETR is a 2D single-camera Real-Time Detection Transformer tailored for warehouse environments and industrial automation settings. It generates precise 2D bounding boxes for a diverse set of objects, including people, humanoid robots, autonomous vehicles, and warehouse equipment. The RT-DETR Warehouse 2D Model v1.0 is part of NVIDIA’s RT-DETR family; it uses an EfficientViT-L2 backbone pretrained on warehouse scene datasets for 2D object detection in industrial environments.

Note: This model is optimized and ready for commercial deployment, with support for fine-tuning via the TAO Toolkit.

Hardware & Software Requirements

Please refer to the Requirements section of the TAO Toolkit Quick Start Guide for more details.

Dataset Requirements

The RT-DETR model can be fine-tuned on your own dataset for warehouse object detection tasks. The model is pretrained on large-scale warehouse datasets that include both synthetic and real-world data.

For fine-tuning on your custom dataset, the data requirements are as follows:

Minimum Requirements:

- Image format
  - RGB images in standard formats (JPEG, PNG)
  - Recommended resolution: 960x544 or higher
- Number of training images
  - Minimum: 1,000 annotated images per class
  - Recommended: 5,000+ images for robust performance
- Annotations
  - Ground-truth 2D bounding boxes in COCO format or a compatible format
  - Class labels for all objects
- Data split
  - Training set: 70-80% of total data
  - Validation set: 10-15% of total data
  - Test set: 10-15% of total data
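The per-class minimum can be checked before fine-tuning. The sketch below assumes your annotations are already in a COCO JSON file (the format described under Dataset Preparation) and interprets "annotated images per class" as the number of distinct images containing at least one box of that class. The helper names are hypothetical and not part of TAO.

```python
import json
from collections import defaultdict

MIN_IMAGES_PER_CLASS = 1000  # minimum from the requirements above


def load_coco(path: str) -> dict:
    with open(path) as f:
        return json.load(f)


def images_per_class(coco: dict) -> dict[str, int]:
    """Number of distinct images containing at least one box of each category."""
    image_sets: dict[int, set] = defaultdict(set)
    for ann in coco["annotations"]:
        image_sets[ann["category_id"]].add(ann["image_id"])
    # Iterate over the declared categories so classes with no boxes report 0.
    return {cat["name"]: len(image_sets[cat["id"]]) for cat in coco["categories"]}


def classes_below_minimum(coco: dict, minimum: int = MIN_IMAGES_PER_CLASS) -> list[str]:
    """Sorted names of classes that fall short of the minimum image count."""
    counts = images_per_class(coco)
    return sorted(name for name, n in counts.items() if n < minimum)
```

For example, `classes_below_minimum(load_coco("annotations/train.json"))` lists the classes that likely need more data.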

The model supports 7 object categories: Person, Agility Digit (humanoid robot), Fourier GR1_T2 (humanoid robot), Nova Carter, Transporter, Forklift, and Pallet.

Expected Time for Fine-Tuning

The expected data requirements and time to fine-tune the RT-DETR model on a custom warehouse dataset are as follows:

| Backbone Type | GPU Type | Image Size | No. of Training Images | Total No. of Epochs | Total Training Time (approx.) |
|---|---|---|---|---|---|
| EfficientViT-L2 | 1 x NVIDIA H100 - 80GB | 960x544 | 10,000 | 30 | 30 min |

We recommend a multi-GPU training setup for faster training. The training time may vary based on dataset complexity and hardware configuration.

Model Card

The model card provides a comprehensive deep dive into the following topics:

- Model Overview
- Model Architecture - Inputs & Outputs
- Training, Testing & Evaluation Datasets - Data Formats
- Accuracy of pre-trained models & KPIs
- Real-time FPS/Throughput of the model on various GPUs

Refer to the TAO RT-DETR Model Card on the NGC Catalog for more details.

Inference using Perception Microservice

Detailed information can be found in the 2D Single Camera Detection and Tracking (RT-DETR) page.

Fine-tuning using NVIDIA TAO Toolkit

RT-DETR can be fine-tuned via the TAO containers and the TAO CLI Notebook.

The documentation provided below accompanies the cells in the TAO fine-tuning notebook and offers guidance on how to execute them. TAO RT-DETR supports the following tasks via the Jupyter notebook:

- dataset convert
- model train
- model evaluate
- model inference
- model export

An experiment specification file (also known as a configuration file) is used for fine-tuning the model. It consists of several main components:

- dataset
- model
- train
- evaluate
- inference
- export

For more information on the experiment spec file, refer to the Train Adapt Optimize (TAO) Toolkit User Guide - RT-DETR.

The following is an example spec file for training an RT-DETR model on warehouse datasets.

```yaml
results_dir: /results
dataset:
  augmentation:
    eval_spatial_size:
    - 544
    - 960
    multi_scales:
    - - 480
      - 832
    - - 512
      - 896
    - - 544
      - 960
    - - 544
      - 960
    - - 544
      - 960
    - - 576
      - 992
    - - 608
      - 1056
    - - 672
      - 1184
    - - 704
      - 1216
    - - 736
      - 1280
    - - 768
      - 1344
    - - 800
      - 1408
    train_spatial_size:
    - 544
    - 960
  batch_size: 16
  dataset_type: serialized
  num_classes: 7
  remap_mscoco_category: false
  train_data_sources:
  - image_dir: ??
    json_file: ??
  val_data_sources:
    image_dir: ??
    json_file: ??
  workers: 8
model:
  backbone: efficientvit_l2
  dec_layers: 6
  enc_layers: 1
  num_queries: 300
  return_interm_indices:
  - 1
  - 2
  - 3
  train_backbone: true
train:
  checkpoint_interval: 1
  ema:
    decay: 0.999
  enable_ema: false
  num_epochs: 30
  num_gpus: 8
  num_nodes: 1
  optim:
    lr: 1e-4
    lr_backbone: 1e-5
    lr_steps:
    - 1000
    momentum: 0.9
  precision: bf16
  pretrained_model_path: ??
  validation_interval: 1
inference:
  checkpoint: ??
  conf_threshold: 0.5
  input_width: 960
  input_height: 544
  color_map:
    person: green
    nova_carter: red
    transporter: blue
    forklift: yellow
    pallet: purple
    gr1_t2: orange
    agility_digit: pink
evaluate:
  checkpoint: ??
  input_width: 960
  input_height: 544
export:
  checkpoint: ??
  gpu_id: ??
  input_height: 544
  input_width: 960
  onnx_file: ??
  opset_version: 17
  serialize_nvdsinfer: true
```

Dataset Preparation

The RT-DETR model supports two data input formats for training. You can prepare your dataset in either format depending on your data source.

Option 1: COCO Format

The standard format for RT-DETR training is the COCO (Common Objects in Context) format. This format uses JSON annotation files with the following structure:

```json
{
  "images": [
    {
      "id": 1,
      "file_name": "image_001.jpg",
      "width": 960,
      "height": 544
    }
  ],
  "annotations": [
    {
      "id": 1,
      "image_id": 1,
      "category_id": 1,
      "bbox": [x, y, width, height],
      "area": 1234.5,
      "iscrowd": 0
    }
  ],
  "categories": [
    {"id": 1, "name": "person"},
    {"id": 2, "name": "agility_digit"},
    {"id": 3, "name": "gr1_t2"},
    {"id": 4, "name": "nova_carter"},
    {"id": 5, "name": "transporter"},
    {"id": 6, "name": "forklift"},
    {"id": 7, "name": "pallet"}
  ]
}
```

For detailed information on the COCO format specification, please refer to the TAO Toolkit Data Annotation Format - Object Detection COCO Format documentation.
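Before training, it can help to sanity-check an annotation file against the structure above. The sketch below is a hypothetical helper (not part of TAO) that checks referential integrity between annotations, images, and categories, and the COCO `[x, y, width, height]` box convention. `num_classes` is assumed to match `dataset.num_classes` in your spec.

```python
def validate_coco(coco: dict, num_classes: int = 7) -> list[str]:
    """Return human-readable problems found in a COCO dict; an empty list means none found."""
    problems: list[str] = []
    image_ids = {img["id"] for img in coco.get("images", [])}
    category_ids = {cat["id"] for cat in coco.get("categories", [])}
    if len(category_ids) != num_classes:
        problems.append(f"expected {num_classes} categories, found {len(category_ids)}")
    seen: set = set()
    for ann in coco.get("annotations", []):
        aid = ann.get("id")
        if aid in seen:
            problems.append(f"duplicate annotation id {aid}")
        seen.add(aid)
        if ann.get("image_id") not in image_ids:
            problems.append(f"annotation {aid}: unknown image_id {ann.get('image_id')}")
        if ann.get("category_id") not in category_ids:
            problems.append(f"annotation {aid}: unknown category_id {ann.get('category_id')}")
        bbox = ann.get("bbox", [])
        # COCO boxes are [x, y, width, height] in pixels; width and height must be positive.
        if len(bbox) != 4 or bbox[2] <= 0 or bbox[3] <= 0:
            problems.append(f"annotation {aid}: bbox must be [x, y, width, height] with positive size")
    return problems
```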

The directory structure for COCO format should be:

```text
dataset/
├── images/
│   ├── train/
│   │   ├── image_001.jpg
│   │   ├── image_002.jpg
│   │   └── ...
│   ├── val/
│   │   ├── image_001.jpg
│   │   └── ...
│   └── test/
│       ├── image_001.jpg
│       └── ...
└── annotations/
    ├── train.json
    ├── val.json
    └── test.json
```
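If you start from a single COCO annotation file, you can produce the `train.json`, `val.json`, and `test.json` files in this layout by splitting it by image. The sketch below uses an 80/10/10 split, which falls within the ranges recommended under Dataset Requirements. The function names are hypothetical, and it does not copy image files into `images/train`, `images/val`, and `images/test`.

```python
import json
import random


def split_coco(coco: dict, fractions=(0.8, 0.1, 0.1), seed: int = 0) -> dict[str, dict]:
    """Split one COCO dict into train/val/test by image; each box follows its image."""
    if len(fractions) != 3 or abs(sum(fractions) - 1.0) > 1e-6:
        raise ValueError("fractions must be three values summing to 1")
    images = list(coco["images"])
    random.Random(seed).shuffle(images)  # reproducible shuffle
    n_train = int(len(images) * fractions[0])
    n_val = int(len(images) * fractions[1])
    parts = {
        "train": images[:n_train],
        "val": images[n_train:n_train + n_val],
        "test": images[n_train + n_val:],  # remainder goes to test
    }
    splits = {}
    for name, imgs in parts.items():
        ids = {img["id"] for img in imgs}
        splits[name] = {
            "images": imgs,
            "annotations": [a for a in coco["annotations"] if a["image_id"] in ids],
            "categories": coco["categories"],
        }
    return splits


def write_splits(coco_path: str, out_dir: str) -> None:
    """Write train.json, val.json and test.json into out_dir (e.g. dataset/annotations)."""
    with open(coco_path) as f:
        splits = split_coco(json.load(f))
    for name, data in splits.items():
        with open(f"{out_dir}/{name}.json", "w") as f:
            json.dump(data, f)
```

Splitting by image is the simplest choice. For video-like warehouse footage, where consecutive frames are nearly identical, splitting by camera or scene prevents near-duplicate frames from appearing in both the training and test sets.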

Option 2: H5 Files Format

For synthetic data generation pipelines or large-scale datasets, RT-DETR also supports data stored in HDF5 (H5) format. In this format, RGB images are stored within H5 files organized by camera.

The directory structure for H5 format is as follows:

```text
dataset/
├── Camera_01.h5
├── Camera_02.h5
├── Camera_03.h5
├── ...
└── annotations/
    ├── train.json
    └── val.json
```

Each H5 file contains an rgb group with RGB image frames (e.g., rgb_00000.jpg, rgb_00001.jpg, …).

The ground truth annotations use the same COCO JSON format as Option 1, but the file_name field in the images array uses a special H5 URI format:

```text
h5://<h5_file_name_without_extension>:<rgb_file_key in rgb group>
```

For example:

```json
{
  "images": [
    {
      "id": 1,
      "file_name": "h5://Camera_01:rgb_00000.jpg",
      "width": 960,
      "height": 544
    },
    {
      "id": 2,
      "file_name": "h5://Camera_01:rgb_00001.jpg",
      "width": 960,
      "height": 544
    },
    {
      "id": 3,
      "file_name": "h5://Camera_02:rgb_00000.jpg",
      "width": 960,
      "height": 544
    }
  ],
  "annotations": [...],
  "categories": [...]
}
```
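Any script that inspects this data has to map each `h5://` file name back to an H5 file and a key in its `rgb` group. The sketch below shows that parsing step, assuming the H5 files sit directly under a dataset root as in the tree above. The helper names are hypothetical, and this is not how TAO resolves these URIs internally.

```python
import os

H5_PREFIX = "h5://"


def parse_h5_uri(file_name: str) -> tuple[str, str]:
    """Split 'h5://<h5_file_name_without_extension>:<rgb_file_key>' into its two parts."""
    if not file_name.startswith(H5_PREFIX):
        raise ValueError(f"not an H5 URI: {file_name!r}")
    # Split on the first ':' only, so the key keeps any later colons.
    h5_name, sep, key = file_name[len(H5_PREFIX):].partition(":")
    if not sep or not h5_name or not key:
        raise ValueError(f"expected h5://<file>:<key>, got {file_name!r}")
    return h5_name, key


def resolve_h5_uri(file_name: str, dataset_root: str) -> tuple[str, str]:
    """Return (path to the .h5 file, key inside its 'rgb' group)."""
    h5_name, key = parse_h5_uri(file_name)
    return os.path.join(dataset_root, h5_name + ".h5"), key
```

Reading the frame itself would require an HDF5 library such as h5py (for example, indexing the opened file's `rgb` group with the key). How the frames are encoded inside the group is an assumption to verify against your own data.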

Launch Model Fine-tuning

Below are the important configurations that need to be updated to launch training:

```yaml
dataset:
  augmentation:
    eval_spatial_size:
    - 544
    - 960
    multi_scales:
    - - 480
      - 832
    - - 512
      - 896
    - - 544
      - 960
    # ... additional scales
    train_spatial_size:
    - 544
    - 960
  batch_size: 16  # Adjust based on your GPU memory
  dataset_type: serialized
  num_classes: 7  # Update based on your dataset
  remap_mscoco_category: false
  train_data_sources:
  - image_dir: ??  # Update this based on your training image directory
    json_file: ??  # Update this based on your training annotations file
    sample_size: '10000'  # Update based on your dataset size
  val_data_sources:
    image_dir: ??  # Update this based on your validation image directory
    json_file: ??  # Update this based on your validation annotations file
  workers: 8  # Adjust based on your CPU cores and memory
model:
  backbone: efficientvit_l2
  dec_layers: 6
  enc_layers: 1
  num_queries: 300
  return_interm_indices:
  - 1
  - 2
  - 3
  train_backbone: true
train:
  checkpoint_interval: 1  # Update this based on your dataset size
  ema:
    decay: 0.999
  enable_ema: false
  num_epochs: 30  # Update this based on your dataset size
  num_gpus: 8  # Update this based on your GPU count
  num_nodes: 1  # Update this based on your node count
  optim:
    lr: 1e-4
    lr_backbone: 1e-5
    lr_steps:
    - 1000
    momentum: 0.9
  precision: bf16  # Use bf16 for faster training on supported GPUs
  pretrained_model_path: ??  # Update this to the pretrained model path
  validation_interval: 1  # Update this based on your dataset size
```

Once the configurations are modified, launch training by running the following command:

```shell
tao model rtdetr train \
  -e=<spec configuration YAML file> \
  dataset.train_data_sources.image_dir=<training image directory> \
  dataset.train_data_sources.json_file=<training annotations file> \
  dataset.val_data_sources.image_dir=<validation image directory> \
  dataset.val_data_sources.json_file=<validation annotations file> \
  results_dir=<results directory>
```

The pretrained RT-DETR model for the warehouse detection task is available on the NGC Catalog.
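When scripting several runs, it can be convenient to build the command as an argument list instead of a backslash-continued string. The sketch below is a hypothetical helper (not part of the TAO CLI) that assembles the `tao model rtdetr` invocations used in this guide from dotted `key=value` overrides.

```python
import shlex

SUPPORTED_ACTIONS = {"train", "evaluate", "inference", "export"}  # commands shown in this guide


def tao_rtdetr_command(action: str, spec: str, overrides: dict[str, str]) -> list[str]:
    """Return argv for `tao model rtdetr <action> -e=<spec> key=value ...`."""
    if action not in SUPPORTED_ACTIONS:
        raise ValueError(f"unsupported action: {action!r}")
    argv = ["tao", "model", "rtdetr", action, f"-e={spec}"]
    argv += [f"{key}={value}" for key, value in overrides.items()]
    return argv


def to_shell(argv: list[str]) -> str:
    """Quote each argument so paths with spaces survive copy-paste into a shell."""
    return shlex.join(argv)
```

You can pass the returned list directly to `subprocess.run(argv, check=True)`, which avoids line-continuation mistakes entirely.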

Evaluate the fine-tuned model

The fine-tuned model’s accuracy can be evaluated with Average Precision (AP) metrics.

Below are the important configurations that need to be updated to launch evaluation:

```yaml
dataset:
  batch_size: 4
  num_classes: 7
  remap_mscoco_category: false
  test_data_sources:
    image_dir: ??  # Update this based on your test image directory
    json_file: ??  # Update this based on your test annotations file
  augmentation:
    eval_spatial_size:
    - 544
    - 960
evaluate:
  checkpoint: ??  # Update this based on your fine-tuned model path
  input_width: 960
  input_height: 544
```

Once the configurations are modified, launch evaluation by running the following command:

```shell
tao model rtdetr evaluate \
  -e=<spec configuration YAML file> \
  evaluate.checkpoint=<fine-tuned model path> \
  dataset.test_data_sources.image_dir=<test image directory> \
  dataset.test_data_sources.json_file=<test annotations file> \
  results_dir=<results directory>
```

Run Inference on the fine-tuned model

The fine-tuned model’s output can be inspected by running inference and visualizing the 2D bounding boxes. Below are the important configurations that need to be updated to launch inference:

```yaml
inference:
  checkpoint: ??  # Update this based on your fine-tuned model path
  conf_threshold: 0.5  # Update this based on your confidence threshold
  input_width: 960  # Update this based on your input width
  input_height: 544  # Update this based on your input height
  color_map:  # Update this based on your color map
    person: blue
    agility_digit: green
    gr1_t2: red
    nova_carter: yellow
    forklift: purple
    pallet: orange
    transporter: pink
dataset:
  infer_data_sources:
    image_dir: ??  # Update this based on your test image directory
    classmap: ??  # Update this based on your classmap file
  num_classes: 7  # Update this based on your number of classes
  batch_size: 4  # Update this based on your batch size
  workers: 8  # Update this based on your workers
  remap_mscoco_category: false
```

Once the configurations are modified, launch inference by running the following command:

```shell
tao model rtdetr inference \
  -e=<spec configuration YAML file> \
  inference.checkpoint=<fine-tuned model path> \
  dataset.infer_data_sources.image_dir=<test image directory> \
  dataset.infer_data_sources.classmap=<classmap file> \
  results_dir=<results directory>
```

Export the fine-tuned model

Below are the important configurations that need to be updated to launch export:

```yaml
export:
  checkpoint: ??  # Update this based on your fine-tuned model path
  gpu_id: ??  # Update this based on your GPU id
  input_height: 544
  input_width: 960
  onnx_file: ??  # Update this based on your desired model name
  opset_version: 17
  serialize_nvdsinfer: true  # Set to true for DeepStream integration
```

Once the configurations are modified, launch export by running the following command:

```shell
tao model rtdetr export \
  -e=<spec configuration YAML file> \
  export.checkpoint=<fine-tuned model path> \
  export.onnx_file=<onnx file> \
  results_dir=<results directory>
```

By default, the exported model is an ONNX model in FP32 precision. To further optimize it for deployment, you can convert it to FP16 precision using the NVIDIA Model Optimizer.

Step 1: Install NVIDIA Model Optimizer and set up your environment by following the official instructions here: https://nvidia.github.io/Model-Optimizer/getting_started/_installation_for_Linux.html

Step 2: Convert the model to FP16 precision using the following command:

```shell
python -m modelopt.onnx.autocast \
  --onnx_path <ONNX file path> \
  --output_path <OUTPUT FP16 ONNX file path> \
  --low_precision_type fp16 \
  --keep_io_types
```

Using the fine-tuned model in the Perception Microservice

Once the model is fine-tuned and exported to ONNX format, it can be used in the Perception Microservice. Refer to the Integrating a New RT-DETR Model section in 2D Single Camera Detection and Tracking (RT-DETR) for more details.

Real-Time Inference Throughput & Latency

Inference runs through the DeepStream pipeline on TensorRT with mixed precision (FP16+FP32). The table below summarizes how many camera streams each GPU supports at 30 FPS and 15 FPS (with inference interval=1) for the RT-DETR model with EfficientViT-L2 backbone.

| GPU | Streams @ 30 FPS | Streams @ 15 FPS (interval=1) |
|---|---|---|
| 1x DGX Spark | 3 | 7 |
| 1x RTX PRO 6000 (Server) | 16 | 33 |
| 1x RTX PRO 6000 (Workstation) | 16 | 33 |
| 1x Jetson AGX Thor - T5000 | 4 | 7 |
| 1x B200 | 36 | 81 |
| 1x GB200 | 42 | 93 |
| 1x H100 | 26 | 58 |
| 1x H200 | 26 | 58 |
| 1x RTX 6000 Ada | 8 | 16 |
| 1x A100 | 12 | 29 |
| 1x L4 | 4 | 7 |
| 1x L40S | 12 | 24 |
| 1x Jetson AGX Orin | 2 | 4 |
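The table can support rough capacity planning. The back-of-the-envelope sketch below assumes that streams scale linearly across GPUs and that your cameras match the benchmarked pipeline configuration (resolution, precision, DeepStream settings). Measure on your own deployment before committing to hardware. The helper names are hypothetical.

```python
import math

# Streams per GPU at 30 FPS, copied from a few rows of the table above.
STREAMS_AT_30_FPS = {"H100": 26, "A100": 12, "L40S": 12, "L4": 4, "Jetson AGX Orin": 2}


def gpus_needed(num_cameras: int, streams_per_gpu: int) -> int:
    """Smallest GPU count that covers num_cameras, assuming capacity adds up linearly."""
    if streams_per_gpu <= 0:
        raise ValueError("streams_per_gpu must be positive")
    return math.ceil(num_cameras / streams_per_gpu)


def aggregate_fps(streams: int, fps: int) -> int:
    """Total frames per second handled across all streams on one GPU."""
    return streams * fps
```

By this estimate, 100 cameras at 30 FPS on L40S need `gpus_needed(100, 12) == 9` GPUs, and one H100 at 30 FPS handles about 26 × 30 = 780 frames per second in aggregate.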

KPI

The key performance indicator is per-class Average Precision (AP), evaluated on the Warehouse Synthetic Test dataset. AP quantifies a detector’s ability to trade off precision and recall for a single object category by computing the normalized area under its precision-recall curve.

The model supports 7 object categories: Person, Agility Digit (humanoid robot), Fourier GR1_T2 (humanoid robot), Nova Carter, Transporter, Forklift, and Pallet.

Evaluation Settings

The reported metrics use the following evaluation configuration:

- AP variant: COCO AP@0.50
- IoU threshold: 0.50
- Max detections: 100 detections per image
- Matching policy: greedy matching based on IoU with ground-truth boxes; highest-confidence predictions are matched first
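The settings above can be illustrated with a simplified, stdlib-only AP@0.50 computation for one class. In this sketch, detections are capped at 100 per image and matched greedily in descending confidence order to the unmatched ground-truth box with the highest IoU (at least 0.50). Precision is then averaged at 101 recall points, as COCO does. It is meant for understanding the metric, not as a replacement for the TAO evaluator or pycocotools: it ignores `iscrowd` regions and area ranges, and the function names are hypothetical.

```python
from collections import defaultdict

Box = tuple[float, float, float, float]  # COCO-style (x, y, width, height)


def iou(a: Box, b: Box) -> float:
    iw = max(0.0, min(a[0] + a[2], b[0] + b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[1] + a[3], b[1] + b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = a[2] * a[3] + b[2] * b[3] - inter
    return inter / union if union > 0 else 0.0


def average_precision(detections, ground_truth, iou_thr=0.5, max_dets=100) -> float:
    """detections: list of (image_id, score, box); ground_truth: dict image_id -> list of boxes."""
    num_gt = sum(len(boxes) for boxes in ground_truth.values())
    if num_gt == 0:
        return 0.0  # simplification: no ground truth means nothing to recall
    per_image = defaultdict(list)
    for det in detections:
        per_image[det[0]].append(det)
    kept = []
    for dets in per_image.values():  # keep the max_dets highest-scoring boxes per image
        kept.extend(sorted(dets, key=lambda d: d[1], reverse=True)[:max_dets])
    kept.sort(key=lambda d: d[1], reverse=True)  # highest confidence matched first
    matched = {img: [False] * len(boxes) for img, boxes in ground_truth.items()}
    tp = fp = 0
    precisions, recalls = [], []
    for img, _score, box in kept:
        best_iou, best_j = iou_thr, -1
        for j, gt in enumerate(ground_truth.get(img, [])):
            if not matched[img][j] and iou(box, gt) >= best_iou:
                best_iou, best_j = iou(box, gt), j
        if best_j >= 0:
            matched[img][best_j] = True
            tp += 1
        else:
            fp += 1
        precisions.append(tp / (tp + fp))
        recalls.append(tp / num_gt)
    # Precision envelope: non-increasing as recall grows.
    for i in range(len(precisions) - 2, -1, -1):
        precisions[i] = max(precisions[i], precisions[i + 1])
    total = 0.0
    for k in range(101):  # recall thresholds 0.00, 0.01, ..., 1.00
        for p, rec in zip(precisions, recalls):
            if rec >= k / 100:
                total += p
                break
    return total / 101
```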

The evaluation is performed on the MTMC Tracking 2025 subset from the NVIDIA PhysicalAI-SmartSpaces dataset. This is a comprehensive, annotated dataset for multi-camera tracking and 2D/3D object detection, synthetically generated with NVIDIA Omniverse. The dataset consists of time-synchronized video from indoor warehouse scenes with annotations for 2D & 3D bounding boxes and multi-camera tracking IDs. The Warehouse Synthetic Test dataset used for evaluation is the Warehouse_019 scene from the test split.

| Dataset | Person | Agility Digit | GR1_T2 | Nova Carter | Transporter | Forklift | Pallet |
|---|---|---|---|---|---|---|---|
| Warehouse Synthetic Test | 0.970 | 0.969 | 0.920 | 0.960 | 0.940 | 0.851 | 0.891 |

Please refer to the Model Card for more details on benchmark datasets and evaluation methodology.