Search Workflow#

Warning

Alpha Feature: This workflow is in early development and is not recommended for production use.

The Search Workflow enables natural language queries across video archives to locate specific events, objects, or actions.

Use Cases

Event retrieval from large video archives

Cross-video search for specific objects or actions

Forensic analysis of recorded footage

Estimated Deployment Time: 15-20 minutes

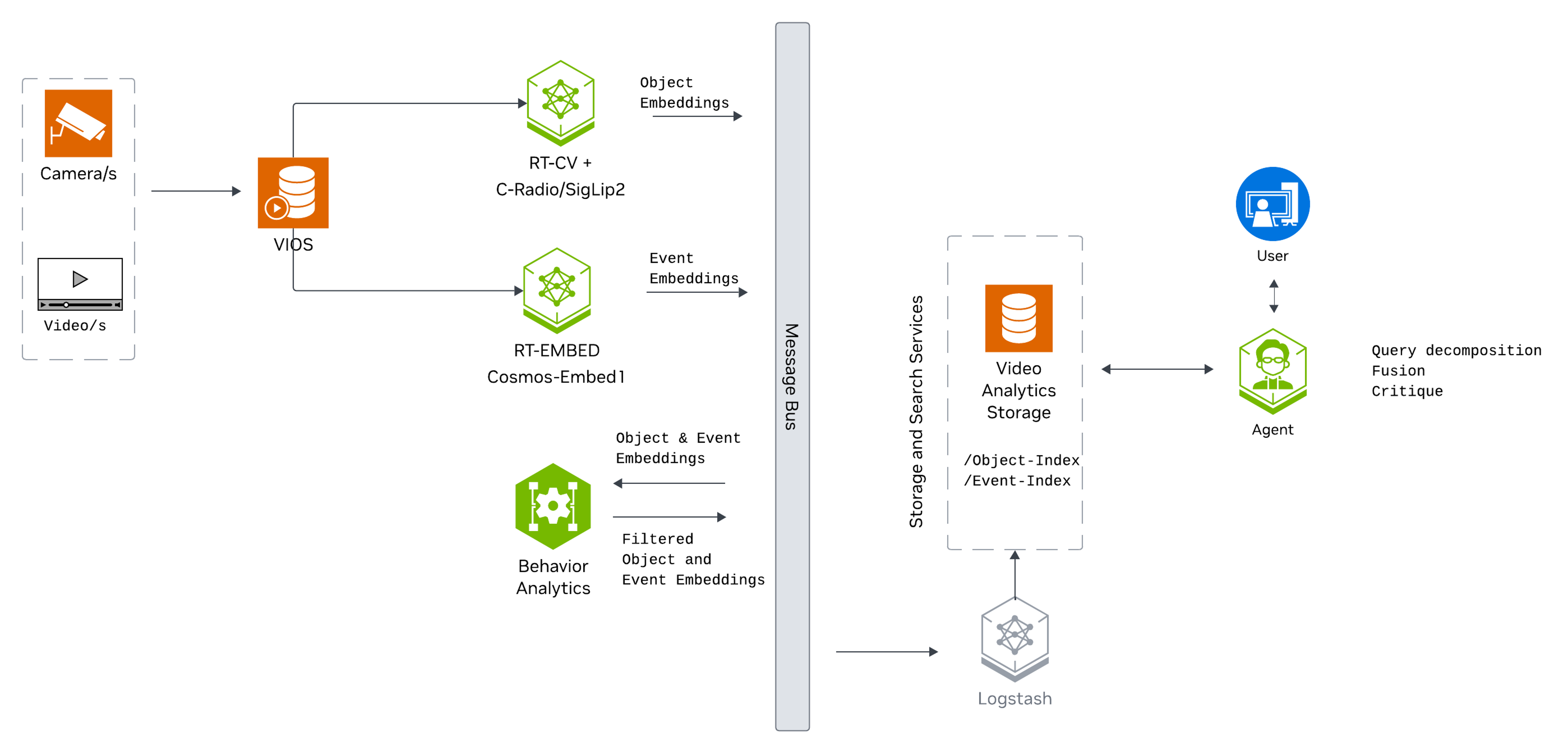

The following diagram illustrates the search workflow architecture:

Key Features of the Vision Agent with Search:

Upload videos to the agent for search.

Semantic search of videos for key actions, events, and object attributes using embedding-based video indexing.

Natural language query support (e.g., “find all instances of forklifts”).

Filter and retrieve timestamped results using similarity scores, time range, video name, description, and source.

What’s being deployed#

VSS Agent: Agent service that orchestrates tool calls and model inference to answer questions and generate outputs

VSS Agent UI: Web UI with chat, video upload, and different views

VSS Video IO & Storage (VIOS): Video ingestion, recording, and playback services used by the agent for video access and management

Nemotron LLM (NIM): LLM inference service used for reasoning, tool selection, and response generation

Phoenix: Observability and telemetry service for agent workflow monitoring

ELK: Elasticsearch, Logstash and Kibana stack to index and search embeddings of video clips

Kafka: A real-time message bus to publish embeddings, to be consumed and indexed by ELK for search

RTVI-Embed: Real Time Video Intelligence Embed Microservice to generate action/event embeddings for videos and text, based on Cosmos-Embed1

RTVI-CV: Real Time Video Intelligence Computer Vision Microservice to generate object attribute embeddings for videos

Behavior Analytics: Behavior Analytics microservice to perform sequential frame analysis for object detection and tracking in videos/streams.

Prerequisites#

Before you begin, ensure all of the prerequisites are met. See Prerequisites for more details.

Deploy#

Note

For instructions on downloading sample data and the deployment package, see Download Sample Data and Deployment Package in the Quickstart guide.

Skip to Step 1: Deploy the Agent if you have already downloaded and deployed another agent workflow.

Step 1: Deploy the Agent#

Note

Set the NGC CLI API key, then run the deploy commands for your GPU type.

Refer to VSS-Agent-Customization-configure-llm and VSS-Agent-Customization-configure-vlm for all LLM and VLM (local and remote) configuration options.

For advanced settings and Agent Customization, see the deploy command help.

Elasticsearch dense vector embedding dimensions (optional; set in .env to override):

ELASTICSEARCH_RTVI_CV_EMBEDDINGS_DIM=1536 # RTVI-CV / RADIO-CLIP model, 1536-dim

ELASTICSEARCH_VISION_LLM_EMBEDDINGS_DIM=768 # RTVI-Embed / Cosmos-Embed1 model, 768-dim

ELASTICSEARCH_RTVI_CV_EMBEDDINGS_DIM: Used by RTVI-CV for object embeddings (default: RADIO-CLIP, 1536). If you change the RTVI-CV model, set this to the new model’s embedding dimension. See Object Detection and Tracking for model options.

ELASTICSEARCH_VISION_LLM_EMBEDDINGS_DIM: Used by RTVI-Embed for action/event embeddings (default: Cosmos-Embed1, 768). If you change the RTVI-Embed model, set this to the new model’s embedding dimension. See Real-Time Embedding for model options.
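The relationship between these variables and the model output can be sketched in Python. This is a minimal illustration, not part of the deployment: the helper names `configured_dim` and `check_embedding` are hypothetical, and the 1024-dimension override stands in for a replacement model. Only the variable names and defaults come from the text above.

```python
import os

# Defaults quoted in the text above.
DEFAULT_DIMS = {
    "ELASTICSEARCH_RTVI_CV_EMBEDDINGS_DIM": 1536,
    "ELASTICSEARCH_VISION_LLM_EMBEDDINGS_DIM": 768,
}


def configured_dim(name, env=None):
    """Return the dimension set in the environment, or the documented default."""
    env = os.environ if env is None else env
    return int(env.get(name, DEFAULT_DIMS[name]))


def check_embedding(vector, name, env=None):
    """Raise ValueError if the vector length differs from the configured dimension."""
    expected = configured_dim(name, env)
    if len(vector) != expected:
        raise ValueError(f"{name}={expected}, but embedding has {len(vector)} values")


# Hypothetical override for a replacement RTVI-Embed model with 1024-dim output.
env = {"ELASTICSEARCH_VISION_LLM_EMBEDDINGS_DIM": "1024"}
check_embedding([0.0] * 1024, "ELASTICSEARCH_VISION_LLM_EMBEDDINGS_DIM", env)
```

A mismatch between the configured dimension and the model output is worth catching early, because an Elasticsearch dense vector field only accepts vectors of its declared dimension.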

# Set NGC CLI API key

export NGC_CLI_API_KEY='your_ngc_api_key'

# View all available options

scripts/dev-profile.sh --help

# GPU type: H100

scripts/dev-profile.sh up -p search -H H100

scripts/dev-profile.sh up -p search -H H100 --llm-device-id 2

export LLM_ENDPOINT_URL=https://your-llm-endpoint.com

scripts/dev-profile.sh up -p search -H H100 --use-remote-llm

export VLM_ENDPOINT_URL=https://your-vlm-endpoint.com

scripts/dev-profile.sh up -p search -H H100 --use-remote-vlm

export LLM_ENDPOINT_URL=https://your-llm-endpoint.com

export VLM_ENDPOINT_URL=https://your-vlm-endpoint.com

scripts/dev-profile.sh up -p search -H H100 --use-remote-llm --use-remote-vlm

# GPU type: RTXPRO6000BW

scripts/dev-profile.sh up -p search -H RTXPRO6000BW

scripts/dev-profile.sh up -p search -H RTXPRO6000BW --llm-device-id 2

export LLM_ENDPOINT_URL=https://your-llm-endpoint.com

scripts/dev-profile.sh up -p search -H RTXPRO6000BW --use-remote-llm

export VLM_ENDPOINT_URL=https://your-vlm-endpoint.com

scripts/dev-profile.sh up -p search -H RTXPRO6000BW --use-remote-vlm

export LLM_ENDPOINT_URL=https://your-llm-endpoint.com

export VLM_ENDPOINT_URL=https://your-vlm-endpoint.com

scripts/dev-profile.sh up -p search -H RTXPRO6000BW --use-remote-llm --use-remote-vlm

# GPU type: L40S

scripts/dev-profile.sh up -p search -H L40S --llm-device-id 2

export LLM_ENDPOINT_URL=https://your-llm-endpoint.com

scripts/dev-profile.sh up -p search -H L40S --use-remote-llm

export VLM_ENDPOINT_URL=https://your-vlm-endpoint.com

scripts/dev-profile.sh up -p search -H L40S --use-remote-vlm

export LLM_ENDPOINT_URL=https://your-llm-endpoint.com

export VLM_ENDPOINT_URL=https://your-vlm-endpoint.com

scripts/dev-profile.sh up -p search -H L40S --use-remote-llm --use-remote-vlm

See Local LLM and VLM deployments on OTHER hardware for known limitations and constraints.

scripts/dev-profile.sh up -p search -H OTHER --llm-env-file /path/to/llm.env

scripts/dev-profile.sh up -p search -H OTHER --llm-device-id 2 --llm-env-file /path/to/llm.env

export LLM_ENDPOINT_URL=https://your-llm-endpoint.com

scripts/dev-profile.sh up -p search -H OTHER --use-remote-llm

export VLM_ENDPOINT_URL=https://your-vlm-endpoint.com

scripts/dev-profile.sh up -p search -H OTHER --use-remote-vlm

export LLM_ENDPOINT_URL=https://your-llm-endpoint.com

export VLM_ENDPOINT_URL=https://your-vlm-endpoint.com

scripts/dev-profile.sh up -p search -H OTHER --use-remote-llm --use-remote-vlm

This command will download the necessary containers from the NGC Docker registry and start the agent. Depending on your network speed, this may take a few minutes.

Note

To deploy with the Critic Agent:

To deploy the search agent with the critic agent, modify the search agent configuration file to enable the critic agent.

The configuration file can be found in the met-deployment repository at

deployments/developer-workflow/dev-profile-search/vss-agent/configs/config.yml

Modify the following section to enable the critic agent:

search_agent:
  enable_critic: true

Ensure that the VLM endpoint URL has been configured for the deployment; see VLM configuration in deployment commands for details.

If using a custom VLM:

- Deploy using a remote VLM by following the instructions above.

- Ensure the VLM has access to the VST endpoint.

The critic agent runs only when search is performed in agent/chat mode.

Note

To deploy with Temporal Deduplication:

To deploy the search agent with temporal deduplication, modify the video analytics configuration file to enable the feature.

The configuration file can be found in the met-deployment repository at

deployments/developer-workflow/dev-profile-search/video-analytics-2d-app/vss-search-analytics/configs/vss-search-analytics-kafka-config.json

Modify the following section to enable temporal deduplication:

{
  "app": [
    { "name": "embedEnableDownsampling", "value": "true" }
  ]
}
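The same change can be made programmatically. The sketch below is a hedged illustration that only assumes the `app` list of `name`/`value` entries shown above. The function name `enable_downsampling` is hypothetical, and the real file likely contains other entries that should be left untouched.

```python
import json


def enable_downsampling(config: dict) -> dict:
    """Set embedEnableDownsampling to "true" in the "app" list, adding it if absent."""
    app = config.setdefault("app", [])
    for entry in app:
        if entry.get("name") == "embedEnableDownsampling":
            entry["value"] = "true"
            break
    else:
        # No existing entry was found, so append one.
        app.append({"name": "embedEnableDownsampling", "value": "true"})
    return config


# Illustrative input; in practice, load the JSON configuration file instead.
config = json.loads('{"app": [{"name": "embedEnableDownsampling", "value": "false"}]}')
updated = enable_downsampling(config)
print(json.dumps(updated, indent=2))
```

The value is written as the string "true" rather than a JSON boolean, matching the snippet above.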

This deployment uses the following defaults:

Host IP: src IP from ip route get 1.1.1.1

LLM model: nvidia/nvidia-nemotron-nano-9b-v2

To use a different IP than the one derived:

-i: Manually specify the host IP address.

-e: Optionally specify an externally accessible IP address for services that need to be reached from outside the host.
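To preview which address would be derived, the `src` field can be read from the `ip route get 1.1.1.1` output. The Python sketch below is illustrative only: the deploy script's own parsing is not shown in this guide, `parse_src_ip` is a hypothetical helper, and the sample output is made up.

```python
from typing import Optional


def parse_src_ip(route_output: str) -> Optional[str]:
    """Return the token following 'src' in `ip route get` output, if any."""
    tokens = route_output.split()
    for i, token in enumerate(tokens[:-1]):
        if token == "src":
            return tokens[i + 1]
    return None


# Illustrative output, not captured from a real host.
sample = "1.1.1.1 via 192.168.1.1 dev eth0 src 192.168.1.42 uid 1000\n    cache"
host_ip = parse_src_ip(sample)
print(host_ip)
```

If the printed address is not the one clients should use, pass the intended address with -i (and -e where needed) as described above.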

Note

When using a remote VLM of model-type nim (not openai), see How does a remote nim VLM access videos? for access requirements.

Once the deployment is complete, check that all the containers are running and healthy:

docker ps
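With many containers, scanning the status column by eye is error-prone. As a minimal sketch, the function below flags containers whose status does not report "(healthy)". It assumes tab-separated name and status lines, for example from `docker ps --format '{{.Names}}\t{{.Status}}'`. The container names in the sample are hypothetical.

```python
def unhealthy_containers(ps_output: str) -> list[str]:
    """Names of containers whose status does not report '(healthy)'.

    Expects one 'name<TAB>status' pair per line. Containers without a
    health check never report '(healthy)' and are therefore flagged too.
    """
    flagged = []
    for line in ps_output.strip().splitlines():
        name, _, status = line.partition("\t")
        if "(healthy)" not in status:
            flagged.append(name)
    return flagged


# Illustrative output with hypothetical container names.
sample = (
    "vss-agent\tUp 3 minutes (healthy)\n"
    "rtvi-embed\tUp 3 minutes (unhealthy)\n"
    "kafka\tUp 3 minutes (health: starting)"
)
print(unhealthy_containers(sample))
```

A container reported as "health: starting" may simply need more time, so it is worth re-checking before investigating further.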

Once all the containers are running, you can access the agent UI at http://<HOST_IP>:3000/.

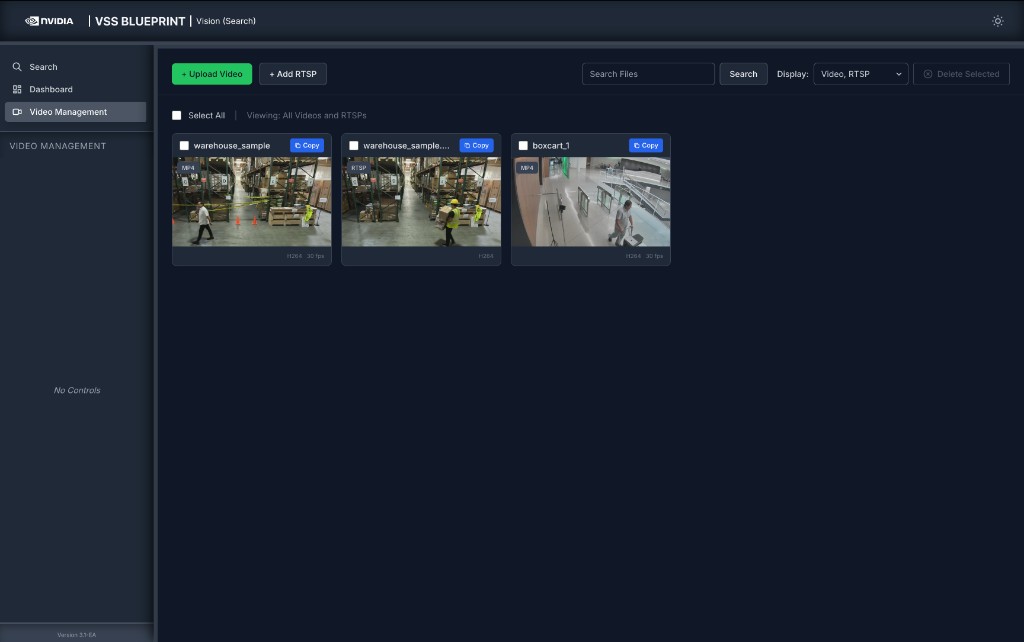

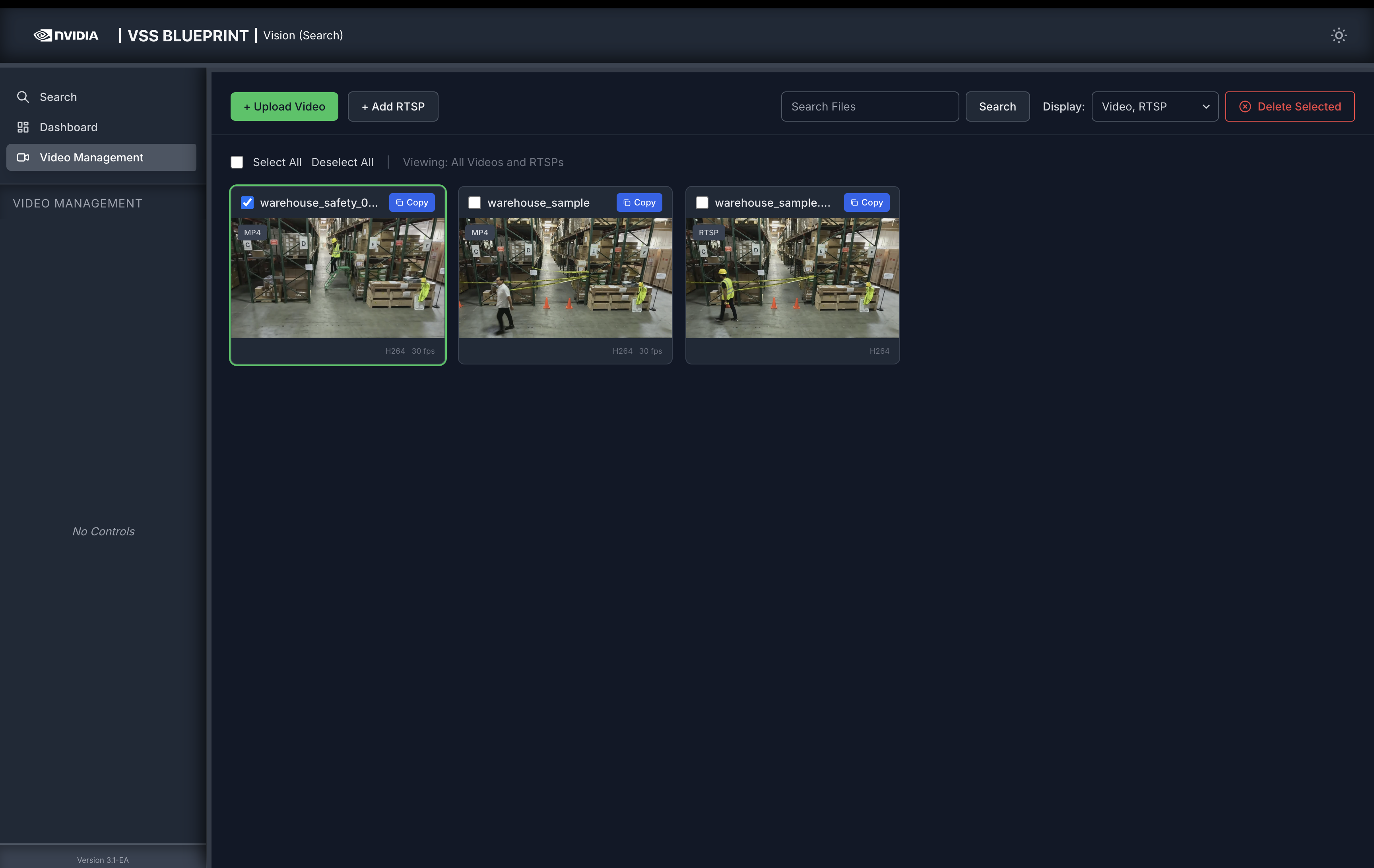

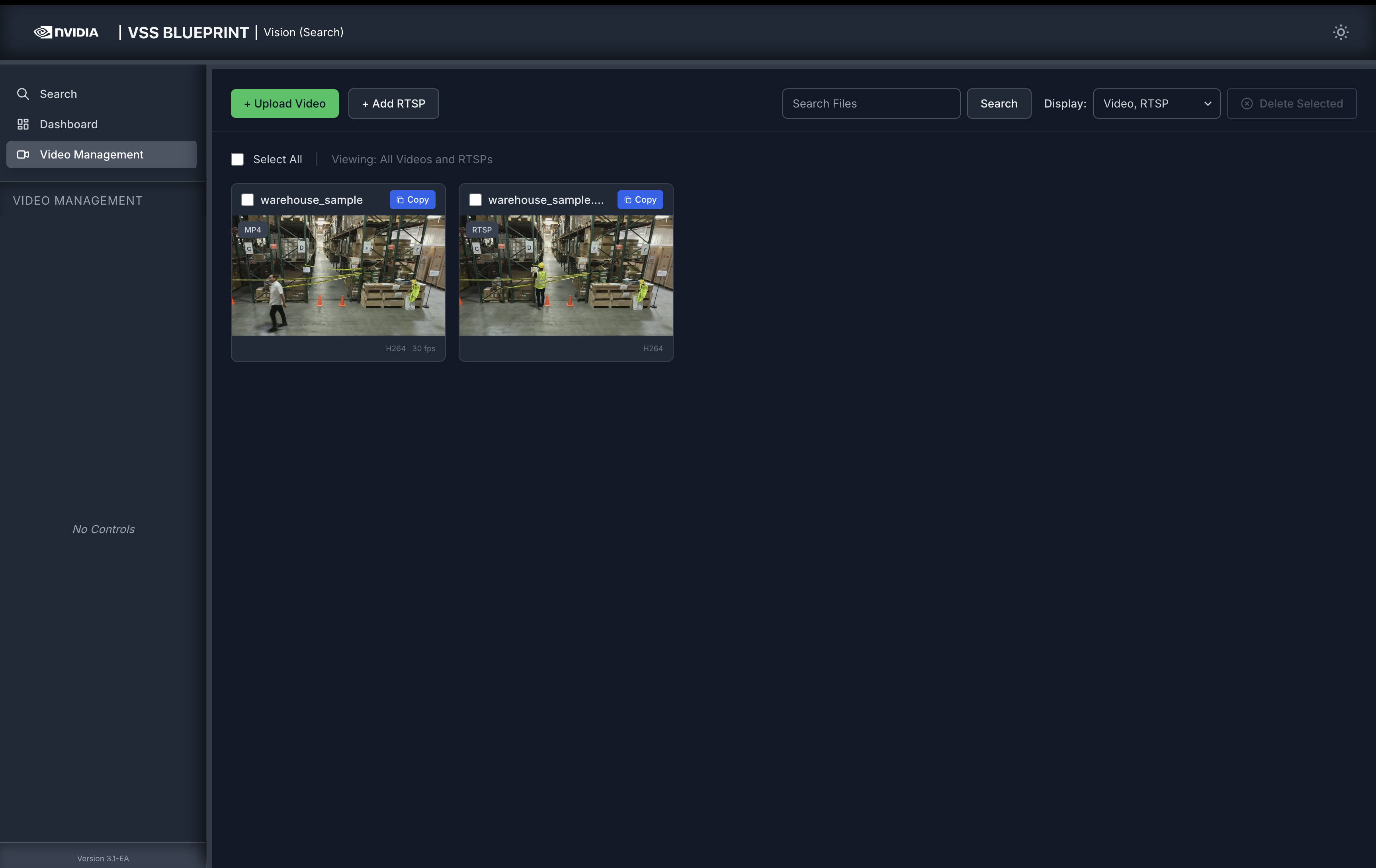

Step 2: Video Management#

Click on the Video Management tab in the left sidebar to access video management features. You can add video sources by uploading video files or adding RTSP streams.

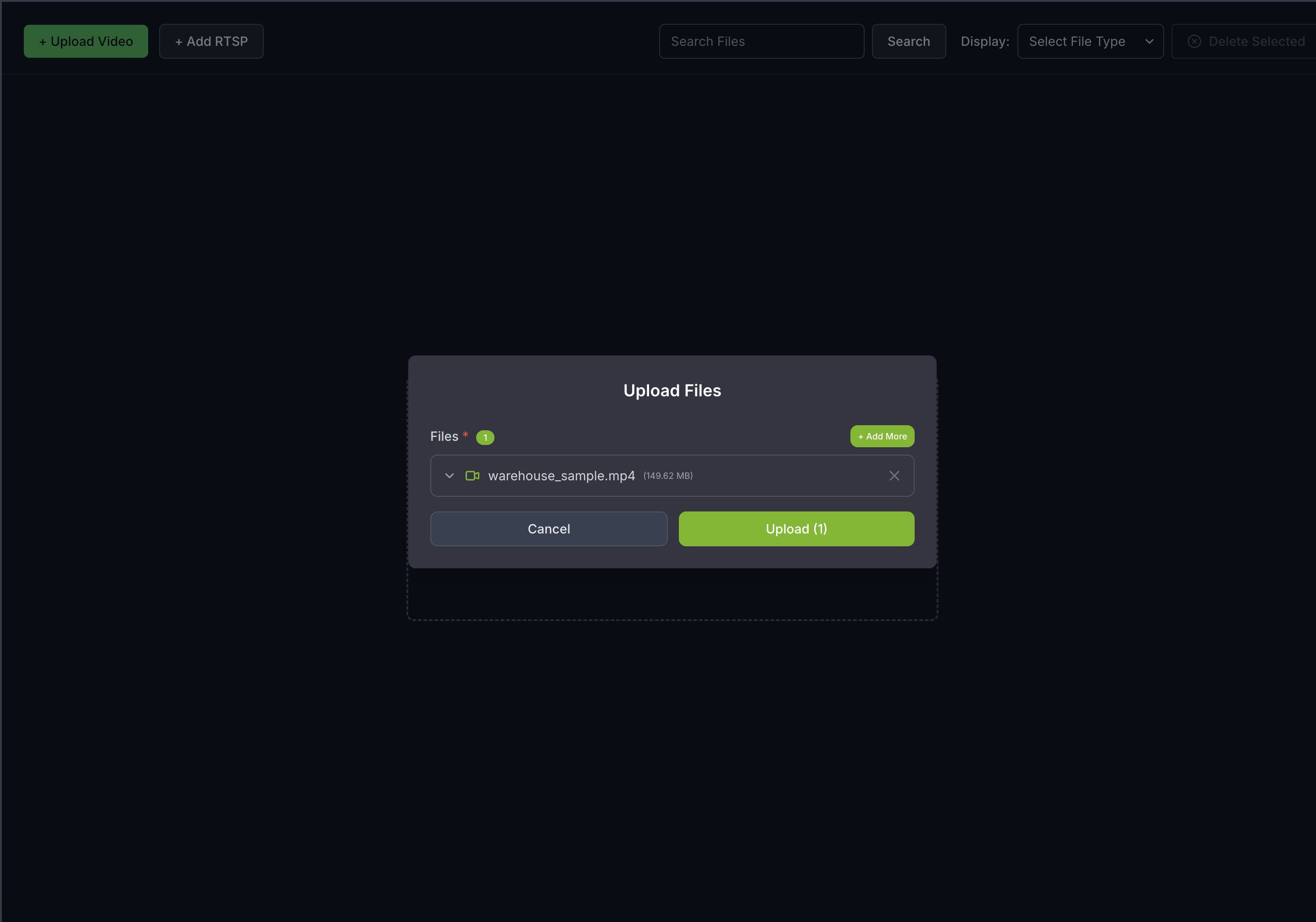

Upload a Video#

Click on the Video Management tab.

Click the Upload Video button.

Select the video file warehouse_sample.mp4 from your local machine.

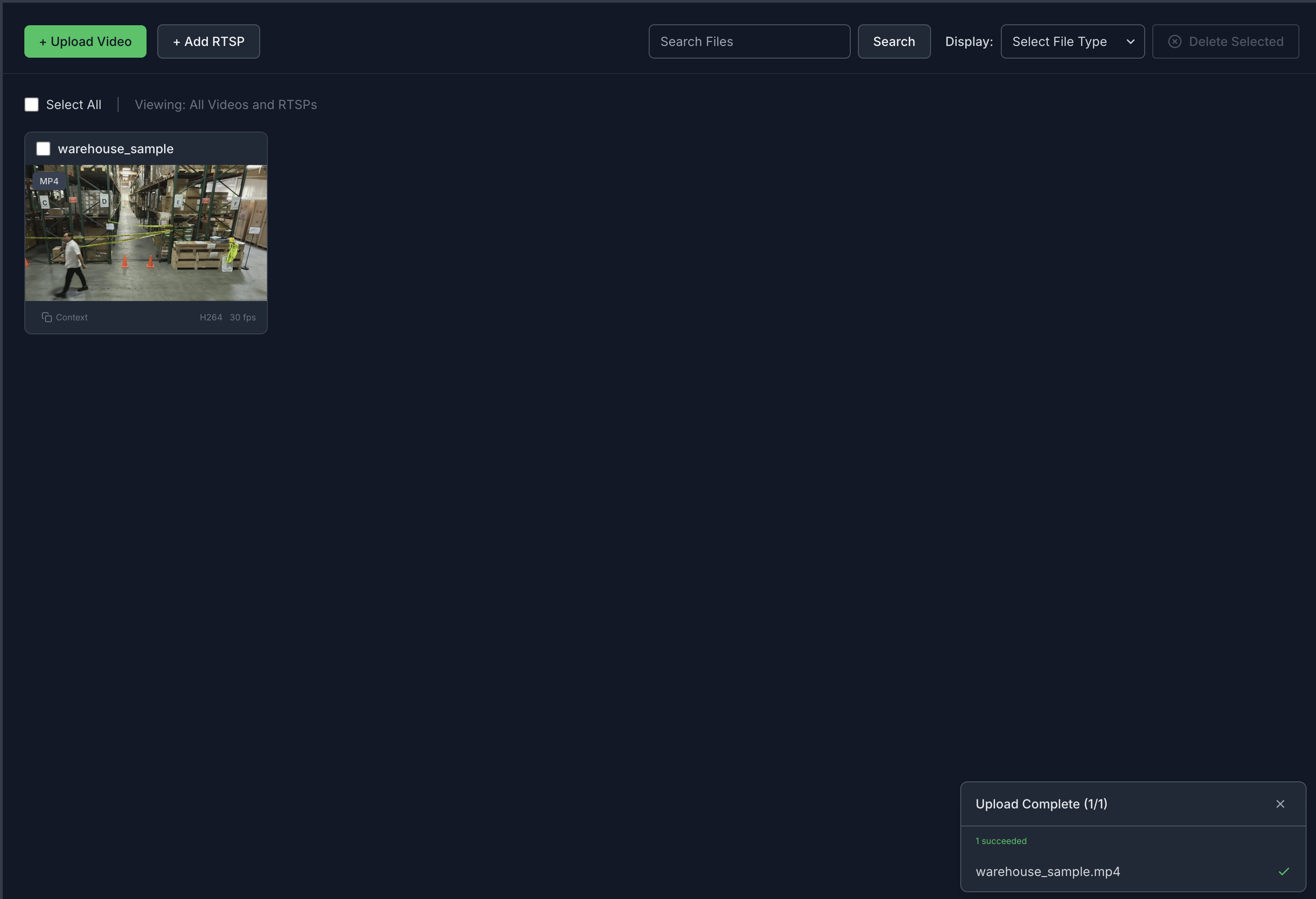

Once the videos are selected, click the Upload button and wait for the upload to finish. This may take a few minutes depending on the size of the videos. Each successfully uploaded video appears in the video list.

Note

Vector embeddings generated for the uploaded videos and streams are retained only until the minimum index age is reached (measured from the time of first upload).

After that, the embeddings are deleted and the uploaded videos and streams are no longer searchable.

However, the uploaded videos and streams are still accessible in the video management tab. To make them searchable again, upload the videos or add the streams again.

The default minimum index age is 48 hours for the Search Workflow. This can be changed in the ILM (Index Lifecycle Management) policy settings in the Dashboard, before the indices expire.
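As a rough planning aid, the point at which content stops being searchable can be estimated from the first upload time. The sketch below assumes only the 48-hour default stated above. `searchable_until` is a hypothetical helper and the upload timestamp is illustrative.

```python
from datetime import datetime, timedelta, timezone

# Default minimum index age stated above for the Search Workflow.
DEFAULT_MIN_INDEX_AGE = timedelta(hours=48)


def searchable_until(first_upload: datetime,
                     min_index_age: timedelta = DEFAULT_MIN_INDEX_AGE) -> datetime:
    """Earliest time at which the embeddings may be deleted."""
    return first_upload + min_index_age


# Illustrative upload time.
uploaded = datetime(2025, 3, 1, 9, 30, tzinfo=timezone.utc)
expiry = searchable_until(uploaded)
print(expiry.isoformat())
```

Treat the result as approximate, since lifecycle policies are evaluated periodically and deletion may happen somewhat later than the computed time.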

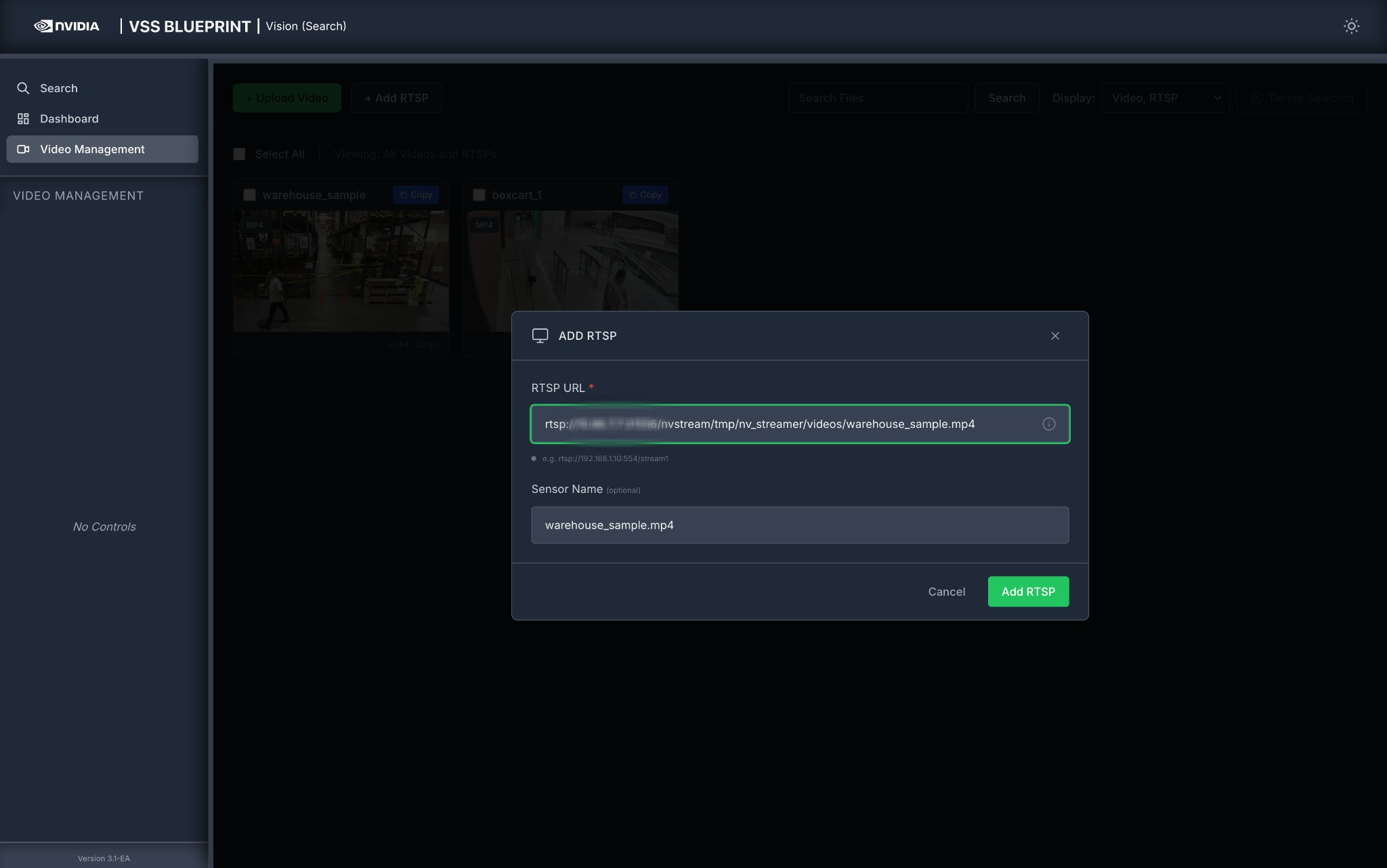

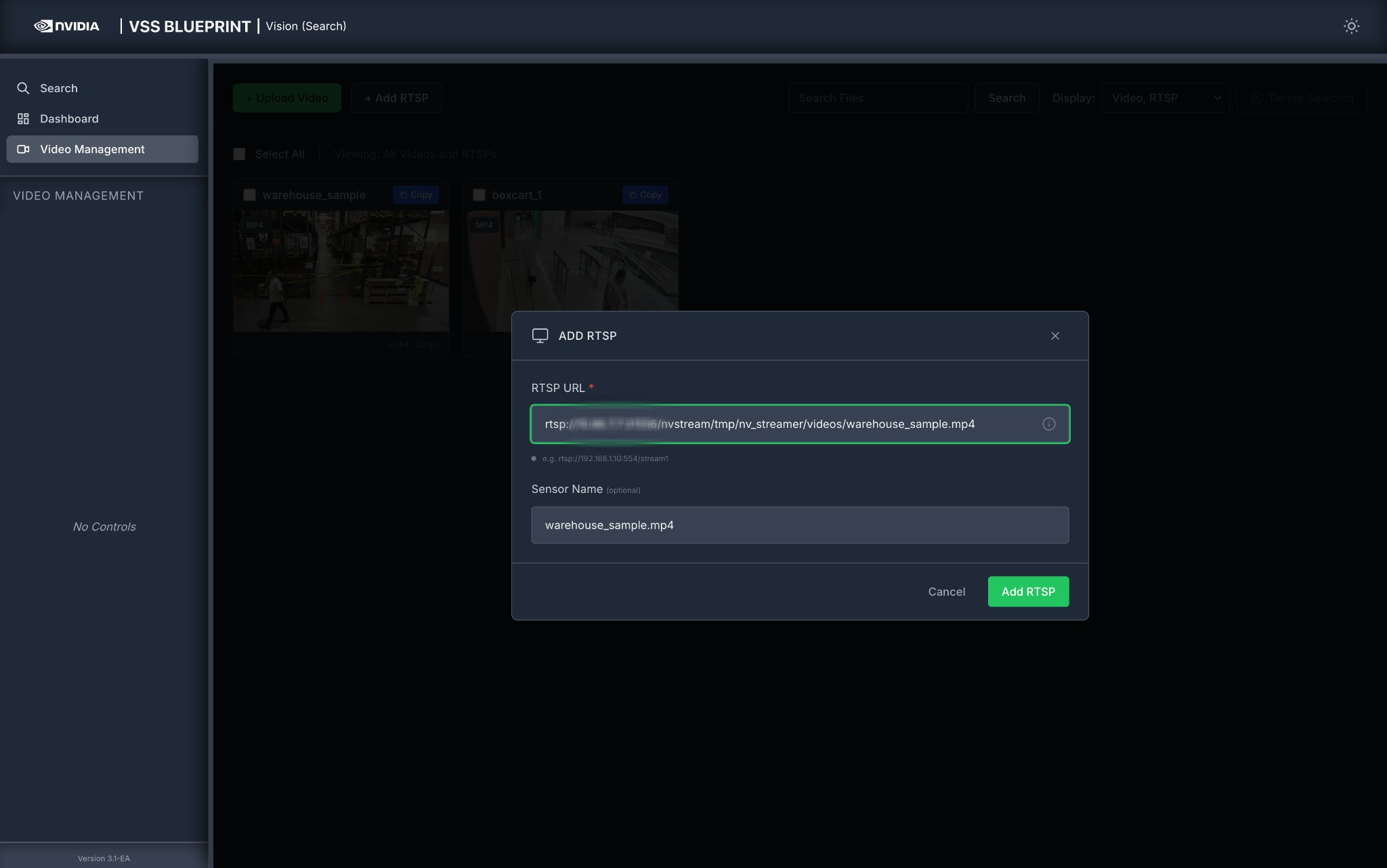

Add an RTSP Stream#

To add a live RTSP stream as a video source:

Click on the Video Management tab.

Click the Add RTSP button.

In the ADD RTSP dialog, enter the following:

RTSP URL (required): The RTSP stream URL (e.g., rtsp://<HOST_IP>:<PORT>/nvstream/tmp/nv_streamer/videos/warehouse_sample.mp4)

Sensor Name (required): A descriptive name for the camera (e.g., warehouse_sample.mp4). This field is automatically populated from the RTSP URL but can be edited.

Click Add RTSP to add the stream, or Cancel to close the dialog.

Once added, the RTSP stream will appear in the video list alongside uploaded videos and can be used for search queries.
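In the example above, the suggested Sensor Name matches the last path segment of the RTSP URL. The sketch below reproduces that mapping. It is an assumption based on this one example, not a description of how the UI derives the name, and `default_sensor_name` and the IP address are hypothetical.

```python
import posixpath
from urllib.parse import urlparse


def default_sensor_name(rtsp_url: str) -> str:
    """Return the last path segment of an RTSP URL (assumed naming rule)."""
    path = urlparse(rtsp_url).path
    return posixpath.basename(path.rstrip("/"))


# 192.0.2.10 is a documentation-only address used here as a placeholder host.
url = "rtsp://192.0.2.10:31556/nvstream/tmp/nv_streamer/videos/warehouse_sample.mp4"
print(default_sensor_name(url))
```

Because the field is editable, a more descriptive camera name can replace the suggestion before the stream is added.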

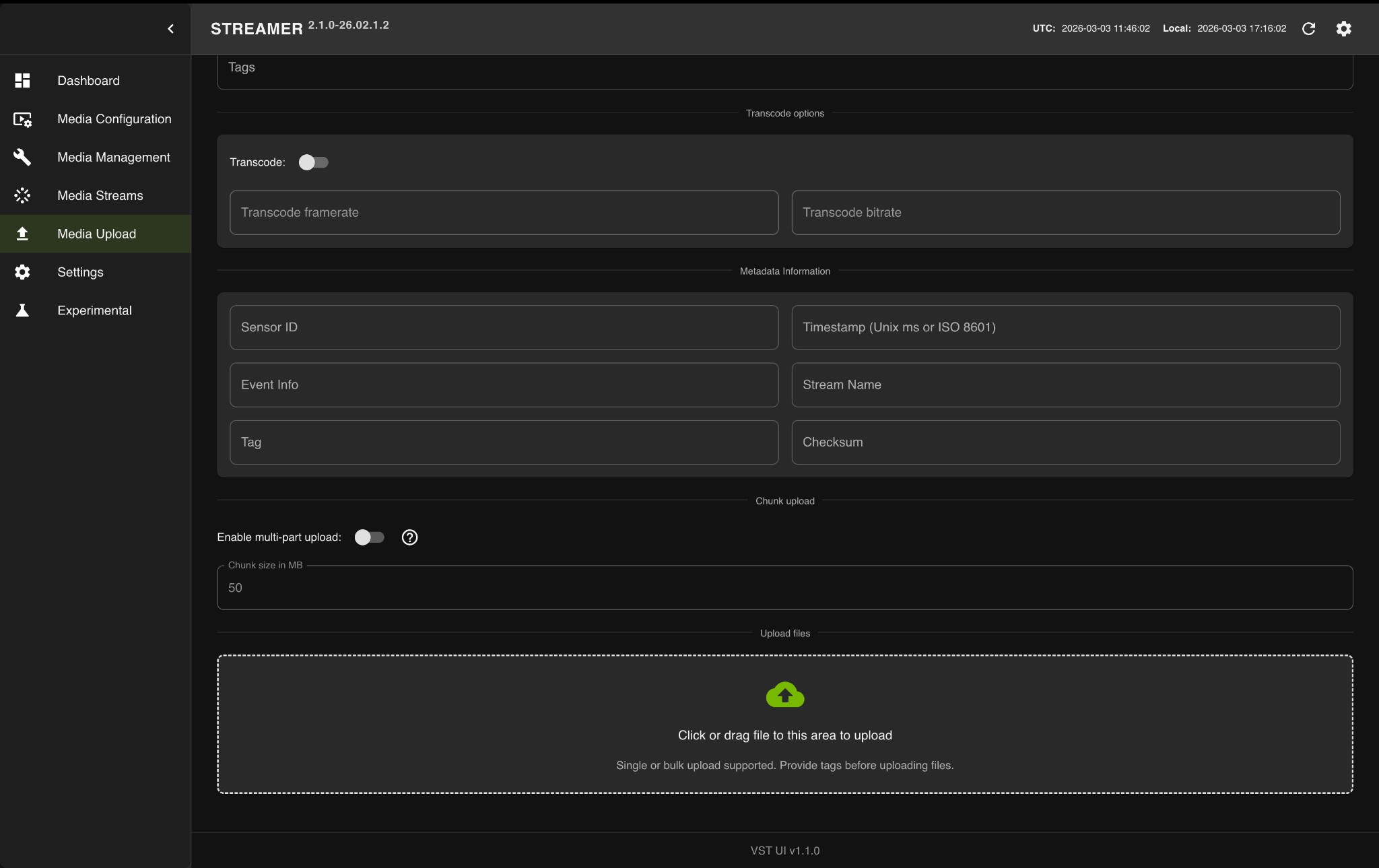

Create an RTSP Stream from a Video Using NVStreamer#

If you have a video file and want to stream it as an RTSP source, you can use the NVStreamer service that is deployed alongside other services.

Access NVStreamer

The NVStreamer UI is available at http://<HOST_IP>:31000, where <HOST_IP> is the same IP address used for the agent UI.

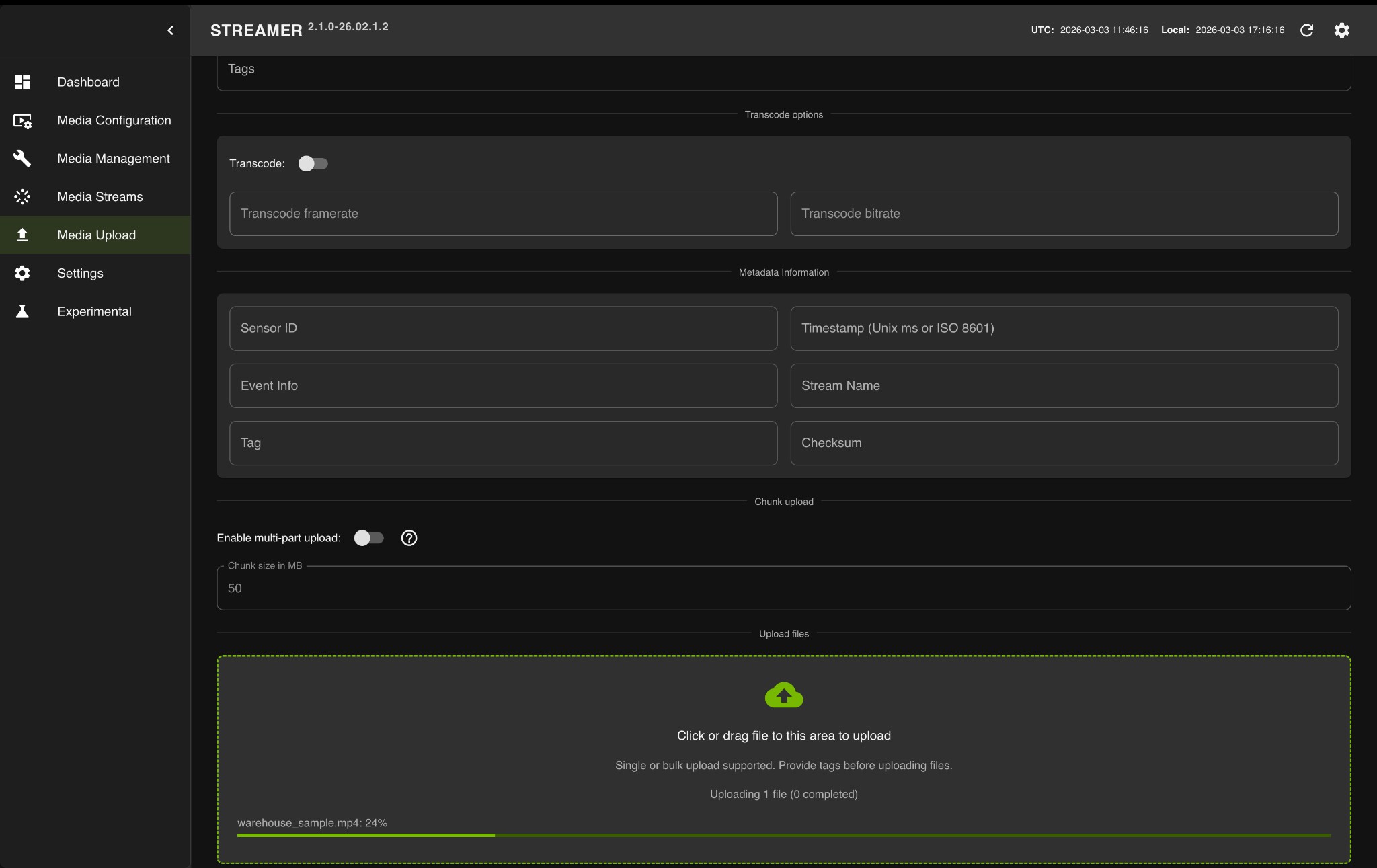

Upload a Video to NVStreamer

Open the NVStreamer UI at http://<HOST_IP>:31000.

Click on the Media Upload tab in the left sidebar.

Click or drag video files to the upload area.

Wait for the upload to complete.

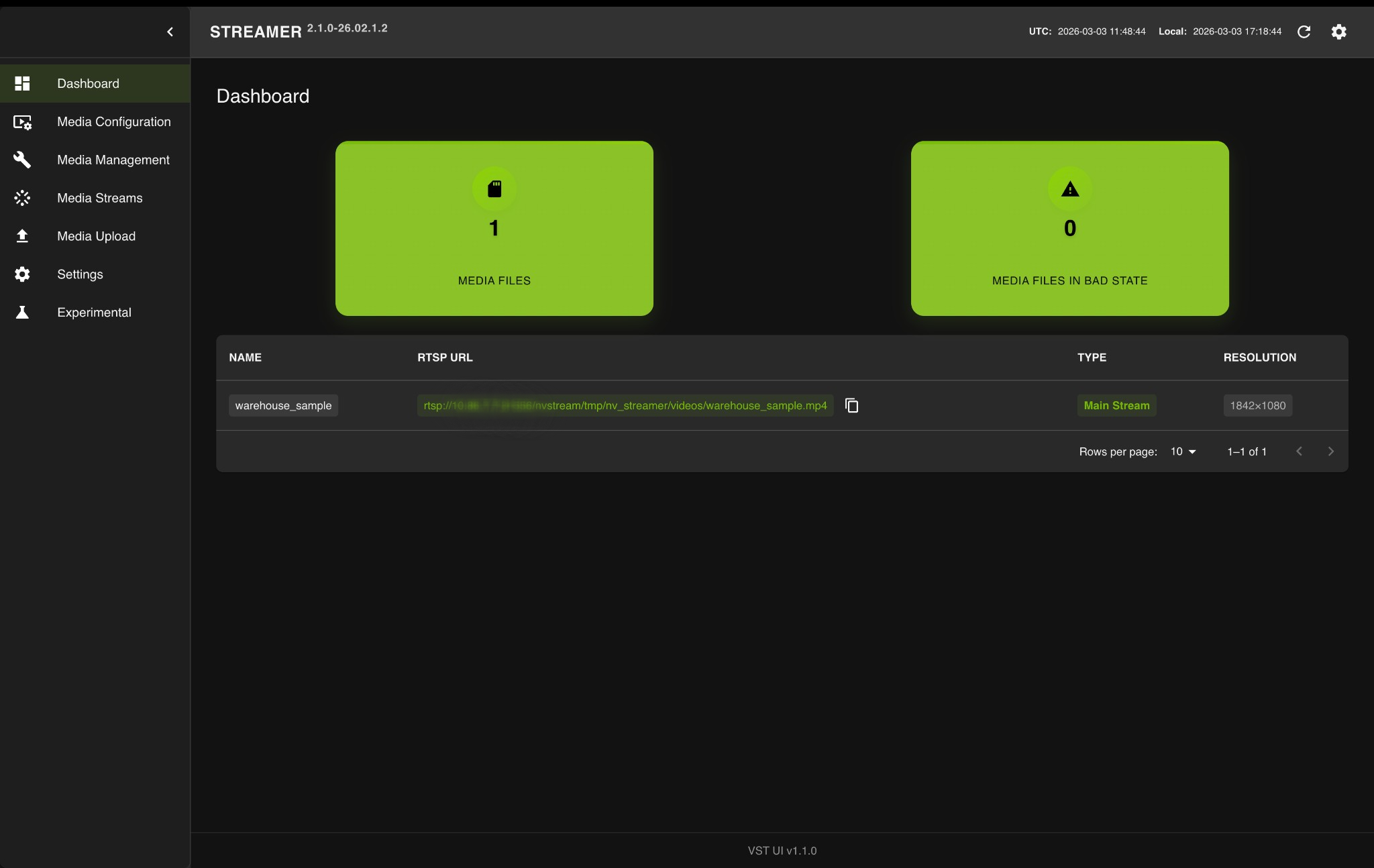

Get the RTSP URL

After the upload completes, click on the Dashboard tab.

Locate your video in the table and copy the RTSP URL using the copy button.

Add the RTSP URL to the Agent

Use the copied RTSP URL (e.g., rtsp://<HOST_IP>:31556/nvstream/tmp/nv_streamer/videos/warehouse_sample.mp4) in the ADD RTSP dialog (see Add an RTSP Stream above) to add it as a video source for search queries. Click Add RTSP to add the stream, or Cancel to close the dialog.

Note

To avoid duplicate results due to looping of the same video, ensure that the RTSP stream is deleted from the video management tab some time after it has been added to the agent for search queries.

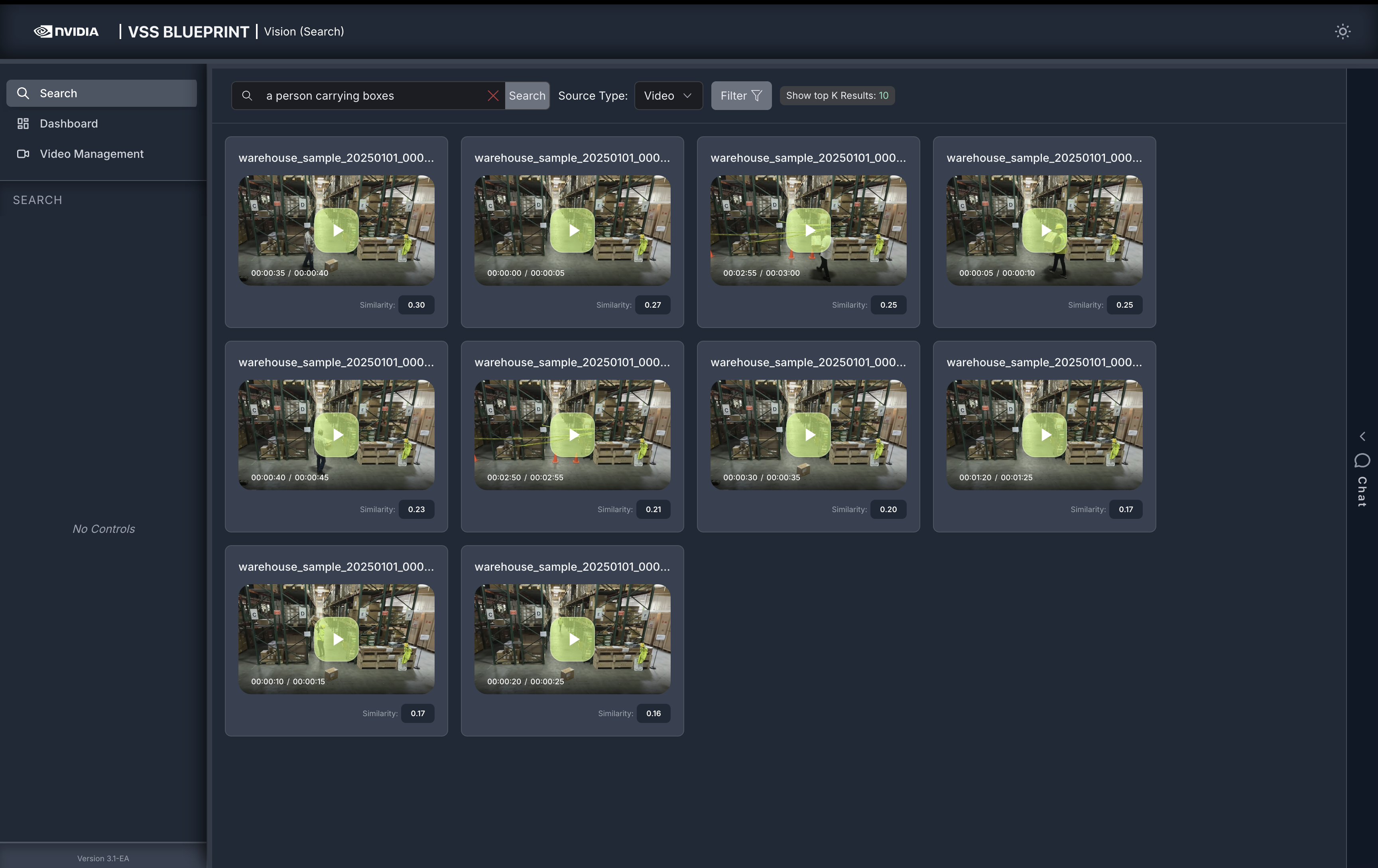

Step 3: Search with a simple query#

Note

To use manual filters, ensure that the Vision Agent chat interface is collapsed.

Navigate to the Search tab and enter a natural language query in the search input box. For example:

a person carrying boxes

Click the Search button to execute the query. The agent will return video clips that match your search description.

Note

By default, the Show top K Results filter is applied to display the top 10 results. This value can be changed in the filters to show more or fewer results.

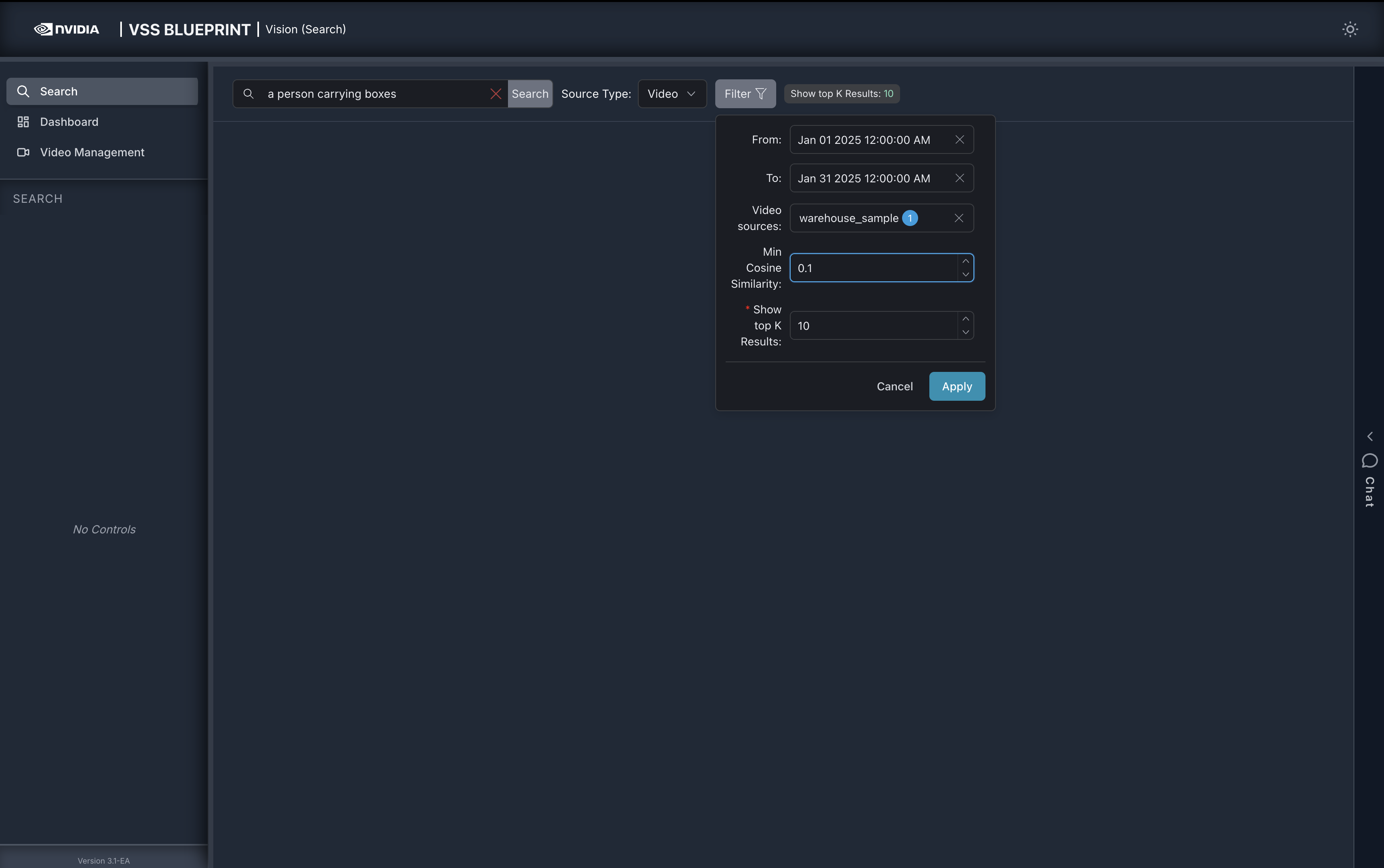

Step 4: Search with additional filters#

To try additional filters, upload the video file sample-warehouse-ladder.mp4 (following the steps in Step 2: Upload a video).

Navigate to the Search tab and click the Filter button to open the filter panel with the following options:

From: Filter results by the start date and time of the video clips

To: Filter results by the end date and time of the video clips

Video sources: Select specific videos to search within

Min Cosine Similarity: Set a minimum similarity threshold (-1.00 to 1.00) to filter results based on how closely they match your query. Set a lower threshold for broader results, or raise it for high-confidence matches. Optimal values vary depending on the video content.

Show top K Results: Set the maximum number of results to display

Source Type: Select the type of media source to search within. Choose between ‘Video’ and ‘RTSP’.

Enter your search query in the Search box, configure the desired filters, and click Confirm to apply.

For example, in the filter panel above:

Set From and To timestamps to filter results within a specific time range

Select specific Video sources to search within particular videos

Set Min Cosine Similarity to 0.2 to only show results with a similarity score of 0.2 or higher

Set Show top K Results to 5 to display at most the top 5 results
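The combined effect of the similarity threshold and the top K limit can be sketched as follows. This is an illustration of the filter semantics described above, not the service's implementation. `apply_filters` and the sample scores are hypothetical.

```python
def apply_filters(results, min_similarity=-1.0, top_k=10):
    """Keep results at or above the threshold, best first, capped at top_k."""
    if not -1.0 <= min_similarity <= 1.0:
        raise ValueError("min_similarity must be between -1.00 and 1.00")
    kept = [r for r in results if r["similarity"] >= min_similarity]
    kept.sort(key=lambda r: r["similarity"], reverse=True)
    return kept[:top_k]


# Hypothetical search results; the similarity values are made up.
hits = [
    {"video_name": "warehouse_sample.mp4", "similarity": 0.41},
    {"video_name": "sample-warehouse-ladder.mp4", "similarity": 0.20},
    {"video_name": "warehouse_sample.mp4", "similarity": 0.15},
    {"video_name": "sample-warehouse-ladder.mp4", "similarity": 0.33},
]
filtered = apply_filters(hits, min_similarity=0.2, top_k=5)
print([r["similarity"] for r in filtered])
```

The sketch keeps results equal to the threshold. The deployed filter may omit them, as listed under Known Issues.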

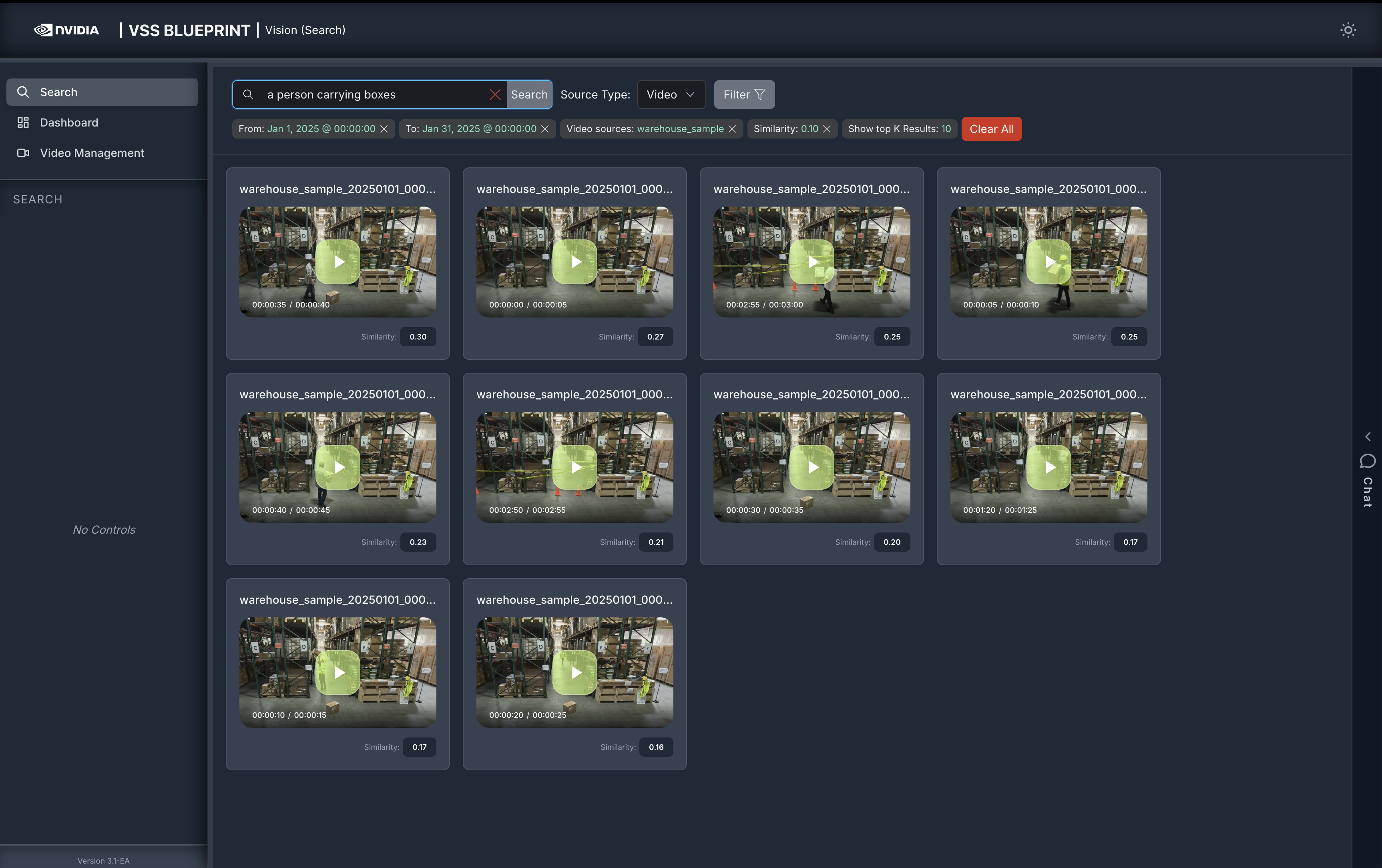

After configuring the filters, the search results are refined based on the applied criteria.

Step 5: Vision Agent Chat#

The Search tab includes a Vision Agent chat interface on the right side that provides an interactive way to search through videos using natural language. The Vision Agent automatically selects the best search method based on your query. You can also specify filters directly within your query instead of using the filter panel.

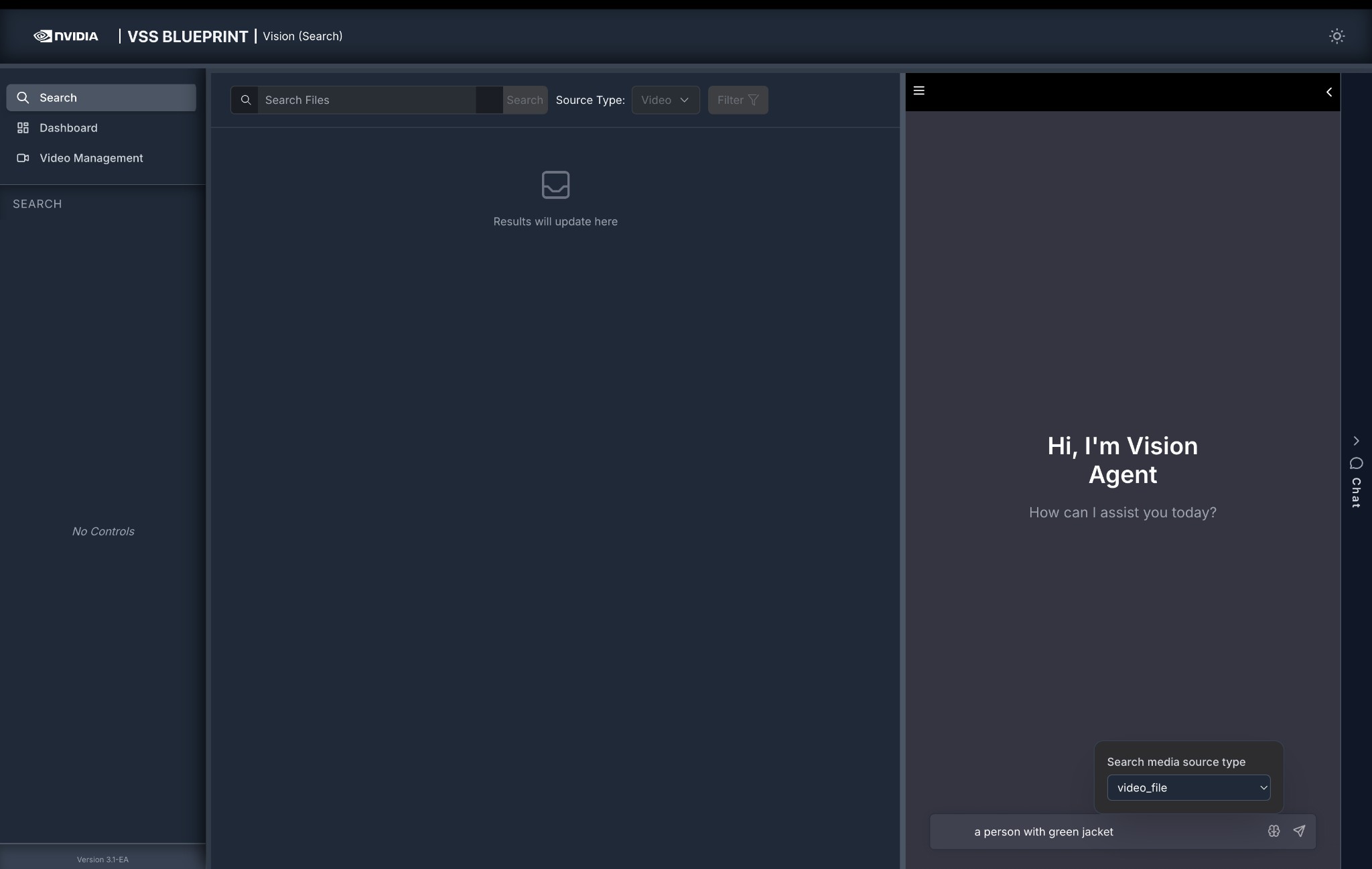

Using the Chat Interface#

Navigate to the Search tab.

Open the Chat panel on the right side of the screen.

Select the Search media source type from the dropdown (e.g., video_file) to specify which sources to search.

Enter a natural language query in the chat input at the bottom (e.g., a person with green jacket).

Press Enter or click the send button to submit your query.

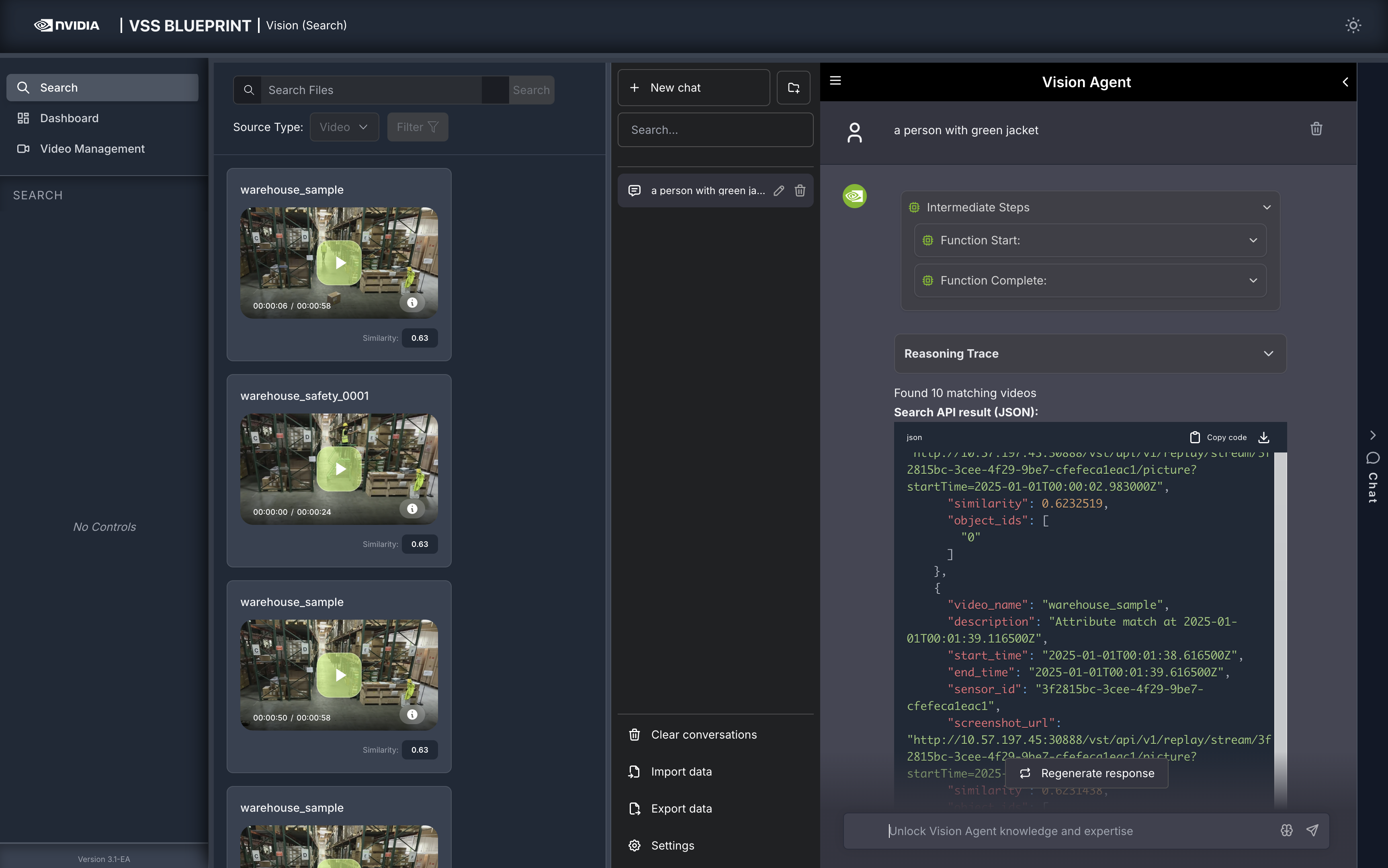

Result Returned by the Agent#

The Vision Agent processes your query, selects the best search method (Embed, Attribute, or Fusion search), and returns matching video clips in the left panel.

Each response in the chat window includes:

Intermediate Steps — Expandable section showing the sequence of function calls and tool invocations the agent makes to process your query.

Reasoning Trace — Step-by-step breakdown of the agent’s decision-making: query decomposition, search method selection, and result summary. Expand each step for full details.

Search Results Summary — Number of matching videos found (e.g., “Found 10 matching videos”).

Search API result (JSON) — Raw JSON response with detailed metadata for each result:

video_name: Name of the source video

description: Match description

start_time/end_time: Clip timestamps in ISO 8601 format

similarity: Cosine similarity score

sensor_id: Source sensor identifier

screenshot_url: URL to the clip thumbnail

object_ids: Detected object identifiers

Download — Download the JSON response as a file.

Regenerate response — Re-run the query for updated results.
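A downloaded response can be post-processed with a few lines of Python. The sketch below is hedged: only the field names come from the list above, while the top-level list shape, the sample values, and the `clip_durations` helper are assumptions for illustration.

```python
import json
from datetime import datetime

# Hypothetical response; only the field names are taken from the documentation.
raw = """
[
  {
    "video_name": "warehouse_sample.mp4",
    "description": "",
    "start_time": "2025-01-01T00:00:05+00:00",
    "end_time": "2025-01-01T00:00:10+00:00",
    "similarity": 0.31,
    "sensor_id": "warehouse_sample.mp4",
    "screenshot_url": "http://example.invalid/thumb.jpg",
    "object_ids": ["12"]
  }
]
"""


def clip_durations(results):
    """Return the length of each clip in seconds from its ISO 8601 timestamps."""
    durations = []
    for item in results:
        start = datetime.fromisoformat(item["start_time"])
        end = datetime.fromisoformat(item["end_time"])
        durations.append((end - start).total_seconds())
    return durations


results = json.loads(raw)
print(clip_durations(results))
```

If the real timestamps use a different ISO 8601 variant (for example a trailing Z), the parsing step may need adjusting depending on the Python version.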

Note

The Vision Agent chat interface in the Search tab only supports searching for events, actions, and object attributes in videos and RTSP streams.

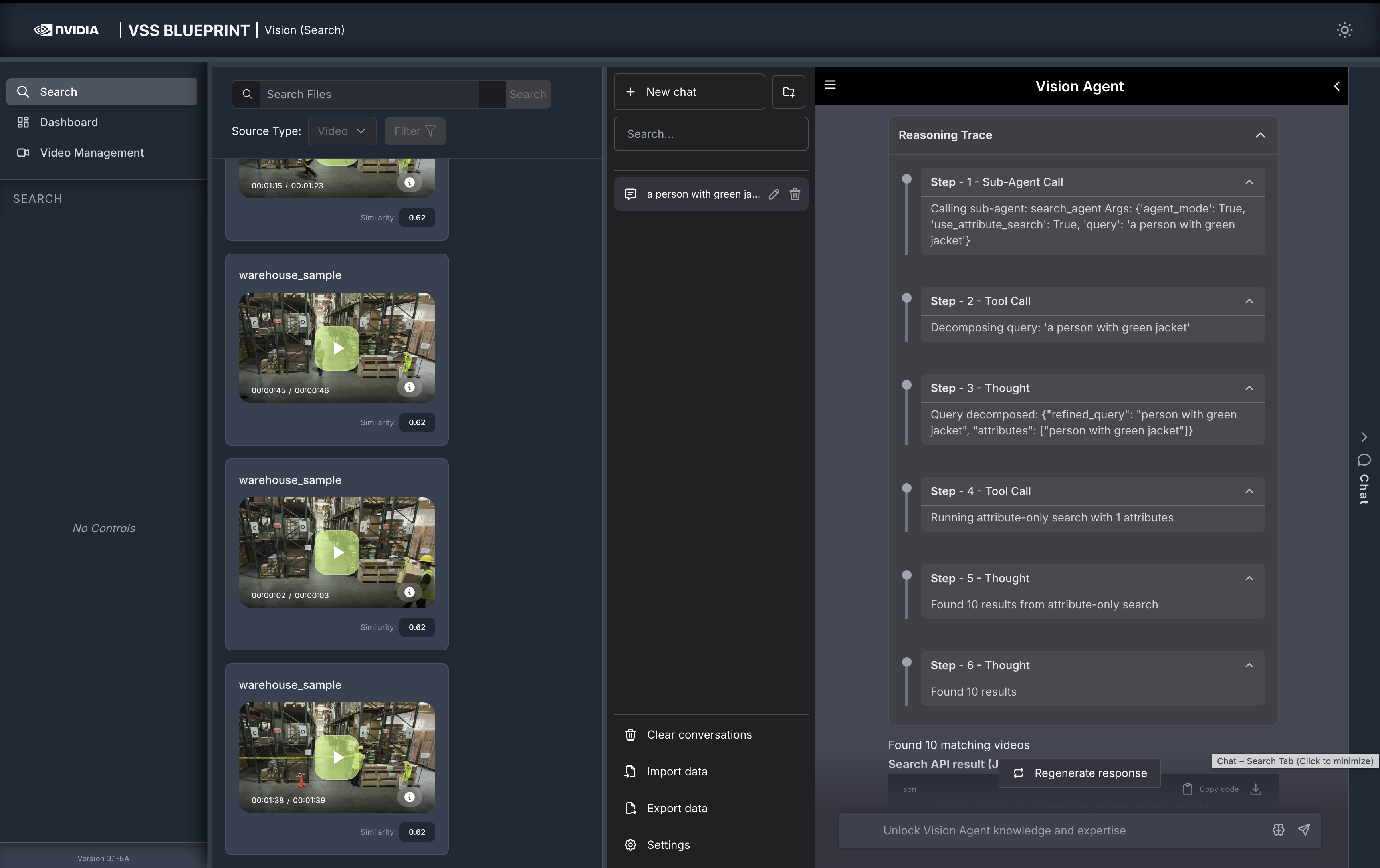

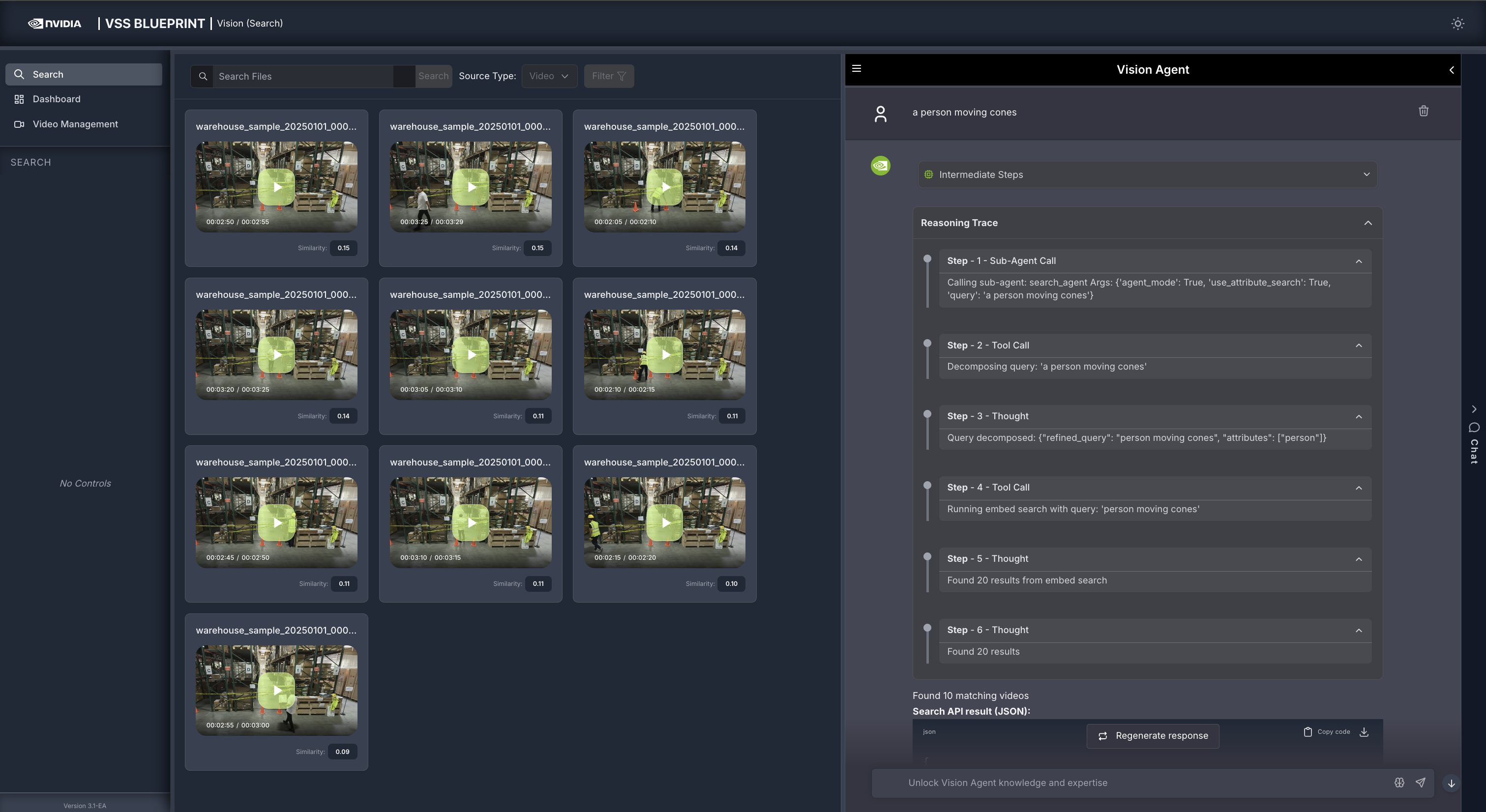

Reasoning Trace

The Reasoning Trace section provides a step-by-step breakdown of the agent’s internal decision-making process. You can see which search method was used and how the agent interpreted your query by expanding the Reasoning Trace in the chat response.

It shows:

Sub-Agent Call — The initial call to the search_agent with parameters such as agent_mode, use_attribute_search, and the user query.

Tool Call — Query decomposition step where the agent breaks down the natural language query into a refined_query and extracted attributes.

Thought — The agent’s interpretation, showing the search method selected (Embed, Attribute, or Fusion) and the final result count.

Understanding Search Types in Agent Chat

The Vision Agent uses three types of search methods to find relevant video clips. It automatically selects the best search method based on your query:

- Embed Search

Searches for events, actions, and activities in videos (e.g., “carrying boxes”, “walking”, “driving”)

Uses semantic embeddings to understand the context and meaning of actions

Used for queries that describe what is happening in the video

Note: Searching from the search input box performs embed search only.

- Attribute Search

Searches for visual descriptors and object attributes (e.g., “person with green jacket”, “person in a hard hat”)

Uses behavior embeddings to find specific visual characteristics

Used for queries that describe how objects or people look

Note: Results with the same object (same sensor_id and object_id) are automatically merged together, combining their time ranges into a single longer clip. Clips shorter than 1 second are extended to at least 1 second. This means attribute search results can have variable durations depending on how many times the same object appears in the top results.

Multiple Attributes: When multiple attributes are recognized in an attribute-only search, the system uses “append mode” - each attribute is searched independently with the requested top_k, and results from all attributes are combined.
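The merging rule for attribute results can be sketched as below. This is a simplified illustration under stated assumptions: times are plain seconds rather than ISO 8601 timestamps, short clips are padded at the end (the text does not say which side is extended), and `merge_attribute_hits` is a hypothetical name.

```python
def merge_attribute_hits(hits, min_duration=1.0):
    """Merge hits sharing (sensor_id, object_id) and pad clips shorter than min_duration."""
    merged = {}
    for hit in hits:
        key = (hit["sensor_id"], hit["object_id"])
        start, end = hit["start"], hit["end"]
        if key in merged:
            prev_start, prev_end = merged[key]
            # Combine the time ranges into one longer clip.
            start, end = min(start, prev_start), max(end, prev_end)
        merged[key] = (start, end)

    clips = []
    for (sensor_id, object_id), (start, end) in merged.items():
        if end - start < min_duration:
            end = start + min_duration  # assumption: extend at the end of the clip
        clips.append({"sensor_id": sensor_id, "object_id": object_id,
                      "start": start, "end": end})
    return clips


# Hypothetical hits: object 7 appears twice, object 9 once in a very short clip.
hits = [
    {"sensor_id": "cam1", "object_id": 7, "start": 2.0, "end": 2.4},
    {"sensor_id": "cam1", "object_id": 7, "start": 5.0, "end": 6.5},
    {"sensor_id": "cam1", "object_id": 9, "start": 10.0, "end": 10.2},
]
for clip in merge_attribute_hits(hits):
    print(clip)
```

In this example the two hits for object 7 become one clip from 2.0 to 6.5 seconds, which shows why attribute results can vary in duration.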

- Fusion Search

Combines both Embed and Attribute search for queries that include both actions and visual descriptors

First finds relevant events using embed search, then reranks the embed results by the requested attributes

Automatically falls back to attribute-only search if the embed search confidence is low

Used for complex queries like “a person with a green jacket carrying boxes”

Multiple Attributes in Fusion: When multiple attributes are recognized in fusion search, the system uses “fuse mode” - each attribute is searched with top_k=1 in the same video, and object IDs from all matching attributes are combined into a single result with one screenshot. This ensures that fusion results show objects that match all specified attributes together.

Fusion Algorithm Options

By default, fusion search uses Reciprocal Rank Fusion (RRF) with default weights. No configuration changes are required.

If you need to customize the fusion algorithm, you can modify the settings in the search agent configuration file at deployments/developer-workflow/dev-profile-search/vss-agent/configs/config.yml:

Reciprocal Rank Fusion (RRF) - Default

Formula: rrf_score = 1.0 / (rank_action + rrf_k) + rrf_w * normalised_attribute_score

search_agent:
  fusion_method: rrf
  rrf_k: 60 # RRF constant k
  rrf_w: 0.5 # RRF weight for attribute component

Weighted Linear Fusion

Formula: fusion_score = w_embed * embed_score + w_attribute * normalised_attribute_score

search_agent:
  fusion_method: weighted_linear
  w_embed: 0.35 # Weight for embed score
  w_attribute: 0.55 # Weight for attribute score
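Both formulas above translate directly into code. The sketch below uses the documented default values. The function names, the 1-based rank, and the candidate scores are assumptions made for illustration.

```python
def rrf_score(rank_action, normalised_attribute_score, rrf_k=60, rrf_w=0.5):
    """Reciprocal Rank Fusion score, following the formula above."""
    return 1.0 / (rank_action + rrf_k) + rrf_w * normalised_attribute_score


def weighted_linear_score(embed_score, normalised_attribute_score,
                          w_embed=0.35, w_attribute=0.55):
    """Weighted linear fusion score, following the formula above."""
    return w_embed * embed_score + w_attribute * normalised_attribute_score


# Hypothetical candidates: (clip, rank from embed search, normalised attribute score).
candidates = [("clip_a", 1, 0.2), ("clip_b", 2, 0.9)]
reranked = sorted(candidates, key=lambda c: rrf_score(c[1], c[2]), reverse=True)
print([name for name, _, _ in reranked])
```

With rrf_k set to 60, the rank term is at most about 0.016, so under the default rrf_w the attribute score largely determines the final order. In this example clip_b overtakes clip_a despite its lower embed rank.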

Embed Confidence Threshold

You can also configure the embed_confidence_threshold parameter to control when fusion search falls back to attribute-only search:

search_agent:
  embed_confidence_threshold: 0.1 # Minimum embed score to proceed with fusion

Understanding Search Path Selection

The Vision Agent automatically selects the best search method based on your query. Here’s how different queries are processed:

Example 1: Embed Search Only

- Query: “a person moving cones”

Search Type: Embed Search

Why: The query describes an action/event with no descriptive visual attributes

Process: Searches event embeddings to find clips showing the action

Example 2: Attribute Search Only

- Query: “person in green jacket”

Search Type: Attribute Search

Why: The query contains only visual descriptors (attributes), no actions

Process: Searches behavior embeddings to find objects matching the visual description

Example 3: Fusion Search

- Query: “a person carrying boxes in green jacket”

Search Type: Fusion Search

Why: The query contains both visual attributes (“green jacket”) and an action (“carrying boxes”)

Process:

1. First performs Embed Search to find clips showing “carrying boxes”

2. Then reranks the embed results by the requested attributes (e.g., “green jacket”)

3. Combines scores from both searches for more accurate results

Example 4: Fallback to Attribute Search

- Query: “person running in green jacket”

Search Type: Attribute Search (fallback from Fusion)

Why: The query contains both attributes and action, but the embed search confidence is too low (below embed_confidence_threshold)

Process: Falls back to Attribute Search only when embed search confidence is insufficient for fusion
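The four examples above follow a simple decision rule, sketched below. In the product, the agent's query decomposition decides whether a query contains an action or attributes. Here those are passed in as booleans, and `select_search_method` and the confidence values are hypothetical.

```python
def select_search_method(has_action, has_attributes,
                         embed_confidence=None, embed_confidence_threshold=0.1):
    """Pick embed, attribute, or fusion search from the decomposed query."""
    if has_action and has_attributes:
        # Fusion needs a sufficiently confident embed result; otherwise fall back.
        if embed_confidence is not None and embed_confidence < embed_confidence_threshold:
            return "attribute"
        return "fusion"
    if has_attributes:
        return "attribute"
    return "embed"


print(select_search_method(True, False))                        # "a person moving cones"
print(select_search_method(False, True))                        # "person in green jacket"
print(select_search_method(True, True, embed_confidence=0.4))   # action plus attribute
print(select_search_method(True, True, embed_confidence=0.05))  # low embed confidence
```

The default threshold of 0.1 matches the embed_confidence_threshold value shown in the configuration above.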

Critic Agent Overview

The Critic Agent is a specialized agent that reviews search results and removes any results that do not match the query’s parameters. Note that this may result in fewer results than requested.

When deployed, the critic agent reviews all search results before they are returned to the user and removes results that do not match the query. The agent can be configured to repeat the search process to improve results; each iteration finds new candidates to replace removed results. By default, the critic agent does not repeat the search and only modifies the initial search results.

How the Critic Agent Appears in the Reasoning Trace

When the critic agent is enabled, the Vision Agent’s reasoning trace shows the verification flow:

Verifying results with critic agent — The critic agent is invoked to evaluate a set of candidates (e.g., 20 results).

Critic verification complete — The trace reports how many results were verified versus unverified (e.g., “11/20 results verified, 0/20 results unverified”).

Found N results — The final count reflects only the results that passed critic verification.

Critic Agent Input and Output

The critic agent uses a VLM (Vision Language Model) to verify each search result clip against the user query. It receives the query and metadata for multiple video clips, fetches and analyzes each clip via the VLM, then classifies each clip as confirmed, rejected, or unverified.

Input — For each search result clip, the critic receives:

The user query (e.g., “a person carrying boxes”).

Video metadata for that clip:

sensor_id, start_time, and end_timestamp.

Verification — For each clip:

The query is turned into a verification prompt that asks the VLM to break the query into criteria and judge each as true or false for that video.

A playable URL for the clip is obtained and the clip plus the prompt is sent to the VLM.

The VLM returns a small JSON object per clip, e.g.,

{"person": true, "carrying boxes": false}.

Decision — Each clip is classified as follows:

CONFIRMED — Every criterion is true → keep the result.

REJECTED — Any criterion is false → remove the result; optionally the system can increment top_k and run another search iteration to find more candidates.

UNVERIFIED — Response missing or not parseable → keep the result (treated as “could not verify”), with a warning.

The agent output includes a result (e.g., "confirmed" or "rejected") and a criteria_met breakdown (e.g., person: true, carrying boxes: false) so you can see why a segment was confirmed or rejected.
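The decision rule can be sketched as a small function over the VLM's per-clip JSON. This is a minimal illustration, not the critic agent's code. `classify_clip` is a hypothetical name, and treating non-boolean criteria as unparseable is an assumption.

```python
import json


def classify_clip(vlm_response):
    """Classify one clip as confirmed, rejected, or unverified from the VLM's JSON."""
    try:
        criteria = json.loads(vlm_response) if vlm_response else None
    except json.JSONDecodeError:
        criteria = None

    # Missing, malformed, or non-boolean responses cannot be verified (assumption).
    parseable = (
        isinstance(criteria, dict)
        and bool(criteria)
        and all(isinstance(value, bool) for value in criteria.values())
    )
    if not parseable:
        return {"result": "unverified", "criteria_met": {}}
    if all(criteria.values()):
        return {"result": "confirmed", "criteria_met": criteria}
    return {"result": "rejected", "criteria_met": criteria}


print(classify_clip('{"person": true, "carrying boxes": false}'))
print(classify_clip('{"person": true, "carrying boxes": true}'))
print(classify_clip("not json"))
```

Keeping unverified clips, rather than dropping them, means a VLM outage degrades filtering quality instead of emptying the result list.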

Temporal Deduplication for Video Embeddings

Temporal deduplication is an optional ingestion optimization that keeps only embeddings for new or changing content and skips those similar to recent ones, yielding a smaller, more meaningful set with less storage and processing. It uses a sliding-window algorithm:

Window — A fixed-size buffer holds the last N vectors (e.g., 60); when full, the oldest is dropped.

Similarity — For each new embedding, count how many consecutive window entries (newest backward) are “similar” (distance or cosine-similarity threshold). Only consecutive similar neighbors are counted.

Decision — Below a minimum count (e.g., 3) → store (novel or transitional). At or above minimum → skip (redundant).

Max interval — If too much time has passed since the last stored point, always store the current point (long gaps are never dropped).

Example (Conceptual)

Suppose the window holds recent embeddings for scenes A, A, B, B, B (oldest to newest).

New point like B: Counting backward gives B, B, B → 3 consecutive similar neighbors. Result: Skip (redundant: same pattern as recent B’s).

New point like C (different scene): Counting backward, C is not similar to B → 0 consecutive similar neighbors. Result: Store (novel: new pattern).

New point like B, just after a run of A: Here the window is A, A, A, B, B. Counting backward gives B, B → 2 similar; then A is not similar, so the count stays at 2. Result: Store (transitional: B is “new” relative to recent A’s).

So the same “B-like” embedding is sometimes stored (when it marks a transition) and sometimes skipped (when it’s just more of the same), which is what you want for temporal deduplication.
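The sliding-window procedure can be sketched in Python as follows. This is an illustration under stated assumptions rather than the microservice's implementation: the class name, the cosine threshold of 0.9, the 30-second maximum interval, and the choice to add every incoming vector to the window (stored or not) are all hypothetical.

```python
import math
from collections import deque


def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class TemporalDeduplicator:
    def __init__(self, window_size=60, similarity_threshold=0.9,
                 min_similar=3, max_interval=30.0):
        self.window = deque(maxlen=window_size)  # oldest entry drops when full
        self.similarity_threshold = similarity_threshold
        self.min_similar = min_similar
        self.max_interval = max_interval  # seconds
        self.last_stored_at = None

    def consecutive_similar(self, embedding):
        """Count similar window entries, newest backward, stopping at the first miss."""
        count = 0
        for previous in reversed(self.window):
            if cosine_similarity(embedding, previous) < self.similarity_threshold:
                break
            count += 1
        return count

    def should_store(self, embedding, timestamp):
        count = self.consecutive_similar(embedding)
        overdue = (self.last_stored_at is not None
                   and timestamp - self.last_stored_at > self.max_interval)
        store = count < self.min_similar or overdue
        self.window.append(embedding)  # assumption: the window tracks every vector seen
        if store:
            self.last_stored_at = timestamp
        return store


# Toy orthogonal vectors standing in for scenes A, B, and C.
A, B, C = (1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)
dedup = TemporalDeduplicator()
dedup.window.extend([A, A, B, B, B])
dedup.last_stored_at = 0.0
print(dedup.should_store(B, 1.0))  # False: three similar neighbors, so skip
print(dedup.should_store(C, 2.0))  # True: no similar neighbors, so store
```

Counting only consecutive neighbors is what lets a B-like point be stored at a transition and skipped once the scene has settled.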

Advantages

Fewer embeddings and faster search while preserving scene changes and transitions; loops and repetitive content are deduplicated. Window size, similarity threshold, and minimum-neighbor count are configurable (stricter = more points kept; looser = more compression).

Caveat

Deduplication is lossy — skipped embeddings do not appear in search results. A higher similarity threshold reduces missing important transitions but can lower query recall (e.g., a static 30-second scene may return results covering only part of it). Use this feature when optimizing storage or search performance by reducing embedding volume.

Step 6: Delete Videos or Streams#

To remove uploaded videos or RTSP streams from the agent:

Click on the Video Management tab.

Select the video(s) or stream(s) you want to delete by clicking the checkbox next to each item, or click Select All to select all items.

Click the Delete Selected button in the top-right corner.

The selected videos or streams will be removed from the video list and will no longer appear in search results.

Note

Deleting a video or stream removes it from the agent’s video management. For uploaded videos, the vector embeddings associated with the deleted videos will also be removed. For RTSP streams, the stream will be disconnected and removed from the video list in video management tab; however, any data previously ingested from those streams will be retained and will remain available for chat and search queries.

Step 7: Tear down the agent#

To tear down the agent, run the following command:

scripts/dev-profile.sh down

This command will stop and remove the agent containers.

Known Issues#

When setting a filter threshold for minimum cosine similarity, results with similarity scores equal to the threshold may be omitted.

A race condition between RTVI-embed and LLM NIM during deployment can result in an unhealthy state for the RTVI-embed container. To resolve this:

Stop the LLM NIM.

Wait for RTVI-embed to become healthy.

Restart LLM NIM.

Queries with negative intent (e.g., “people without a yellow hat”) may return the same results as positive intent queries (e.g., “people with a yellow hat”).

Sometimes, the agent may also return false positive results (i.e., results that are not relevant to the query).

Queries with a single word (e.g., “person”) may return no results.

The duration of video clips in search results may be longer than the displayed duration.

‘Description’ is empty in the response generated by the Vision Agent chat interface.

‘Source Type’ is not available in the response generated by the Vision Agent chat interface.

Adding 8 or more RTSP streams to the search profile may result in degraded FPS in the Perception service (RTVI-CV).

Sometimes, if there are 8 or more RTSP streams, one of them may drop after a few days of continuous usage.

By default, the timestamps for uploaded videos start from 2025-01-01 00:00:00.

Deleting an RTSP stream that has ended may cause a subsequent stream addition or video upload to fail.

The chat interface response may contain more unique object IDs than the number of unique objects detected in the video.

When the critic agent is enabled and VLM service is not available, the search results may not appear in the main window.

Occasionally, the search results may include results with file name ‘0’ with 0 duration.

For RTSP streams with H265 encoding that have been removed, the thumbnail may not be visible in the VSS UI. See Image capture failure for more details.

An ‘Index not found’ error may occur when there are no videos corresponding to the selected source type.

When uploading a video to VIOS, if the video is larger than the maximum upload size, the upload may fail. See Why do large file uploads to VIOS fail? for more details.