Getting Started

This guide will help you access and start using the VSS Auto Calibration Microservice and User Interface.

Prerequisites

Before using the microservice and UI, ensure you have:

System Requirements

x86_64 system

OS Ubuntu 24.04

NVIDIA GPU with hardware encoder (NVENC)

NVIDIA driver 580

Docker (set up to run without sudo privileges)

NVIDIA container toolkit (Refer to the Prerequisites section)
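The system requirements above can be checked from a terminal before deploying. The following is a minimal sketch, not an official tool: the `report` and `check_prereqs` helper names are illustrative, each check only confirms that a command runs, and neither the driver version nor NVENC support is verified.

```shell
# Pre-flight sketch: prints OK or MISSING for each prerequisite without aborting.
report() {  # report <label> <command...>
  local label="$1"
  shift
  if "$@" >/dev/null 2>&1; then
    echo "OK       $label"
  else
    echo "MISSING  $label"
  fi
}

check_prereqs() {
  report "x86_64 architecture"        test "$(uname -m)" = "x86_64"
  report "Ubuntu 24.04"               grep -q 'VERSION_ID="24.04"' /etc/os-release
  report "NVIDIA driver (nvidia-smi)" nvidia-smi
  report "Docker without sudo"        docker info
  report "NVIDIA container toolkit"   nvidia-ctk --version
}

check_prereqs
```

Any line marked MISSING points at a prerequisite to revisit; a line marked OK still needs a manual look where a specific version is required.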

Required

At least 2 camera video files (MP4, AVI, MOV, or MKV format)

Layout/map image (PNG, JPG, or JPEG format)

Optional

Ground truth data (ZIP file) for calibration evaluation

Pre-existing alignment data (JSON file)

Focal length values for cameras

Configuration parameters for your dataset, if any
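Before opening the UI, it can be convenient to confirm that a dataset folder holds the required inputs. The sketch below assumes all inputs sit in one directory (an assumption, not a requirement stated in this guide); `check_inputs` is a hypothetical helper name, and only file extensions are inspected, not file contents.

```shell
# Counts candidate video and layout files by extension (case-insensitive).
check_inputs() {  # check_inputs <dataset-dir>
  local dir="$1" videos layouts
  videos=$(find "$dir" -maxdepth 1 -type f \
    \( -iname '*.mp4' -o -iname '*.avi' -o -iname '*.mov' -o -iname '*.mkv' \) \
    | wc -l | tr -d ' ')
  layouts=$(find "$dir" -maxdepth 1 -type f \
    \( -iname '*.png' -o -iname '*.jpg' -o -iname '*.jpeg' \) \
    | wc -l | tr -d ' ')
  echo "videos=$videos layouts=$layouts"
  # Succeeds only with at least 2 videos and at least 1 layout image.
  [ "$videos" -ge 2 ] && [ "$layouts" -ge 1 ]
}
```

For example, `check_inputs ./my_dataset && echo "inputs look complete"` prints the counts and then the confirmation only when both minimums are met (`./my_dataset` is a placeholder path).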

Deployment Steps (Docker Compose)

Deploy the UI and backend microservice using Docker Compose. The deployment currently uses the VSS Auto Calibration resources shipped with the Warehouse Blueprint; refer to the Warehouse Blueprint Introduction for more details.

Set up NGC access:

# Set up NGC access
export NGC_CLI_API_KEY=<NGC_CLI_API_KEY>
export NGC_CLI_ORG='nvidia'

Download the deployment resources. Refer to the Prerequisites section for the NGC CLI installation guide.

ngc registry resource download-version "nvidia/vss-warehouse/vss-warehouse-compose:3.1.0"
# OR manually download the tar file from NGC
# URL: https://catalog.ngc.nvidia.com/orgs/nvidia/teams/vss-warehouse/resources/vss-warehouse-compose?version=3.1.0

# Extract the package
cd vss-warehouse-compose_v3.1.0
tar -xvf deploy-warehouse-compose.tar.gz

Navigate to the VSS Auto Calibration directory:

cd deployments/auto-calib

Your directory structure should be:

├── compose.yml
├── ms
│   └── compose.yml
└── ui
    └── compose.yml

Create a .env file in your current directory with the following environment variables:

VSS_AUTO_CALIBRATION_PORT=8000
VSS_AUTO_CALIBRATION_UI_PORT=5000
MDX_SAMPLE_APPS_DIR=/path/to/your/sample_apps_dir
MDX_DATA_DIR=/path/to/your/data_dir
HOST_IP=<HOST_IP_ADDRESS>

Replace the paths, ports and IP address with your actual values.
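A quick check of the .env file can catch a missing variable or a placeholder left unedited. This is a sketch under stated assumptions: `check_env_file` is a hypothetical helper, and it only detects values that are empty or still start with `<`; it cannot tell whether a path or IP address is actually correct.

```shell
# Variables this guide asks for in the .env file.
required_vars="VSS_AUTO_CALIBRATION_PORT VSS_AUTO_CALIBRATION_UI_PORT MDX_SAMPLE_APPS_DIR MDX_DATA_DIR HOST_IP"

check_env_file() {  # check_env_file <path-to-.env>
  local file="$1" var rc=0
  for var in $required_vars; do
    # The value must be non-empty and must not begin with a "<" placeholder.
    if ! grep -Eq "^${var}=[^<[:space:]]+" "$file"; then
      echo "missing or placeholder: $var"
      rc=1
    fi
  done
  return "$rc"
}
```

Docker Compose reads .env from the directory it is run in, but the `mkdir` and `chown` commands later in this guide expand `${MDX_DATA_DIR}` and `${MDX_SAMPLE_APPS_DIR}` in your shell. One way to make them available there, assuming the values contain no spaces or shell-special characters, is `check_env_file .env && set -a && . ./.env && set +a`.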

Download and set up the VGGT model and create projects directory:

Download the VGGT commercial model from HuggingFace.

Note

You need to sign up for a HuggingFace account and accept the model license to download.

Move the downloaded model file (vggt_1B_commercial.pt) to the VGGT model directory:

mkdir -p ${MDX_DATA_DIR}/auto-calib/vggt
mv vggt_1B_commercial.pt ${MDX_DATA_DIR}/auto-calib/vggt/

Create projects directory:

mkdir -p ${MDX_SAMPLE_APPS_DIR}/auto-calib/projects

Note

Projects will be saved in:

${MDX_SAMPLE_APPS_DIR}/auto-calib/projects

Change the ownership of the directories to UID 1000 and GID 1000:

sudo chown -R 1000:1000 ${MDX_DATA_DIR}/auto-calib
sudo chown -R 1000:1000 ${MDX_SAMPLE_APPS_DIR}/auto-calib
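The model placement and ownership steps above can be verified before starting the containers. The following sketch uses a hypothetical helper, `check_auto_calib_dirs`, and relies on GNU `stat` as shipped with Ubuntu; it inspects only the top-level directories, not every file beneath them.

```shell
check_auto_calib_dirs() {  # check_auto_calib_dirs <MDX_DATA_DIR> <MDX_SAMPLE_APPS_DIR>
  local data_dir="$1" apps_dir="$2" rc=0 path owner
  if [ ! -f "$data_dir/auto-calib/vggt/vggt_1B_commercial.pt" ]; then
    echo "model file not found under $data_dir/auto-calib/vggt"
    rc=1
  fi
  for path in "$data_dir/auto-calib" "$apps_dir/auto-calib/projects"; do
    if [ ! -d "$path" ]; then
      echo "missing directory: $path"
      rc=1
      continue
    fi
    owner=$(stat -c '%u:%g' "$path")  # numeric UID:GID (GNU stat)
    if [ "$owner" != "1000:1000" ]; then
      echo "unexpected owner $owner on $path"
      rc=1
    fi
  done
  return "$rc"
}
```

Calling `check_auto_calib_dirs "$MDX_DATA_DIR" "$MDX_SAMPLE_APPS_DIR"` prints nothing and returns success when the layout matches the steps above.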

Ensure you have access to NGC to pull the containers.

Start both the microservice and UI servers:

docker compose --profile "auto-calib" up -d
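The containers may take a moment to become ready after `docker compose up`. One way to wait for the UI is to poll its address with `curl`, as sketched below. The helper names `ui_url` and `wait_for_ui` are illustrative, and the sketch assumes the UI answers a plain HTTP GET on its root path with a non-error status, which this guide does not state explicitly.

```shell
ui_url() {  # ui_url <host-ip> [ui-port]; the port defaults to 5000
  echo "http://$1:${2:-5000}"
}

wait_for_ui() {  # wait_for_ui <url> [attempts]
  local url="$1" attempts="${2:-30}" i=1
  while [ "$i" -le "$attempts" ]; do
    # -f makes curl fail on HTTP error statuses; the response body is discarded.
    if curl -fsS -o /dev/null --max-time 5 "$url" 2>/dev/null; then
      echo "UI is reachable at $url"
      return 0
    fi
    sleep 2
    i=$((i + 1))
  done
  echo "UI did not respond at $url after $attempts attempts" >&2
  return 1
}
```

For example, `wait_for_ui "$(ui_url "$HOST_IP" "$VSS_AUTO_CALIBRATION_UI_PORT")"` retries every two seconds until the page responds or the attempts run out.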

Open your browser and navigate to:

http://<HOST_IP>:<VSS_AUTO_CALIBRATION_UI_PORT>

For example, with the default settings:

http://<HOST_IP>:5000

To stop the containers:

docker compose --profile "auto-calib" down

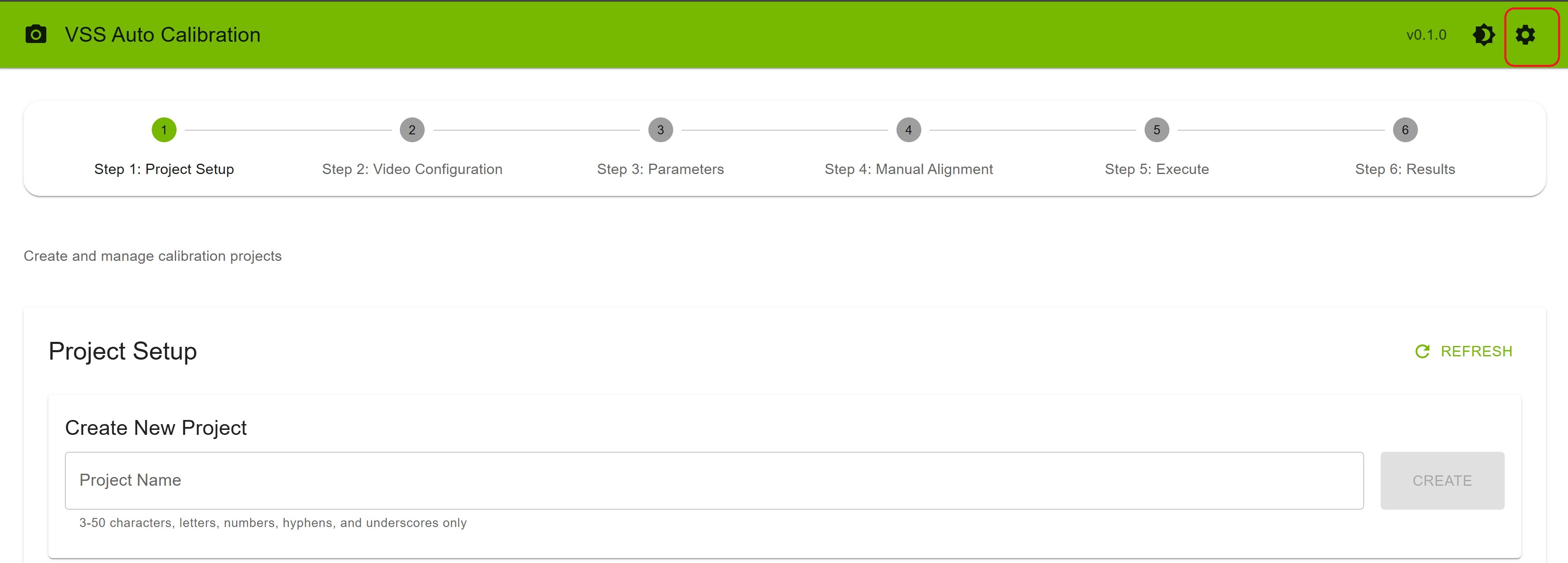

First Time Setup

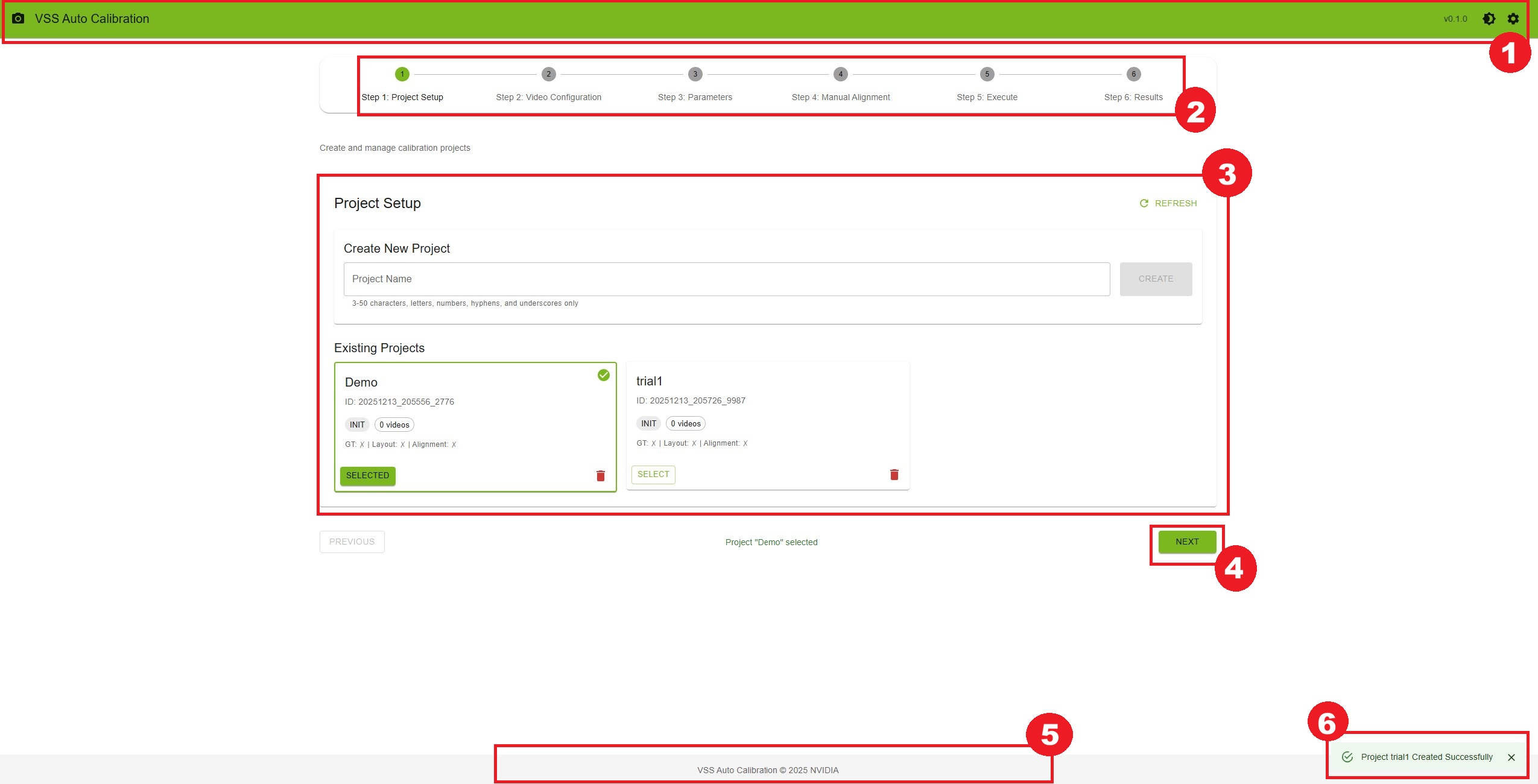

When you first access the UI, you’ll see the main interface with a stepper showing 6 workflow steps.

Interface Overview

The interface consists of:

Header Bar

Application name and version

Theme toggle button (light/dark mode)

Settings button (visible only when you are on the Parameters step)

Stepper Navigation

Visual progress indicator

Click on steps to navigate (after selecting a project)

Current step is highlighted

Main Content Area

Step-specific content and controls

Forms, file uploads, and interactive tools

Navigation Buttons

“Previous” button to go back

“Next” button to proceed

Disabled when requirements aren’t met

Footer

Copyright information

Application version

Notifications

Success/error messages appear in bottom-right corner

Auto-dismiss after 6 seconds

Quick Start Guide

Follow these steps to perform your first calibration:

Step 0: Deploy the UI (If Not Already Running)

If you’re deploying via Docker Compose, follow the steps in the Deployment Steps (Docker Compose) section above. Once deployed, access the UI at http://<HOST_IP>:<VSS_AUTO_CALIBRATION_UI_PORT> (default: port 5000).

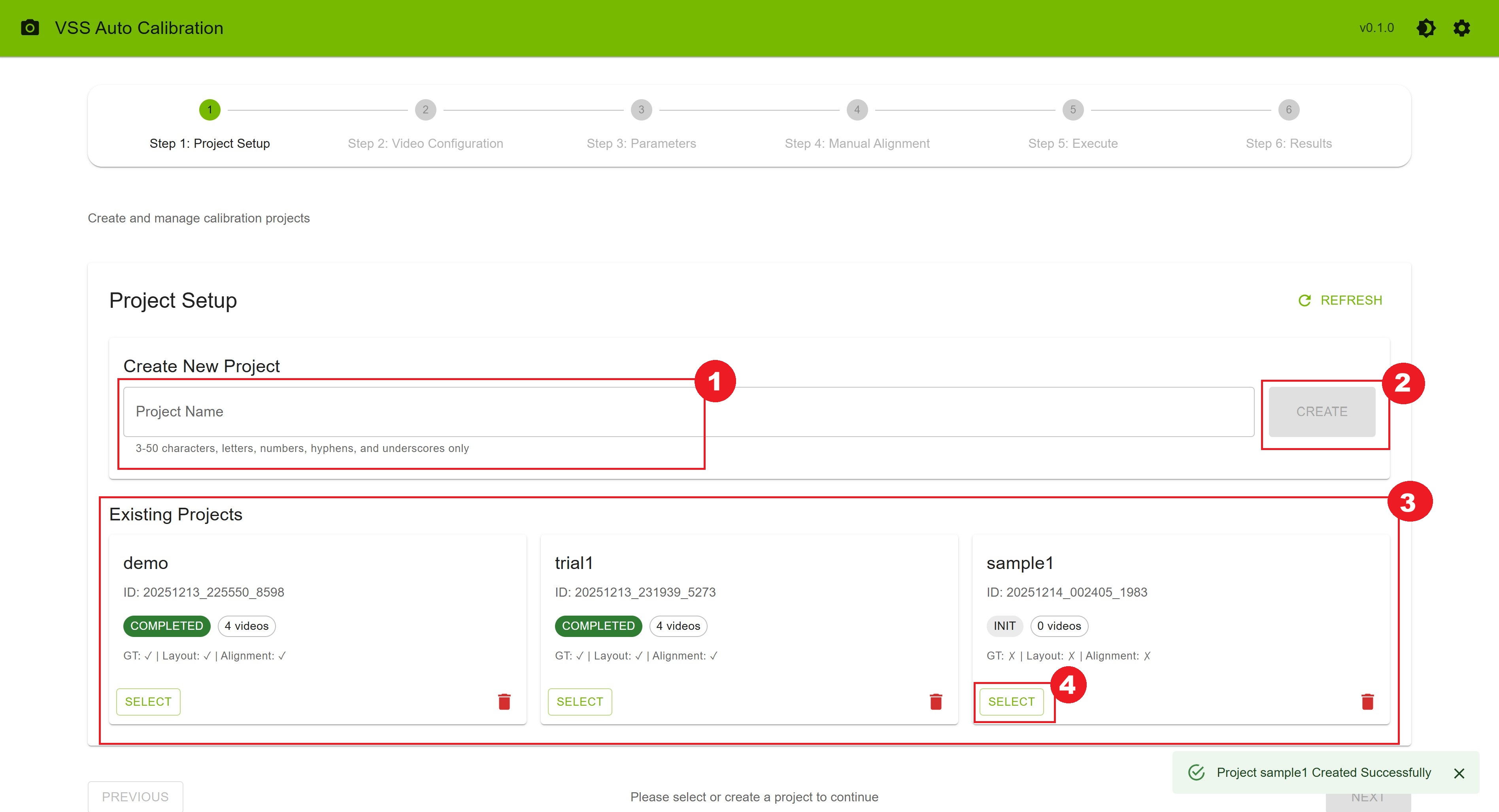

Step 1: Create a Project

On the Project Setup page, enter a project name (e.g., warehouse_calibration)

Click “Create” button

Your new project appears in the list below

Click “Select” on your project card

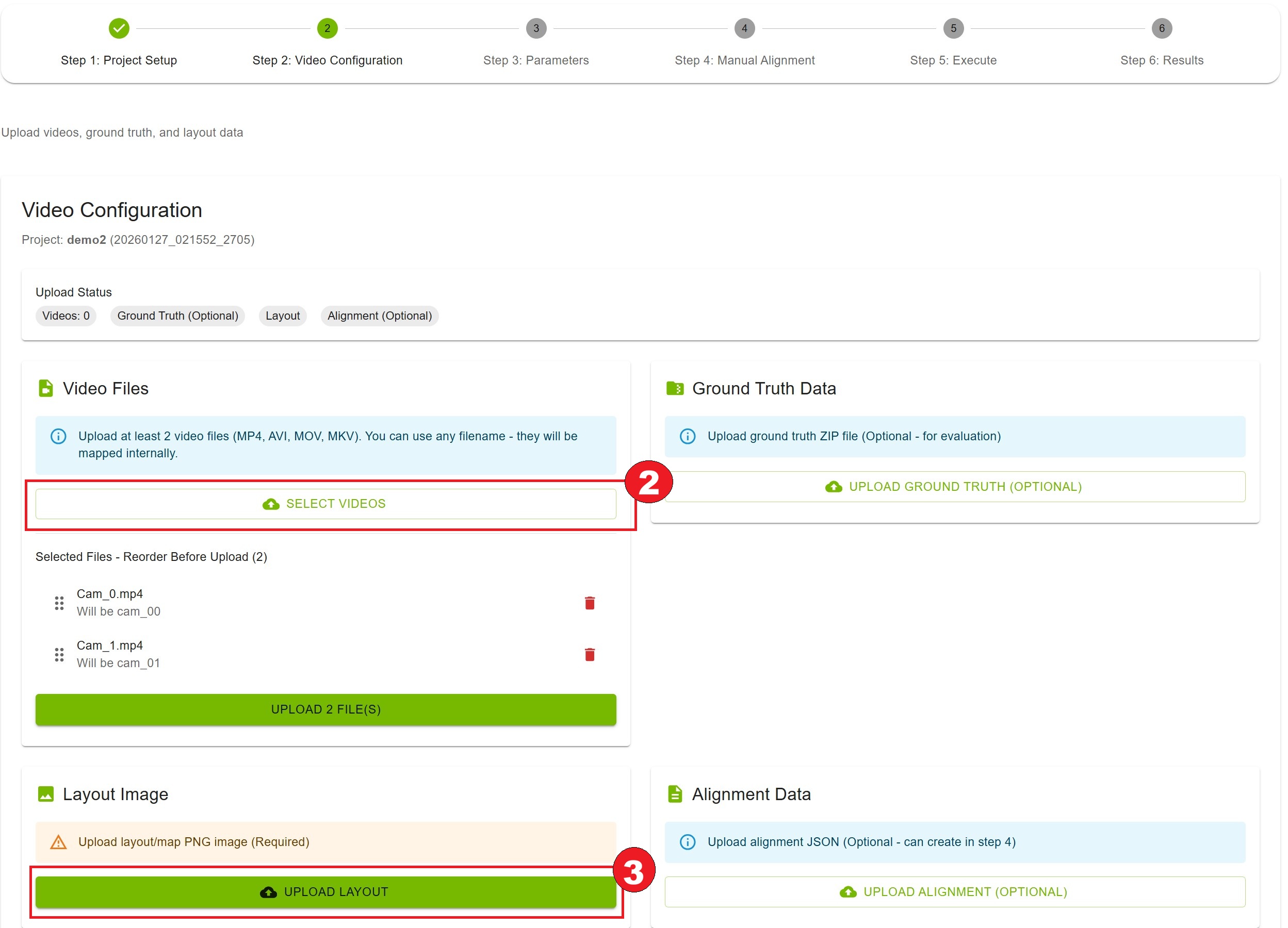

Step 2: Upload Files

Click “Next” to go to Video Configuration

Upload at least 2 video files:

Click “Select Videos” button

Select video files named cam_00.mp4, cam_01.mp4, etc.

Reorder videos by dragging.

Click “Upload Videos” to upload the videos.

Upload layout image:

Click “Upload Layout” button

Select your PNG/JPG layout/map image

Confirm upload success
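If your recordings do not yet follow the cam_00.mp4, cam_01.mp4 naming pattern, they can be copied into a staging folder under those names before upload. This is a sketch with hypothetical helpers (`cam_name`, `stage_videos`); the order of the arguments decides the camera index, so list the files in the order you want them to appear.

```shell
cam_name() {  # cam_name <zero-based index> [extension]
  printf 'cam_%02d.%s\n' "$1" "${2:-mp4}"
}

stage_videos() {  # stage_videos <output-dir> <video>...
  local out_dir="$1" i=0 src
  shift
  mkdir -p "$out_dir"
  for src in "$@"; do
    # Copy rather than move, keeping each file's original extension.
    cp -- "$src" "$out_dir/$(cam_name "$i" "${src##*.}")"
    i=$((i + 1))
  done
}
```

For instance, `stage_videos ./upload entrance.mp4 aisle.mp4` would create `./upload/cam_00.mp4` and `./upload/cam_01.mp4` (the input file names are placeholders).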

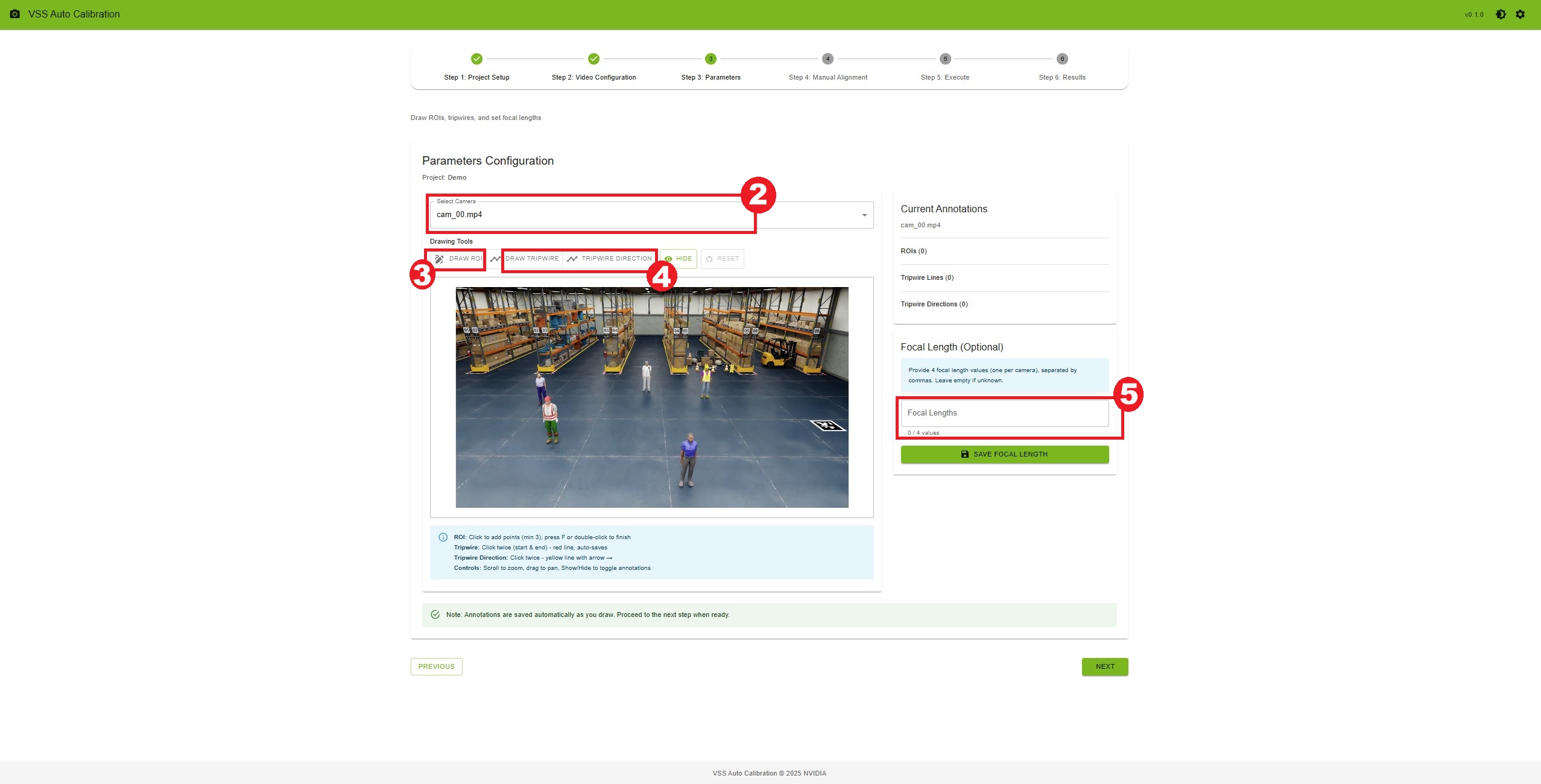

Step 3: Configure Parameters

Click “Next” to go to Parameters

Select a camera from the dropdown

Draw ROIs (optional):

Click “Draw ROI” button

Click on the video frame to add points (minimum 3)

Press ‘F’ or double-click to finish

ROI is saved automatically

Draw tripwires (optional):

Click “Draw Tripwire” button

Click twice to define start and end points

Tripwire is saved automatically

Add focal lengths (optional):

Enter comma-separated values (one per camera)

Click “Save Focal Length”
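Because the focal length field expects one value per camera, a count mismatch is an easy mistake to make. The sketch below checks the number of comma-separated values against the number of cameras before you paste the string into the UI; the helper names and the sample values are purely illustrative.

```shell
count_csv_values() {  # count_csv_values "v1,v2,..."
  # One value per line, then count the non-blank lines.
  printf '%s\n' "$1" | tr ',' '\n' | grep -c '[^[:space:]]' || true
}

focal_lengths_match() {  # focal_lengths_match <csv> <camera-count>
  [ "$(count_csv_values "$1")" -eq "$2" ]
}
```

For example, `focal_lengths_match "1200,1180,1250" 3` succeeds, while the same string checked against 4 cameras fails.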

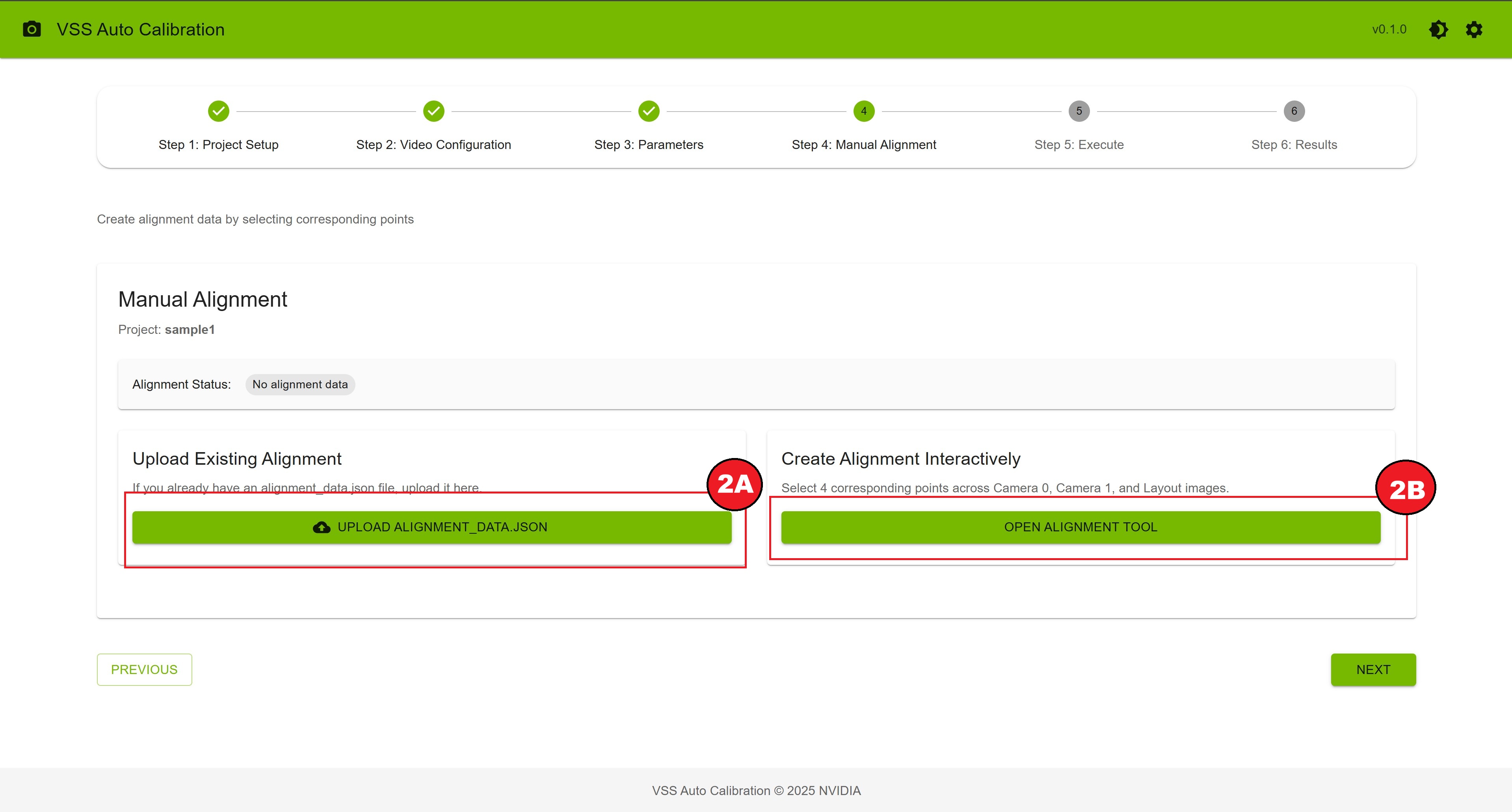

Step 4: Create Alignment Data

Click “Next” to go to Manual Alignment

Choose one of two options:

Option A: Upload Existing Alignment

Click “Upload alignment_data.json”

Select your JSON file

Wait for upload confirmation

Option B: Create Alignment Interactively

Click “Open Alignment Tool”

Click the same physical point on Camera 0, Camera 1, and Layout (in order)

Repeat for at least 4 different points

Click “Save Alignment” when complete

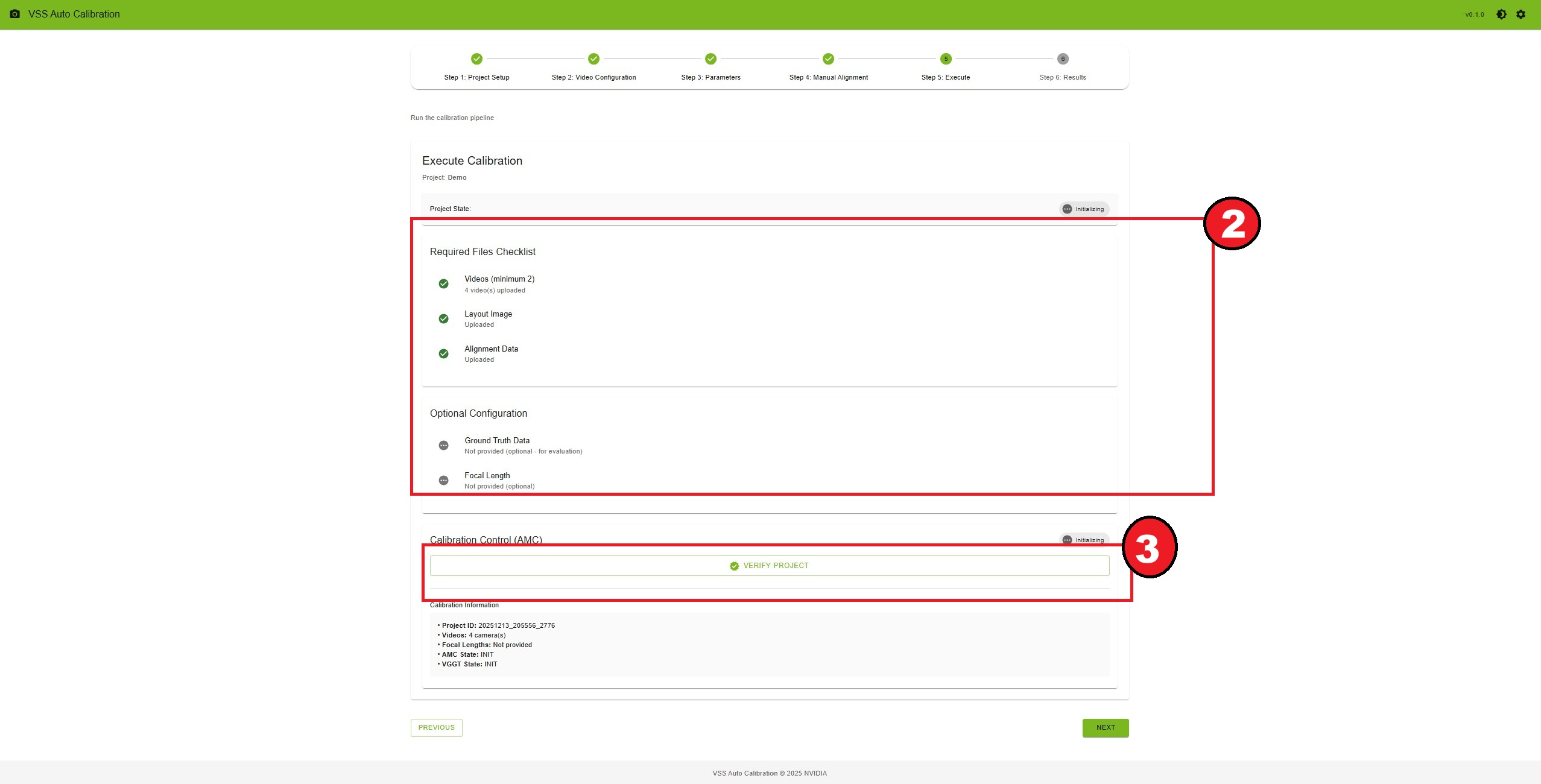

Step 5: Run Calibration

Click “Next” to go to Execute

Review the requirements checklist

Click “Verify Project” button

Once verified, click “Start Calibration”

Monitor the progress (status updates every 3 seconds)

Wait for “Calibration completed successfully” message

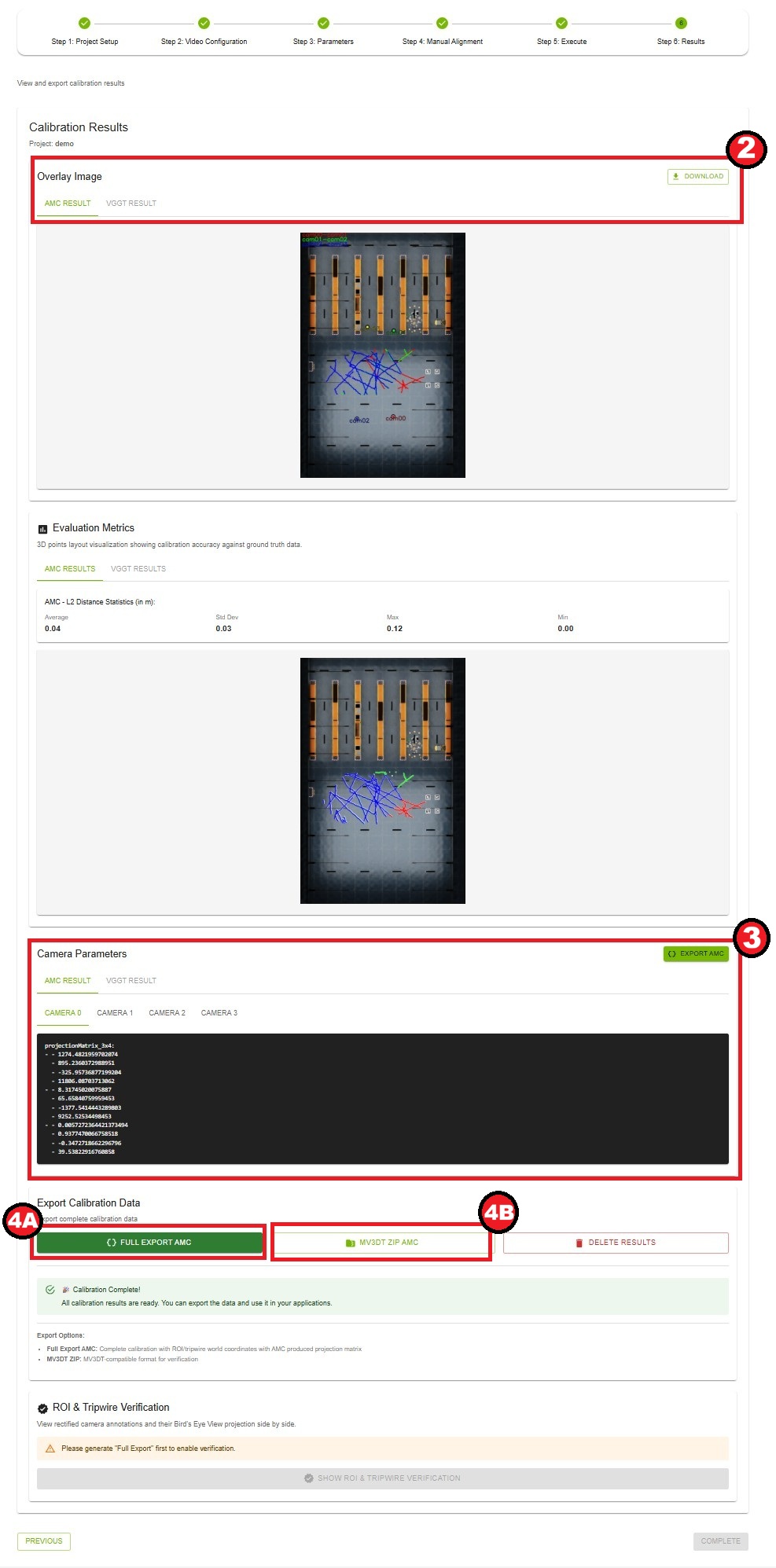

Step 6: View Results

Click “Next” to go to Results

View the overlay image showing calibration results. Click “Download” to save the overlay image.

Review camera parameters for each camera

Export calibration data:

Click “Full Export AMC” for the complete calibration data. This loads a JSON document containing the calibration data for every camera in the project, which you can edit to suit your use case.

Click “MV3DT ZIP AMC” for MV3DT-compatible format
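If you edit the exported JSON by hand, a syntax check can catch a stray comma or bracket before the file is used elsewhere. The sketch below relies on Python's standard `json.tool` module, assumes `python3` is installed, and uses a hypothetical helper name; it checks only that the file parses as JSON, not that it follows the calibration schema.

```shell
validate_json() {  # validate_json <file>
  if python3 -m json.tool "$1" >/dev/null 2>&1; then
    echo "valid JSON: $1"
  else
    echo "invalid JSON: $1" >&2
    return 1
  fi
}
```

Run it as `validate_json path/to/exported.json`, substituting the path where you saved the export.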

Settings

Click the settings icon in the top-right corner to access application settings. Note: The settings icon is only visible when you are on the Parameters step.

Available Settings

Theme: Switch between light and dark themes

Version Information: View current application version

Note: Most settings are configured during deployment and cannot be changed from the UI.

Tips for Success

File Naming Convention

Ensure videos are synchronized in time

When uploading videos, keep them ordered according to how their fields of view (FOV) overlap.

Input Video

Use high-resolution videos for better calibration accuracy

AMC depends heavily on the moving people visible in the videos, so each video should contain a sufficient number of clearly visible moving people.
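Resolution and duration can be compared across cameras before upload. The sketch below uses `ffprobe` from FFmpeg, which is not listed among this guide's prerequisites and is assumed to be installed separately; `video_info` is a hypothetical helper, and matching durations are only a rough hint, not proof, that recordings are synchronized.

```shell
video_info() {  # video_info <video>...
  local video
  if ! command -v ffprobe >/dev/null 2>&1; then
    echo "ffprobe not found; install FFmpeg to use this check" >&2
    return 1
  fi
  for video in "$@"; do
    printf '%s: ' "$video"
    # Width and height of the first video stream plus the container duration (seconds).
    ffprobe -v error -select_streams v:0 \
      -show_entries stream=width,height:format=duration \
      -of csv=p=0 "$video" | tr '\n' ' '
    echo
  done
}
```

For example, `video_info cam_00.mp4 cam_01.mp4` prints one line per file so that differences in resolution or length stand out.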

Alignment Points

Choose points on the ground plane visible in all cameras

Select points at different depths and locations

Avoid points on moving objects

Use distinct features (corners, markings, etc.)

ROI and Tripwire Drawing

Draw ROIs to cover areas of interest

Place tripwires perpendicular to expected motion

Use tripwire directions to indicate motion direction

Test with different zoom levels for precision

Keyboard Shortcuts

Parameters Step (Drawing)

F key: Finish current ROI

Esc key: Cancel current drawing

Scroll wheel: Zoom in/out on canvas

Click + Drag: Pan around zoomed canvas

Manual Alignment Step

Scroll wheel: Zoom in/out on alignment canvas

Click + Drag: Pan around zoomed canvas (when zoomed)

Next Steps

Now that you’re familiar with the basics, explore:

Workflow Steps - Detailed documentation for each step

Custom Dataset - Custom dataset preparation and calibration

Troubleshooting - Solutions to common problems