Inference Builder MCP#

Inference Builder integrates with the Model Context Protocol (MCP) so that AI agents such as Cursor and Claude Code can generate inference pipelines for you. With MCP, you can use natural language to generate pipelines, build Docker images, and explore sample configurations.

Prerequisites#

Ubuntu 24.04

NVIDIA GPU with Ada, Hopper, or Blackwell architecture

Python 3.12

The Inference Builder MCP server runs locally on your development machine, which should typically be an Ubuntu system with GPU access. Before setting it up, make sure you have already completed the Inference Builder setup on your machine.

Note

Refer to Inference Builder to set up all prerequisites and complete the Inference Builder installation before proceeding.

Ensure you are in the inference_builder root directory before continuing.

Set up for Cursor#

Generate the MCP configuration on your development machine where the Inference Builder resides.

Global configuration (available to all projects):

python3 mcp/setup_mcp.py ~/.cursor/mcp.json

Project‑specific configuration:

python3 mcp/setup_mcp.py /path/to/your_project/.cursor/mcp.json
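Before opening Cursor, it can help to confirm that the generated file actually contains the server entry. The following is a minimal sketch, assuming `setup_mcp.py` writes the common MCP client layout with a top-level `mcpServers` object keyed by server name; the file it really produces may differ. The helper `has_mcp_server` is hypothetical and not part of Inference Builder.

```python
import json
from pathlib import Path


def has_mcp_server(config_path, server_name="deepstream-inference-builder"):
    """Return True if the MCP config file lists the given server.

    Assumes the layout {"mcpServers": {"<name>": {...}}}; this is an
    assumption about what setup_mcp.py writes, not a documented guarantee.
    """
    path = Path(config_path).expanduser()
    if not path.is_file():
        return False
    try:
        config = json.loads(path.read_text(encoding="utf-8"))
    except json.JSONDecodeError:
        # A file that is not valid JSON cannot be loaded by the client.
        return False
    servers = config.get("mcpServers", {}) if isinstance(config, dict) else {}
    return isinstance(servers, dict) and server_name in servers


if __name__ == "__main__":
    # Check the global Cursor configuration path used in the command above.
    print(has_mcp_server("~/.cursor/mcp.json"))
```

The same check can be pointed at a project-specific file by passing its path instead.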

Open your project folder and verify the MCP server is successfully loaded.

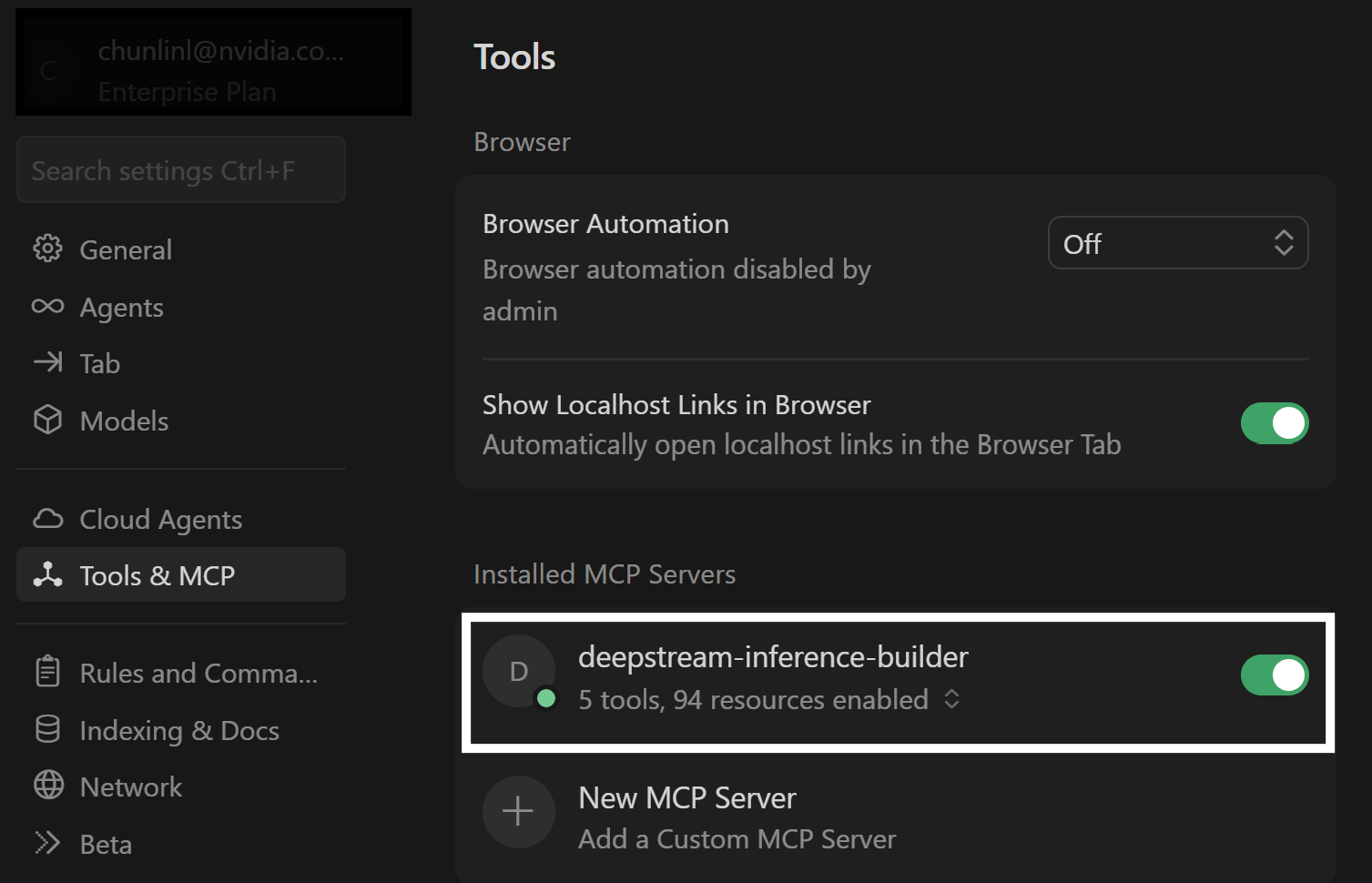

If Cursor is installed on your development machine, you can open the project folder directly. Otherwise, set up an SSH remote connection to your development machine and open the project folder over that connection. Then navigate to File > Preferences > Cursor Settings > MCP. You should see:

A green status icon next to deepstream-inference-builder indicates the server is connected. (If your MCP server is project-specific, you need to open the project in Cursor to see the green status icon.)

Begin using the tools by starting a new agent with your prompts (for best results, choose more capable models such as Opus 4.6 from Claude). For example:

“Show me what sample configurations are available from the inference builder?”

“Generate a DeepStream object detection pipeline using the inference builder with PeopleNet transformer model from NGC.”

Set up for Claude Code#

You must have Claude Code installed on your development machine.

Generate the MCP configuration.

Global configuration:

python3 mcp/setup_mcp.py ~/.claude/.mcp.json

Project‑specific configuration:

python3 mcp/setup_mcp.py /path/to/your_project/.mcp.json

Start Claude Code from your project folder and verify the MCP server is connected by running /mcp in the Claude Code console. You should see:

❯ deepstream-inference-builder · ✔ connected

If your MCP server is project-specific, you need to open Claude Code in the project directory.

Start using the tools by prompting, for example:

“Show me what sample configurations are available from the inference builder?”

“Generate a DeepStream object detection pipeline using the inference builder with PeopleNet transformer model from NGC.”

Sample prompts#

When paired with the Opus 4.6 model from Claude, the following prompts reliably generate functional pipelines. The outputs are consistent in quality, but the number of iterations, the time to reach a working result, and the specific implementation details may vary between runs.

Before trying the following prompts, ensure your development machine has access to the models:

Ensure that the NGC CLI is installed on your system in order to download models from NGC. Additionally, set your NGC API key in an environment variable named NGC_API_KEY.

Make sure you have a valid Hugging Face account and that your access token is set as the HF_TOKEN environment variable.
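The two prerequisites above can be checked before handing a prompt to the agent. This is a minimal sketch, assuming the NGC CLI is available on `PATH` under its usual executable name `ngc`; the function `missing_model_prerequisites` is a hypothetical helper and not part of Inference Builder.

```python
import os
import shutil


def missing_model_prerequisites(env=None, which=shutil.which):
    """Return a list of human-readable problems; an empty list means ready."""
    env = os.environ if env is None else env
    problems = []
    # Assumption: the NGC CLI executable is named "ngc" and is on PATH.
    if which("ngc") is None:
        problems.append("NGC CLI ('ngc') not found on PATH")
    # Both variables are named in the prerequisites above.
    for name in ("NGC_API_KEY", "HF_TOKEN"):
        if not env.get(name):
            problems.append(f"environment variable {name} is not set")
    return problems


if __name__ == "__main__":
    for problem in missing_model_prerequisites():
        print(problem)
```

The check only confirms that the tools and variables are present; it does not validate the key or token against NGC or Hugging Face.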

Prompt 1 — basic detection with smoke test:

Use deepstream inference builder tool to create an object detection pipeline with PeopleNet Transformer model from NGC and verify it with a smoke test.

Prompt 2 — multi-stream detection with tracker:

Use deepstream inference builder tool to create an object detection pipeline with the PeopleNet transformer model from NGC; the pipeline should support 4 video inputs in parallel and track all the objects in the inputs using NVDCF tracker. Do a smoke test once done.

Prompt 3 — detection with custom post-processing logic:

Use deepstream inference builder tool to create an object detection pipeline with PeopleNet transformer model from NGC; the pipeline accepts a video URL as input and outputs detected bounding boxes. Count the number of people in each frame and raise an alarm whenever the number exceeds 10 by writing it down to a file. Do a smoke test once done.
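To make the custom logic requested in Prompt 3 concrete, the following is a rough sketch of the counting and alarm step in isolation. Everything here is an assumption for illustration: the detection format (a list of dictionaries with a `label` key), the `person` label name, and the function names are hypothetical, and the code the agent generates will follow whatever interface Inference Builder expects rather than this one.

```python
from datetime import datetime, timezone


def count_people(detections, label="person"):
    """Count detections whose label matches (the label name is an assumption)."""
    return sum(1 for det in detections if det.get("label") == label)


def check_frame(frame_index, detections, alarm_path, threshold=10):
    """Append an alarm line to alarm_path when the count exceeds threshold.

    Returns the people count for the frame either way.
    """
    count = count_people(detections)
    if count > threshold:
        stamp = datetime.now(timezone.utc).isoformat()
        with open(alarm_path, "a", encoding="utf-8") as log:
            log.write(f"{stamp} frame={frame_index} people={count}\n")
    return count
```

Note that the comparison is strict, so a frame with exactly 10 people does not raise an alarm, which matches the wording "exceeds 10" in the prompt.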

Prompt 4 — end-to-end VLM microservice (master prompt with follow-ups):

Note: The VLM model specified in this prompt has high memory requirements and is best run on high-end GPUs such as H100 or B200. If your GPU cluster is shared, be sure to monitor memory allocation and, if needed, instruct the agent to select a GPU with sufficient available memory before running.

Locate the MCP tool deepstream-inference-builder and complete the task: Start a new project under name rtvi_vlm and create a video summarization/caption microservice there using Qwen3-VL-2B-Instruct model driven by vllm backend and Deepstream accelerated decoder. The microservice supports adding/deleting RTSP streams as assets and generating video caption for added streams. The given RTSP stream will be chunked to a batch of frames with a given interval for summarization. I also want to build a container image for the microservice and run a smoke test on the container image once done.

Follow-up prompts for Prompt 4:

Download the Qwen3-VL-2B-Instruct model from Hugging Face and run a video summarization test.

Generate a service management script to start, restart, stop, rebuild the service and check the service status, also fetch the service logs. Generate a client script to add/delete streams and trigger a summarization request on streams.

Now let's add a new feature to the project. After the model generates summarizations, let's postprocess it for each chunk and send them to a Kafka server. Create an environment variable to toggle the feature.

Write a detailed documentation for the project to ensure seamless and consistent future work.

Expectations#

The Agent is expected to retrieve the information from the inference builder MCP server set up locally on your development machine before moving on to the next step.

The Agent is expected to draft a plan based on the prompt and generate the required files accordingly (file names may differ in each run unless explicitly specified):

peoplenet_transformer_pipeline.yaml (pipeline configuration yaml)

Dockerfile

nvdsinfer_config.yaml (nvinfer configuration yaml)

peoplenet.tgz (python code for inference)

processor.py (python code for preprocessing and postprocessing, expected when custom logic is required)

models/peoplenet (folder for required model files)

openapi.yaml (openapi specification, expected when the output is a microservice)

The Agent is expected to fix all the build and runtime errors while doing the smoke test.

For complex tasks such as prompt 4, you can start with a master prompt and add features gradually using follow-up prompts.

Important Notes & Things to Watch Out For:

Cursor MCP connection: Intermittent MCP connection issues have been observed with multiple Cursor versions. If the agent reports MCP connection issues or the MCP server status is not green, try restarting the agent or restarting Cursor.

Time to complete the task: The total time for the agent to accomplish the task varies between runs; the average is about 15-30 minutes for each sample. A significant amount of that time is expected to be spent on building the container image and smoke testing.

Project directory creation: The generate_inference_pipeline tool produces a tarball and does not create an output directory on its own. If you need a separate project folder, explicitly include “create a new project directory” in the prompt you give to the AI coding agent.
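If a tarball has already been produced without a project folder, it can also be unpacked by hand. This is a minimal sketch using only the Python standard library; the function name `extract_into_project` and the example paths are hypothetical, and the layout inside the tarball is not specified here.

```python
import tarfile
from pathlib import Path


def extract_into_project(tarball_path, project_dir):
    """Create project_dir if needed and unpack the tarball into it.

    Returns the sorted relative paths of the files now in project_dir.
    """
    target = Path(project_dir)
    target.mkdir(parents=True, exist_ok=True)
    with tarfile.open(tarball_path, "r:*") as archive:
        if hasattr(tarfile, "data_filter"):
            # Use the safer "data" extraction filter where Python supports it.
            archive.extractall(target, filter="data")
        else:
            archive.extractall(target)
    return sorted(
        p.relative_to(target).as_posix() for p in target.rglob("*") if p.is_file()
    )
```

For example, `extract_into_project("peoplenet.tgz", "my_project")` would create `my_project` and list the files extracted into it, assuming a tarball with that name exists in the current directory.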