Overview#

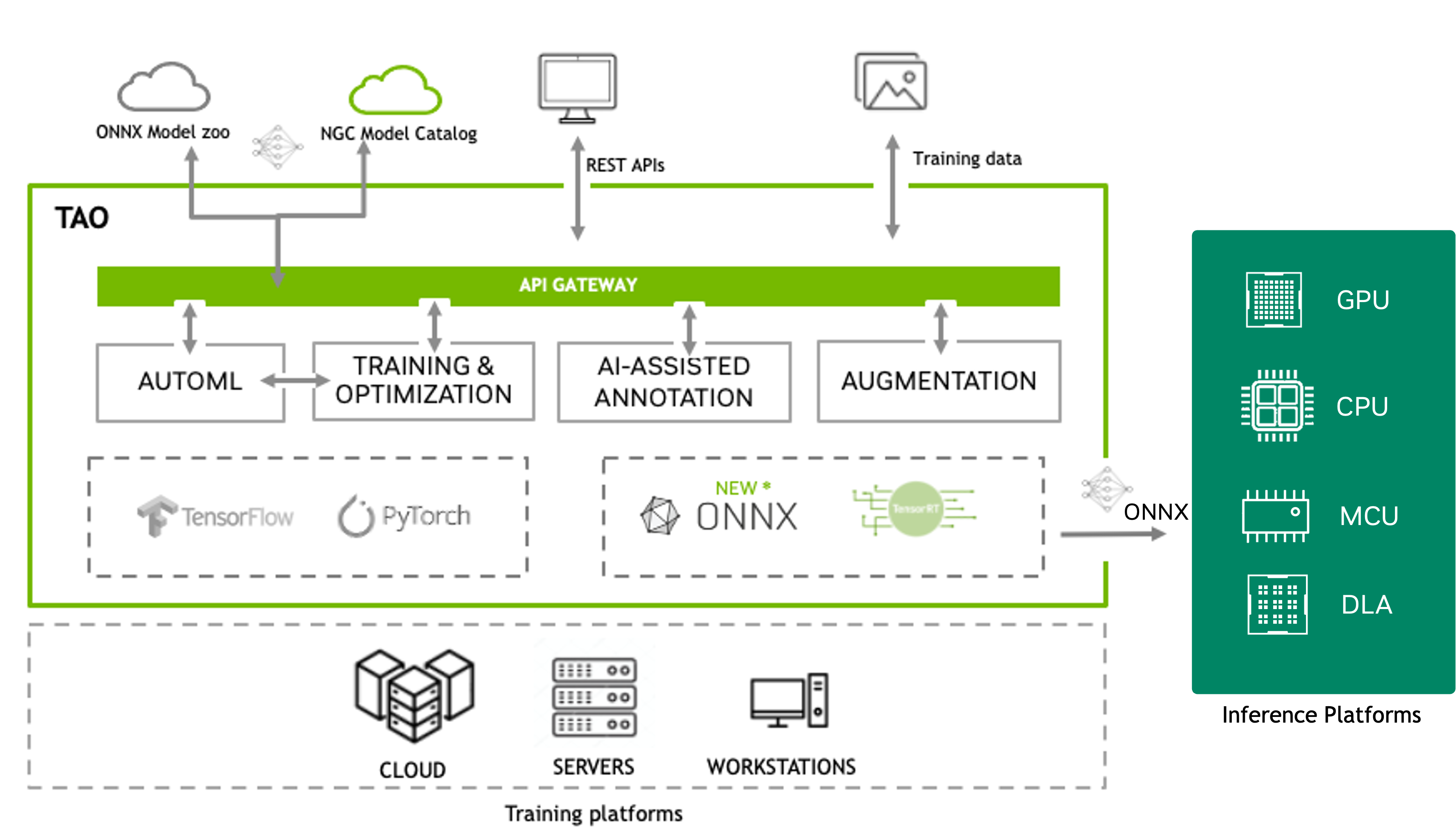

NVIDIA TAO is a low-code AI toolkit built on TensorFlow and PyTorch that simplifies and accelerates model training by abstracting away the complexity of deep learning frameworks. TAO also provides applications to visualize and validate trained models.

TAO focuses on training, fine-tuning, and optimizing computer vision foundation models. You can select from 100+ pretrained vision AI models on NGC and fine-tune them on your own dataset without writing a single line of code. TAO outputs trained models in ONNX format that you can deploy on any platform that supports ONNX. TAO also provides inference applications to validate your trained models.

To train and fine-tune Large Language Models (LLMs), refer to NVIDIA NeMo.

TAO Overview Image#

TAO supports most popular computer vision (CV) tasks, including:

Multi-camera 3D object detection and tracking

Self-supervised pretraining and domain adaptation for foundation models

Image classification

Object detection

Instance segmentation

Semantic segmentation

Optical character detection and recognition (OCD/OCR)

Body pose estimation

Key point estimation

Action recognition

Siamese network

Change detection

CenterPose

Segmentation in context

Embedding models for computer vision

For image classification, object detection, and segmentation, you can pair one of the many feature extractors (backbones) with one of the many task heads, which gives you more than 100 model combinations. TAO supports several leading vision transformers (ViTs), including RADIOv2, DINOv2, FAN, GC-ViT, SWIN, DINO, D-DETR, and SegFormer.

| Backbone | Image classification |
|---|---|
| NVCLIP | ✓ |
| C-RADIOv2 | ✓ |
| NvDINOv2 | ✓ |
| GcViT | ✓ |
| ViT | ✓ |
| FAN | ✓ |
| FasterViT | ✓ |
| ResNet | ✓ |
| Swin | ✓ |
| EfficientNet | ✓ |

Backbone |

DINO |

D-DETR |

Grounding DINO |

RT-DETR |

EfficientDet |

|---|---|---|---|---|---|

C-RADIOv2 |

✓ |

||||

ConvNext |

✓ |

||||

NvDINOv2 |

✓ |

||||

GcViT |

✓ |

✓ |

|||

ViT |

✓ |

✓ |

|||

FAN |

✓ |

||||

ResNet |

✓ |

✓ |

✓ |

||

Swin |

✓ |

||||

EfficientNet |

✓ |

Backbone |

MAL |

Mask GroundingDINO |

Mask2Former |

|---|---|---|---|

ViT |

✓ |

||

Swin |

✓ |

✓ |

Backbone |

SegFormer |

Mask2Former |

|---|---|---|

C-RADIOv2 |

x |

|

NvDINOv2 |

x |

|

FAN |

✓ |

|

Swin |

✓ |

|

MIT-b |

✓ |

| Backbone | Mask2Former |
|---|---|
| Swin | ✓ |

| Backbone | OCD | OCR |
|---|---|---|
| FAN | ✓ | ✓ |
| ResNet | ✓ | ✓ |

Backbone |

Classification |

Segmentation |

|---|---|---|

C-RADIOv2 |

✓ |

|

NvDINOv2 |

✓ |

|

ViT |

✓ |

✓ |

FAN |

✓ |

✓ |

| Backbone | Pose Classification |
|---|---|
| ST-GCN (graph convolutional network) | ✓ |

Backbone |

Re-identification |

Metric Learning Recognition |

|---|---|---|

NvDINOv2 |

✓ |

|

ViT |

✓ |

|

ResNet |

✓ |

✓ |

Swin |

✓ |
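The tables above pair backbones with task heads. As a rough illustration, a compatibility matrix like this can be captured in a small lookup that checks a backbone choice before a training job is launched. The sketch below copies only the image classification and OCD/OCR rows listed above; `SUPPORTED_BACKBONES` and `check_backbone` are hypothetical names chosen for this example and are not part of TAO.

```python
# Hypothetical compatibility lookup (not a TAO API).
# Entries are copied from the image classification and OCD/OCR tables above.
SUPPORTED_BACKBONES = {
    "image_classification": {
        "NVCLIP", "C-RADIOv2", "NvDINOv2", "GcViT", "ViT",
        "FAN", "FasterViT", "ResNet", "Swin", "EfficientNet",
    },
    "ocd": {"FAN", "ResNet"},
    "ocr": {"FAN", "ResNet"},
}


def check_backbone(task: str, backbone: str) -> bool:
    """Return True if the backbone is listed for the task (case-insensitive)."""
    listed = SUPPORTED_BACKBONES.get(task.lower(), set())
    return backbone.lower() in {name.lower() for name in listed}


if __name__ == "__main__":
    print(check_backbone("ocr", "resnet"))
    print(check_backbone("ocd", "Swin"))
```

A check like this fails fast on an unsupported pairing instead of surfacing the problem partway through a training run.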

TAO 6.26.3 expands the toolkit with new model architectures, pretrained models, and platform capabilities for training, optimization, and deployment.

New fine-tuning capabilities:

NVPanoptix3D: End-to-end 3D panoptic reconstruction with unified 2D/3D segmentation and multi-plane occupancy prediction

CLIP: Contrastive vision-language model for zero-shot classification and retrieval, with SigLIP2 and RADIO-CLIP support

Cosmos-Embed1: Visual embedding model for image retrieval and classification with LoRA fine-tuning

Pretrained models:

Cosmos-Embed1: Visual embedding model for image retrieval and classification with LoRA fine-tuning

RADIO-CLIP and SigLIPv2: Embedding models for Metropolis Blueprints

RT-DETR Warehouse 2D: 2D object detection model for warehouse environments

C-RADIOv3 (B/L/H/G): Enhanced multi-teacher distilled foundation model for generating rich visual embeddings, available on Hugging Face

Training and optimization:

Expanded quantization support: QDQ ONNX quantization for 12 additional computer vision models, including Deformable DETR, DINO, Grounding DINO, Mask2Former, OneFormer, and SegFormer

Expanded TensorRT engine generation: INT8/FP8 calibration support for newly supported networks

Fine-tuning for Cosmos-Reason 2.0 VLMs: SFT, PEFT, AutoML, and reinforcement learning fine-tuning

New AutoML algorithms: Six new algorithms (BOHB, BFBO, ASHA, PBT, DEHB, and Hyperband-ES) with Weights & Biases integration

Platform and deployment:

SLURM and Lepton execution backends: Run training and evaluation jobs on remote SLURM clusters or Lepton AI

Hugging Face Inference Microservice: Deploy any Hugging Face Hub model as an inference microservice

Existing features:

Pretraining and fine-tuning workflows for foundation models like the ConvNext and NvDINOv2 series of backbones

Fine-tuning the NVIDIA-trained commercially viable foundation model C-RADIOv2 with several downstream tasks

Distilling foundation models to smaller and faster backbones on structured and unstructured data

Distilling heavier object detection models into faster, smaller models for edge deployment

Deploying TAO Training workflows as scalable microservices via Fine-Tuning Micro-Services (FTMS)
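The distillation features listed above transfer what a larger teacher model has learned into a smaller student. This overview does not describe the objective TAO uses, so the following is only a minimal sketch of one common formulation: the soft-target loss, which penalizes the KL divergence between temperature-softened teacher and student outputs. The function names and the default temperature are assumptions made for illustration.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax over a list of logits."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, scaled by T^2.

    Illustrative soft-target loss; not necessarily the loss TAO applies.
    """
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0.0)
    return kl * temperature ** 2


if __name__ == "__main__":
    teacher = [4.0, 1.0, 0.5]
    print(distillation_loss(teacher, teacher))          # identical outputs
    print(distillation_loss(teacher, [0.5, 1.0, 4.0]))  # disagreeing student
```

The loss is zero when the student reproduces the teacher's distribution and grows as the two diverge. A higher temperature spreads probability mass across classes, so the student also learns from the teacher's relative ranking of the wrong classes.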

NVIDIA also includes several inference applications as part of TAO.

A Gradio application to try out zero-shot in-context segmentation using the SEGIC model, in the TAO PyTorch GitHub repository.

A Triton inference application for the FoundationPose model, in TAO Triton Apps.

A catalog of NVIDIA Inference Microservices (NIMs) for trying out different TAO models.

A GitHub repository called metropolis_nim_workflows, which contains reference workflows that use the published NIMs.

Note

NVIDIA Triton Inference Server is open-source software designed for deploying and scaling AI models in production environments. It supports various machine learning frameworks, hardware platforms (GPUs and CPUs), and deployment environments (cloud, data center, and edge).

NVIDIA Inference Microservices (NIMs) are a collection of pre-built, optimized, ready-to-use microservices that enable developers to deploy and scale AI models in production environments. NIMs are designed to simplify the deployment process by providing a set of preconfigured components that can be used to build and deploy AI models.

For more information about Triton Inference Server and NIMs, visit the Triton Inference Server documentation and NIMs pages.

Note

As of version 6.0.0, the TAO containers can run on x86 and ARM64 platforms with discrete GPUs. For more information about the supported GPUs, refer to the Quick Start Guide.

Pretrained Models#

TAO has an extensive selection of foundation models and lighter pretrained models that are trained either on public datasets like ImageNet, COCO, and OpenImages, or on proprietary datasets for task-specific use cases like people detection, vehicle detection, and action recognition. The task-specific models can be used directly for inference, but can also be fine-tuned on custom datasets for better accuracy.

Refer to the Model Zoo section to learn more about the pretrained models.

Key Features#

TAO packages have several key features to help developers accelerate their AI training and optimization. Here are a few of them:

Fine-tuning and model optimization workflows

Self-Supervised Learning: Pretrains and performs domain adaptation on foundation models (transformer-based and CNN-based models).

Distillation: Distills knowledge from a heavier model into a smaller, faster model for edge deployment.

Fine-Tuning Cosmos-Reason VLM: Full SFT, PEFT, AutoML, and reinforcement learning fine-tuning of Cosmos-Reason2.0 VLMs.

ONNX export: Exports models in the industry-standard ONNX format, which you can deploy on any platform that supports ONNX.

Multimodal embedding model fine-tuning: Pretrains and performs domain adaptation of multimodal embedding foundation models such as Cosmos-Embed1, RADIO-CLIP, and SigLIPv2.

Multi-GPU: Accelerates training by parallelizing training jobs across multiple GPUs on a single node.

Multi-Node: Accelerates training by parallelizing training jobs across multiple nodes.

Quantization-Aware Training: Emulates lower-precision quantization during training to reduce the accuracy lost when the model later runs inference at lower precision.

Model Pruning: Reduces the number of parameters in a model to shrink model size and speed up inference.

Training Visualization: Visualizes training graphs and metrics in TensorBoard or in third-party services.
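Quantization-aware training, listed above, works by emulating low-precision arithmetic while the model is still training. The core operation is a quantize-dequantize ("fake quantization") step: a value is rounded to the integer grid, clipped to the integer range, and mapped back to floating point, so that the network sees the rounding error it will face at inference time. The sketch below assumes symmetric signed quantization with a fixed scale. The function names are hypothetical, and a real training setup would place this step inside the network's forward pass.

```python
def fake_quantize(value: float, scale: float, num_bits: int = 8) -> float:
    """Quantize to a signed integer grid, then dequantize back to float."""
    qmin = -(2 ** (num_bits - 1))      # -128 for 8 bits
    qmax = 2 ** (num_bits - 1) - 1     # 127 for 8 bits
    q = round(value / scale)           # snap to the nearest grid point
    q = max(qmin, min(qmax, q))        # clip to the representable range
    return q * scale


def quantization_error(values, scale, num_bits=8):
    """Absolute error that fake quantization introduces for each value."""
    return [abs(v - fake_quantize(v, scale, num_bits)) for v in values]


if __name__ == "__main__":
    weights = [0.26, -0.49, 1.234, 100.0]
    for w, err in zip(weights, quantization_error(weights, scale=0.1)):
        print(f"{w:8.3f} -> {fake_quantize(w, 0.1):8.3f} (error {err:.3f})")
```

Values inside the representable range are off by at most half a scale step, while values outside it are clipped. This is why the choice of scale (calibration) matters.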

Data Services

Data Augmentation: Performs offline and online augmentation to add diversity to your dataset, which can help the model generalize.

AI-assisted data annotation: Class-agnostic auto-labeler that generates segmentation masks from bounding boxes.

Data Analytics: Analyzes object-detection annotation files and image files, calculates insights, and generates graphs and a summary.
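When detection data is augmented, as in the Data Augmentation item above, the labels have to be transformed together with the pixels. The following minimal sketch shows this for a horizontal flip. It assumes a row-major image stored as a list of rows and boxes given as `(x_min, y_min, x_max, y_max)` in pixel coordinates. Both conventions and both function names are assumptions for illustration, not TAO's implementation.

```python
def hflip_image(image):
    """Flip a row-major image (list of rows) left to right."""
    return [list(reversed(row)) for row in image]


def hflip_box(box, image_width):
    """Mirror an (x_min, y_min, x_max, y_max) box about the vertical axis."""
    x_min, y_min, x_max, y_max = box
    # The old right edge becomes the new left edge, and vice versa.
    return (image_width - x_max, y_min, image_width - x_min, y_max)


if __name__ == "__main__":
    image = [[1, 2, 3],
             [4, 5, 6]]
    print(hflip_image(image))
    print(hflip_box((10, 5, 30, 20), image_width=100))
```

Applying the flip twice returns the original image and box, which is a convenient sanity check for any geometric augmentation.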

TAO also provides several features for service providers and NVIDIA partners looking to integrate TAO with their workflows to provide additional services.

AutoML: Runs automatic hyperparameter sweeps and optimization to find the configuration with the best accuracy on a given dataset.

REST APIs: Use cloud API endpoints to call into your managed TAO services in the cloud.

Kubernetes deployment: Deploy TAO services in a Kubernetes cluster either on-premises or with one of the cloud-managed Kubernetes services.

Docker Compose deployment: Deploy TAO services in a Docker Compose environment.

Source code availability: Access source code for TAO to add your own customization.
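The AutoML item above describes automatic hyperparameter sweeps. To make the idea concrete, here is a minimal sketch of the simplest sweep strategy, random search. It is not one of TAO's AutoML algorithms. The `toy_accuracy` objective is a made-up stand-in for "train a model and return its validation accuracy", and the search space and function names are assumptions.

```python
import random


def random_search(objective, search_space, num_trials=20, seed=0):
    """Sample configurations uniformly and keep the best-scoring one."""
    rng = random.Random(seed)  # fixed seed keeps the sweep reproducible
    best_params, best_score = None, float("-inf")
    for _ in range(num_trials):
        params = {
            name: rng.uniform(low, high)
            for name, (low, high) in search_space.items()
        }
        score = objective(params)
        if score > best_score:
            best_params, best_score = params, score
    return best_params, best_score


def toy_accuracy(params):
    """Made-up objective that peaks at learning_rate = 0.01."""
    return 1.0 - 100.0 * (params["learning_rate"] - 0.01) ** 2


if __name__ == "__main__":
    space = {"learning_rate": (0.0001, 0.1)}
    params, score = random_search(toy_accuracy, space, num_trials=50)
    print(params, score)
```

More sophisticated algorithms spend the same trial budget more carefully, for example by stopping unpromising trials early, but the loop of proposing a configuration, scoring it, and keeping the best has the same shape.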

How to Get Started#

You can find detailed information about getting started in the TAO Getting Started Guide.

The “getting started” package contains install scripts, Jupyter notebooks, and model configuration files for training and optimization. There are Jupyter notebooks for all the models that can be used as templates to run your training. Each notebook has a call to download a sample dataset to run a training job. You can replace this with your own dataset.

TAO Architecture#

TAO is a multi-container, cloud-native AI fine-tuning microservice. The component Docker containers are available from the NVIDIA GPU Accelerated Container Registry (NGC) and come preinstalled with all dependencies required for training. You can run the CLI from the Jupyter notebooks packaged as part of the Getting Started Guide, or from the command line.

The output of the TAO workflow is a trained model that can be deployed for inference on NVIDIA devices using DeepStream and NVIDIA TensorRT™.

Depending on the execution environment, the TAO CLI can be run on a local machine or as a cloud-based service running on a Kubernetes cluster.

The TAO application layer is built on top of NVIDIA CUDA-X™, which contains all the lower-level NVIDIA libraries, including NVIDIA Container Runtime for GPU acceleration, NVIDIA® CUDA and NVIDIA cuDNN™ for deep learning (DL) operations, and TensorRT (the NVIDIA inference optimization and runtime engine) for optimizing models. Models that are generated with TAO are completely compatible with and accelerated for TensorRT, which ensures maximum inference performance without any extra effort.

TAO Deployment Modes#

Deployment Type |

Use Case |

User Level |

Deployment Method |

API/Access Type |

Infrastructure |

Data and Model Store |

Multi-GPU |

Multi-Node |

AutoML |

Job Orchestration |

|

|---|---|---|---|---|---|---|---|---|---|---|---|

Local Single Machine |

Quick and simple experiments |

Beginner |

Docker Run |

Single Server/Workstation |

Local (mounted at runtime) |

✓ |

|||||

Docker Compose |

Quick setup of finetuning microservices |

Intermediate |

Docker Compose |

Single Server/Workstation |

Local or Cloud |

✓ |

✓ |

✓ |

|||

Cloud Service Provider |

At-scale deployment in cloud with multi-tenancy |

Advanced |

Kubernetes |

Cloud |

Cloud Storage |

✓ |

✓ |

✓ |

✓ |

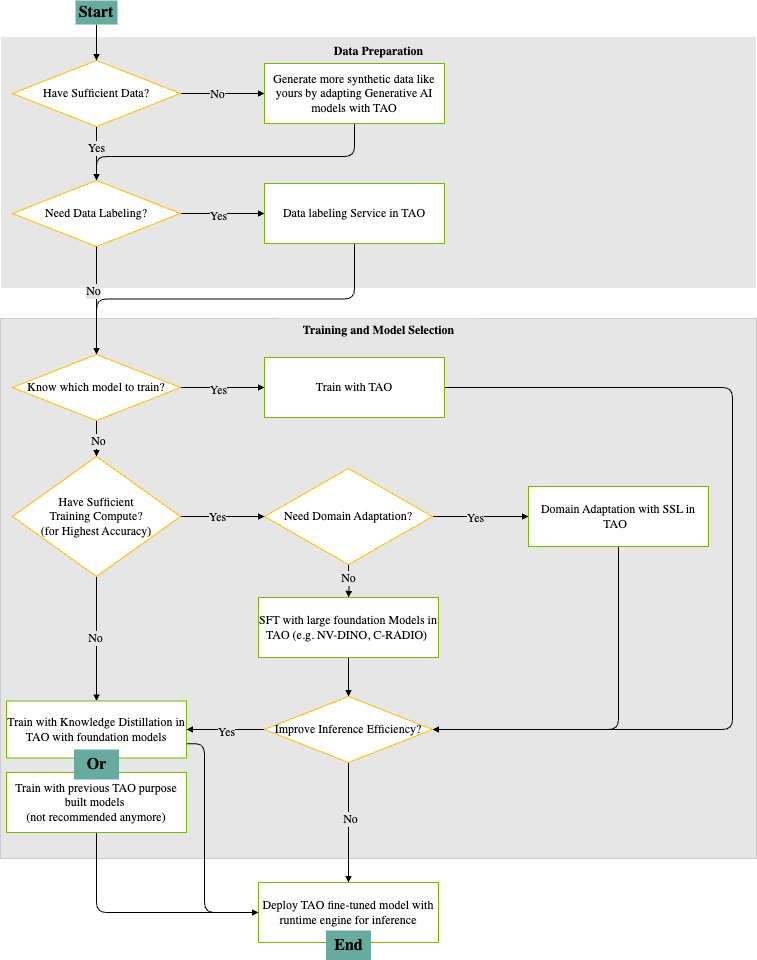

TAO Workflows#

The following diagram provides a visual guide for building, adapting, and deploying deep learning models using TAO. It outlines the decision points and actions, and provides a recipe for an end-to-end workflow that includes:

Selecting models

Preparing data

Adapting to new domains

Optimizing models

Deploying models for inference

Data Preparation#

Assess Data Availability

The process begins by determining if you have sufficient data for training your model.

If data is insufficient, TAO enables you to generate additional synthetic data using advanced tools like StyleGAN-XL, ensuring you have enough examples to achieve robust model performance.

Data Labeling Needs

If your data lacks labels, TAO offers built-in auto-labeling actions to generate annotations, preparing your dataset for fully supervised fine-tuning with tasks like object detection and instance segmentation.

If your data does not need labeling, you can proceed directly to the model training phase.

Training and Model Selection#

Model Selection

If you already know which model architecture suits your task, you can immediately begin training with TAO, leveraging its optimized pipelines for popular AI models.

If you are unsure what model to use, the workflow guides you to evaluate your available training compute resources.

Training Compute Assessment

With sufficient compute resources, you can pursue the highest degree of accuracy by training large, state-of-the-art foundation models (such as NV-DINOv2 or C-RADIOv2) within TAO.

If compute resources are limited, we recommend using knowledge distillation from foundation models, or as a fallback, previous-generation TAO models (although this is no longer the preferred option).

Domain Adaptation

If your application requires adapting a model to a new domain, TAO supports domain adaptation using self-supervised learning (SSL) techniques, which can significantly improve performance on specialized datasets.

Inference Optimization and Deployment#

Inference Efficiency

Before you deploy, you can optionally apply inference optimizations so that your model runs faster and uses fewer resources in production.

If your model does not need further optimization, you can proceed to deployment.

Deployment

The final step is deploying your fine-tuned TAO model with a runtime engine such as TensorRT, so that it is ready for efficient inference in your target application.

This workflow ensures a logical, efficient progression from raw data to a high-performance deployed AI model, utilizing NVIDIA TAO’s comprehensive suite of tools for data generation, labeling, training, adaptation, and inference optimization.
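The decision points described in this section can be sketched as a small planning function. This is only an illustration of the recipe in prose form: `ProjectStatus`, `plan_workflow`, and the step descriptions are hypothetical names, not TAO commands, and the step order simply follows the order in which the sections above present the decisions.

```python
from dataclasses import dataclass


@dataclass
class ProjectStatus:
    """Answers to the questions the workflow asks (hypothetical structure)."""
    has_enough_data: bool
    has_labels: bool
    knows_architecture: bool
    has_sufficient_compute: bool
    needs_domain_adaptation: bool
    needs_inference_optimization: bool


def plan_workflow(status: ProjectStatus) -> list:
    """Translate the decision points into an ordered list of steps."""
    steps = []
    # Data preparation
    if not status.has_enough_data:
        steps.append("generate synthetic data")
    if not status.has_labels:
        steps.append("auto-label the dataset")
    # Training and model selection
    if status.knows_architecture:
        steps.append("train the chosen architecture")
    elif status.has_sufficient_compute:
        steps.append("train a foundation model")
    else:
        steps.append("distill a foundation model into a smaller model")
    if status.needs_domain_adaptation:
        steps.append("adapt to the new domain with self-supervised learning")
    # Inference optimization and deployment
    if status.needs_inference_optimization:
        steps.append("optimize the model for inference")
    steps.append("deploy the model")
    return steps


if __name__ == "__main__":
    status = ProjectStatus(
        has_enough_data=True,
        has_labels=False,
        knows_architecture=False,
        has_sufficient_compute=False,
        needs_domain_adaptation=False,
        needs_inference_optimization=True,
    )
    for step in plan_workflow(status):
        print(step)
```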

Learning Resources#

NVIDIA provides many tutorial videos, developer blogs, and other resources that can help you get started with TAO Toolkit.

Developer Blogs#

NVIDIA publishes several blogs that can help you learn to use TAO Toolkit:

How to Build a Real-Time Visual Inspection Pipeline with NVIDIA TAO 6 and NVIDIA DeepStream 8

About the New Foundational Models and Training Capabilities with NVIDIA TAO 5.5

About the latest features in TAO 5.0

How to train with PeopleNet and other pretrained models using TAO Toolkit.

How to improve INT8 accuracy using quantization aware training (QAT) with TAO Toolkit.

How to create a real-time license plate detection and recognition app

How to prepare state of the art models for classification and object detection with TAO Toolkit

How to train and optimize a 2D body-pose estimation model with TAO: 2D Pose Estimation Part 1 and 2D Pose Estimation Part 2.

White Papers#

You can learn more about different use cases for TAO Toolkit in the white paper Endless Ways to Adapt and Supercharge Your AI Workflows with Transfer Learning.

Webinars#

Recent developments in TAO: AI Models Made Simple Using TAO, from GTC Spring 2023

Support Information#

If you have any questions when using TAO to train a model and deploy to NVIDIA Riva or DeepStream, post them here: