SSD#

With SSD, the following tasks are supported:

dataset_convert

train

evaluate

prune

inference

export

These tasks can be invoked from the TAO Launcher using the following command-line convention:

tao model ssd <sub_task> <args_per_subtask>

where args_per_subtask are the command-line arguments required for a given subtask. Each subtask is explained in detail below.

Data Input for Object Detection#

The object detection apps in TAO expect data in KITTI format for training and evaluation.

See the Data Annotation Format page for more information about the KITTI data format.

Pre-processing the Dataset#

The SSD dataloader supports raw KITTI-formatted data as well as TFRecords.

To use TFRecords for optimized iteration across the data batches, the raw input data must first be converted to the TFRecords format.

This can be done using the dataset_convert subtask. Currently, the KITTI and COCO formats are supported.

The dataset_convert tool requires a configuration file as input. Details of the configuration file and examples are included in the following sections.

Configuration File for Dataset Converter#

The dataset_convert tool provides several configurable parameters. The parameters are encapsulated in a specification file to convert data from the original annotation format to the TFRecords format, which the trainer can ingest.

KITTI and COCO formats can be configured by using either kitti_config or coco_config, respectively. You may use only one of the two in a single specification file.

The specification file is a prototxt format file with the following global parameters:

kitti_config: A nested prototxt configuration with multiple input parameters

coco_config: A nested prototxt configuration with multiple input parameters

image_directory_path: The path to the dataset root. The image_dir_name is appended to this path to get the input images. This path must be the same as the one specified in the experiment spec file.

target_class_mapping: The prototxt dictionary that maps the class names in the TFRecords to the target class to be trained in the network.

kitti_config#

Here are descriptions of the configurable parameters for the kitti_config field:

| Parameter | Datatype | Default | Description | Supported Values |
|---|---|---|---|---|
| root_directory_path | string | – | The path to the dataset root directory. | – |
| image_dir_name | string | – | The relative path to the directory containing images from the path in root_directory_path. | – |
| label_dir_name | string | – | The relative path to the directory containing labels from the path in root_directory_path. | – |
| partition_mode | string | – | The method employed when partitioning the data to multiple folds. Two methods are supported: random and sequence. | random, sequence |
| num_partitions | int | 2 (if partition_mode is random) | The number of partitions to use to split the data (N folds). This field is ignored when the partition mode is set to random, as by default only two partitions are generated: val and train. In sequence mode, the data is split into n-folds. The number of partitions is ideally fewer than the total number of sequences in the kitti_sequence_to_frames_file. | n=2 for random partition; n < number of sequences in the kitti_sequence_to_frames_file |
| image_extension | str | .png | The extension of the images in the image_dir_name parameter. | .png, .jpg, .jpeg |
| val_split | float | 20 | The percentage of data to be separated for validation. This only works under “random” partition mode. This partition is available in fold 0 of the TFRecords generated. Set the validation fold to 0 in the dataset_config. | 0-100 |
| kitti_sequence_to_frames_file | str | | The name of the KITTI sequence to frame mapping file. This file must be present within the dataset root as mentioned in the root_directory_path. | |
| num_shards | int | 10 | The number of shards per fold. | 1-20 |

The sample configuration file shown below converts 100% of the KITTI dataset to the training set (val_split is set to 0).

kitti_config {

root_directory_path: "/workspace/tao-experiments/data/"

image_dir_name: "training/image_2"

label_dir_name: "training/label_2"

image_extension: ".png"

partition_mode: "random"

num_partitions: 2

val_split: 0

num_shards: 10

}

image_directory_path: "/workspace/tao-experiments/data/"

target_class_mapping {

key: "car"

value: "car"

}

target_class_mapping {

key: "pedestrian"

value: "pedestrian"

}

target_class_mapping {

key: "cyclist"

value: "cyclist"

}

target_class_mapping {

key: "van"

value: "car"

}

target_class_mapping {

key: "person_sitting"

value: "pedestrian"

}

target_class_mapping {

key: "truck"

value: "car"

}
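When many source classes map onto a few target classes, the repeated target_class_mapping entries can be generated rather than typed by hand. The following is a minimal sketch, not part of TAO: the helper name target_class_mapping_block is hypothetical, and it only renders text in the same layout as the sample above.

```python
def target_class_mapping_block(mapping):
    """Render a {source_class: target_class} dict as prototxt entries.

    Hypothetical helper for illustration; it only formats text and does
    not validate class names against any dataset.
    """
    entries = []
    for source, target in mapping.items():
        entries.append(
            "target_class_mapping {\n"
            f'  key: "{source}"\n'
            f'  value: "{target}"\n'
            "}"
        )
    return "\n".join(entries)


# Example: fold "van" into "car" and "person_sitting" into "pedestrian".
mapping = {
    "car": "car",
    "van": "car",
    "person_sitting": "pedestrian",
}
spec_fragment = target_class_mapping_block(mapping)
print(spec_fragment)
```

The printed fragment can be pasted into the specification file after the kitti_config or coco_config block.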

coco_config#

Here are descriptions of the configurable parameters for the coco_config field:

| Parameter | Datatype | Default | Description | Supported Values |
|---|---|---|---|---|
| root_directory_path | string | – | The path to the dataset root directory. | – |
| img_dir_names | string (repeated) | – | The relative path to the directory containing images from the path in root_directory_path for each partition. | – |
| annotation_files | string (repeated) | – | The relative path to the JSON annotation file from the path in root_directory_path for each partition. | – |
| num_partitions | int | 2 | The number of partitions in the data. The number of partitions must match the length of the lists for img_dir_names and annotation_files. By default, two partitions are generated: val and train. | |
| num_shards | int (repeated) | [10] | The number of shards per partition. If only one value is provided, the same number of shards is applied to all partitions. | |

The sample configuration file shown below converts the COCO dataset with training and validation data, using 32 shards for validation and 256 shards for training.

coco_config {

root_directory_path: "/workspace/tao-experiments/data/coco"

img_dir_names: ["val2017", "train2017"]

annotation_files: ["annotations/instances_val2017.json", "annotations/instances_train2017.json"]

num_partitions: 2

num_shards: [32, 256]

}

image_directory_path: "/workspace/tao-experiments/data/coco"

Sample Usage of the Dataset Converter Tool#

The dataset_convert tool is described below:

tao model ssd dataset_convert [-h] -d DATASET_EXPORT_SPEC

-o OUTPUT_FILENAME

[-v]

You can use the following arguments:

-h, --help: Show this help message and exit.

-d, --dataset-export-spec: The path to the detection dataset spec containing the config for exporting .tfrecord files.

-o, --output_filename: The output filename.

-v: Enable verbose mode to show debug messages.

The following example shows how to use the command with the dataset:

tao model ssd dataset_convert -d /path/to/spec.txt \

-o /path/to/tfrecords/train

Creating a Configuration File#

Below is a sample of the SSD specification file. It has six major components: ssd_config, training_config, eval_config, nms_config, augmentation_config, and dataset_config. The format of the specification file is a protobuf text (prototxt) message, and each of its fields can be either a basic data type or a nested message.

random_seed: 42

ssd_config {

aspect_ratios: "[[1.0, 2.0, 0.5], [1.0, 2.0, 0.5, 3.0, 1.0/3.0], [1.0, 2.0, 0.5, 3.0, 1.0/3.0], [1.0, 2.0, 0.5, 3.0, 1.0/3.0], [1.0, 2.0, 0.5], [1.0, 2.0, 0.5]]"

scales: "[0.07, 0.15, 0.33, 0.51, 0.69, 0.87, 1.05]"

two_boxes_for_ar1: true

clip_boxes: false

variances: "[0.1, 0.1, 0.2, 0.2]"

arch: "resnet"

nlayers: 18

freeze_bn: false

freeze_blocks: 0

}

training_config {

batch_size_per_gpu: 16

num_epochs: 80

enable_qat: false

learning_rate {

soft_start_annealing_schedule {

min_learning_rate: 5e-5

max_learning_rate: 2e-2

soft_start: 0.15

annealing: 0.8

}

}

regularizer {

type: L1

weight: 3e-5

}

}

eval_config {

validation_period_during_training: 10

average_precision_mode: SAMPLE

batch_size: 16

matching_iou_threshold: 0.5

}

nms_config {

confidence_threshold: 0.01

clustering_iou_threshold: 0.6

top_k: 200

}

augmentation_config {

output_width: 300

output_height: 300

output_channel: 3

image_mean {

key: 'b'

value: 103.9

}

image_mean {

key: 'g'

value: 116.8

}

image_mean {

key: 'r'

value: 123.7

}

}

dataset_config {

data_sources: {

# option 1

tfrecords_path: "/path/to/train/tfrecord"

# option 2

# label_directory_path: "/path/to/train/labels"

# image_directory_path: "/path/to/train/images"

}

include_difficult_in_training: true

target_class_mapping {

key: "car"

value: "car"

}

target_class_mapping {

key: "pedestrian"

value: "pedestrian"

}

target_class_mapping {

key: "cyclist"

value: "cyclist"

}

target_class_mapping {

key: "van"

value: "car"

}

target_class_mapping {

key: "person_sitting"

value: "pedestrian"

}

validation_data_sources: {

label_directory_path: "/path/to/val/labels"

image_directory_path: "/path/to/val/images"

}

}

The top level structure of the specification file is summarized in the sections below.

Training Config#

The training configuration (training_config) defines the parameters needed for training, evaluation, and inference. Details are summarized in the table below.

| Field | Description | Data Type and Constraints | Recommended/Typical Value |
|---|---|---|---|
| batch_size_per_gpu | The batch size for each GPU; the effective batch size is batch_size_per_gpu * num_gpus. | Unsigned int, positive | – |
| num_epochs | The number of epochs to train the network. | Unsigned int, positive | – |
| enable_qat | Whether to use quantization-aware training. | Boolean | Note: SSD does not support loading a pruned non-QAT model and retraining it with QAT enabled, or vice versa. For example, to get a pruned QAT model, perform the initial training with QAT enabled (enable_qat=True). |
| learning_rate | Only soft_start_annealing_schedule with these nested parameters is supported: (1) min_learning_rate: The minimum learning rate during the entire experiment. (2) max_learning_rate: The maximum learning rate during the entire experiment. (3) soft_start: The time to lapse before warm up (expressed as a fraction of progress between 0 and 1). (4) annealing: The time to start annealing the learning rate. | Message type | – |
| regularizer | This parameter configures the regularizer to be used while training and contains the following nested parameters: (1) type: The type of regularizer to use. NVIDIA supports NO_REG, L1, and L2. (2) weight: The floating point value for the regularizer weight. | Message type | L1. Note: NVIDIA suggests using the L1 regularizer when training a network before pruning, as L1 regularization helps make the network weights more prunable. |
| max_queue_size | The number of prefetch batches in data loading. | Unsigned int, positive | – |
| n_workers | The number of workers for data loading (set to less than 4 when using TFRecords). | Unsigned int, positive | – |
| use_multiprocessing | Whether to use the multiprocessing mode of the Keras sequence data loader. | Boolean | |
| visualizer | Training visualization config. | Message type | |
| early_stopping | Early stopping config. | Message type | |

Note

The learning rate is automatically scaled with the number of GPUs used during training; that is, the effective learning rate is learning_rate * n_gpu.
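To make the four soft_start_annealing_schedule parameters concrete, here is a minimal sketch of how such a schedule can be evaluated at a given training progress. The shape of the ramps is an assumption: this sketch interpolates in the log domain during warm-up and annealing, which may differ from what TAO does internally. The function name and the n_gpu variable are illustrative only.

```python
import math


def soft_start_annealing_lr(progress, min_lr, max_lr, soft_start, annealing):
    """Learning rate at a training progress in [0, 1].

    Assumption (not confirmed by the documentation): the warm-up and the
    annealing phases both interpolate between min_lr and max_lr in the
    log domain.
    """
    if progress < soft_start:
        # Warm-up: ramp from min_lr up to max_lr.
        frac = progress / soft_start
        return math.exp(math.log(min_lr) + frac * math.log(max_lr / min_lr))
    if progress < annealing or annealing >= 1.0:
        # Plateau at the maximum learning rate.
        return max_lr
    # Annealing: decay from max_lr back down to min_lr.
    frac = (progress - annealing) / (1.0 - annealing)
    return math.exp(math.log(max_lr) + frac * math.log(min_lr / max_lr))


# Values taken from the sample specification file.
n_gpu = 2
for progress in (0.0, 0.15, 0.5, 0.9, 1.0):
    lr = soft_start_annealing_lr(progress, 5e-5, 2e-2, 0.15, 0.8)
    # Per the note above, the effective rate scales with the GPU count.
    print(f"progress={progress:.2f} lr={lr:.6f} effective={lr * n_gpu:.6f}")
```

With soft_start: 0.15 and annealing: 0.8, the rate rises for the first 15% of training, stays at max_learning_rate until 80%, and then falls back toward min_learning_rate.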

Training Visualization Config#

Visualization during training is configured by the visualizer parameter. Its parameters are described in the table below.

| Parameter | Description | Data Type and Constraints | Recommended/Typical Value |
|---|---|---|---|
| enabled | Boolean flag to enable or disable this feature. | bool | – |
| num_images | The maximum number of images to be visualized in TensorBoard. | int | |

If visualization is enabled, TensorBoard logs are produced during training, including graphs for the learning rate, training loss, validation loss, validation mAP, and validation AP of each class. The augmented images with bounding boxes are also written to TensorBoard.

Early Stopping#

The parameters for early stopping are described in the table below.

| Parameter | Description | Data Type and Constraints | Recommended/Typical Value |
|---|---|---|---|
| monitor | The metric to monitor in order to enable early stopping. | string | |
| patience | The number of checks of | int | |
| min_delta | The delta of the minimum value of | float | |

Evaluation Config#

The evaluation configuration (eval_config) defines the parameters needed for evaluation, either during training or as a standalone procedure. Details are summarized in the table below.

| Field | Description | Data Type and Constraints | Recommended/Typical Value |
|---|---|---|---|
| validation_period_during_training | The number of training epochs per validation. | Unsigned int, positive | 10 |
| average_precision_mode | The Average Precision (AP) calculation mode can be either SAMPLE or INTEGRATE. SAMPLE is used as VOC metrics for VOC 2009 or before. INTEGRATE is used for VOC 2010 or after. | ENUM type (SAMPLE or INTEGRATE) | SAMPLE |
| matching_iou_threshold | The lowest IoU of the predicted box and ground truth box that can be considered a match. | float | 0.5 |
| visualize_pr_curve | Boolean flag to enable or disable visualization of the Precision-Recall curve. | Boolean | |
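The difference between the two average_precision_mode values can be illustrated with the Pascal VOC definitions they refer to. The sketch below assumes that SAMPLE corresponds to the 11-point interpolated AP and INTEGRATE to the area under the monotone precision envelope; whether TAO's implementation matches these formulas in every detail is an assumption, and the function name is illustrative.

```python
def average_precision(recall, precision, mode="SAMPLE"):
    """AP from a precision-recall curve sorted by increasing recall.

    SAMPLE: 11-point interpolation (recall thresholds 0.0, 0.1, ..., 1.0).
    INTEGRATE: area under the monotonically decreasing precision envelope.
    """
    if mode == "SAMPLE":
        total = 0.0
        for i in range(11):
            threshold = i / 10
            # Best precision achieved at any recall >= threshold.
            candidates = [p for r, p in zip(recall, precision) if r >= threshold]
            total += max(candidates) if candidates else 0.0
        return total / 11
    if mode == "INTEGRATE":
        mrec = [0.0] + list(recall) + [1.0]
        mpre = [0.0] + list(precision) + [0.0]
        # Make precision non-increasing from right to left.
        for i in range(len(mpre) - 2, -1, -1):
            mpre[i] = max(mpre[i], mpre[i + 1])
        # Sum the rectangle areas between consecutive recall values.
        return sum((mrec[i] - mrec[i - 1]) * mpre[i] for i in range(1, len(mrec)))
    raise ValueError(f"unknown mode: {mode}")


# Toy curve: precision 1.0 at recall 0.5, precision 0.5 at recall 1.0.
recall = [0.5, 1.0]
precision = [1.0, 0.5]
ap_sample = average_precision(recall, precision, "SAMPLE")
ap_integrate = average_precision(recall, precision, "INTEGRATE")
print(ap_sample, ap_integrate)
```

The two modes generally give slightly different numbers for the same detections, so results should only be compared when they use the same mode.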

NMS Config#

The NMS configuration (nms_config) defines the parameters needed for NMS postprocessing. The NMS configuration applies to the NMS layer of the model in training, validation, evaluation, inference, and export. Details are summarized in the table below.

| Field | Description | Data Type and Constraints | Recommended/Typical Value |
|---|---|---|---|
| confidence_threshold | Boxes with a confidence score less than confidence_threshold are discarded before applying NMS. | float | 0.01 |
| clustering_iou_threshold | The IoU threshold below which boxes will go through the NMS process. | float | 0.6 |
| top_k | top_k boxes will be output after the NMS Keras layer. If the number of valid boxes is less than k, the returned array will be padded with boxes whose confidence score is 0. | Unsigned int | 200 |
| infer_nms_score_bits | The number of bits to represent the score values in the NMS plugin in TensorRT OSS. The valid range is integers in [1, 10]. Setting it to any other value makes it fall back to ordinary NMS. Currently, this optimized NMS plugin is only available in FP16, but it is also selected for the INT8 data type, because there is no INT8 NMS in TensorRT OSS and the FP16 implementation is the fastest available. When falling back to ordinary NMS, the actual data type used when building the engine decides the exact precision (FP16 or FP32) to run at. | int, in the interval [1, 10] | 0 |
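The way the three main nms_config values interact can be sketched with a plain greedy NMS. This is an illustration under stated assumptions, not the TAO layer itself: boxes are (xmin, ymin, xmax, ymax) tuples, a box is suppressed when its IoU with an already kept box exceeds clustering_iou_threshold, and the function names are made up for this example.

```python
def iou(a, b):
    """Intersection over union of two (xmin, ymin, xmax, ymax) boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def nms(detections, confidence_threshold=0.01,
        clustering_iou_threshold=0.6, top_k=200):
    """Greedy NMS over a list of (score, box); returns exactly top_k entries."""
    # 1. Drop low-confidence boxes before NMS.
    candidates = sorted(
        (d for d in detections if d[0] >= confidence_threshold),
        key=lambda d: d[0],
        reverse=True,
    )
    # 2. Keep a box only if it does not overlap a kept box too much.
    kept = []
    for score, box in candidates:
        if len(kept) == top_k:
            break
        if all(iou(box, k[1]) <= clustering_iou_threshold for k in kept):
            kept.append((score, box))
    # 3. Pad with zero-confidence boxes up to top_k.
    padding = [(0.0, (0.0, 0.0, 0.0, 0.0))] * (top_k - len(kept))
    return kept + padding


detections = [
    (0.9, (0, 0, 10, 10)),
    (0.8, (1, 1, 10, 10)),      # IoU 0.81 with the first box: suppressed
    (0.7, (20, 20, 30, 30)),
    (0.005, (50, 50, 60, 60)),  # below confidence_threshold: discarded
]
result = nms(detections, top_k=3)
print(result)
```

In this example two boxes survive and one zero-confidence padding entry fills the output up to top_k.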

Augmentation Config#

The augmentation_config parameter defines the image size after preprocessing. The augmentation methods in the SSD paper are performed during training, including random flip, zoom-in, zoom-out, and color jittering. The augmented images are then resized to the output shape defined in augmentation_config. During evaluation, only the resize is performed.

Note

The details of the augmentation methods can be found in sections 2.2 and 3.6 of the paper.

| Field | Description | Data Type and Constraints | Recommended/Typical Value |
|---|---|---|---|
| output_channel | Output image channel of the augmentation pipeline. | integer | – |
| output_width | The width of preprocessed images and the network input. | integer, multiple of 32 | – |
| output_height | The height of preprocessed images and the network input. | integer, multiple of 32 | – |
| random_crop_min_scale | Minimum patch scale of RandomCrop augmentation. Default: 0.3 | float in (0, 1] | – |
| random_crop_max_scale | Maximum patch scale of RandomCrop augmentation. Default: 1.0 | float in (0, 1] | – |
| random_crop_min_ar | Minimum aspect ratio of RandomCrop augmentation. Default: 0.5 | float > 0 | – |
| random_crop_max_ar | Maximum aspect ratio of RandomCrop augmentation. Default: 2.0 | float > 0 | – |
| zoom_out_min_scale | Minimum scale of ZoomOut augmentation. Default: 1.0 | float >= 1.0 | – |
| zoom_out_max_scale | Maximum scale of ZoomOut augmentation. Default: 4.0 | float >= 1.0 | – |
| brightness | Brightness delta in color jittering augmentation. Default: 32 | integer >= 0 | – |
| contrast | Contrast delta factor in color jittering augmentation. Default: 0.5 | float in [0, 1) | – |
| saturation | Saturation delta factor in color jittering augmentation. Default: 0.5 | float in [0, 1) | – |
| hue | Hue delta in color jittering augmentation. Default: 18 | integer >= 0 | – |
| random_flip | Probability of performing a random horizontal flip. Default: 0.5 | float in [0, 1) | – |
| image_mean | A key/value pair to specify image mean values. If omitted, the ImageNet mean will be used for image preprocessing. If set, depending on output_channel, either the ‘r/g/b’ or ‘l’ key/value pair must be configured. | dict | – |

Note

If you set random_crop_min_scale = random_crop_max_scale = 1.0, RandomCrop augmentation is disabled. Similarly, setting zoom_out_min_scale = zoom_out_max_scale = 1 disables ZoomOut augmentation, and setting all color jitter delta values to 0 disables color jittering augmentation.

Dataset Config#

The dataset_config parameter defines the paths to the training dataset and the validation dataset, as well as the target_class_mapping.

| Field | Description | Data Type and Constraints | Recommended/Typical Value |
|---|---|---|---|
| data_sources | The path to the training dataset. When using TFRecords for dataset ingestion, set tfrecords_path. When using raw KITTI labels and images, set label_directory_path and image_directory_path. | Message type | |
| include_difficult_in_training | Specifies whether to include difficult objects in the label (the Pascal VOC difficult label or KITTI occluded objects). | bool | true |
| validation_data_sources | The path to the validation dataset images and labels. | Message type | |
| target_class_mapping | A mapping of classes in labels to the target classes. | Message type | |

Note

data_sources and validation_data_sources are both repeated fields.

Multiple datasets can be added to sources.

SSD config#

The SSD configuration (ssd_config) defines the parameters needed for building the SSD model. Details are summarized in the table below.

| Field | Description | Data Type and Constraints | Recommended/Typical Value |
|---|---|---|---|
| aspect_ratios_global | The anchor boxes of aspect ratios defined in aspect_ratios_global will be generated for each feature layer used for prediction. Note that either the aspect_ratios_global or aspect_ratios parameter is required; you don’t need to specify both. | string | “[1.0, 2.0, 0.5, 3.0, 0.33]” |
| aspect_ratios | The aspect ratios of anchor boxes for different SSD feature layers. Note: Either the aspect_ratios_global or aspect_ratios parameter is required; you don’t need to specify both. | string | “[[1.0,2.0,0.5], [1.0,2.0,0.5], [1.0,2.0,0.5], [1.0,2.0,0.5], [1.0,2.0,0.5], [1.0, 2.0, 0.5, 3.0, 0.33]]” |
| two_boxes_for_ar1 | If this parameter is True, two boxes will be generated with an aspect ratio of 1: one with the scale for this layer, and the other with a scale that is the geometric mean of the scale for this layer and the scale for the next layer. | Boolean | True |
| clip_boxes | If true, all corner anchor boxes will be truncated so they are fully inside the feature images. | Boolean | False |
| scales | A list of positive floats containing scaling factors per convolutional predictor layer. This list must be one element longer than the number of predictor layers so that, if two_boxes_for_ar1 is true, the second aspect-ratio 1.0 box for the last layer can have a proper scale. Except for the last element in this list, each positive float is the scaling factor for boxes in that layer. For example, if for one layer the scale is 0.1, then the generated anchor box with aspect ratio 1 for that layer (the first aspect-ratio 1 box if two_boxes_for_ar1 is set to True) will have its height and width as 0.1*min(img_h, img_w). min_scale and max_scale are two positive floats. If both of them appear in the config, the program can automatically generate the scales by evenly splitting the space between min_scale and max_scale. | string | “[0.05, 0.1, 0.25, 0.4, 0.55, 0.7, 0.85]” |
| min_scale/max_scale | If both appear in the config, scales will be generated evenly by splitting the space between min_scale and max_scale. | float | |
| variances | A list of 4 positive floats. The four floats, in order, represent variances for box center x, box center y, log box height, and log box width. The box offset for box center (cx, cy) and log box size (height/width) w.r.t. the anchor will be divided by their respective variance value. Therefore, larger variances result in less significant differences between two different boxes on encoded offsets. | string | |
| steps | An optional list inside quotation marks with a length that is the number of feature layers for prediction. The elements should be floats or tuples/lists of two floats. The steps define how many pixels apart the anchor-box center points should be. If the element is a float, the vertical and horizontal margins are the same. Otherwise, the first value is step_vertical and the second value is step_horizontal. If steps are not provided, anchor boxes will be distributed uniformly inside the image. | string | |
| offsets | An optional list of floats inside quotation marks with a length equal to the number of feature layers for prediction. The first anchor box will have a margin of offsets[i]*steps[i] pixels from the left and top borders. If offsets are not provided, 0.5 will be used as the default value. | string | |
| arch | The backbone for feature extraction. Currently, “resnet”, “vgg”, “darknet”, “googlenet”, “mobilenet_v1”, “mobilenet_v2” and “squeezenet” are supported. | string | resnet |
| nlayers | The number of conv layers in a specific arch. For “resnet”, 10, 18, 34, 50 and 101 are supported. For “vgg”, 16 and 19 are supported. For “darknet”, 19 and 53 are supported. All other networks don’t have this configuration, and users should delete this parameter from the config file. | Unsigned int | |
| freeze_bn | Whether to freeze all batch normalization layers during training. | boolean | False |
| freeze_blocks | The list of block IDs to be frozen in the model during training. You can choose to freeze some of the CNN blocks in the model to make the training more stable and/or easier to converge. The definition of a block is heuristic for a specific architecture (for example, by stride or by logical blocks in the model). However, the block ID numbers identify the blocks in the model in sequential order, so you don’t have to know the exact locations of the blocks when you do training. As a general principle, the smaller the block ID, the closer it is to the model input; the larger the block ID, the closer it is to the model output. You can divide the whole model into several blocks and optionally freeze a subset of it. Note that for FasterRCNN, you can only freeze the blocks that are before the ROI pooling layer. Any layer after the ROI pooling layer will not be frozen anyway. For different backbones, the number of blocks and the block ID for each block are different. It deserves some detailed explanations on how to specify the block IDs for each backbone. | list (repeated integers) | |
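The relationship between scales, aspect ratios, and two_boxes_for_ar1 can be sketched as follows. This is an illustration, not TAO code: it assumes the common SSD convention that an anchor with aspect ratio ar has width scale * sqrt(ar) and height scale / sqrt(ar), both relative to min(img_h, img_w), and that min_scale/max_scale are split linearly. The function names are hypothetical.

```python
import math


def linear_scales(min_scale, max_scale, n_predictor_layers):
    """Evenly spaced scales; one element more than the number of layers."""
    step = (max_scale - min_scale) / n_predictor_layers
    return [min_scale + i * step for i in range(n_predictor_layers + 1)]


def anchor_sizes(scale, next_scale, aspect_ratios, img_h, img_w,
                 two_boxes_for_ar1=True):
    """(width, height) in pixels of each anchor shape for one predictor layer."""
    size = min(img_h, img_w)
    shapes = []
    for ar in aspect_ratios:
        shapes.append((scale * size * math.sqrt(ar),
                       scale * size / math.sqrt(ar)))
        if ar == 1.0 and two_boxes_for_ar1:
            # Second square box: geometric mean of this and the next scale.
            extra = math.sqrt(scale * next_scale) * size
            shapes.append((extra, extra))
    return shapes


scales = linear_scales(0.1, 0.9, n_predictor_layers=4)
shapes = anchor_sizes(scales[0], scales[1], [1.0, 2.0, 0.5], 300, 300)
for width, height in shapes:
    print(f"{width:.1f} x {height:.1f}")
```

The extra scale at the end of the list is only consumed by the second aspect-ratio 1 box of the last predictor layer, which is why the scales list is one element longer than the number of predictor layers.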

Training the Model#

Train the SSD model using this command:

tao model ssd train [-h] -e <experiment_spec>

-r <output_dir>

-k <key>

[--gpus <num_gpus>]

[--gpu_index <gpu_index>]

[--use_amp]

[--log_file <log_file>]

[-m <resume_model_path>]

[--initial_epoch <initial_epoch>]

Required Arguments#

-r, --results_dir: The path to the folder where the experiment output is written.

-k, --key: The encryption key to decrypt the model.

-e, --experiment_spec_file: The experiment specification file to set up the training experiment.

Optional Arguments#

--gpus num_gpus: The number of GPUs to use and processes to launch for training. The default is 1.

--gpu_index: The GPU indices used to run the training. You can specify the GPU indices when the machine has multiple GPUs installed.

--use_amp: A flag to enable AMP training.

--log_file: The path to the log file. Defaults to stdout.

-m, --resume_model_weights: The path to a pre-trained model or a model to continue training.

--initial_epoch: The epoch number to resume from.

--use_multiprocessing: Enable multiprocessing mode in the data generator.

-h, --help: Show this help message and exit.

Input Requirement#

Input size: C * W * H (where C = 1 or 3, W >= 128, H >= 128)

Image format: JPG, JPEG, PNG

Label format: KITTI detection
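A quick pre-flight check of these requirements can save a failed training launch. The sketch below is a hypothetical helper, not a TAO utility; it only encodes the three size constraints and the image formats listed above.

```python
SUPPORTED_EXTENSIONS = {".jpg", ".jpeg", ".png"}


def check_input(channels, width, height, extension=".png"):
    """Return a list of violations of the stated input requirements."""
    problems = []
    if channels not in (1, 3):
        problems.append(f"C must be 1 or 3, got {channels}")
    if width < 128:
        problems.append(f"W must be >= 128, got {width}")
    if height < 128:
        problems.append(f"H must be >= 128, got {height}")
    if extension.lower() not in SUPPORTED_EXTENSIONS:
        problems.append(f"unsupported image format: {extension}")
    return problems


ok = check_input(3, 300, 300, ".png")
bad = check_input(2, 64, 300, ".bmp")
print(ok)
print(bad)
```

An empty list means the input satisfies the stated requirements; otherwise each entry names one violated constraint.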

Sample Usage#

Here’s an example of using the train command on an SSD model:

tao model ssd train --gpus 2 -e /path/to/spec.txt -r /path/to/result -k $KEY

Evaluating the Model#

Use the following command to run evaluation for an SSD model:

tao model ssd evaluate [-h] -m <model>

-e <experiment_spec_file>

[-k <key>]

[--gpu_index <gpu_index>]

[--log_file <log_file>]

Required Arguments#

-m, --model: The .tlt model or TensorRT engine to be evaluated.

-e, --experiment_spec_file: The experiment specification file to set up the evaluation experiment. This should be the same as the training specification file.

Optional Arguments#

-h, --help: Show this help message and exit.

-k, --key: The encoding key for the .tlt model.

--gpu_index: The index of the GPU to run evaluation (useful when the machine has multiple GPUs installed). Note that evaluation can only run on a single GPU.

--log_file: The path to the log file. The default path is stdout.

Here is a sample command to evaluate an SSD model:

tao model ssd evaluate -m /path/to/trained_tlt_ssd_model -k <model_key> -e /path/to/ssd_spec.txt

Running Inference on the Model#

The inference command for SSD networks can be used to visualize bboxes or generate frame-by-frame KITTI format labels on a directory of images. Here’s an example of using this tool:

tao model ssd inference [-h] -i <input directory>

-o <output annotated image directory>

-e <experiment specification file>

-m <model file>

-k <key>

[-l <output label directory>]

[-t <bbox filter threshold>]

[--gpu_index <gpu_index>]

[--log_file <log_file>]

Required Arguments#

-m, --model: The path to the pretrained model (TAO model).

-i, --in_image_dir: The directory of input images for inference.

-o, --out_image_dir: The directory path to output annotated images.

-k, --key: The key to load the model.

-e, --config_path: The path to an experiment specification file for training.

Optional Arguments#

-t, --threshold: The threshold for drawing a bbox and dumping a label file (default: 0.3).

-h, --help: Show this help message and exit.

-l, --out_label_dir: The directory to output KITTI labels to.

--gpu_index: The index of the GPU to run inference (useful when the machine has multiple GPUs installed). Note that inference can only run on a single GPU.

--log_file: The path to the log file. The default path is stdout.

Here is a sample of using inference with the SSD model:

tao model ssd inference -i /path/to/input/images_dir -o /path/to/output/dir -m /path/to/trained_tlt_ssd_model -k <model_key> -e /path/to/ssd_spec.txt

Pruning the Model#

Pruning removes parameters from the model to reduce the model size without compromising the integrity of the model itself.

The prune command includes these parameters:

tao model ssd prune [-h] -m <pretrained_model>

-o <output_file> -k <key>

[-n <normalizer>]

[-eq <equalization_criterion>]

[-pg <pruning_granularity>]

[-pth <pruning threshold>]

[-nf <min_num_filters>]

[-el <excluded_list>]

[--gpu_index <gpu_index>]

[--log_file <log_file>]

Required Arguments#

-m, --pretrained_model: The path to the pretrained model.

-o, --output_file: The path to output checkpoints to.

-k, --key: The key to load a .tlt model.

Optional Arguments#

-h, --help: Show this help message and exit.

-n, --normalizer: Use max to normalize by dividing each norm by the maximum norm within a layer; use L2 to normalize by dividing by the L2 norm of the vector comprising all kernel norms (default: max).

-eq, --equalization_criterion: The criterion used to equalize the stats of inputs to an element-wise op layer or depth-wise convolutional layer. This parameter is useful for resnets and mobilenets. Options are arithmetic_mean, geometric_mean, union, and intersection (default: union).

-pg, --pruning_granularity: The number of filters to remove at a time (default: 8).

-pth: The threshold to compare the normalized norm against (default: 0.1).

-nf, --min_num_filters: The minimum number of filters to keep per layer (default: 16).

-el, --excluded_layers: A list of excluded layers. Example: -el item1 item2 (default: []).

--gpu_index: The index of the GPU to run pruning (useful when the machine has multiple GPUs installed). Note that pruning can only run on a single GPU.

--log_file: The path to the log file. Defaults to stdout.

Here’s an example of using the prune command:

tao model ssd prune -m /workspace/output/weights/resnet_003.tlt \

-o /workspace/output/weights/resnet_003_pruned.tlt \

-eq union \

-pth 0.7 -k $KEY

After pruning, the model needs to be retrained. See Re-training the Pruned Model for more details.
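To give an intuition for how the -n, -pth, -pg, and -nf options interact, here is a deliberately simplified sketch of magnitude-based filter selection for a single layer. It is not TAO's pruning algorithm: the rounding rule, the tie-breaking, and the function name are assumptions made for illustration, and equalization across layers is ignored entirely.

```python
import math


def filters_to_keep(norms, threshold=0.1, granularity=8, min_num_filters=16):
    """Indices of filters retained in one layer under a simplified rule.

    Assumptions: norms are normalized by the layer maximum (the "max"
    normalizer), filters above the threshold are kept, and the kept count
    is rounded up to a multiple of the granularity and clamped to at
    least min_num_filters.
    """
    max_norm = max(norms)
    normalized = [n / max_norm if max_norm > 0 else 0.0 for n in norms]
    n_above = sum(1 for value in normalized if value > threshold)
    n_keep = math.ceil(n_above / granularity) * granularity
    n_keep = min(len(norms), max(n_keep, min_num_filters))
    # Keep the n_keep filters with the largest normalized norms.
    ranked = sorted(range(len(norms)), key=lambda i: normalized[i], reverse=True)
    return sorted(ranked[:n_keep])


# A 64-filter layer where only 20 filters carry significant weight.
norms = [1.0] * 20 + [0.01] * 44
kept = filters_to_keep(norms, threshold=0.1, granularity=8, min_num_filters=16)
print(len(kept))
```

Under these assumptions a higher -pth removes more filters, while -pg and -nf put a floor on how aggressively any single layer can shrink.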

Re-training the Pruned Model#

Once the model has been pruned, there might be a slight decrease in accuracy. This happens because some previously useful weights may have been removed. To regain accuracy, NVIDIA recommends that you retrain this pruned model over the same dataset. To do this, use the tao model ssd train command with an updated specification file that points to the newly pruned model as the pretrained model file.

Users are advised to turn off the regularizer in the training_config for SSD to recover the accuracy when retraining a pruned model. You may do this by setting the regularizer type to NO_REG, as described in the Training Config section. All the other parameters may be retained in the specification file from the previous training.

Note

SSD does not support loading a pruned non-QAT model and retraining it with QAT enabled, or vice versa. For example, to get a pruned QAT model, perform the initial training with QAT enabled (enable_qat=True).

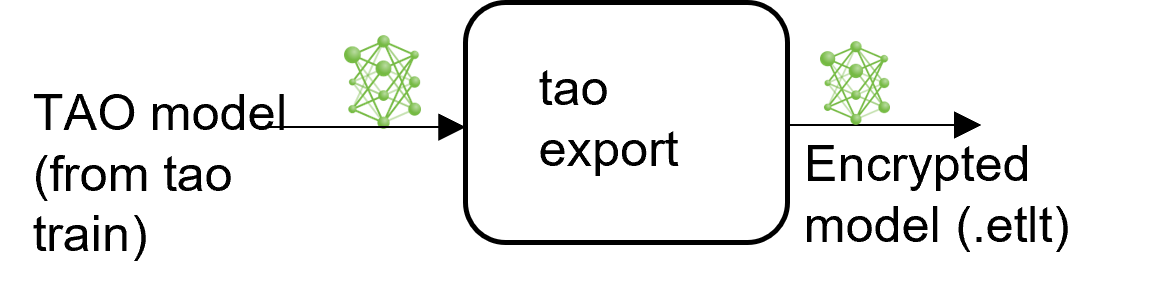

Exporting the Model#

TAO includes the export command to export and prepare TAO models for deploying to DeepStream. The export command optionally generates the calibration cache for TensorRT INT8 engine calibration.

Exporting the model decouples the training process from inference and allows conversion to TensorRT engines outside the TAO environment. TensorRT engines are specific to each hardware configuration and should be generated for each unique inference environment. The engine file is interchangeably referred to as the .trt or .engine file. The same exported TAO model may be used universally across training and deployment hardware. This is referred to as the .etlt file or encrypted TAO file. During model export, the TAO model is encrypted with a private key. This key is required when you deploy this model for inference.

INT8 Mode Overview#

TensorRT engines can be generated in INT8 mode to improve performance, but require a calibration cache at engine creation time. The calibration cache is generated using a calibration tensor file if export is run with the --data_type flag set to int8.

Pre-generating the calibration information and caching it removes the need for calibrating the model on the inference machine. Moving the calibration cache is usually much more convenient than moving the calibration tensor file, since it is a much smaller file and can be moved with the exported model. Using the calibration cache also speeds up engine creation, as building the cache can take several minutes depending on the size of the tensor file and the model itself.

The export tool can generate an INT8 calibration cache by ingesting training data using the following method:

Pointing the tool to a directory of images that you want to use to calibrate the model. For this option, make sure to create a sub-sampled directory of random images that best represent your training dataset.
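Creating the sub-sampled calibration directory can be scripted. The following is a minimal sketch using only the Python standard library; the function name, the fixed seed, and the example directory names are assumptions for illustration, and a uniform random sample is only one reasonable way to pick representative images.

```python
import random
import shutil
import tempfile
from pathlib import Path

IMAGE_EXTENSIONS = {".jpg", ".jpeg", ".png"}


def sample_calibration_images(src_dir, dst_dir, num_images, seed=42):
    """Copy a uniform random subset of images from src_dir into dst_dir."""
    images = sorted(
        p for p in Path(src_dir).iterdir()
        if p.suffix.lower() in IMAGE_EXTENSIONS
    )
    rng = random.Random(seed)  # fixed seed so the subset is reproducible
    chosen = rng.sample(images, min(num_images, len(images)))
    dst = Path(dst_dir)
    dst.mkdir(parents=True, exist_ok=True)
    for path in chosen:
        shutil.copy2(path, dst / path.name)
    return [p.name for p in chosen]


# Demonstration on a throwaway directory with empty placeholder files.
with tempfile.TemporaryDirectory() as tmp:
    src = Path(tmp) / "train_images"
    src.mkdir()
    for i in range(10):
        (src / f"img_{i:03d}.png").touch()
    picked = sample_calibration_images(src, Path(tmp) / "calib", num_images=4)
    copied = sorted(p.name for p in (Path(tmp) / "calib").iterdir())
    print(copied)
```

In practice, src_dir would be the training image directory and dst_dir the directory passed to the calibration step.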

FP16/FP32 Model#

The calibration.bin is only required if you need to run inference at INT8 precision. For FP16/FP32-based inference, the export step is much simpler: all you need to do is provide a model from the train step to export to convert it into an encrypted TAO model.

Exporting command#

Use the following command to export an SSD model:

tao model ssd export [-h] -m <path to the .tlt model file generated by tao model train>

-k <key>

-e <path to experiment specification file>

[-o <path to output file>]

[--cal_json_file <path to calibration json file>]

[--gen_ds_config]

[--gpu_index <gpu_index>]

[--log_file <log_file_path>]

[--verbose]

Required Arguments#

-m, --model: The path to the .tlt model file to be exported using export.

-k, --key: The key used to save the .tlt model file.

-e, --experiment_spec: The path to the specification file.

Optional Arguments#

-o, --output_file: The path to save the exported model to. The default path is ./<input_file>.etlt.

--gen_ds_config: A Boolean flag indicating whether to generate the template DeepStream-related configuration (“nvinfer_config.txt”) as well as a label file (“labels.txt”) in the same directory as the output_file. Note that the config file is NOT a complete configuration file and requires the user to update the sample config files in DeepStream with the parameters generated.

--gpu_index: The index of the (discrete) GPU used for exporting the model. You can specify the GPU index to run export if the machine has multiple GPUs installed. Note that export can only run on a single GPU.

--log_file: The path to the log file. Defaults to stdout.

QAT Export Mode Required Arguments#

--cal_json_file: The path to the JSON file containing the tensor scales for QAT models. This argument is required if an engine for a QAT model is being generated.

Note

When exporting a model that was trained with QAT enabled, the tensor scale factors to calibrate the activations are peeled out of the model and serialized to a TensorRT-readable cache file defined by the cal_json_file argument.

Exporting a Model#

Here’s a sample command to export an SSD model:

tao model ssd export -m $USER_EXPERIMENT_DIR/data/ssd/ssd_kitti_retrain_epoch12.tlt \

-o $USER_EXPERIMENT_DIR/data/ssd/ssd_kitti_retrain.int8.etlt \

-e $SPECS_DIR/ssd_kitti_retrain_spec.txt \

--key $KEY

TensorRT engine generation, validation, and int8 calibration#

For TensorRT engine generation, validation, and INT8 calibration, refer to the TAO Deploy documentation.

Deploying to DeepStream#

For deploying to DeepStream, refer to Deploying to DeepStream for SSD.