Deploying to DeepStream for DSSD#

The deep learning and computer vision models that you’ve trained can be deployed on edge devices, such as a Jetson Xavier or Jetson Nano, a discrete GPU, or in the cloud with NVIDIA GPUs. TAO has been designed to integrate with DeepStream SDK, so models trained with TAO will work out of the box with DeepStream SDK.

DeepStream SDK is a streaming analytic toolkit to accelerate building AI-based video analytic applications. This section will describe how to deploy your trained model to DeepStream SDK.

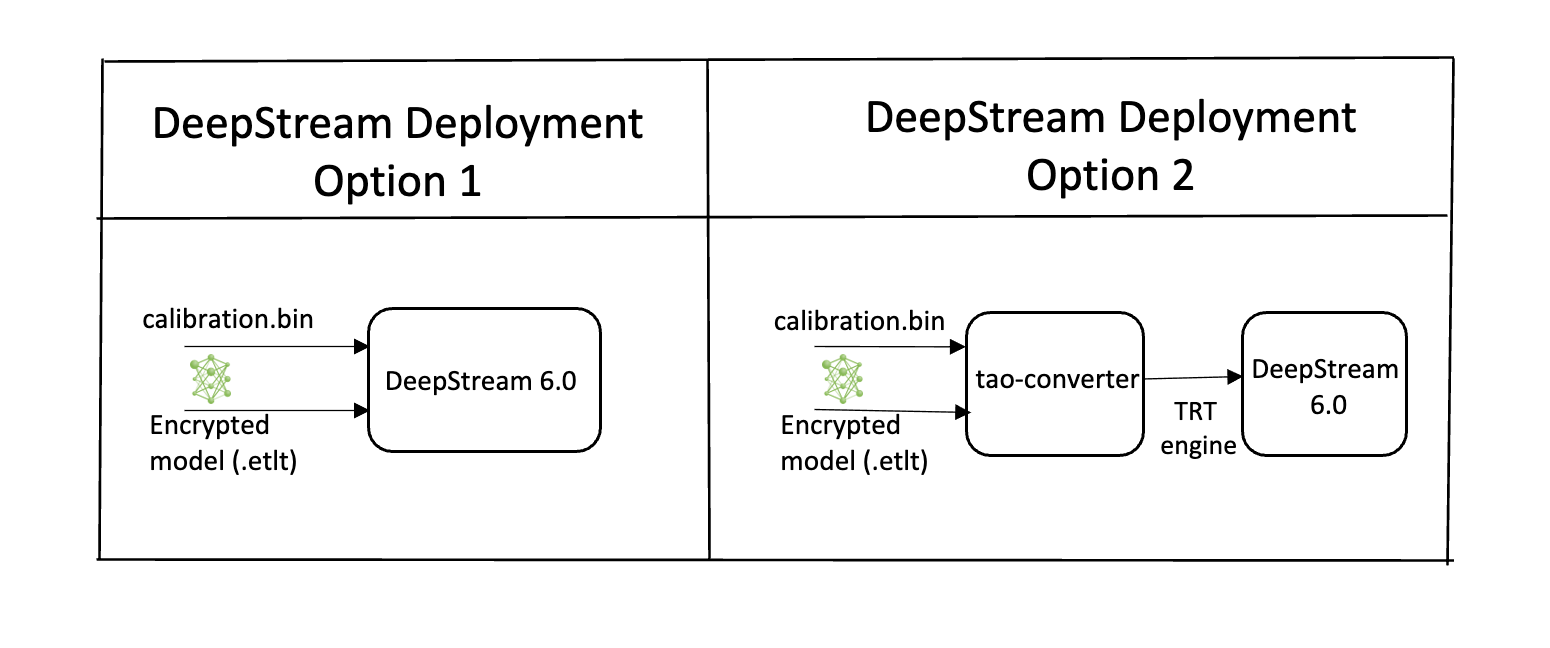

To deploy a model trained by TAO to DeepStream, you have the following options:

Option 1: Integrate the .etlt model directly in the DeepStream app. The model file is generated by export.

Option 2: Generate a device-specific optimized TensorRT engine using TAO Deploy. The generated TensorRT engine file can also be ingested by DeepStream.

Option 3 (Deprecated for x86 devices): Generate a device-specific optimized TensorRT engine using TAO Converter.

Machine-specific optimizations are done as part of the engine creation process, so a distinct engine should be generated for each environment and hardware configuration. If the TensorRT or CUDA libraries of the inference environment are updated (including minor version updates), or if a new model is generated, new engines need to be generated. Running an engine that was generated with a different version of TensorRT and CUDA is not supported and will cause unknown behavior that affects inference speed, accuracy, and stability, or it may fail to run altogether.
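Because an engine is only valid for the TensorRT version, CUDA version, and GPU it was built with, one way to avoid reusing a stale engine by accident is to record those values in the engine file name. The sketch below is a hypothetical naming convention, not something TAO or DeepStream prescribes; the function name `engine_file_name` and the version strings in the example are illustrative assumptions.

```shell
# Hypothetical helper (not part of TAO or DeepStream): build an engine file
# name that records the environment the engine was generated for, so that a
# library update or a different GPU yields a different file name.
# Arguments: model base name, TensorRT version, CUDA version, GPU_ARCHS value.
engine_file_name() {
    printf '%s_trt%s_cuda%s_sm%s.engine\n' "$1" "$2" "$3" "$4"
}

# Example with illustrative version numbers.
engine_file_name dssd 8.5.2 11.8 75
```

With a scheme like this, a deployment script can regenerate the engine whenever the expected file name does not exist yet.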

Option 1 is very straightforward. The .etlt file and calibration cache are directly used by DeepStream. DeepStream will automatically generate the TensorRT engine file and then run inference. TensorRT engine generation can take some time depending on the size of the model and the type of hardware.

Engine generation can be done ahead of time with Option 2: TAO Deploy is used to convert the .etlt file to a TensorRT engine; this engine file is then provided directly to DeepStream. The TAO Deploy workflow is similar to TAO Converter, which is deprecated for x86 devices from TAO version 4.0.x but is still required for deployment to Jetson devices.

See the Exporting the Model section for more details on how to export a TAO model.

TensorRT Open Source Software (OSS)#

A TensorRT OSS build is required for DSSD models because several TensorRT plugins that these models need are only available in the TensorRT open source repo and not in the general TensorRT release. Specifically, DSSD needs the batchTilePlugin and NMSPlugin.

If the deployment platform is x86 with NVIDIA GPU, follow instructions for x86; if your deployment is on NVIDIA Jetson platform, follow instructions for Jetson.

TensorRT OSS on x86#

Building TensorRT OSS on x86:

1. Install CMake (>=3.13).

Note

TensorRT OSS requires cmake >= v3.13, so install cmake 3.13 if your cmake version is lower than 3.13.

sudo apt remove --purge --auto-remove cmake

wget https://github.com/Kitware/CMake/releases/download/v3.13.5/cmake-3.13.5.tar.gz

tar xvf cmake-3.13.5.tar.gz

cd cmake-3.13.5/

./configure

make -j$(nproc)

sudo make install

sudo ln -s /usr/local/bin/cmake /usr/bin/cmake

2. Get GPU architecture. The GPU_ARCHS value can be retrieved by the deviceQuery CUDA sample:

cd /usr/local/cuda/samples/1_Utilities/deviceQuery

sudo make

./deviceQuery

If /usr/local/cuda/samples doesn’t exist in your system, you could download deviceQuery.cpp from this GitHub repo. Compile and run deviceQuery:

nvcc deviceQuery.cpp -o deviceQuery

./deviceQuery

This command will output something like this, which indicates the GPU_ARCHS is 75 based on the CUDA Capability major/minor version.

Detected 2 CUDA Capable device(s)

Device 0: "Tesla T4"

CUDA Driver Version / Runtime Version 10.2 / 10.2

CUDA Capability Major/Minor version number: 7.5

3. Build TensorRT OSS:

git clone -b 21.08 https://github.com/nvidia/TensorRT

cd TensorRT/

git submodule update --init --recursive

export TRT_SOURCE=`pwd`

cd $TRT_SOURCE

mkdir -p build && cd build

Note

Make sure your GPU_ARCHS from step 2 is in TensorRT OSS CMakeLists.txt. If GPU_ARCHS is not in TensorRT OSS CMakeLists.txt, add -DGPU_ARCHS=<VER> as below, where <VER> represents GPU_ARCHS from step 2.

/usr/local/bin/cmake .. -DGPU_ARCHS=xy -DTRT_LIB_DIR=/usr/lib/x86_64-linux-gnu/ -DCMAKE_C_COMPILER=/usr/bin/gcc -DTRT_BIN_DIR=`pwd`/out

make nvinfer_plugin -j$(nproc)

After building ends successfully, libnvinfer_plugin.so* will be generated under `pwd`/out/.

4. Replace the original libnvinfer_plugin.so*:

sudo mv /usr/lib/x86_64-linux-gnu/libnvinfer_plugin.so.8.x.y ${HOME}/libnvinfer_plugin.so.8.x.y.bak # backup original libnvinfer_plugin.so.x.y

sudo cp $TRT_SOURCE/build/out/libnvinfer_plugin.so.8.m.n /usr/lib/x86_64-linux-gnu/libnvinfer_plugin.so.8.x.y

sudo ldconfig
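The checks and file handling in the steps above can be sketched as a few shell helpers. This is a minimal sketch rather than part of the official procedure: the function names `version_ge`, `gpu_archs_from_devicequery`, and `replace_plugin_lib` are hypothetical, the parser assumes deviceQuery prints the capability line in the format shown in step 2, and only the first reported device is considered.

```shell
# Succeed if version $1 is greater than or equal to version $2.
# Relies on the -V (version sort) option of GNU sort, as found on Ubuntu.
version_ge() {
    [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n1)" = "$2" ]
}

# Read deviceQuery output on stdin and print GPU_ARCHS for the first device,
# e.g. a capability of 7.5 becomes 75.
gpu_archs_from_devicequery() {
    grep -m1 'CUDA Capability Major/Minor version number' \
        | sed 's/.*:[[:space:]]*//; s/[[:space:]]*$//' \
        | tr -d '.'
}

# Back up the installed plugin library into a separate directory, then copy
# the newly built library over it. The backup is kept outside the library
# directory so that ldconfig does not pick it up.
# Arguments: newly built library, installed library, backup directory.
# Run with sufficient privileges when the target is a system directory.
replace_plugin_lib() {
    new_lib="$1"
    installed_lib="$2"
    backup_dir="$3"
    [ -f "$new_lib" ] || { echo "missing build output: $new_lib" >&2; return 1; }
    [ -f "$installed_lib" ] || { echo "missing installed library: $installed_lib" >&2; return 1; }
    cp -p "$installed_lib" "$backup_dir/$(basename "$installed_lib").bak" \
        && cp "$new_lib" "$installed_lib"
}

# Typical use (commented out because it needs a real CUDA installation):
#   version_ge "$(cmake --version | head -n1 | awk '{print $3}')" 3.13 || echo "upgrade cmake"
#   ./deviceQuery | gpu_archs_from_devicequery
```

Keeping the replacement in a function that refuses to run when either file is missing avoids leaving the system without a plugin library if the build output path is mistyped.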

TensorRT OSS on Jetson (ARM64)#

1. Install CMake (>=3.13).

Note

TensorRT OSS requires cmake >= v3.13, while the default cmake on Jetson/Ubuntu 18.04 is cmake 3.10.2.

Upgrade cmake using:

sudo apt remove --purge --auto-remove cmake

wget https://github.com/Kitware/CMake/releases/download/v3.13.5/cmake-3.13.5.tar.gz

tar xvf cmake-3.13.5.tar.gz

cd cmake-3.13.5/

./configure

make -j$(nproc)

sudo make install

sudo ln -s /usr/local/bin/cmake /usr/bin/cmake

2. Get GPU architecture based on your platform. The GPU_ARCHS values for different Jetson platforms are given in the following table.

| Jetson Platform | GPU_ARCHS |
| --- | --- |
| Nano/TX1 | 53 |
| TX2 | 62 |
| AGX Xavier/Xavier NX | 72 |
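In a build script, the table above can be expressed as a small lookup. The function name `jetson_gpu_archs` and the lower-case platform keys are hypothetical and chosen only for this sketch; the values come directly from the table.

```shell
# Map a Jetson platform name to its GPU_ARCHS value, following the table above.
# The platform keys are illustrative; unknown names are reported on stderr.
jetson_gpu_archs() {
    case "$1" in
        nano|tx1)             echo 53 ;;
        tx2)                  echo 62 ;;
        agx-xavier|xavier-nx) echo 72 ;;
        *) echo "unknown platform: $1" >&2; return 1 ;;
    esac
}

# Example: look up the value to pass as -DGPU_ARCHS for a Xavier NX.
jetson_gpu_archs xavier-nx
```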

3. Build TensorRT OSS:

git clone -b 21.03 https://github.com/nvidia/TensorRT

cd TensorRT/

git submodule update --init --recursive

export TRT_SOURCE=`pwd`

cd $TRT_SOURCE

mkdir -p build && cd build

Note

The -DGPU_ARCHS=72 below is for Xavier or NX; for other Jetson platforms, change 72 to the GPU_ARCHS value from step 2.

/usr/local/bin/cmake .. -DGPU_ARCHS=72 -DTRT_LIB_DIR=/usr/lib/aarch64-linux-gnu/ -DCMAKE_C_COMPILER=/usr/bin/gcc -DTRT_BIN_DIR=`pwd`/out

make nvinfer_plugin -j$(nproc)

After building ends successfully, libnvinfer_plugin.so* will be generated under `pwd`/out/.

4. Replace libnvinfer_plugin.so* with the newly generated library:

sudo mv /usr/lib/aarch64-linux-gnu/libnvinfer_plugin.so.8.x.y ${HOME}/libnvinfer_plugin.so.8.x.y.bak # backup original libnvinfer_plugin.so.x.y

sudo cp `pwd`/out/libnvinfer_plugin.so.8.m.n /usr/lib/aarch64-linux-gnu/libnvinfer_plugin.so.8.x.y

sudo ldconfig

Integrating the model to DeepStream#

There are 2 options to integrate models from TAO with DeepStream:

Option 1: Integrate the model (.onnx) with the encrypted key directly in the DeepStream app. The model file is generated by tao model dssd export.

Option 2: Generate a device-specific optimized TensorRT engine using tao-converter. The TensorRT engine file can also be ingested by DeepStream.

For DSSD, you will need to build the TensorRT open source plugins and a custom bounding box parser. The instructions are provided in the TensorRT OSS section above, and the required code can be found in this GitHub repo.

In order to integrate the models with DeepStream, you need the following:

Download and install DeepStream SDK. The installation instructions for DeepStream are provided in the DeepStream Development Guide.

An exported .onnx model file and optional calibration cache for INT8 precision.

A labels.txt file containing the labels for classes in the order in which the network produces outputs.

A sample config_infer_*.txt file to configure the nvinfer element in DeepStream. The nvinfer element handles everything related to TensorRT optimization and engine creation in DeepStream.

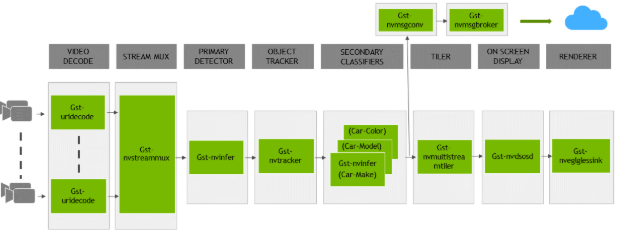

DeepStream SDK ships with an end-to-end reference application which is fully configurable. Users can configure input sources, the inference model, and output sinks. The app requires a primary object detection model, followed by an optional secondary classification model. The reference application is installed as deepstream-app. The graphic above shows the architecture of the reference application.

There are typically two or more configuration files that are used with this app. In the install directory, the config files are located in samples/configs/deepstream-app or samples/configs/tlt_pretrained_models. The main config file configures all the high-level parameters in the pipeline above. This sets the input source and resolution, number of inferences, tracker, and output sinks. The other supporting config files are for each individual inference engine. The inference-specific config files are used to specify models, inference resolution, batch size, number of classes, and other customization. The main config file will call all the supporting config files. Here are some config files in samples/configs/deepstream-app for your reference.

source4_1080p_dec_infer-resnet_tracker_sgie_tiled_display_int8.txt: Main config file

config_infer_primary.txt: Supporting config file for primary detector in the pipeline above

config_infer_secondary_*.txt: Supporting config file for secondary classifier in the pipeline above

The deepstream-app will only work with the main config file. This file will most likely remain the same for all models and can be used directly from the DeepStream SDK with little to no change. Users will only have to modify or create config_infer_primary.txt and config_infer_secondary_*.txt.
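To see which supporting files a given main config calls, its `config-file=` entries can be listed. The sketch below assumes the main config references each inference config through a `config-file=` key, as the sample configs shipped with the SDK do; the function name `list_infer_configs` is hypothetical.

```shell
# Print the value of every active config-file= key in a deepstream-app main
# config. Lines commented out with '#' are ignored because the pattern is
# anchored at the start of the line.
list_infer_configs() {
    sed -n 's/^[[:space:]]*config-file[[:space:]]*=[[:space:]]*//p' "$1"
}

# Typical use (path relative to the DeepStream install directory):
#   list_infer_configs samples/configs/deepstream-app/source4_1080p_dec_infer-resnet_tracker_sgie_tiled_display_int8.txt
```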

Integrating a DSSD Model#

To run a DSSD model in DeepStream, you need a label file and a DeepStream configuration file. In addition, you need to compile the TensorRT 7+ Open source software and DSSD bounding box parser for DeepStream.

A DeepStream sample with documentation on how to run inference using the trained DSSD models from TAO is provided on GitHub here.

Prerequisite for DSSD Model#

DSSD requires batchTilePlugin and NMS_TRT. These plugins are available in the TensorRT open source repo, but not in TensorRT 7.0. Detailed instructions to build TensorRT OSS can be found in TensorRT Open Source Software (OSS).

DSSD requires custom bounding box parsers that are not built into the DeepStream SDK. The source code to build custom bounding box parsers for DSSD is available here. The following instructions can be used to build the bounding box parser:

Step 1: Install git-lfs (git >= 1.8.2)

curl -s https://packagecloud.io/install/repositories/github/git-lfs/script.deb.sh | sudo bash

sudo apt-get install git-lfs

git lfs install

Step 2: Download Source Code with SSH or HTTPS

git clone -b release/tlt3.0 https://github.com/NVIDIA-AI-IOT/deepstream_tlt_apps

Step 3: Build

# or Path for DS installation

export CUDA_VER=10.2 # CUDA version, e.g. 10.2

make

This generates libnvds_infercustomparser_tlt.so in the directory post_processor.

Label File#

The label file is a text file containing the names of the classes that the DSSD model is trained to detect. The order in which the classes are listed here must match the order in which the model predicts the output. During the training, TAO DSSD will specify all class names in lower case and sort them in alphabetical order. For example, if the dataset_config is:

dataset_config {

data_sources: {

label_directory_path: "/workspace/tao-experiments/data/training/label_2"

image_directory_path: "/workspace/tao-experiments/data/training/image_2"

}

target_class_mapping {

key: "car"

value: "car"

}

target_class_mapping {

key: "person"

value: "person"

}

target_class_mapping {

key: "bicycle"

value: "bicycle"

}

validation_data_sources: {

label_directory_path: "/workspace/tao-experiments/data/val/label"

image_directory_path: "/workspace/tao-experiments/data/val/image"

}

}

Then the corresponding dssd_labels.txt file would be:

background

bicycle

car

person
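The ordering rule described above (class names in lower case, sorted alphabetically, with background first) can be reproduced with a short shell function. This is a minimal sketch; the function name `make_dssd_labels` is hypothetical, and the class names are passed by hand rather than parsed from the dataset_config.

```shell
# Print a DSSD label list: "background" first, then the class names
# lower-cased, de-duplicated, and sorted alphabetically.
make_dssd_labels() {
    echo background
    printf '%s\n' "$@" | tr '[:upper:]' '[:lower:]' | LC_ALL=C sort -u
}

# Example with the three mapped classes from the dataset_config above.
make_dssd_labels car person bicycle
```

Redirecting the output to dssd_labels.txt gives the file shown above.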

DeepStream Configuration File#

The detection model is typically used as a primary inference engine. It can also be used as a secondary inference engine. To run this model in the sample deepstream-app, you must modify the existing config_infer_primary.txt file to point to this model.

Option 1: Integrate the model (.onnx) directly in the DeepStream app.

For this option, users will need to add the following parameters in the configuration file.

The int8-calib-file is only required for INT8 precision.

onnx-file=<TAO exported .onnx>

int8-calib-file=<Calibration cache file>

From TAO 5.0.0, .etlt is deprecated. To integrate .etlt directly in the DeepStream app, you need the following parameters in the configuration file.

tlt-encoded-model=<TLT exported .etlt>

tlt-model-key=<Model export key>

int8-calib-file=<Calibration cache file>

The tlt-encoded-model parameter points to the exported model (.etlt) from TLT.

The tlt-model-key is the encryption key used during model export.

Option 2: Integrate the TensorRT engine file with the DeepStream app.

Generate the device-specific TensorRT engine using TAO Deploy.

After the engine file is generated, modify the following parameter to use this engine with DeepStream:

model-engine-file=<PATH to generated TensorRT engine>
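When the engine is regenerated often, editing the configuration by hand can be replaced with a small helper. The sketch below is one possible approach, not a DeepStream tool: the function name `set_property_key` is hypothetical, and it assumes the file contains a `[property]` header on a line of its own and that the key is only used in that group.

```shell
# Set key=value in the [property] group of an nvinfer config file.
# Any existing assignment of the key is removed, and the new assignment is
# written directly below the [property] header.
# Arguments: config file, key, value.
set_property_key() {
    _file="$1"
    _key="$2"
    _value="$3"
    # Refuse to edit files without an exact [property] header line.
    grep -qx '\[property\]' "$_file" || { echo "no [property] group in $_file" >&2; return 1; }
    _tmp=$(mktemp) || return 1
    awk -v k="$_key" -v v="$_value" '
        index($0, k "=") == 1 { next }            # drop the old assignment
        { print }
        $0 == "[property]" { print k "=" v }      # add the new assignment
    ' "$_file" > "$_tmp" && cat "$_tmp" > "$_file"
    _status=$?
    rm -f "$_tmp"
    return $_status
}

# Typical use (the engine path is a placeholder):
#   set_property_key config_infer_primary.txt model-engine-file /path/to/dssd.engine
```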

All other parameters are common between the two approaches. To use the custom bounding box parser instead of the default parsers in DeepStream, modify the following parameters in the [property] section of the primary infer configuration file:

parse-bbox-func-name=NvDsInferParseCustomNMSTLT

custom-lib-path=<PATH to libnvds_infercustomparser_tlt.so>

Add the label file generated above using:

labelfile-path=<dssd labels>

For all the options, see the sample configuration file below. To learn about what all the parameters are used for, refer to the DeepStream Development Guide.

[property]

gpu-id=0

net-scale-factor=1.0

offsets=103.939;116.779;123.68

model-color-format=1

labelfile-path=<Path to dssd_labels.txt>

onnx-file=<Path to DSSD onnx model>

maintain-aspect-ratio=1

batch-size=1

## 0=FP32, 1=INT8, 2=FP16 mode

network-mode=0

num-detected-classes=4

interval=0

gie-unique-id=1

is-classifier=0

#network-type=0

parse-bbox-func-name=NvDsInferParseCustomNMSTLT

custom-lib-path=<Path to libnvds_infercustomparser_tlt.so>

[class-attrs-all]

threshold=0.3

roi-top-offset=0

roi-bottom-offset=0

detected-min-w=0

detected-min-h=0

detected-max-w=0

detected-max-h=0
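In the sample above, num-detected-classes=4 matches the four entries of dssd_labels.txt (background plus three classes). A quick consistency check between the two files can be sketched as follows; the function name `check_class_count` is hypothetical, and the check assumes one label per line.

```shell
# Succeed when num-detected-classes in the nvinfer config equals the number
# of non-empty lines in the label file.
# Arguments: nvinfer config file, label file.
check_class_count() {
    _configured=$(sed -n 's/^num-detected-classes=//p' "$1" | head -n1)
    _actual=$(grep -c '[^[:space:]]' "$2")
    if [ "$_configured" = "$_actual" ]; then
        echo "ok: $_actual classes"
    else
        echo "mismatch: config says $_configured, label file has $_actual" >&2
        return 1
    fi
}

# Typical use:
#   check_class_count config_infer_primary.txt dssd_labels.txt
```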