Introduction

The NVIDIA Blueprint for Video Search and Summarization (VSS) provides a suite of reference architectures for building vision agents and AI-powered video analytics applications. These reference architectures include accelerated vision-based microservices, vision language models (VLMs), and large language models (LLMs), which can be used in your existing applications, as standalone microservices, or as part of a larger vision agent built using the blueprint.

Architecture Overview

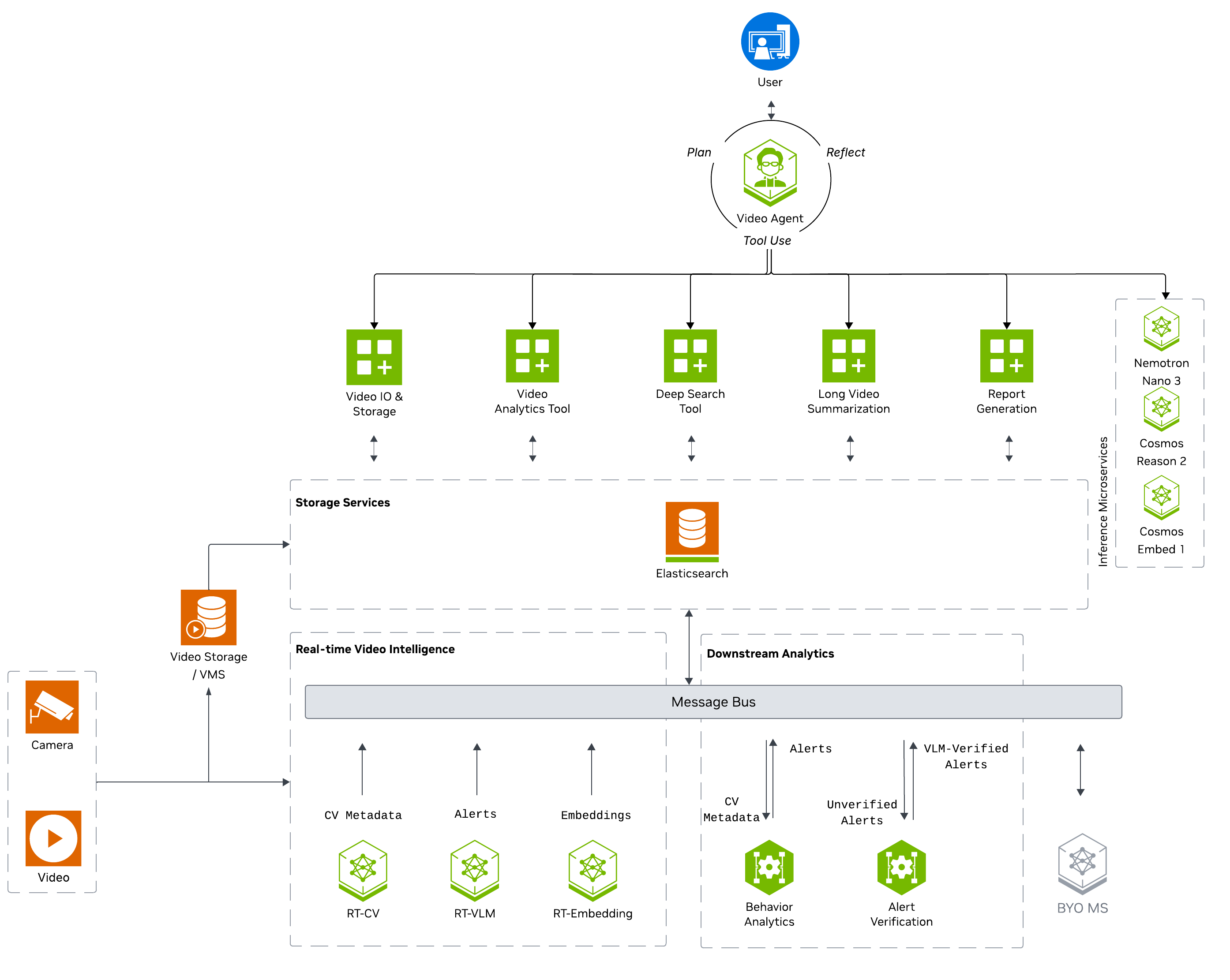

VSS is organized into three major areas of video processing and analysis:

Real-time video intelligence: Extracts features from stored and streamed video in real time, either continuously or on demand.

Downstream analytics: Analyzes extracted features posted to a message broker or stored in a database for downstream analysis.

Agent and offline processing: Processes extracted features to generate reports, answer questions, and provide video search capabilities.

Real-Time Video Intelligence

The Real-Time Video Intelligence layer extracts rich visual features, semantic embeddings, and contextual understanding from video data in real time. It publishes the results to a message broker for downstream analytics and agentic workflows. It provides three core microservices for processing video streams:

Real-Time Computer Vision (RT-CV): uses the NVIDIA DeepStream SDK with models such as RT-DETR, Grounding DINO, and Sparse4D to perform real-time object detection, classification, and multi-object tracking on single or multi-camera streams.

Real-Time Embedding (RT-Embedding): generates semantic embeddings from video, images, and live RTSP streams using Cosmos-Embed1 models, enabling efficient video search and similarity matching.

Real-Time VLM (RT-VLM): applies Vision Language Models (such as Cosmos Reason1/2 and Qwen3-VL) to generate natural language captions, detect incidents, and identify anomalies in video streams.
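As an illustration of the similarity matching that RT-Embedding enables, the following is a minimal sketch of cosine-similarity ranking over stored clip embeddings. It is not the RT-Embedding API: the function names `cosine_similarity` and `search`, the clip IDs, and the three-dimensional vectors are all hypothetical, and real Cosmos-Embed1 embeddings are much higher-dimensional.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # treat a zero vector as matching nothing
    return dot / (norm_a * norm_b)


def search(query, index, top_k=2):
    """Rank stored clips by similarity to the query embedding (best first)."""
    scored = [(clip_id, cosine_similarity(query, vec)) for clip_id, vec in index.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:top_k]


# Toy index: clip ID -> embedding (made-up values for illustration only).
index = {
    "clip_forklift": [0.9, 0.1, 0.0],
    "clip_pedestrian": [0.1, 0.9, 0.1],
    "clip_empty_aisle": [0.0, 0.1, 0.9],
}

# Pretend this is the embedding of a text or video query.
query = [0.8, 0.2, 0.0]
results = search(query, index)
```

Here `results` lists the two clips whose embeddings point in the direction closest to the query. A production deployment would typically replace the linear scan with a vector database or an approximate nearest-neighbor index.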

Downstream Analytics

The Downstream Analytics layer processes and enriches the metadata streams generated by real-time video intelligence microservices, transforming raw detections into actionable insights and verified alerts. It provides two core microservices:

Behavior Analytics: consumes frame metadata from message brokers (Kafka, Redis Streams, or MQTT), tracks objects over time across camera sensors, and computes behavioral metrics including speed, direction, and trajectory. It detects spatial events (tripwire crossings, ROI entry/exit) and generates incidents based on configurable violation rules (proximity detection, restricted zones, confined areas).

Alert Verification Service: ingests alerts and incidents from upstream analytics or computer vision pipelines, retrieves corresponding video segments based on alert timestamps, and uses Vision Language Models to verify alert authenticity. Verified results with verdicts (confirmed/rejected/unverified) and reasoning traces are persisted to Elasticsearch and optionally published to Kafka for downstream consumption.
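The behavioral metrics and spatial events that Behavior Analytics computes can be illustrated with a small geometric sketch. It assumes 2D positions in an arbitrary planar coordinate system and one tracked object; the function names, the track data, and the event dictionary layout are hypothetical and do not reflect the service's actual schema or implementation.

```python
import math


def speed_and_heading(p0, p1, dt_seconds):
    """Speed (units/s) and heading (degrees, 0 = +x axis, counterclockwise)."""
    if dt_seconds <= 0:
        raise ValueError("dt_seconds must be positive")
    dx, dy = p1[0] - p0[0], p1[1] - p0[1]
    speed = math.hypot(dx, dy) / dt_seconds
    heading = math.degrees(math.atan2(dy, dx)) % 360.0
    return speed, heading


def _orientation(a, b, c):
    """Cross product (b - a) x (c - a): positive if c is left of the line a -> b."""
    return (b[0] - a[0]) * (c[1] - a[1]) - (b[1] - a[1]) * (c[0] - a[0])


def crosses_tripwire(p0, p1, wire_start, wire_end):
    """True if the move p0 -> p1 strictly crosses the tripwire segment.

    Touching an endpoint or moving along the wire is not counted as a crossing.
    """
    d1 = _orientation(wire_start, wire_end, p0)
    d2 = _orientation(wire_start, wire_end, p1)
    d3 = _orientation(p0, p1, wire_start)
    d4 = _orientation(p0, p1, wire_end)
    return d1 * d2 < 0 and d3 * d4 < 0


# Hypothetical track: (timestamp in seconds, (x, y) position) for one object.
track = [(0.0, (0.0, 0.0)), (1.0, (2.0, 0.0)), (2.0, (4.0, 0.0))]
# Hypothetical tripwire: a vertical segment at x = 3.
tripwire = ((3.0, -1.0), (3.0, 1.0))

events = []
for (t0, p0), (t1, p1) in zip(track, track[1:]):
    speed, heading = speed_and_heading(p0, p1, t1 - t0)
    if crosses_tripwire(p0, p1, *tripwire):
        events.append(
            {"type": "tripwire_crossing", "time": t1, "speed": speed, "heading": heading}
        )
```

Only the second step of the track, from x = 2 to x = 4, passes over the wire at x = 3, so `events` ends up with a single crossing record. ROI entry and exit can be handled in the same style by comparing a point-in-polygon test before and after each step.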

Agent and Offline Processing

The Agent and Offline Processing layer provides an agent that orchestrates vision-based tools to generate insights, reports, and search capabilities from video content. The top-level agent leverages the Model Context Protocol (MCP) to access video analytics data, incident records, and vision processing capabilities through a unified tool interface. It integrates multiple vision-based tools including video understanding with Vision Language Models (VLMs), semantic video search using embeddings, long video summarization for extended footage analysis, and video snapshot/clip retrieval. These tools can also be used as standalone microservices, independent of the agent.
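The idea of a unified tool interface can be sketched as a registry that maps tool names to callables, which an agent then invokes by name. This is a simplified, hypothetical stand-in written in plain Python. It does not use an MCP SDK, and the `ToolRegistry` class, the tool names, and the return values are assumptions for illustration rather than the VSS agent's actual tools.

```python
from typing import Any, Callable, Dict, List


class ToolRegistry:
    """Maps tool names to callables so a caller can invoke them uniformly."""

    def __init__(self) -> None:
        self._tools: Dict[str, Callable[..., Any]] = {}

    def register(self, name: str) -> Callable[[Callable[..., Any]], Callable[..., Any]]:
        """Decorator that adds a function to the registry under `name`."""
        def decorator(func: Callable[..., Any]) -> Callable[..., Any]:
            self._tools[name] = func
            return func
        return decorator

    def list_tools(self) -> List[str]:
        """Return the registered tool names in alphabetical order."""
        return sorted(self._tools)

    def call(self, name: str, **arguments: Any) -> Any:
        """Invoke a registered tool by name with keyword arguments."""
        if name not in self._tools:
            raise KeyError(f"unknown tool: {name}")
        return self._tools[name](**arguments)


registry = ToolRegistry()


@registry.register("search_video")
def search_video(query: str) -> List[str]:
    # Placeholder: a real tool would query an embedding index.
    return [f"clip matching '{query}'"]


@registry.register("get_snapshot")
def get_snapshot(camera_id: str, timestamp: float) -> Dict[str, Any]:
    # Placeholder: a real tool would fetch a frame from stored video.
    return {"camera_id": camera_id, "timestamp": timestamp}


tool_names = registry.list_tools()
hits = registry.call("search_video", query="forklift near pedestrian")
```

Because each tool is an ordinary function behind a name, the same functions can be called directly without the registry, which mirrors how the tools can run as standalone microservices independent of the agent.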

Deployment Types

To demonstrate the use of the VSS architecture, we provide two deployment types:

Developer Profiles: Deployment type for developers to test and experiment with the VSS architecture. It starts by deploying a basic video agent and then builds on it with additional workflows.

Blueprint Examples: Deployment type for industry-specific use cases, demonstrating typical end-to-end deployments from video input to agentic workflows.

Developer Profiles

Developer profiles are Docker Compose deployments that demonstrate how various VSS microservices are assembled to fulfill specific agent workflows. They are designed as a starting point for developers to test and experiment with the VSS architecture. For the list of developer profiles and their respective workflows, see Agent Workflows.

Industry-Specific Examples

Industry-specific examples apply the VSS architecture to concrete industry use cases. These advanced reference deployments include parameters, sample data, and configurations that address key use cases. We provide two blueprint examples:

Smart City Blueprint: A blueprint example that applies VSS to smart city use cases, including person and vehicle detection and tracking, and event verification of collisions.

Warehouse Operations Blueprint: A blueprint example that applies VSS to warehouse operations use cases, including people and forklift detection and tracking, and event verification of near-miss events.