RT-DETR

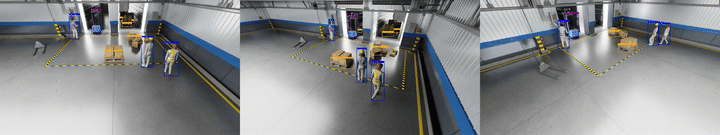

TAO RT-DETR is a 2D single-camera Real-Time Detection Transformer tailored for warehouse environments and industrial automation settings. It generates 2D bounding boxes for a diverse set of objects, including people, humanoid robots, autonomous vehicles, and warehouse equipment. The RT-DETR Warehouse 2D Model v1.0.1 is part of NVIDIA’s RT-DETR family and features an EfficientViT-L2 backbone, pretrained on warehouse scene datasets for 2D object detection in industrial environments.

Note

This model is optimized and ready for commercial deployment with support for fine-tuning via TAO Toolkit.

Model Card

The TAO RT-DETR model card on NGC describes architecture, datasets, and accuracy methodology. TAO fine-tuning (notebook walkthrough, CLI commands, export, FP16 optimization) is documented in RT-DETR (TAO fine-tuning) so it is not duplicated here.

Inference using Perception Microservice

Detailed information can be found in the 2D Single Camera Detection and Tracking (RT-DETR) page.

Real-Time Inference Throughput & Latency

Inference runs through the DeepStream pipeline on TensorRT with mixed precision (FP16+FP32). The table below summarizes how many camera streams each GPU supports at 30 FPS and 15 FPS (with inference interval=1) for the RT-DETR model with EfficientViT-L2 backbone.

| GPU | Streams @30 FPS | Streams @15 FPS (interval=1) |
|---|---|---|
| 1x DGX Spark | 4 | 8 |
| 1x RTX PRO 6000 (Server) | 28 | 57 |
| 1x RTX PRO 6000 (Workstation) | 30 | 61 |
| 1x Jetson AGX Thor - T5000 | 4 | 8 |
| 1x IGX Thor - T7000 | 4 | 9 |
| 1x B200 | 58 | 116 |
| 1x GB200 | 63 | 126 |
| 1x H100 | 17 | 35 |
| 1x H200 | 22 | 45 |
| 1x RTX 6000 Ada | 8 | 16 |
| 1x A100 | 9 | 19 |
| 1x L4 | 2 | 4 |
| 1x L40S | 7 | 15 |
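For rough capacity planning, the stream counts above can be turned into a GPU count for a given number of cameras. The sketch below is illustrative only: the function name `gpus_needed` is hypothetical, only a few rows of the table are copied in, and it assumes capacity scales linearly across identical GPUs, which ignores decode, network, and host-side limits.

```python
import math

# Streams per GPU copied from the table above: (at 30 FPS, at 15 FPS).
# Only a subset of rows is included here for brevity.
STREAMS_PER_GPU = {
    "DGX Spark": (4, 8),
    "RTX PRO 6000 (Server)": (28, 57),
    "B200": (58, 116),
    "H100": (17, 35),
    "L4": (2, 4),
    "L40S": (7, 15),
}


def gpus_needed(gpu: str, num_cameras: int, fps: int = 30) -> int:
    """Estimate how many GPUs of one type are needed for num_cameras streams.

    Assumption (not from the source): throughput adds up linearly
    when several identical GPUs are used.
    """
    if fps not in (30, 15):
        raise ValueError("the table only covers 30 FPS and 15 FPS")
    if num_cameras < 0:
        raise ValueError("num_cameras must be non-negative")
    at_30, at_15 = STREAMS_PER_GPU[gpu]
    per_gpu = at_30 if fps == 30 else at_15
    # Round up: a partially used GPU still has to be provisioned.
    return math.ceil(num_cameras / per_gpu)


if __name__ == "__main__":
    for name in ("L4", "H100", "B200"):
        print(name, gpus_needed(name, 40, fps=30))
```

Treat the result as a lower bound and validate it against a real deployment before sizing hardware.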

KPI

The key performance indicator is per-class Average Precision (AP), evaluated on the Warehouse Synthetic Test dataset. AP quantifies a detector’s ability to trade off precision and recall for a single object category by computing the normalized area under its precision-recall curve.
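To make the area-under-the-curve definition concrete, here is a minimal sketch of AP for one class. It assumes each detection has already been labeled as a true or false positive, and it integrates the precision envelope over all recall points. That is one common convention, while COCO-style tooling samples the curve at fixed recall levels, so the exact numbers can differ slightly from the reported ones. The function name `average_precision` is hypothetical.

```python
def average_precision(scores, is_true_positive, num_ground_truth):
    """Area under the precision-recall curve for a single class.

    scores: confidence of each detection.
    is_true_positive: True/False per detection, same order as scores.
    num_ground_truth: number of ground-truth objects of this class.
    """
    if num_ground_truth <= 0:
        raise ValueError("num_ground_truth must be positive")

    # Rank detections from most to least confident.
    ranked = sorted(zip(scores, is_true_positive),
                    key=lambda pair: pair[0], reverse=True)

    precisions, recalls = [], []
    tp = fp = 0
    for _, hit in ranked:
        if hit:
            tp += 1
        else:
            fp += 1
        precisions.append(tp / (tp + fp))
        recalls.append(tp / num_ground_truth)

    # Precision envelope: make precision non-increasing as recall grows.
    for i in range(len(precisions) - 2, -1, -1):
        precisions[i] = max(precisions[i], precisions[i + 1])

    # Sum rectangle areas between consecutive recall values.
    ap = 0.0
    prev_recall = 0.0
    for precision, recall in zip(precisions, recalls):
        ap += (recall - prev_recall) * precision
        prev_recall = recall
    return ap


if __name__ == "__main__":
    # Three detections (TP, FP, TP) against two ground-truth objects.
    print(average_precision([0.9, 0.8, 0.7], [True, False, True], 2))
```

Because recall lies in [0, 1], the area is already normalized: a detector that ranks every true positive ahead of every false positive and finds all objects scores 1.0.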

The model supports 7 object categories: Person, Agility Digit (humanoid robot), Fourier GR1_T2 (humanoid robot), Nova Carter, Transporter, Forklift, and Pallet.

Evaluation Settings

The reported metrics use the following evaluation configuration:

AP Variant: COCO AP@0.50

IoU Threshold: 0.50

Max Detections: 100 detections per image

Matching Policy: Greedy matching based on IoU with ground truth boxes, highest confidence predictions matched first
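The settings above can be sketched as a small matching routine for one image and one class. This is an illustration, not the evaluation code used for the reported numbers: the function names `iou` and `greedy_match`, the `(x1, y1, x2, y2)` box layout, and the choice to count an IoU exactly equal to the threshold as a match are all assumptions.

```python
def iou(box_a, box_b):
    """Intersection over Union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix2, iy2 = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def greedy_match(detections, gt_boxes, iou_threshold=0.50, max_detections=100):
    """Label detections as true or false positives.

    detections: list of (score, box) for one image and one class.
    gt_boxes: list of ground-truth boxes for the same image and class.
    Returns a list of (score, is_true_positive), highest score first.
    """
    # Keep only the most confident detections, highest confidence first.
    ranked = sorted(detections, key=lambda d: d[0], reverse=True)
    ranked = ranked[:max_detections]

    matched = set()  # indices of ground-truth boxes already claimed
    results = []
    for score, box in ranked:
        best_iou, best_idx = 0.0, None
        for idx, gt in enumerate(gt_boxes):
            if idx in matched:
                continue  # each ground-truth box can be matched once
            overlap = iou(box, gt)
            if overlap >= iou_threshold and overlap > best_iou:
                best_iou, best_idx = overlap, idx
        if best_idx is None:
            results.append((score, False))
        else:
            matched.add(best_idx)
            results.append((score, True))
    return results


if __name__ == "__main__":
    gts = [(0, 0, 10, 10)]
    dets = [(0.9, (0, 0, 10, 10)), (0.8, (1, 1, 10, 10)), (0.7, (50, 50, 60, 60))]
    print(greedy_match(dets, gts))
```

Because the highest-confidence prediction claims a ground-truth box first, a second overlapping detection of the same object is counted as a false positive.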

The evaluation is performed on the MTMC Tracking 2025 subset from the NVIDIA PhysicalAI-SmartSpaces dataset. This is a comprehensive, annotated dataset for multi-camera tracking and 2D/3D object detection, synthetically generated with NVIDIA Omniverse. The dataset consists of time-synchronized video from indoor warehouse scenes with annotations for 2D & 3D bounding boxes and multi-camera tracking IDs. The Warehouse Synthetic Test dataset used for evaluation is the Warehouse_019 scene from the test split.

| Dataset | Person | Agility Digit | GR1_T2 | Nova Carter | Transporter | Forklift | Pallet |
|---|---|---|---|---|---|---|---|
| Warehouse Synthetic Test | 0.970 | 0.969 | 0.920 | 0.960 | 0.940 | 0.851 | 0.891 |

Please refer to the Model Card for more details on benchmark datasets and evaluation methodology.