Overview of NeMo Microservices

NVIDIA NeMo is a modular, enterprise-ready software suite for managing the AI agent lifecycle, enabling enterprises to build, deploy, and optimize agentic systems.

NVIDIA NeMo microservices, part of the NVIDIA NeMo software suite, are an API-first, modular set of tools that you can use to customize, evaluate, and secure large language models (LLMs) and embedding models while optimizing AI applications across on-premises or cloud-based Kubernetes clusters.

The NeMo microservices fall into two categories: functional microservices and platform component microservices.

Functional Microservices

The following are the functional microservices for LLMs and embedding models.

NVIDIA NeMo Customizer: Facilitates the fine-tuning of large language models (LLMs) and embedding models using full supervised fine-tuning and parameter-efficient fine-tuning techniques.

NVIDIA NeMo Evaluator: Provides comprehensive evaluation capabilities for LLMs and embedding models, supporting academic benchmarks, custom automated evaluations, and LLM-as-a-Judge approaches.

NVIDIA NeMo Guardrails: Adds safety checks and content moderation to LLM endpoints, protecting against hallucinations, harmful content, and security vulnerabilities.

NVIDIA NeMo Data Designer (Early Access): Generates high-quality synthetic datasets using AI models, statistical sampling, and configurable data schemas.

NVIDIA NeMo Auditor (Early Access): Audits models and agentic applications for security vulnerabilities and harmful content.

Platform Component Microservices

The following are the platform component microservices that form the infrastructure for the functional microservices.

NVIDIA NeMo Data Store: Serves as the default file storage solution for the NeMo microservices platform, exposing APIs compatible with the Hugging Face Hub client (HfApi).

NVIDIA NeMo Entity Store: Provides tools to manage and organize general entities such as namespaces, projects, datasets, and models.

NVIDIA NeMo Deployment Management: Provides an API to deploy NIM microservices on a Kubernetes cluster and manage them through the NIM Operator microservice.

NVIDIA NeMo NIM Proxy: Provides a unified endpoint that you can use to access all deployed NIM microservices for inference tasks.

NVIDIA NeMo Operator: Manages custom resource definitions (CRDs) for NeMo Customizer fine-tuning jobs.

NVIDIA DGX Cloud Admission Controller: Enables multi-node training requirements for NeMo Customizer jobs through a mutating admission webhook.
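As a rough illustration of two of the components above, the following Python sketch shows how a client might point the Hugging Face Hub client (HfApi) at Data Store and how it might build an inference request for the NIM Proxy unified endpoint. The `/v1/hf` path suffix, the OpenAI-style `/v1/chat/completions` route, and the helper names `data_store_hf_endpoint`, `make_data_store_client`, and `build_chat_request` are assumptions made for this example and are not taken from this page. Confirm the actual routes against the API reference for your deployment.

```python
import json
import urllib.request


def data_store_hf_endpoint(base_url: str) -> str:
    """Return the URL to pass as HfApi(endpoint=...).

    Assumption: the Hugging Face compatible API is served under "/v1/hf".
    """
    return base_url.rstrip("/") + "/v1/hf"


def make_data_store_client(base_url: str, token: str = ""):
    """Create a Hugging Face Hub client that talks to Data Store."""
    # Imported lazily so the other helpers work without the package installed.
    from huggingface_hub import HfApi  # third-party: pip install huggingface_hub

    return HfApi(endpoint=data_store_hf_endpoint(base_url), token=token)


def build_chat_request(proxy_url: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a chat request for the NIM Proxy endpoint.

    Assumption: the proxy exposes an OpenAI-style chat completions route.
    """
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=proxy_url.rstrip("/") + "/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
```

To send the request, pass the returned object to `urllib.request.urlopen`. Because a single proxy endpoint fronts every deployed NIM, only the `model` field in the payload changes between models in this sketch.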

Target Users

This documentation serves two types of users.

Cluster administrators: Deploy and manage the NeMo microservices together or as individual components on a Kubernetes cluster.

Users: Use the NeMo microservices deployed by cluster administrators in their organization to fine-tune, evaluate, run inference on, or apply guardrails to their models. This category includes data scientists and AI application developers.

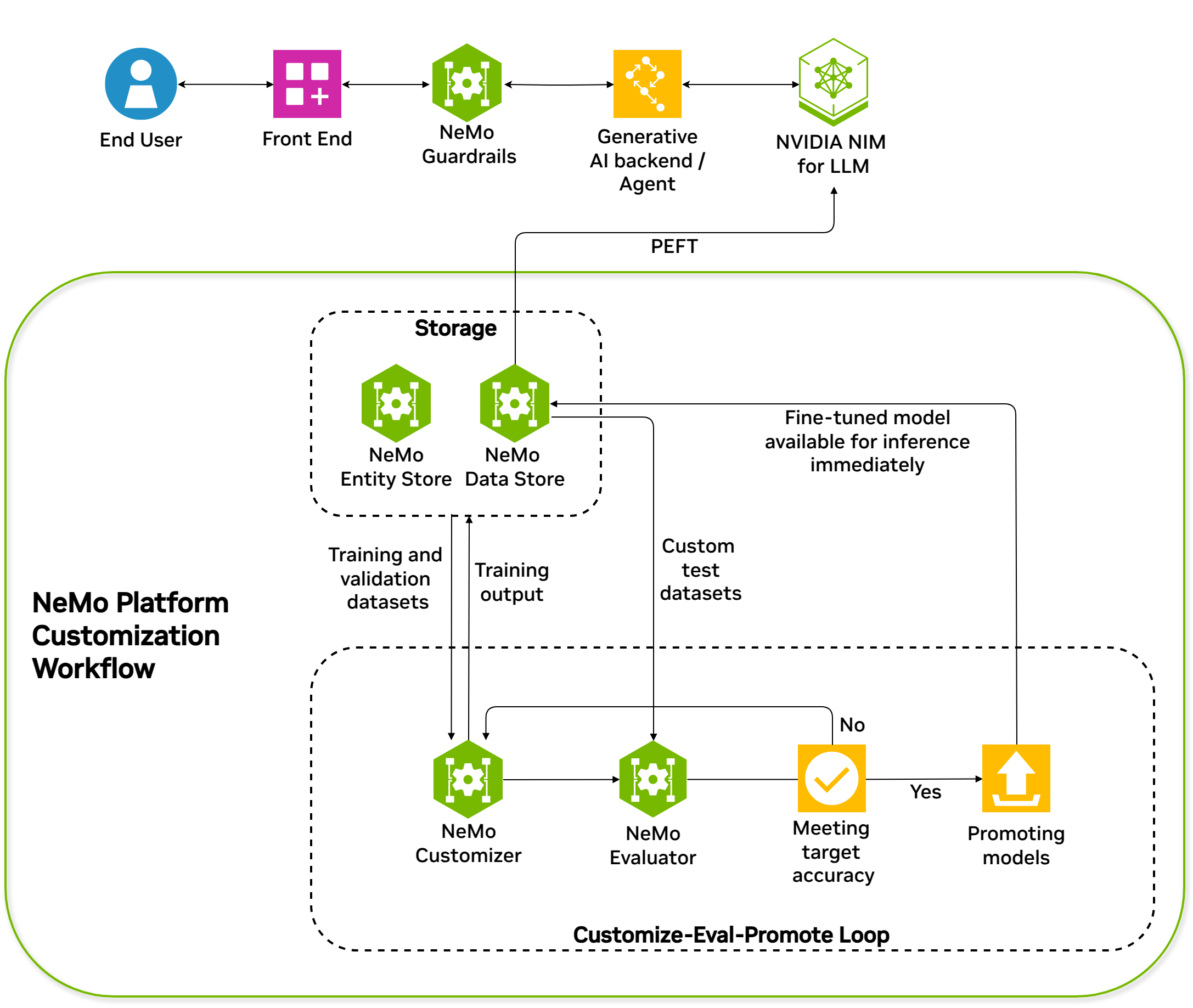

High-level Data Flywheel Architecture Diagram with NeMo Microservices#

A data flywheel represents the lifecycle of models and data in a machine learning workflow. The process cycles through data ingestion, model training, evaluation, and deployment.

The following diagram illustrates how the NeMo microservices can construct a complete data flywheel.
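The four-stage cycle described in this section can also be sketched in a few lines of Python. This is a conceptual illustration only: the names `FLYWHEEL_STAGES` and `flywheel` are hypothetical, and the sketch makes no calls to any NeMo microservice.

```python
from itertools import cycle, islice

# Stage names taken from the description of the data flywheel.
FLYWHEEL_STAGES = ("data ingestion", "model training", "evaluation", "deployment")


def flywheel(turns: int):
    """Yield (turn, stage) pairs for the given number of full cycles."""
    total_steps = turns * len(FLYWHEEL_STAGES)
    for step, stage in enumerate(islice(cycle(FLYWHEEL_STAGES), total_steps)):
        # Turn numbers start at 1 and advance after every full pass.
        yield step // len(FLYWHEEL_STAGES) + 1, stage
```

Iterating over `flywheel(2)` walks through all four stages twice. Deployment in one turn is followed by data ingestion in the next, which is the looping behavior that gives the flywheel its name.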

Concepts

Explore the foundational concepts and terminology used across the NeMo microservices platform.

Start here to learn about the concepts that make up the NeMo microservices platform.

Learn about the core entities you can use in your AI workflows.

Learn about the fine-tuning concepts you need to customize base models.

Learn about the concepts you need to evaluate your AI workflows.

Learn about the concepts you need to use the Inference service for testing and serving your custom models.

Learn about the concepts you need to control interactions with your AI workflows.